- The paper presents ETEGRec, which jointly optimizes item tokenization and recommendation through a dual encoder-decoder architecture.

- It employs Sequence-Item Alignment and Preference-Semantic Alignment to enhance token representations and overall model robustness.

- Experiments on Amazon datasets show notable improvements in generalizability and recommendation performance, especially for unseen users.

End-to-End Learnable Item Tokenization for Generative Recommendation

Introduction

The paper "End-to-End Learnable Item Tokenization for Generative Recommendation" (arXiv:2409.05546) presents ETEGRec, a generative recommendation framework that trains item tokenization and autoregressive generation together rather than in separate stages. Earlier generative recommenders typically fix the item tokenizer before training the recommender, so the tokens are never adapted to the recommendation objective, which can lead to suboptimal performance. ETEGRec addresses this with a dual encoder-decoder architecture in which the item tokenizer and the recommender are aligned with, and reinforce, each other.

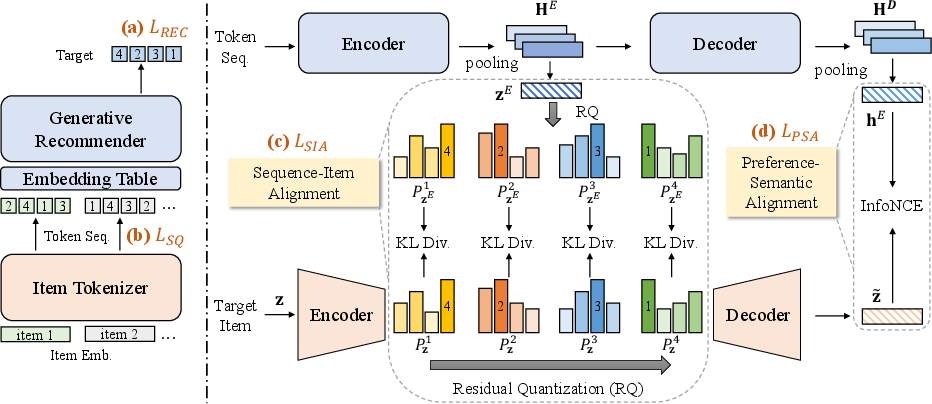

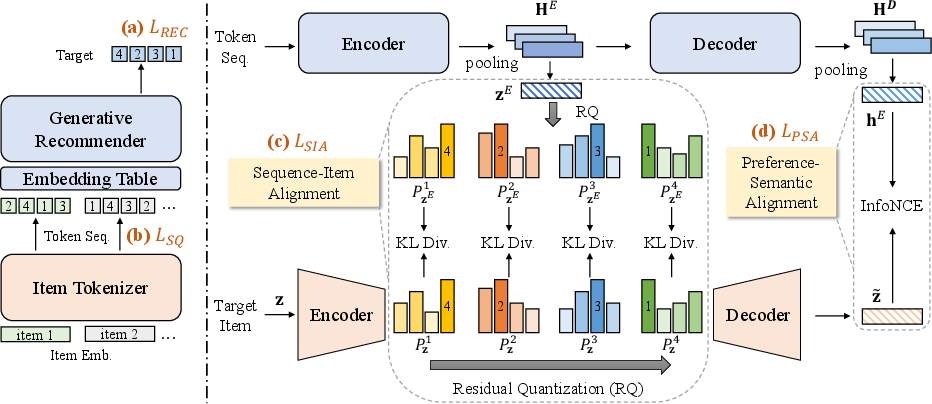

Figure 1: The overall framework of ETEGRec. ETEGRec consists of two main components, the item tokenizer and the generative recommender. Sequence-Item Alignment (SIA) and Preference-Semantic Alignment (PSA) align the two components from two different perspectives so that each improves the other.

Methodology

Dual Encoder-Decoder Architecture

ETEGRec employs a dual encoder-decoder setup consisting of an item tokenizer and a generative recommender. The item tokenizer uses a Residual-Quantized Variational AutoEncoder (RQ-VAE) to map each item to a multi-level token sequence: each level quantizes the residual left by the previous level, so the tokens run from coarse to fine. The generative recommender, based on the T5 Transformer model, takes the tokenized interaction sequence as input and autoregressively generates the tokens that identify the next item.
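The coarse-to-fine token assignment can be illustrated with a minimal sketch of residual quantization. This is not the paper's implementation: the RQ-VAE encoder and decoder networks are omitted, and the function names, the two-level toy codebooks, and the input vector are all hypothetical values chosen for illustration.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def squared_distance(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def residual_quantize(
    embedding: Vector, codebooks: Sequence[Sequence[Vector]]
) -> Tuple[List[int], List[float]]:
    """Map an embedding to one token per codebook level.

    At each level the nearest code to the current residual is selected,
    added to the running reconstruction, and subtracted from the residual.
    """
    residual = list(embedding)
    reconstruction = [0.0] * len(residual)
    tokens: List[int] = []
    for codebook in codebooks:
        index = min(
            range(len(codebook)),
            key=lambda i: squared_distance(residual, codebook[i]),
        )
        code = codebook[index]
        tokens.append(index)
        reconstruction = [r + c for r, c in zip(reconstruction, code)]
        residual = [r - c for r, c in zip(residual, code)]
    return tokens, reconstruction


# Hypothetical two-level codebooks: a coarse level and a finer one.
toy_codebooks = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.25, 0.0], [0.0, 0.25]],
]
tokens, reconstruction = residual_quantize([1.1, 0.3], toy_codebooks)
print(tokens, reconstruction)
```

In this toy case the first level picks the code closest to the whole vector and the second level refines what is left, so the token sequence is the item's identifier and the summed codes approximate its embedding.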

Sequence-Item and Preference-Semantic Alignment

Two alignment strategies are proposed:

- Sequence-Item Alignment (SIA): Aligns the encoder's hidden representation of the interaction sequence with the collaborative embedding of the target item, so that the tokenizer assigns similar token distributions to both.

- Preference-Semantic Alignment (PSA): Uses contrastive learning to align the user preference encoding (the decoder state) with the semantics of the target item as reconstructed by RQ-VAE.

These alignments couple the item tokenizer and the recommender tightly: both are trained against the same token representations, so improvements in one carry over to the other.
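The two alignment objectives can be sketched as loss functions on toy vectors. This is a sketch under stated assumptions, not the paper's code: it assumes a token distribution is a softmax over negative squared distances to a single codebook level, that SIA compares two such distributions with a symmetric KL divergence, and that PSA is a one-directional InfoNCE loss with in-batch negatives and cosine similarity. All function names and the `temperature` value are hypothetical.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def token_distribution(vector: Vector, codebook: Sequence[Vector]) -> List[float]:
    """Assumed form: probability of each code from negative squared distance."""
    return softmax(
        [-sum((v - c) ** 2 for v, c in zip(vector, code)) for code in codebook]
    )


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q); fine for toy inputs where no probability underflows to 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def sia_loss(sequence_state: Vector, item_embedding: Vector,
             codebook: Sequence[Vector]) -> float:
    """Symmetric KL between the token distributions of the two inputs."""
    p = token_distribution(sequence_state, codebook)
    q = token_distribution(item_embedding, codebook)
    return kl_divergence(p, q) + kl_divergence(q, p)


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms


def psa_loss(preferences: Sequence[Vector], semantics: Sequence[Vector],
             temperature: float = 0.1) -> float:
    """InfoNCE: row i of `preferences` should match row i of `semantics`;
    the other rows in the batch act as negatives."""
    total = 0.0
    for i, preference in enumerate(preferences):
        probs = softmax([cosine(preference, s) / temperature for s in semantics])
        total -= math.log(probs[i])
    return total / len(preferences)


codebook = [[1.0, 0.0], [0.0, 1.0]]
print(sia_loss([0.9, 0.1], [0.8, 0.3], codebook))
print(psa_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]]))
```

Both losses shrink as the paired representations agree: the SIA term is zero when the two inputs induce identical token distributions, and the PSA term is small when each preference vector is closer to its own target item than to the other items in the batch.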

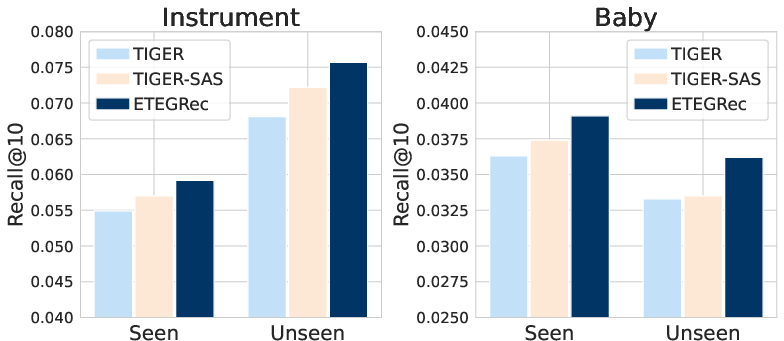

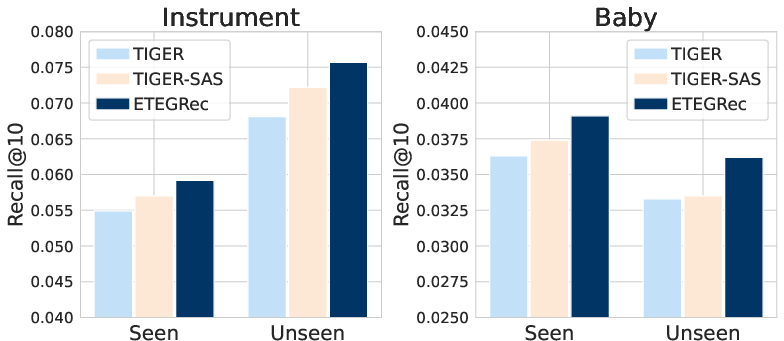

Figure 2: Performance comparison on seen and unseen users.

Experimentation and Results

Experiments on three Amazon datasets show that ETEGRec outperforms both traditional and generative recommendation baselines. Ablation studies indicate that each alignment strategy and the end-to-end learning scheme contribute to this result. The gains also hold for users not seen during training (Figure 2), which suggests better generalization.

The paper attributes these results mainly to the joint optimization of tokenization and recommendation, with the alignment strategies further improving robustness and adaptability.

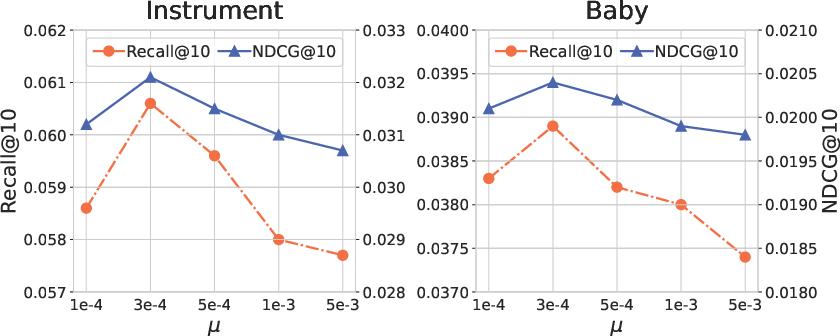

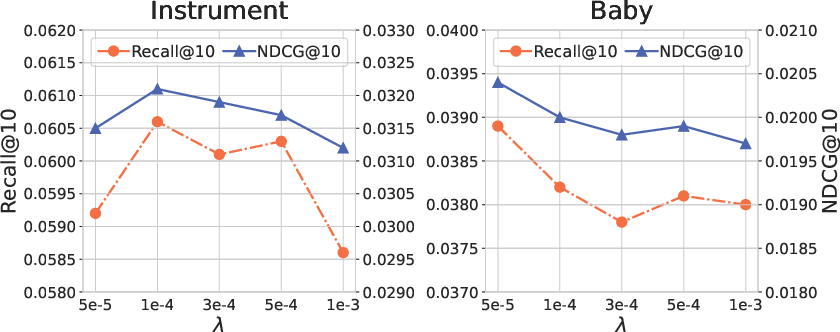

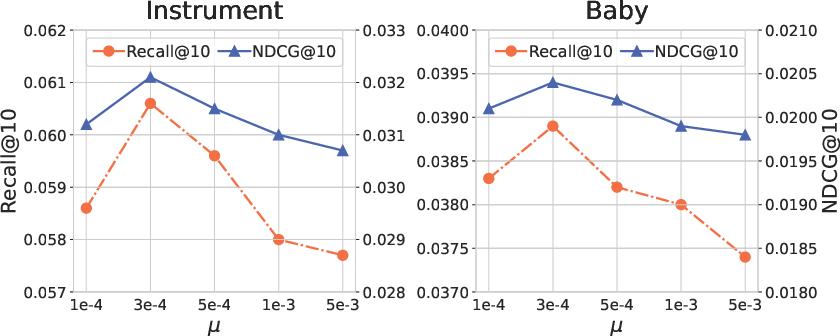

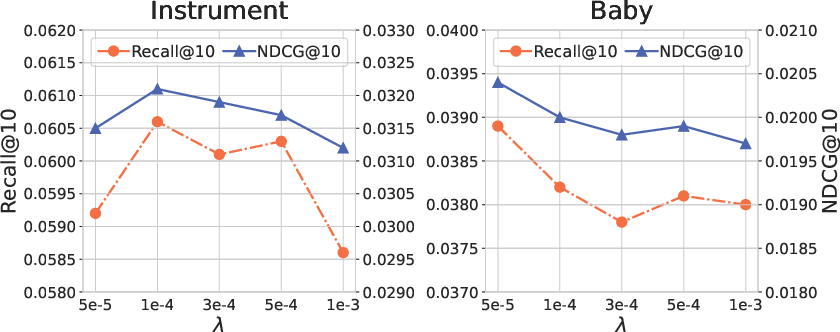

Figure 3: Performance comparison of different alignment loss coefficients.

Discussion

ETEGRec's integrated framework offers an alternative way to build generative recommender systems. By optimizing item tokenization together with recommendation training, it exposes the limitation of decoupled approaches, in which a fixed tokenizer cannot adapt to the recommendation objective. Future research could explore scaling ETEGRec to larger models or adapting the framework to other recommendation architectures.

Conclusion

ETEGRec demonstrates that end-to-end learnable item tokenization improves generative recommendation by letting token generation and sequence prediction inform each other during training. The paper's contributions lay a foundation for further research into integrated generative frameworks for recommendation, with scaling and extension to other model types as the main open directions.