- The paper provides a comprehensive review of dynamic graph neural networks that integrate sequence modeling with traditional GNNs for capturing temporal dependencies.

- It categorizes models into discrete-time and continuous-time approaches while exploring scalable frameworks and pre-training techniques for real-world applications.

- The survey identifies key challenges such as scalability and dataset diversity, and suggests future trends including enhanced interpretability and integration with large language models.

A Survey of Dynamic Graph Neural Networks

Introduction

The paper "A survey of dynamic graph neural networks" (2404.18211) reviews dynamic graph neural networks (dynamic GNNs), focusing on how they handle temporal dependencies in evolving graph structures. Dynamic GNNs extend traditional graph neural networks with sequence modeling so that they can capture how nodes and edges change over time, which makes them a closer fit for real-world networks. The paper categorizes dynamic GNN models by how they incorporate temporal information, discusses large-scale applications, and explores pre-training techniques. Despite their promise, challenges persist in scalability, handling heterogeneous information, and the limited availability of datasets.

Dynamic Graph Models

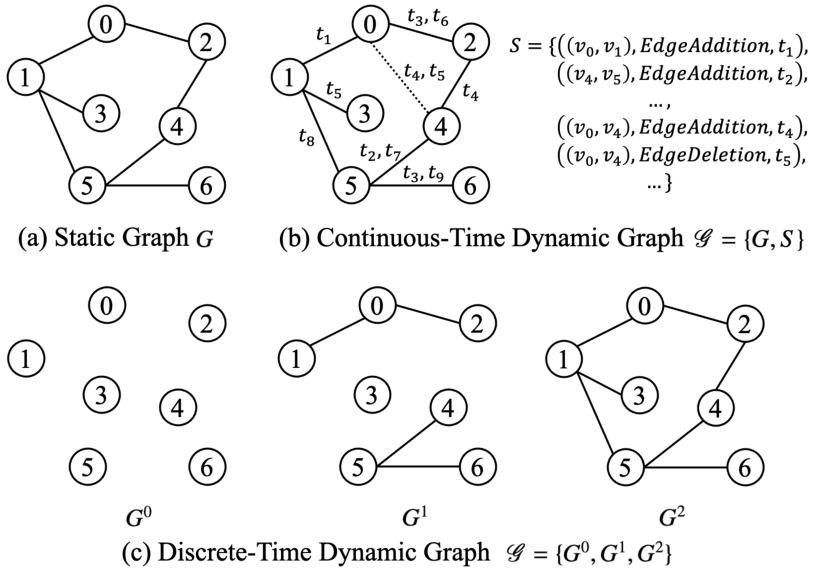

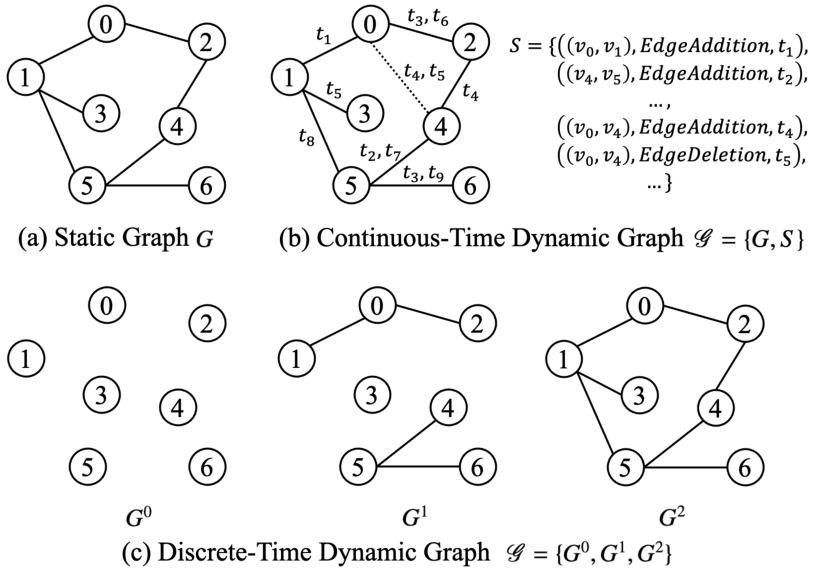

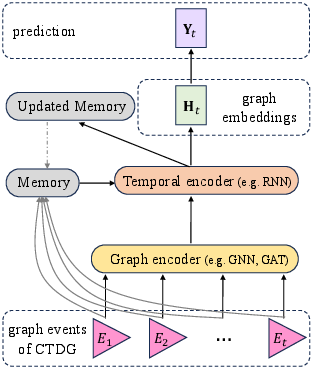

Dynamic graphs represent structures that evolve over time, as most real-world networks do. The survey distinguishes two representations: Discrete-Time Dynamic Graphs (DTDGs), which record changes at discrete intervals (Figure 1(c)), and Continuous-Time Dynamic Graphs (CTDGs), which record each interaction with its timestamp (Figure 1(b)). The paper emphasizes the difficulties that traditional static GNNs face when applied to dynamic graphs. These difficulties motivate models such as TGAT and TGN, which combine GNNs with sequence learning techniques to handle temporal dependencies more effectively.

Figure 1: Comparative illustration of different graph representations. (a) Representation of a static graph where the structure remains unchanged over time, without temporal information; (b) Illustration of a Continuous-Time Dynamic Graph (CTDG) where interactions between nodes are labeled by timestamps, and multiple interactions are allowed; (c) Representation of a Discrete-Time Dynamic Graph (DTDG), which captures the evolution of relationships in discrete time intervals.
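The relationship between the two representations can be illustrated with a minimal sketch: a CTDG stored as a list of timestamped events is bucketed into fixed-width windows to obtain a DTDG-style sequence of snapshots. The event list, the function name `to_snapshots`, and the `window` parameter are assumptions made for illustration; they do not come from the paper.

```python
from collections import defaultdict

# Hypothetical CTDG: a list of (source, target, timestamp) interaction events.
events = [
    ("a", "b", 0.5),
    ("b", "c", 1.2),
    ("a", "b", 1.7),  # repeated interactions between the same pair are allowed
    ("c", "d", 2.4),
    ("a", "d", 3.9),
]

def to_snapshots(events, window):
    """Group timestamped events into fixed-width windows (a DTDG-style view)."""
    buckets = defaultdict(set)
    for src, dst, t in events:
        index = int(t // window)
        # Storing edges in a set collapses repeated interactions within a window.
        buckets[index].add((src, dst))
    if not buckets:
        return []
    # Windows with no events become empty snapshots.
    return [buckets[i] for i in range(max(buckets) + 1)]

snapshots = to_snapshots(events, window=2.0)
```

The conversion loses information: the exact timestamps and the repeated interaction between `a` and `b` are no longer visible in the snapshots, which is one reason continuous-time models operate on the event stream directly.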

Notation and Background

The paper defines dynamic graphs and describes their distinguishing features. Two concepts are central here: temporal neighbors and Hawkes processes. A Hawkes process models how past events influence the likelihood of future ones, which gives temporal neural networks a framework for incorporating the history of graph events into their representations of dynamic interactions.
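As a rough illustration of these two ideas, the sketch below collects a node's temporal neighbors (interaction partners seen strictly before a query time) and evaluates a Hawkes conditional intensity with the commonly used exponential kernel, `lambda(t) = base_rate + sum(alpha * exp(-beta * (t - t_i)))`. The function names, the parameter values, and the choice to treat edges as undirected are assumptions for the example, not definitions taken from the paper.

```python
import math

def temporal_neighbors(events, node, t):
    """Return (neighbor, timestamp) pairs for interactions of `node` strictly before t.

    Edges are treated as undirected here, which is an assumption of this sketch.
    """
    result = []
    for src, dst, ts in events:
        if ts < t:
            if src == node:
                result.append((dst, ts))
            elif dst == node:
                result.append((src, ts))
    return result

def hawkes_intensity(history, t, base_rate=0.1, alpha=0.5, beta=1.0):
    """Exponential-kernel Hawkes intensity: each past event adds a decaying boost."""
    excitation = sum(alpha * math.exp(-beta * (t - ts)) for ts in history if ts < t)
    return base_rate + excitation

# Hypothetical event stream of (source, target, timestamp) triples.
events = [("a", "b", 1.0), ("c", "a", 2.0), ("a", "d", 5.0)]
neighbors = temporal_neighbors(events, "a", t=3.0)
intensity = hawkes_intensity([ts for _, ts in neighbors], t=3.0)
```

The interaction at time 5.0 is excluded because it lies in the future of the query time, and the more recent event at 2.0 contributes more to the intensity than the one at 1.0.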

Graph Representation Learning

Graph representation learning aims to create low-dimensional vector representations of nodes that preserve significant graph information for tasks like node classification and link prediction. In dynamic graphs, representation learning must account for evolving node representations over time, necessitating the modification of static graph representation techniques to accommodate time-dependent changes.

Models for Dynamic Graphs

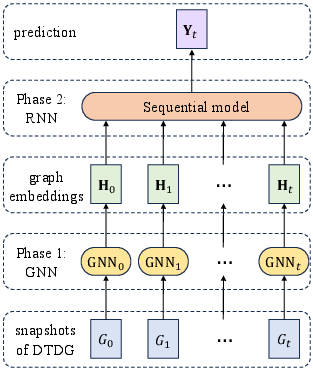

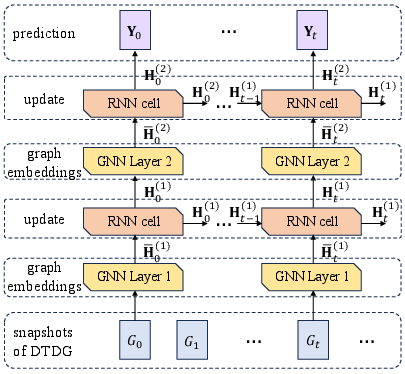

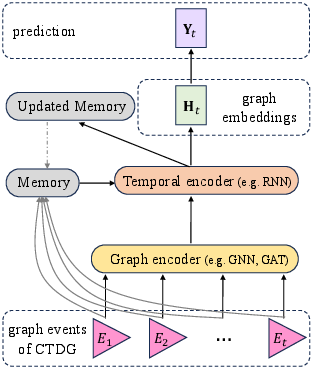

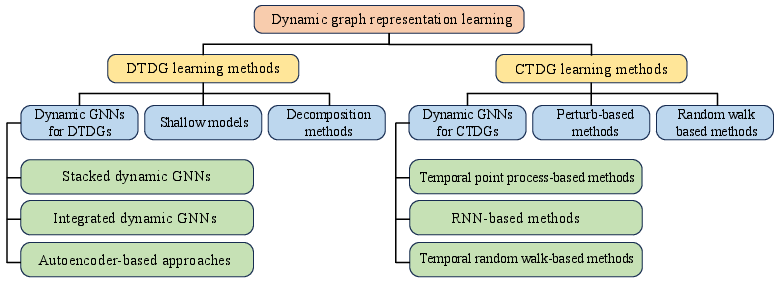

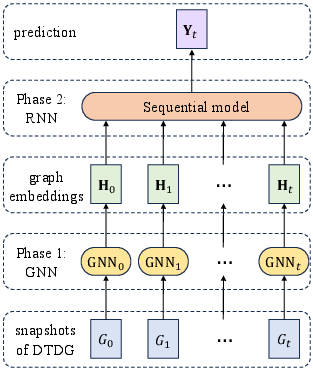

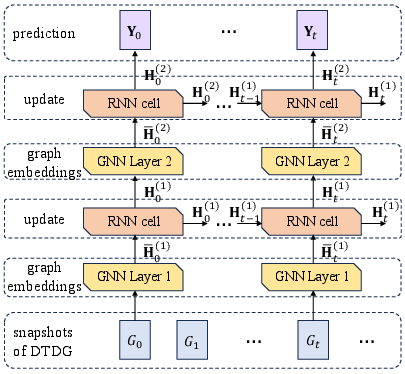

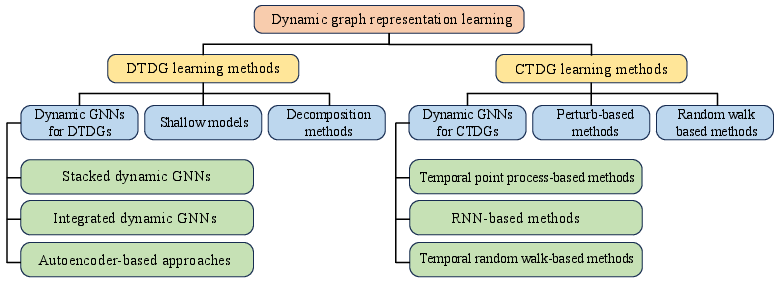

The paper categorizes models for dynamic graphs into discrete-time and continuous-time approaches, aligned with the nature of their data representations. Dynamic graph neural networks are further categorized based on their architectural frameworks (Figure 2). These architectures include stacked models (e.g., models integrating GCNs with LSTMs) and integrated models (e.g., GC-LSTM), which handle spatial and temporal data in dynamic graphs. The inherent flexibility of models like ROLAND and EvolveGCN makes them effective for large-scale graph applications.

Figure 2: Different model architectures for dynamic graphs.
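The stacked pattern can be sketched in a few lines: a spatial step is applied to each snapshot independently, and a temporal step then carries a hidden state across snapshots. The sketch below is a toy under stated assumptions: mean aggregation stands in for a GCN layer, an element-wise tanh recurrence with fixed scalar weights stands in for an LSTM, and all names and values are hypothetical. It is not the architecture of any specific model in the survey.

```python
import math

def aggregate(features, edges):
    """Spatial step: average each node's features with its neighbors' (GNN stand-in)."""
    neighbors = {node: [node] for node in features}  # include a self-loop
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    dim = len(next(iter(features.values())))
    return {
        node: [sum(features[n][k] for n in nbrs) / len(nbrs) for k in range(dim)]
        for node, nbrs in neighbors.items()
    }

def recurrent_update(hidden, inputs, w_h=0.5, w_x=0.5):
    """Temporal step: h_t = tanh(w_h * h_{t-1} + w_x * x_t), applied element-wise."""
    return {
        node: [math.tanh(w_h * h + w_x * x) for h, x in zip(hidden[node], inputs[node])]
        for node in hidden
    }

# Hypothetical node features and a two-snapshot DTDG (one edge list per snapshot).
features = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
snapshots = [[("a", "b")], [("a", "b"), ("b", "c")]]

hidden = {node: [0.0, 0.0] for node in features}
for edges in snapshots:
    spatial = aggregate(features, edges)        # structure within one snapshot
    hidden = recurrent_update(hidden, spatial)  # dependency across snapshots
```

In an integrated design the two steps would not be separable like this; the graph operation would sit inside the recurrent cell itself. A trained model would also learn weight matrices rather than use fixed scalars.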

Scalable Dynamic GNNs and Frameworks

Efficient processing of large-scale dynamic graphs remains a significant challenge. The paper explores scalable GNN models like SEIGN and frameworks such as TGL and DistTGL. These methods leverage strategies like dynamic propagation and memory-sync-based parallelism to handle vast amounts of graph data efficiently, highlighting the need for optimized algorithms capable of maintaining performance in large real-world datasets.

Datasets and Benchmarks

Datasets ranging from social networks to bioinformatics illustrate the diverse applications of dynamic GNNs. The paper emphasizes the need for standardized benchmarks that provide consistent criteria for comparing model performance. Existing benchmarks and evaluation methodologies aim to measure model efficacy across node-level, edge-level, and graph-level tasks.

Advanced Techniques in Graph Processing

Dynamic transfer learning and pre-training techniques are pivotal for optimizing dynamic GNNs. Transfer learning addresses source and target domains that both evolve over time, facilitating knowledge adaptation in dynamic settings. Pre-training focuses on capturing temporal and structural patterns to enhance model adaptability. The challenges include scalability, real-time adaptation, and preserving rich temporal dependencies.

Future Trends and Challenges

Research into dynamic GNNs faces several challenges, including interpretability, scalability, and dataset diversity. The paper suggests expanding the range of datasets to include more complex, real-world-inspired structures, and it emphasizes improving model interpretability to increase applicability and trustworthiness. It also proposes integrating large language models (LLMs) into dynamic graph learning, using their few-shot learning and contextual understanding to potentially transform how dynamic graph tasks are solved.

Conclusion

Dynamic graph neural networks offer promising tools for capturing temporal dependencies in evolving networks. While significant advancements have been made, ongoing efforts are necessary to address scalability and dataset diversity challenges. Future research should focus on refining dynamic GNNs' theoretical underpinnings and broadening their application to accommodate complex, dynamic real-world structures.

Figure 3: The taxonomy of dynamic graph representation learning.