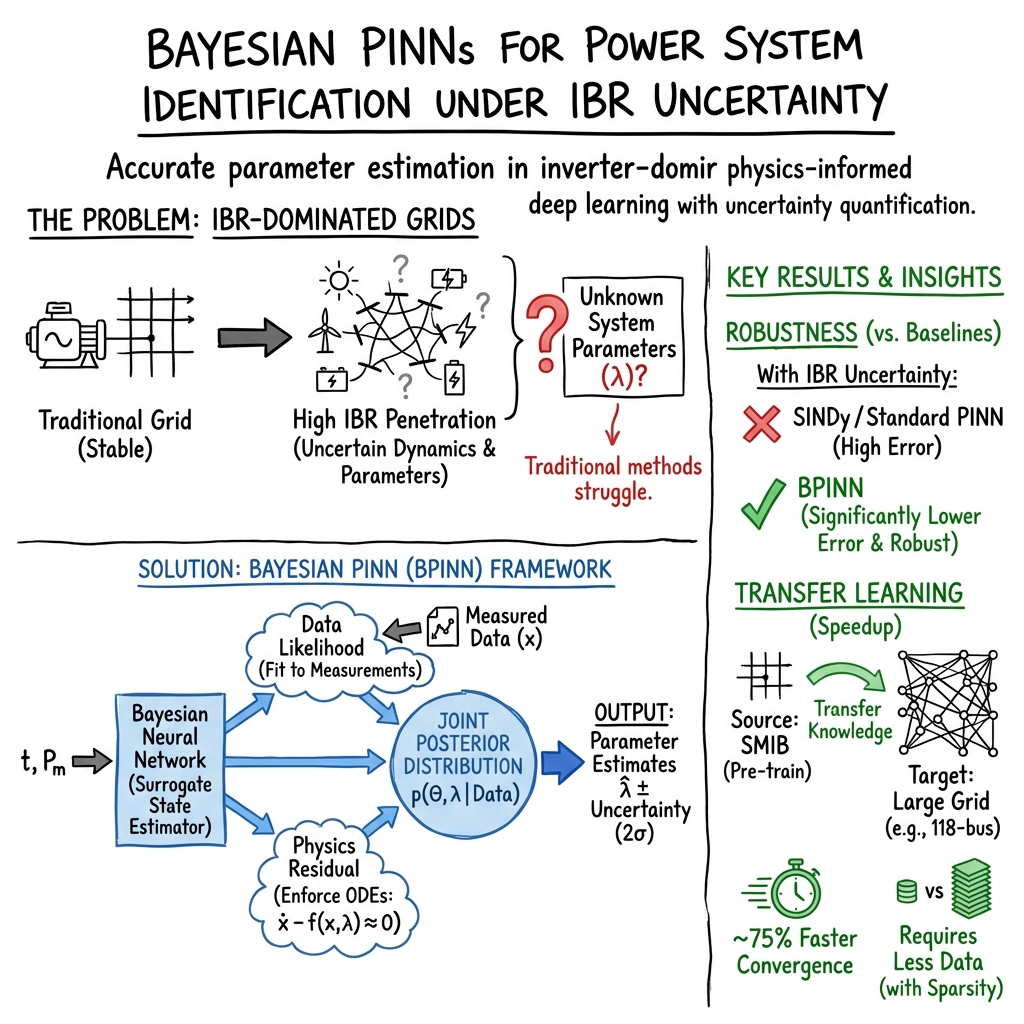

Bayesian Physics-informed Neural Networks for System Identification of Inverter-dominated Power Systems

Abstract: As uncertainty in generation and demand increases, accurately estimating the dynamic characteristics of power systems becomes crucial for employing the appropriate control actions to maintain their stability. In our previous work, we have shown that Bayesian Physics-informed Neural Networks (BPINNs) outperform conventional system identification methods in identifying power system dynamic behavior under measurement noise. This paper takes the next natural step and addresses the more significant challenge of exploring how BPINNs perform in estimating power system dynamics under increasing uncertainty from many Inverter-based Resources (IBRs) connected to the grid. IBRs introduce a different type of uncertainty than noisy measurements. The BPINN combines the advantages of Physics-informed Neural Networks (PINNs), such as applicability to inverse problems, with Bayesian approaches for uncertainty quantification. We explore BPINN performance on a wide range of systems, starting with a single-machine infinite-bus (SMIB) system and a 3-bus system to extract important insights, then moving to the 14-bus CIGRE distribution grid and the large IEEE 118-bus system. We also investigate approaches that can accelerate BPINN training, such as pretraining and transfer learning. Throughout this paper, we show that in the presence of uncertainty, the BPINN achieves orders of magnitude lower errors than SINDy, a widely used system identification method, and significantly lower errors than the PINN, while transfer learning helps reduce training time by up to 80%.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following points summarize what remains missing, uncertain, or unexplored, and are framed to be concrete and actionable for future research:

- Real-world validation: Assess BPINN performance on diverse, large-scale PMU datasets from inverter-dominated grids (including grid-forming and grid-following IBRs), rather than solely on simulations.

- Non-Gaussian and time-varying noise: Replace the Gaussian noise assumption with robust likelihoods (e.g., Student‑t, Laplace) and model heteroscedastic/colored noise, outliers, and missing data typical of PMU streams.

- Partial observability: Develop formulations that handle limited sensor coverage, missing channels, asynchronous/multi-rate measurements, and unknown or unmeasured inputs/disturbances.

- Hybrid/DAE dynamics: Extend the physics residual to differential–algebraic and hybrid systems (switching events, protections, saturations, delays) with rigorous handling of stiff dynamics and index issues.

- Identifiability under realistic excitation: Analyze structural and practical identifiability of parameters under typical operating conditions, and design active probing/experiment protocols to ensure informative inputs.

- Separation of uncertainty sources: Provide a principled way to disentangle and report aleatoric versus epistemic uncertainty in BPINNs (e.g., hierarchical models or variance decomposition at the posterior predictive level), beyond a single aggregated measure.

- Uncertainty calibration: Evaluate and report calibration metrics (coverage of credible intervals, reliability diagrams, PIT histograms) to verify that quantified uncertainty is trustworthy.

- Prior specification and constraints: Conduct sensitivity studies on weakly-informative normal–gamma priors (α, β, κ, μ), and enforce physical constraints (e.g., positivity of inertia/damping) via constrained priors or reparameterizations.

- Learning noise scales: Clarify identifiability and estimation of σx and σh (measurement and physics-residual scales) with hierarchical priors or empirical Bayes, and examine the risk of variance terms absorbing model misspecification.

- Posterior quality: Benchmark VI/SVGD against gold-standard MCMC (e.g., HMC/NUTS) for small to medium systems to assess approximation bias, multimodality capture, and convergence diagnostics.

- Scalability and complexity: Provide formal scaling laws and computational complexity analyses (states, parameters, particles, collocation points), and explore distributed/parallel training strategies and memory-efficient implementations.

- Architecture and hyperparameters: Systematically study sensitivity to network depth/width, activation functions, collocation strategies, learning rates, and particle counts, and report best practices for stable training on stiff power-system dynamics.

- Topology-aware modeling: Exploit grid structure (e.g., graph neural networks or modular/zone-based BPINNs) to improve scalability, interpretability, and transferability across network sizes and topologies.

- Handling topology changes and events: Extend BPINNs to detect and adapt to faults, line switching, topology reconfiguration, and parameter jumps (piecewise models, event-triggered retraining, or changepoint methods).

- Model misspecification and learned residuals: Integrate data-driven residual learning (e.g., sparse libraries, SINDy-like terms, or neural operators) to capture unknown physics while retaining physical constraints.

- Domain shift and transfer learning: Characterize when pretraining/transfer helps versus harms (negative transfer), define criteria to select layers to freeze/fine-tune, and measure robustness across different grids, IBR mixes, and operating points.

- Generalization to unseen inputs: Test BPINN predictive accuracy and uncertainty under out-of-distribution disturbances, controls, and renewable variability scenarios.

- Comparative baselines: Broaden comparisons beyond SINDy and deterministic PINNs to include subspace, Koopman, Kalman/particle filtering, and hybrid gray-box estimators under the same uncertainty settings.

- Collocation point selection: Investigate adaptive collocation schemes (error indicators, residual-based refinement) and their impact on accuracy, convergence, and computational cost.

- Practical PMU issues: Address time synchronization, latency, quantization, angle referencing, and clock drift in the likelihood and physics residual to improve real-world applicability.

- Physical plausibility checks: Add post-hoc or embedded constraints to ensure estimated parameters and states satisfy operational limits and energy conservation (e.g., monotonicity/positivity constraints, bound enforcement).

- Online/continual learning: Develop methods for streaming updates, forgetting control, and safe rapid adaptation during evolving grid conditions without retraining from scratch.

- Benchmarking protocols and reproducibility: Establish standardized datasets, scenarios, metrics (MAPE, coverage, CRPS), and open-source implementations to enable fair and replicable comparisons across methods.
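
Several of the points above call for reporting MAPE, credible-interval coverage, and CRPS. As a minimal sketch of what such a report could compute, assuming posterior predictive draws are available as plain lists of floats, the following uses only the Python standard library. The function names (`mape`, `interval_coverage`, `crps_from_samples`) are hypothetical. The CRPS estimator shown is the common sample-based form E|X - y| - 0.5 E|X - X'|, which is not confirmed to be the evaluation procedure used in the paper.

```python
import statistics


def mape(y_true, y_pred):
    """Mean absolute percentage error in percent (assumes no zero targets)."""
    return 100.0 * statistics.fmean(
        abs((t - p) / t) for t, p in zip(y_true, y_pred)
    )


def _quantile(sorted_vals, q):
    """Linear-interpolation quantile of an already sorted list, 0 <= q <= 1."""
    pos = q * (len(sorted_vals) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (pos - lo) * (sorted_vals[hi] - sorted_vals[lo])


def interval_coverage(y_true, samples, level=0.95):
    """Fraction of targets that fall inside the central credible interval.

    samples[i] holds the posterior predictive draws for target y_true[i].
    A well-calibrated model should give a value close to `level`.
    """
    tail = (1.0 - level) / 2.0
    hits = 0
    for t, draws in zip(y_true, samples):
        s = sorted(draws)
        if _quantile(s, tail) <= t <= _quantile(s, 1.0 - tail):
            hits += 1
    return hits / len(y_true)


def crps_from_samples(y, draws):
    """Sample-based CRPS estimate for one target: E|X - y| - 0.5 * E|X - X'|.

    Lower is better. The double sum is O(n^2) in the number of draws.
    """
    n = len(draws)
    term1 = statistics.fmean(abs(x - y) for x in draws)
    term2 = sum(abs(a - b) for a in draws for b in draws) / (n * n)
    return term1 - 0.5 * term2


# Toy illustration with made-up numbers (not results from the paper).
targets = [0.0, 10.0]
posterior_draws = [[-1.0, 0.0, 1.0], [-1.0, 0.0, 1.0]]
print(interval_coverage(targets, posterior_draws))
print(crps_from_samples(0.0, [-1.0, 1.0]))
```

Reporting coverage alongside CRPS separates two questions: whether the credible intervals contain the truth as often as claimed, and how sharp the predictive distribution is around it.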
