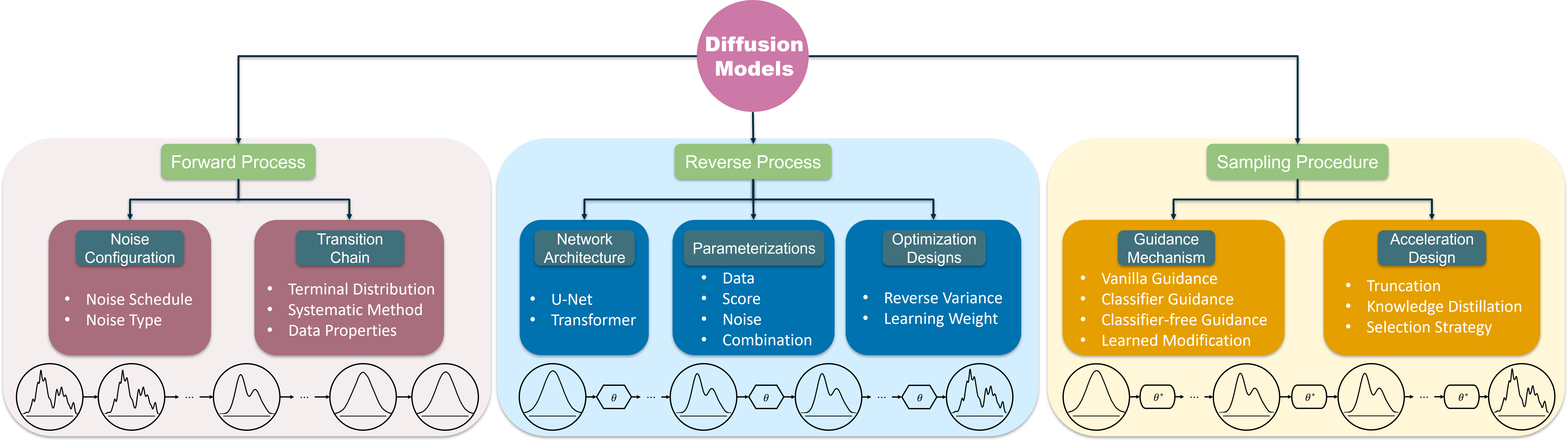

- The paper provides a comprehensive survey of the design fundamentals of diffusion models, analyzing forward, reverse, and sampling processes.

- It examines methodological choices such as noise scheduling and network architecture parameterizations that critically impact model performance.

- The survey highlights practical implications for optimized data generation and sets the stage for future research in generative modeling.

On the Design Fundamentals of Diffusion Models: A Survey

Introduction to Diffusion Models

Diffusion models are a promising family of generative models that produce novel data by systematically adding and removing noise within a learning framework. They apply a sequence of noise perturbations and learn to invert them, thereby learning the data distribution for generation. Although earlier surveys cover generative models broadly, diffusion models warrant focused scrutiny of their foundational design. This paper reviews three core components, namely the forward process, the reverse process, and the sampling procedure, and offers insights into implementation efficiency and potential improvements.

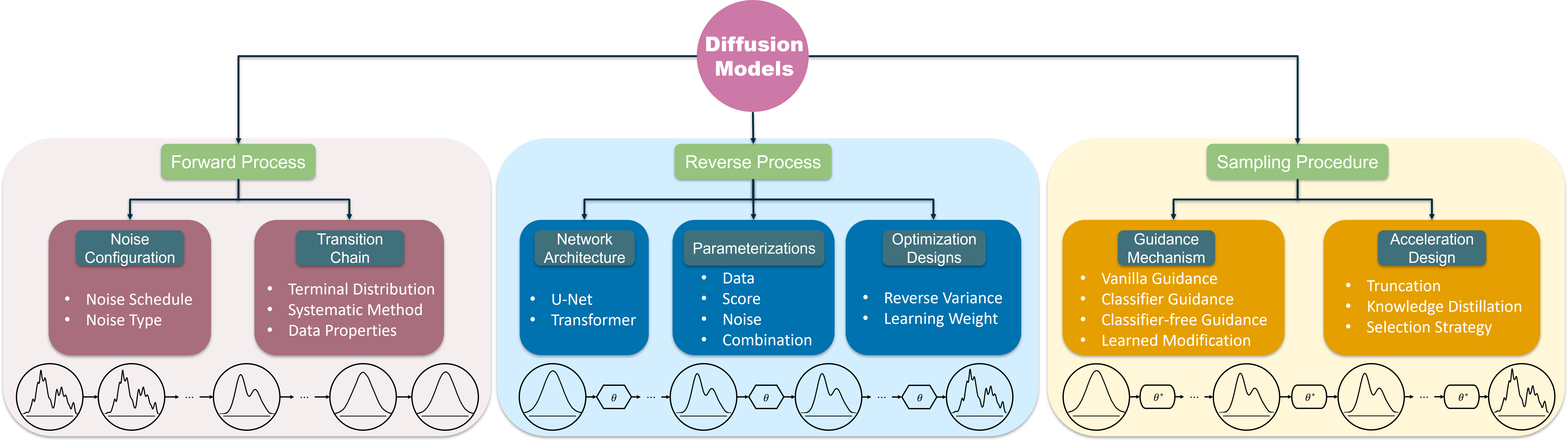

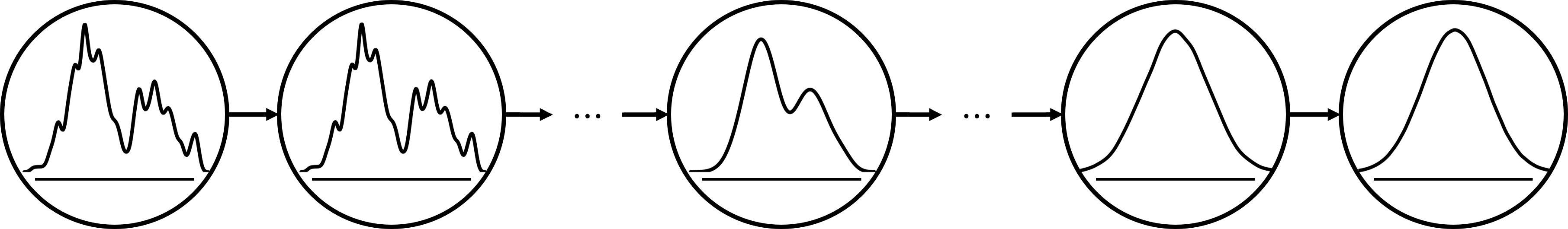

Figure 1: The overview of diffusion models. The forward process, reverse process, and sampling procedure constitute the core components responsible for noise manipulation, network training, and data generation respectively.

Preliminaries of Diffusion Models

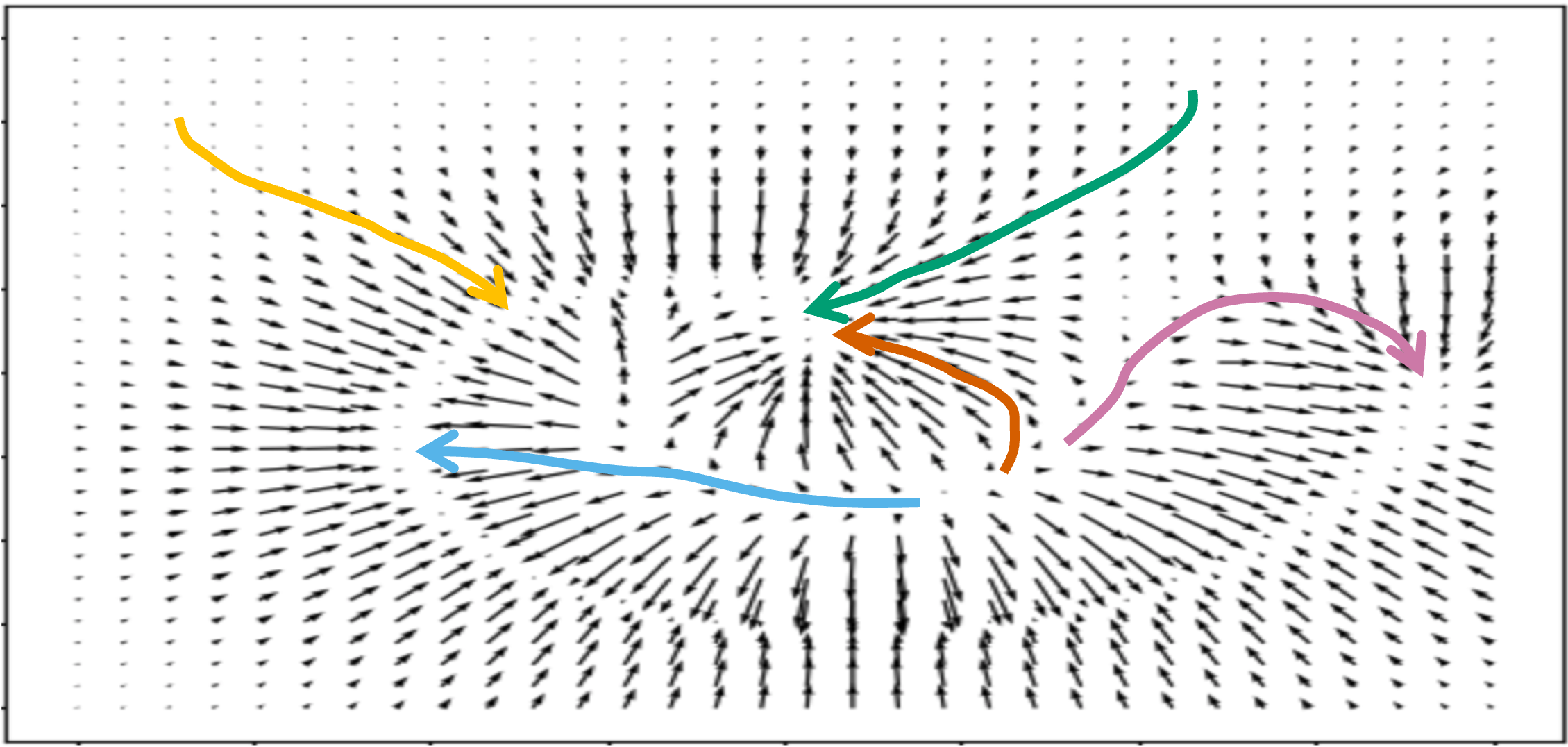

Diffusion models perturb and then denoise samples along a chain indexed by timesteps.

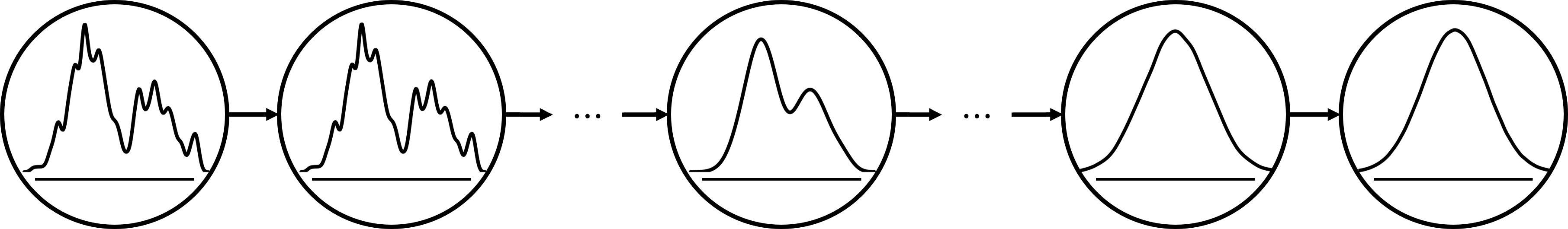

The Forward Process

The forward process starts from a data sample and progressively adds noise to it over a fixed number of timesteps. The overall perturbation factorizes into a sequence of forward transitions:

$$p(x_T \mid x_0) = p(x_1 \mid x_0) \cdots p(x_t \mid x_{t-1}) \cdots p(x_T \mid x_{T-1}) = \prod_{t=1}^{T} p(x_t \mid x_{t-1})$$

Figure 2: The forward process perturbs the original distribution by adding noise through a sequence of transitions across multiple timesteps.
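The chain of forward transitions can be sketched in a few lines. This is a minimal illustration, assuming (the summary does not specify the transition kernel) a Gaussian, DDPM-style transition in which each step scales the sample by `sqrt(1 - beta_t)` and adds noise with variance `beta_t`. The names `forward_chain` and `signal_scale`, the scalar data, and the linear range of `beta` values are all hypothetical choices made for illustration.

```python
import math
import random

def forward_chain(x0, betas, rng):
    """Run the forward transitions one step at a time.

    Assumed transition: x_t = sqrt(1 - beta_t) * x_{t-1} + sqrt(beta_t) * eps,
    with eps drawn from a standard normal distribution.
    """
    xs = [x0]
    for beta in betas:
        eps = rng.gauss(0.0, 1.0)
        xs.append(math.sqrt(1.0 - beta) * xs[-1] + math.sqrt(beta) * eps)
    return xs

def signal_scale(betas):
    """Factor that multiplies x_0 inside x_T: prod_t sqrt(1 - beta_t)."""
    return math.sqrt(math.prod(1.0 - b for b in betas))

# Illustrative linear schedule over T steps (values are an assumption).
T = 1000
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]

rng = random.Random(0)
trajectory = forward_chain(3.0, betas, rng)  # [x_0, x_1, ..., x_T]
remaining_signal = signal_scale(betas)       # close to 0 after many steps
```

Under these assumptions, the contribution of the original sample to `x_T` shrinks toward zero, which is why the end of the chain can be treated as nearly pure noise.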

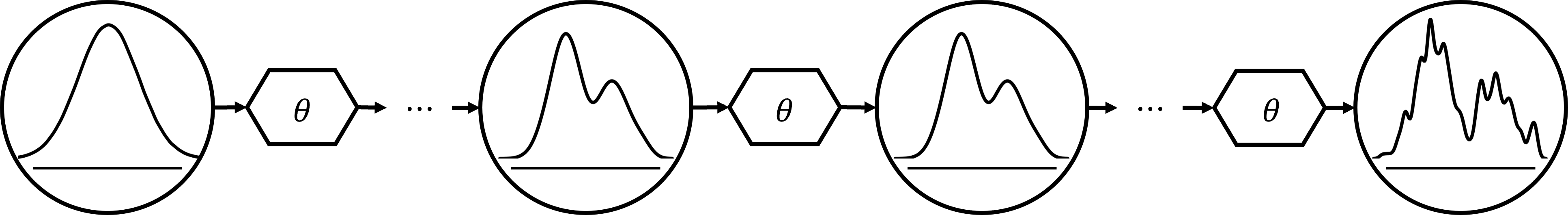

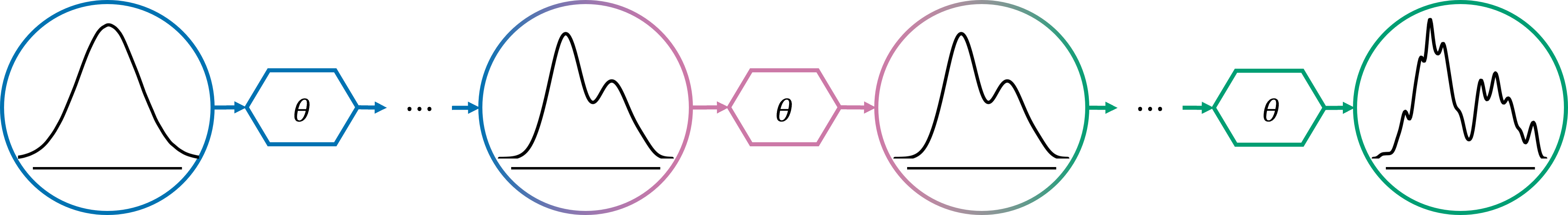

The Reverse Process

Conversely, the reverse process trains a denoising network to remove the added noise step by step, transforming perturbed samples back toward their original states:

$$p_\theta(x_0) = p(x_T)\, p_\theta(x_{T-1} \mid x_T) \cdots p_\theta(x_0 \mid x_1) = p(x_T) \prod_{t=1}^{T} p_\theta(x_{t-1} \mid x_t)$$

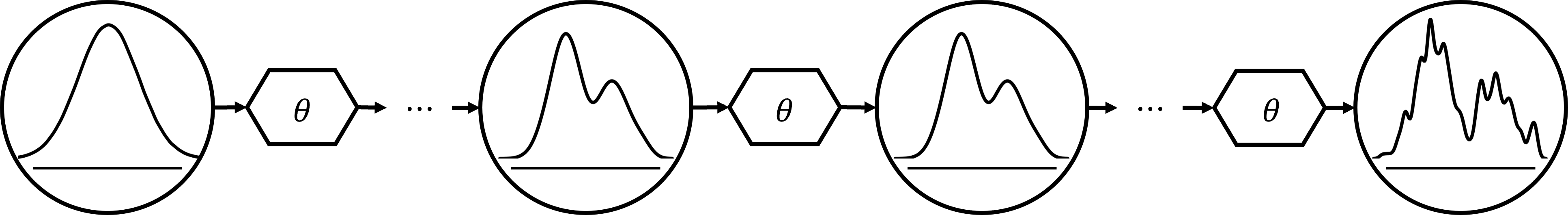

Figure 3: The reverse process trains a neural network to iteratively remove noise added by the forward process.
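One common way to train such a network is to have it predict the noise that was added to a sample. The sketch below illustrates that objective under stated assumptions: Gaussian forward transitions, a noise-prediction parameterization, and a plain mean squared error. None of these is confirmed by this summary as the survey's single recommended choice. The function names and the scalar toy data are hypothetical, and `oracle` stands in for a trained network.

```python
import math
import random

def cumulative_signal(betas):
    """Return abar_t = prod_{s <= t} (1 - beta_s) for every step t."""
    out, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        out.append(running)
    return out

def noise_prediction_loss(predict_noise, x0_batch, abar, rng):
    """Mean squared error between the true and the predicted noise.

    Each sample is noised in closed form at a random step t:
    x_t = sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * eps.
    """
    total = 0.0
    for x0 in x0_batch:
        t = rng.randrange(len(abar))
        eps = rng.gauss(0.0, 1.0)
        x_t = math.sqrt(abar[t]) * x0 + math.sqrt(1.0 - abar[t]) * eps
        total += (predict_noise(x_t, t) - eps) ** 2
    return total / len(x0_batch)

T = 100
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]
abar = cumulative_signal(betas)
data = [2.0] * 64  # toy dataset: every sample equals 2.0

def oracle(x_t, t):
    """Exact noise predictor for this toy dataset (stand-in for a network)."""
    return (x_t - math.sqrt(abar[t]) * 2.0) / math.sqrt(1.0 - abar[t])

oracle_loss = noise_prediction_loss(oracle, data, abar, random.Random(0))
zero_loss = noise_prediction_loss(lambda x_t, t: 0.0, data, abar, random.Random(0))
```

Here the exact predictor drives the loss to numerical zero, while a predictor that always outputs zero does not. In practice `predict_noise` would be a neural network whose parameters are updated by gradient descent on this loss.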

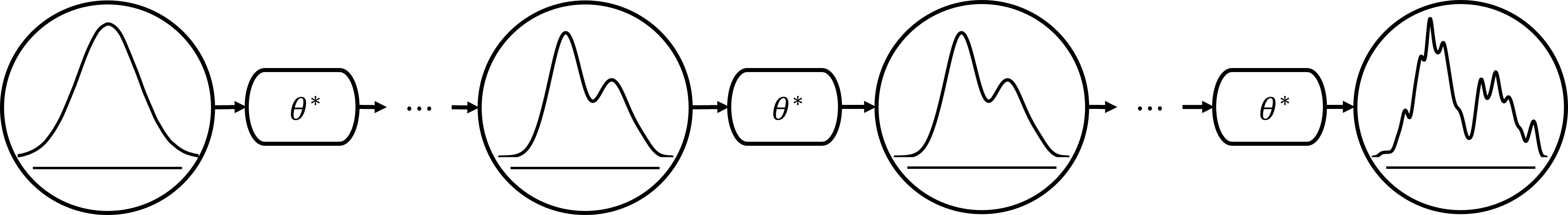

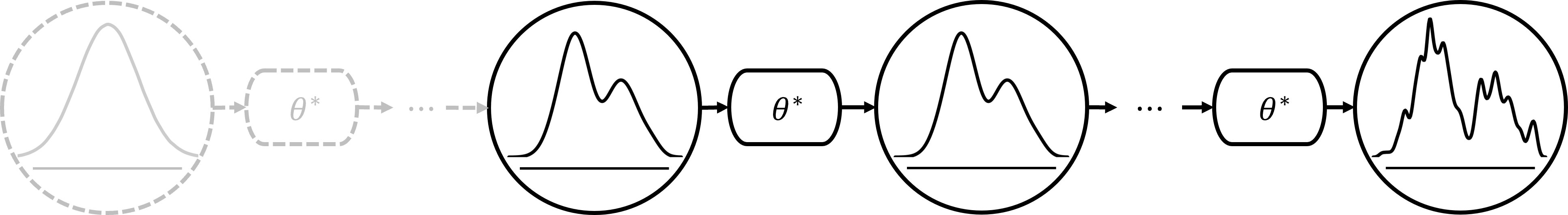

The Sampling Procedure

After training, the sampling procedure uses the optimized network to generate novel data by following a denoising path that mirrors the reverse process:

$$p_{\theta^*}(x_0) = p(x_T)\, p_{\theta^*}(x_{T-1} \mid x_T) \cdots p_{\theta^*}(x_0 \mid x_1) = p(x_T) \prod_{t=1}^{T} p_{\theta^*}(x_{t-1} \mid x_t)$$

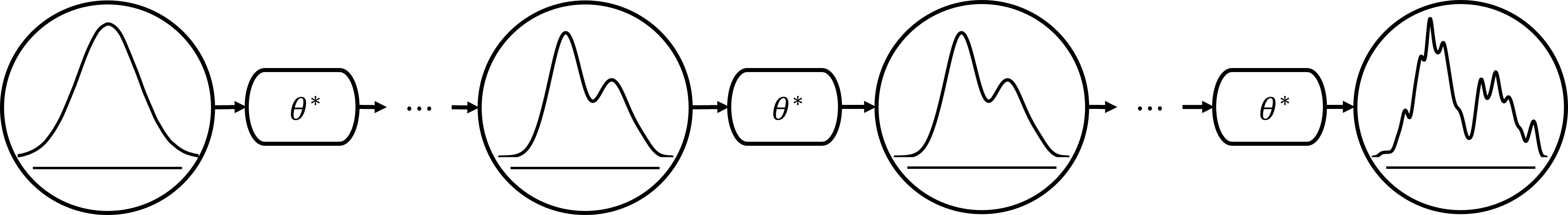

Figure 4: The sampling procedure utilizes the trained denoising network and mirrors the transitions of the reverse process.
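The sampling chain can be sketched as a loop that starts from noise and applies one learned transition per timestep. The example assumes a DDPM-style ancestral update with step variance `beta_t`, which is one option among several and is not stated in this summary. `ancestral_sample` and `point_mass_predictor` are hypothetical names. The predictor is a placeholder for a trained network and is exact only for a toy data distribution concentrated at the single value `MU`.

```python
import math
import random

def cumulative_signal(betas):
    """Return abar_t = prod_{s <= t} (1 - beta_s) for every step t."""
    out, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        out.append(running)
    return out

def ancestral_sample(predict_noise, betas, rng):
    """Draw x_T from N(0, 1), then apply the learned transitions down to x_0."""
    abar = cumulative_signal(betas)
    x = rng.gauss(0.0, 1.0)  # x_T ~ p(x_T)
    for t in reversed(range(len(betas))):
        eps_hat = predict_noise(x, t)
        # Mean of the assumed Gaussian transition p(x_{t-1} | x_t).
        mean = (x - betas[t] / math.sqrt(1.0 - abar[t]) * eps_hat) / math.sqrt(1.0 - betas[t])
        # No fresh noise is added on the final step.
        noise = rng.gauss(0.0, 1.0) if t > 0 else 0.0
        x = mean + math.sqrt(betas[t]) * noise
    return x

T = 200
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]
abar = cumulative_signal(betas)
MU = 2.0  # toy data distribution: all mass at this value

def point_mass_predictor(x_t, t):
    """Exact noise estimate when every data sample equals MU."""
    return (x_t - math.sqrt(abar[t]) * MU) / math.sqrt(1.0 - abar[t])

sample = ancestral_sample(point_mass_predictor, betas, random.Random(0))
```

With this exact predictor the final step maps any `x_1` back to `MU`, so the loop recovers the toy data point. A real network only approximates the noise, and the quality of the generated sample depends on that approximation.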

Design Aspects of Diffusion Models

The survey then examines the main design choices within each of the three components:

Forward Process Design

The design of the forward process requires decisions regarding noise schedules and the types of noise to be applied.
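To make the effect of a noise schedule concrete, the sketch below compares two widely used schedules: a linear schedule and a cosine schedule. Choosing these two, along with their constants (`beta_start`, `beta_end`, `s`, `max_beta`), is an assumption for illustration, since this summary does not name specific schedules. The quantity compared is the cumulative signal `abar_t`, the product of `1 - beta` up to step `t`, which measures how much of the original sample survives.

```python
import math

def linear_betas(T, beta_start=1e-4, beta_end=0.02):
    """Noise variances that grow linearly from beta_start to beta_end."""
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]

def cosine_betas(T, s=0.008, max_beta=0.999):
    """Noise variances derived from a squared-cosine cumulative signal curve."""
    def f(u):
        return math.cos((u + s) / (1.0 + s) * math.pi / 2.0) ** 2
    betas = []
    for t in range(1, T + 1):
        ratio = f(t / T) / f((t - 1) / T)
        # Clip to avoid a variance of exactly 1 at the end of the chain.
        betas.append(min(1.0 - ratio, max_beta))
    return betas

def cumulative_signal(betas):
    """Return abar_t = prod_{s <= t} (1 - beta_s) for every step t."""
    out, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        out.append(running)
    return out

T = 1000
abar_linear = cumulative_signal(linear_betas(T))
abar_cosine = cumulative_signal(cosine_betas(T))

# Fraction of the original signal left halfway through the chain.
mid_linear = abar_linear[T // 2 - 1]
mid_cosine = abar_cosine[T // 2 - 1]
```

With these constants the cosine schedule retains noticeably more signal at the midpoint than the linear one, so information is destroyed more gradually. This is the kind of trade-off that a schedule choice controls.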

Reverse Process Design

Reverse process design focuses on refinements that improve the denoising direction learned by the network, such as the network architecture and its parameterization.

Sampling Procedure Design

Work on the sampling procedure focuses on adaptation strategies that make generation more effective.

Conclusion

This survey analyzes the design fundamentals of diffusion models, covering the forward process, the reverse process, and the sampling procedure, along with recent advances in each. With these design areas clearly identified, continued work on optimizing individual components holds promise for addressing open challenges and for improving real-world applicability across a range of fields.

The study is intended to encourage future research on diffusion models, including new architectural paradigms, compositional frameworks, and systematic design approaches, while keeping regulatory concerns about their societal effects in view. As diffusion models continue to shape the generative modeling landscape, ongoing evaluative studies remain important.