- The paper presents a unified GeneRec architecture combining instruction, editing, and creation modules to innovate beyond static retrieval.

- It demonstrates personalized micro-video recommendations through techniques like CLIP-based thumbnail selection and AI-driven style transfer.

- The study outlines a roadmap for integrating deep user interaction, fidelity checks, and regulatory measures in next-generation recommender systems.

Generative Recommendation: A Comprehensive Analysis of the GeneRec Paradigm

Introduction and Motivation

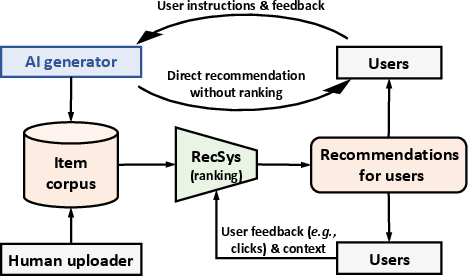

Conventional retrieval-based recommender systems (RS) match user profiles against a static corpus of human-generated items. This paradigm, while effective, is constrained by the coverage of the item corpus and by the passivity of user feedback. The rapid maturation of AI-generated content (AIGC) and large language models (LLMs) offers a way past these limits through flexible, on-demand content generation and direct, instruction-driven interaction with users. The paper "Generative Recommendation: Towards Next-generation Recommender Paradigm" proposes GeneRec, a unified generative recommendation architecture that redefines both what an item is and how users interact with the system.

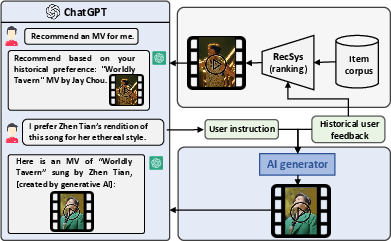

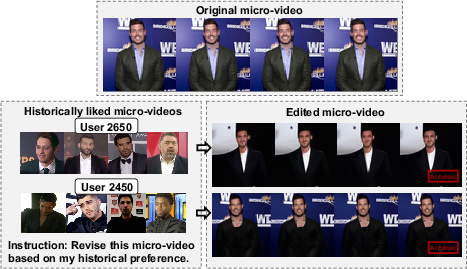

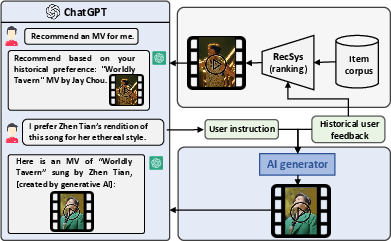

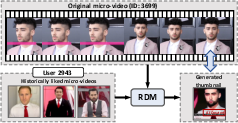

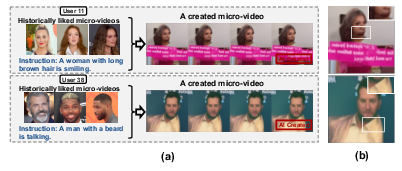

Figure 1: An example of using generative AI to interact with users and generate new items in the micro-video domain.

The GeneRec Architecture

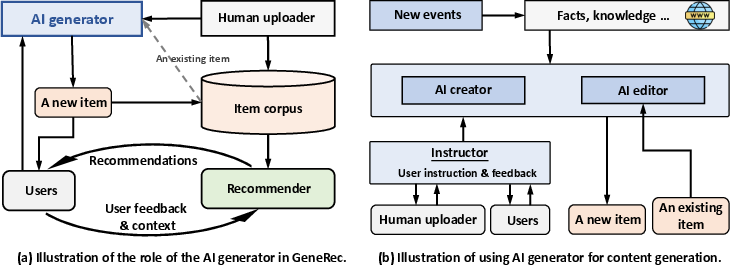

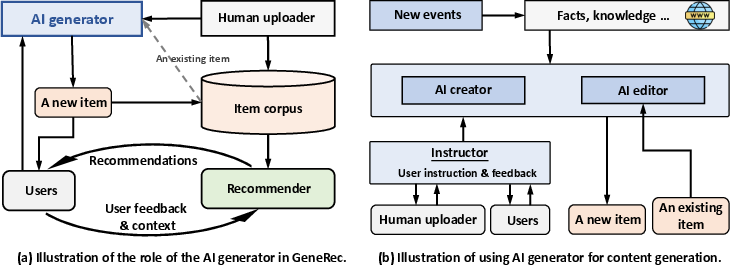

GeneRec instantiates the generative recommender paradigm through three principal modules:

- Instructor: Processes multimodal user instructions and implicit/explicit feedback, determining when and how content generation should be triggered.

- AI Editor: Repurposes or edits existing items based on individual preference, leveraging both user histories and specific instructions.

- AI Creator: Synthesizes entirely new items, conditioned on user intent (both implicit and explicit) and external knowledge.

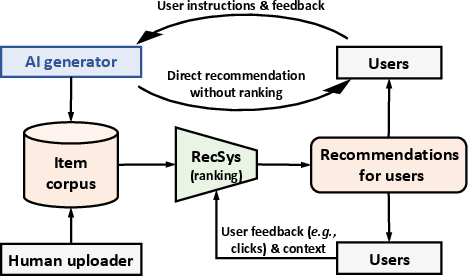

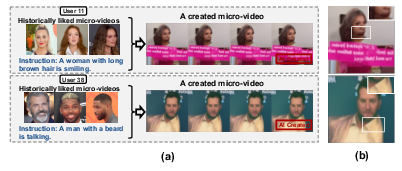

Figure 2: Illustration of the GeneRec paradigm. The AI generator takes user instructions and feedback to generate personalized content, which can be directly recommended or stored for future ranking alongside human-generated items.

This architecture closes a loop between the user and an AI generator, a loop that retrieval-only pipelines lack. Personalized content, whether edited from an existing item or created from scratch, can bypass traditional item ranking when the user explicitly requests it or after repeated negative signals on corpus items.
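The routing decision described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the keyword lists, the `NEGATIVE_LIMIT` threshold, and the names `UserState`, `Action`, and `route` are all assumptions, and a real instructor would use an LLM rather than keyword matching to interpret instructions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    RETRIEVE = "retrieve"  # rank existing corpus items as usual
    EDIT = "edit"          # AI editor repurposes an existing item
    CREATE = "create"      # AI creator synthesizes a new item


@dataclass
class UserState:
    instruction: Optional[str] = None  # explicit request, if any
    consecutive_negatives: int = 0     # recent skips/dislikes on corpus items


# Hypothetical threshold and keyword lists, chosen only for illustration.
NEGATIVE_LIMIT = 3
EDIT_WORDS = ("edit", "shorten", "restyle", "crop")
CREATE_WORDS = ("create", "generate", "make me")


def route(state: UserState) -> Action:
    """Pick retrieval, editing, or creation for the next recommendation."""
    text = (state.instruction or "").lower()
    if any(word in text for word in CREATE_WORDS):
        return Action.CREATE
    if any(word in text for word in EDIT_WORDS):
        return Action.EDIT
    if state.consecutive_negatives >= NEGATIVE_LIMIT:
        # Assumption: repeated rejection of corpus items triggers creation.
        return Action.CREATE
    return Action.RETRIEVE
```

An explicit instruction takes priority over implicit signals here; that ordering is a design choice of the sketch, not something the source specifies.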

Figure 3: A demonstration of GeneRec showing interactions between users/human producers and AI generators, and illustrating workflows for repurposing and creation.

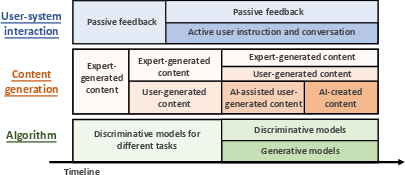

Roadmap and Evolutionary Perspective

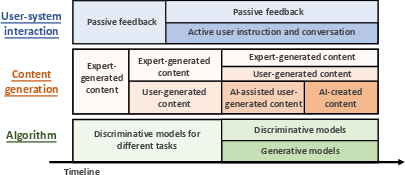

GeneRec presents a comprehensive roadmap for generative RS evolution along three axes:

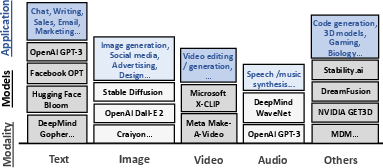

- User-System Interaction: Transitioning from passive feedback (clicks, dwell time) to multimodal conversational interfaces employing LLMs. This will facilitate in-depth, rapid elicitation of user needs and richer preference modeling.

- Content Generation: A three-phase evolution from expert-generated to user-generated to AI-generated content, in which AIGC first augments and then partly supplants manual item creation.

- Algorithmic Advances: Convergence of discriminative and generative models, advancing to unified frameworks that can handle retrieval, repurposing, and open-ended creation, all informed by LLM-style architectures.

Figure 4: A potential roadmap for the GeneRec paradigm from user interaction, content generation, and algorithmic innovation perspectives.

Fidelity, Evaluation, and Trustworthiness

The authors stress that AIGC's flexibility heightens the need for fidelity checks, which they treat as mandatory for any practical deployment. The trustworthiness requirements for GeneRec cover:

- Bias and fairness mitigation

- Privacy preservation given the use of implicit/explicit user data

- Safety filtering (e.g., toxicity, shilling attacks)

- Authenticity verification (to counter hallucination/misinformation)

- Legal compliance (including copyright adjudication and domain-specific regulations)

- Identifiability (distinction between human-/AI-generated content via watermarking or forensic tools)
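One way to picture how such checks gate a generated item is a list of predicates that must all pass before release. The sketch below is illustrative only: the item fields (`ai_generated_label`, `toxicity_score`), the 0.2 threshold, and the function names are assumptions, and only two of the listed concerns are shown.

```python
from typing import Any, Callable, Dict, List

Item = Dict[str, Any]           # hypothetical item representation
Check = Callable[[Item], bool]  # returns True when the item passes


def has_ai_label(item: Item) -> bool:
    # Identifiability: generated items must carry an AI-generated marker.
    return item.get("ai_generated_label") is True


def below_toxicity_limit(item: Item) -> bool:
    # Safety: the score is assumed to come from an external classifier.
    # A missing score defaults to 1.0, so unscored items fail the check.
    return float(item.get("toxicity_score", 1.0)) < 0.2


def failed_checks(item: Item, checks: List[Check]) -> List[str]:
    """Return the names of all failed checks (empty list means releasable)."""
    return [check.__name__ for check in checks if not check(item)]
```

Returning the names of failed checks, rather than a single boolean, makes it possible to log which requirement blocked an item.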

Additionally, GeneRec evaluates generation on two sides: item-side (objective metrics such as Fréchet Video Distance (FVD) for videos, plus content relevance) and user-side (explicit and implicit satisfaction signals, retention, etc.).
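For reference, FVD is commonly defined as the Fréchet distance between two Gaussians fitted to features of real and generated videos, extracted by a pretrained video network. The symbols below are notation introduced here, not taken from the source: $\mu_r, \Sigma_r$ are the mean and covariance of real-video features and $\mu_g, \Sigma_g$ those of generated-video features.

```latex
\mathrm{FVD} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
```

Lower values indicate that the generated videos are statistically closer to the real ones in feature space.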

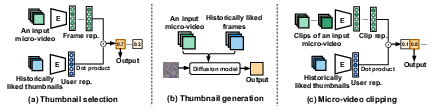

Application Demonstrations in Micro-video Recommendation

The feasibility study operationalizes the GeneRec modules in the context of micro-video recommendation:

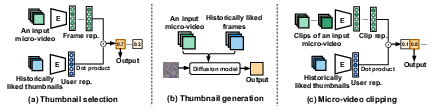

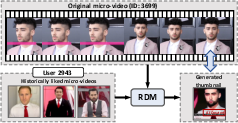

- Personalized Thumbnail Selection and Generation: CLIP-based zero-shot selection picks the frames that best align with a user's history, while a retrieval-augmented diffusion model (RDM) generates higher-quality, user-aligned thumbnails that outperform static baselines.

Figure 5: Illustration of the implementation of editing tasks in the AI editor—thumbnail selection, thumbnail generation, and micro-video clipping.

Figure 6: Cases of personalized thumbnail selection by CLIP.
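The idea behind CLIP-based thumbnail selection can be sketched as a nearest-neighbor lookup in embedding space. The sketch assumes that frame embeddings and embeddings of the user's previously liked items have already been computed by a CLIP-style encoder; representing the user as the mean of those history embeddings is an assumption made here, and the paper's exact scoring may differ.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mean_vector(vectors: List[Vector]) -> List[float]:
    """Element-wise mean of equally sized vectors."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def select_thumbnail(frames: List[Vector], history: List[Vector]) -> int:
    """Return the index of the frame most similar to the user's history centroid."""
    user = mean_vector(history)
    scores = [cosine(frame, user) for frame in frames]
    return max(range(len(frames)), key=scores.__getitem__)
```

For example, with toy two-dimensional embeddings, `select_thumbnail([[1, 0], [0, 1], [0.7, 0.7]], [[0, 1], [0.1, 0.9]])` picks the second frame, since the history centroid points almost entirely along the second axis.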

Figure 7: Cases of personalized thumbnail generation.

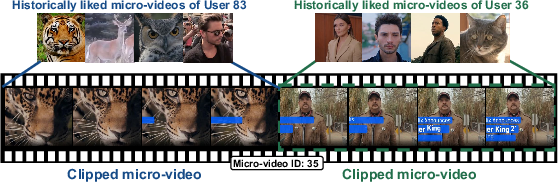

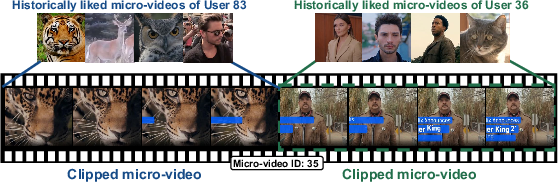

- Micro-video Clipping: CLIP representations are used to locate and extract the segments of a longer video that best match a user's preferences.

Figure 8: Case study of micro-video clipping via CLIP.
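Once each frame has a relevance score for a given user (for instance a CLIP-based similarity), clipping reduces to finding the best contiguous window. The following is a minimal sketch under that assumption; the fixed window length and the function name `best_clip` are hypothetical, and the source does not state how the paper chooses segment boundaries.

```python
from typing import List, Tuple


def best_clip(scores: List[float], length: int) -> Tuple[int, int]:
    """Return (start, end) of the highest-scoring window; end is exclusive."""
    if not 0 < length <= len(scores):
        raise ValueError("length must be between 1 and len(scores)")
    window = sum(scores[:length])
    best_sum, best_start = window, 0
    # Slide the window one frame at a time, updating the sum incrementally.
    for start in range(1, len(scores) - length + 1):
        window += scores[start + length - 1] - scores[start - 1]
        if window > best_sum:
            best_sum, best_start = window, start
    return best_start, best_start + length
```

With per-frame scores `[0.1, 0.2, 0.9, 0.8, 0.3]` and a window of two frames, the function returns `(2, 4)`, the span covering the two highest-scoring adjacent frames.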

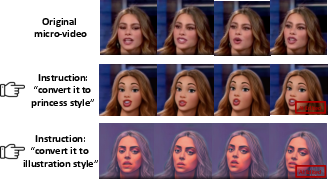

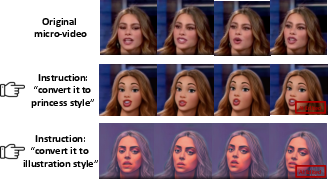

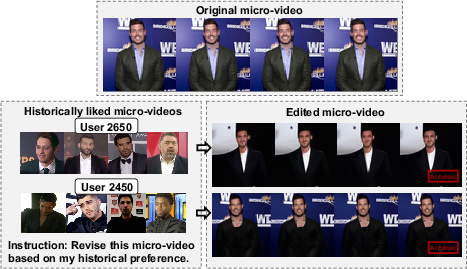

- Style Transfer and Content Editing: VToonify and MCVD (Masked Conditional Video Diffusion) enable both explicit (instruction-driven) and implicit (preference-driven) video repurposing, supported by quantitative improvements (Cosine@K, FVD) and qualitative case studies.

Figure 9: Examples of personalized micro-video style transfer via VToonify.

Figure 10: Case study of personalized micro-video content revision via MCVD (User_Emb).

- Personalized Video Creation: Single-turn and multi-turn instructions drive MCVD to synthesize new micro-videos, although with notable current limitations in visual fidelity and domain coverage due to dataset and modeling constraints.

Figure 11: Case study of personalized micro-video content creation via MCVD (User_Emb).

Domain-General Implications and Future Research Directions

The GeneRec paradigm generalizes across multiple domains: news, fashion, music, image, and video. Its modularity allows fidelity checks and generation routines to be tailored to each vertical (e.g., bias detection in news, style and realism in fashion, copyright in music). The approach also opens new research directions.

Comparative Perspective

GeneRec differs from existing conversational recommenders in three ways: (a) robust instruction following powered by LLMs, (b) generative content workflows rather than retrieval alone, and (c) joint optimization for relevance, trust, and compliance. Unlike generic AIGC, GeneRec builds on established RS user modeling strategies for implicit and explicit preference extraction and integrates generative and discriminative pathways.

Conclusion

GeneRec represents a systemic shift in recommender paradigms, tightly integrating content generation, nuanced user-system communication, and trustworthy recommendation. The presented experiments show both the opportunities and the technical gaps of current AIGC for personalization. As generative models and LLMs continue to scale in capability and domain adaptation, GeneRec's vision points toward user-defined, instruction-driven, and multimodal recommender systems. The paradigm motivates foundational research in fidelity assessment, legal and ethical compliance, unified generative architectures, and advanced user modeling.