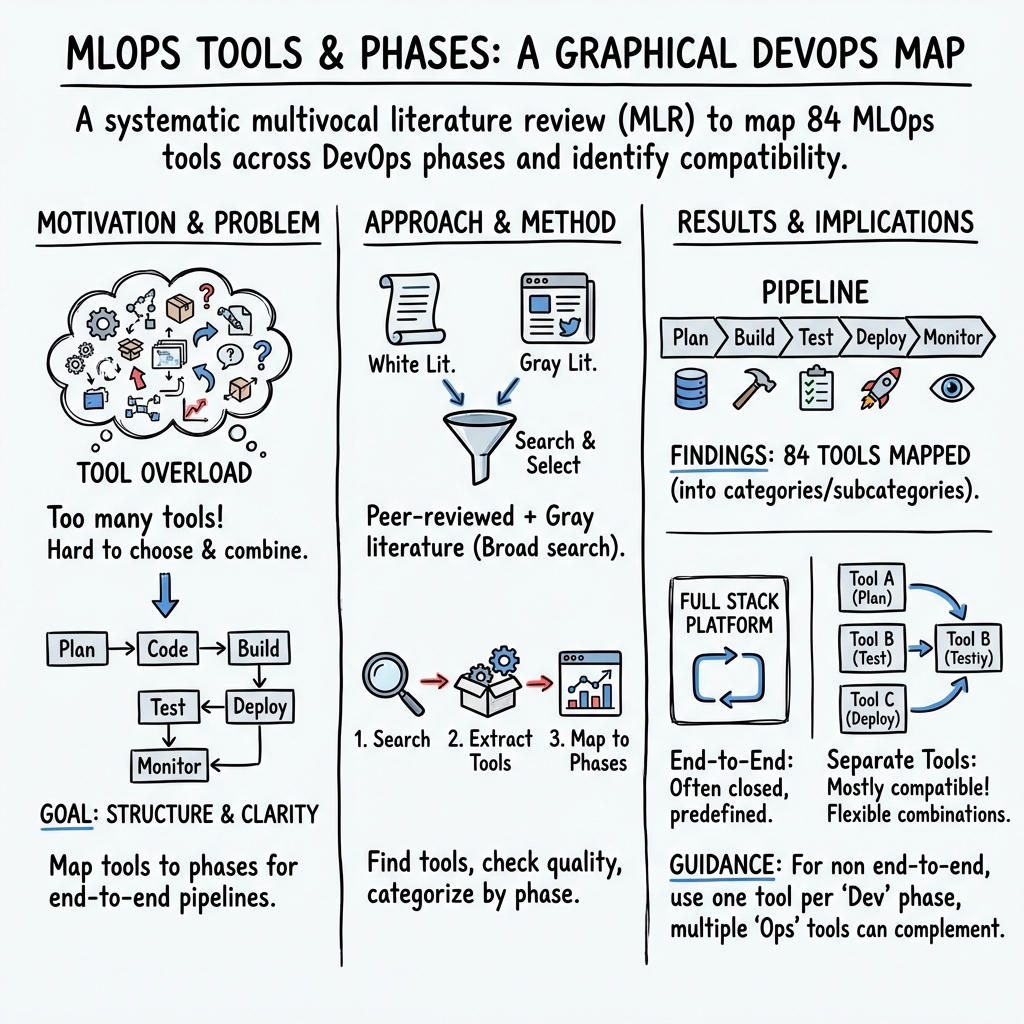

Toward End-to-End MLOps Tools Map: A Preliminary Study based on a Multivocal Literature Review

Abstract: MLOps tools enable continuous development of machine learning, following the DevOps process. Many MLOps tools are available on the market; however, their sheer number often creates confusion about which tool is most appropriate for each DevOps phase. To overcome this issue, we conducted a multivocal literature review mapping 84 MLOps tools, identified from 254 primary studies, onto the DevOps phases, highlighting their purpose and possible incompatibilities. The result of this work will be helpful to both practitioners and researchers as a starting point for future investigations on MLOps tools, pipelines, and processes.

Glossary

- AIOps: Application of AI/ML techniques to automate and enhance IT operations (e.g., monitoring, anomaly detection, remediation). "Keywords: MLOps, AIOps, DevOps, Artificial Intelligence, Machine Learning"

- Backward snowballing: Literature review technique that identifies new studies by examining references in selected papers. "We applied backward snowballing to the academic literature to identify relevant primary studies from the references of the selected sources."

- Cohen's kappa coefficient: Statistical measure of inter-rater agreement that accounts for chance agreement. "The application of the inclusion criteria performed by the author had an almost perfect agreement (Cohen's kappa = 0.870)."

- Configuration Management: The discipline of maintaining consistency of a system’s attributes and configurations across its lifecycle. "Configuration Management: tools used to perform configuration management, i.e., establishing consistency of a product's attributes throughout its life."

- Construct validity: The extent to which a study accurately measures the theoretical constructs it intends to measure. "Construct validity. Construct validity concerns the extent to which the study's object of investigation accurately reflects the theory behind the study, according to a reference."

- Continuous Development (CD): In this context, tools and practices that build deployable software/services or systems that deploy other services. "Continuous Development (CD): based on the DevOps classic definition, CD includes those tools which create a single software package or a service."

- Continuous Integration (CI): Automated practices/tools for validating code, and in MLOps also validating data and models. "Continuous Integration (CI): based on the DevOps classic definition, CI includes the tools used for testing and validating code and components."

- Continuous Testing (CT): Automation focused on retraining and serving models continuously as part of the pipeline. "Continuous Testing (CT): tools used to automatically retrain and serve the models [10]."

- DataOps: Collaborative practices and tooling to streamline and automate data lifecycle operations. "(\"ml-ops\" OR \"ml ops\" OR mlops OR \"machine learning ops\" OR \"machine learning operations\" OR dataops) AND (tool OR application) AND"

- End-to-End Full Stack MLOps tools: Platforms that can implement a complete ML pipeline on their own. "End-to-End Full Stack MLOps tools: includes tools that can be used to create a full pipeline singularly."

- External Validity: The degree to which study findings generalize beyond the specific context examined. "External Validity. External validity is related to the generalizability of the results of our multivocal literature review."

- Feature engineering: The process of transforming raw data into informative input variables for models. "perform feature engineering"

- FeatureStore: A system for managing, versioning, and serving ML features for training and inference. "Not essentials (Training Orchestration, Explainable AI, FeatureStore)"

- Gray literature: Non–peer-reviewed sources such as blogs, white papers, videos, and forums used in reviews. "we included 254 PS (51 from the white literature and 203 from the gray literature)"

- Infrastructure Provisioning: Automating the creation and management of infrastructure resources. "Infrastructure Provisioning: tools used for the process of provisioning or creating infrastructure resources."

- Inter-rater agreement: The level of consistency between independent reviewers’ decisions. "to evaluate the quality of the inter-rater agreement before involving the third author, we calculated Cohen's kappa coefficient."

- ML Lifecycle: The set of activities around experiments, dataset versioning, and model management. "ML Lifecycle: tools used for experiment tracking, dataset versioning, and model management of ML models."

- MLOps: Practices and tools that integrate ML workflows with DevOps to manage the end-to-end model lifecycle. "MLOps (Machine Learning Operations) is a practice that combines the best practices of software engineering and data science to manage the end-to-end lifecycle"

- Model governance: Processes and controls for managing model versions, performance, and compliance. "MLOps also helps with model governance and management."

- Model serving: Deploying a trained model as a service or API for use in production systems. "model serving"

- Multivocal Literature Review (MLR): A systematic review method that includes both peer-reviewed and gray literature. "Systematic Multivocal Literature Review (MLR) methodology"

- Orchestration tools: Systems that coordinate and automate the execution of containerized tasks and workflows. "Orchestration Tools: tools used to automate the process of coordination of containers."

- Overfitting: When a model learns noise from training data and performs poorly on unseen data. "overfitting and bias"

- Replication package: Publicly shared data and materials enabling others to reproduce the study’s results. "These results are documented in the replication package 11."

- Snowballing: Using the references or citations of papers to discover additional relevant studies. "Snowballing refers to using the reference list of a paper or the citations to the paper to identify additional primary studies [6]."

- White literature: Peer-reviewed academic publications used as formal evidence in reviews. "we included 254 PS (51 from the white literature and 203 from the gray literature)"
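
The Cohen's kappa entry above describes a measure of inter-rater agreement that corrects for chance. As a minimal sketch of how such a coefficient can be computed, the snippet below implements the standard formula kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected by chance. The function name `cohens_kappa` and the include/exclude decisions are hypothetical illustrations, not the study's data or tooling.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)

    # Observed agreement: share of items on which both raters chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each label, the product of the two raters' marginal
    # proportions, summed over all labels.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical label everywhere; agreement is total.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical inclusion decisions by two reviewers on ten candidate sources.
reviewer_1 = ["include"] * 5 + ["exclude"] * 5
reviewer_2 = ["include"] * 4 + ["exclude"] * 6

print(round(cohens_kappa(reviewer_1, reviewer_2), 3))  # 0.8
```

In this made-up example the reviewers agree on 9 of 10 sources (p_o = 0.9) and chance agreement is p_e = 0.5, giving kappa = 0.8. On the commonly cited Landis and Koch scale, values above 0.80 are labelled "almost perfect", which is consistent with how the quoted value of 0.870 is described.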
