- The paper introduces a novel credit assignment mechanism by integrating predictive coding with forward-forward processing to update neural weights locally.

- It achieves comparable classification performance to backpropagation methods while excelling in generative image reconstruction.

- The method leverages energy-efficient operations ideal for analog and neuromorphic hardware, promising scalable and sustainable AI systems.

The Predictive Forward-Forward Algorithm

Introduction

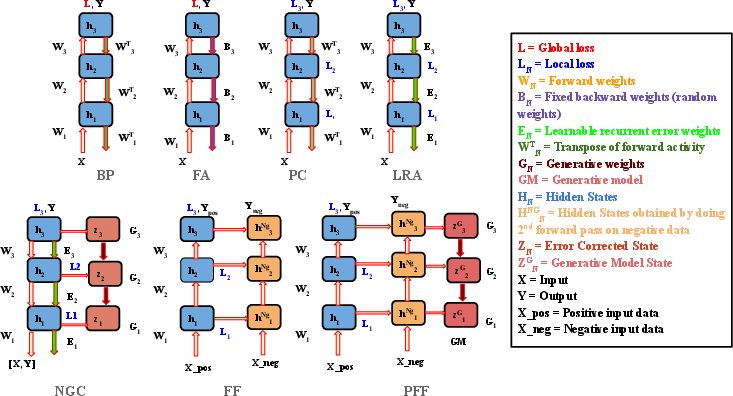

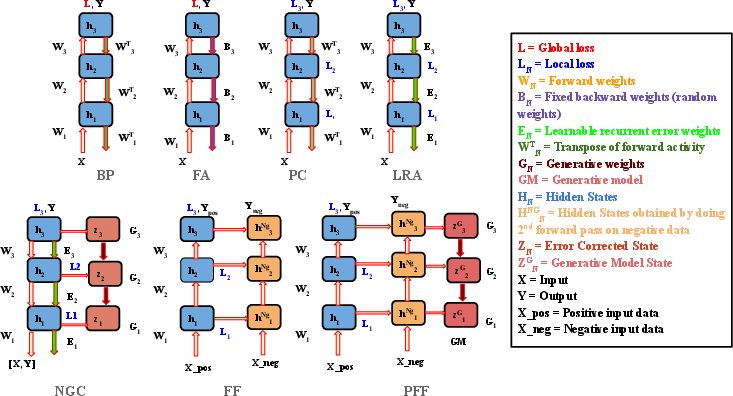

"The Predictive Forward-Forward Algorithm" (2301.01452) introduces an approach to credit assignment in neural systems called the predictive forward-forward (PFF) algorithm. The authors propose a dynamic recurrent neural system that learns a directed generative circuit alongside a representation circuit. The system needs no backpropagation-based scheme: it uses only forward passes to propagate learning signals and update synapses. The paper positions PFF as a biologically plausible model that could help explain how biological neurons learn without explicit feedback connections.

Predictive Forward-Forward Learning

PFF extends the forward-forward (FF) algorithm with elements of predictive coding (PC) to form a stochastic neural system. The system consists of two neural circuits, a representation circuit and a generative circuit, which learn a classifier and a generative model concurrently.

Forward-Forward Learning Rule

In the PFF algorithm, learning is driven by the "goodness" of a layer's neural activities, which indicates whether an incoming signal comes from the target distribution. Goodness is computed from an empirical quantity such as the sum of squared neural activities, and it is converted into the probability that the data belongs to the positive class. Synaptic weights are adjusted locally, which makes the system well suited to sparse computation on analog or neuromorphic hardware.
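The goodness measure described above can be illustrated with a minimal sketch. It assumes the logistic form commonly used with forward-forward learning, where the probability of the positive class is a sigmoid of the goodness minus a threshold. The threshold `theta`, the function names, and the example activity vectors are illustrative assumptions, not values taken from the paper.

```python
import math

def goodness(activities):
    """Sum of squared neural activities for a single layer."""
    return sum(a * a for a in activities)

def positive_probability(activities, theta):
    """Logistic probability that the input came from the positive class.

    theta is an assumed goodness threshold (hypothetical value below).
    """
    return 1.0 / (1.0 + math.exp(-(goodness(activities) - theta)))

def local_loss(activities, theta, is_positive):
    """Binary cross-entropy on this layer's own goodness.

    Only this layer's activities are needed, so the signal is local.
    """
    p = positive_probability(activities, theta)
    eps = 1e-12  # guards against log(0)
    return -math.log(p + eps) if is_positive else -math.log(1.0 - p + eps)

# Hypothetical activity vectors: one with high goodness, one with low.
theta = 3.0
high = [1.5, -2.0, 0.5]
low = [0.1, -0.2, 0.1]

print(positive_probability(high, theta))  # above 0.5: looks positive
print(positive_probability(low, theta))   # below 0.5: looks negative
```

Because the loss depends only on one layer's activities, each layer can in principle be trained from its own forward pass without receiving error signals from layers above it.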

Representation and Generative Circuits

The representation circuit uses a recurrent neural network architecture that dynamically updates each layer's activities from top-down, lateral, and bottom-up influences. Its lateral synapses are learnable, which enables competition among neurons within a layer. The generative circuit, by contrast, aims to predict layer activities and is trained with a local Hebbian learning rule akin to those used in predictive coding models.
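The local, error-driven update used by the generative circuit can be sketched as follows. This is a simplified illustration under stated assumptions, not the paper's exact rule: the top-down prediction is linear, there is no activation function or normalization, and the names `predict`, `hebbian_step`, the learning rate `lr`, and the activity vectors are all hypothetical.

```python
def predict(G, z_upper):
    """Linear top-down prediction of the lower layer's activity."""
    return [sum(g * z for g, z in zip(row, z_upper)) for row in G]

def hebbian_step(G, z_upper, z_lower, lr=0.1):
    """One local update of the generative weights G.

    Each weight changes by (error at the lower unit) * (activity of the
    upper unit), so only quantities available at that synapse are used.
    Returns the updated weights and the error measured before the update.
    """
    error = [t - p for t, p in zip(z_lower, predict(G, z_upper))]
    new_G = [
        [g + lr * e * z for g, z in zip(row, z_upper)]
        for row, e in zip(G, error)
    ]
    return new_G, error

def squared_error(error):
    """Total squared prediction error for one layer."""
    return sum(e * e for e in error)

# Hypothetical activities: 2 upper-layer units predicting 3 lower-layer units.
z_upper = [1.0, 0.5]
z_lower = [0.2, -0.4, 0.9]
G = [[0.0, 0.0] for _ in range(3)]

_, first_error = hebbian_step(G, z_upper, z_lower)
initial_error = squared_error(first_error)

for _ in range(50):
    G, last_error = hebbian_step(G, z_upper, z_lower)
final_error = squared_error(last_error)

print(initial_error, final_error)  # the error shrinks as G adapts
```

The point of the sketch is that the weight change is computed from the prediction error at one layer and the activity of the layer that produced the prediction, with no error signal passed back through other layers.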

Figure 1: Comparison of credit assignment algorithms that relax constraints imposed by backpropagation of errors (BP). Algorithms visually depicted include feedback alignment (FA), predictive coding (PC), local representation alignment (LRA), neural generative coding (NGC), the forward-forward procedure (FF), and the predictive forward-forward algorithm (PFF).

Experiments

The PFF algorithm was evaluated on several image datasets for both classification and generative performance. The experiments showed that PFF performs comparably to classical backpropagation (BP) models on classification tasks while producing high-quality image reconstructions and generative samples. The results also indicate that PFF outperforms other models on certain challenging datasets, which suggests the approach remains effective even when feedback is limited.

Computational Advantages and Implications

PFF relies on forward-pass operations and local learning dynamics, two computational properties that favor low-power analog and neuromorphic circuits. This makes PFF a feasible candidate for systems where conventional backpropagation is computationally prohibitive.

Moreover, integrating generative modeling into PFF supports robust reconstruction and also allows the system to generate its own negative samples, which could play an important role in self-supervised learning paradigms.

Conclusion

The PFF algorithm blends predictive coding with forward-forward processing to learn without backpropagation. Its biologically plausible mechanisms make it promising for future work in AI and cognitive modeling. As researchers continue to explore energy-efficient and scalable neural learning solutions, PFF's principles may inform the development of sustainable and broadly applicable AI systems.