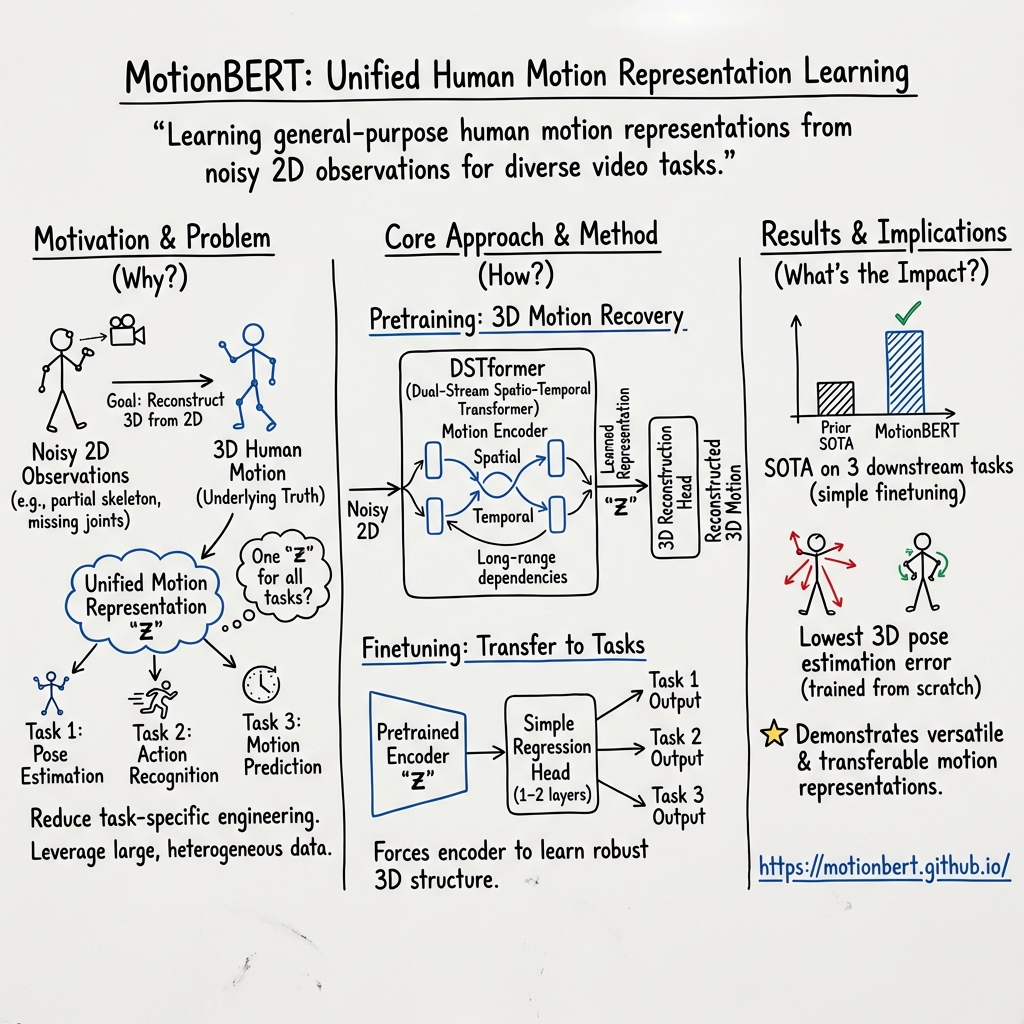

MotionBERT: A Unified Perspective on Learning Human Motion Representations

Abstract: We present a unified perspective on tackling various human-centric video tasks by learning human motion representations from large-scale and heterogeneous data resources. Specifically, we propose a pretraining stage in which a motion encoder is trained to recover the underlying 3D motion from noisy partial 2D observations. The motion representations acquired in this way incorporate geometric, kinematic, and physical knowledge about human motion, which can be easily transferred to multiple downstream tasks. We implement the motion encoder with a Dual-stream Spatio-temporal Transformer (DSTformer) neural network. It could capture long-range spatio-temporal relationships among the skeletal joints comprehensively and adaptively, exemplified by the lowest 3D pose estimation error so far when trained from scratch. Furthermore, our proposed framework achieves state-of-the-art performance on all three downstream tasks by simply finetuning the pretrained motion encoder with a simple regression head (1-2 layers), which demonstrates the versatility of the learned motion representations. Code and models are available at https://motionbert.github.io/

Explain it Like I'm 14

What this paper is about (big picture)

This paper introduces MotionBERT, a way for computers to learn about how people move. The idea is to teach one smart model to understand human motion really well, so it can then handle many different video tasks (like figuring out body positions or movements) without needing to be retrained from scratch each time.

What the researchers wanted to find out

They set out to:

- Build a single “motion brain” that learns general rules of human movement (how our joints bend, how bodies balance, what’s physically possible).

- Train it using lots of different kinds of data so it works across many situations (different people, cameras, and activities).

- Show that once this “motion brain” is learned, it can be quickly adapted to several tasks and work better than previous methods.

How they did it (in simple terms)

Think of watching a stick-figure video of a person, where you only see dots for joints (like shoulders, elbows, knees) on a 2D screen. From that flat view, can you guess the real 3D pose, that is, how the body is arranged in space? That’s hard because a flat image throws away depth (many different 3D poses can look identical in 2D), and because some joints are hidden or noisy (imprecise). The researchers train a model to do exactly this: take messy, partial 2D joint observations and rebuild the full 3D motion.

Here’s the plan, step by step:

Pretraining: learning from incomplete clues

- The model sees many examples where the 2D joint positions are partly missing or noisy.

- Its job is to “fill in the blanks” and recover the true 3D motion.

- By practicing this, it learns the geometry (shape), kinematics (how parts move), and physics (what motions make sense) of human bodies.

You can imagine it like solving a jigsaw puzzle where some pieces are blurry or missing. To solve it, the model must understand the overall picture of human motion.
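The "blurry or missing pieces" idea can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the function names `corrupt_2d` and `reconstruction_loss`, the masking probabilities, and the noise level are all assumptions chosen for readability.

```python
import numpy as np

def corrupt_2d(keypoints_2d, joint_mask_prob=0.15, frame_mask_prob=0.05,
               noise_std=0.02, rng=None):
    """Simulate noisy, partial 2D observations.

    keypoints_2d: array of shape (T, J, 2) with T frames and J joints.
    Returns the corrupted copy and a boolean visibility mask of shape (T, J).
    All probabilities and the noise level are illustrative assumptions.
    """
    rng = np.random.default_rng() if rng is None else rng
    T, J, _ = keypoints_2d.shape
    # Jitter every coordinate to imitate an imprecise 2D detector.
    noisy = keypoints_2d + rng.normal(0.0, noise_std, size=keypoints_2d.shape)
    # Hide individual joints and, less often, entire frames.
    joint_visible = rng.random((T, J)) >= joint_mask_prob
    frame_visible = rng.random((T, 1)) >= frame_mask_prob
    visible = joint_visible & frame_visible
    # Hidden joints are replaced by zeros so the model cannot see them.
    corrupted = np.where(visible[..., None], noisy, 0.0)
    return corrupted, visible

def reconstruction_loss(pred_3d, target_3d):
    """Mean per-joint Euclidean distance between predicted and true 3D joints."""
    return float(np.mean(np.linalg.norm(pred_3d - target_3d, axis=-1)))
```

During pretraining, a model would receive `corrupted` as input and be penalized by something like `reconstruction_loss` against the clean 3D motion; the exact loss terms used in the paper are not described in this summary.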

The motion encoder: a Dual-stream Spatio-temporal Transformer

- “Encoder” means a part of the model that turns input data into a compact, meaningful representation—like a summary that captures the important patterns.

- “Spatio-temporal” means it looks at space (how joints relate to each other at one moment) and time (how they move across frames).

- “Transformer” is a kind of neural network that uses attention—like focusing on the most important joints and frames—to understand relationships.

- “Dual-stream” means it processes motion along two parallel paths: one looks at space first and then time, while the other looks at time first and then space. The model then blends the two results, so it can capture different aspects of movement across space and time.

Together, this helps the model notice long-range relationships—like how a step involves coordinated motion from hips to knees to ankles over several frames.
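To make "spatial" versus "temporal" attention concrete, here is a toy NumPy sketch. It is an illustration under simplifying assumptions, not the released DSTformer: attention uses a single head with no learned projections, the two streams are assumed to apply the spatial and temporal steps in opposite orders, and `w_fuse` is a hypothetical weight matrix that decides how much each stream contributes per joint and frame.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x):
    """Single-head attention over the second-to-last axis of x (..., N, C).

    Queries, keys, and values are all x itself (no learned projections),
    which keeps the sketch short.
    """
    scores = x @ np.swapaxes(x, -1, -2) / np.sqrt(x.shape[-1])
    return softmax(scores, axis=-1) @ x

def spatial_attention(x):
    """x: (T, J, C). Joints attend to each other within each frame."""
    return self_attention(x)

def temporal_attention(x):
    """x: (T, J, C). Each joint attends to itself across frames."""
    return np.swapaxes(self_attention(np.swapaxes(x, 0, 1)), 0, 1)

def dual_stream_block(x, w_fuse):
    """Run two streams in opposite orders and blend them.

    w_fuse: (2C, 2) hypothetical fusion weights producing one score per stream.
    """
    st = temporal_attention(spatial_attention(x))  # space first, then time
    ts = spatial_attention(temporal_attention(x))  # time first, then space
    logits = np.concatenate([st, ts], axis=-1) @ w_fuse  # (T, J, 2)
    alpha = softmax(logits, axis=-1)  # per-token weights that sum to 1
    return alpha[..., :1] * st + alpha[..., 1:] * ts
```

With `w_fuse` set to zeros, both streams receive equal weight, so the output is simply the average of the two streams; a trained model would learn weights that favor one stream or the other depending on the input.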

Fine-tuning: adapting to new tasks quickly

- After pretraining, the model already “gets” human motion.

- To use it for a specific job (a “downstream task”), they add a very small extra part on top (a simple 1–2 layer “regression head”) and train briefly on that task’s data.

- Because the model already understands motion well, this small tweak is enough to get strong results.
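The "small extra part on top" can be illustrated with a toy example. This sketch makes several assumptions: `frozen_encoder` is a stand-in with random fixed weights rather than the real pretrained encoder, the data are synthetic, and the head is fit in closed form with ridge regression while the encoder stays frozen. The paper finetunes the encoder together with the head, so this shows only the simplest variant of the idea.

```python
import numpy as np

rng = np.random.default_rng(0)
T, J, C = 16, 17, 32  # frames, joints, feature size (illustrative values)

# Hypothetical stand-in for pretrained encoder weights (kept fixed below).
W_enc = rng.normal(size=(J * 2, C)) / np.sqrt(J * 2)

def frozen_encoder(seq_2d):
    """Map a (T, J, 2) sequence to (T, C) per-frame features."""
    return np.tanh(seq_2d.reshape(len(seq_2d), -1) @ W_enc)

def fit_linear_head(features, targets, ridge=1e-3):
    """Fit a one-layer regression head with closed-form ridge regression."""
    A = features.T @ features + ridge * np.eye(features.shape[1])
    return np.linalg.solve(A, features.T @ targets)

# Synthetic "downstream task": predict 3 numbers per frame from the features.
seqs = rng.normal(size=(200, T, J, 2))
feats = np.concatenate([frozen_encoder(s) for s in seqs])  # (200 * T, C)
true_head = rng.normal(size=(C, 3))
targets = feats @ true_head

head = fit_linear_head(feats, targets)  # only these C x 3 numbers are trained
pred = feats @ head
```

The point of the sketch is the parameter count: the head has only `C * 3` trainable values, which is tiny compared with an encoder, so adapting it to a new task is fast.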

What they found

- When trained from scratch (starting from random weights, without the pretraining stage), their model achieved the lowest 3D human pose estimation error reported up to that point, meaning it was very accurate at figuring out 3D body positions from video.

- After pretraining, the same model, with only a tiny extra head added, reached state-of-the-art performance on three different human-motion-related tasks.

- This shows the learned motion representations are versatile and powerful.
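This summary does not name the error metric behind "lowest 3D pose estimation error." A common choice in the 3D pose literature is the mean per-joint position error (MPJPE), so the sketch below assumes that metric; the function name `mpjpe`, the root-alignment step, and the choice of joint 0 as the root are illustrative assumptions.

```python
import numpy as np

def mpjpe(pred, target, root=0):
    """Mean per-joint position error after aligning both skeletons at a root joint.

    pred, target: arrays of shape (T, J, 3) in the same units (e.g., millimeters).
    root: index of the joint used for alignment (assumed to be the pelvis here).
    """
    # Subtract the root joint so that global translation does not count as error.
    pred = pred - pred[:, root:root + 1]
    target = target - target[:, root:root + 1]
    # Euclidean distance per joint and frame, averaged over everything.
    return float(np.linalg.norm(pred - target, axis=-1).mean())
```

A lower value means the predicted joints sit closer to the ground-truth joints on average.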

Why this matters

- One model for many jobs: Instead of building and training separate systems for each motion task, you can train one strong “motion understanding” model and adapt it quickly.

- Better performance with less extra training: Because the model already knows motion, it needs only a little fine-tuning to perform very well on new tasks.

- Useful in the real world: This can improve things like animation and special effects, sports analysis, physical therapy, AR/VR avatars, and robots that need to understand or imitate human movement.

In short, MotionBERT is a step toward a general-purpose “motion sense” for machines: it learns how people move from large and varied data sources, then applies that skill across several different tasks with state-of-the-art accuracy.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains uncertain or unexplored based on the paper’s content (title, abstract, and available metadata):

- Data composition and scaling laws: What is the precise mix, size, and diversity of “large-scale and heterogeneous” datasets needed to learn robust motion representations, and how does performance scale with more/less data or different domain mixes?

- 3D supervision reliance: To pretrain “recover 3D from noisy partial 2D,” how much 3D ground truth is required versus pseudo-3D or purely self-supervised signals? Can comparable performance be achieved with reduced 3D supervision?

- Noise/occlusion modeling: Which noise models and partial-observation patterns are used during pretraining, and how does robustness vary across occlusion types, detector noise levels, dropped joints, and missing frames?

- Cross-skeleton interoperability: How are heterogeneous joint sets unified during pretraining, and how well do learned representations transfer across skeleton definitions with different bone topologies and bone-length distributions?

- Viewpoint/camera generalization: Do representations remain invariant/equivariant to camera intrinsics/extrinsics, lens distortions, and viewpoint changes? Is camera-agnostic pretraining feasible without calibration metadata?

- Uncertainty handling: Does the model quantify ambiguity in the 2D-to-3D lifting (e.g., multi-modal depth hypotheses)? How do probabilistic outputs affect downstream performance?

- Long-horizon temporal generalization: How does DSTformer scale to very long sequences, variable frame rates, and irregular sampling? What are limits on temporal context before performance/memory degrade?

- Multi-person and interaction scenarios: Can the representation handle multi-person interactions, inter-person occlusions, and social dynamics, or is it limited to single-person motion?

- Human-object interactions: Does the learned representation capture contact, manipulation, and object-driven kinematics, and can it transfer to tasks involving tools or props?

- Physical plausibility and contact: To what extent does the representation encode dynamics (e.g., momentum, ground contact) and reduce artifacts like foot sliding? Are there physics-based evaluation metrics confirming this?

- Out-of-distribution motions: How does the model handle rare/extreme motions (e.g., acrobatics, dancing, disabilities, assistive devices)? What is the failure mode profile for OOD actions?

- Demographic fairness and body-shape variability: Does performance remain consistent across age, gender, ethnicity, body shape/size, and clothing styles? Are biases introduced by the training data distribution?

- Domain shift and environmental robustness: How well does the representation transfer across indoor/outdoor scenes, varying lighting, motion blur, occlusions, and different camera rigs?

- Downstream task breadth: Beyond the three demonstrated tasks, how transferable is the representation to action recognition, motion prediction, motion synthesis, tracking, and pose refinement?

- Objective design and ablations: How do alternative pretext tasks (e.g., masked motion modeling, temporal ordering, contrastive objectives) compare to 2D-to-3D recovery in representation quality?

- Architecture-specific contributions: Which gains come from DSTformer versus the pretraining objective/data? Are there ablations isolating spatial, temporal, and dual-stream components and their efficiency/accuracy trade-offs?

- Computational efficiency and deployment: What are training/inference costs, memory footprints, and latencies, especially for long sequences and real-time applications or edge devices?

- Robustness to 2D detector quality: How sensitive is pretraining and downstream performance to the choice and quality of 2D keypoint detectors, and can domain adaptation mitigate detector shifts?

- Skeleton retargeting and standardization: Is there a principled process (and code) for mapping across joint sets and bone lengths that preserves kinematic consistency for downstream use?

- Representation interpretability: Which kinematic/physical attributes are linearly decodable from the embedding? Are the features human-interpretable and localized to joints or temporal events?

- Catastrophic forgetting during finetuning: Does finetuning for a specific task erode general motion knowledge, and can multi-task or regularized finetuning preserve generality?

- Confidence calibration and error bounds: Are the outputs calibrated with respect to true errors, and can the system provide per-joint/temporal confidence for downstream decision-making?

- Ethical and privacy considerations: How are privacy risks and potential misuse addressed when training on large-scale human motion data, and are there mechanisms for dataset consent and de-biasing?

Glossary

- 2D observations: two-dimensional measurements (e.g., image-plane keypoints) used as inputs for vision models. "noisy partial 2D observations"

- 3D motion: the three-dimensional movement of the human body over time. "recover the underlying 3D motion"

- 3D pose estimation: inferring 3D joint locations of a human body from visual inputs. "the lowest 3D pose estimation error so far"

- Downstream tasks: target applications to which pretrained representations are transferred and fine-tuned. "transferred to multiple downstream tasks"

- Dual-stream Spatio-temporal Transformer (DSTformer): a Transformer architecture with two parallel streams to model spatial and temporal dependencies in sequences. "Dual-stream Spatio-temporal Transformer (DSTformer)"

- Finetuning: adapting a pretrained model to a specific task with additional training on task-specific data. "finetuning the pretrained motion encoder with a simple regression head (1-2 layers)"

- Heterogeneous data resources: datasets drawn from diverse sources or modalities with varying characteristics and distributions. "heterogeneous data resources"

- Human-centric video tasks: video analysis problems focused on humans, such as pose estimation or action understanding. "human-centric video tasks"

- Kinematic: relating to kinematics, the study of motion without considering forces. "geometric, kinematic, and physical knowledge"

- Motion encoder: a neural module that maps input observations to a compact representation of motion. "a motion encoder is trained to recover the underlying 3D motion"

- Motion representations: learned latent features that capture geometric, kinematic, and physical properties of human movement. "learning human motion representations"

- Pretraining stage: an initial training phase on a proxy task to learn generalizable features before fine-tuning on specific tasks. "a pretraining stage"

- Regression head: a small neural network module that predicts continuous values from learned features. "a simple regression head (1-2 layers)"

- Skeletal joints: discrete keypoints representing human body joints used in skeleton-based modeling. "skeletal joints"

- Spatio-temporal relationships: dependencies that span both spatial structure (e.g., across joints) and temporal dynamics (across frames). "long-range spatio-temporal relationships"

- State-of-the-art performance: results that match or exceed the best previously reported methods on a given benchmark. "state-of-the-art performance"

- Trained from scratch: training a model from random initialization without using pretrained weights. "trained from scratch"

Practical Applications

Immediate Applications

Below are actionable applications that can be deployed now by leveraging MotionBERT’s pretrained motion encoder, its robustness to noisy/partial 2D keypoints, and its simple fine-tuning workflow.

- Monocular 3D human pose estimation upgrade (sectors: AR/VR, sports, healthcare, media)

  - Use case: Replace existing 2D→3D lifting modules with the pretrained MotionBERT encoder to reduce error, especially under occlusion.

  - Tools/workflows: Off-the-shelf 2D keypoint detector (e.g., OpenPose, Detectron2, MediaPipe) → MotionBERT encoder → 3D skeleton output → downstream analytics/rendering (Unity/Unreal).

  - Assumptions/dependencies: Quality 2D keypoints; single-person or reliable multi-person tracking; potential scale ambiguity in monocular setups; GPU/accelerated inference for real-time use.

- Label-efficient adaptation to new domains (sectors: industry, academia)

  - Use case: Fine-tune with a 1–2 layer head on small labeled datasets (e.g., warehouse, logistics, retail) to rapidly deploy accurate domain-specific motion analytics.

  - Tools/workflows: Pretrained encoder + minimal labeled samples → quick fine-tune → inference pipeline/SDK.

  - Assumptions/dependencies: Access to pretrained weights; moderate compute for short fine-tunes; some domain shift may require calibration.

- Occlusion-robust pose tracking in crowded scenes (sectors: retail analytics, sports broadcasting, public safety)

  - Use case: Improve skeleton tracking stability when bodies are partially visible or intermittently occluded.

  - Tools/workflows: Multi-person 2D keypoint tracker → MotionBERT encoder for temporal smoothing and 3D lifting → analytics dashboards.

  - Assumptions/dependencies: Reliable identity tracking across frames; camera viewpoints offering enough keypoint signal; privacy-compliant data handling.

- Action/skill recognition via feature transfer (sectors: video analytics, manufacturing QA, sports)

  - Use case: Use MotionBERT’s motion features as inputs to lightweight classifiers for action recognition or skill grading.

  - Tools/workflows: Freeze encoder (or lightly fine-tune) → train linear head for task → deploy as event detector (e.g., unsafe lift detection, exercise rep counting).

  - Assumptions/dependencies: Task-specific labeled clips for training the head; consistent camera placement improves reliability.

- Motion quality assessment and coaching (sectors: fitness, tele-rehabilitation, education)

  - Use case: Real-time posture/form feedback in consumer fitness apps or remote physio sessions using 3D joint angles and kinematic features.

  - Tools/workflows: Smartphone/webcam → 2D keypoints → MotionBERT → compute joint-angle metrics and deviations → on-screen or voice guidance.

  - Assumptions/dependencies: Clinical validation required for medical claims; fairness across body types; on-device or secure cloud processing for privacy.

- MoCap cleanup and 2D-to-3D reconstruction for VFX/games (sectors: media, animation)

  - Use case: Convert reference videos to clean 3D skeletons; fill gaps and denoise MoCap; retarget to character rigs.

  - Tools/workflows: Batch 2D keypoints from editorial footage → MotionBERT → retarget in Blender/Maya/Unreal.

  - Assumptions/dependencies: Skeleton mapping/retargeting setup; consistent frame rates; quality control by artists.

- Ergonomics and workplace safety analytics (sectors: manufacturing, logistics)

  - Use case: Compute risk scores (e.g., RULA/REBA proxies) from 3D joint angles to flag hazardous postures and repetitive strain risks.

  - Tools/workflows: Fixed industrial cameras → 2D keypoints → MotionBERT → risk dashboards and alerts.

  - Assumptions/dependencies: Calibration or anthropometric scaling for absolute angles; environment-specific thresholds; union/privacy compliance.

- HRI perception front-end for robots and cobots (sectors: robotics, warehousing)

  - Use case: More reliable human pose/intent signals for safe interaction and task handovers.

  - Tools/workflows: ROS node wrapping MotionBERT → publish 3D skeletons/intents → robot planner/controller.

  - Assumptions/dependencies: Real-time constraints (latency/throughput); deployment on edge accelerators; lighting and viewpoint variability.

- Privacy-preserving analytics via skeleton-only processing (sectors: policy, enterprise IT)

  - Use case: Replace raw video with anonymized 2D keypoints and 3D skeletons to reduce privacy risk while enabling analytics.

  - Tools/workflows: On-prem 2D keypoint extraction → discard RGB frames → MotionBERT inference on skeleton streams → store only derived metrics.

  - Assumptions/dependencies: Organizational policies allowing skeleton data; robust security; user consent where required.

- Academic baselines and benchmarks (sectors: academia, R&D labs)

  - Use case: Reproducible, strong baseline for 3D pose estimation and related tasks; rapid prototyping of new motion tasks with a unified encoder.

  - Tools/workflows: Use released code/models; plug-in heads for new tasks; cross-dataset evaluation.

  - Assumptions/dependencies: Proper citation and licensing; compute for experiments; dataset access.
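Several of the applications above (coaching, ergonomics, tele-rehabilitation) rely on joint angles computed from the 3D skeleton. A minimal sketch of that step follows, assuming the 3D joint positions are already available as NumPy arrays; the function name `joint_angle_deg` and the joint names in the test case are illustrative, not part of any MotionBERT API.

```python
import numpy as np

def joint_angle_deg(a, b, c):
    """Angle at joint b, in degrees, between the segments b->a and b->c.

    a, b, c: arrays of shape (..., 3), e.g., hip, knee, and ankle positions.
    Works on a single pose or on a whole sequence of shape (T, 3).
    Assumes both segments have nonzero length.
    """
    u = a - b
    v = c - b
    cos = np.sum(u * v, axis=-1) / (
        np.linalg.norm(u, axis=-1) * np.linalg.norm(v, axis=-1)
    )
    # Clip guards against values slightly outside [-1, 1] from rounding.
    return np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))
```

For example, passing hip, knee, and ankle positions gives the knee flexion angle per frame, which an application could compare against a task-specific threshold. Any such threshold would need to be validated for the setting in question.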

Long-Term Applications

These require additional research, scaling, integration, or validation before widespread deployment.

- Foundation model for human motion across tasks (sectors: software, research)

  - Vision: A single motion encoder powering pose estimation, action recognition, motion prediction, and reconstruction with minimal task-specific heads.

  - Dependencies: Larger, more diverse pretraining corpora; standardized skeletons across datasets; robust cross-domain generalization.

- Physically consistent human mesh and contact estimation (sectors: AR/VR, telepresence, sports science)

  - Vision: Extend from joints to full body mesh, contacts, and forces for realistic interaction with virtual/real environments.

  - Dependencies: Integration of physics priors; datasets with contact/force supervision; calibration for scale and ground-plane.

- Multi-person 3D social interaction understanding (sectors: social robotics, safety, retail)

  - Vision: Understand group activities, proxemics, and interpersonal dynamics from 3D motion representations.

  - Dependencies: Training on multi-person 3D datasets; robust association/tracking; bias mitigation across demographics and scenes.

- Edge deployment on mobile/AR glasses (sectors: consumer devices, wearables)

  - Vision: On-device MotionBERT inference for always-on posture tracking and low-latency AR overlays.

  - Dependencies: Model compression (quantization/distillation), efficient 2D keypoint models, hardware NPUs or similar on-device accelerators.

- Imitation learning from human videos to robot skills (sectors: robotics, manufacturing)

  - Vision: Map human 3D motion representations to robot kinematics for learning by demonstration.

  - Dependencies: Cross-embodiment alignment, safety-constrained policy learning, sim-to-real transfer.

- Clinical gait and movement disorder assessment (sectors: healthcare)

  - Vision: Non-invasive screening and longitudinal monitoring (e.g., Parkinson’s, post-stroke rehab) using 3D kinematics.

  - Dependencies: Clinical datasets and validation, regulatory approval, robustness across clinical environments and assistive devices.

- Injury risk prediction and performance optimization (sectors: elite sports, occupational health)

  - Vision: Predict overuse risk and optimize training loads using motion features fused with wearables.

  - Dependencies: Longitudinal datasets linking kinematics to outcomes, biomechanical modeling, user-specific calibration.

- Generative motion synthesis and editing (sectors: media, gaming, virtual humans)

  - Vision: Text-/audio-conditioned motion generation and editing using MotionBERT as a motion prior within diffusion/transformer stacks.

  - Dependencies: Paired multimodal datasets; controllable generative training; evaluation protocols for realism and diversity.

- Cross-species articulated motion modeling (sectors: biology, animal behavior)

  - Vision: Extend DSTformer-like encoders to animals for veterinary assessment and behavior analytics.

  - Dependencies: Animal keypoint detectors and labeled skeletons; species-specific kinematics.

- Policy and standards for skeleton-data privacy and fairness (sectors: policy, compliance)

  - Vision: Standardize collection, storage, and sharing practices for pose data; fairness auditing across body types, attire, and mobility aids.

  - Dependencies: Multi-stakeholder consensus (industry, regulators, academia), impact assessments, compliance tooling.

Notes on feasibility and dependencies

- Data and labels: The approach benefits from large, heterogeneous datasets; label efficiency reduces but does not eliminate the need for high-quality labeled samples for specific tasks.

- 2D keypoint dependency: Many pipelines require a reliable 2D detector; performance degrades with extreme occlusion or poor video quality.

- Scale/metric ambiguity: Monocular 3D pose often lacks absolute scale; applications needing metric accuracy require calibration or anthropometric assumptions.

- Compute and latency: Real-time deployments need GPU/edge acceleration and optimized models; batch analytics can run offline in the cloud.

- Generalization and bias: Cross-domain robustness and fairness must be evaluated, especially in healthcare, safety, and policy-sensitive contexts.

- Licensing and integration: Adoption depends on model/code licenses and ease of integrating the pretrained encoder into existing toolchains (SDKs/APIs, ROS, game engines).
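The scale/metric ambiguity noted above is often handled with an anthropometric assumption. The sketch below shows one simple version of that idea, assuming a single bone length is known in meters (for instance, measured on the subject); the function name `rescale_to_bone_length` and the joint indices are hypothetical.

```python
import numpy as np

def rescale_to_bone_length(skeleton_3d, joint_a, joint_b, known_length_m):
    """Rescale a scale-ambiguous 3D skeleton sequence to metric units.

    skeleton_3d: (T, J, 3) joint positions in arbitrary units.
    joint_a, joint_b: indices of the two joints that bound the reference bone.
    known_length_m: the real length of that bone in meters (an assumption
    supplied by the user, e.g., from a tape measure or population averages).
    """
    bone = np.linalg.norm(
        skeleton_3d[:, joint_a] - skeleton_3d[:, joint_b], axis=-1
    )
    # Use the mean over frames so single-frame jitter has less influence.
    scale = known_length_m / bone.mean()
    return skeleton_3d * scale
```

A single global scale factor preserves joint angles, so angle-based metrics are unaffected; only distances and velocities change. How accurate the result is depends entirely on how well the assumed bone length matches the person in the video.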
