- The paper introduces ITSRN, an implicit transformer framework designed to perform continuous super-resolution on screen content images.

- ITSRN leverages pixel coordinates and implicit position encoding to accurately predict transform weights, preserving sharp edges and high contrast.

- Experimental results on SCI benchmark datasets demonstrate that ITSRN outperforms existing methods with superior PSNR and visual quality.

Implicit Transformer Network for Screen Content Image Continuous Super-Resolution

Introduction

The paper "Implicit Transformer Network for Screen Content Image Continuous Super-Resolution" (2112.06174) presents a novel approach to the super-resolution (SR) of screen content images (SCIs), which are becoming increasingly prevalent due to the widespread use of screen sharing, remote cooperation, and online education. Unlike natural images, SCIs call for an SR method that can enlarge images at arbitrary scales while preserving sharp edges and high contrast. To address this challenge, the paper introduces the Implicit Transformer Super-Resolution Network (ITSRN), which uses an implicit transformer mechanism and an implicit position encoding to achieve continuous SR for SCIs.

Proposed Approach

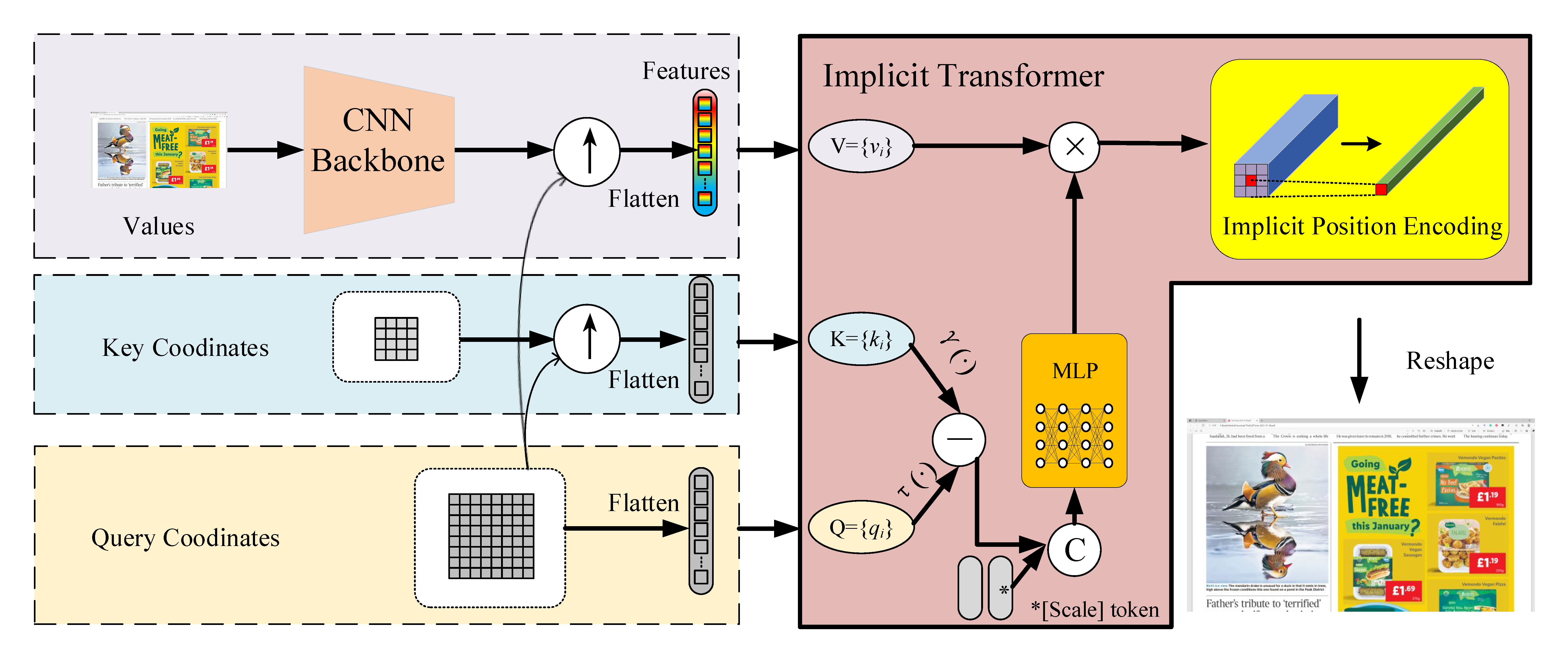

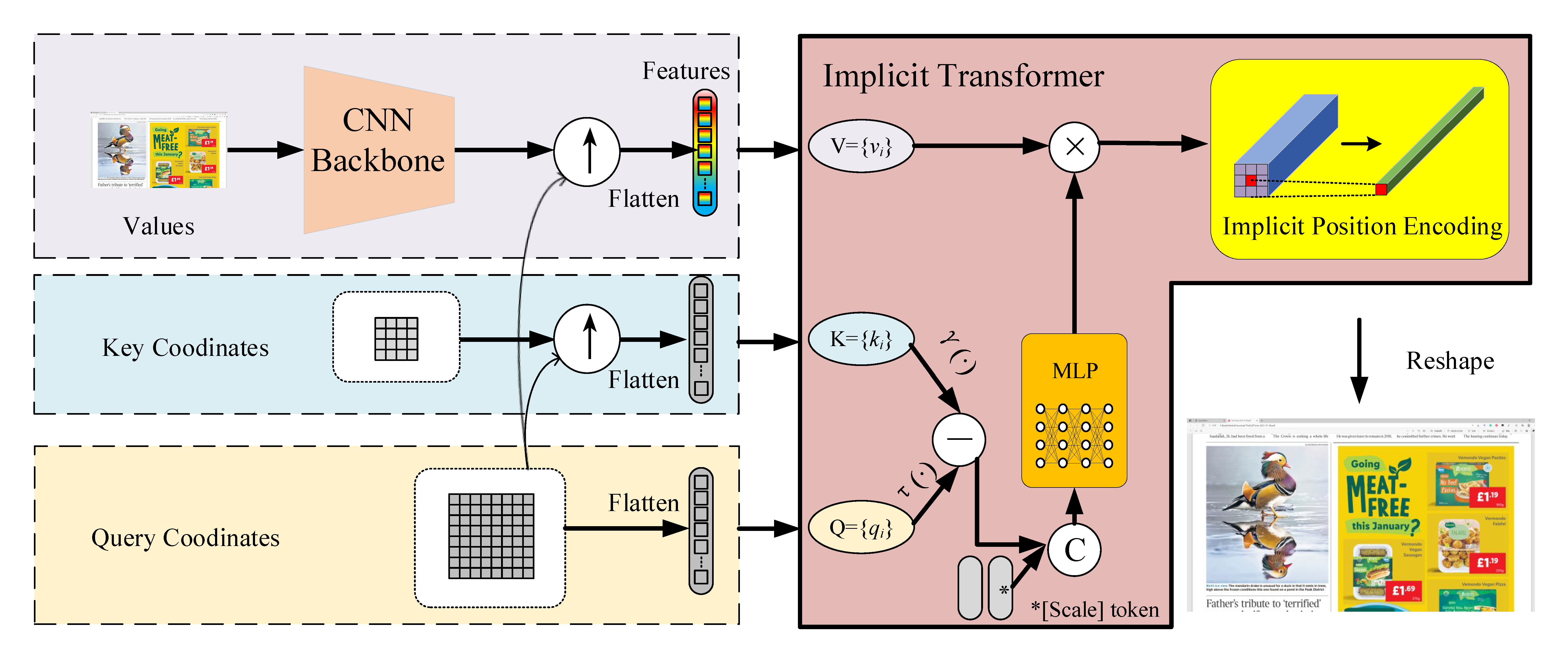

The core contribution of the paper is the ITSRN framework. The framework first extracts pixel features from the input low-resolution (LR) image using a CNN backbone. These features, along with their key coordinates, are upsampled via nearest neighbor interpolation according to the query coordinates. The key idea is an implicit transformer that maps the query and key coordinates to transform weights, which are then combined with the pixel features to predict pixel values. The prediction is further refined by an implicit position encoding that aggregates neighboring pixel values.

Figure 1: The architecture of ITSRN highlights its key components such as CNN backbone, implicit transformer, and position encoding.
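
The coordinate handling described above can be sketched in NumPy. This is a minimal illustration, not the authors' code: the function names `make_coords` and `nearest_lookup`, the normalization of pixel centers to [-1, 1], and the choice to return query-minus-key offsets are all assumptions made for the example.

```python
import numpy as np

def make_coords(n):
    """Centers of n pixels on the normalized interval [-1, 1] (assumed convention)."""
    return -1.0 + (2.0 * np.arange(n) + 1.0) / n

def nearest_lookup(feat, out_h, out_w):
    """Nearest-neighbor upsampling of LR features at arbitrary output size.

    feat: (h, w, c) array of LR pixel features.
    Returns the upsampled features (out_h, out_w, c) and the offset between
    each query coordinate and its nearest key coordinate (out_h, out_w, 2).
    """
    h, w, _ = feat.shape
    key_y, key_x = make_coords(h), make_coords(w)
    qry_y, qry_x = make_coords(out_h), make_coords(out_w)

    # Index of the LR pixel whose cell contains each query coordinate,
    # which is also the LR pixel with the nearest center.
    iy = np.clip(np.floor((qry_y + 1.0) / 2.0 * h).astype(int), 0, h - 1)
    ix = np.clip(np.floor((qry_x + 1.0) / 2.0 * w).astype(int), 0, w - 1)

    up_feat = feat[iy[:, None], ix[None, :]]      # (out_h, out_w, c)
    rel_y = (qry_y - key_y[iy])[:, None]          # (out_h, 1)
    rel_x = (qry_x - key_x[ix])[None, :]          # (1, out_w)
    rel = np.stack(np.broadcast_arrays(rel_y, rel_x), axis=-1)
    return up_feat, rel

# Usage: enlarge a 2x2 feature map to 4x4 (out_h and out_w need not be multiples of h and w).
feat = np.arange(4, dtype=float).reshape(2, 2, 1)
up, rel = nearest_lookup(feat, 4, 4)
```

Because the output size is a free parameter, the same lookup works for non-integer magnification factors, which is what makes a coordinate-based formulation suitable for continuous SR.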

ITSRN distinguishes itself by using pixel coordinates, rather than pixel values, to determine the transform weights. This allows continuous magnification tailored to the distinctive properties of SCIs: thin and sharp edges, little color variance, and high contrast. In addition, ITSRN incorporates a scale token that embeds the global magnification factor to improve the prediction of RGB values.
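
A rough sketch of this idea follows, under stated assumptions: a two-layer MLP with random weights stands in for the implicit transformer, the scale token is taken to be the reciprocal of the magnification factor, and the names `init_mlp` and `implicit_transform` are hypothetical. The paper's actual layer sizes, token definition, and training procedure are not reproduced here.

```python
import numpy as np

def init_mlp(rng, in_dim, hidden, out_dim):
    """Randomly initialized two-layer MLP (illustrative stand-in, untrained)."""
    return {
        "w1": rng.standard_normal((in_dim, hidden)) * 0.1,
        "b1": np.zeros(hidden),
        "w2": rng.standard_normal((hidden, out_dim)) * 0.1,
        "b2": np.zeros(out_dim),
    }

def implicit_transform(params, rel, scale, feat):
    """Predict RGB values from coordinates and features.

    rel:   (n, 2) offsets between query and key coordinates.
    scale: magnification factor, shared by all n queries.
    feat:  (n, c) pixel features looked up for each query.
    Returns (n, 3) predicted RGB values.
    """
    n, c = feat.shape
    token = np.full((n, 1), 1.0 / scale)        # assumed form of the scale token
    x = np.concatenate([rel, token], axis=1)    # MLP input: coordinates only, no pixel values
    hid = np.maximum(x @ params["w1"] + params["b1"], 0.0)
    weights = (hid @ params["w2"] + params["b2"]).reshape(n, c, 3)
    # Apply the per-query transform weights to the per-query features.
    return np.einsum("nc,nck->nk", feat, weights)

rng = np.random.default_rng(0)
params = init_mlp(rng, in_dim=3, hidden=16, out_dim=8 * 3)
rel = rng.uniform(-0.1, 0.1, size=(5, 2))
feat = rng.standard_normal((5, 8))
rgb = implicit_transform(params, rel, scale=2.0, feat=feat)
```

In this sketch the weights are a function of the coordinates and the scale token alone, so the output is linear in the features for any fixed query position.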

Experimental Results

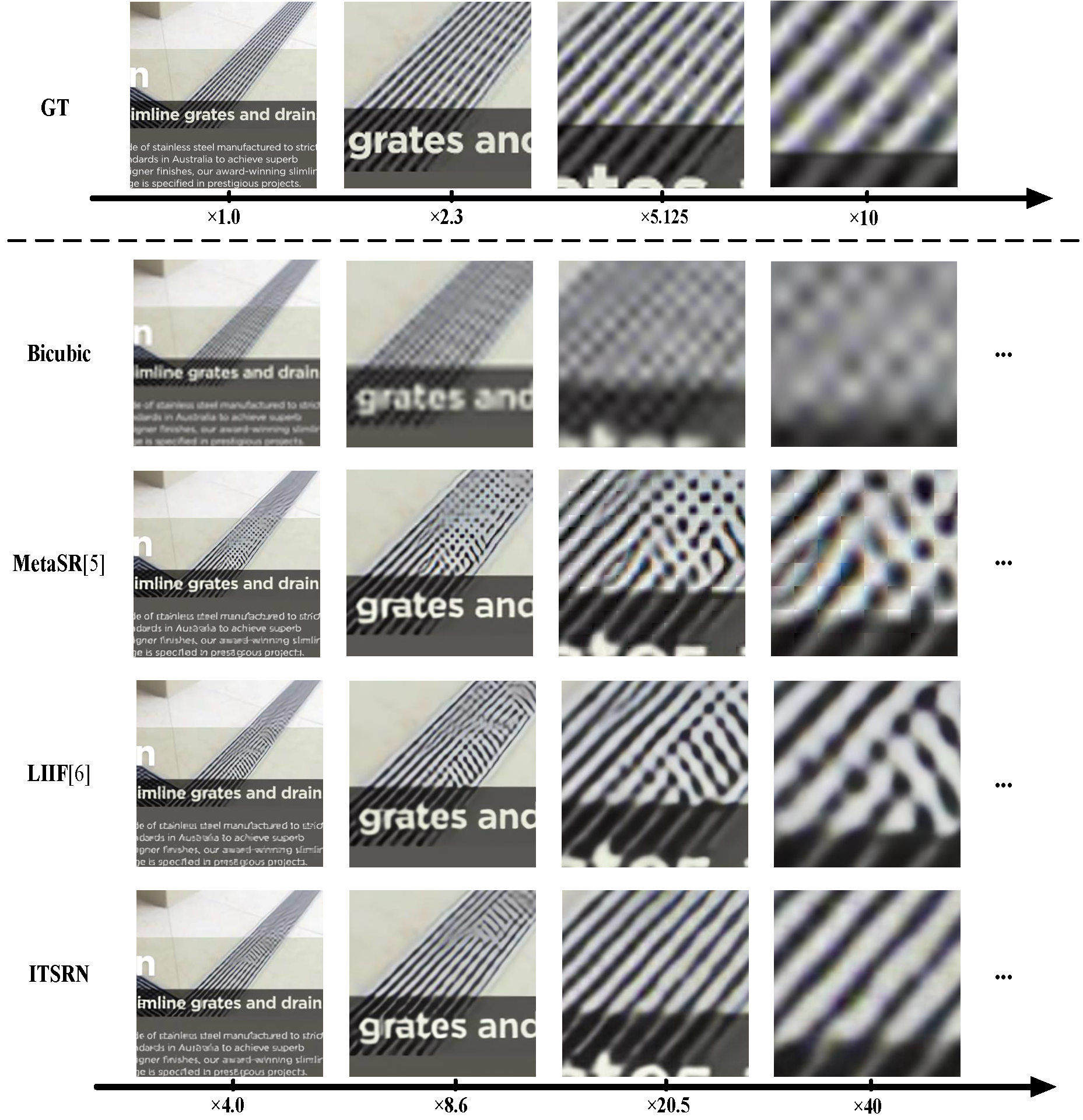

The authors construct two benchmark datasets, SCI1K and SCI1K-compression, to evaluate ITSRN against competing SR methods on uncompressed and compressed SCIs. Experiments show that ITSRN consistently outperforms existing continuous and discrete SR methods and reconstructs fine details more faithfully across a range of magnification scales.

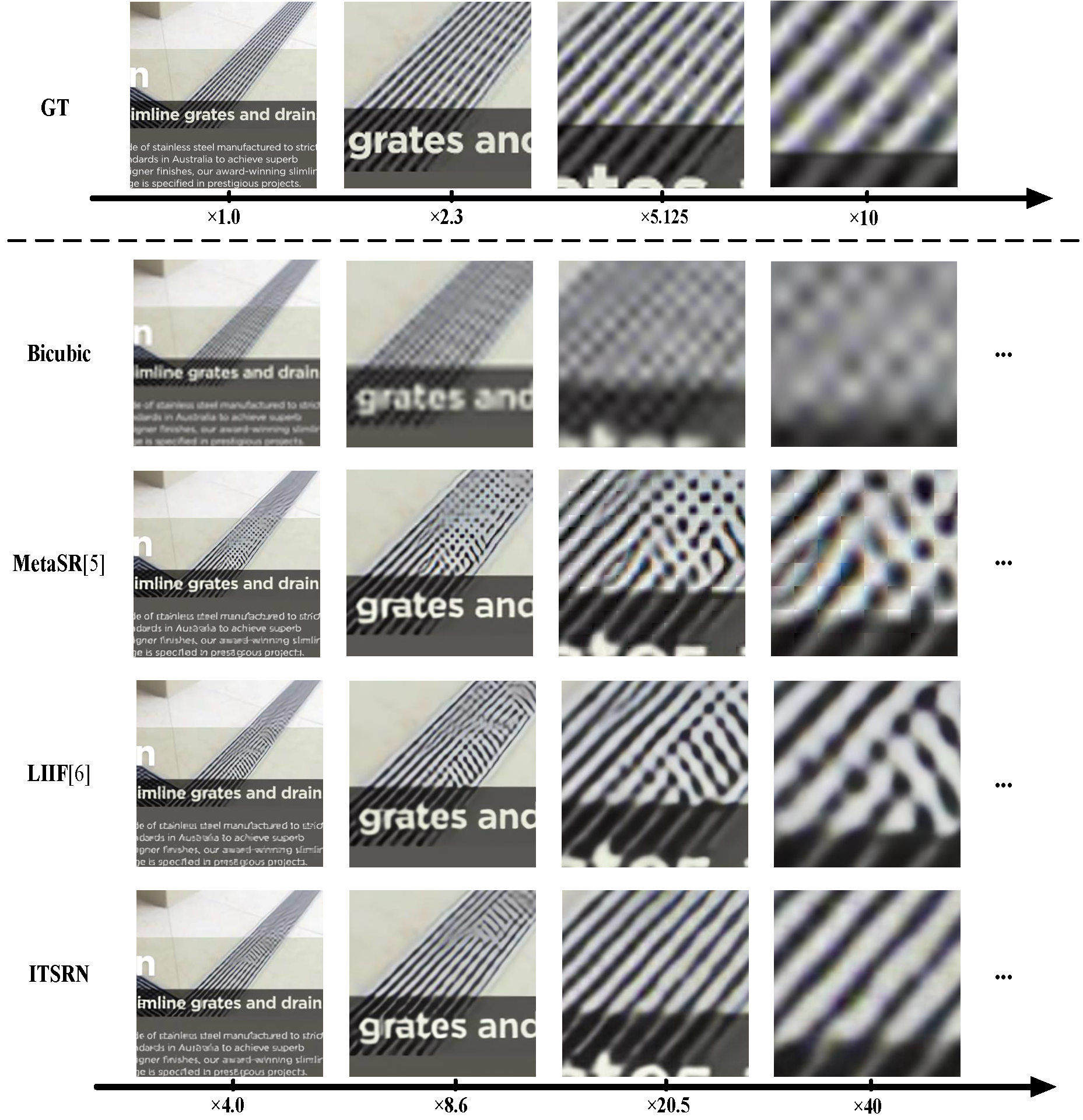

Figure 2: Visual comparison of ITSRN against other state-of-the-art methods in terms of image continuous magnification.

Quantitative analysis across multiple datasets shows that ITSRN achieves higher PSNR than the compared methods, supporting its ability to handle arbitrary-scale SR. The accompanying visual comparisons further illustrate how well ITSRN preserves edge details and contrast in SCIs.
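
For reference, PSNR is computed from the mean squared error between a ground-truth image and its reconstruction. The snippet below is a generic sketch that assumes 8-bit images with a peak value of 255. It does not reproduce the paper's exact evaluation protocol, such as which color channels are used or whether borders are cropped.

```python
import numpy as np

def psnr(ref, test, peak=255.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    ref = np.asarray(ref, dtype=np.float64)
    test = np.asarray(test, dtype=np.float64)
    mse = np.mean((ref - test) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(peak ** 2 / mse)
```

Higher values indicate a reconstruction closer to the ground truth, and identical images give an infinite PSNR.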

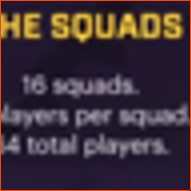

Figure 3: Visual comparison at ×4 SR illustrates superior edge reconstruction by ITSRN compared to other methods.

Implications and Future Work

The paper addresses the unique characteristics of SCIs, providing a method for effective SR applicable in scenarios like remote sharing and wireless display, where preserving image quality is critical. The ability to adapt to screens of various sizes without predefined magnification scales represents a practical advancement.

However, the paper acknowledges potential limitations in real-world applications, noting that the simulated degradation process may differ from the degradations actually encountered during transmission or storage. Future work could develop blind distortion-based SR techniques to make ITSRN more robust and adaptable to real scenarios.

Conclusion

In conclusion, ITSRN advances screen content image SR by enabling continuous-scale magnification while maintaining sharp edges and high contrast. Its consistent performance across the evaluated datasets makes it a promising basis for practical applications that depend on image quality. However, as the screen content image domain evolves, further research and datasets will be needed to capture its complexities fully. The proposed benchmark datasets are a valuable resource for future work in screen content image processing.