- The paper introduces an interventional GNN model for causal inference that aligns with structural causal models.

- It proves that any SCM can be represented by a GNN layer and demonstrates this conversion via an interventional variational graph auto-encoder.

- Empirical validations confirm the model's ability to accurately estimate causal effects and capture intervention-driven changes.

Relating Graph Neural Networks to Structural Causal Models

Introduction

The paper "Relating Graph Neural Networks to Structural Causal Models" (arXiv:2109.04173) establishes theoretical connections between Graph Neural Networks (GNNs) and Structural Causal Models (SCMs) and uses them to characterize neural causal models. It defines a model class for GNN-based causal inference and analyzes the relationship between GNNs and SCMs to propose a framework that is potentially necessary and sufficient for causal effect identification.

Theoretical Analysis and Model Definition

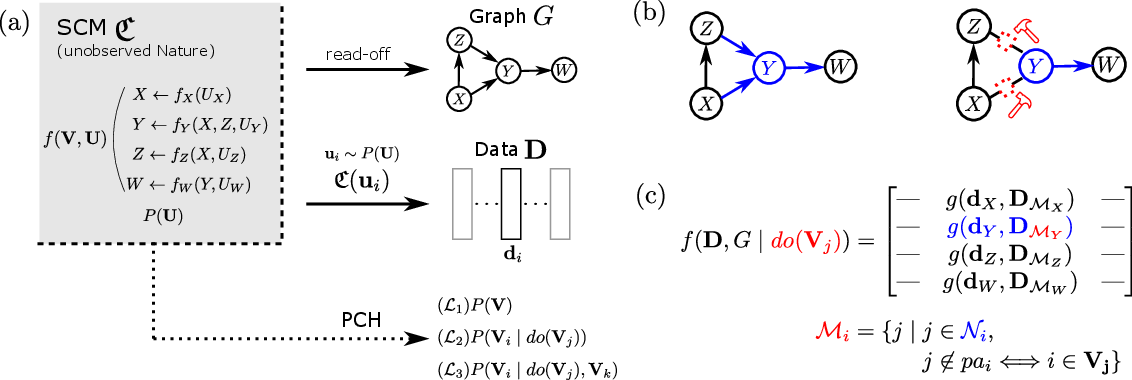

The authors begin by analyzing the underlying mechanisms of causal inference in both GNNs and SCMs. The paper introduces the concept of Interventional GNN, defining intervention within the GNN computation layer to mimic causal interventions in SCMs.
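The idea of intervening inside a message-passing step can be illustrated with a minimal sketch. It assumes that an intervention is realized by dropping the incoming messages of the intervened node, mirroring how the do-operator cuts incoming edges in an SCM. The function name `message_passing_layer`, the sum aggregation, the additive update, and the chain graph are illustrative assumptions, not the paper's implementation.

```python
from typing import Dict, Iterable, List


def message_passing_layer(
    features: Dict[str, float],
    parents: Dict[str, List[str]],
    intervened: Iterable[str] = (),
) -> Dict[str, float]:
    """One sum-aggregation message-passing step over a directed graph.

    Nodes listed in `intervened` ignore the messages of their parents
    (assumption: this is how the intervention is modeled in this sketch).
    """
    intervened = set(intervened)
    out = {}
    for node, value in features.items():
        # Intervened nodes receive no messages from their parents.
        neighbours = [] if node in intervened else parents.get(node, [])
        aggregated = sum(features[p] for p in neighbours)  # message = parent feature
        out[node] = value + aggregated                     # update = self + aggregate
    return out


# Hypothetical chain graph A -> B -> C with scalar node features.
parents = {"A": [], "B": ["A"], "C": ["B"]}
features = {"A": 1.0, "B": 10.0, "C": 100.0}

observational = message_passing_layer(features, parents)
interventional = message_passing_layer(features, parents, intervened={"B"})
print(observational)
print(interventional)
```

In this toy example only node `B` changes under the intervention, because only its incoming edge is removed; `A` and `C` are computed exactly as in the observational pass.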

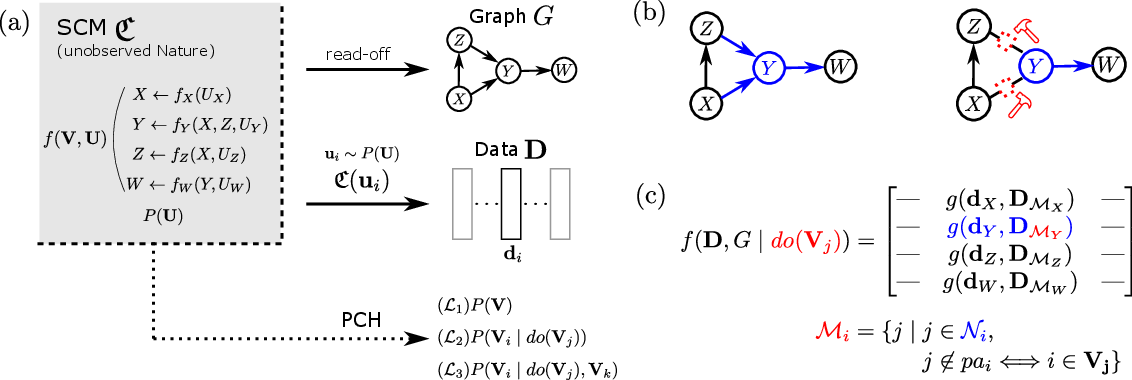

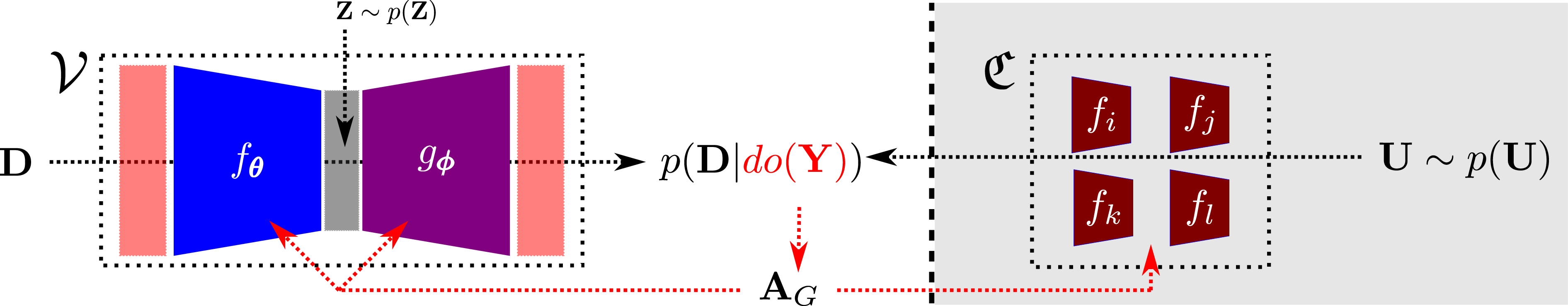

Figure 1: Graph Neural Networks and Interventions akin to SCM. A schematic overview. (a) shows the unobserved SCM C that generates data D through instantiations of the exogenous variables U.

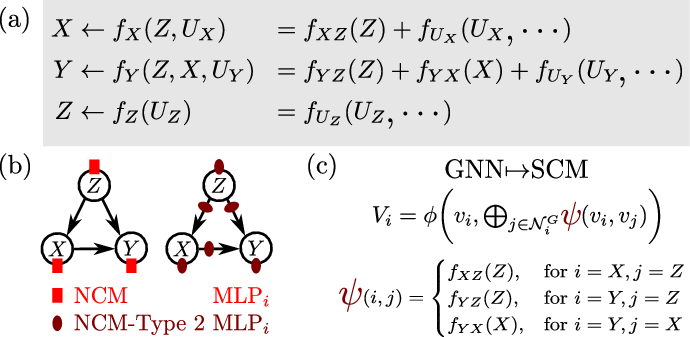

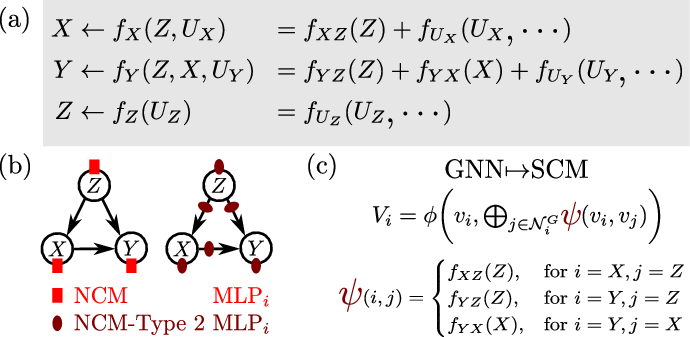

The relationship between GNNs and SCMs is established by a theorem showing that any SCM can be represented by a GNN layer with appropriately chosen structure and shared functions. This forms the basis for the NCM-Type 2 model, a refinement of earlier Neural Causal Model (NCM) definitions.
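The flavor of this correspondence can be seen on a toy case. The sketch below assumes a hypothetical linear SCM and writes each structural equation in message, aggregate, update form; the edge weights stand in for whatever information the shared functions would need to tell parents apart. It is an illustration under these assumptions, not the construction used in the paper's proof.

```python
from typing import Dict, Tuple

# Hypothetical linear SCM over the graph X -> Y, X -> Z, Y -> Z:
#   X = U_X,   Y = 2*X + U_Y,   Z = X - 3*Y + U_Z
weights: Dict[Tuple[str, str], float] = {
    ("X", "Y"): 2.0,
    ("X", "Z"): 1.0,
    ("Y", "Z"): -3.0,
}
order = ["X", "Y", "Z"]  # a topological order of the graph


def sample_scm(noise: Dict[str, float]) -> Dict[str, float]:
    """Evaluate the structural equations directly, parents before children."""
    values: Dict[str, float] = {}
    for node in order:
        values[node] = noise[node] + sum(
            w * values[src] for (src, dst), w in weights.items() if dst == node
        )
    return values


def gnn_layer(values: Dict[str, float], noise: Dict[str, float]) -> Dict[str, float]:
    """One message-passing step applied to every node at once."""
    out = {}
    for node in order:
        # Message from each parent, then sum-aggregation, then additive update.
        messages = [w * values[src] for (src, dst), w in weights.items() if dst == node]
        out[node] = noise[node] + sum(messages)
    return out


noise = {"X": 1.0, "Y": 0.5, "Z": -2.0}
values = sample_scm(noise)

# Feeding an SCM sample into the layer reproduces that sample node by node.
assert gnn_layer(values, noise) == values
print(values)
```

The check at the end is the point of the sketch: given the values of a node's parents and its noise term, a single message-passing step computes the same value as the node's structural equation.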

Neural-Causal Models and Conversions

The paper further investigates the conversion between SCMs and GNNs, emphasizing the use of message-passing GNN architectures. The theorem suggests that the inherently graph-structured nature of SCMs naturally aligns with GNNs, offering a pathway for neural models to perform causal inferences.

Figure 2: NCM-Type 2 and the GNN-SCM Conversion. A schematic overview of the results established in the GNN-SCM conversion theorem.

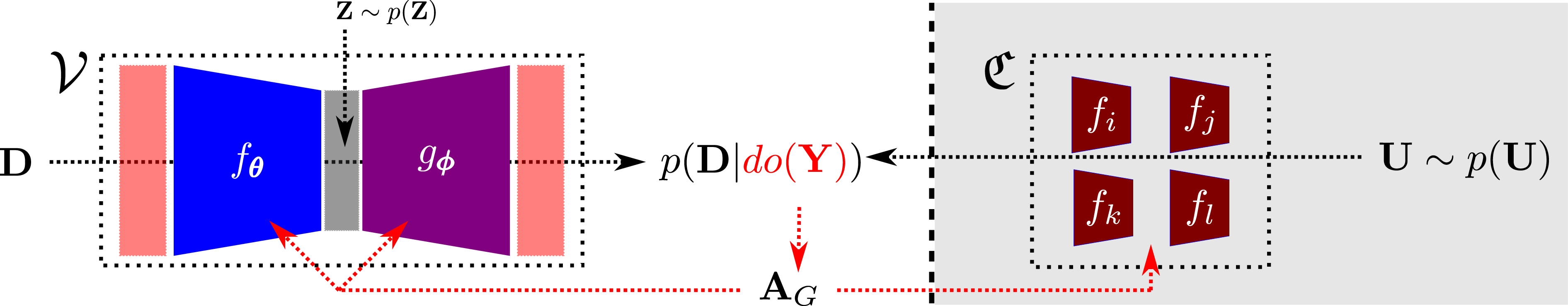

An Interventional Variational Graph Auto-Encoder (iVGAE) is defined, combining variational inference methods with interventional GNN layers to create a model capable of causal inference.
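For orientation, variational auto-encoders are typically trained by maximizing the evidence lower bound (ELBO). The expression below is the standard form of that bound, written with assumed symbols: $\mathbf{D}$ for the data, $\mathbf{Z}$ for the latent variables, $q_\phi$ for the encoder and $p_\theta$ for the decoder. The exact objective used for the iVGAE may differ in detail.

$$
\mathcal{L}(\phi, \theta) = \mathbb{E}_{q_\phi(\mathbf{Z} \mid \mathbf{D})}\big[\log p_\theta(\mathbf{D} \mid \mathbf{Z})\big] - \mathrm{KL}\big(q_\phi(\mathbf{Z} \mid \mathbf{D}) \,\|\, p(\mathbf{Z})\big)
$$

The first term rewards accurate reconstruction of the data, and the second keeps the approximate posterior close to the prior $p(\mathbf{Z})$.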

Figure 3: Neural-Causal Model based on Interventional GNN Layers. A schematic overview of the inference process within the neural-causal iVGAE model.

Empirical Validation and Application

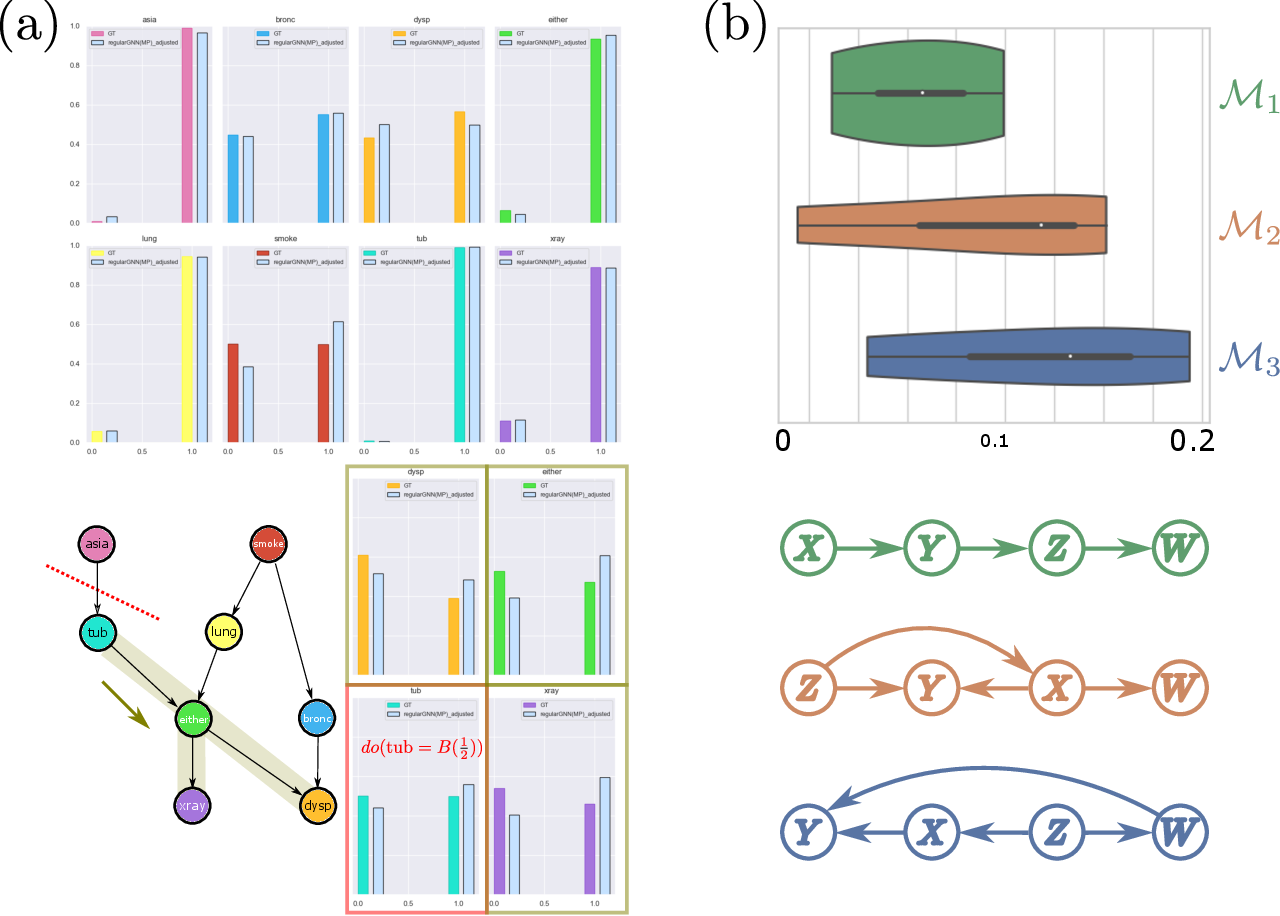

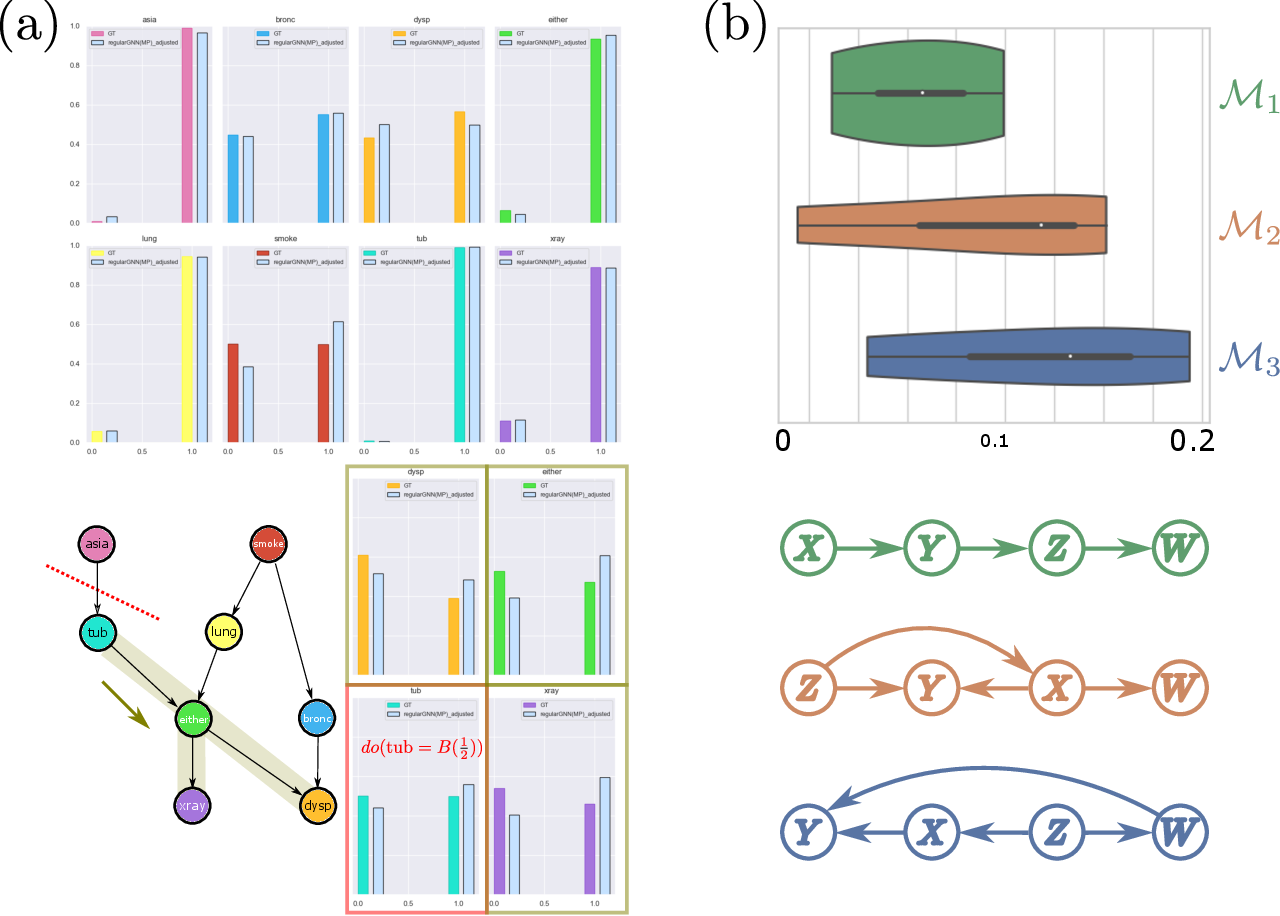

The paper provides empirical validation through simulations and real-world benchmarks, demonstrating the feasibility, expressivity, and identifiability of the proposed model classes. The experimental results highlight the ability of iVGAE to approximate causal densities and estimate causal effects accurately.
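What "estimating a causal effect" means can be made concrete on a small example. The sketch below assumes a hypothetical confounded SCM (binary confounder `z`, binary treatment `x`, continuous outcome `y`) and compares the interventional contrast with the naive observational one by Monte Carlo sampling. It does not use the iVGAE or any of the paper's benchmarks; the mechanisms and coefficients are invented for illustration.

```python
import random


def sample(rng, do_x=None):
    """Draw (z, x, y) from a hypothetical SCM; do_x replaces X's mechanism."""
    z = 1 if rng.random() < 0.5 else 0
    if do_x is None:
        x = 1 if rng.random() < 0.2 + 0.6 * z else 0  # treatment depends on z
    else:
        x = do_x                                      # intervention: ignore z
    y = 2.0 * x + 3.0 * z + rng.gauss(0.0, 1.0)
    return z, x, y


def mean(xs):
    return sum(xs) / len(xs)


rng = random.Random(0)
n = 50_000

# Interventional contrast: E[Y | do(X=1)] - E[Y | do(X=0)].
y_do1 = [sample(rng, do_x=1)[2] for _ in range(n)]
y_do0 = [sample(rng, do_x=0)[2] for _ in range(n)]
ate = mean(y_do1) - mean(y_do0)

# Naive observational contrast: E[Y | X=1] - E[Y | X=0], biased by z.
obs = [sample(rng) for _ in range(n)]
naive = mean([y for _, x, y in obs if x == 1]) - mean([y for _, x, y in obs if x == 0])

print(f"interventional estimate: {ate:.2f}")
print(f"observational estimate:  {naive:.2f}")
```

Under these assumed mechanisms the true average treatment effect is 2, while the observational contrast converges to 3.8 because the confounder raises both the chance of treatment and the outcome. A model that captures interventions should recover the former rather than the latter.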

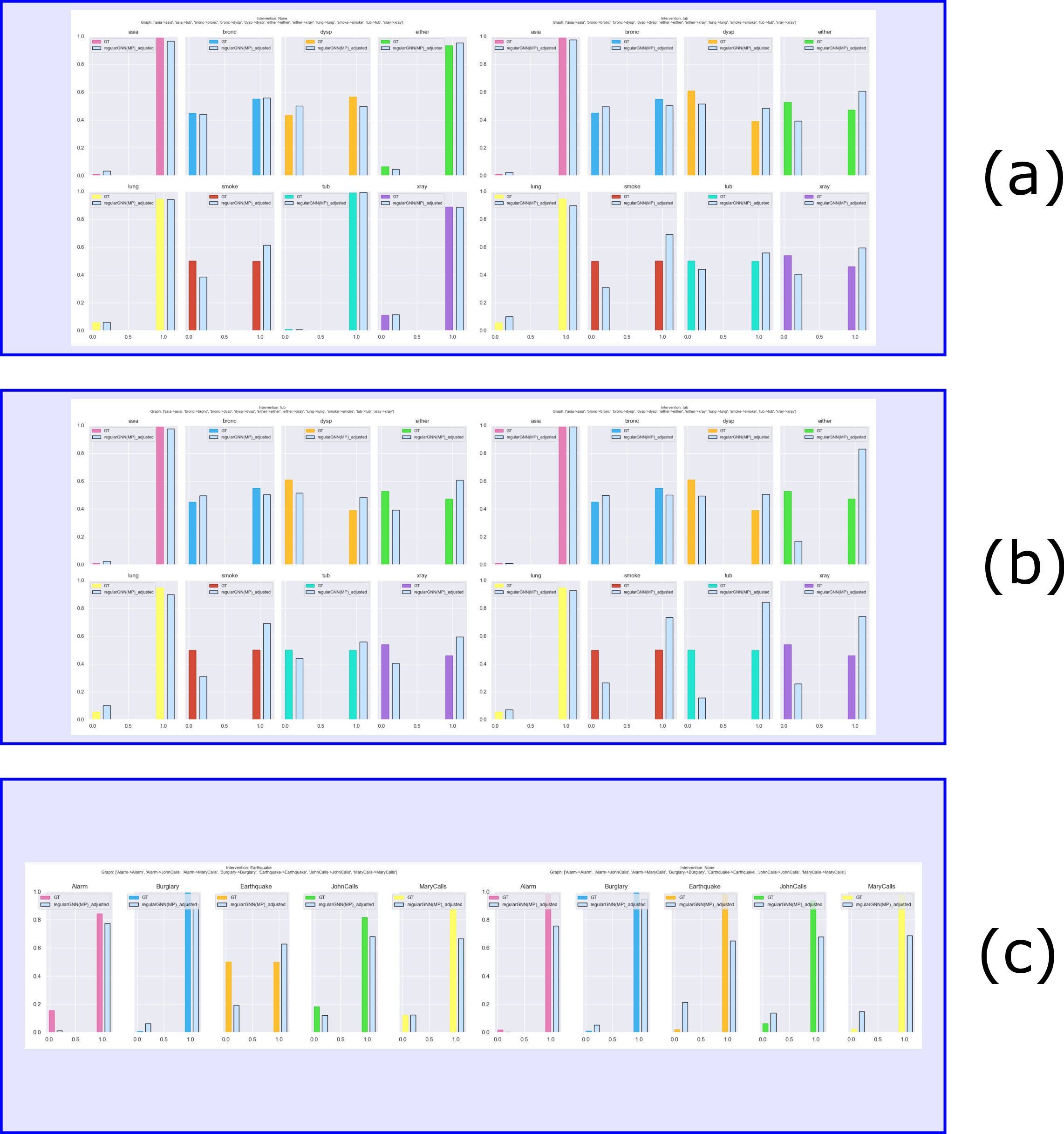

Figure 4: GNN Capture Causal Influence. Empirical illustration (a) shows causal density estimation (top L1, bottom L2 with $\mathrm{do}(\text{tub}=\mathcal{B}(\frac{1}{2}))$).

Systematic Investigation

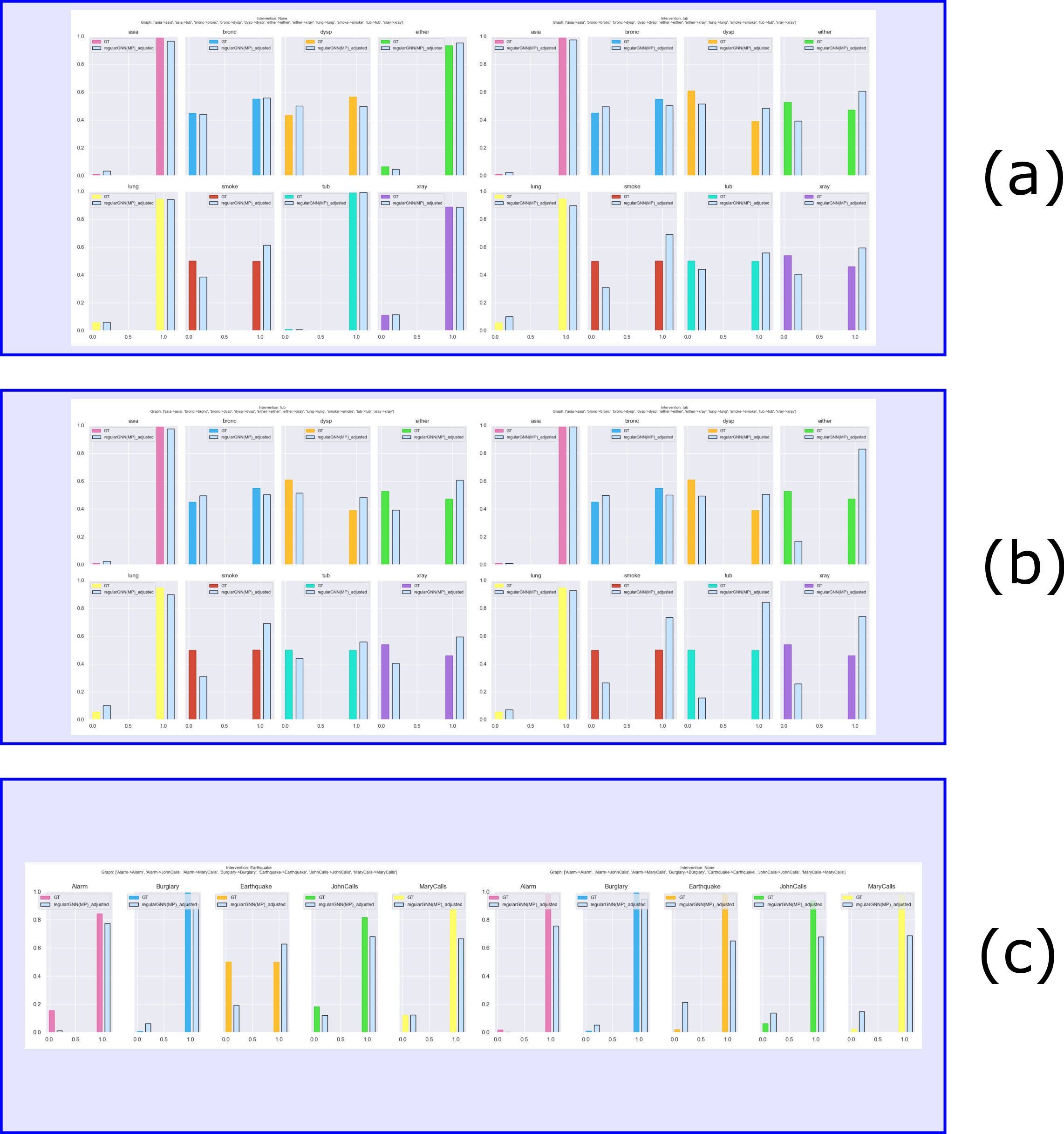

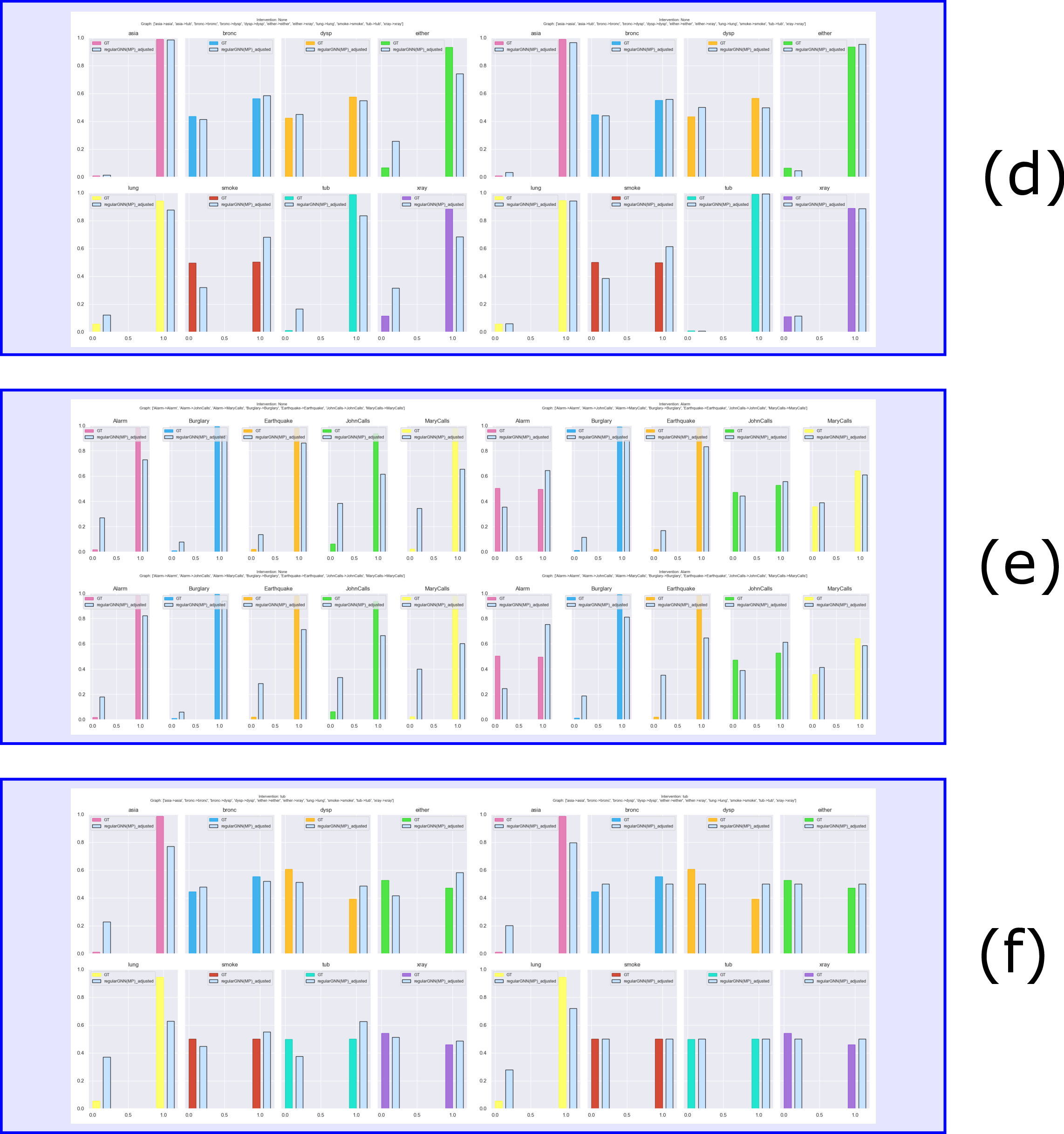

A systematic investigation examines the model's ability to capture interventional changes, the variance in training, scalability, and the importance of parameter tuning. These results clarify how GNNs can be used effectively in causal inference settings.

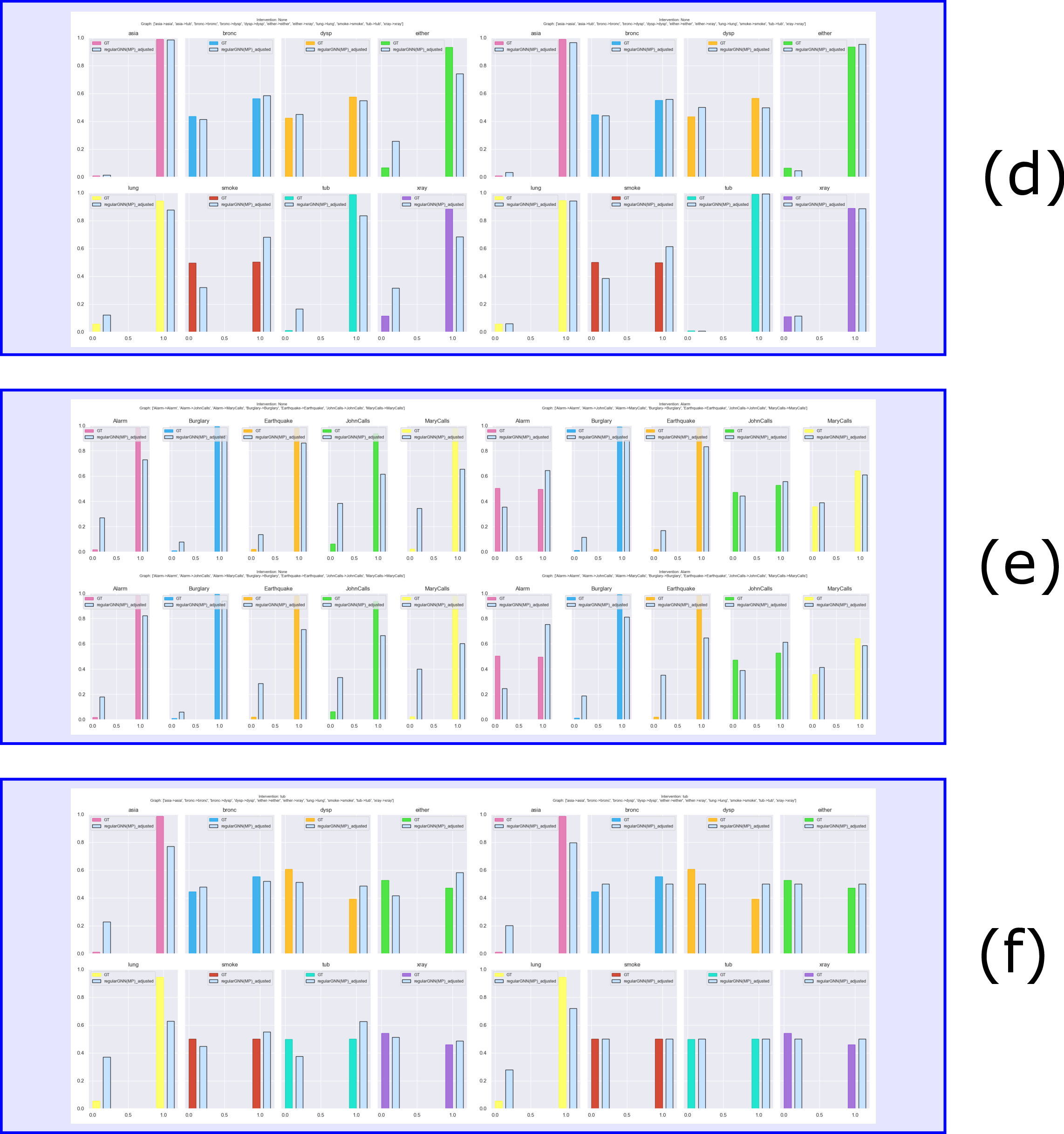

Figure 5: Systematic Investigation: Questions (a), (b), and (c).

Figure 6: Systematic Investigation: Questions (d), (e), and (f).

Conclusions

The paper's main contributions are the theoretical connection between GNNs and SCMs and the introduction of interventional neural models for causal inference. The authors highlight avenues for future work, such as supporting the complete Pearl Causal Hierarchy without feasibility trade-offs and testing the models on larger causal systems.

The paper concludes by pointing to practical implications for deploying these models in fields where causal inference is central. Integrating GNNs into causal learning frameworks makes it more viable for neural networks to perform causal computations, supporting progress towards more robust and interpretable models.