- The paper proposes an action-delayed reinforcement learning (RL) method that models traffic signal control as a deterministic delay Markov decision process (DDMDP) in mixed autonomy settings.

- The methodology integrates AV communications with optimal control strategies to actively reduce queue lengths and energy usage at urban intersections.

- Simulation results show that the RL controller reduces waiting times and improves energy efficiency compared to conventional traffic management approaches.

"Control of a Mixed Autonomy Signalised Urban Intersection"

Introduction

This paper addresses the control of a signalised urban intersection in a mixed autonomy scenario, where human-driven vehicles (HDVs) and autonomous vehicles (AVs) coexist. The focus is on managing the traffic lights so as to minimize queue lengths and energy consumption while maximizing vehicle throughput. The intersection controller's decision process is modelled as a deterministic delay Markov decision process (DDMDP), and an action-delayed reinforcement learning (RL) approach is proposed to optimize traffic signal control. AVs communicate with the controller, which allows them to adapt their dynamics to anticipated green or red signals.

System Model

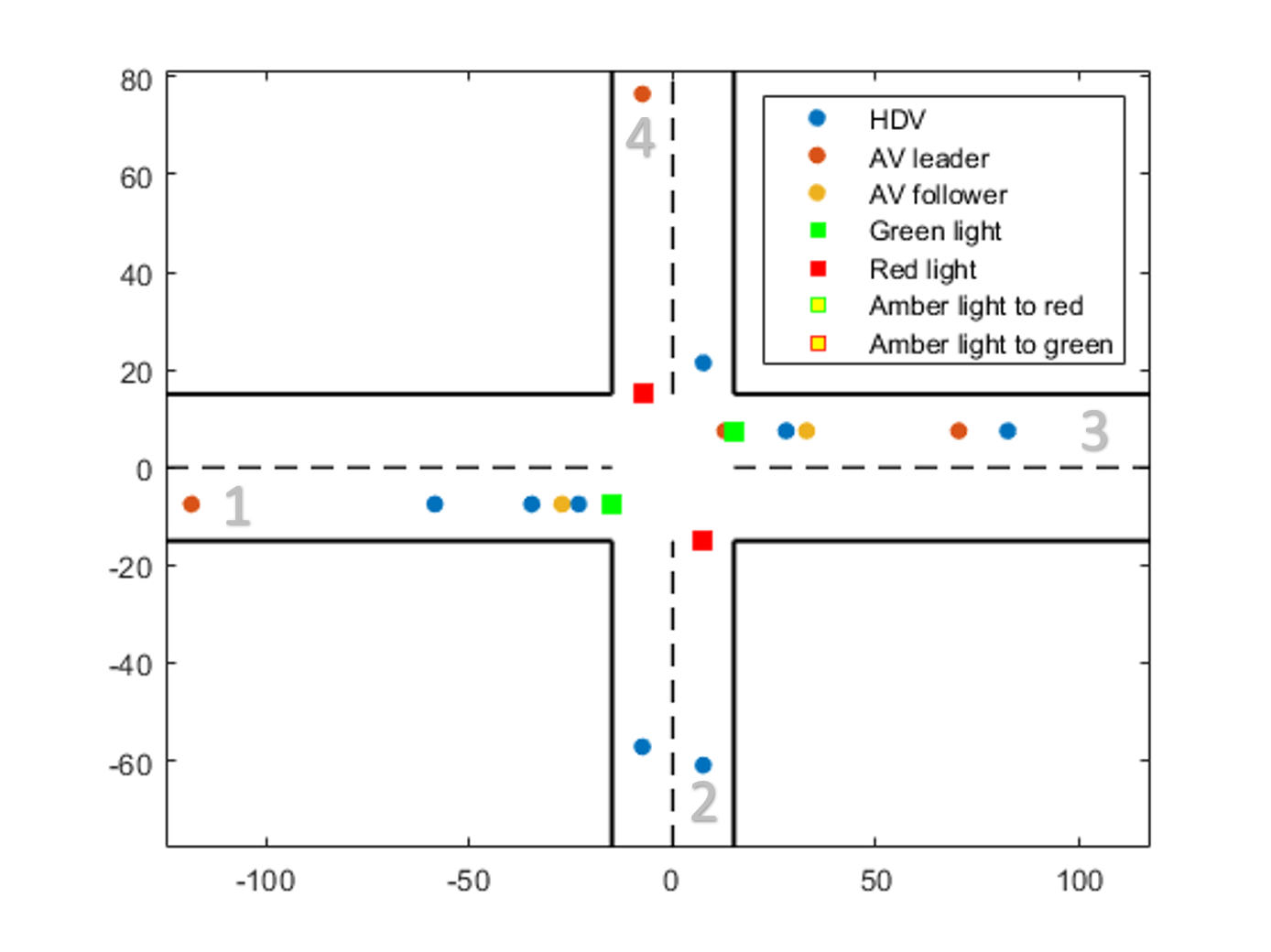

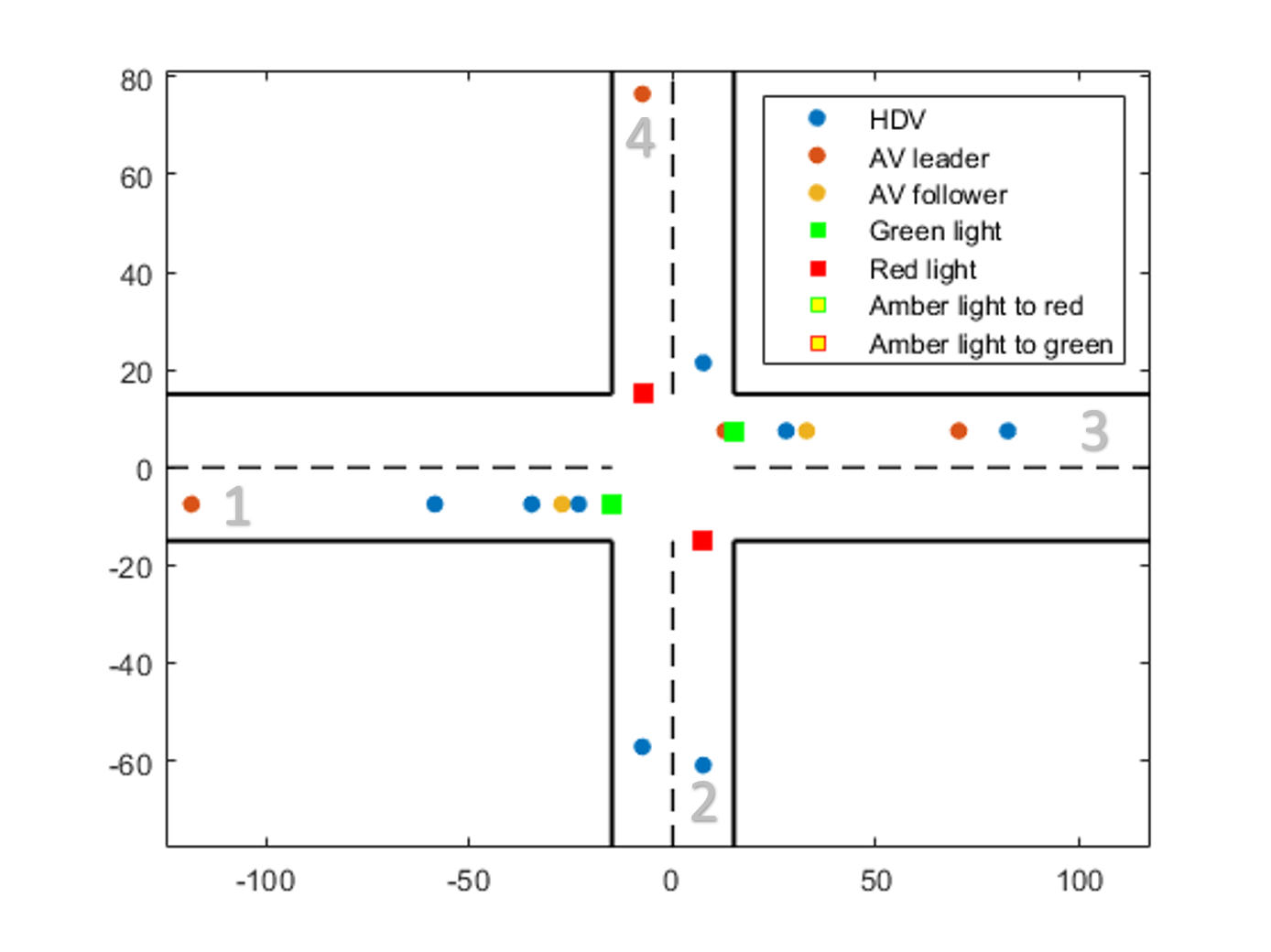

The system consists of a four-lane urban intersection divided into three main zones: the Merging Zone (MZ), the Control Zone (CZ), and the Exiting Zone (EZ). The traffic light controller decides the green or red state for each traffic block over multiple horizons and informs the AVs of these future signal states. With this information, each AV adjusts its dynamics by solving an optimal control problem.

AV and HDV Dynamics

HDV dynamics are governed by the Intelligent Driver Model (IDM), which maintains safe car-following behaviour and reacts to traffic signals. For AVs, the paper proposes an optimal control strategy that minimizes energy cost subject to constraints such as velocity limits and the timing of the intersection crossing (Figure 1). Because AVs plan their motion around the signal changes announced by the RL controller, they consume less energy and throughput improves.
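As a rough illustration of the car-following behaviour described above, the standard IDM acceleration law can be sketched in Python. The parameter values and the treatment of a red light as a stationary virtual leader at the stop line are assumptions made for this sketch, not settings taken from the paper.

```python
import math


def idm_acceleration(v, gap, dv, v0=13.9, T=1.5, a_max=1.0, b=1.5, s0=2.0, delta=4.0):
    """Standard IDM acceleration (m/s^2) for one vehicle.

    v   : own speed (m/s)
    gap : distance to the leader, or to the stop line at a red light (m)
    dv  : approach rate, own speed minus leader speed (m/s)
    All default parameter values are illustrative assumptions.
    """
    # Desired dynamic gap: standstill distance + time headway + braking term.
    s_star = s0 + max(0.0, v * T + v * dv / (2.0 * math.sqrt(a_max * b)))
    # Guard against division by zero when the gap closes completely.
    safe_gap = max(gap, 1e-3)
    return a_max * (1.0 - (v / v0) ** delta - (s_star / safe_gap) ** 2)


def idm_acceleration_at_red(v, distance_to_stop_line, **params):
    """Assumption: a red light acts like a stopped leader at the stop line."""
    return idm_acceleration(v, distance_to_stop_line, dv=v, **params)
```

On an empty road the function returns roughly `a_max` for a vehicle at rest and roughly zero at the desired speed `v0`; close to a red light the interaction term dominates and the result is a strong deceleration.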

Reinforcement Learning Approach

The RL framework formulates the decision-making process as a DDMDP, which accounts for the delay between choosing a traffic light action and its implementation. The state comprises the queue lengths, the action sets the signal status, and the reward measures the reduction in queue length between consecutive time intervals. A tabular Q-learning algorithm iteratively improves the controller's policy, with the aim of keeping lanes accessible and managing congestion.
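The learning loop described above can be sketched as tabular Q-learning on an augmented state, a common way to handle a constant action delay: the state seen by the learner is the pair of queue lengths and actions that are still pending. This is a minimal sketch under stated assumptions, not the paper's implementation. The two-approach toy environment `toy_step`, the delay of two steps, the queue cap, and all hyperparameters are hypothetical.

```python
import random
from collections import defaultdict, deque


def toy_step(queues, action, rng, cap=5):
    """Hypothetical environment: the approach with green discharges up to
    two vehicles, then each approach receives an arrival with prob. 0.3."""
    q = list(queues)
    q[action] = max(0, q[action] - 2)
    for i in range(len(q)):
        if rng.random() < 0.3:
            q[i] = min(cap, q[i] + 1)
    return tuple(q)


def q_learning_delayed(env_step, reset, actions, delay=2, episodes=200,
                       horizon=50, alpha=0.1, gamma=0.95, eps=0.1, seed=0):
    """Tabular Q-learning where each chosen action takes effect `delay` steps later."""
    rng = random.Random(seed)
    Q = defaultdict(float)  # maps (augmented_state, action) -> value
    for _ in range(episodes):
        queues = reset()
        pending = deque([actions[0]] * delay)  # actions chosen but not yet applied
        for _ in range(horizon):
            state = (queues, tuple(pending))
            # Epsilon-greedy choice over the augmented state.
            if rng.random() < eps:
                action = rng.choice(actions)
            else:
                action = max(actions, key=lambda a: Q[(state, a)])
            pending.append(action)
            applied = pending.popleft()  # the action decided `delay` steps ago
            new_queues = env_step(queues, applied, rng)
            # Reward: reduction in total queue length between intervals.
            reward = sum(queues) - sum(new_queues)
            next_state = (new_queues, tuple(pending))
            best_next = max(Q[(next_state, a)] for a in actions)
            Q[(state, action)] += alpha * (reward + gamma * best_next - Q[(state, action)])
            queues = new_queues
    return Q
```

A call such as `q_learning_delayed(toy_step, lambda: (3, 3), [0, 1])` returns the learned table. Capping the queues keeps the table finite, which is what makes a tabular method workable in this toy setting.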

Implementation

Simulation results demonstrate the RL controller's efficacy in both the mixed scenario and the HDV-only scenario. The average waiting time and cumulative reward, computed over the simulation periods, differ only minimally between the two scenarios. The energy results, however, favour AVs: tabulated statistical indices of the integral of squared acceleration show substantially lower values for AVs than for HDVs, indicating lower energy consumption and more efficient adaptation of their dynamics.
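For reference, the energy index mentioned above can be computed from a sampled acceleration trace as follows. This is a minimal sketch that assumes uniformly spaced samples and uses the trapezoidal rule; the function name and the sampling interval are hypothetical, and the paper may compute the index differently.

```python
def integral_squared_acceleration(accel, dt):
    """Approximate the integral of a(t)^2 over time with the trapezoidal rule.

    accel : sequence of acceleration samples (m/s^2), uniformly spaced
    dt    : sampling interval in seconds (assumed constant)
    """
    if len(accel) < 2:
        return 0.0
    squared = [a * a for a in accel]
    # Trapezoidal rule: interior samples get full weight, endpoints half.
    return dt * (sum(squared) - 0.5 * (squared[0] + squared[-1]))
```

A smoother trajectory yields a smaller value, which is why the index serves as a comparison between AV and HDV driving behaviour.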

Figure 1: Traffic light controlled intersection in a mixed autonomy scenario

Conclusion

The proposed RL-based algorithm models and optimizes traffic signal control at mixed autonomy intersections while incorporating AV dynamics. AVs show reduced energy consumption, which supports the benefit of communicating with the controller. Future research will explore customized rewards, the reliability of controller communication, and the choice of optimization parameters, with the aim of further improving intersection management.

Overall, the paper presents an approach to intersection control that combines RL with optimal control of vehicle dynamics and integrates autonomous vehicles with existing human-driven traffic.