- The paper introduces Variational Causal Networks (VCN), which apply variational inference with an autoregressive, LSTM-based distribution over graphs to make Bayesian causal discovery scalable.

- In low-dimensional experiments it achieves a lower Hellinger distance to the true posterior than baseline methods, and in higher-dimensional experiments it achieves better expected SHD and AUROC.

- VCN quantifies epistemic uncertainty over causal structures, which downstream tasks such as budgeted experimental design and causal representation learning require.

Variational Causal Networks: Approximate Bayesian Inference over Causal Structures

Introduction

The paper "Variational Causal Networks: Approximate Bayesian Inference over Causal Structures" advances Bayesian methods for inferring causal structure from data. It uses variational inference to handle the complexity and inherent uncertainty of causal structure learning, particularly when observational data are limited. Structural Causal Models (SCMs) are the foundation of the work: they represent causal mechanisms well, but learning them is hard because the causal graph is often non-identifiable from data and the space of candidate Directed Acyclic Graphs (DAGs) grows super-exponentially with the number of variables.
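To make the size of this space concrete, the number of labeled DAGs on n nodes can be computed with Robinson's recurrence, a(n) = Σ_{k=1}^{n} (−1)^{k+1} C(n, k) 2^{k(n−k)} a(n−k) with a(0) = 1. The sketch below is only an illustration of that count and not part of the paper's method:

```python
from functools import lru_cache
from math import comb


@lru_cache(maxsize=None)
def num_dags(n: int) -> int:
    """Number of labeled DAGs on n nodes, via Robinson's recurrence."""
    if n == 0:
        return 1
    # Inclusion-exclusion over the k nodes that have no incoming edges
    return sum(
        (-1) ** (k + 1) * comb(n, k) * 2 ** (k * (n - k)) * num_dags(n - k)
        for k in range(1, n + 1)
    )


for n in range(1, 8):
    print(n, num_dags(n))
```

Already at n = 10, the size of the smaller graphs in the paper's experiments, the count exceeds 10^18, which is why an explicit categorical distribution over all DAGs is out of reach.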

Problem Setting and Key Contributions

Bayesian Approach to Causal Learning

Bayesian inference lets the paper quantify epistemic uncertainty over DAGs, which is critical for tasks such as selecting informative interventions. Traditional causal discovery methods typically return a single causal graph and ignore the uncertainty around it, even though that uncertainty matters for robust decision-making when data are finite and potentially sparse.
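Non-identifiability is one reason a posterior, rather than a single graph, is the natural output. A small sketch, assuming linear Gaussian data with made-up coefficients (an assumption of this example only): the graphs X → Y and Y → X are Markov equivalent and attain exactly the same maximum likelihood, so the data alone cannot prefer one direction and posterior mass is split between them.

```python
import numpy as np


def max_gaussian_loglik(residuals):
    # Gaussian log-likelihood of residuals at the MLE variance (mean squared residual)
    n = residuals.shape[0]
    var = np.mean(residuals ** 2)
    return -0.5 * n * (np.log(2 * np.pi * var) + 1.0)


def loglik_of_direction(cause, effect):
    # Graph "cause -> effect": p(cause) * p(effect | cause), both linear Gaussian
    ll_cause = max_gaussian_loglik(cause - cause.mean())
    design = np.column_stack([np.ones_like(cause), cause])  # intercept + slope
    coef, *_ = np.linalg.lstsq(design, effect, rcond=None)
    ll_effect = max_gaussian_loglik(effect - design @ coef)
    return ll_cause + ll_effect


rng = np.random.default_rng(0)
x = rng.normal(size=500)
y = 2.0 * x + rng.normal(size=500)  # data generated from x -> y

ll_xy = loglik_of_direction(x, y)
ll_yx = loglik_of_direction(y, x)
print(ll_xy, ll_yx)  # the two scores agree up to floating-point error
```

Both factorizations reduce to the log-determinant of the same sample covariance matrix, which is why the scores coincide even though the data were generated from x → y.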

Variational Causal Network (VCN)

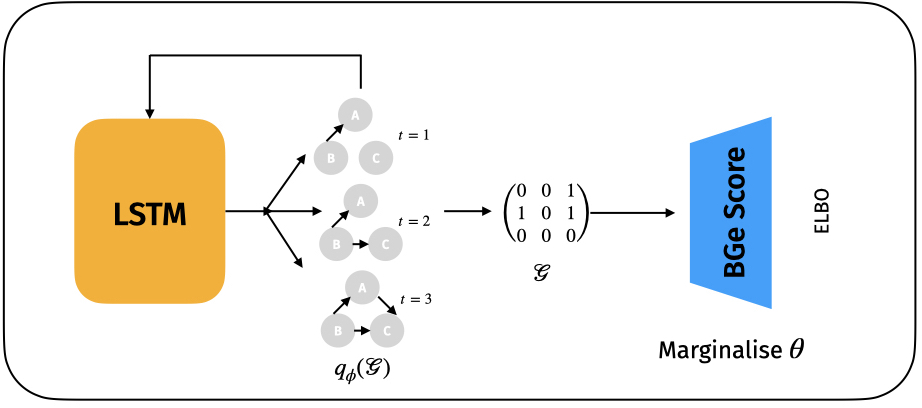

The paper proposes the Variational Causal Network (VCN), which uses variational inference to approximate the Bayesian posterior over causal structures. A categorical distribution over all graphs would need one parameter per graph, a number that grows super-exponentially with the number of variables. VCN instead parameterizes the distribution over adjacency matrices autoregressively, which keeps learning tractable while remaining expressive enough to capture complex, multi-modal posteriors.
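The autoregressive idea can be sketched as follows. This is a simplified illustration, not the paper's architecture: a hypothetical linear-logistic model stands in for the LSTM, and `weights` and `bias` are made-up parameters. Each off-diagonal adjacency entry is drawn from a Bernoulli distribution whose probability depends on the entries already sampled, so the joint probability is the product of these conditionals, and both sampling and exact log-probabilities stay cheap.

```python
import numpy as np


def edge_positions(d):
    # Off-diagonal entries in a fixed row-major order (self-loops excluded)
    return [(i, j) for i in range(d) for j in range(d) if i != j]


def edge_prob(t, edges, weights, bias):
    # Bernoulli probability of edge t given edges 0..t-1 (toy stand-in for the LSTM)
    logit = bias[t] + weights[t, :t] @ edges[:t]
    return 1.0 / (1.0 + np.exp(-logit))


def sample_adjacency(d, weights, bias, rng):
    """Sample an adjacency matrix edge by edge; return it with its log-probability."""
    positions = edge_positions(d)
    edges = np.zeros(len(positions))
    log_prob = 0.0
    for t in range(len(positions)):
        p = edge_prob(t, edges, weights, bias)
        edges[t] = float(rng.random() < p)
        log_prob += np.log(p) if edges[t] else np.log1p(-p)
    adj = np.zeros((d, d), dtype=int)
    for (i, j), e in zip(positions, edges):
        adj[i, j] = int(e)
    return adj, log_prob


def log_prob_adjacency(adj, weights, bias):
    """Exact log-probability of a given adjacency matrix under the same model."""
    edges = np.array([adj[i, j] for i, j in edge_positions(adj.shape[0])], dtype=float)
    total = 0.0
    for t in range(len(edges)):
        p = edge_prob(t, edges, weights, bias)
        total += np.log(p) if edges[t] else np.log1p(-p)
    return total


rng = np.random.default_rng(0)
d = 3
m = d * (d - 1)                                # number of possible edges
weights = rng.normal(scale=0.5, size=(m, m))   # hypothetical parameters
bias = np.full(m, -1.0)                        # hypothetical: favours sparse graphs
adj, lp = sample_adjacency(d, weights, bias, rng)
print(adj, lp)
```

This toy model needs O(m²) parameters for m = d(d − 1) possible edges instead of one per graph. It does not enforce acyclicity, so samples may contain cycles.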

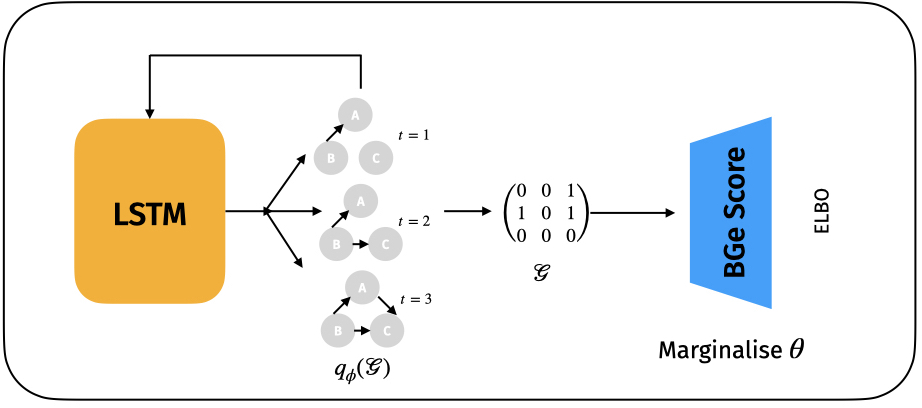

Figure 1: Schematic diagram of Variational Causal Networks, illustrating the autoregressive modeling of DAG adjacency matrices with LSTMs.

Methodology

Variational Inference Framework

Because the evidence (marginal likelihood) term in the Bayesian posterior is intractable, variational inference instead maximizes an Evidence Lower Bound (ELBO). The authors introduce a parametric variational family of autoregressive distributions over DAG adjacency matrices. A Long Short-Term Memory (LSTM) network models the dependencies between edges, so the family can, in principle, represent any distribution over the super-exponentially large space of graphs.
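For reference, the standard form of the ELBO over graphs is written below, with q_φ the variational distribution, p(G) the graph prior, and g_t the t-th off-diagonal adjacency entry in a fixed order. The notation is ours, and the paper's exact objective (for example, how mechanism parameters are handled) may differ. Because q_φ is autoregressive, its log-probability factorizes over edges, and the KL term, KL(q_φ ‖ p) = E_{q_φ}[log q_φ(G) − log p(G)], can be estimated by Monte Carlo from samples of q_φ.

```latex
\log p(\mathcal{D}) \;\ge\; \mathbb{E}_{q_\phi(G)}\!\left[\log p(\mathcal{D} \mid G)\right]
  - \mathrm{KL}\!\left(q_\phi(G) \,\|\, p(G)\right) = \mathcal{L}(\phi),
\qquad
q_\phi(G) = \prod_{t=1}^{d(d-1)} q_\phi\!\left(g_t \mid g_{<t}\right)
```

The gap between the two sides equals KL(q_φ(G) ‖ p(G | 𝒟)), so maximizing 𝓛(φ) pulls q_φ toward the true posterior over graphs.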

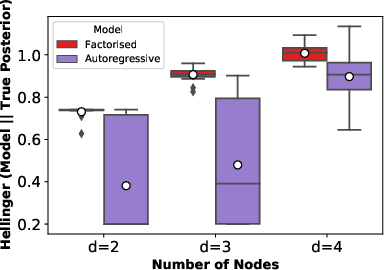

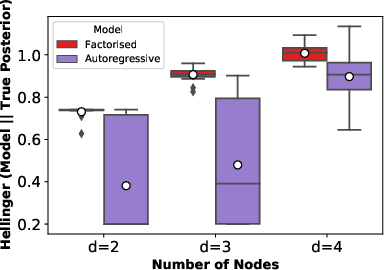

Figure 2: Hellinger distance between the approximate and the true full posterior in low-dimensional settings (lower is better), illustrating the effectiveness of VCN relative to the alternative approaches.
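The Hellinger distance compares two discrete distributions P and Q over graphs as H(P, Q) = (1/√2)·‖√P − √Q‖₂, ranging from 0 (identical) to 1 (disjoint supports). A minimal sketch, assuming both posteriors are given as explicit probability tables, which is only feasible when all DAGs can be enumerated; the graph labels and probabilities below are hypothetical.

```python
import numpy as np


def hellinger(p, q):
    """Hellinger distance between two discrete distributions given as dicts.

    Keys are graphs in any hashable encoding; missing keys have probability 0.
    """
    support = list(set(p) | set(q))  # fix one ordering for both distributions
    sp = np.sqrt([p.get(g, 0.0) for g in support])
    sq = np.sqrt([q.get(g, 0.0) for g in support])
    return float(np.sqrt(0.5 * np.sum((sp - sq) ** 2)))


# Hypothetical two-variable posteriors: the true one splits mass between the
# Markov-equivalent graphs, the approximation collapses onto one of them.
true_post = {"empty": 0.1, "X->Y": 0.45, "Y->X": 0.45}
approx_post = {"empty": 0.1, "X->Y": 0.9}
print(hellinger(true_post, approx_post))
```

A mode-collapsed approximation like the one above is penalized even though its single mode is a correct graph, which is what makes this metric suitable for judging multi-modal posteriors.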

Evaluation Metrics and Experiments

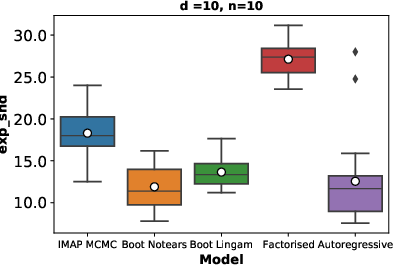

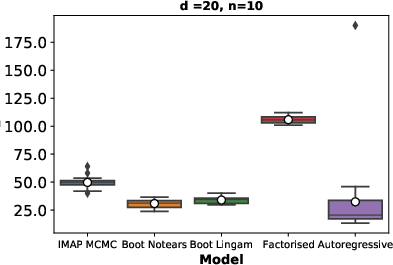

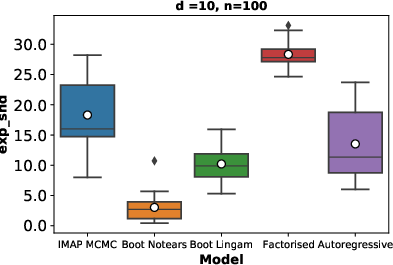

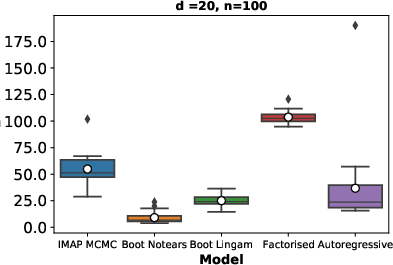

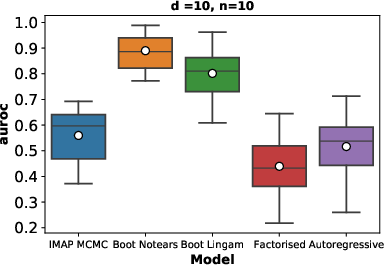

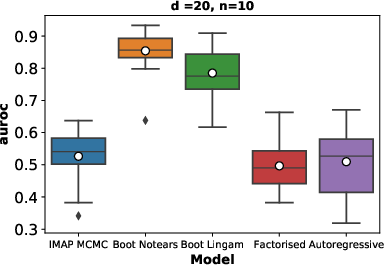

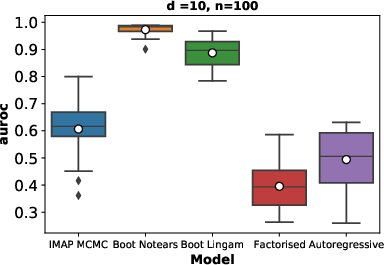

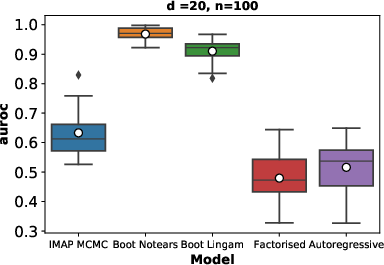

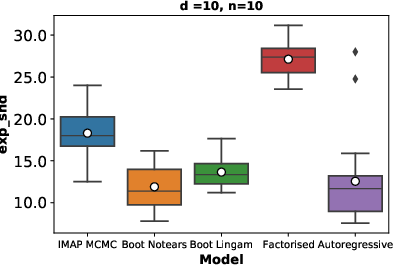

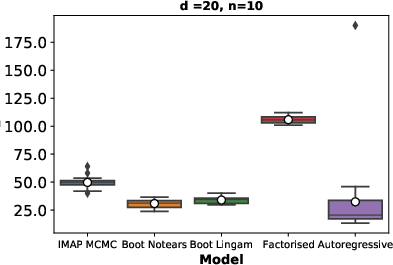

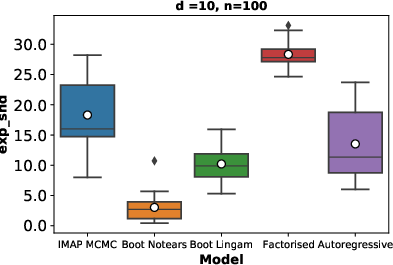

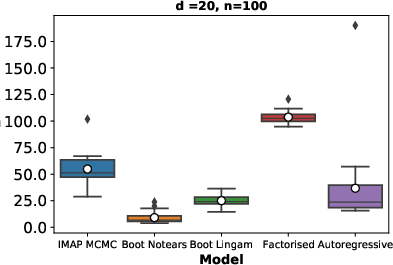

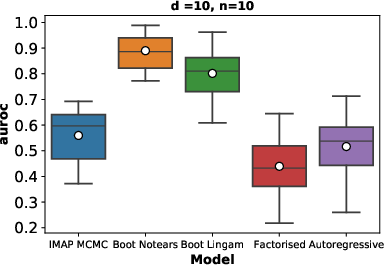

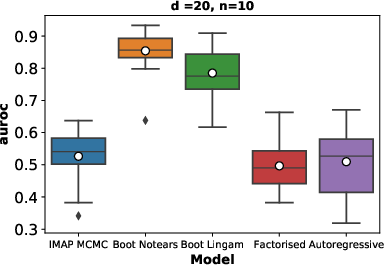

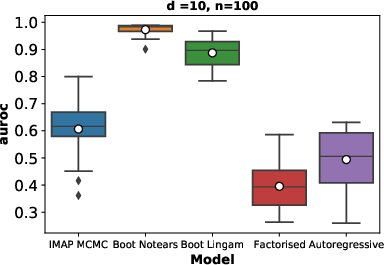

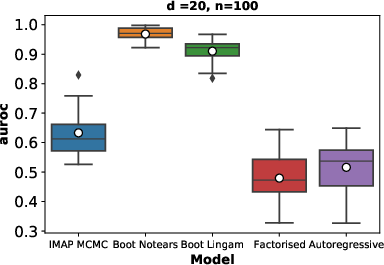

In low-dimensional settings, VCN achieves a smaller Hellinger distance to the true posterior than existing methods, indicating a better approximation. In higher-dimensional settings, where the true posterior can no longer be computed by enumeration, the paper reports expected Structural Hamming Distance (SHD) and AUROC; on these metrics VCN outperforms baselines such as DAG bootstrap and factorized posterior approximations.
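Both metrics can be computed from posterior samples. A minimal sketch, assuming samples are 0/1 adjacency matrices: expected SHD averages the SHD of each sample to the true graph, and AUROC scores the posterior edge marginals (the fraction of samples containing each edge) against the true edges. SHD conventions vary; this version counts each missing, extra, or reversed edge as one error, which may differ from the paper's. The example graphs are made up.

```python
import numpy as np


def shd(pred, true_adj):
    """SHD counting each missing, extra, or reversed edge once (one common convention)."""
    d = true_adj.shape[0]
    count = 0
    for i in range(d):
        for j in range(i + 1, d):
            if (pred[i, j], pred[j, i]) != (true_adj[i, j], true_adj[j, i]):
                count += 1
    return count


def expected_shd(samples, true_adj):
    # Average SHD over graphs sampled from the (approximate) posterior
    return float(np.mean([shd(g, true_adj) for g in samples]))


def edge_auroc(samples, true_adj):
    # Posterior edge marginals: fraction of samples containing each edge
    marginals = np.mean(samples, axis=0)
    off_diag = ~np.eye(true_adj.shape[0], dtype=bool)
    scores, labels = marginals[off_diag], true_adj[off_diag]
    pos, neg = scores[labels == 1], scores[labels == 0]
    # AUROC = fraction of (true edge, non-edge) pairs ranked correctly; ties count 1/2
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))


# Hypothetical 3-node example: true graph 0 -> 1 -> 2
true_adj = np.array([[0, 1, 0], [0, 0, 1], [0, 0, 0]])
samples = np.array([
    [[0, 1, 0], [0, 0, 1], [0, 0, 0]],  # exact
    [[0, 1, 0], [0, 0, 0], [0, 1, 0]],  # edge 1 -> 2 reversed
    [[0, 1, 1], [0, 0, 1], [0, 0, 0]],  # extra edge 0 -> 2
])
print(expected_shd(samples, true_adj), edge_auroc(samples, true_adj))
```

Expected SHD rewards samples that are individually close to the truth, while AUROC only checks whether the edge marginals rank true edges above non-edges, so the two metrics capture complementary aspects of posterior quality.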

Experimental Results

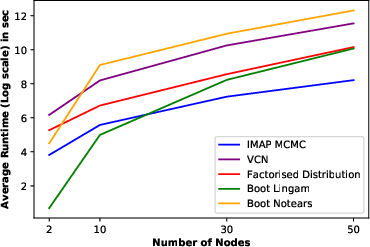

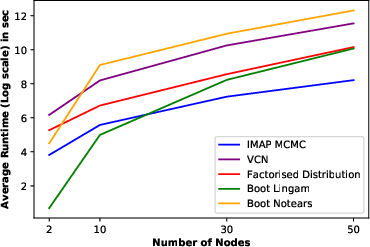

Across the tested conditions, the paper's experiments show that VCN gives more accurate approximations of the Bayesian posterior over causal structures while keeping computation practical, with a favorable trade-off between runtime and performance. This matters for tasks that require a Bayesian treatment, such as budgeted experimental design and causal representation learning.

Figure 3: Expected SHD for d=10 and d=20 node Erdős–Rényi (ER) random graphs (lower is better). Results are obtained over 20 different random graphs.

Figure 4: AUROC for d=10 and d=20 node Erdős–Rényi (ER) random graphs (higher is better). Results are obtained over 20 different random graphs.

Conclusion

The study introduces a scalable framework for Bayesian inference over causal structures that addresses a key weakness of traditional methods: capturing multi-modal posterior distributions. Because it performs well when data are limited, VCN is promising for increasingly complex causal discovery tasks. Future work could extend the technique to non-linear SCMs and incorporate techniques such as normalizing flows to improve sampling efficiency in high-dimensional spaces.