- The paper introduces T-LEAF, a framework that embeds symbolic temporal logic into deep sequential models via DFA representations and GNN-based hierarchical embeddings.

- The framework combines logic loss with task-specific training, improving performance in sequential human activity recognition and imitation learning.

- Experimental results show that incorporating temporal constraints improves prediction accuracy in sequential human activity recognition and speeds up convergence in imitation learning, suggesting more robust learning in complex scenarios.

Embedding Symbolic Temporal Knowledge into Deep Sequential Models

Introduction

The paper "Embedding Symbolic Temporal Knowledge into Deep Sequential Models" introduces the Temporal-Logic Embedded Automata Framework (T-LEAF), a method for incorporating symbolic temporal knowledge into deep sequential models. Prior knowledge is written as symbolic specifications in Linear Temporal Logic (LTL), and these specifications are embedded into the deep models with a Graph Neural Network (GNN)-based embedder. The paper focuses on sequential human activity recognition and imitation learning, tasks in which temporal logic can supply useful guidance in the form of high-level constraints and structured knowledge.

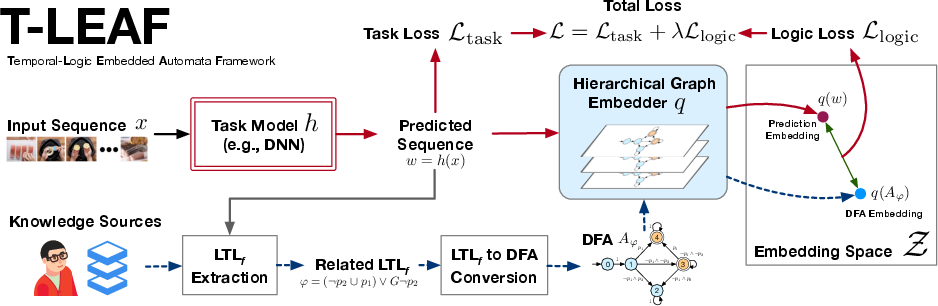

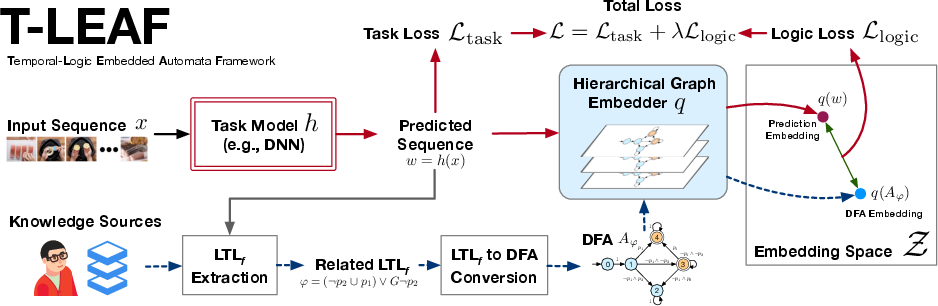

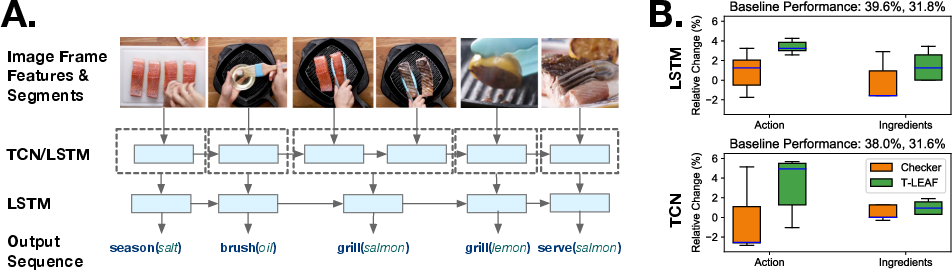

Figure 1: An overview of the Temporal-Logic Embedded Automata Framework (T-LEAF).

Temporal Logic and Automata Embedding

Temporal logic, specifically Linear Temporal Logic (LTL), is the backbone of the framework: it offers a structured language for expressing temporal constraints and dynamic relationships in sequential data. The authors argue that because LTL has well-defined semantics and is less ambiguous than natural language, it is particularly well suited to robotics and AI applications in which understanding and exploiting temporal coherence is crucial.
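To make "well-defined semantics" concrete, here is a minimal sketch of an LTL evaluator under finite-trace (LTLf-style) semantics, where a trace is a list of the sets of propositions that are true at each step. The tuple encoding of formulas, the function name `holds`, and the propositions `cook` and `serve` are illustrative assumptions, not taken from the paper.

```python
from typing import FrozenSet, List, Tuple

# Hypothetical encoding: a formula is a nested tuple such as ("prop", "cook")
# or ("until", left, right); a trace holds one set of true propositions per step.
Formula = Tuple
Trace = List[FrozenSet[str]]


def holds(f: Formula, trace: Trace, i: int = 0) -> bool:
    """Return True if formula f holds at position i of a finite trace."""
    op = f[0]
    if op == "prop":
        return i < len(trace) and f[1] in trace[i]
    if op == "not":
        return not holds(f[1], trace, i)
    if op == "and":
        return holds(f[1], trace, i) and holds(f[2], trace, i)
    if op == "next":  # strong next: a successor step must exist
        return i + 1 < len(trace) and holds(f[1], trace, i + 1)
    if op == "until":  # f[1] holds at every step before some step where f[2] holds
        return any(
            holds(f[2], trace, j) and all(holds(f[1], trace, k) for k in range(i, j))
            for j in range(i, len(trace))
        )
    if op == "eventually":
        return any(holds(f[1], trace, j) for j in range(i, len(trace)))
    if op == "always":
        return all(holds(f[1], trace, j) for j in range(i, len(trace)))
    raise ValueError(f"unknown operator: {op!r}")


no_serve_before_cook = ("until", ("not", ("prop", "serve")), ("prop", "cook"))
print(holds(no_serve_before_cook, [frozenset(), frozenset({"cook"}), frozenset({"serve"})]))  # True
print(holds(no_serve_before_cook, [frozenset({"serve"}), frozenset({"cook"})]))  # False
```

Here `no_serve_before_cook` states that serving must not happen before cooking has happened; every trace gets an unambiguous yes/no answer, which is the property the authors contrast with natural-language instructions.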

T-LEAF converts LTL formulas into Deterministic Finite Automata (DFA), which are then embedded into a continuous vector space by a hierarchical embedder composed of an edge embedder and a meta embedder. During training, a logic loss computed against this embedding encourages the model's predictions to agree with the temporal specification.
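As an illustration of the automaton side, the sketch below hand-builds a three-state DFA for the example constraint `(!serve) U cook` ("do not serve until something has been cooked") and runs it on finite traces. In T-LEAF the DFA would be produced automatically from the LTL formula; the states, edge labels, and the `run` helper here are hypothetical. Each edge carries its propositional guard both as text (the kind of label an edge embedder would consume) and as an executable predicate.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List

Step = FrozenSet[str]  # propositions true at one time step


@dataclass(frozen=True)
class Edge:
    src: str
    dst: str
    label: str                     # propositional guard as text, e.g. "!cook & serve"
    guard: Callable[[Step], bool]  # the same guard as an executable predicate


# Hypothetical hand-built DFA for (!serve) U cook.
EDGES: List[Edge] = [
    Edge("q0", "acc", "cook", lambda s: "cook" in s),
    Edge("q0", "rej", "!cook & serve", lambda s: "cook" not in s and "serve" in s),
    Edge("q0", "q0", "!cook & !serve", lambda s: "cook" not in s and "serve" not in s),
    Edge("acc", "acc", "true", lambda s: True),  # constraint met: absorbing
    Edge("rej", "rej", "true", lambda s: True),  # constraint violated: absorbing
]
INITIAL = "q0"
ACCEPTING = {"acc"}


def run(trace: List[Step]) -> bool:
    """Feed a finite trace through the DFA and report whether it ends accepting."""
    state = INITIAL
    for step in trace:
        # Guards leaving each state are mutually exclusive and exhaustive,
        # so exactly one edge fires; the unpacking fails loudly otherwise.
        (edge,) = [e for e in EDGES if e.src == state and e.guard(step)]
        state = edge.dst
    return state in ACCEPTING


print(run([frozenset(), frozenset({"cook"}), frozenset({"serve"})]))  # True
print(run([frozenset({"serve"}), frozenset({"cook"})]))  # False
```

The empty trace is rejected because strong "until" requires `cook` to occur eventually.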

Hierarchical Graph Embedder

Central to T-LEAF’s methodology is the hierarchical graph embedder, which maps the DFA representation of an LTL formula to an embedding that neural networks can consume. This two-stage embedder consists of:

- Edge Embedder (q_e): Converts propositions along DFA edges into intermediate embeddings.

- Meta Embedder (q_m): Processes the intermediate graph, converting the entire structure into a unified vector representation.
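The two-stage structure can be sketched as follows. This is an untrained toy built on several assumptions: a fixed literal-vector function stands in for the learned edge embedder q_e, and the meta embedder q_m runs a few rounds of mean-aggregation message passing with fixed random weights over the DFA graph, then mean-pools the node states into one vector. The names `edge_embed`, `meta_embed`, `PROPS`, and `DIM` are hypothetical; in T-LEAF both stages are learned, GNN-based components.

```python
import math
import random
from typing import Dict, List, Set, Tuple

PROPS = ["cook", "serve"]  # hypothetical proposition vocabulary
DIM = 4                    # hypothetical node/graph embedding width
EdgeT = Tuple[str, str, str]  # (source state, target state, guard label)


def edge_embed(label: str) -> List[float]:
    """Stand-in for q_e (not learned): one slot per proposition,
    +1 for a positive literal, -1 for a negated one, 0 if absent."""
    vec = [0.0] * len(PROPS)
    if label == "true":
        return vec
    for lit in label.split("&"):
        lit = lit.strip()
        vec[PROPS.index(lit.lstrip("!"))] = -1.0 if lit.startswith("!") else 1.0
    return vec


def matvec(w: List[List[float]], x: List[float]) -> List[float]:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def meta_embed(edges: List[EdgeT], initial: str, accepting: Set[str],
               rounds: int = 2, seed: int = 0) -> List[float]:
    """Stand-in for q_m: message passing over the DFA, then mean-pool readout."""
    rng = random.Random(seed)  # fixed random weights in place of trained ones
    w = [[rng.uniform(-1, 1) for _ in range(DIM + len(PROPS))] for _ in range(DIM)]
    nodes = sorted({s for s, _, _ in edges} | {d for _, d, _ in edges})
    # Initial node features: [is_initial, is_accepting], zero-padded to DIM.
    h: Dict[str, List[float]] = {
        v: [float(v == initial), float(v in accepting)] + [0.0] * (DIM - 2) for v in nodes
    }
    for _ in range(rounds):
        new_h = {}
        for v in nodes:
            # Message from each incoming edge: W @ (source state ++ edge embedding).
            msgs = [matvec(w, h[s] + edge_embed(lbl)) for s, d, lbl in edges if d == v]
            agg = [sum(c) / len(msgs) for c in zip(*msgs)] if msgs else [0.0] * DIM
            new_h[v] = [math.tanh(a + b) for a, b in zip(agg, h[v])]  # residual update
        h = new_h
    return [sum(c) / len(nodes) for c in zip(*h.values())]


EDGES = [("q0", "acc", "cook"), ("q0", "rej", "!cook & serve"),
         ("q0", "q0", "!cook & !serve"), ("acc", "acc", "true"), ("rej", "rej", "true")]
print(meta_embed(EDGES, "q0", {"acc"}))  # one DIM-length vector for the whole DFA
```

Keeping the edge labels' embeddings separate from the graph-level pass mirrors the split the bullets describe: propositions are embedded first, then the whole automaton is summarized.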

The embedder is trained with a triplet loss, which pulls the embedding of each DFA toward the embeddings of traces that satisfy it and pushes it away from the embeddings of traces that do not. This helps the embedding space capture meaningful relations that generalize.
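A minimal sketch of the triplet objective, assuming Euclidean distance and a margin hyperparameter (both are assumptions; the exact choices are not stated here). The anchor is a DFA embedding, the positive is the embedding of a satisfying trace, and the negative is the embedding of an unsatisfying trace.

```python
import math
from typing import List, Sequence, Tuple

Vec = Sequence[float]


def euclidean(a: Vec, b: Vec) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor: Vec, positive: Vec, negative: Vec, margin: float = 1.0) -> float:
    """max(0, d(anchor, positive) - d(anchor, negative) + margin)."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)


def batch_triplet_loss(triplets: List[Tuple[Vec, Vec, Vec]], margin: float = 1.0) -> float:
    """Average the per-triplet losses over a batch."""
    return sum(triplet_loss(a, p, n, margin) for a, p, n in triplets) / len(triplets)


print(triplet_loss([0.0, 0.0], [0.0, 1.0], [3.0, 4.0]))  # 0.0: already well separated
print(triplet_loss([0.0, 0.0], [3.0, 4.0], [0.0, 1.0]))  # 5.0: ordering is wrong
```

The loss is zero once the satisfying trace is closer to the anchor than the unsatisfying one by at least the margin, so well-separated triplets stop contributing gradient.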

Framework Application in Sequential Model Training

T-LEAF adds the logic loss to the standard task-specific loss when training sequential models. The combined objective encourages outputs that are both accurate with respect to the task and consistent with the prior symbolic knowledge. Because this knowledge enters through the loss rather than through the network design, T-LEAF can be incorporated into a variety of neural architectures.
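A minimal sketch of the combined objective, assuming the logic loss is a Euclidean distance between an embedding of the model's predicted trace and the formula embedding, scaled by a hypothetical weight `lam`. In T-LEAF the predicted trace would be embedded by the trained graph embedder; here `trace_embedding` (the mean predicted distribution) is only a hypothetical stand-in chosen so the dimensions line up.

```python
import math
from typing import List, Sequence


def cross_entropy(probs: Sequence[Sequence[float]], labels: Sequence[int]) -> float:
    """Task loss: mean negative log-likelihood of the ground-truth label per step."""
    return -sum(math.log(p[y]) for p, y in zip(probs, labels)) / len(labels)


def trace_embedding(probs: Sequence[Sequence[float]]) -> List[float]:
    """Hypothetical stand-in for embedding the predicted trace: mean distribution."""
    return [sum(col) / len(probs) for col in zip(*probs)]


def logic_loss(trace_emb: Sequence[float], formula_emb: Sequence[float]) -> float:
    """Assumed logic loss: distance between trace and formula embeddings."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(trace_emb, formula_emb)))


def combined_loss(probs, labels, formula_emb, lam: float = 0.1) -> float:
    """Task loss plus lam times the logic loss."""
    return cross_entropy(probs, labels) + lam * logic_loss(trace_embedding(probs), formula_emb)


print(combined_loss([[0.5, 0.5]], [0], [0.5, 0.5]))  # log(2): logic term is zero
```

Setting `lam` to zero recovers plain task training, which is one way to run a baseline comparison with the same code path.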

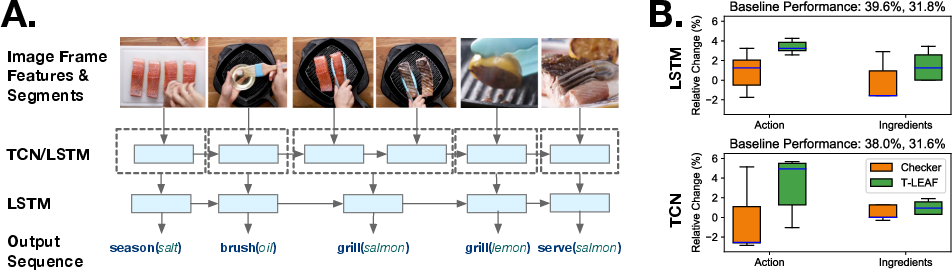

Figure 2: Sequential Human Action Recognition Experiment. T-LEAF improves performance over baseline models by incorporating temporal knowledge.

Experimental Validation

Empirical results show that T-LEAF improves performance on both tasks studied. In sequential human activity recognition, T-LEAF consistently outperformed baseline models by using temporal knowledge to inform action predictions; incorporating structured logic helped the networks capture complex temporal dependencies and raised their predictive accuracy.

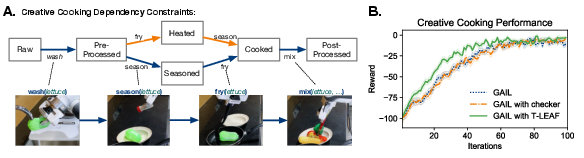

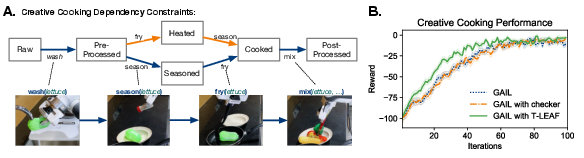

In imitation learning, T-LEAF enabled faster convergence and improved performance by embedding domain-specific temporal constraints into the policy learning process. This indicates the framework's potential applicability in complex environments where traditional learning methods struggle to efficiently utilize limited expert demonstrations.

Figure 3: Creative Cooking Imitation Learning Experiment. T-LEAF improves GAIL’s convergence rate through enhanced gradient directionality.

Conclusion

The T-LEAF framework presents a novel approach to embedding symbolic temporal knowledge into deep models through hierarchical graph embeddings of DFAs. Its ability to inject human-specified temporal knowledge into learned models makes it promising for robotics and AI applications in which temporal dynamics and structured knowledge play pivotal roles. Future work could apply symbolic embeddings in other domains and refine the embedding techniques to capture more complex relational dynamics.