- The paper evaluates an in-IDE NL2Code assistant and finds no statistically significant improvement in task completion time or code correctness when developers use it.

- It employs a PyCharm plugin that combines tree-based semantic parsing for generation with a custom Stack Overflow retrieval engine, evaluated via controlled experiments with 31 developers.

- Users appreciate the convenience of in-IDE suggestions, yet generated and retrieved code often requires substantial modification, which points to key areas for improvement.

In-IDE Code Generation: Promise and Challenges

This paper investigates the potential of integrating natural language to code (NL2Code) generation and retrieval techniques within the software development workflow. Through a controlled human study, the authors evaluate the impact of an in-IDE NL2Code assistant on developer productivity, code quality, and overall experience. The findings highlight the promise and challenges of current NL2Code technology, providing valuable insights for future research and development in this field.

Study Design and Implementation

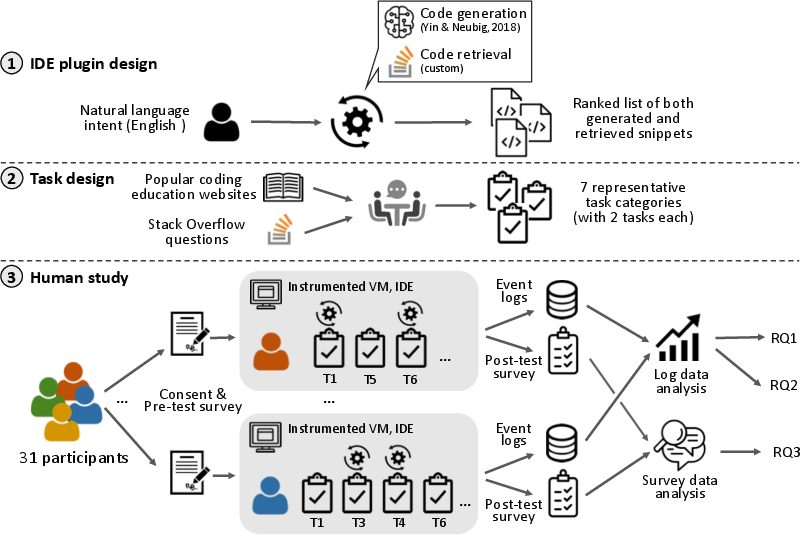

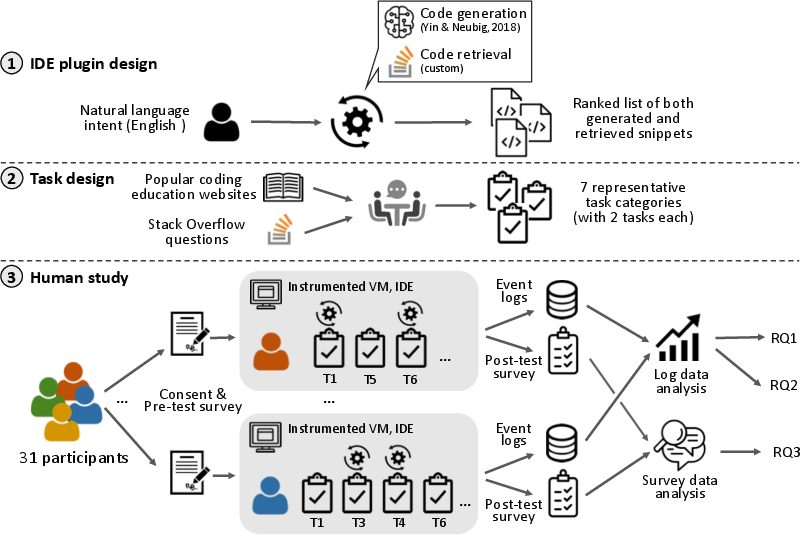

The study involved the development of a PyCharm plugin that combines code generation and retrieval functionalities. The code generation component utilizes a tree-based semantic parsing approach, while the code retrieval component leverages a custom Stack Overflow code search engine. The plugin takes natural language queries as input and presents developers with a ranked list of code snippets generated and retrieved by the respective models.
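To make the query-to-list flow concrete, here is a minimal sketch of how the two sources could be merged into one list. The summary does not say how the plugin orders or interleaves its results, so the ordering used here (generated candidates first, then retrieved ones, each keeping its own model's rank) is an assumption, and `Candidate`, `combine_candidates`, and the two stub models are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Candidate:
    code: str
    source: str  # "generated" or "retrieved"
    rank: int    # 1-based rank assigned by the model that produced it


# Hypothetical model interface: a query string in, snippets out (best first).
SnippetModel = Callable[[str], List[str]]


def combine_candidates(query: str, generate: SnippetModel,
                       retrieve: SnippetModel, k: int = 5) -> List[Candidate]:
    """Return up to k generated snippets followed by up to k retrieved ones."""
    generated = [Candidate(code, "generated", i)
                 for i, code in enumerate(generate(query)[:k], start=1)]
    retrieved = [Candidate(code, "retrieved", i)
                 for i, code in enumerate(retrieve(query)[:k], start=1)]
    return generated + retrieved


# Stub models standing in for the real generator and search engine.
def fake_generator(query: str) -> List[str]:
    return ["open(path).read()"]


def fake_retriever(query: str) -> List[str]:
    return ["with open(path) as f:\n    text = f.read()",
            "Path(path).read_text()"]


results = combine_candidates("read a file into a string",
                             fake_generator, fake_retriever)
for cand in results:
    print(f"[{cand.source} #{cand.rank}] {cand.code!r}")
```

Tagging each candidate with its source keeps the two models distinguishable in the list, which matters for any later analysis of which kind of snippet a user picked.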

To assess the effectiveness of the NL2Code assistant, a controlled experiment was conducted with 31 participants. Participants were assigned a series of Python programming tasks spanning various difficulty levels and application domains, and were asked to complete these tasks with and without the aid of the plugin. A virtual environment was instrumented to collect detailed data on user interactions, code edits, web browsing activities, and task completion times. Figure 1 gives an overview of the experimental setup.

Figure 1: Overview of our study.

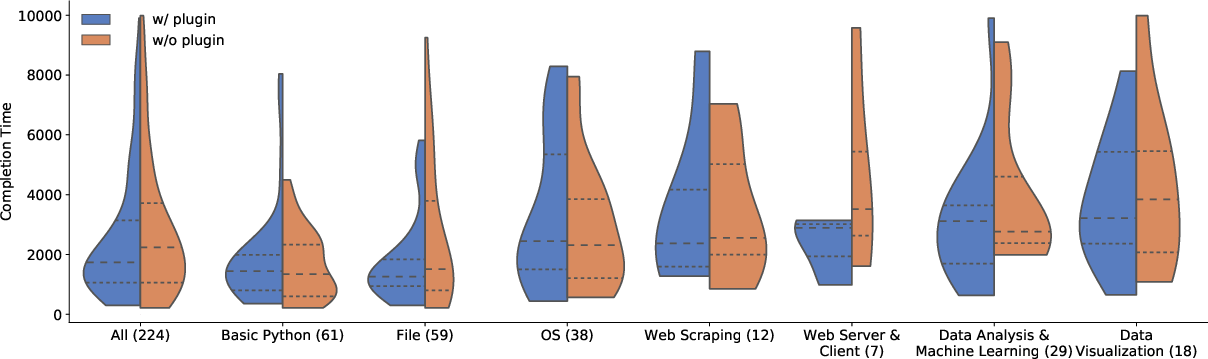

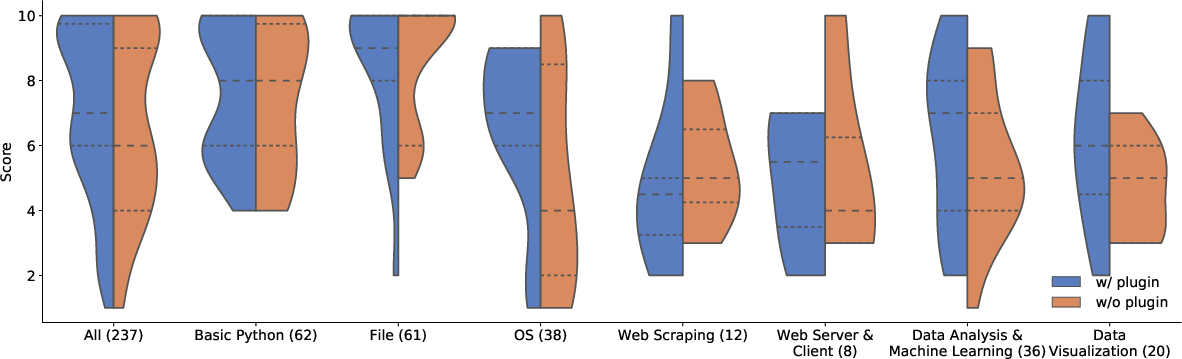

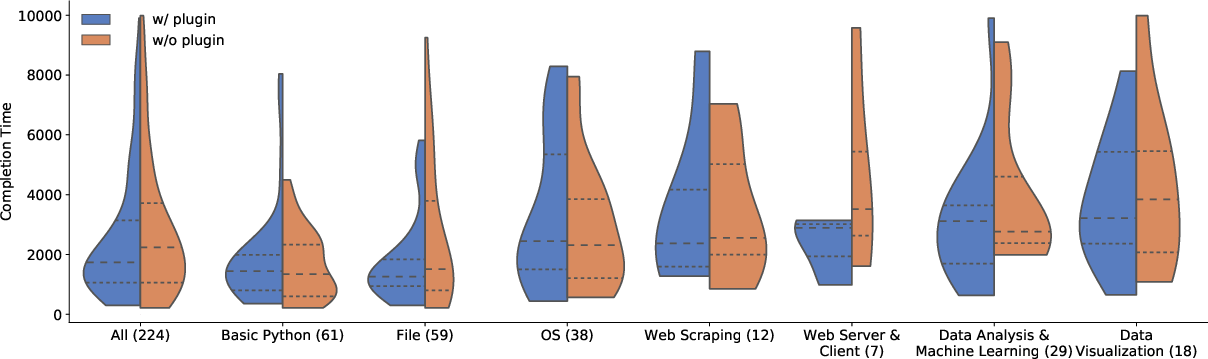

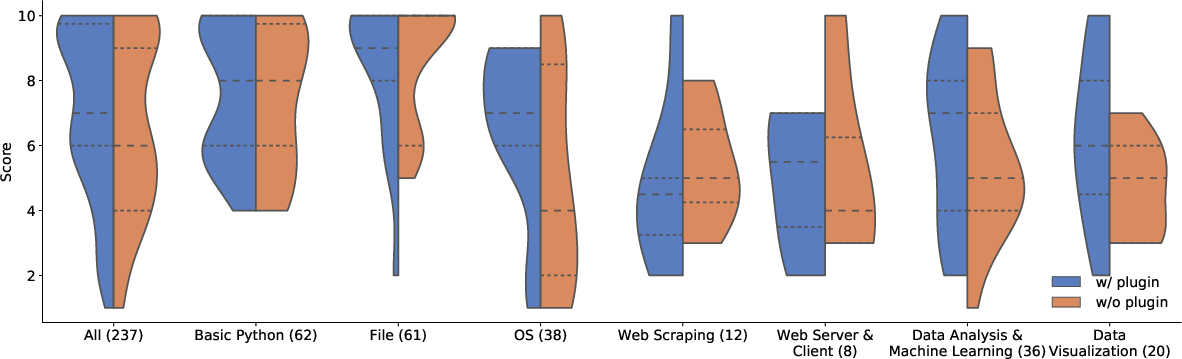

One of the primary research questions explored in the paper is the impact of the NL2Code assistant on task completion time and program correctness. Quantitative results from the study revealed no statistically significant gains in either of these metrics when using the plugin. Participants with access to the NL2Code assistant did not complete tasks faster or produce more correct code compared to those who did not use the plugin.

Figure 2 shows the distribution of task completion times with and without the plugin, and Figure 3 shows the corresponding distribution of task correctness scores.

Figure 2: Distributions of task completion times (in seconds) across tasks and conditions (w/ and w/o using the plugin). The horizontal dotted lines represent 25% and 75% quartiles, and the dashed lines represent medians.

Figure 3: Distributions of task correctness scores (0-10 scale) across tasks and conditions. The horizontal dotted lines represent 25% and 75% quartiles, and the dashed lines represent medians.
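For readers unfamiliar with what "no statistically significant gain" means in a two-condition comparison, the sketch below shows one generic way to test a difference in mean completion time. This summary does not state which statistical method the authors used, so the permutation test here is a stand-in, not their analysis. The numbers are made-up placeholders, not study data, and `permutation_test` is a hypothetical helper.

```python
import random
from statistics import mean
from typing import Sequence


def permutation_test(a: Sequence[float], b: Sequence[float],
                     n_resamples: int = 2000, seed: int = 0) -> float:
    """Two-sided permutation test on the difference in means.

    Returns an approximate p-value: the share of random relabelings whose
    mean difference is at least as large as the observed one.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    observed = abs(mean(a) - mean(b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:len(a)]) - mean(pooled[len(a):]))
        if diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_resamples + 1)


# Placeholder completion times in seconds (NOT data from the study).
with_plugin = [610, 720, 540, 800, 655, 690]
without_plugin = [640, 700, 580, 770, 630, 720]

p_value = permutation_test(with_plugin, without_plugin)
print(f"approximate p-value: {p_value:.3f}")
```

A large p-value means the observed gap is well within what random assignment of the condition labels would produce. A real analysis of this kind of study would also need to account for repeated measures per participant and for differences between tasks, which this sketch ignores.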

Analysis of User Queries and Code Snippets

The study also explores the characteristics of user queries and the quality of code snippets generated and retrieved by the plugin. The findings indicate that users tend to favor code generation for basic Python functionality, while code retrieval is preferred for more complex tasks involving specialized libraries.

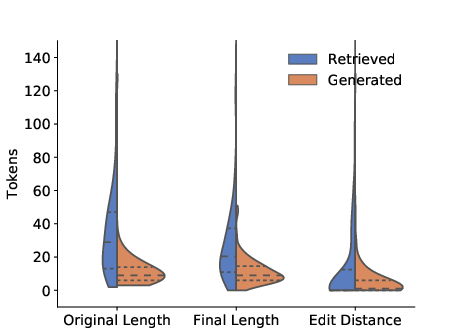

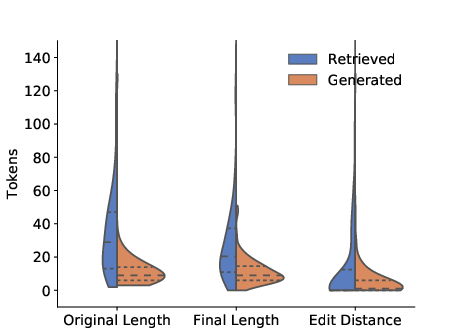

Furthermore, an analysis of code snippet edits reveals that snippets retrieved from Stack Overflow often require more modifications to fit the specific context of the user's code. This suggests that retrieved snippets may contain spurious elements or boilerplate code that needs to be removed or adapted. Figure 4 compares code snippet lengths before and after potential edits.

Figure 4: Split violin plots comparing the length (in tokens) of the code snippets chosen by the study participants across all successful queries, before and after potential edits in the IDE. The horizontal dotted lines represent 25% and 75% quartiles, and the dashed lines represent medians.
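A before/after comparison of token lengths like the one described above can be approximated with Python's standard library. The sketch below is only illustrative: the summary does not specify the tokenizer or the edit measure the authors used, so the use of the `tokenize` module and of `difflib.SequenceMatcher` is an assumption, and `code_tokens` and `edit_summary` are hypothetical helper names.

```python
import difflib
import io
import tokenize
from typing import Dict, List

# Token types that carry layout rather than code content.
_LAYOUT = {tokenize.NEWLINE, tokenize.NL, tokenize.INDENT,
           tokenize.DEDENT, tokenize.COMMENT, tokenize.ENDMARKER}


def code_tokens(source: str) -> List[str]:
    """Tokenize Python source, dropping layout tokens and comments.

    Raises tokenize.TokenError on incomplete code (e.g. an unclosed bracket).
    """
    readline = io.StringIO(source).readline
    return [tok.string for tok in tokenize.generate_tokens(readline)
            if tok.type not in _LAYOUT]


def edit_summary(before: str, after: str) -> Dict[str, float]:
    """Token counts before and after an edit, plus a 0..1 similarity score."""
    b, a = code_tokens(before), code_tokens(after)
    similarity = difflib.SequenceMatcher(None, b, a).ratio()
    return {"tokens_before": len(b), "tokens_after": len(a),
            "similarity": similarity}


# A retrieved snippet with a boilerplate import the user then removes.
retrieved = "import os\nprint(os.getcwd())\n"
edited = "print(os.getcwd())\n"

summary = edit_summary(retrieved, edited)
print(summary)
```

In this example the edited snippet is shorter than the retrieved one, the pattern the text attributes to boilerplate being trimmed from Stack Overflow snippets.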

User Perceptions and Feedback

Qualitative surveys conducted as part of the study provide insights into user perceptions of the NL2Code assistant. Participants generally appreciated the convenience of having code snippets readily available within the IDE, reducing the need to switch to external web browsers.

However, users also expressed concerns about the quality and relevance of the generated and retrieved code snippets. Many participants noted that the plugin's suggestions often required modifications to be directly usable. Users also suggested that providing additional context and documentation alongside the code snippets would be beneficial for understanding and utilizing the suggestions effectively.

Implications and Future Directions

The findings of this study have several implications for the development and deployment of NL2Code assistants in software development workflows. The results suggest that while current NL2Code technology holds promise, there is a need to further improve the accuracy and relevance of code generation and retrieval models. Additional factors, such as providing documentation and contextual information, should also be considered to enhance the usability and impact of these tools.

The authors propose several concrete recommendations for future work, including:

- Combining code generation with code retrieval to leverage the strengths of both approaches.

- Incorporating the user's local context into the input to improve the relevance of code suggestions.

- Considering the user's local context in the output to tailor the generated or retrieved code to the specific development environment.

- Providing more context and documentation for each returned snippet to facilitate understanding and utilization.

- Developing a unified and intuitive query syntax to simplify the interaction with the NL2Code assistant.

- Implementing a dialogue-based query capability to enable users to refine their queries and obtain more precise results.
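As one hypothetical reading of the recommendation to incorporate the user's local context into the input, the sketch below pulls imported modules and assigned variable names out of the current file and attaches them to the query. The authors do not prescribe this mechanism in the summary. The function names and the shape of the returned dictionary are assumptions made purely for illustration.

```python
import ast
from typing import Dict, List, Union


def local_context(source: str) -> Dict[str, List[str]]:
    """Collect imported module names and assigned variable names from source."""
    tree = ast.parse(source)
    imports, variables = set(), set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            imports.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module:
            imports.add(node.module)
        elif isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            variables.add(node.id)
    return {"imports": sorted(imports), "variables": sorted(variables)}


def contextual_query(query: str, source: str) -> Dict[str, Union[str, List[str]]]:
    """Bundle the natural language query with context from the open file."""
    return {"query": query, **local_context(source)}


# Hypothetical editor buffer at the moment the user issues a query.
editor_buffer = "import pandas as pd\ndf = pd.read_csv('data.csv')\n"

request = contextual_query("drop rows with missing values", editor_buffer)
print(request)
```

A model that receives `df` and `pandas` alongside the query could, in principle, return a snippet that already uses the user's variable name, which would reduce the post-insertion edits the study observed.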

Conclusion

This paper evaluates the potential and challenges of integrating NL2Code technology into the software development workflow through a controlled human study. Its findings highlight the need for continued research and development in this field, with a focus on improving the accuracy, relevance, and usability of NL2Code assistants. Addressing the identified pain points and adopting the proposed recommendations could make future NL2Code tools more useful in practice and make programming more accessible and efficient for developers of all skill levels.