- The paper introduces a novel human-centric method that shuffles video frames to isolate and identify temporal action classes.

- It builds a Temporal Dataset from classes in existing datasets and introduces a temporal score to evaluate time-sensitive recognition performance.

- Benchmark results show that models with temporal convolutions, like R2+1D, outperform static models, underscoring the importance of temporal dynamics.

Discovering Temporal Data for Temporal Modeling: An Expert Analysis

Temporal understanding plays a pivotal role in video analysis, yet current datasets often fail to capture actions that genuinely depend on temporal information. The paper "Only Time Can Tell: Discovering Temporal Data for Temporal Modeling" addresses this issue by proposing a perceptual methodology to identify action classes that necessitate temporal understanding, thus establishing a Temporal Dataset as a benchmark for evaluating temporal models in video recognition.

Methodology

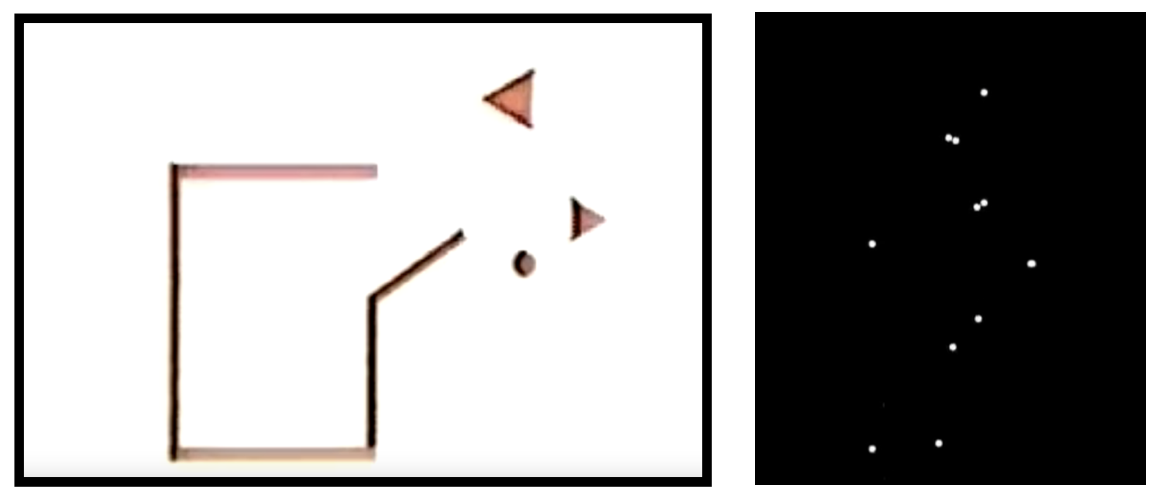

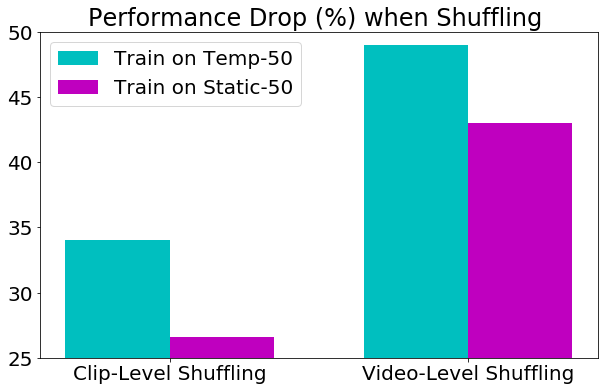

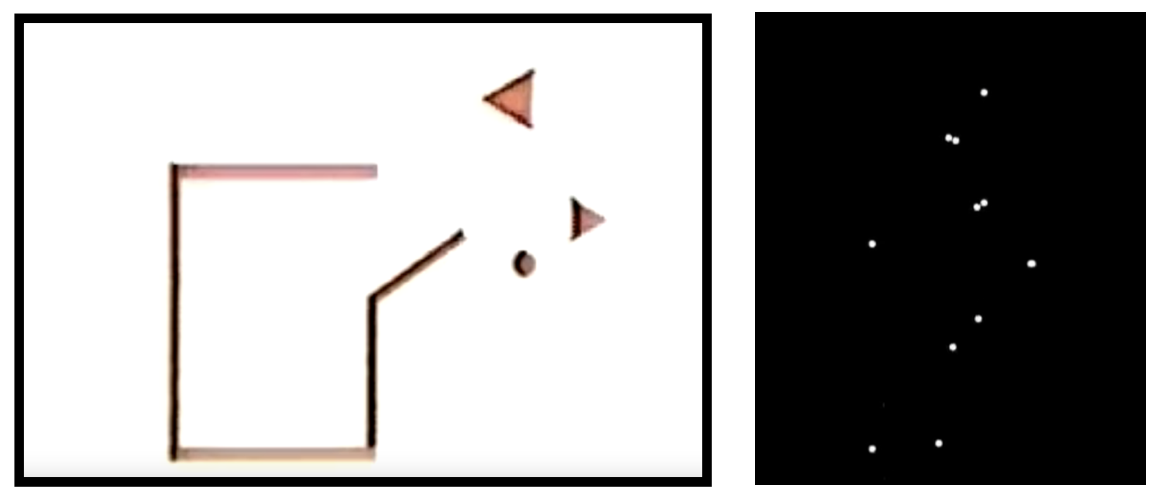

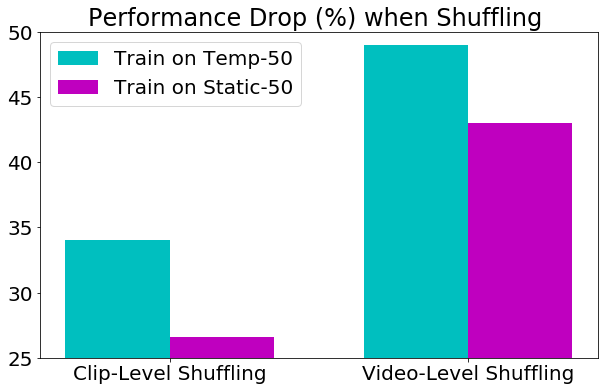

Temporal understanding is evaluated through human perception rather than computational modeling, which decouples the dataset from the biases of currently available models. The methodology shuffles the frames of each video to remove temporal information, then runs human annotation experiments to find actions that are difficult to recognize without temporal cues. This process identifies "temporal classes", for which motion is essential to recognition.
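The frame-shuffling step can be sketched as follows. This is a minimal illustration, not the paper's implementation: the function name `shuffle_frames`, the `seed` parameter, and the representation of a clip as a list of frame labels are all assumptions made for the example.

```python
import random

def shuffle_frames(frames, seed=None):
    """Return a copy of `frames` in random temporal order.

    The set of frames is unchanged, so appearance cues survive,
    but the ordering (the temporal information) is destroyed.
    """
    rng = random.Random(seed)      # local RNG so the caller's global state is untouched
    shuffled = list(frames)        # copy: the original clip stays in order
    rng.shuffle(shuffled)
    return shuffled

# Hypothetical 8-frame clip; real frames would be image arrays.
clip = [f"frame_{i:02d}" for i in range(8)]
scrambled = shuffle_frames(clip, seed=0)
```

Because the shuffled clip contains exactly the same frames as the original, any drop in recognition can be attributed to the missing ordering rather than to missing visual content.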

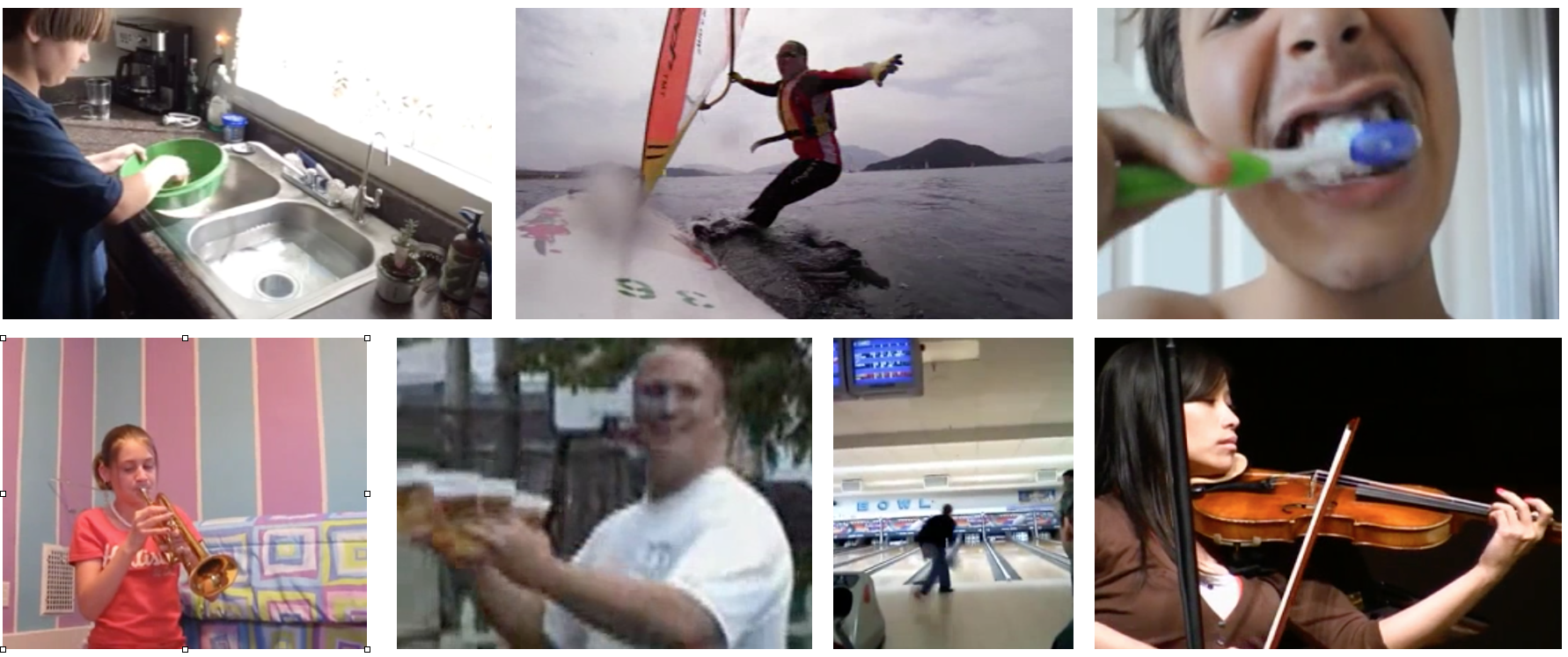

Figure 1: Can you guess these actions? "yawning", "sneezing" or "crying"?

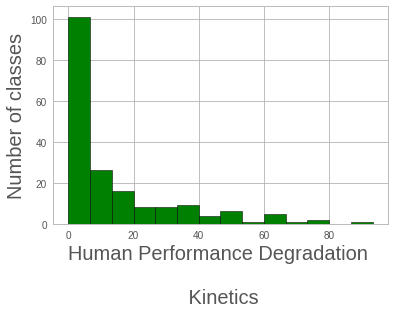

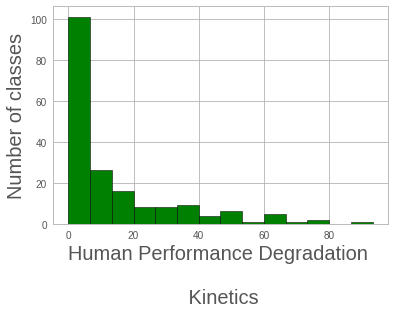

The challenge is to determine which action classes are genuinely temporal. This is done by measuring how much human recognition drops when temporal information is scrambled. The identified classes are then consolidated into a Temporal Dataset that draws on both the Kinetics and Something-Something datasets, aiming for a comprehensive analysis of temporal importance.
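One way to picture the selection step is to rank classes by the drop in human accuracy between ordered and shuffled clips. The sketch below is only an illustration: the class names, the accuracy values, and the `min_drop` threshold are invented for the example, and the paper's actual selection criterion may differ.

```python
def select_temporal_classes(human_acc, min_drop):
    """Pick classes whose human accuracy falls by at least `min_drop`
    when frames are shuffled.

    human_acc maps class name -> (accuracy on ordered clips,
                                  accuracy on shuffled clips).
    Returns class names sorted by largest drop first.
    """
    drops = {name: ordered - shuffled
             for name, (ordered, shuffled) in human_acc.items()}
    selected = [name for name, drop in drops.items() if drop >= min_drop]
    return sorted(selected, key=lambda name: drops[name], reverse=True)

# Hypothetical annotation results (not taken from the paper).
human_acc = {
    "static_like_class": (0.95, 0.93),    # barely affected by shuffling
    "temporal_like_class": (0.90, 0.30),  # collapses without frame order
    "mixed_class": (0.85, 0.45),
}
temporal_classes = select_temporal_classes(human_acc, min_drop=0.3)
```

With these made-up numbers, the class that is almost unaffected by shuffling is excluded, while the two classes with large drops are kept.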

Temporal Dataset Properties

The Temporal Dataset differs statistically from existing static datasets. According to a Kolmogorov-Smirnov test, the temporal classes differ significantly in their representation and recognition metrics.
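For readers unfamiliar with the test, the two-sample Kolmogorov-Smirnov statistic is the largest gap between the empirical cumulative distribution functions of two samples. The sketch below computes that statistic with the standard library only. Which per-class quantity is compared is an assumption here, and the sample values are invented. In practice, `scipy.stats.ks_2samp` also returns a p-value.

```python
from bisect import bisect_right

def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: max |ECDF_a(x) - ECDF_b(x)| over all x."""
    a, b = sorted(sample_a), sorted(sample_b)
    largest_gap = 0.0
    # The ECDFs only change at observed values, so checking those suffices.
    for x in sorted(set(a) | set(b)):
        ecdf_a = bisect_right(a, x) / len(a)  # fraction of a that is <= x
        ecdf_b = bisect_right(b, x) / len(b)  # fraction of b that is <= x
        largest_gap = max(largest_gap, abs(ecdf_a - ecdf_b))
    return largest_gap

# Hypothetical per-class scores for temporal and static classes.
temporal_scores = [0.55, 0.60, 0.62, 0.70]
static_scores = [0.10, 0.15, 0.20, 0.58]
gap = ks_statistic(temporal_scores, static_scores)
```

A statistic near 0 means the two distributions look alike, and a statistic near 1 means they barely overlap.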

Figure 2: Temporal task.

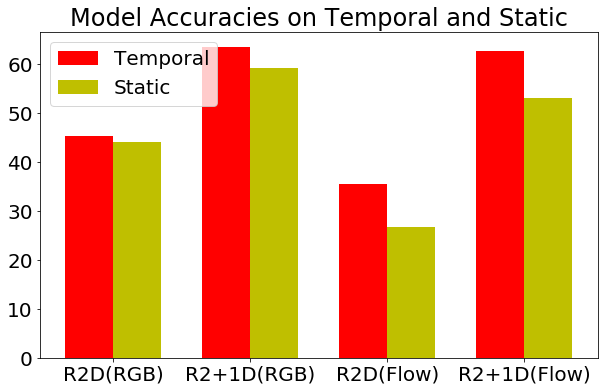

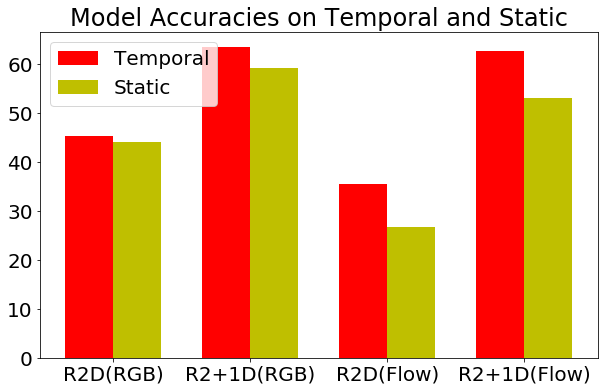

Consequently, networks capable of capturing temporal features perform better on the Temporal Dataset. For example, models that integrate temporal convolutions, such as R2+1D, achieve higher accuracy than temporally naive models such as R2D, supporting the hypothesis that temporal capabilities are critical to understanding these classes.

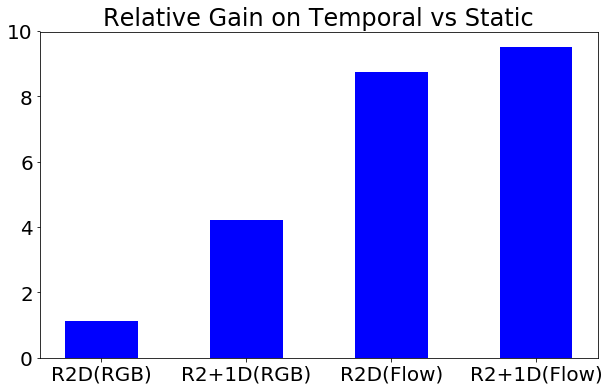

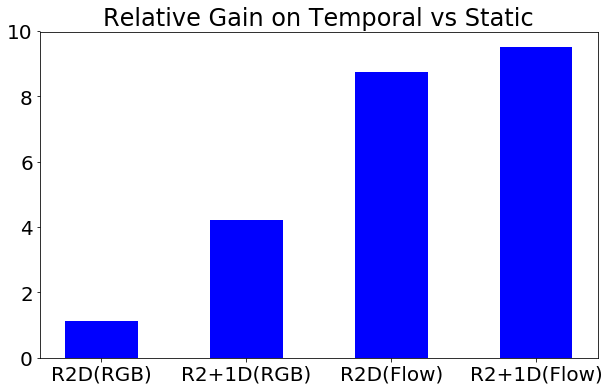

Benchmarking Temporal Models

The Temporal Dataset provides a benchmark for evaluating the temporal modeling capabilities of current state-of-the-art networks such as R2+1D and I3D. The benchmark uses a novel temporal score, which measures the relative gain of a network on temporal classes over static classes, and it reveals that networks are biased differently in their action recognition performance. Notably, flow-based models show substantial improvements on the Temporal Dataset compared to their RGB counterparts.
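The exact formula for the temporal score is not given in this summary. As a rough sketch, one plausible reading of "relative gain on temporal classes over static classes" is the accuracy difference normalized by the static accuracy. That formula, the function name `temporal_score`, and the accuracy values below are all assumptions for illustration.

```python
def temporal_score(acc_temporal, acc_static):
    """Assumed form of a relative-gain score:
    (accuracy on temporal classes - accuracy on static classes)
    divided by accuracy on static classes.

    Positive values mean the network does relatively better on
    temporal classes; negative values mean it favors static ones.
    """
    if acc_static <= 0:
        raise ValueError("acc_static must be positive")
    return (acc_temporal - acc_static) / acc_static

# Hypothetical accuracies for two made-up networks.
score_a = temporal_score(acc_temporal=0.60, acc_static=0.50)
score_b = temporal_score(acc_temporal=0.40, acc_static=0.50)
```

Under this reading, two networks with the same static accuracy can be ranked by how much of it they retain or gain on temporal classes, which is the kind of bias the benchmark is meant to expose.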

Figure 3: Histogram of performance drop (%).

Training on Temporal Data

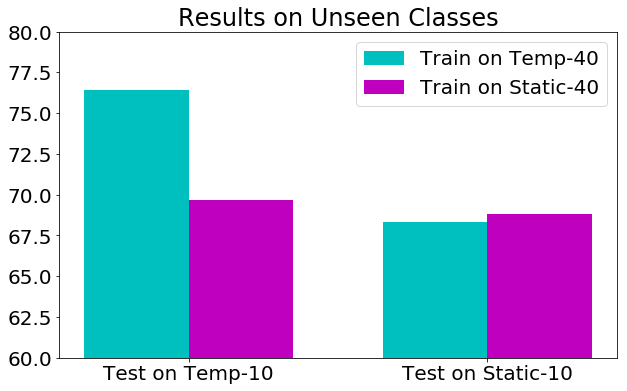

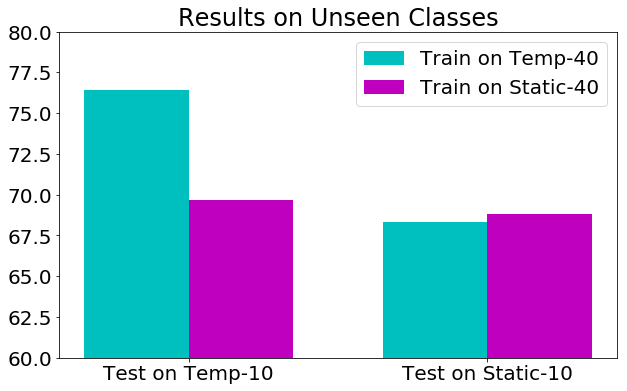

The Temporal Dataset is useful for training as well as evaluation. Training on temporal classes shows a clear benefit: the resulting models generalize better to unseen classes and are more sensitive to temporal structure.

Figure 4: Absolute Accuracy (%).

Models trained on the Temporal Dataset develop features that respond more strongly to temporal variation, an essential characteristic for handling unfamiliar test scenarios.

Figure 5: Transfer learning.

Conclusion

The study offers a framework for disentangling temporal dynamics from static representations in video recognition. Its human-centric approach to identifying temporal content lays a foundation for models with better temporal understanding. The Temporal Dataset exposes the limitations of current datasets and supports the development of models capable of nuanced temporal reasoning, a crucial step towards robust video analytics. Future work could refine this methodology and expand the dataset to further close the gap in temporal comprehension.