Adding Value by Combining Business and Sensor Data: An Industry 4.0 Use Case

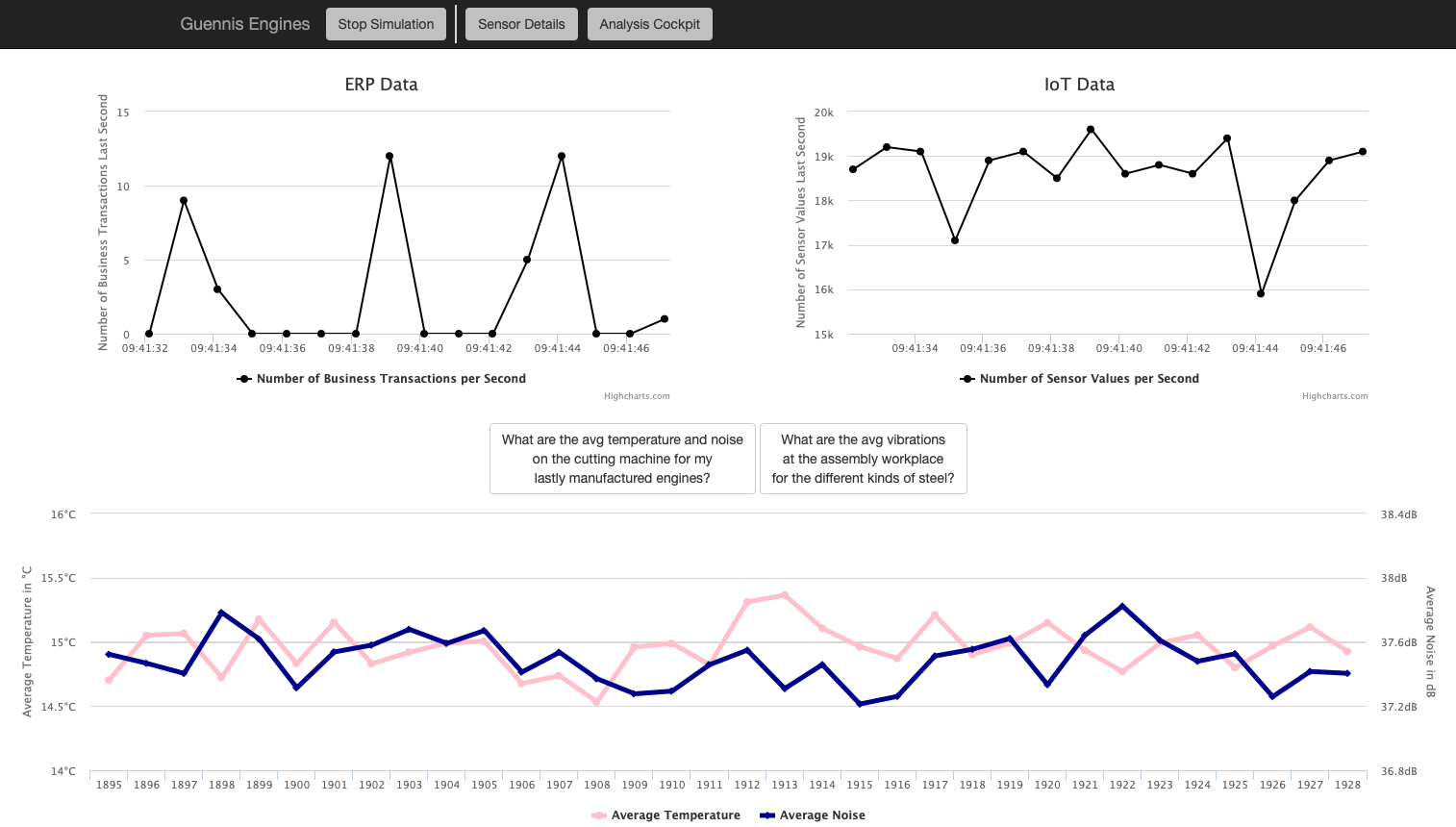

Abstract: Industry 4.0 and the Internet of Things are recent developments that have led to the creation of new kinds of manufacturing data. Linking this sensor data to traditional business information is crucial for enterprises to take advantage of the data's full potential. In this paper, we present a demo that lets users experience this data integration, both vertically between technical and business contexts and horizontally along the value chain. The tool simulates a manufacturing company that continuously produces both business and sensor data, and it supports ad-hoc queries that answer specific business questions. To adapt the simulation to different environments, users can configure sensor characteristics to their needs.


Practical Applications

Immediate Applications

Below are concrete use cases that can be deployed now using the paper’s demo system, methods, and data model.

- ERP–IoT integration sandbox for proof-of-concepts
  - Sectors: Manufacturing, Software/Data Platforms
  - What: Use the open-source demo to rapidly prototype vertical (sensor↔ERP) and horizontal (value-chain) data integrations; validate time-based joins between shop-floor events and production orders.
  - Tools/Workflows: Demo app as a testbed; SQL ad-hoc analytics; columnar in-memory DB; JSON-based sensor config.
  - Assumptions/Dependencies: Accurate workplace entry/exit timestamps in ERP; reliable time synchronization across machines; mapping between SENSOR_ID/WORKPLACE_ID and ERP entities.
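The time-based join mentioned in this use case can be illustrated with a minimal Python sketch. It assumes that the ERP side records when a production order entered and left a workplace and that each sensor reading carries a workplace ID and a timestamp. The class names, field names, and sample values are hypothetical and are not taken from the demo's schema.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class WorkplaceVisit:
    """ERP side: a production order occupying a workplace (hypothetical shape)."""
    order_id: str
    workplace_id: str
    entered: datetime
    left: datetime


@dataclass(frozen=True)
class SensorReading:
    """Shop-floor side: one measurement (hypothetical shape)."""
    sensor_id: str
    workplace_id: str
    ts: datetime
    value: float


def join_readings_to_orders(visits, readings):
    """Attach each reading to the order that was at the same workplace at that time.

    A reading matches a visit when entered <= ts < left. Readings that fall
    outside every visit are dropped.
    """
    joined = []
    for r in readings:
        for v in visits:
            if v.workplace_id == r.workplace_id and v.entered <= r.ts < v.left:
                joined.append((v.order_id, r.sensor_id, r.value))
                break
    return joined


visits = [
    WorkplaceVisit("PO-1", "WP-A", datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 8, 30)),
    WorkplaceVisit("PO-2", "WP-A", datetime(2024, 1, 1, 8, 30), datetime(2024, 1, 1, 9, 0)),
]
readings = [
    SensorReading("S-1", "WP-A", datetime(2024, 1, 1, 8, 10), 0.4),
    SensorReading("S-1", "WP-A", datetime(2024, 1, 1, 8, 45), 0.9),
    SensorReading("S-1", "WP-A", datetime(2024, 1, 1, 9, 15), 0.2),  # no order present
]
joined = join_readings_to_orders(visits, readings)
```

The nested loop keeps the idea visible; for realistic volumes one would likely sort both sides by time or push the interval join into the database.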

- Supplier quality analytics (out-of-the-box queries)
  - Sectors: Manufacturing, Supply Chain
  - What: Build dashboards correlating vibration/temperature/noise anomalies with suppliers to surface quality issues early and update supplier scorecards.
  - Tools/Workflows: Predefined query “average vibrations by supplier”; KPI tiles for anomaly frequency by supplier; alerting on threshold breaches.
  - Assumptions/Dependencies: Clean supplier–order linkage in ERP; calibrated sensors; stable units/metadata (e.g., vibration units).
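A query of the "average vibrations by supplier" kind might look like the following sketch, run here against an in-memory SQLite database from Python. The table and column names (`orders`, `visits`, `sensor_data`, `left_at`, `kind`) and the sample rows are assumptions made for illustration; the demo's actual schema and database engine may differ.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (order_id TEXT PRIMARY KEY, supplier TEXT);
CREATE TABLE visits (order_id TEXT, workplace_id TEXT, entered INTEGER, left_at INTEGER);
CREATE TABLE sensor_data (sensor_id TEXT, workplace_id TEXT, kind TEXT, ts INTEGER, value REAL);
""")
conn.executemany("INSERT INTO orders VALUES (?, ?)",
                 [("PO-1", "Supplier A"), ("PO-2", "Supplier B")])
conn.executemany("INSERT INTO visits VALUES (?, ?, ?, ?)",
                 [("PO-1", "WP-A", 0, 100), ("PO-2", "WP-A", 100, 200)])
conn.executemany("INSERT INTO sensor_data VALUES (?, ?, ?, ?, ?)", [
    ("S-1", "WP-A", "vibration", 10, 0.25),
    ("S-1", "WP-A", "vibration", 50, 0.75),
    ("S-1", "WP-A", "vibration", 150, 1.0),
    ("S-2", "WP-A", "temperature", 150, 40.0),  # ignored by the vibration filter
])

# Link each vibration reading to the order present at the workplace at that
# time, then to the order's supplier, and average per supplier.
rows = conn.execute("""
SELECT o.supplier, AVG(s.value) AS avg_vibration
FROM sensor_data AS s
JOIN visits AS v
  ON v.workplace_id = s.workplace_id
 AND s.ts >= v.entered AND s.ts < v.left_at
JOIN orders AS o ON o.order_id = v.order_id
WHERE s.kind = 'vibration'
GROUP BY o.supplier
ORDER BY o.supplier
""").fetchall()
```

The result holds one row per supplier; a dashboard tile or scorecard could read directly from such a result set.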

- Workplace KPI monitoring and root-cause triage
  - Sectors: Manufacturing Operations
  - What: Monitor average temperature/noise per workstation for recent products; investigate spikes linked to throughput drops or rework.
  - Tools/Workflows: Real-time ingestion charts; ad-hoc SQL; pivot by workplace/order; simple thresholds for alerts.
  - Assumptions/Dependencies: Near-real-time ingestion to the in-memory DB; well-defined WORKPLACE_IDs; sufficient sensor placement coverage.
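As a rough illustration of the "simple thresholds for alerts" idea, the sketch below averages readings per workplace and lists the workplaces whose average exceeds a limit. The function names, the limit, and the sample data are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def workplace_averages(readings):
    """Average value per workplace from (workplace_id, value) pairs."""
    by_workplace = defaultdict(list)
    for workplace_id, value in readings:
        by_workplace[workplace_id].append(value)
    return {wp: mean(values) for wp, values in by_workplace.items()}


def breaches(averages, limit):
    """Workplaces whose average lies strictly above the limit, sorted by ID."""
    return sorted(wp for wp, avg in averages.items() if avg > limit)


# Hypothetical temperature readings in degrees Celsius.
temperature = [("WP-A", 40.0), ("WP-A", 44.0), ("WP-B", 61.0), ("WP-B", 65.0)]
averages = workplace_averages(temperature)
alerts = breaches(averages, limit=60.0)
```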

- Synthetic data generation for benchmarking data pipelines
  - Sectors: Software Engineering, Data Engineering, Database Systems
  - What: Generate realistic ERP+sensor loads to stress-test ETL/ELT jobs, streaming joins, and query optimizers; compare performance across architectures.
  - Tools/Workflows: Adjustable sensor rates; workload replays; query performance tracking; CI pipelines that run data-volume stress tests.
  - Assumptions/Dependencies: Access to representative hardware; observability for throughput/latency metrics; alignment of schema to target systems.
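A generator with an adjustable sensor rate could be sketched as follows. This is not the demo's simulator: the `SensorSpec` fields and the Gaussian noise model are assumptions chosen to keep the example short, and the seed makes runs repeatable so that a workload can be replayed.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorSpec:
    """Hypothetical sensor description: emission rate and value distribution."""
    sensor_id: str
    rate_hz: float
    mean: float
    stddev: float


def generate(spec, duration_s, seed=0):
    """Return (sensor_id, seconds_since_start, value) tuples at a fixed rate."""
    rng = random.Random(seed)  # seeded so the same workload can be replayed
    count = int(duration_s * spec.rate_hz)
    period = 1.0 / spec.rate_hz
    return [
        (spec.sensor_id, i * period, rng.gauss(spec.mean, spec.stddev))
        for i in range(count)
    ]


slow = generate(SensorSpec("S-temp", 1.0, 40.0, 2.0), duration_s=10)
fast = generate(SensorSpec("S-vib", 100.0, 0.5, 0.1), duration_s=10)
# Raising rate_hz from 1 to 100 scales the row count from 10 to 1000.
```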

- Curriculum and hands-on labs for Industry 4.0 data integration
  - Sectors: Education, Academic Research
  - What: Teach vertical/horizontal integration, time-based joins, and KPI design; run controlled experiments on data volumes and query performance.
  - Tools/Workflows: Lab scripts; SQL exercises; scenario-based case studies (e.g., supplier impact); student projects extending the demo.
  - Assumptions/Dependencies: Basic SQL skills; classroom compute resources; instructor-provided scenarios and datasets.

- Data model and governance template for ERP–IoT convergence

- Sectors: Enterprise IT, Data Governance

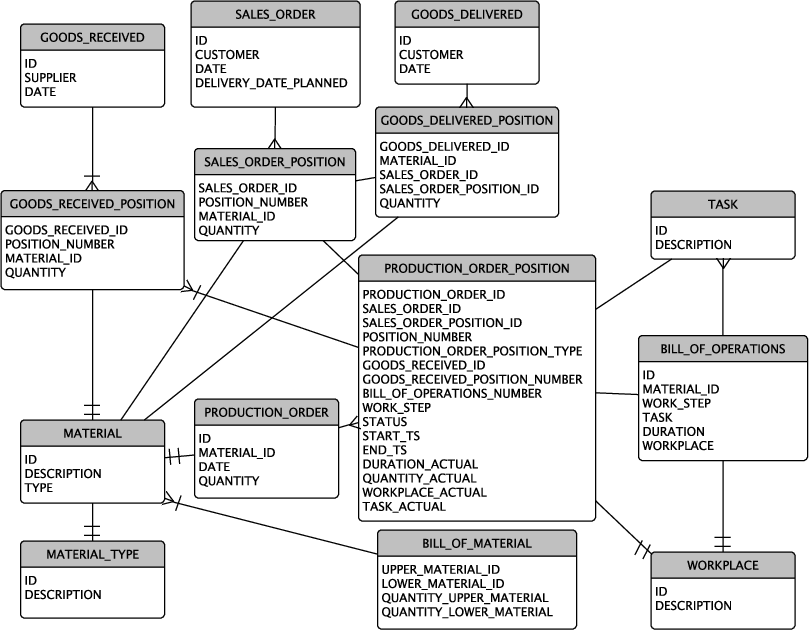

- What: Reuse the head/item ERP schema and sensor table pattern to define minimal viable schemas, lineage, and unit/ID standards for integration programs.

- Tools/Workflows: Schema blueprints; conformance checks (IDs, units, timestamps); data catalog entries linking ERP and IoT entities.

- Assumptions/Dependencies: Compatibility with existing ERP (e.g., SAP-like head/item); agreed-upon units/measurement taxonomies.
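The conformance checks on IDs, units, and timestamps might be sketched as below. The row layout, the `kind`, `unit`, and `ts` field names, and the allowed-unit table are assumptions for illustration; only `SENSOR_ID` and `WORKPLACE_ID` are identifiers that appear on this page.

```python
from datetime import datetime

# Hypothetical unit taxonomy agreed for the integration program.
ALLOWED_UNITS = {"temperature": {"degC"}, "vibration": {"mm/s"}, "noise": {"dB"}}


def check_reading(row, known_workplaces):
    """Return a list of conformance problems for one sensor row (empty if clean)."""
    problems = []
    if not row.get("SENSOR_ID"):
        problems.append("missing SENSOR_ID")
    if row.get("WORKPLACE_ID") not in known_workplaces:
        problems.append("unknown WORKPLACE_ID")
    if row.get("unit") not in ALLOWED_UNITS.get(row.get("kind"), set()):
        problems.append("unexpected unit")
    try:
        ts = datetime.fromisoformat(row.get("ts", ""))
        if ts.tzinfo is None:
            problems.append("timestamp lacks timezone")
    except (TypeError, ValueError):
        problems.append("unparseable timestamp")
    return problems


workplaces = {"WP-A", "WP-B"}
good = {"SENSOR_ID": "S-1", "WORKPLACE_ID": "WP-A", "kind": "vibration",
        "unit": "mm/s", "ts": "2024-01-01T08:00:00+00:00"}
bad = {"SENSOR_ID": "", "WORKPLACE_ID": "WP-Z", "kind": "vibration",
       "unit": "g", "ts": "2024-01-01 08:00"}
good_problems = check_reading(good, workplaces)
bad_problems = check_reading(bad, workplaces)
```

Returning a list of problems rather than raising on the first one lets a catalog or CI job report every violation for a row at once.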

- Sensor deployment planning and change impact analysis
  - Sectors: Operations, Robotics/Automation
  - What: Simulate adding/removing sensors and rate changes to estimate ingestion loads and analytic sensitivity before buying hardware.
  - Tools/Workflows: JSON sensor config experiments; what-if scenarios; capacity planning for databases and networks.
  - Assumptions/Dependencies: Representative simulation parameters; rough mapping of simulated rates to real devices.
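A what-if estimate of ingestion load from a JSON sensor configuration could look like this sketch. The configuration layout (`sensors`, `rate_hz`, `bytes_per_reading`) is invented for the example and should not be read as the demo's actual config format.

```python
import json

# Hypothetical configuration; the demo's real JSON layout may differ.
CONFIG = """
{"sensors": [
  {"id": "S-temp", "kind": "temperature", "rate_hz": 1, "bytes_per_reading": 32},
  {"id": "S-vib", "kind": "vibration", "rate_hz": 100, "bytes_per_reading": 32}
]}
"""


def ingestion_load(config_text):
    """Return (rows per second, bytes per second) summed over all sensors."""
    sensors = json.loads(config_text)["sensors"]
    rows_per_s = sum(s["rate_hz"] for s in sensors)
    bytes_per_s = sum(s["rate_hz"] * s["bytes_per_reading"] for s in sensors)
    return rows_per_s, bytes_per_s


rows_per_s, bytes_per_s = ingestion_load(CONFIG)
rows_per_day = rows_per_s * 86_400  # seconds per day
```

Editing a sensor's `rate_hz` or adding an entry and rerunning gives a first-order view of how a deployment change shifts database and network load.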

- Stakeholder workshops to demonstrate interoperability value
  - Sectors: Policy, Consortia, Executive Enablement
  - What: Use the demo to make vertical/horizontal integration tangible in standards or investment discussions; show quick wins from linked KPIs.
  - Tools/Workflows: Guided scenarios (supplier quality, workstation anomalies); before/after KPI comparisons.
  - Assumptions/Dependencies: Curated demo datasets; facilitator familiar with both OT and IT contexts.

Long-Term Applications

These applications extend the paper’s ideas toward production systems and broader ecosystems; they require additional research, scaling, or integration.

- Production-grade vertical integration platform
  - Sectors: Manufacturing Software, Industrial Platforms
  - What: Productize the sandbox into a robust stack with connectors to OPC UA/MTConnect, major ERPs, and streaming (Kafka) for scalable, secure, low-latency joins.
  - Potential Products: ERP–IoT integration middleware; time-synchronized data lakehouse layer.
  - Assumptions/Dependencies: Industrial connectors; security and OT compliance; exactly-once/ordering guarantees; high-availability infrastructure.

- Predictive maintenance and predictive quality using integrated data
  - Sectors: Manufacturing, Reliability Engineering
  - What: Train models linking sensor patterns and business outcomes (scrap, rework, downtime); move from descriptive to predictive/prescriptive analytics.
  - Potential Tools: Feature stores uniting ERP+IoT; model monitoring; early-warning systems.
  - Assumptions/Dependencies: Labeled failure/quality events; long-term historical data; MLOps; robust drift detection.

- Digital twin of production lines (business + sensor fidelity)
  - Sectors: Operations, Robotics/Automation
  - What: Build digital twins that mirror process state and economics (orders, WIP, throughput) with live sensor telemetry for what-if simulations and scenario planning.
  - Potential Products: Twin-driven planning and anomaly simulation; operator training simulators.
  - Assumptions/Dependencies: Accurate process models; deterministic mapping between physical and digital events; latency budgets.

- Closed-loop optimization (shop-floor control informed by ERP+IoT KPIs)
  - Sectors: Manufacturing, Control Systems
  - What: Automatically adjust schedules, speeds, or process parameters when KPIs (e.g., vibration spike) predict quality or downtime risks.
  - Potential Workflows: Supervisory control recommendations; human-in-the-loop approval pipelines.
  - Assumptions/Dependencies: Safe control integration; change management; formal verification and safety certification.

- Standardized data models and certification for interoperability
  - Sectors: Policy, Standards Bodies, Consortia
  - What: Define and certify schemas and metadata (IDs, units, timestamps, workplace events) for vertical/horizontal integration across vendors.
  - Potential Outputs: Open reference models; conformance tests; procurement guidelines mandating standards.
  - Assumptions/Dependencies: Multi-stakeholder consensus; governance processes; alignment with Asset Administration Shell/OPC UA information models.

- Supplier risk and contract optimization using sensor-derived quality signals
  - Sectors: Supply Chain, Procurement, Finance
  - What: Incorporate sensor-based defect/variability indicators into supplier scorecards, payment terms, and sourcing decisions.
  - Potential Tools: Risk-scoring services; dynamic contract clauses tied to quality KPIs.
  - Assumptions/Dependencies: Data-sharing agreements; bias and confounder control (machine vs. material effects); legal/commercial acceptance.

- Automated sensor configuration and data observability
  - Sectors: Data Engineering, OT/IT Operations
  - What: Use usage patterns and query feedback to recommend sensor placements/rates; auto-detect schema/unit drifts and timestamp misalignments.
  - Potential Products: Sensor config advisors; observability dashboards for ERP–IoT pipelines.
  - Assumptions/Dependencies: Telemetry on pipeline health; policy constraints (network, power); feedback loops into config management.
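One possible way to detect timestamp misalignments is to compare each sensor's event time with the time the pipeline ingested the reading and flag sensors whose typical lag is implausible. The sketch below assumes both timestamps are available in seconds; the record layout and the tolerance are hypothetical.

```python
from statistics import median


def skewed_sensors(records, tolerance_s):
    """Flag sensors whose median ingest lag exceeds the tolerance in magnitude.

    records: iterable of (sensor_id, event_ts, ingest_ts) with times in seconds.
    A strongly negative lag means the sensor clock runs ahead of the pipeline.
    """
    lags = {}
    for sensor_id, event_ts, ingest_ts in records:
        lags.setdefault(sensor_id, []).append(ingest_ts - event_ts)
    return sorted(s for s, values in lags.items() if abs(median(values)) > tolerance_s)


records = [
    ("S-1", 100.0, 100.2), ("S-1", 101.0, 101.3),  # small, plausible lag
    ("S-2", 100.0, 95.0), ("S-2", 101.0, 96.1),    # ingested "before" the event
]
flagged = skewed_sensors(records, tolerance_s=1.0)
```

Using the median rather than the mean keeps a single delayed batch from flagging an otherwise well-synchronized sensor.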

- Cross-site benchmarking and continuous improvement networks
  - Sectors: Manufacturing Networks, Consortia
  - What: Compare normalized KPIs across plants/suppliers to identify best practices and quantifiable improvement opportunities.
  - Potential Tools: Privacy-preserving benchmarking (e.g., federated analytics); league tables by process/workstation.
  - Assumptions/Dependencies: Data anonymization; harmonized metrics; trust frameworks and governance.
