- The paper introduces a novel nonlinear generative model that transforms traditional archetypal analysis using deep variational methods.

- It enforces interpretability by mapping data to fixed simplex corners with convex constraints and integrating side information.

- Empirical results on synthetic, facial, and chemical datasets demonstrate improved interpolation, sampling, and robust latent representation learning.

Deep Archetypal Analysis: A Nonlinear Generative Approach for Interpretable Representation Learning

Motivation and Limitations of Linear Archetypal Analysis

Archetypal Analysis (AA) is a convex factorization technique suited for data exhibiting mixtures of distinct populations or mechanisms, representing data points as convex combinations of a small number of extreme points (archetypes). While AA has yielded interpretability in domains such as evolutionary biology, imaging, and document analysis, its linear structure imposes several critical limitations: (i) inability to handle curved (nonlinear) manifolds, as archetypes can only be extremes in linear spaces; (ii) dependence on expert-driven preprocessing (dimension scaling, selection, and number of archetypes), with little ability to leverage external side information; (iii) lack of a generative model, precluding interpolation, sampling, and conditional generation. These constraints restrict AA's practical and theoretical utility in both unsupervised and supervised contexts.
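For reference, the linear AA problem described above is commonly written as the following constrained factorization (the standard formulation of Cutler and Breiman). The symbols X (an n × p data matrix), n, p, and k (the number of archetypes) are notation introduced here for illustration and do not appear in the text above.

```latex
\min_{A,\,B}\; \lVert X - A B X \rVert_F^2
\quad \text{s.t.} \quad
a_{ij} \ge 0,\ \ \sum_{j=1}^{k} a_{ij} = 1,
\qquad
b_{ji} \ge 0,\ \ \sum_{i=1}^{n} b_{ji} = 1,
\qquad
A \in \mathbb{R}^{n \times k},\ B \in \mathbb{R}^{k \times n}.
```

The rows of Z = BX are the archetypes (convex mixtures of data points), and each row of A holds the convex weights that reconstruct one data point from those archetypes. Both maps are linear, which is why the archetypes can only be extremes of a linear subspace.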

Methodology: Deep Archetypal Analysis

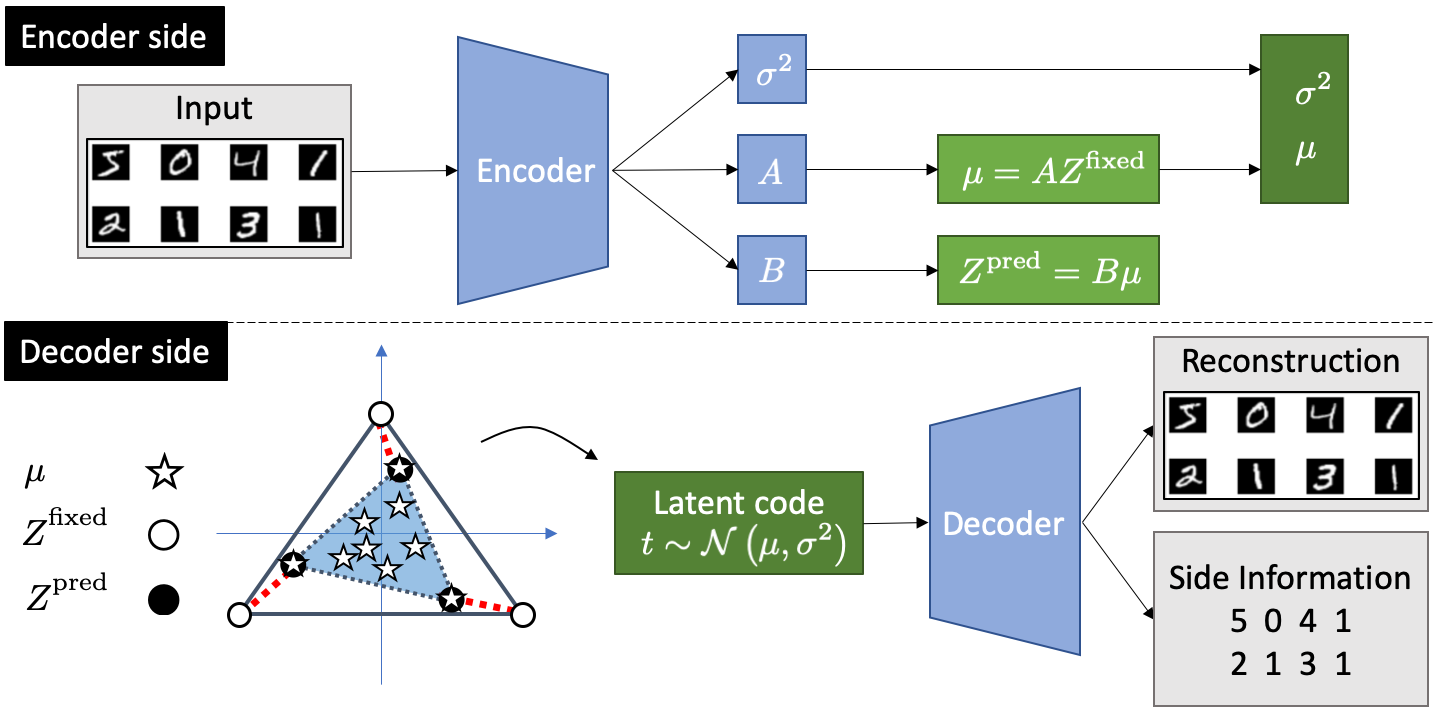

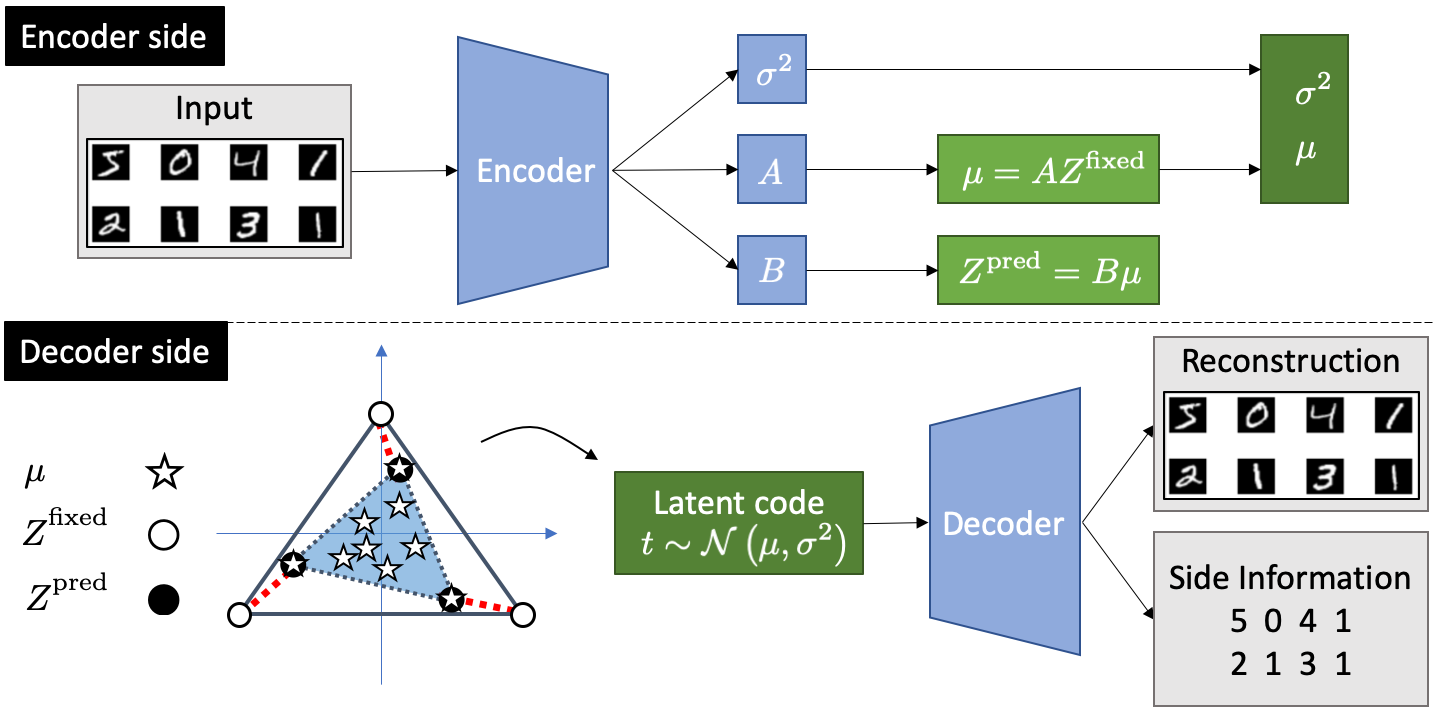

The proposed Deep Archetypal Analysis (DeepAA) recasts archetypal analysis as a nonlinear, variational, generative latent variable model. It builds on the Deep Variational Information Bottleneck (DVIB), which links it to VAEs and lets it incorporate side information by construction. DeepAA defines a latent variable T in which each data point is modeled as a convex combination of archetypes. A neural encoder parameterizes the mixture coefficients and the archetypes stochastically (a_ij ∼ Dirichlet; the archetypes are themselves convex mixtures of data points). Archetypes are placed at fixed vertices of a simplex in latent space, and the archetype loss ℓAT encourages the predicted archetype representations to match these simplex corners. Softmax layers enforce non-negativity and row-stochasticity on the mixture matrices A and B, which guarantees convex combinations.
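The mechanics of this paragraph can be sketched in NumPy. This is a minimal illustration, not the authors' implementation: the function names, the regular-simplex construction, and the specific squared-error form of the archetype loss (predicted archetypes computed as B·A·Z_fixed) are assumptions made for the example, and random logits stand in for the output of a neural encoder.

```python
import numpy as np

def row_softmax(logits):
    """Map each row of `logits` to non-negative weights that sum to 1."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def simplex_vertices(k):
    """Return k vertices of a regular simplex in R^(k-1), centered at 0.

    Assumed construction: center the standard basis of R^k, then express
    the points in an orthonormal basis of the (k-1)-dim subspace they span.
    """
    eye = np.eye(k)
    centered = eye - eye.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, : k - 1] * s[: k - 1]

def archetype_loss(a_logits, b_logits, z_fixed):
    """Squared distance between fixed and predicted archetype positions.

    a_logits: (n, k) encoder outputs for the mixture weights A.
    b_logits: (k, n) encoder outputs for the archetype weights B.
    Returns the loss and the latent codes T = A @ z_fixed.
    """
    a = row_softmax(a_logits)   # (n, k), rows are convex weights
    b = row_softmax(b_logits)   # (k, n), rows are convex weights
    latent = a @ z_fixed        # each point is a convex mix of the corners
    z_pred = b @ latent         # predicted archetypes, convex mixes of points
    return float(np.sum((z_fixed - z_pred) ** 2)), latent

# Hypothetical stand-in for encoder outputs: random logits for 100 points.
rng = np.random.default_rng(0)
z_fixed = simplex_vertices(3)
loss, latent = archetype_loss(
    rng.normal(size=(100, 3)), rng.normal(size=(3, 100)), z_fixed
)
```

Because every row of A is a convex weight vector, each latent code lies inside the simplex spanned by the fixed corners. The loss only reaches zero when some convex mixtures of data points land exactly on those corners.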

Figure 1: Illustration of the DeepAA model architecture enforcing archetype structure via learned weight matrices and archetype loss in the latent space.

The DeepAA objective integrates mutual information and reconstruction terms (via DVIB), regularized by ℓAT, enabling data-driven representation learning, interpretability over nonlinear manifolds, and conditioning on side information Y.
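One plausible way to write such an objective, assuming the usual information bottleneck form, is shown below. The trade-off weight λ and the parameter symbols φ (encoder) and θ (decoder) are assumptions introduced for illustration. The text above only states that mutual information and reconstruction terms are combined with ℓAT.

```latex
\min_{\phi,\,\theta}\;\; I_{\phi}(X; T) \;-\; \lambda\, I_{\phi,\theta}(T; Y) \;+\; \ell_{AT}
```

Here the first term compresses the input X into the latent variable T, the second term rewards latent codes that remain predictive of the side information Y (playing the role of a reconstruction or prediction term), and ℓAT pulls the predicted archetypes toward the fixed simplex corners.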

Empirical Analysis: Artificial and Real-World Data

Artificial Data: Nonlinear Manifolds

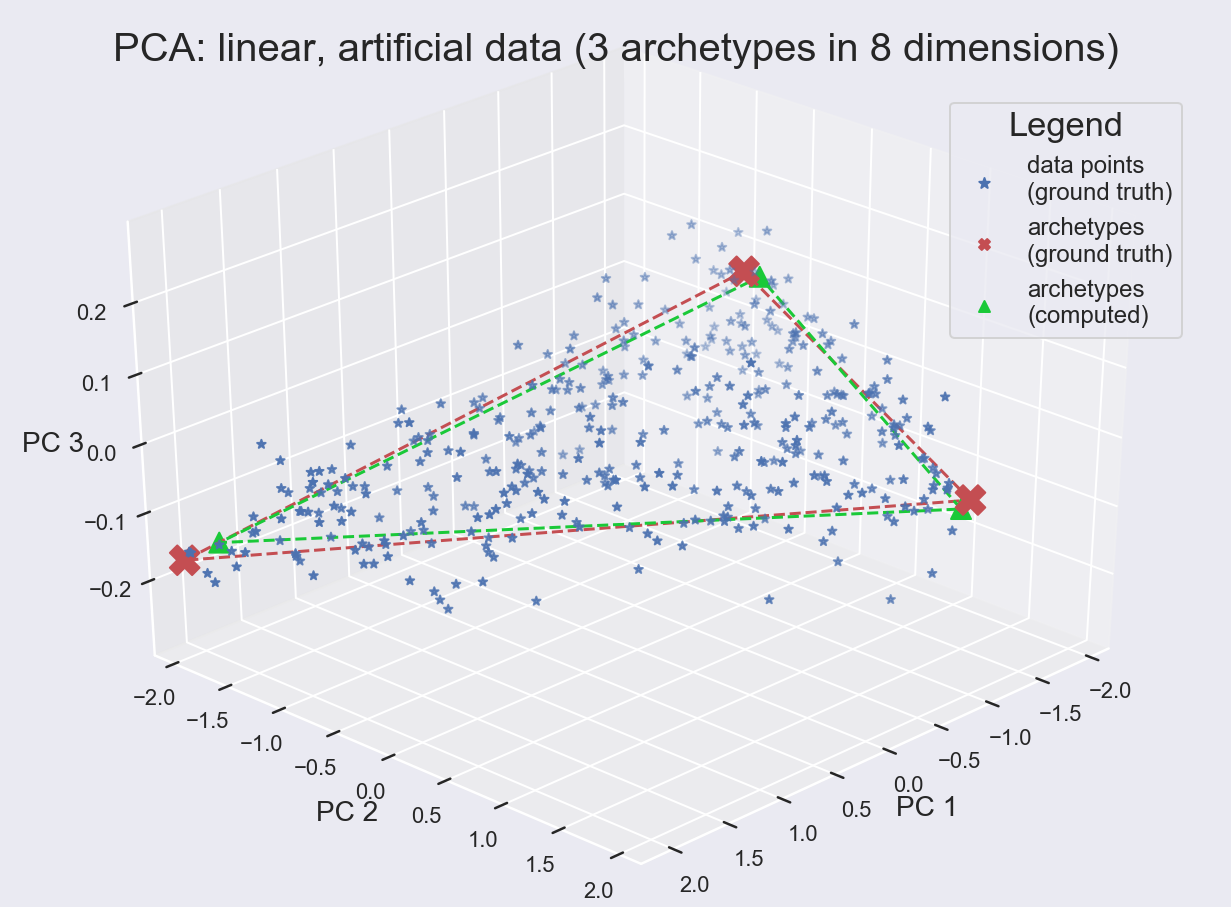

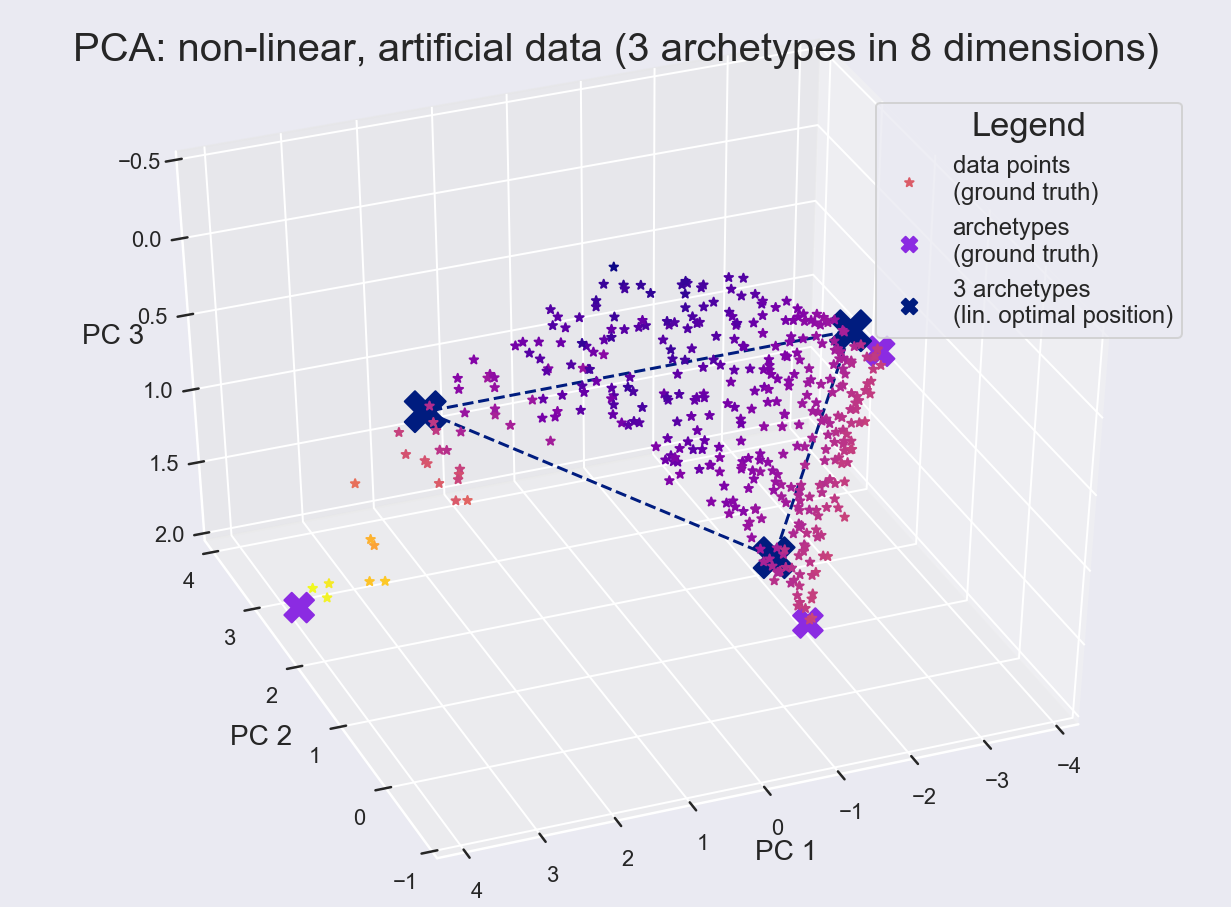

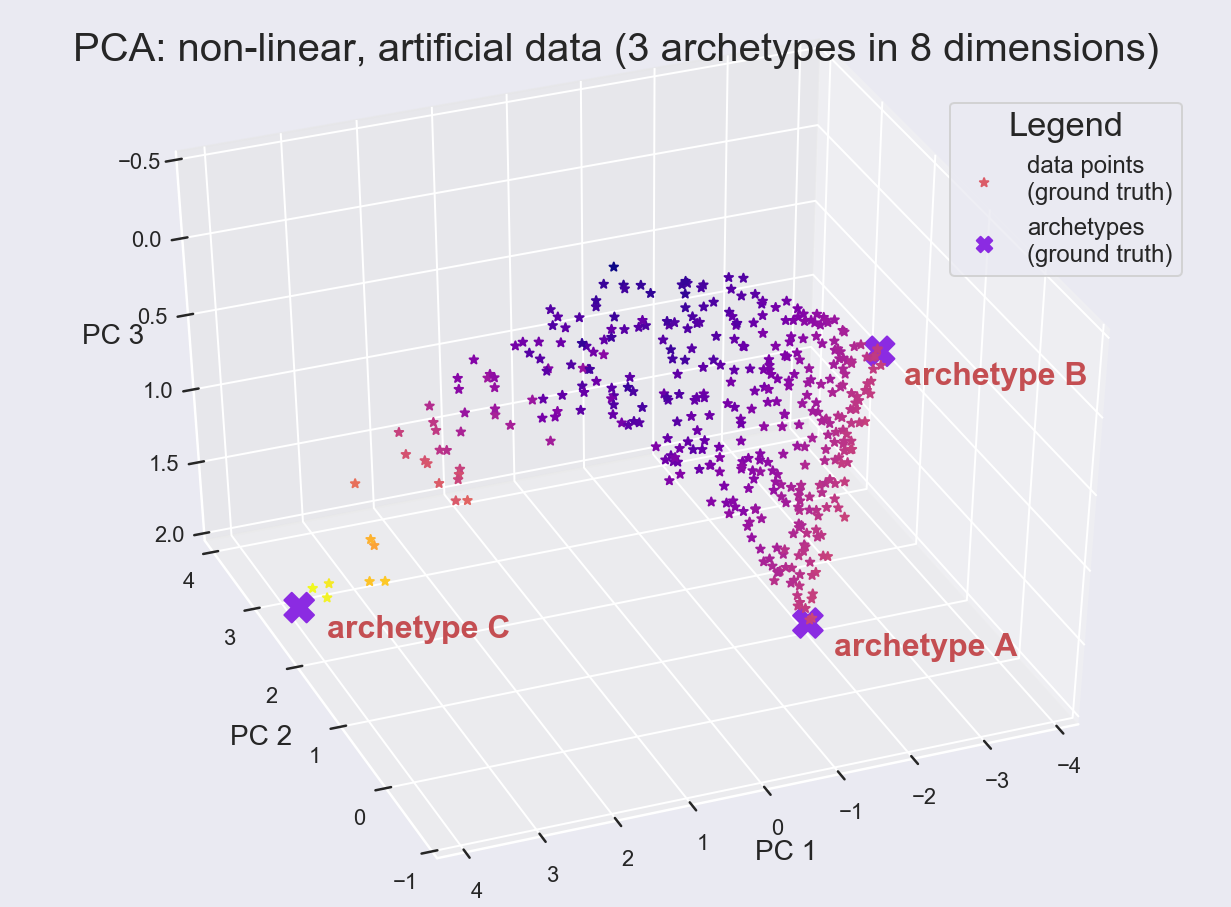

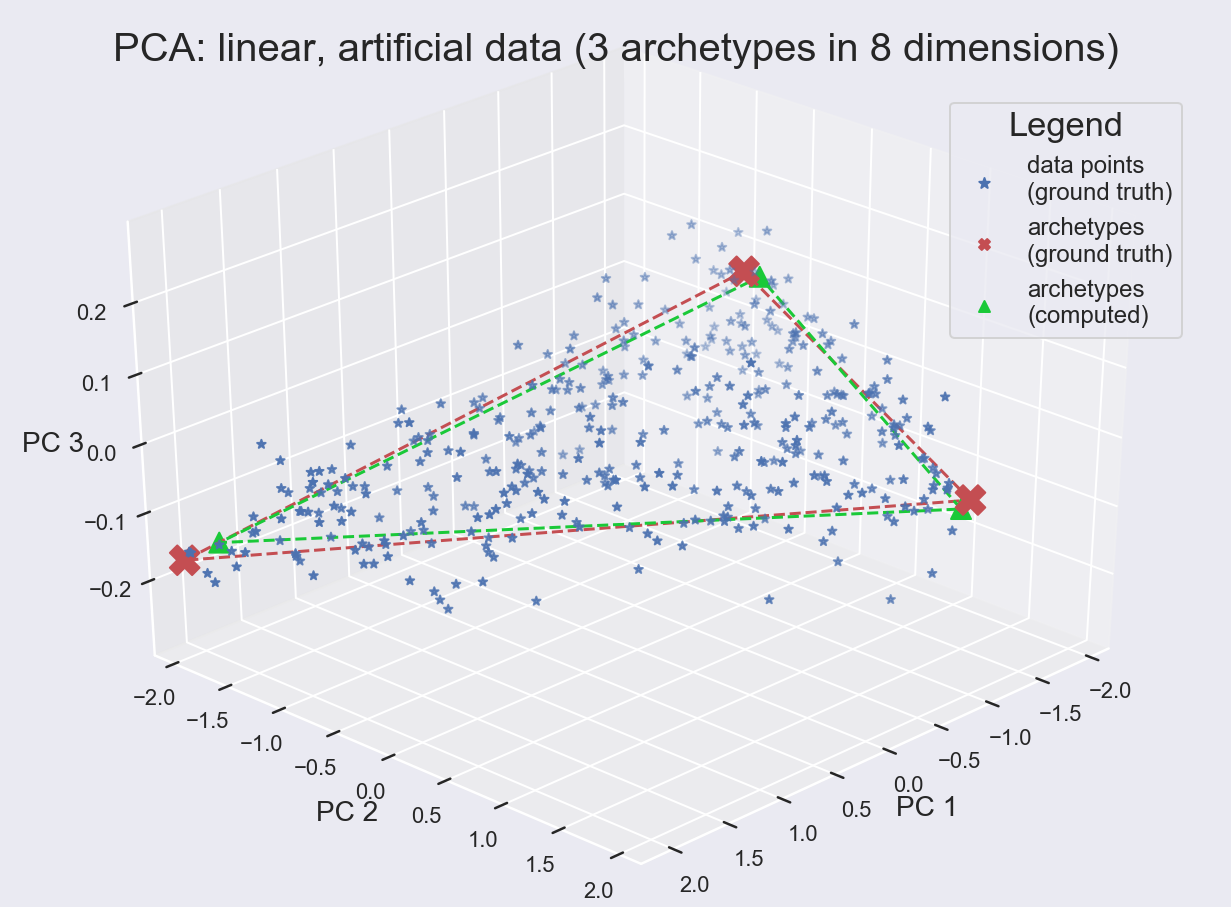

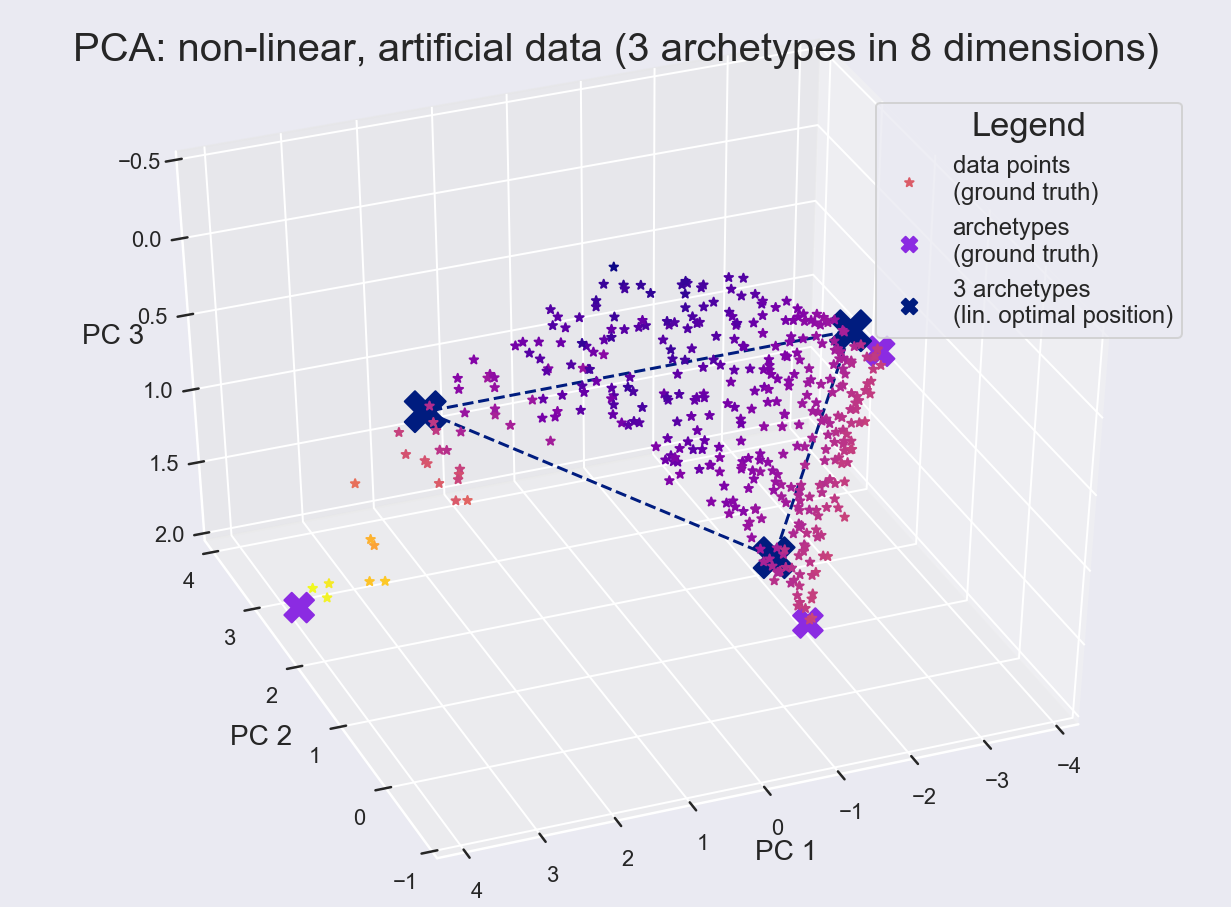

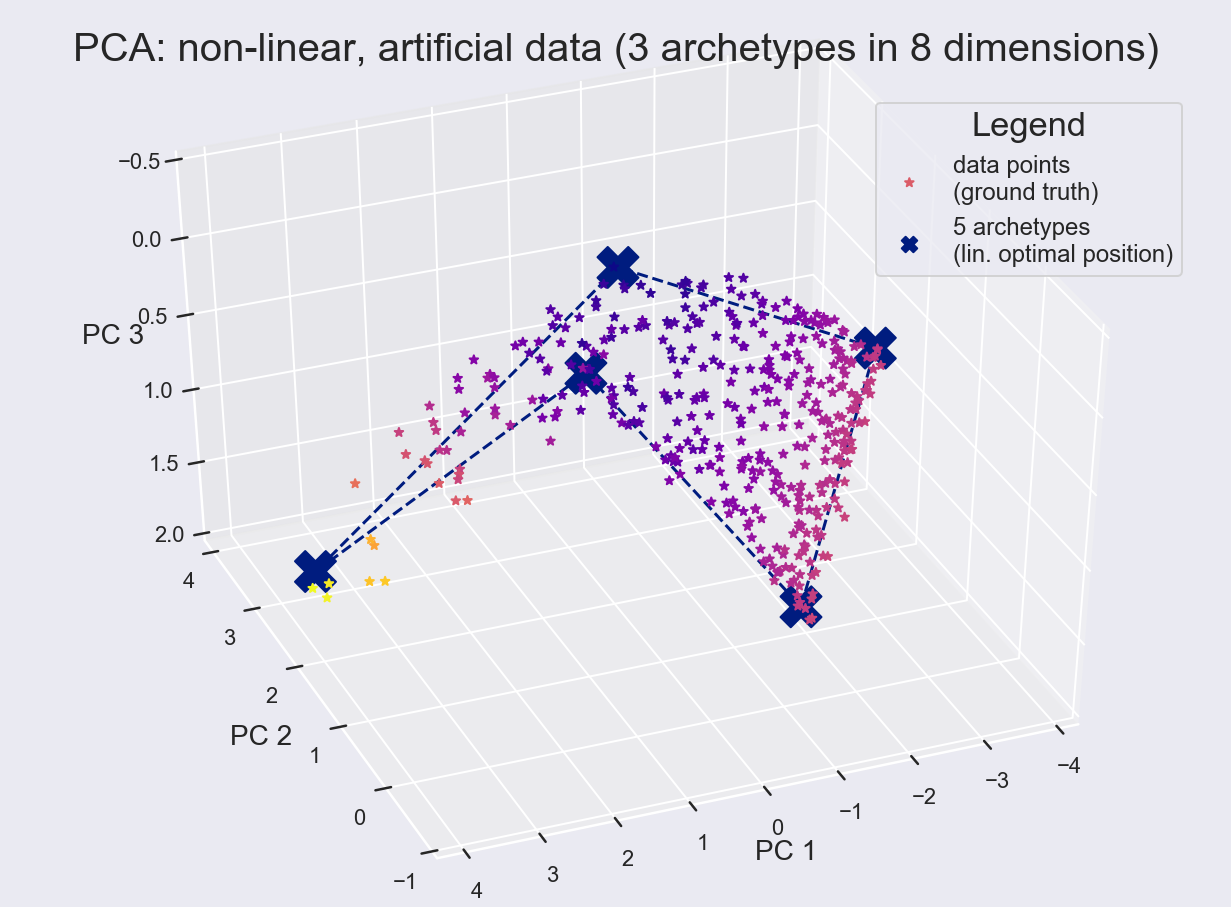

Experiments on synthetic datasets show that linear AA fails to recover the true archetypes when the data lie on a nonlinear manifold. For example, an exponential transformation induces curvature, so linear AA needs more archetypes to reach similar accuracy and its archetypes lose their interpretation as extremes.
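A small sketch of how such synthetic data could be generated follows. It is an assumed setup rather than the paper's exact protocol: the three archetypes, the uniform Dirichlet weights, the sample size, and the function name `make_archetypal_data` are all hypothetical choices for illustration.

```python
import numpy as np

def make_archetypal_data(n_points, archetypes, rng, nonlinear=False):
    """Sample convex combinations of `archetypes`, optionally warped by exp."""
    k = archetypes.shape[0]
    # Each row of `weights` is non-negative and sums to 1.
    weights = rng.dirichlet(np.ones(k), size=n_points)
    data = weights @ archetypes
    if nonlinear:
        # Elementwise exponential bends the flat simplex into a curved surface.
        data = np.exp(data)
    return data, weights

rng = np.random.default_rng(0)
# Hypothetical archetypes: the three standard basis vectors of R^3.
archetypes = np.eye(3)
linear_data, linear_weights = make_archetypal_data(500, archetypes, rng)
curved_data, _ = make_archetypal_data(500, archetypes, rng, nonlinear=True)

# Linear data stay on the plane x + y + z = 1; curved data do not lie on
# any such plane, so three linear archetypes can no longer span them.
linear_spread = linear_data.sum(axis=1).max() - linear_data.sum(axis=1).min()
curved_spread = curved_data.sum(axis=1).max() - curved_data.sum(axis=1).min()
```

Comparing `linear_spread` with `curved_spread` gives a quick check that the transformed points have left the flat simplex, which is the setting in which linear AA needs additional archetypes.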

Figure 2: PCA projection after linear AA; archetypes identified as extremes for linear submanifolds.

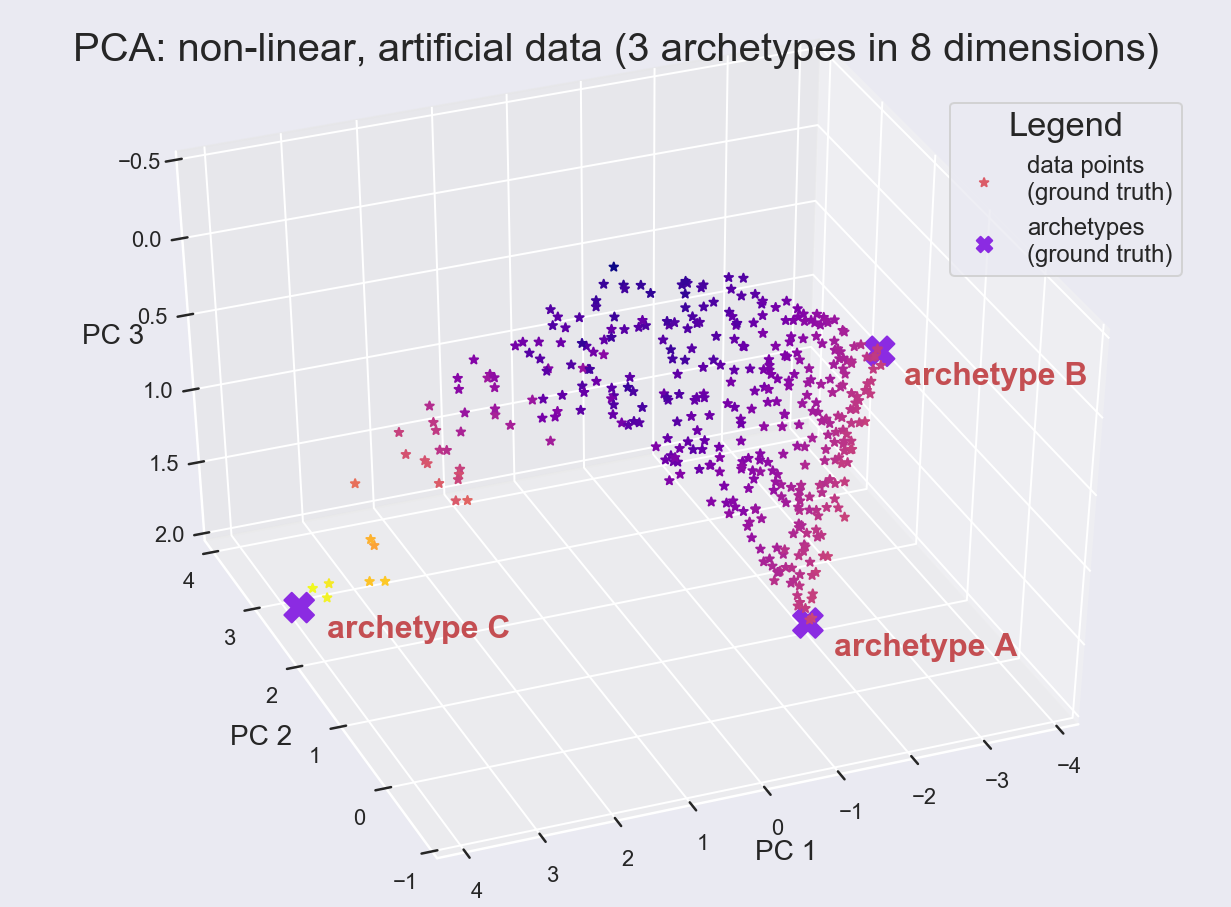

Figure 3: Archetypal analysis on nonlinear data; linear AA fails to select meaningful extremes.

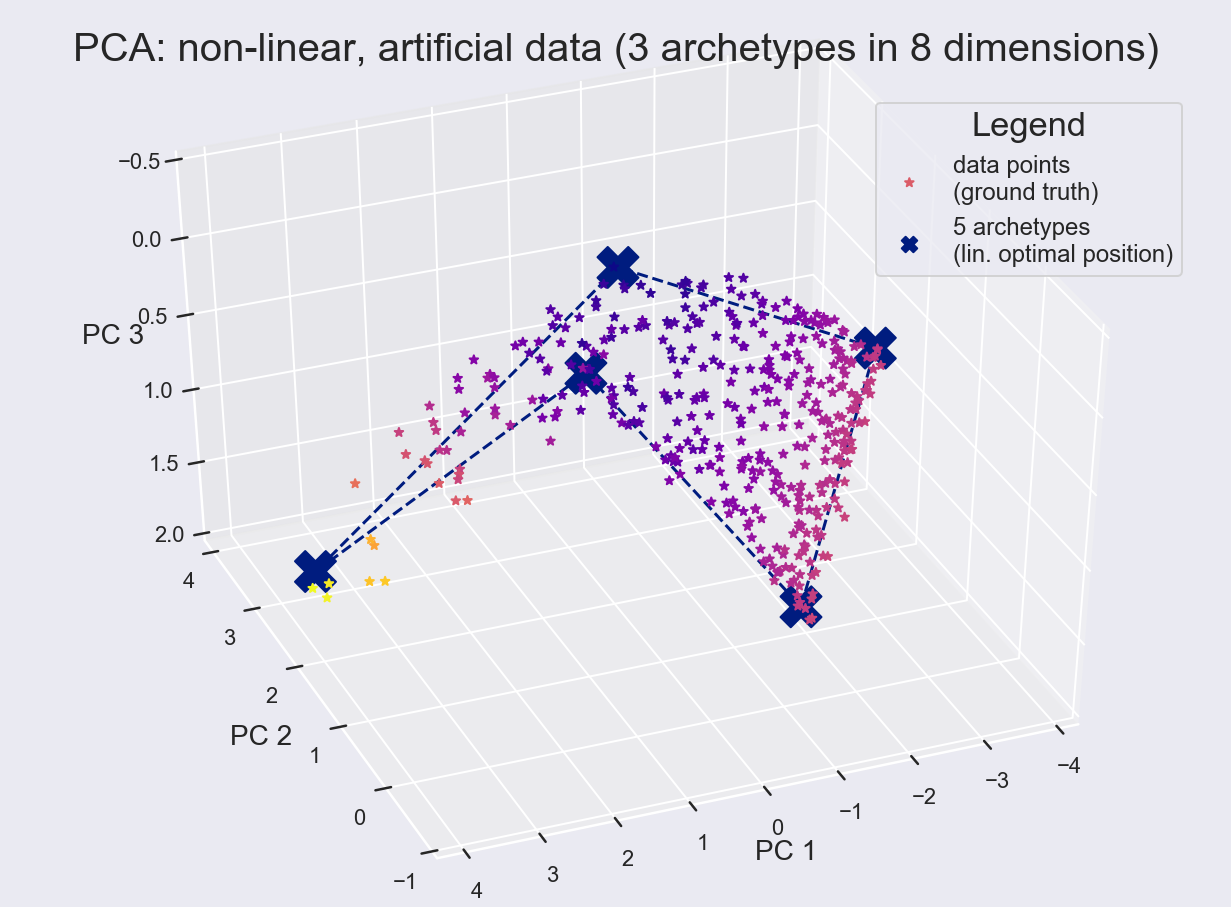

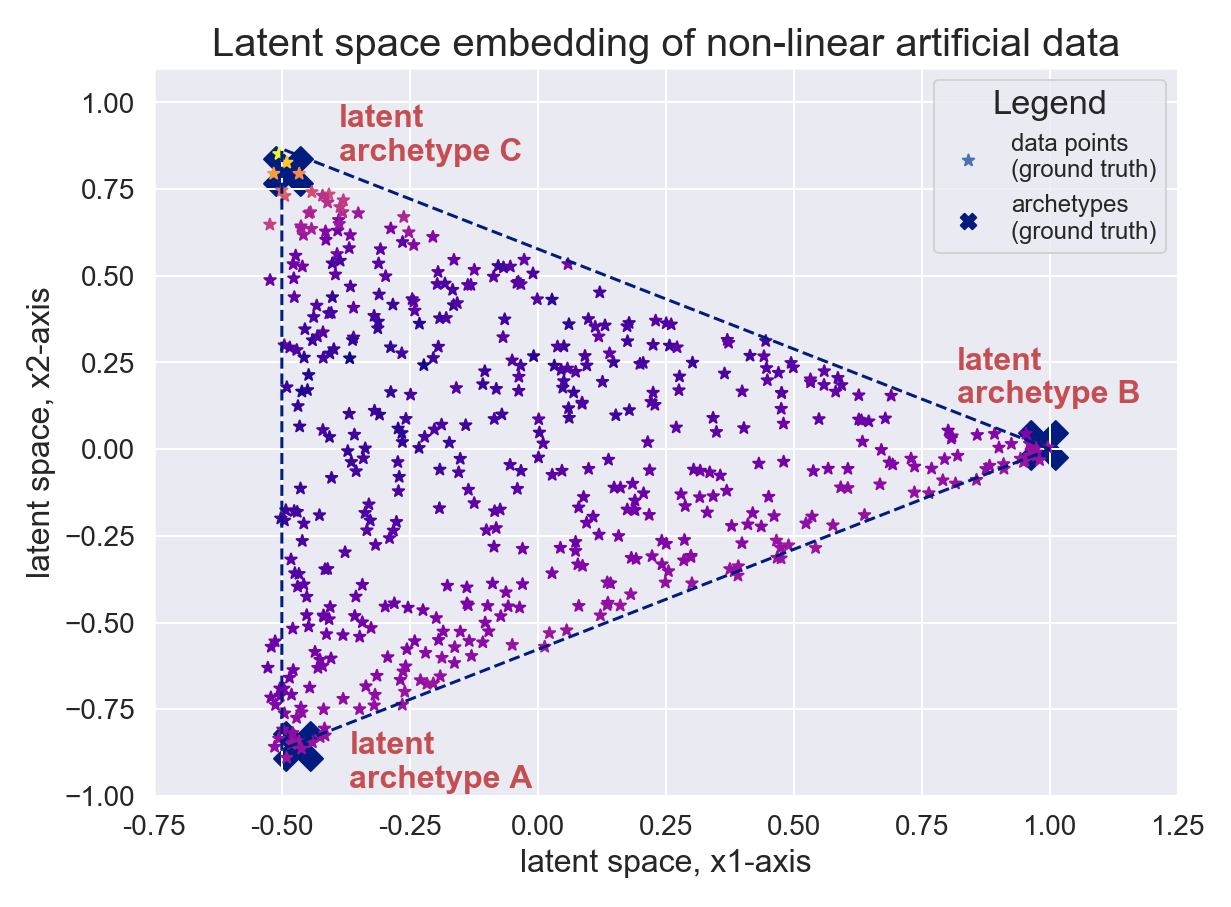

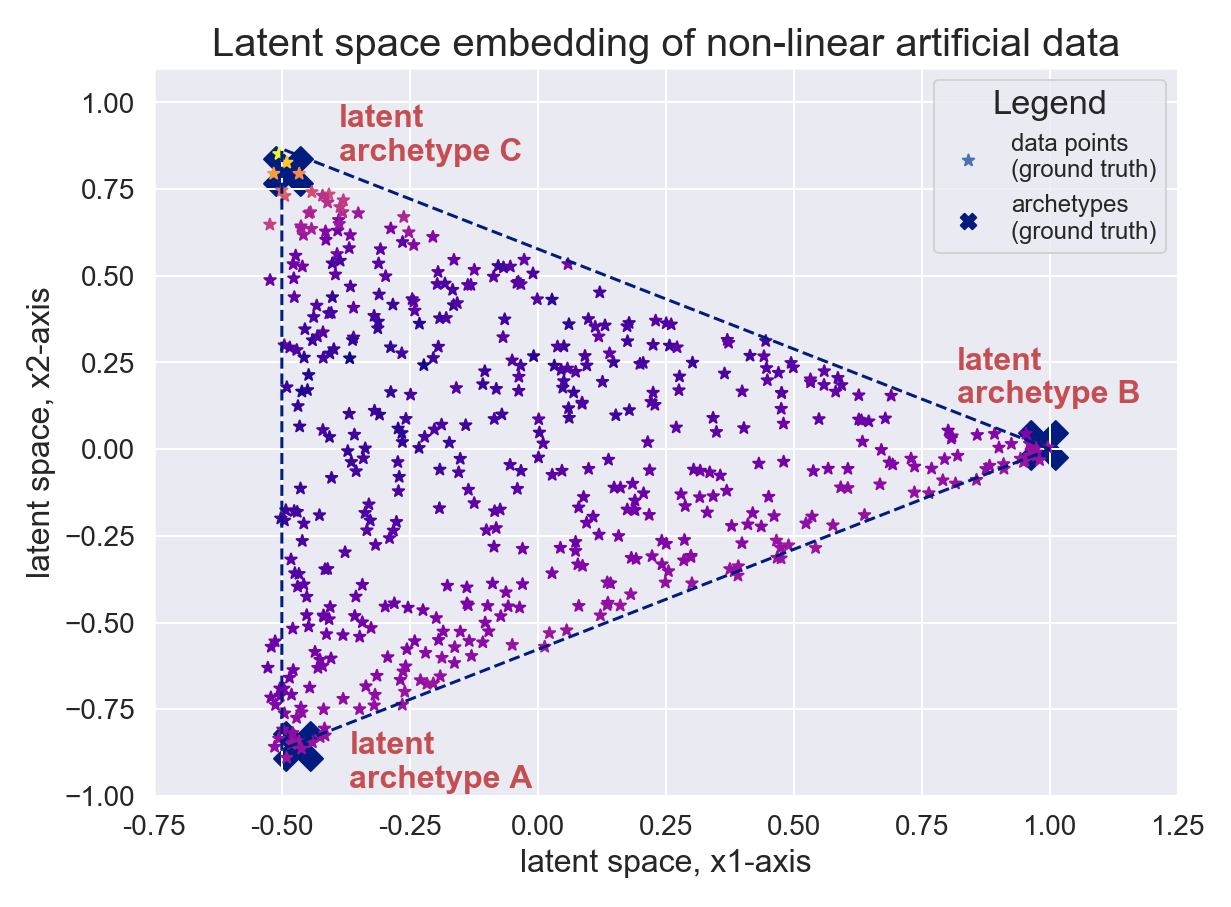

In contrast, DeepAA with a 2D latent space and a prior on the archetype count successfully maps nonlinear data to simplex corners, preserving both archetype interpretability and latent space geometric structure.

Figure 4: Principal components of nonlinear data; three archetypes are appropriately identified by DeepAA.

Generative Aspects and Model Selection

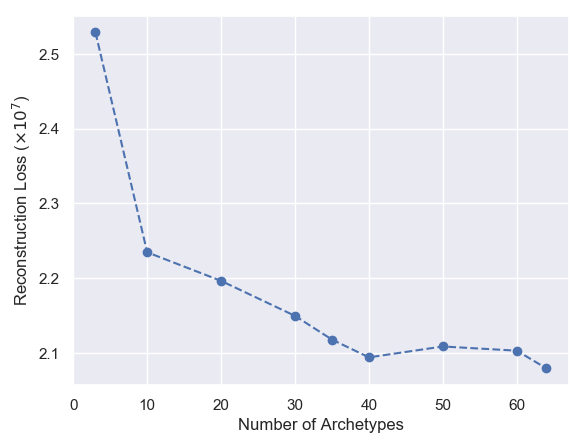

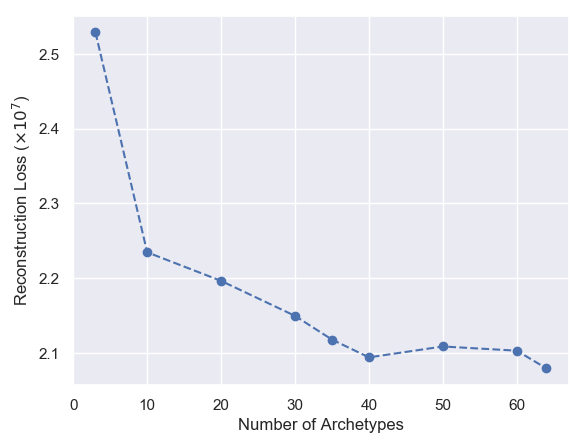

DeepAA is generative: specifying a convex mixture in latent space and decoding it allows interpolation and conditional sample generation. On the CelebA face dataset, model selection via the reconstruction loss and the elbow method identifies a suitable number of archetypes, and interpolating toward an archetype in latent space strengthens that archetype's features in the generated faces.
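Interpolation toward an archetype can be sketched as moving a point's convex weights linearly toward a one-hot vector. This is an illustrative sketch only: the function name, the example weights, and the triangle used as latent corners are hypothetical, and the decoder that would turn latent codes into images is omitted.

```python
import numpy as np

def interpolate_towards_archetype(weights, archetype_index, steps):
    """Return `steps` convex weight vectors from `weights` to a one-hot target."""
    weights = np.asarray(weights, dtype=float)
    target = np.zeros_like(weights)
    target[archetype_index] = 1.0
    alphas = np.linspace(0.0, 1.0, steps)[:, None]
    # A convex combination of two convex weight vectors is again convex,
    # so every row stays non-negative and sums to 1.
    return (1.0 - alphas) * weights + alphas * target

# Hypothetical latent corners (a triangle in 2D) and starting mixture.
corners = np.array([[0.0, 1.0], [-1.0, -1.0], [1.0, -1.0]])
start = [0.5, 0.3, 0.2]
path = interpolate_towards_archetype(start, archetype_index=0, steps=5)
latent_path = path @ corners  # latent codes that a decoder would map to samples
```

Each row of `latent_path` would be passed through the trained decoder to produce one frame of the interpolation. The last row coincides with the chosen archetype's corner.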

Figure 5: Interpolation in latent space towards an archetype, reinforcing archetypal features of faces.

Figure 6: CelebA reconstruction loss vs. number of archetypes, showing elbow for optimal selection.
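The elbow criterion can be automated with a simple heuristic: pick the archetype count whose point on the loss curve lies farthest from the straight line joining the first and last points. This is one common heuristic, not necessarily the procedure used in the paper, and the loss values below are made-up placeholders, not results from CelebA.

```python
import numpy as np

def elbow_index(counts, losses):
    """Index of the point farthest from the chord joining the curve's ends."""
    x = np.asarray(counts, dtype=float)
    y = np.asarray(losses, dtype=float)
    # Rescale both axes to [0, 1] so the distance is not dominated by units.
    x = (x - x[0]) / (x[-1] - x[0])
    y = (y - y.min()) / (y.max() - y.min())
    x0, y0, x1, y1 = x[0], y[0], x[-1], y[-1]
    # Perpendicular distance of each point to the line through the end points.
    numerator = np.abs((y1 - y0) * x - (x1 - x0) * y + x1 * y0 - y1 * x0)
    distance = numerator / np.hypot(x1 - x0, y1 - y0)
    return int(np.argmax(distance))

# Hypothetical reconstruction losses for 2..8 archetypes (illustration only).
archetype_counts = [2, 3, 4, 5, 6, 7, 8]
example_losses = [10.0, 6.0, 3.5, 3.2, 3.0, 2.9, 2.85]
best_count = archetype_counts[elbow_index(archetype_counts, example_losses)]
```

For these placeholder values the heuristic selects four archetypes, the point after which additional archetypes reduce the loss only marginally.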

Supervised Exploration of Chemical Space

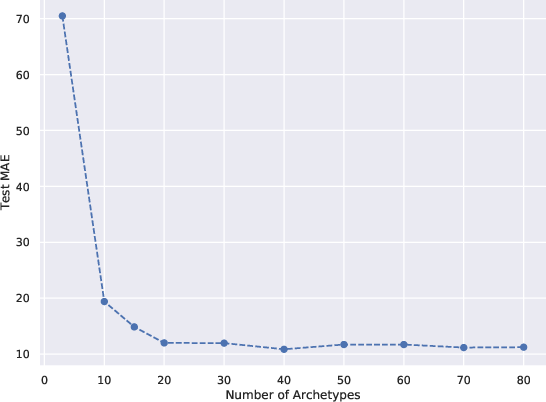

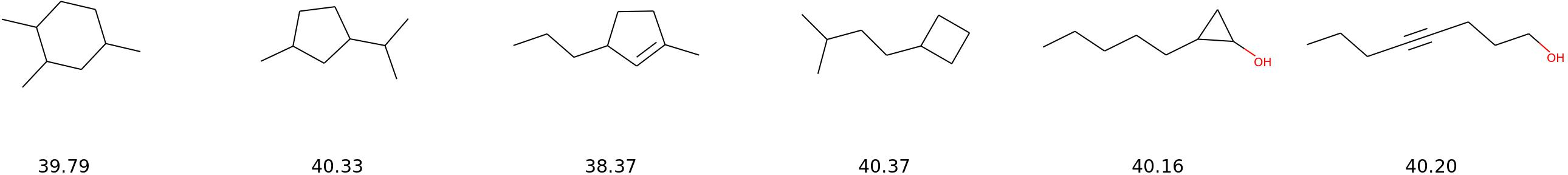

DeepAA is particularly compelling in chemical property modeling, where it addresses the many-to-one mapping of molecules to properties by learning archetypes that encode distinctive structural and property extremes. Using the QM9 dataset (134k organic molecules), molecular features and side information (heat capacity, band gap energy) are integrated into the DVIB framework to learn supervised latent representations.

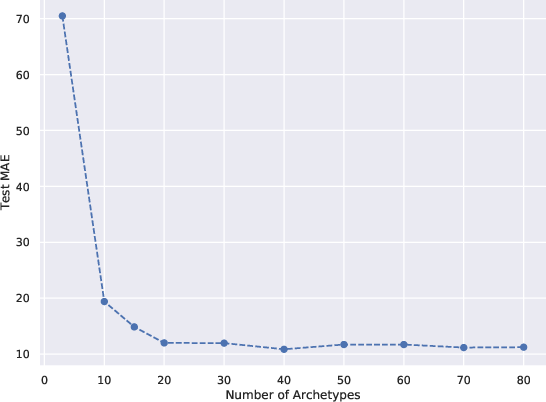

Model selection via MAE convergence on test data demonstrates the trade-off between archetype count and reconstruction fidelity.

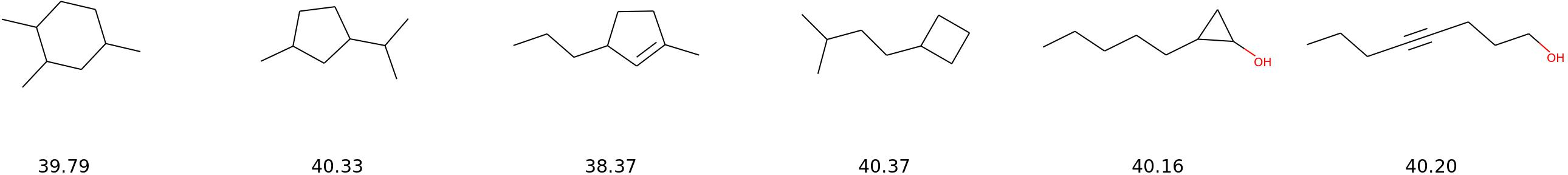

Figure 7: Interpolation between two archetypes in chemical space, showing heat capacity transition.

Archetype analysis exposes structure-property relationships. Examples include long-chain versus short-chain archetypes, geometrically different molecules that converge on identical heat capacities, and latent space interpolations that reveal continuous transitions in molecular shape and property while respecting physical constraints.

Implications and Extensions

DeepAA addresses three prevailing issues in AA: nonlinear manifold modeling, removal of expert-driven preprocessing, and integration of rich side information for regularization and interpretability. The framework enables generative sampling, interpolation, and interpretable latent explorations, making it suitable for tasks beyond unsupervised clustering, including conditional generation, supervised property optimization, and multi-modal exploration. Chemical space experiments highlight practical potential for de novo molecular design, interpretable feature discovery, and property-constrained search in material science.

Theoretically, DeepAA suggests possible extensions toward causal latent bottlenecks, multi-modal side information, and domain-specific archetype discovery (e.g., in genomics, materials). Future developments may focus on hierarchical archetypal structures, sparse convex modeling, scalability in high-dimensional spaces, and integration with symbolic or physics-informed priors for increased interpretability and validity.

Conclusion

Deep Archetypal Analysis provides a principled, nonlinear, generative approach for learning interpretable latent representations in datasets governed by mixed populations or mechanisms. It enables accurate modeling of data manifolds, robust integration of side information, and generative exploration of extremes and interpolations. Empirical results demonstrate effectiveness in modeling synthetic, facial, and chemical data, with implications for both practical applications and theoretical advances in latent variable modeling and interpretable AI.