Results from a search for dark matter in the complete LUX exposure

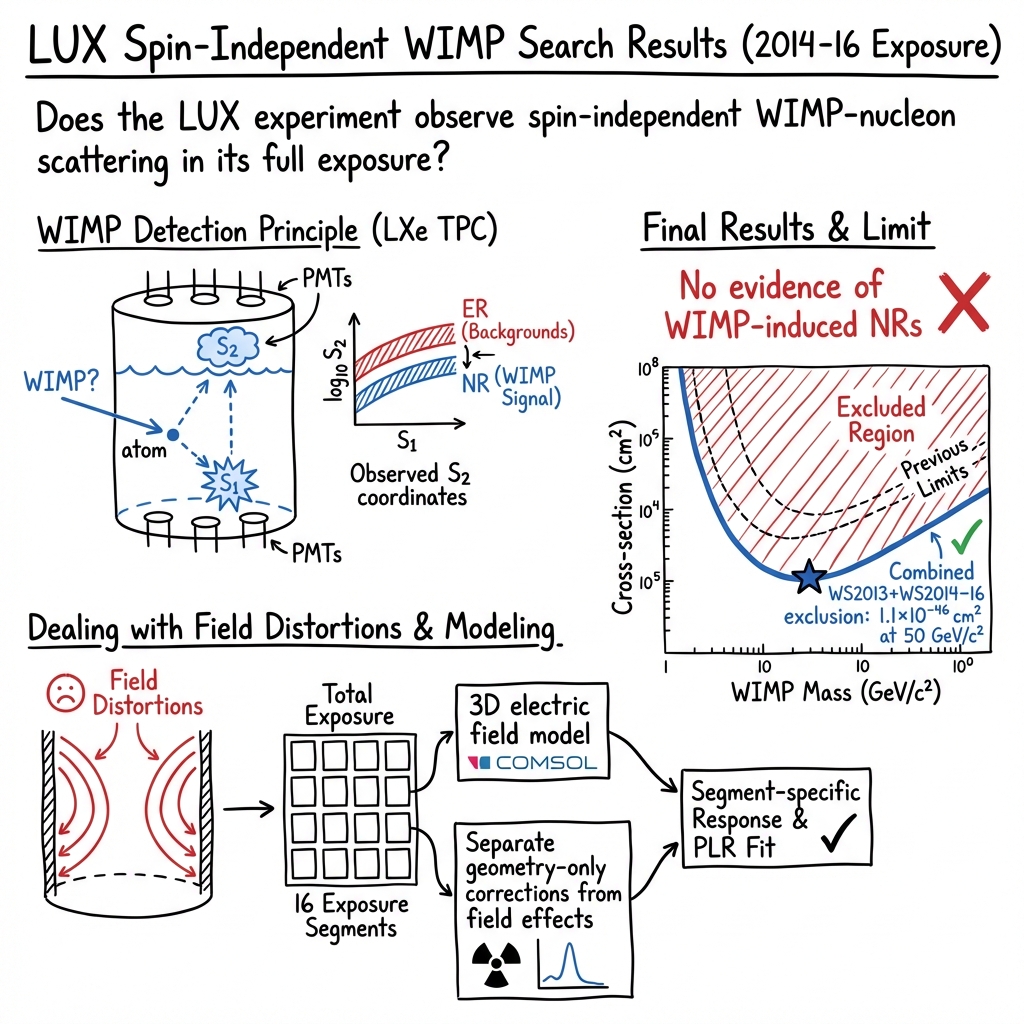

Abstract: We report constraints on spin-independent weakly interacting massive particle (WIMP)-nucleon scattering using a 3.35 × 10⁴ kg-day exposure of the Large Underground Xenon (LUX) experiment. A dual-phase xenon time projection chamber with 250 kg of active mass is operated at the Sanford Underground Research Facility under Lead, South Dakota (USA). With roughly fourfold improvement in sensitivity for high WIMP masses relative to our previous results, this search yields no evidence of WIMP nuclear recoils. At a WIMP mass of 50 GeV/c², WIMP-nucleon spin-independent cross sections above 2.2 × 10⁻⁴⁶ cm² are excluded at the 90% confidence level. When combined with the previously reported LUX exposure, this exclusion strengthens to 1.1 × 10⁻⁴⁶ cm² at 50 GeV/c².

Explain it Like I'm 14

What this paper is about

This paper reports the final results from LUX, a highly sensitive experiment that tried to directly detect dark matter, specifically a popular candidate called a WIMP (Weakly Interacting Massive Particle). The team looked for the tiny “bumps” that WIMPs might make when they hit xenon atoms inside the detector. They didn’t find any signs of WIMPs, but they set some of the strongest limits (at the time) on how often WIMPs could collide with matter.

The main questions the researchers asked

- Can we see evidence that dark matter particles (WIMPs) bump into xenon atoms?

- If not, how tightly can we limit how often such bumps could happen?

- How can we separate real dark-matter-like signals from ordinary background signals?

- How do we deal with quirks in the detector so our measurements are trustworthy?

How the experiment worked (in everyday terms)

Think of LUX as a super-quiet, 3D “camera” sitting deep underground, looking for extremely faint, rare sparks inside a tank of ultra-clean liquid xenon.

- The detector: It’s a dual-phase (liquid-and-gas) xenon time projection chamber (TPC). When a particle hits a xenon atom, two things can happen: 1) A quick burst of light (called S1), like a flash. 2) Some electrons get knocked free. These drift upward in an electric field, pop into the gas layer, and create a second burst of light (S2), slightly later.

By measuring S1 and S2 with sensitive light sensors above and below, the team can tell: - How much energy was deposited (from the amounts of S1 and S2). - Where the hit happened in 3D (from the timing and pattern of the light).

- Separating signal from background: Ordinary particles (like gammas and betas) tend to make “electronic recoils,” while WIMPs would make “nuclear recoils.” The ratio of S2 to S1 is different for these two cases, so the team can tell them apart statistically.

- Shielding and cleanliness: The detector sits deep underground in South Dakota, inside a big water tank. The overlying rock and water act like thick blankets, blocking cosmic rays and other noise. The xenon is purified to remove trace contaminants that could mimic signals.

- Calibrations (practice shots): They frequently “taught” the detector how to respond using known sources:

- Krypton-83m (uniform, known-energy flashes) to map out position effects.

- Tritium (very low-energy beta particles) to check low-energy response.

- A special neutron source to mimic the kind of recoils a WIMP would cause.

- Fixing a detector quirk: After a “conditioning” campaign on the high-voltage grids (done to pull electrons out of the liquid more efficiently), static charge built up on the white plastic walls (PTFE). This bent the paths of drifting electrons, so the positions of events looked shifted. The team built a detailed 3D electric field model (using the COMSOL simulation tool and regular krypton calibrations) to “un-warp” the positions. They also split the data into 16 segments (by time and depth) and used a physics model (NEST) to capture how light and charge yields changed with the local electric field, keeping the detector response model accurate.

- Background modeling and fair testing: They modeled three main backgrounds—ordinary gamma/beta events, events near the wall that can lose charge and “leak” inwards, and random pairs of unconnected S1 and S2 pulses that accidentally line up. To avoid bias, they “blinded” the analysis by sprinkling in fake WIMP-like events (“salt”) whose details were hidden from the analyzers until all cuts and methods were finalized.
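The S1/S2 bookkeeping described in the list above can be sketched in a few lines. This is a minimal illustration, not the collaboration's code: it assumes the commonly used combined-energy relation E = W (S1/g1 + S2/g2) with W ≈ 13.7 eV per quantum in liquid xenon, and the gain values `g1` and `g2` are placeholder numbers chosen for round arithmetic, not values taken from the paper.

```python
import math

# Assumed average energy per quantum (photon or electron) in liquid xenon, in keV.
W_KEV = 13.7e-3


def combined_energy_kev(s1_phd, s2_phd, g1=0.10, g2=19.0):
    """Estimate deposited energy from both signals.

    g1 (detected photons per emitted photon) and g2 (detected photons per
    extracted electron) are hypothetical placeholder gains.
    """
    n_photons = s1_phd / g1      # scintillation photons produced
    n_electrons = s2_phd / g2    # ionization electrons produced
    return W_KEV * (n_photons + n_electrons)


def discriminant(s1_phd, s2_phd):
    """log10(S2/S1): tends to be lower for nuclear recoils than for electronic recoils."""
    return math.log10(s2_phd / s1_phd)


energy = combined_energy_kev(s1_phd=10.0, s2_phd=1900.0)
ratio = discriminant(s1_phd=10.0, s2_phd=1000.0)
print(f"energy ~ {energy:.2f} keV, log10(S2/S1) = {ratio:.1f}")
```

The energy estimate uses the sum of both channels, while the discrimination variable uses their ratio, which is why the same two measurements can serve both purposes.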

In total, they collected 332 live days of new data (2014–2016) plus an earlier 95-day run (2013), amounting to a very large “exposure” (mass × time) to potential dark matter events.
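As a rough cross-check of what “exposure” means, the quoted numbers can be divided out. The sketch below assumes that the 3.35 × 10⁴ kg-day figure from the abstract corresponds to the 332 live days of new data, and that exposure is simply target mass times live time. The resulting mass is an implied time average, not a number quoted in this summary.

```python
# Exposure = (mass of xenon actually used in the search) x (live time).
exposure_kg_day = 3.35e4   # from the abstract
live_days = 332            # new data, 2014-2016

# Implied average mass of xenon contributing to the search, in kg.
implied_mean_mass_kg = exposure_kg_day / live_days
print(f"implied average search mass ~ {implied_mean_mass_kg:.0f} kg")
```

The implied mass is well below the 250 kg of active xenon, consistent with the search using only an inner region of the detector away from the walls.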

What they found and why it matters

- No WIMPs detected: After carefully accounting for backgrounds and detector effects, the data showed no excess of nuclear recoils that would point to dark matter.

- Strong new limits: Not finding WIMPs lets them say, “If WIMPs exist with a mass around 50 GeV, they collide with normal matter even less often than X.” Concretely, they ruled out spin‑independent WIMP–nucleon collision rates (cross sections) above about 2.2 × 10⁻⁴⁶ cm² for the new data alone, and 1.1 × 10⁻⁴⁶ cm² when combined with the earlier run. These were among the world’s strongest constraints at the time.

- Better sensitivity: Compared to their previous results, they improved sensitivity by about four times for heavier WIMPs. This came from better electron extraction, extensive calibrations, careful modeling of the electric fields, and long, stable running.

What this means going forward

- Narrowing down the possibilities: Even though LUX didn’t spot dark matter, it significantly shrank the space where popular WIMP models can hide. The “no” is scientifically valuable because it tells theorists and future experiments where not to look—and where to focus next.

- Proving the technique: LUX showed that dual-phase xenon TPCs can be run with exquisite control and understanding, even when tricky effects (like charged walls and varying fields) show up. This success paved the way for bigger, more sensitive experiments (like LZ), which continue the search with even larger xenon targets and better shielding.

- The bigger picture: Dark matter is still out there—we can see its gravitational effects in galaxies and the universe. Experiments like LUX keep tightening the net, improving the odds that if WIMPs are the answer, future detectors will find them. And if not, these results help point the community toward other ideas and candidates.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following items summarize what remains uncertain or unexplored in the paper and suggest concrete directions for future research.

- In-situ validation of the PTFE surface-charge model: directly measure PTFE charge densities and their spatial/time evolution to quantify residual biases in the mapping from true recoil positions to observed S2 coordinates and their impact on acceptance and background leakage.

- Event-wise electric-field estimation: replace the assumption of uniform fields within 16 exposure segments with per-event field estimators to capture spatial heterogeneity and reduce systematic uncertainties.

- Residual field effects after geometry-only corrections: quantify how much field dependence remains in corrected S1/S2, especially near thresholds and in regions with steep field gradients, and propagate it to the response models.

- Low-energy NR yield below 1.1 keV: measure NR light/charge yields at sub-keV energies (currently set to zero) and assess how this affects sensitivity to low-mass WIMPs and efficiency modeling.

- Dependence on NEST microphysics: explore alternative models/priors and parameter degeneracies (e.g., recombination fluctuation F_r, Lindhard k), and propagate these uncertainties into exclusion limits rather than treating them as negligible.

- PTFE surface (radon progeny) background modeling: validate the empirical leakage model with dedicated surface calibrations, characterize its time evolution with radon plate-out, and quantify charge-loss systematics under varying fields.

- Accidental coincidences model assumptions: test the separability (f1(S1)×f2(log10 S2)) and stationarity assumptions, check for correlations or time dependence, and refine modeling during periods of elevated single-electron activity.

- Post-unblinding cuts on S1 topology: quantify potential analysis bias, verify uniform signal acceptance across phase space, and predefine such pulse-quality criteria to avoid post-unblinding adjustments in future analyses.

- Halo-model sensitivity: present limits under varied halo parameters (e.g., local density ρ₀, characteristic speed v₀, escape speed v_esc) and provide halo-independent constraints; assess sensitivity to streams or anisotropies in the local dark-matter distribution.

- Single-scatter neutron background validation: directly measure or bound single-scatter neutron rates in situ (e.g., with dedicated sources/monitors), rather than inferring from multiple-scatter events, and include their uncertainty in the background model.

- Coherent solar-neutrino NR background: quantify modeling uncertainties and explore discrimination strategies (e.g., spectral, spatial, or timing features) as sensitivity approaches the neutrino floor.

- Single-electron and S2-only backgrounds: model their rates and time profiles following large S2s, measure residual contamination after selection, and include uncertainty bands in the accidental-coincidence model.

- Position reconstruction near threshold: characterize (x,y) resolution/bias versus S2 size using data, and propagate this to wall-leakage estimates and fiducial selection systematics.

- Time-varying wall position and fiducialization: quantify systematic uncertainties from wall-position mapping (via ²¹⁰Pb) and their impact on fiducial mass, acceptance, and surface-background leakage across dates.

- g1/g2 gain stability: propagate date-dependent variations and uncertainties in g1/g2 to energy scales and ER/NR band positions, and assess their effect on low-mass sensitivity.

- Electron extraction efficiency mapping: measure spatial/time dependence and its correlation with field distortions; quantify how uncertainties in extraction efficiency affect S2 thresholds and detection efficiency curves.

- NR detection efficiency validation: cross-check simulated efficiency curves with data-driven methods (e.g., tag-and-probe or embedded calibrations) and report uncertainty bands, particularly at low energies.

- Calibration coverage completeness: ensure ER/NR calibrations sample the full fiducial volume and time span; quantify any spatial/temporal biases in response models due to uneven calibration statistics.

- Statistical framework robustness: compare the PLR approach and power-constraining choices with alternative methods (CLs, Bayesian) to gauge robustness of exclusion limits under different statistical treatments.

- Combined WS2013+WS2014–16 systematics: assess correlated uncertainties across runs with differing detector states (e.g., field uniformity) and consider a unified joint fit to harmonize response and background models.

- Non–spin-independent interactions: extend analysis to spin-dependent, isospin-violating, momentum-dependent, and inelastic scattering scenarios using the existing dataset to broaden theoretical coverage.

- Blinding protocol efficacy: evaluate whether salt injection sufficiently prevented analysis bias, document lessons learned, and formalize improved blinding strategies for future exposures.

- Recovery of excluded low-lifetime periods: investigate whether data taken during low electron-lifetime periods can be partially recovered with corrections, and quantify exposure/sensitivity trade-offs.

- Pre-PLR selection boundary robustness: test sensitivity of results to alternative “far-from-ROI” exclusion boundaries and optimize for minimal bias while maintaining background rejection.

- Gas-event and merged-scatter rejection: develop more robust classifiers (e.g., machine learning) for pulse-shape and topology to reduce misclassification; measure and report misclassification rates and their uncertainties.
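The separability assumption for accidental coincidences mentioned in the list above, f1(S1)×f2(log10 S2), can be illustrated with a toy construction. This is only a sketch under stated assumptions: the isolated-S1 and isolated-S2 samples are synthetic random numbers, and the bin ranges and helper names (`normalized_histogram`, `separable_pdf`) are hypothetical.

```python
import random


def normalized_histogram(values, lo, hi, n_bins):
    """Binned probability estimate of values within [lo, hi)."""
    counts = [0] * n_bins
    width = (hi - lo) / n_bins
    for v in values:
        if lo <= v < hi:
            counts[min(int((v - lo) / width), n_bins - 1)] += 1
    total = sum(counts)
    return [c / total for c in counts]


def separable_pdf(f1, f2):
    """Joint PDF as the outer product of two independent marginals."""
    return [[p1 * p2 for p2 in f2] for p1 in f1]


rng = random.Random(0)
# Synthetic stand-ins for isolated-pulse samples (not real detector data).
isolated_s1 = [rng.expovariate(1 / 10.0) for _ in range(5000)]
isolated_log_s2 = [rng.gauss(3.0, 0.3) for _ in range(5000)]

f1 = normalized_histogram(isolated_s1, 0.0, 50.0, 25)
f2 = normalized_histogram(isolated_log_s2, 2.0, 4.0, 20)
pdf = separable_pdf(f1, f2)
total_probability = sum(sum(row) for row in pdf)
```

Because every row of `pdf` is the same shape scaled by a constant, any correlation between S1 and S2 in real accidental pairs would show up as a mismatch with this model, which is what a separability test would look for.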

Glossary

Compton scattering: The interaction of photons with matter where the photon is deflected from its original path, transferring energy to an electron. Example: "Two types of ER background populations are simulated: Compton scattering of γ rays (originating in trace radioactivity in detector components)."

Dual-phase time projection chamber (TPC): A detector technology using noble gases to measure particle interactions with high spatial resolution by detecting scintillation and ionization signals. Example: "The Large Underground Xenon (LUX) search is performed with a dual-phase (liquid-gas) xenon time projection chamber."

Electroluminescence: The process by which a material emits light in response to an electric field or electrical stimulation. Example: "They are extracted into the gas and accelerated by a larger electric field, producing secondary electroluminescence photons collected in both arrays."

Electron extraction efficiency: The measurement of the fraction of electrons that cross the liquid-gas interface in a TPC detector. Example: "The measured electron extraction efficiency (i.e. the fraction of electrons which promptly cross the liquid–gas interface) increased from (49 ± 3)% in WS2013 to (73 ± 4)% in WS2014–16."

Electron drift trajectories: Paths taken by electrons under the influence of an electric field within a detector. Example: "Though the grid conditioning campaign achieved the goal of an increased electron extraction efficiency, it was observed during calibrations that electron drift trajectories were significantly altered."

Fluorescence yield: The number of photons emitted by a substance following the absorption of a photon. Example: "For the 50 to 600 V/cm field variation over the fiducial region relevant to this analysis, the average light yield for a 5 keV ER event falls by 15%."

Helm form factor: A mathematical description of the distribution of nuclear matter used in scattering calculations. Example: "Nuclear-recoil energy spectra for the WIMP signal are derived from a standard Maxwellian velocity distribution... and a Helm form factor."

Ionization signal: The signal generated by the movement and collection of charged particles produced by ionizing radiation in a detector. Example: "Energy deposited by particle interactions in the LXe induces two measurable signal channels: prompt VUV photons from scintillation (S1), and free electrons from ionization."

LUXSim: A software package based on Geant4 used for simulating the LUX detector environment and particle interactions. Example: "A counting of detector materials [Akerib:2014:bg] informs a Geant4-based LUXSim [Akerib:2012:luxsim] simulation."

Metropolis-Hastings Markov Chain Monte Carlo algorithm: An iterative algorithm used for obtaining a sequence of random samples from a probability distribution. Example: "These 42 individual electrostatic charge densities are varied through a Metropolis-Hastings Markov Chain Monte Carlo algorithm fitting procedure."

Neutron multiple scatters: A phenomenon where neutrons interact more than once within a detector. Example: "Simulations show that the multiple scatter event rates are significantly higher than the single scatter rates."

Noble Element Simulation Technique (NEST): A computational model to describe the interaction of particles with noble elements in detectors. Example: "Periodic ³H calibrations provide each of the 16 exposure segments with a unique calibration set from which to construct a unique individual response model."

Profile likelihood ratio (PLR) method: A statistical method used in hypothesis testing based on the ratio of likelihoods. Example: "These 16 ER and 16 NR models are then used within a profile likelihood ratio (PLR) method [Cowan:2010js] to search for evidence of dark-matter scattering events."

Scintillation signal: The signal produced when a material emits light following the absorption of radiation. Example: "Energy deposited by particle interactions in the LXe induces two measurable signal channels: prompt VUV photons from scintillation."

Spin-independent cross section: A measure of the probability of interaction between dark matter particles and nucleons without consideration of spin-related interactions. Example: "Upper limits on the spin-independent elastic WIMP-nucleon cross section at 90% C.L."
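To make the Metropolis-Hastings entry in the glossary above more concrete, here is a minimal one-parameter sketch. The paper's fit varies 42 charge densities against a full electrostatic model. This toy version instead fits a single hypothetical "charge" to synthetic displacement data through an assumed linear response, so the names, numbers, and the linear model are illustrative assumptions only.

```python
import math
import random


def log_posterior(charge, displacements, sensitivity, noise):
    """Gaussian log-likelihood with a flat prior (up to a constant)."""
    return -0.5 * sum(((d - sensitivity * charge) / noise) ** 2 for d in displacements)


def metropolis_hastings(log_post, start, step, n_steps, rng):
    """Random-walk Metropolis-Hastings with a symmetric Gaussian proposal."""
    chain = [start]
    current, current_lp = start, log_post(start)
    for _ in range(n_steps):
        proposal = current + rng.gauss(0.0, step)
        proposal_lp = log_post(proposal)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, proposal_lp - current_lp)):
            current, current_lp = proposal, proposal_lp
        chain.append(current)
    return chain


rng = random.Random(0)
true_charge, sensitivity, noise = -3.0, 1.0, 0.5  # hypothetical toy values
data = [sensitivity * true_charge + rng.gauss(0.0, noise) for _ in range(20)]

chain = metropolis_hastings(
    lambda q: log_posterior(q, data, sensitivity, noise),
    start=0.0, step=0.3, n_steps=20000, rng=rng,
)
samples = chain[2000:]  # discard burn-in
posterior_mean = sum(samples) / len(samples)
analytic_mean = sum(data) / (len(data) * sensitivity)
```

For this toy Gaussian problem the posterior mean is known analytically, so the sampler can be checked against `analytic_mean`. In a realistic fit the posterior has no closed form, which is the reason for sampling in the first place.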

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now, drawing on the paper’s methods, tooling, and operational innovations.

- Dual-phase TPC calibration and stability workflows for low-background detectors

- Sectors: academia (neutrino/dark-matter experiments), nuclear safeguards and metrology, radiation detection instrumentation.

- What: Adopt the paper’s combined use of ⁸³ᵐKr (field probing via S1-doublet ratios), tritium (geometry-only response), and DD-neutron calibrations to disentangle geometry vs. field effects and maintain calibrated response over long runs.

- Tools/workflows: Periodic in-situ source injections; geometry-only correction maps; energy reconstruction via combined S1+S2 (CES) that is field-independent; segmentation of data into time/depth “exposure segments” with tailored response models.

- Assumptions/dependencies: Availability of noble gas handling, access to safe calibration sources (DD generator, tritium), and adoption of NEST-like response modeling.

- Electric-field diagnostics and correction via COMSOL + data-driven MCMC fitting

- Sectors: academia (TPCs in particle physics), industrial instrumentation using drift chambers/ionization detectors, high-voltage (HV) device design.

- What: Use the COMSOL-based 3D electrostatic model and Metropolis–Hastings MCMC fitting to weekly ⁸³ᵐKr data to map spatially/temporally varying fields and surface charge densities.

- Tools/workflows: Partition dielectric boundaries into sections; periodic field-map fitting; feed field maps directly into analysis space (S2-coordinates).

- Assumptions/dependencies: Access to finite-element solvers (COMSOL), well-characterized calibration data, and computational resources.

- HV electrode “conditioning” (burn-in) to boost electron extraction in liquid-gas interfaces

- Sectors: radiation detectors (industry/academia), cryogenic sensors, HV R&D.

- What: Apply controlled corona conditioning to raise stable extraction fields, improving electron extraction efficiency (observed 49%→73%).

- Tools/workflows: Cold-gas HV burn-in procedures with careful monitoring.

- Assumptions/dependencies: Risk management for dielectric charging (see next item); need for monitoring and mitigation of side effects.

- Management of dielectric charging and field distortions in PTFE and other insulators

- Sectors: HV equipment, spacecraft/instrument insulation, detector design.

- What: Recognize and model long-lived negative charging of PTFE under VUV/corona exposure; monitor charge evolution and its impact on drift paths; implement compensation in reconstruction and fiducialization.

- Tools/workflows: Routine field-mapping, wall-charge monitoring, adaptive fiducial cuts tied to measured wall position and reconstruction uncertainty.

- Assumptions/dependencies: Materials behave similarly to PTFE under operational conditions; access to calibration data for drift-path mapping.

- Background modeling for coincidence-based sensing (random-coincidence controls)

- Sectors: medical imaging (PET/SPECT), radiation monitoring, lidar/photon-counting systems.

- What: Use separable models for accidental coincidences (f(S1)×f(S2) analogs) built from measured single-channel rates to suppress random-coincidence backgrounds.

- Tools/workflows: Online monitoring of single-channel rates; inclusion of random coincidence components in likelihood analyses.

- Assumptions/dependencies: Stationary or measured time-dependence of single rates; sufficient statistics for model stability.

- Surface-background characterization and mitigation near boundaries

- Sectors: radiation detection, non-destructive assay, security screening.

- What: Empirical modeling of surface events with suppressed charge yield and inward leakage controlled by position-reconstruction resolution (∝ S2^(−1/2)); refine fiducial cuts near boundaries.

- Tools/workflows: High-radius control samples to derive leakage PDFs; dynamic radial boundaries informed by surface-activity tracers (e.g., ²¹⁰Pb).

- Assumptions/dependencies: Stable position reconstruction performance; ability to tag/control surface contamination.

- Gas purification and contaminant removal (e.g., krypton and radon)

- Sectors: industrial gas suppliers (xenon/argon), semiconductor/cleanroom operations, environmental monitoring, medical xenon applications.

- What: Apply charcoal chromatography and low-background handling to reduce ⁸⁵Kr and Rn in noble gases, improving performance of detectors and purity for medical/industrial xenon.

- Tools/workflows: Skid-mounted charcoal columns; emanation screening and materials selection; continuous recirculation and purity monitoring.

- Assumptions/dependencies: Capital equipment for cryogenic handling and chromatography; QC for emanation rates.

- Data blinding via “salted” synthetic events to reduce analysis bias

- Sectors: academia (large collaborations), regulated analytics (clinical/forensic), cybersecurity detection validation, high-stakes AI/ML pipelines.

- What: Insert realistic, sequestered synthetic events to preserve blindness while exercising the full analysis chain; unblind only after cuts/models are frozen.

- Tools/workflows: Synthetic waveform pairing to mirror true signals; external oversight of unblinding keys; audit trails.

- Assumptions/dependencies: Governance structures to enforce blinding; ability to generate realistic synthetic events without leaking artifacts.

- Likelihood-based analysis with constrained nuisance parameters

- Sectors: scientific data analysis, reliability engineering, quality control.

- What: Use profile likelihood ratios with Gaussian-constrained nuisance parameters (e.g., background rates, detector gains) for robust limit setting or detection claims.

- Tools/workflows: Modular PLR frameworks; background-only trials and power-constrained limits to avoid over-optimism.

- Assumptions/dependencies: Validated priors for constraints; sufficient computing for ensemble studies.

- Low-background infrastructure know-how (shielding, underground siting, clean materials)

- Sectors: radiation metrology, nuclear forensics/safeguards, environmental radioactivity monitoring.

- What: Deploy water shielding and material screening to reduce gamma/neutron backgrounds; lessons from underground siting inform site selection and shielding.

- Tools/workflows: Geant4-based background simulations tied to materials assays; water-tank shielding with muon veto capability.

- Assumptions/dependencies: Facility space and capital cost; material assay access.
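The likelihood-based item in the list above (profile likelihood ratios with Gaussian-constrained nuisance parameters) can be sketched for the simplest possible case. This is a toy single-bin counting experiment, not the multi-dimensional unbinned analysis used in the paper: `n` observed events, a signal expectation `s`, and one background nuisance parameter `b` constrained by a Gaussian around `b0`. All numbers and function names are illustrative assumptions.

```python
import math


def profiled_background(s, n, b0, sigma_b):
    """Background value that maximizes the likelihood for fixed s (closed form)."""
    a = s + b0 - sigma_b ** 2
    total = 0.5 * (a + math.sqrt(a * a + 4.0 * sigma_b ** 2 * n))  # s + b
    return max(0.0, total - s)


def nll(s, b, n, b0, sigma_b):
    """Negative log-likelihood: Poisson(n | s + b) times a Gaussian constraint on b."""
    mu = s + b
    poisson_term = mu - (n * math.log(mu) if n > 0 else 0.0)
    return poisson_term + 0.5 * ((b - b0) / sigma_b) ** 2


def profile_nll(s, n, b0, sigma_b):
    return nll(s, profiled_background(s, n, b0, sigma_b), n, b0, sigma_b)


def plr_statistic(s_test, n, b0, sigma_b, s_max=50.0, n_grid=5001):
    """q(s) = -2 ln [ L(s, profiled b) / L(best-fit s, best-fit b) ], with s >= 0."""
    grid = (s_max * i / (n_grid - 1) for i in range(n_grid))
    best = min(profile_nll(x, n, b0, sigma_b) for x in grid)
    return max(0.0, 2.0 * (profile_nll(s_test, n, b0, sigma_b) - best))


# Toy inputs: 3 events observed, background expectation 2.0 +/- 0.5.
q_values = {s: plr_statistic(s, n=3, b0=2.0, sigma_b=0.5) for s in (0.0, 1.0, 5.0, 10.0)}
for s, q in q_values.items():
    print(f"s = {s:4.1f}  ->  q = {q:.3f}")
```

The statistic is zero at the best-fit signal and grows as the tested signal moves away from it. A limit-setting procedure would then compare `q` to a threshold derived from its expected distribution, which this sketch deliberately leaves out.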

Long-Term Applications

These applications will require further R&D, scaling, or adaptation before routine deployment.

- Noble-liquid PET and advanced medical imaging using LXe/dual-phase TPCs

- Sectors: healthcare (medical imaging).

- What: Translate S1/S2-based 3D reconstruction, fast scintillation timing, and random-coincidence modeling to LXe-based PET systems for improved energy resolution and scatter rejection.

- Dependencies: Cost and supply of xenon; cryogenic reliability; compact HV and light-sensor integration; clinical validation and regulatory approval.

- Coherent neutrino scattering and reactor monitoring with low-threshold noble-liquid detectors

- Sectors: energy (nuclear safeguards), policy (nonproliferation).

- What: Adapt low-background operation, segmentation of response models, and surface-background suppression to CEvNS detectors for non-intrusive reactor monitoring.

- Dependencies: Optimized target (xenon vs argon) and detector size; siting near reactors; long-term stability with low systematics.

- Space and HV industry: dielectric charging management under UV/corona exposure

- Sectors: aerospace, HV grid components, pulsed-power systems.

- What: Use the observed charge buildup dynamics in PTFE (and analogous polymers) and field-model validation to design insulators and conditioning procedures that minimize charge trapping and drift-path distortions.

- Dependencies: Material studies across temperatures/UV spectra; industrial testbeds; integration with lifetime modeling.

- Next-gen low-background facilities and standards for ultra-trace radioactivity

- Sectors: policy (standards), industry (semiconductor/cleanroom), environmental monitoring.

- What: Formalize protocols for material screening, radon mitigation, and gas purification leveraged in this work as industrial standards for ultra-trace assays and clean manufacturing.

- Dependencies: Inter-lab standardization; supply chain engagement; cost-benefit alignment for industry.

- Scalable, automated field-mapping and self-calibrating detectors

- Sectors: instrumentation (scientific and industrial).

- What: Generalize the “calibrate-then-model” loop—periodic uniform sources + MCMC field fitting + analysis-in-native-observation-space—to self-calibrating large detectors and industrial sensors.

- Dependencies: Embedded calibrators; robust inversion software; real-time computing; safety approvals for sources.

- Advanced AI/ML anomaly detection married to physics-based NEST-like models

- Sectors: software, industrial monitoring, scientific computing.

- What: Combine microphysics-aware simulators with ML to capture time-/space-varying detector responses and rare-event classification with calibrated uncertainties.

- Dependencies: High-fidelity simulators; uncertainty-quantification frameworks; curated calibration datasets.

- Environmental and treaty monitoring: improved radioxenon detection and background suppression

- Sectors: policy (CTBT verification), environmental surveillance.

- What: Apply gas purification and low-background xenon handling to enhance sensitivity and specificity of radioxenon monitoring stations.

- Dependencies: Integration with existing IMS stations; compatibility with sampling/processing pipelines; sustained purity controls.

- Design rules for ultra-low-noise photodetection in cryogenic systems

- Sectors: sensors and photonics (including SiPM integration), quantum sensing.

- What: Leverage “phd” calibration, multi-photoelectron statistics, and waveform-based quality cuts to guide next-gen cryo photodetectors with improved noise and linearity.

- Dependencies: Cryo-compatible photodetectors; electronics co-design; comprehensive test campaigns.

- Fundamental-physics–driven policy and funding prioritization

- Sectors: policy (science funding and roadmapping), academia.

- What: Use the tightened WIMP cross-section exclusions to refocus portfolios toward parameter spaces/technologies with highest discovery potential (e.g., lower-mass DM, novel interactions, alternative detectors).

- Dependencies: Community consensus processes; cross-experiment data synthesis; long-term investment strategies.

- Technology transfer to industrial non-destructive assay and security screening

- Sectors: security, manufacturing QA.

- What: Adapt low-background design, event-reconstruction fidelity, and random-coincidence suppression to improve sensitivity and false-positive control in scanning systems.

- Dependencies: Engineering re-implementation at room temperature; ruggedization; cost optimization.

In all cases, feasibility depends on access to high-purity noble gases and cryogenic/HV infrastructure, validated calibration sources and safety protocols, and (for long-term applications) the cost and supply-chain realities of xenon and specialized materials.
