- The paper introduces a scalable framework that dynamically synthesizes domain-specific APIs and leverages realistic tool use for multi-turn dialogue generation.

- It employs a modular pipeline with SQL-backed execution and a user simulator to ensure stateful interactions, precise tool invocation, and comprehensive error recovery.

- Experimental evaluations on multi-turn tool-use benchmarks demonstrate improved coherence and consistency, setting a higher bar for robust agentic model performance.

Overview and Motivation

The transition of LLMs into large reasoning models (LRMs) has foregrounded the agentic paradigm, where autonomous agents execute complex tasks through iterative reasoning and tool mediation. Despite significant progress, data scarcity and rigidity remain core limitations. Existing datasets predominantly rely on static toolsets and single-shot interactions, resulting in poor coverage of the stochastic, multi-turn nature of authentic human-agent collaboration. This paper introduces a scalable, user-oriented framework for multi-turn dialogue generation that integrates realistic tool use, synthesizes domain-specific APIs, and decouples task specification from user interaction via a dedicated simulator grounded in human behavioral rules.

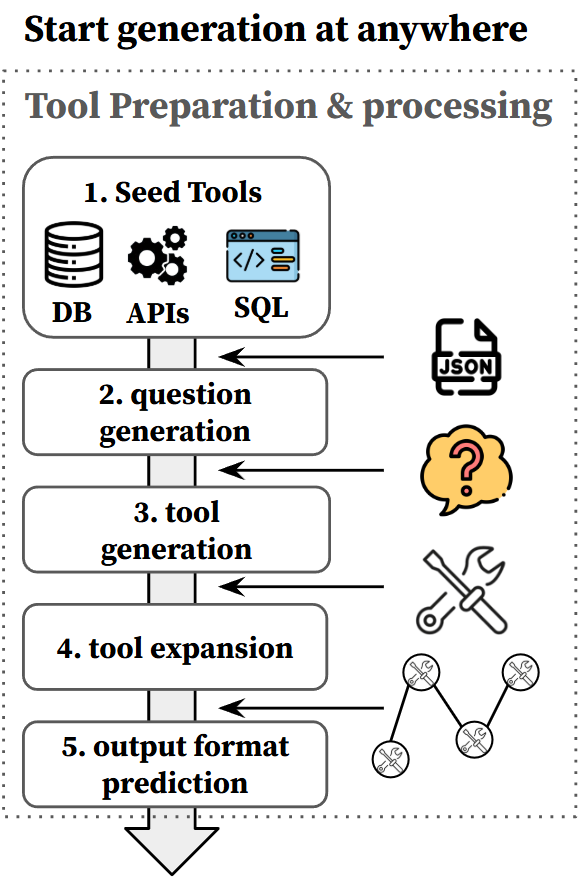

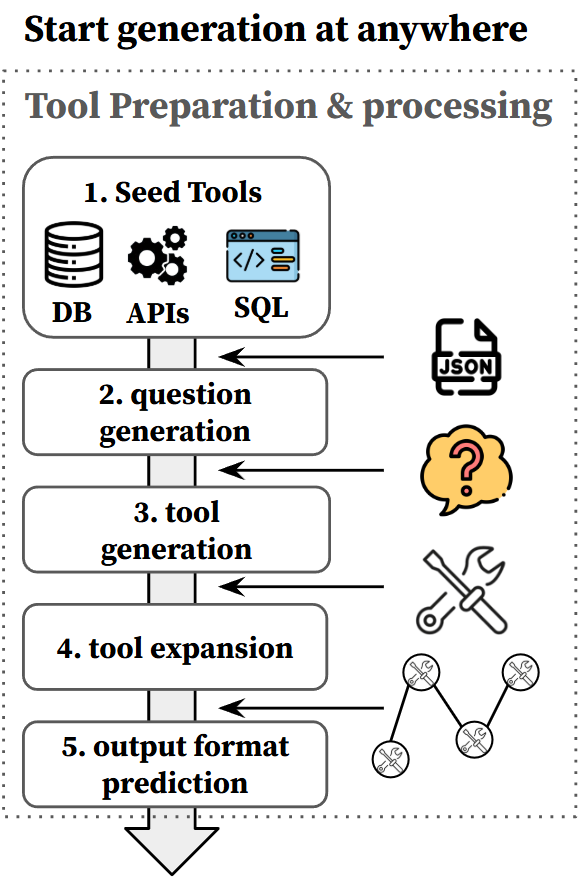

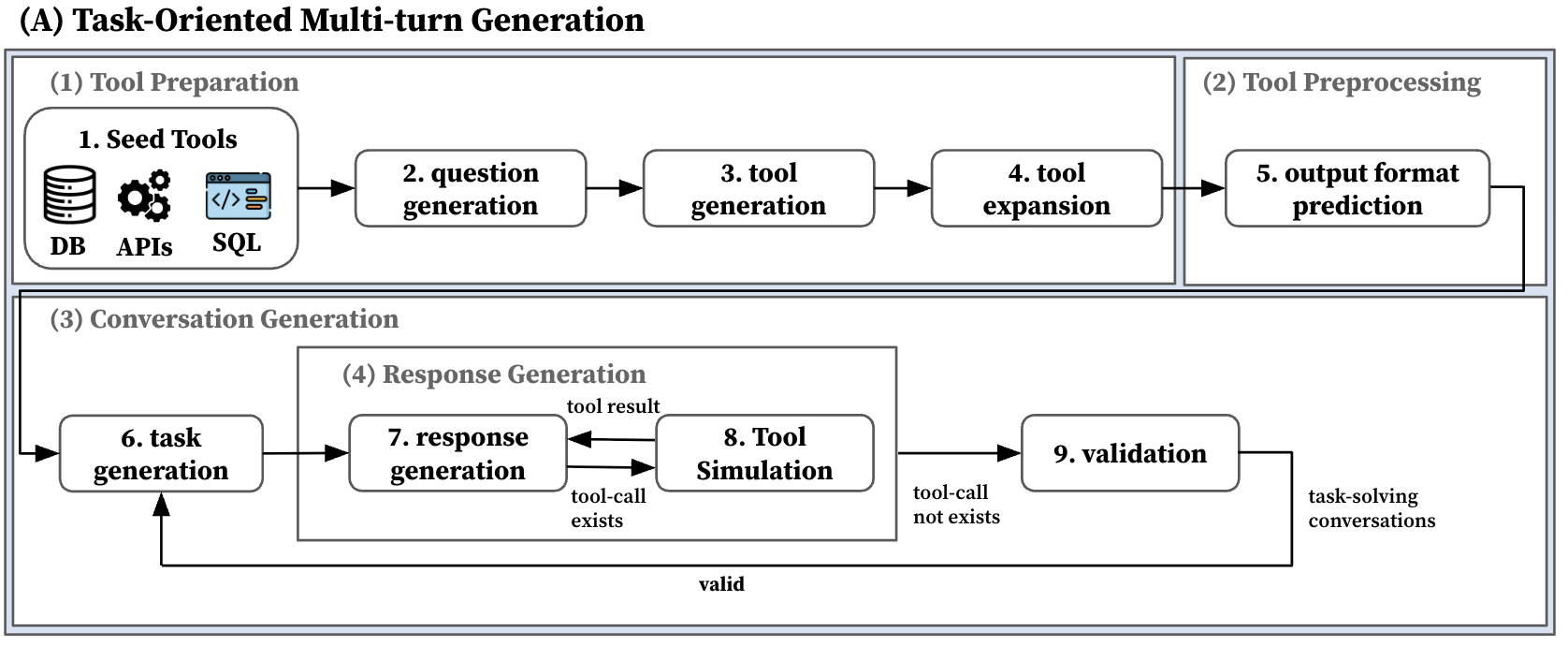

Figure 1: A modular pipeline for dynamic tool synthesis and preprocessing, designed to initiate multi-turn data generation from an arbitrary state.

Pipeline Architecture and Core Components

The framework is realized through a plug-and-play, modular architecture encompassing three principal phases:

- Tool Preparation and Expansion: Begins with a minimal set of seed tools, from which the system dynamically constructs a diverse set of domain-specific APIs using LRM-powered synthesis inspired by practical scenarios. Subsequent preprocessing aligns every tool to an explicit, machine-verifiable schema definition, using JSON schemas in particular to keep outputs consistent and types preserved across tool invocations.

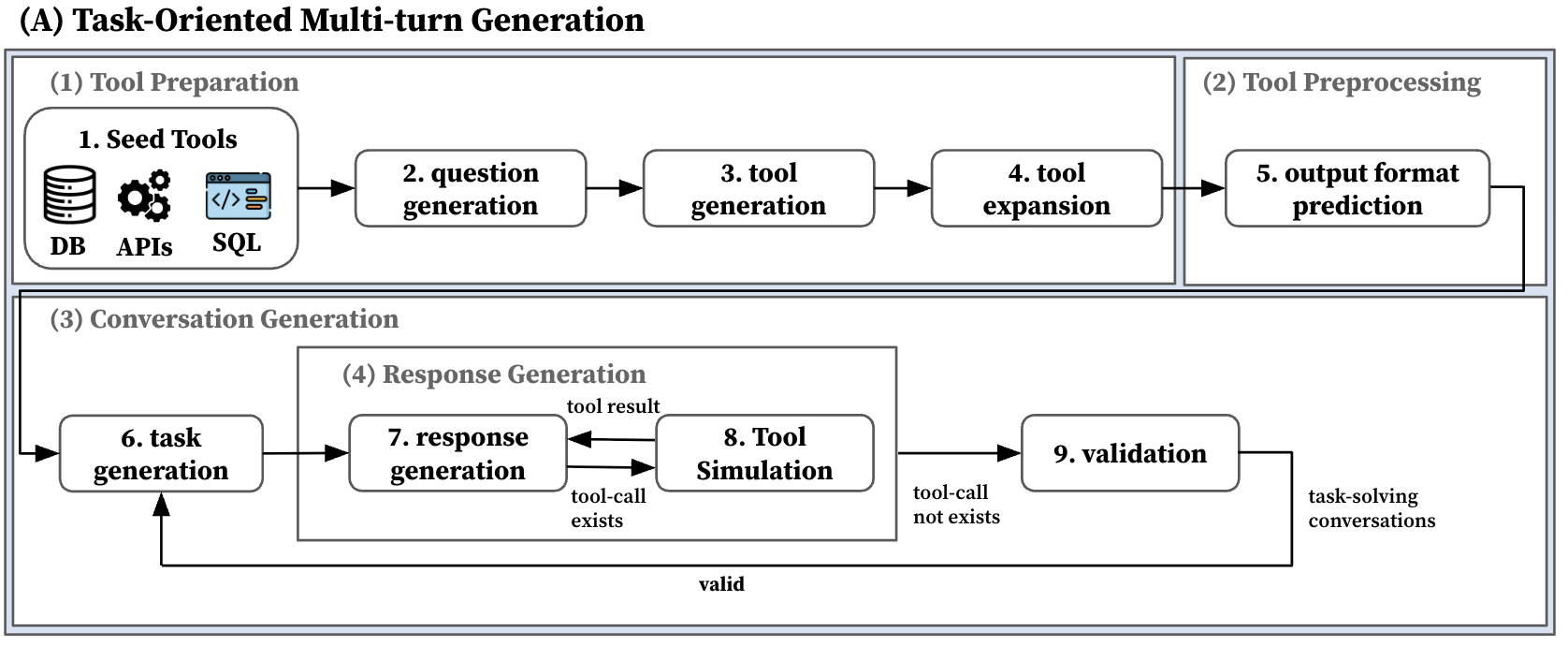
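The schema-alignment step in the tool-preparation phase can be pictured with a small validator. The sketch below is illustrative only. It assumes tools are described by flat, JSON-Schema-style object definitions. The `book_flight` tool and the `validate_args` helper are hypothetical. It covers just `type`, `enum`, `required`, and `additionalProperties`; a full validator such as the `jsonschema` package handles far more.

```python
# Minimal sketch (hypothetical helper): check a tool call's arguments against a flat
# JSON-Schema-style object definition. Supports only "type", "enum", "required",
# and "additionalProperties"; a full validator (e.g. the jsonschema package) does far more.

# Subset of JSON Schema type names mapped to Python types.
_JSON_TYPES = {
    "string": str,
    "integer": int,
    "number": (int, float),
    "boolean": bool,
    "array": list,
    "object": dict,
}


def validate_args(schema: dict, args: dict) -> list[str]:
    """Return a list of schema violations; an empty list means the call is valid."""
    errors = []
    props = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in args:
            errors.append(f"missing required argument: {name}")
    for name, value in args.items():
        if name not in props:
            if schema.get("additionalProperties", True) is False:
                errors.append(f"unexpected argument: {name}")
            continue
        expected = props[name].get("type")
        py_type = _JSON_TYPES.get(expected)
        # bool is a subclass of int in Python, so reject it explicitly for numeric fields.
        is_bool_as_number = isinstance(value, bool) and expected in ("integer", "number")
        if py_type is not None and (not isinstance(value, py_type) or is_bool_as_number):
            errors.append(f"{name}: expected {expected}, got {type(value).__name__}")
        allowed = props[name].get("enum")
        if allowed is not None and value not in allowed:
            errors.append(f"{name}: {value!r} not in {allowed}")
    return errors


# Hypothetical synthesized tool schema (not taken from the paper).
book_flight = {
    "type": "object",
    "properties": {
        "origin": {"type": "string"},
        "destination": {"type": "string"},
        "passengers": {"type": "integer"},
        "cabin": {"type": "string", "enum": ["economy", "business"]},
    },
    "required": ["origin", "destination"],
    "additionalProperties": False,
}

print(validate_args(book_flight, {"origin": "SFO", "destination": "JFK", "passengers": 2}))
print(validate_args(book_flight, {"origin": "SFO", "passengers": "two", "seat": "1A"}))
```

The first call is valid and returns an empty list. The second call produces three violations:

- a missing `destination`;
- a string passed for the integer `passengers`;
- the unexpected `seat` argument.

The validator collects all violations instead of raising on the first one. This is one way a pipeline could feed every schema error back into tool synthesis in a single pass.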

- Task-Oriented Multi-Turn Generation: Emulates classic agentic pipelines in which tasks are completed optimally, yielding trajectories focused on direct, efficient tool invocation. Although this paradigm scales well, it falls into an "efficiency trap": it minimizes conversational density and omits the intermediate requests, clarifications, and interaction variability that characterize real-world dialogue.

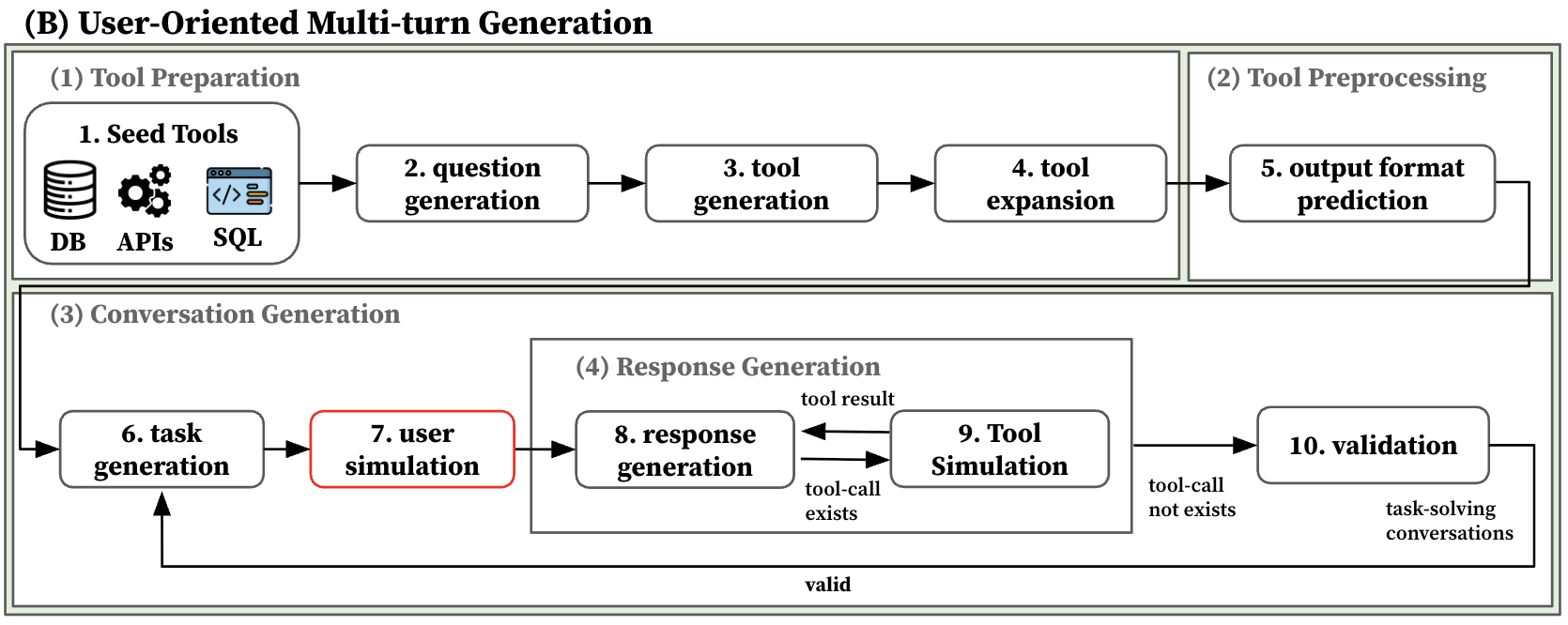

Figure 2: An automated framework that generates tool-use trajectories focused on efficient task completion through direct simulator-based responses.
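The toy comparison below makes the "efficiency trap" concrete. The trajectories and counters are hypothetical, not the paper's data or metrics. The same cancel-and-refund goal is written once in task-oriented style and once in user-oriented style. Both versions issue the same two tool calls; they differ in how many user turns and clarifications surround those calls.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Turn:
    role: str                       # "user", "assistant", or "tool_call"
    content: str
    is_clarification: bool = False  # assistant asks the user for missing information


def interaction_stats(trajectory: list[Turn]) -> dict[str, int]:
    """Count interaction features (hypothetical counters) that task-oriented data tends to drop."""
    roles = Counter(turn.role for turn in trajectory)
    return {
        "user_turns": roles["user"],
        "tool_calls": roles["tool_call"],
        "clarifications": sum(turn.is_clarification for turn in trajectory),
        "total_turns": len(trajectory),
    }


# Task-oriented: the full goal arrives in one message and is executed directly.
task_oriented = [
    Turn("user", "Cancel order 1042 and refund it to my card."),
    Turn("tool_call", "cancel_order(order_id=1042)"),
    Turn("tool_call", "refund(order_id=1042, method='card')"),
    Turn("assistant", "Order 1042 is cancelled and refunded to your card."),
]

# User-oriented: the same goal surfaces incrementally, with clarifications in between.
user_oriented = [
    Turn("user", "I need to cancel an order."),
    Turn("assistant", "Which order would you like to cancel?", is_clarification=True),
    Turn("user", "Order 1042."),
    Turn("tool_call", "cancel_order(order_id=1042)"),
    Turn("assistant", "Cancelled. Refund to your card or store credit?", is_clarification=True),
    Turn("user", "Card, please."),
    Turn("tool_call", "refund(order_id=1042, method='card')"),
    Turn("assistant", "Refund issued to your card."),
]

print(interaction_stats(task_oriented))
print(interaction_stats(user_oriented))
```

| Style | User turns | Clarifications | Total turns |
|---|---|---|---|
| Task-oriented | 1 | 0 | 4 |
| User-oriented | 3 | 2 | 8 |

The extra user turns and clarifications are the conversational density that the task-oriented paradigm leaves out.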

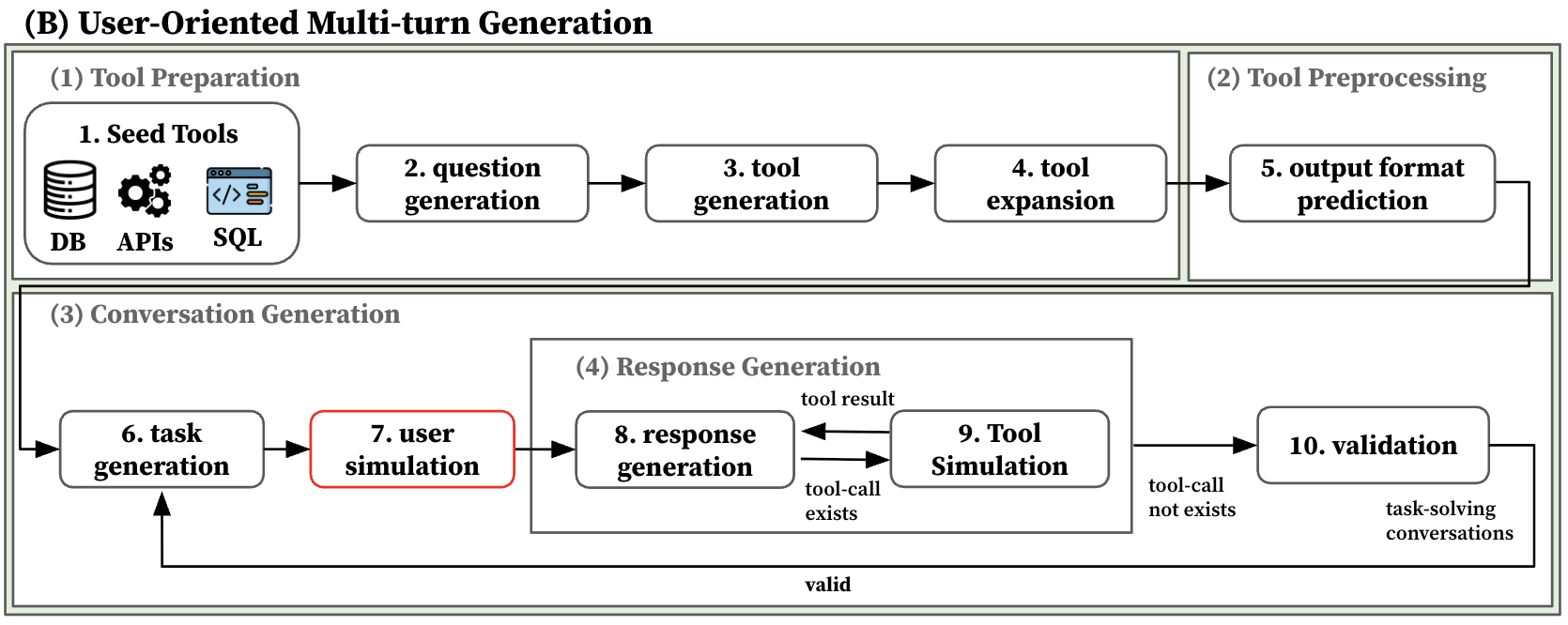

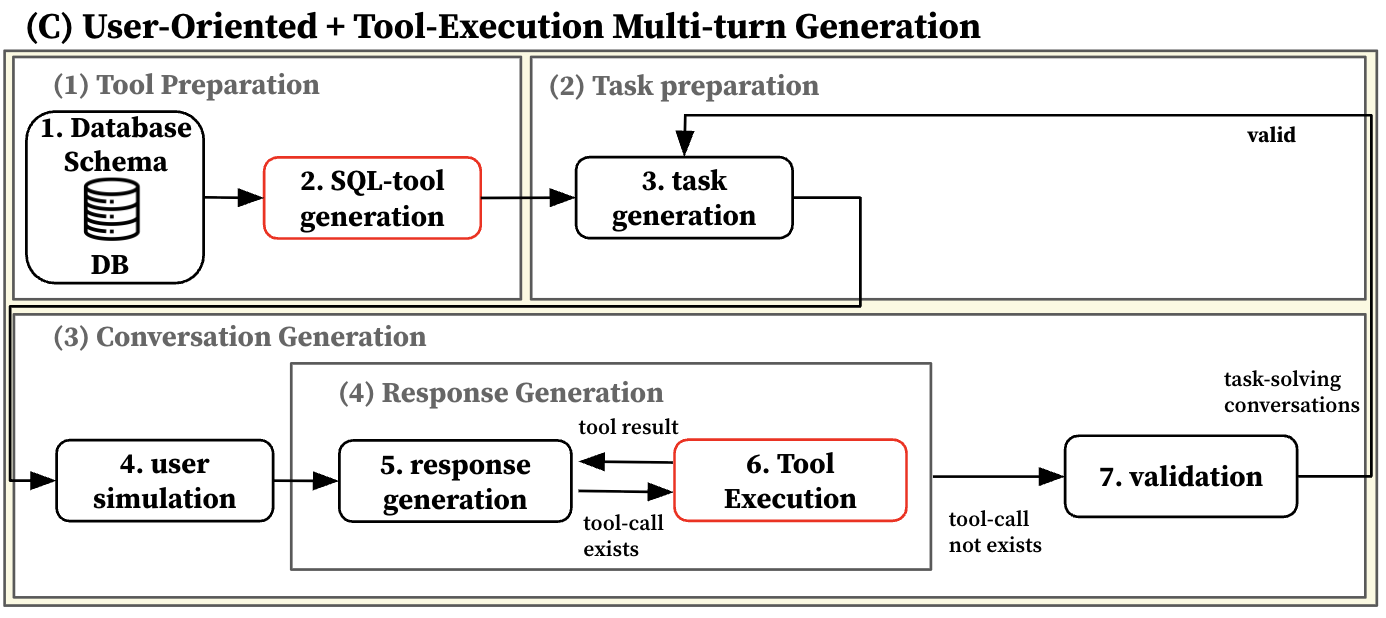

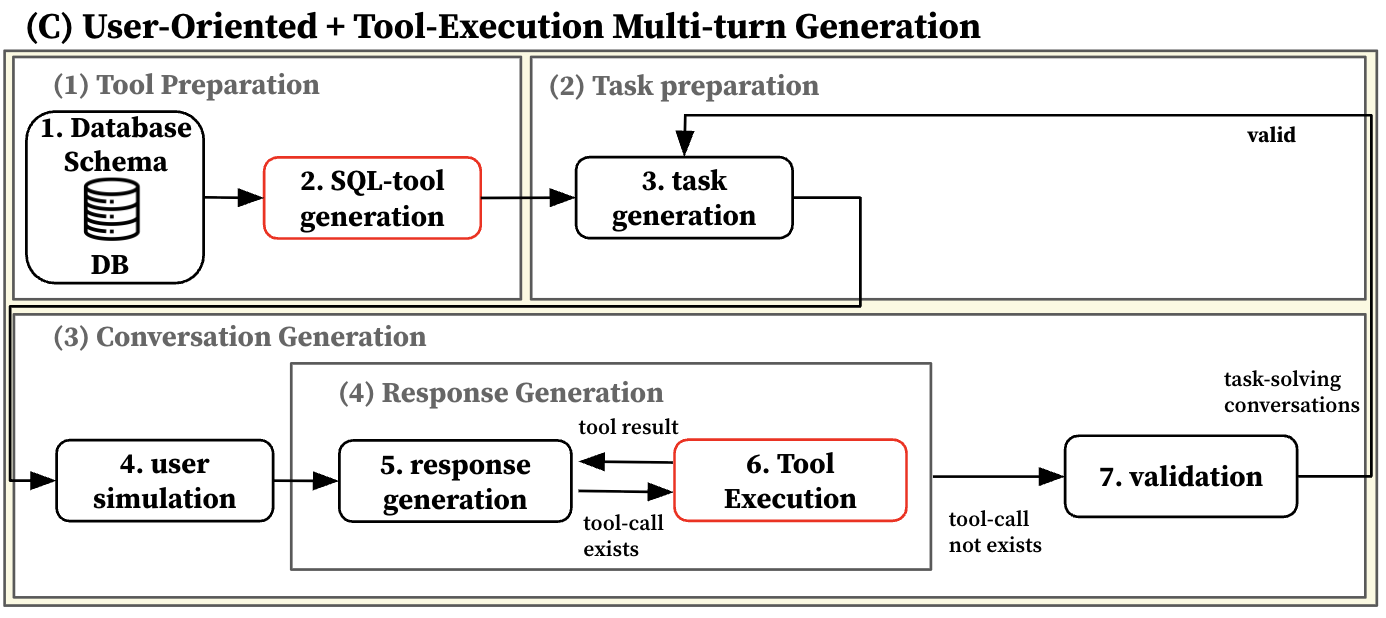

- User-Oriented and Execution-Grounded Generation: Shifts to a simulation-first approach that separates latent task goals from user behavior. A user simulator, governed by incremental, turn-wise feedback rules, orchestrates multi-turn interactions, enforces intermediate clarification, and induces realistic task subdivision. Critically, tool executions are grounded in real database environments, particularly via SQL-backed tool synthesis over relational schemas. This yields executable, verifiable outputs and persistent conversational state, elevating the fidelity and robustness of generated trajectories.

Figure 3: A framework that decouples tasks from interaction by employing a dedicated user simulator to mimic incremental human feedback and request-making.
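A deterministic simulator can sketch how the latent goal is decoupled from surface behavior. This is a hypothetical stand-in that uses simple keyword rules. The framework describes a simulator governed by turn-wise feedback rules, which in practice is likely LLM-driven. The class name, rules, and example goal here are illustrative. The agent only ever sees what `respond` returns. It therefore has to ask for missing details and handle the task one sub-request at a time.

```python
from dataclasses import dataclass, field


@dataclass
class RuleBasedUserSimulator:
    """Hypothetical rule-based stand-in for an LLM-driven user simulator.

    The latent goal (subgoals + private slot values) is never shown to the agent;
    the simulator discloses it one piece at a time according to fixed rules.
    """
    intent: str
    subgoals: list[str]    # the task, subdivided into ordered sub-requests
    slots: dict[str, str]  # private details (lowercase keys) revealed only on request
    _step: int = field(default=0, init=False)

    def opening(self) -> str:
        # Rule 1: open with the broad intent and only the first sub-request.
        return f"Hi, I want to {self.intent}. First, {self.subgoals[0]}."

    def respond(self, agent_msg: str) -> str:
        # Rule 2: if the agent asks about a private detail, answer just that.
        text = agent_msg.lower()
        for key, value in self.slots.items():
            if key in text:
                return f"My {key} is {value}."
        # Rule 3: otherwise treat the message as progress and move to the next sub-request.
        self._step += 1
        if self._step < len(self.subgoals):
            return f"Thanks. Next, {self.subgoals[self._step]}."
        return "That's everything, thanks."

    @property
    def done(self) -> bool:
        return self._step >= len(self.subgoals)


sim = RuleBasedUserSimulator(
    intent="update my trip",
    subgoals=["move my flight to Friday", "add a checked bag"],
    slots={"booking code": "QX7H2"},
)
print(sim.opening())
print(sim.respond("Sure, what is your booking code?"))
print(sim.respond("Your flight now departs on Friday."))
print(sim.respond("A checked bag has been added."))
print(sim.done)
```

In this run, the simulator:

1. opens with only the first sub-request;
2. answers the booking-code question;
3. asks for the checked bag;
4. signals completion, after which `done` is `True`.

Rule 3 advances on any message that does not mention a slot. A realistic simulator would also check that the agent actually fulfilled each sub-request before moving on.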

Figure 4: This pipeline integrates a SQL-tool generation module grounded in real-world database schemas with a dedicated user simulator to produce verifiable, high-fidelity multi-turn dialogues.
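Python's built-in `sqlite3` module can illustrate SQL-backed execution. The sketch assumes each synthesized tool maps to one parameterized SQL statement over a relational schema. The `orders` table, the two tools, and the `call_tool` helper are hypothetical. Every call runs against the same database, so state persists across turns. Failures come back as observations the agent can react to, not as crashes.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a toy relational environment (hypothetical schema, not the paper's)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE orders (
            order_id INTEGER PRIMARY KEY,
            customer TEXT NOT NULL,
            status   TEXT NOT NULL CHECK (status IN ('placed', 'shipped', 'cancelled'))
        );
        INSERT INTO orders VALUES (1042, 'alice', 'placed'), (1043, 'bob', 'shipped');
        """
    )
    return conn


# Hypothetical tools, each bound to one parameterized SQL statement over the schema.
TOOLS = {
    "get_order_status": "SELECT status FROM orders WHERE order_id = :order_id",
    "cancel_order": (
        "UPDATE orders SET status = 'cancelled' "
        "WHERE order_id = :order_id AND status = 'placed'"
    ),
}


def call_tool(conn: sqlite3.Connection, name: str, args: dict) -> dict:
    """Execute a tool call and return a JSON-serializable observation for the agent."""
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            cur = conn.execute(TOOLS[name], args)
            if cur.description is None:  # write statement: report affected rows
                if cur.rowcount == 0:
                    return {"error": "no rows matched the update condition"}
                return {"ok": True, "rows_affected": cur.rowcount}
            rows = cur.fetchall()
            if not rows:
                return {"error": "no matching rows"}
            return {"ok": True, "result": rows[0][0]}
    except sqlite3.Error as exc:  # surface SQL errors as observations, not crashes
        return {"error": str(exc)}


conn = make_db()
print(call_tool(conn, "get_order_status", {"order_id": 1042}))
print(call_tool(conn, "cancel_order", {"order_id": 1042}))
print(call_tool(conn, "get_order_status", {"order_id": 1042}))  # state persisted
print(call_tool(conn, "cancel_order", {"order_id": 1043}))      # shipped: not cancellable
```

Cancelling order 1042 changes the status that a later `get_order_status` call returns. Trying to cancel the already-shipped order 1043 yields an error observation instead of silently succeeding. The execution-grounded pipeline depends on this kind of verifiable, stateful feedback. The sketch binds parameters (`:order_id`) rather than formatting strings, so model-generated arguments are never interpreted as SQL.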

Dataset Properties and Domain Coverage

The properties of the resulting high-density dataset are summarized in the following figures:

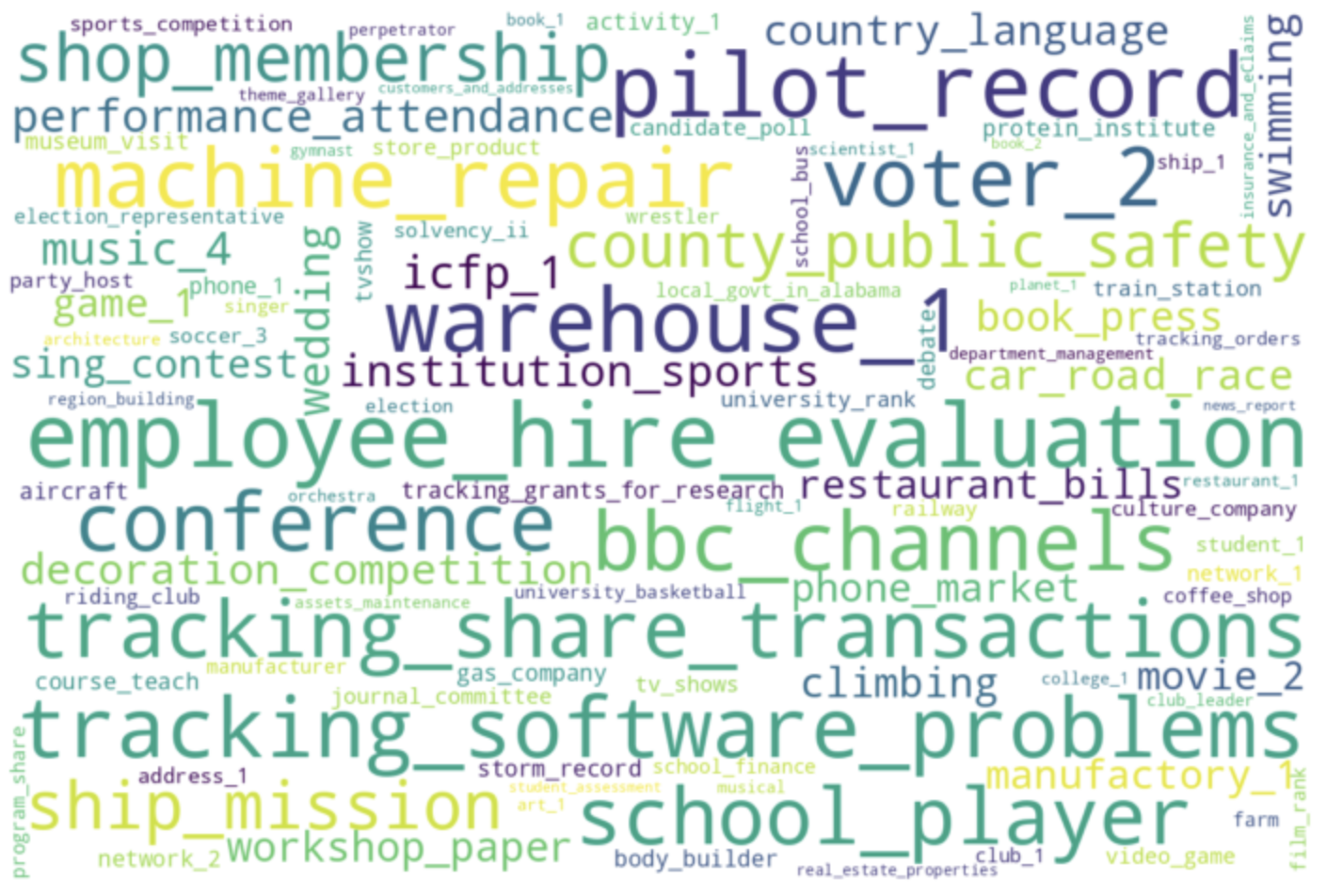

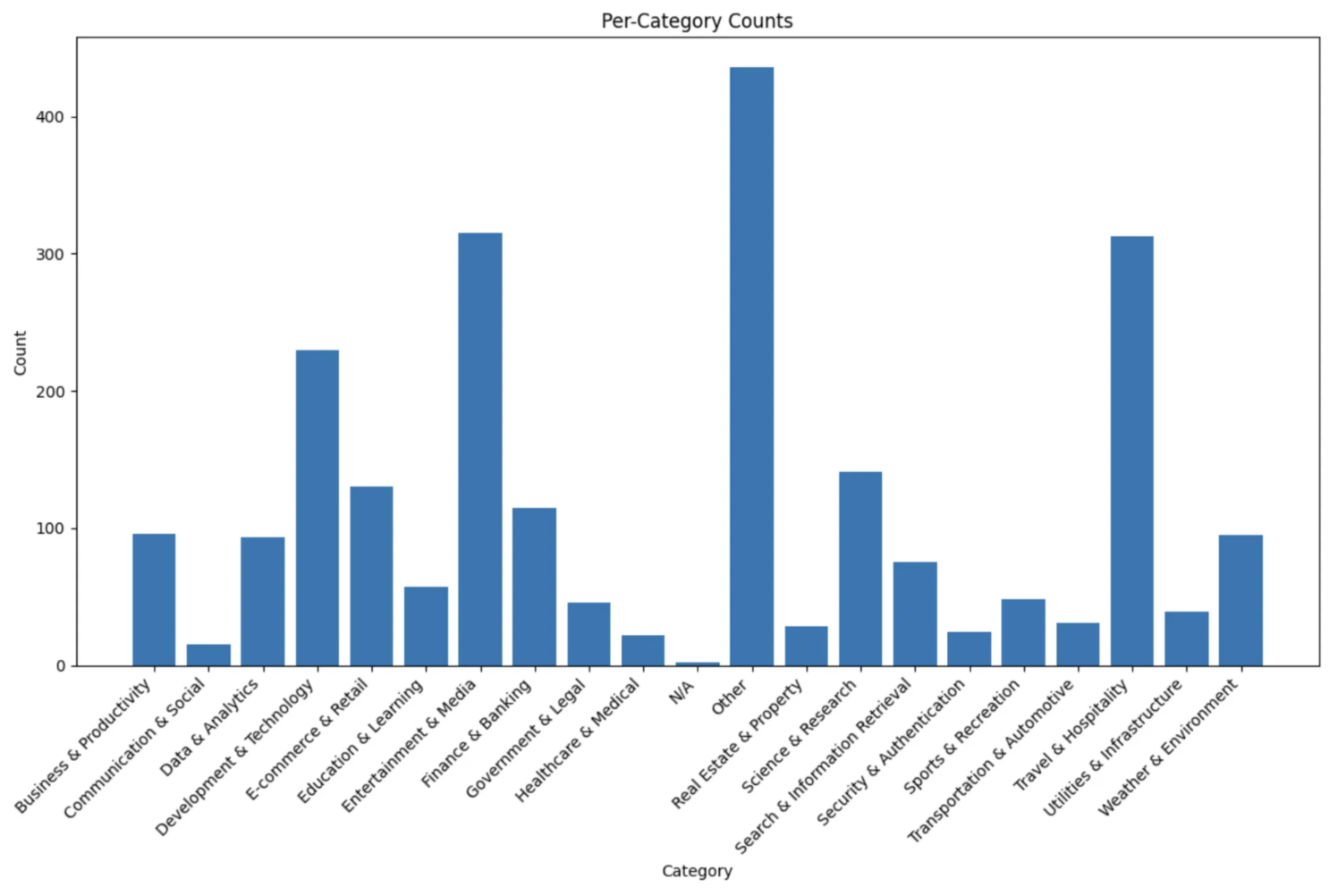

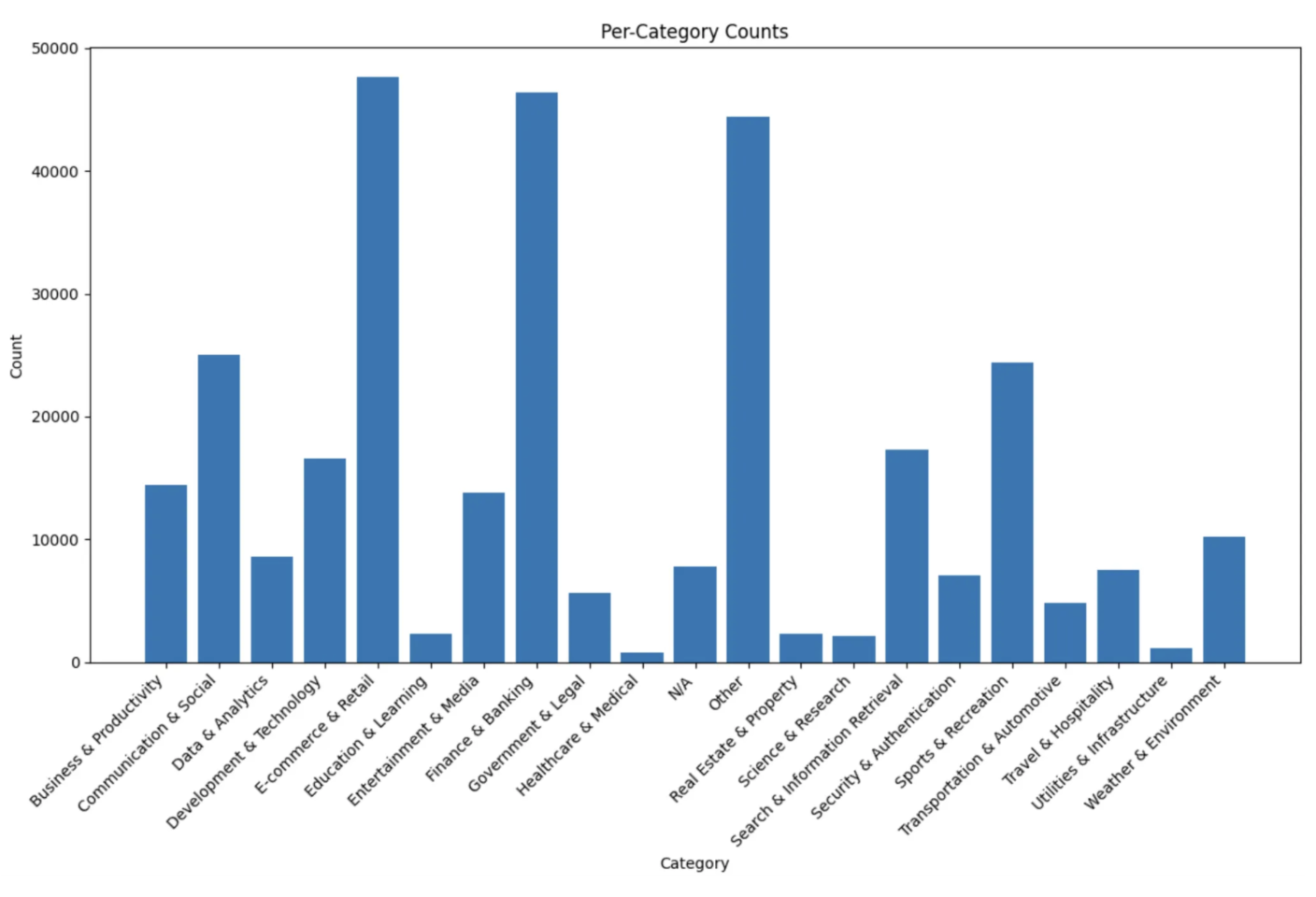

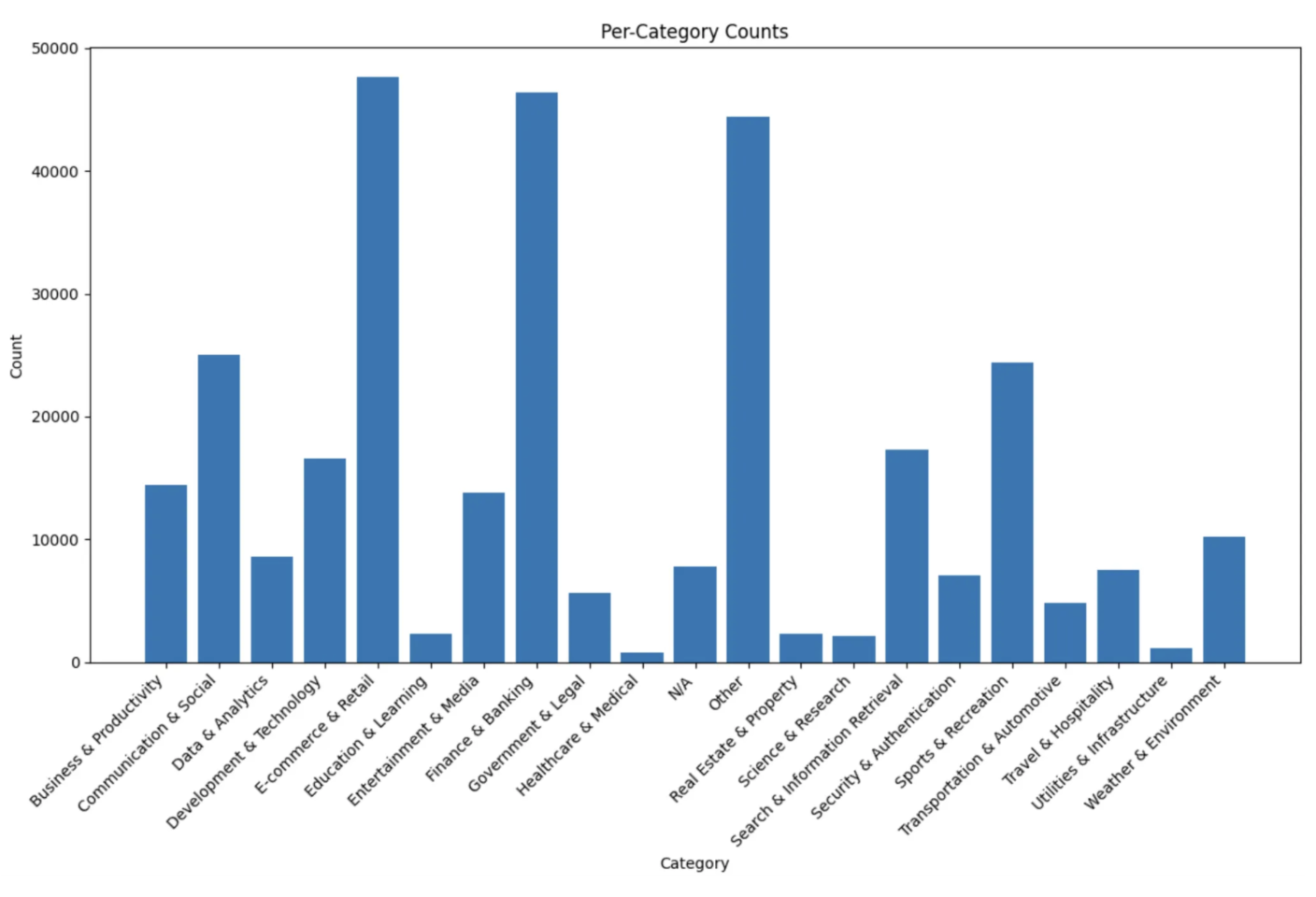

Figure 6: (Top) Distribution of tool categories in BFCL. (Bottom) Category counts for Nemotron Post-training, highlighting scale and density.

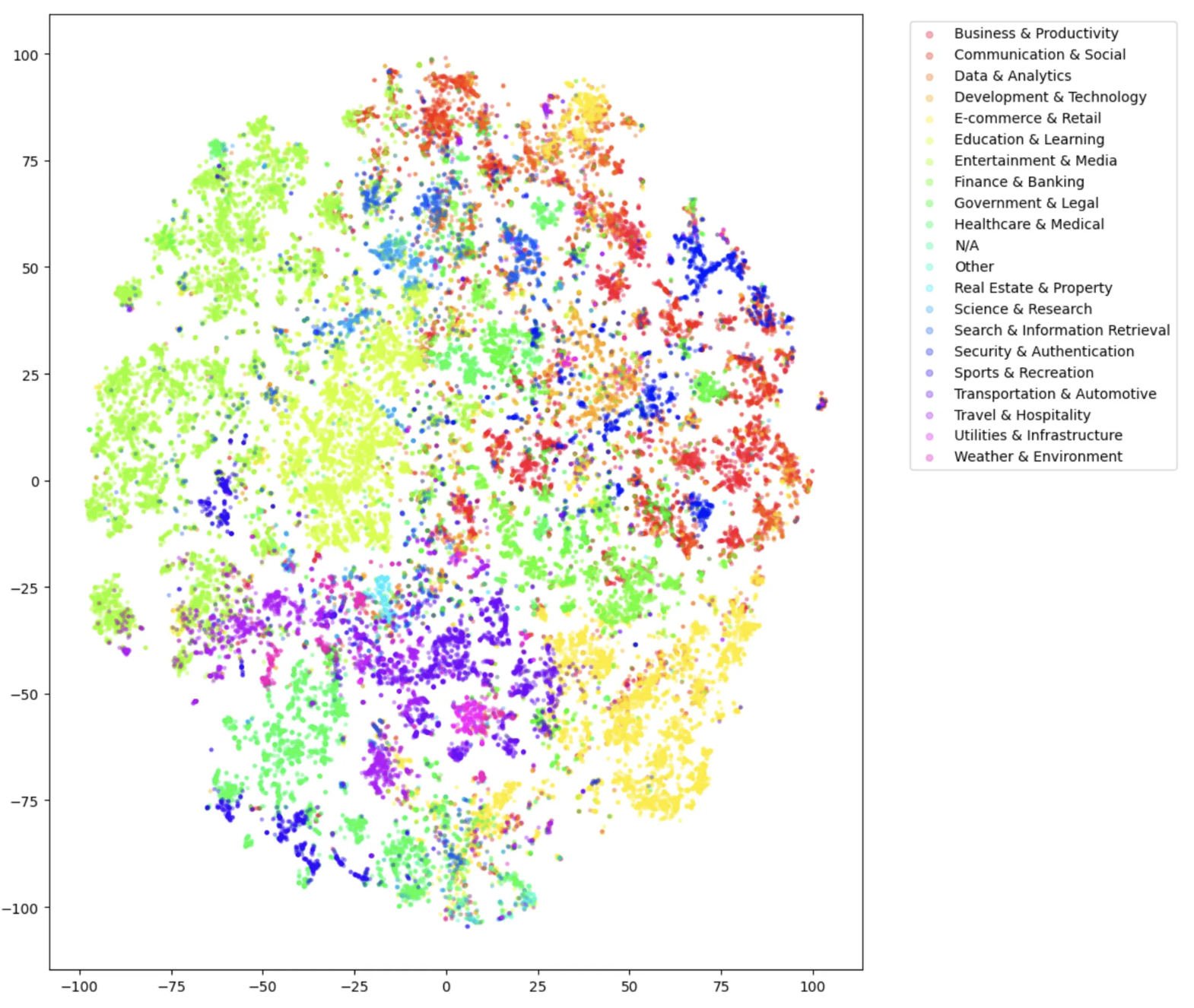

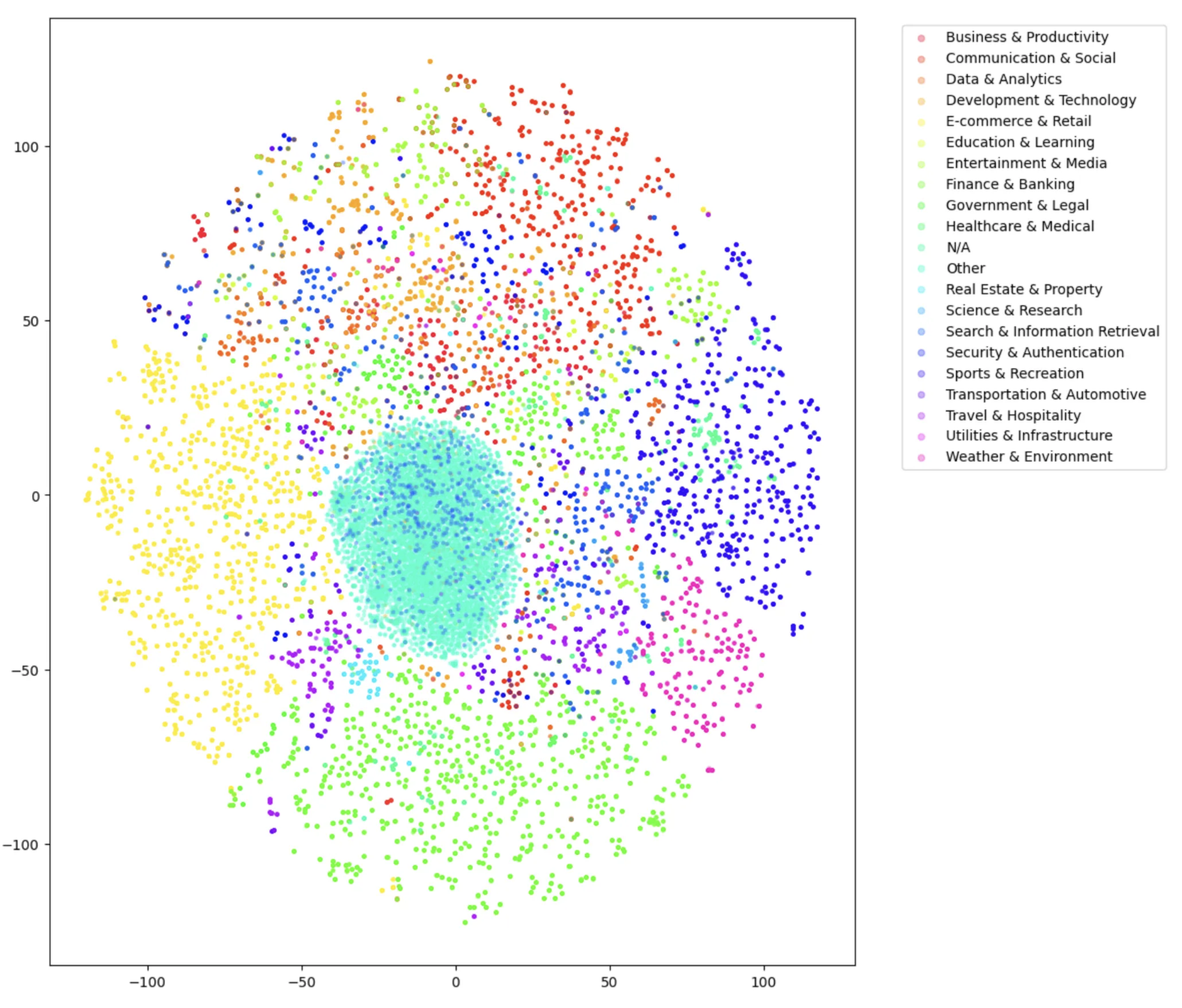

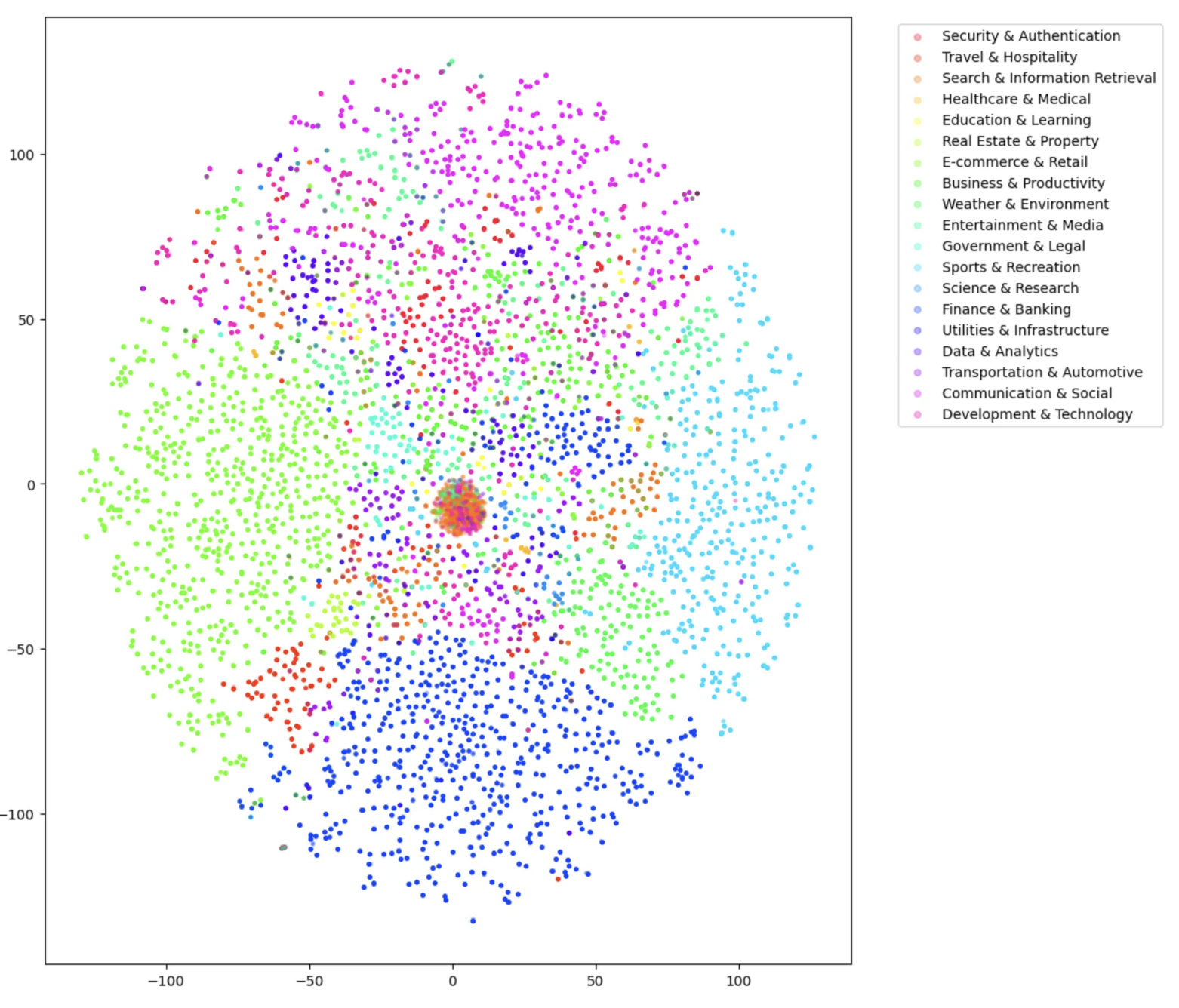

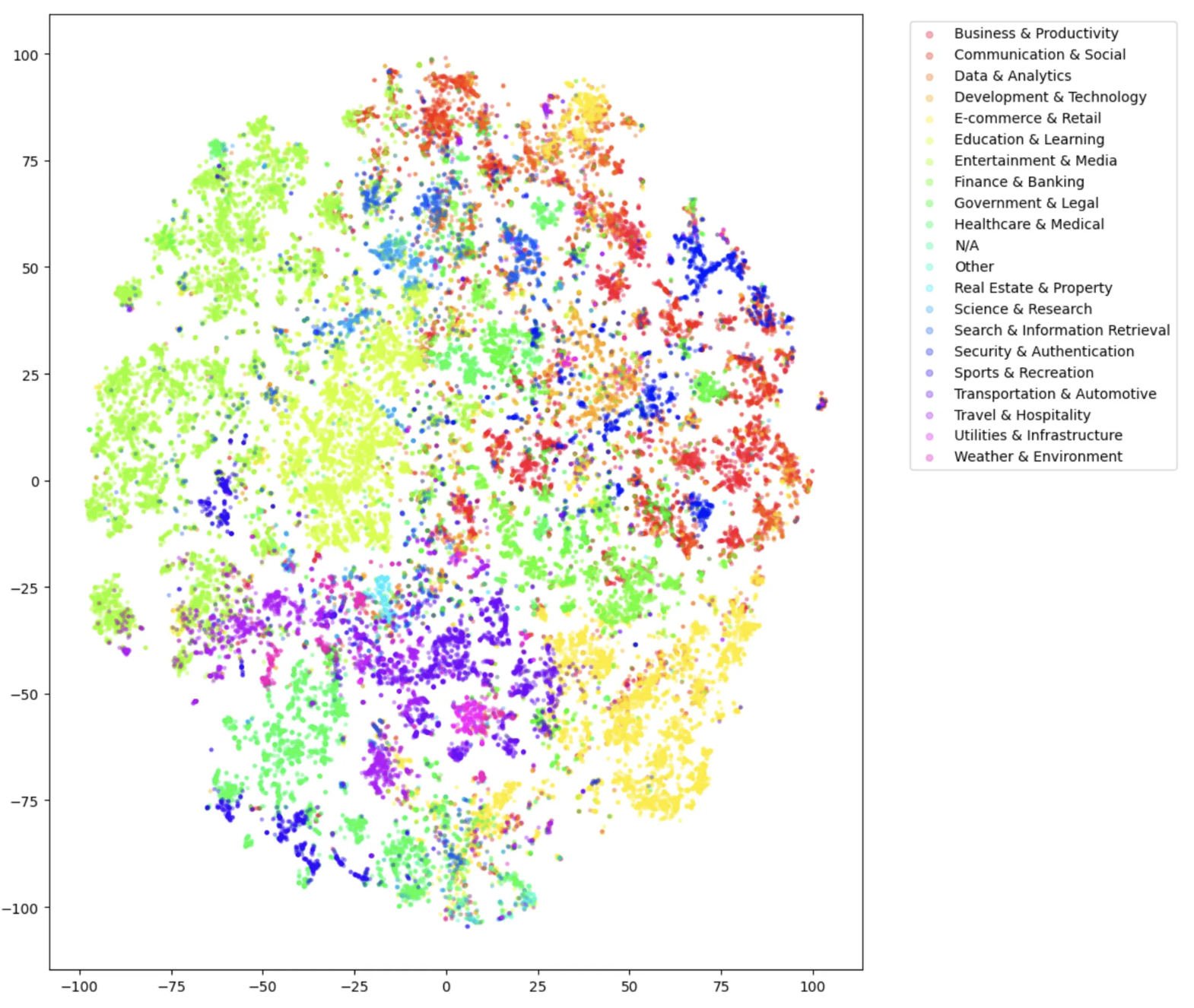

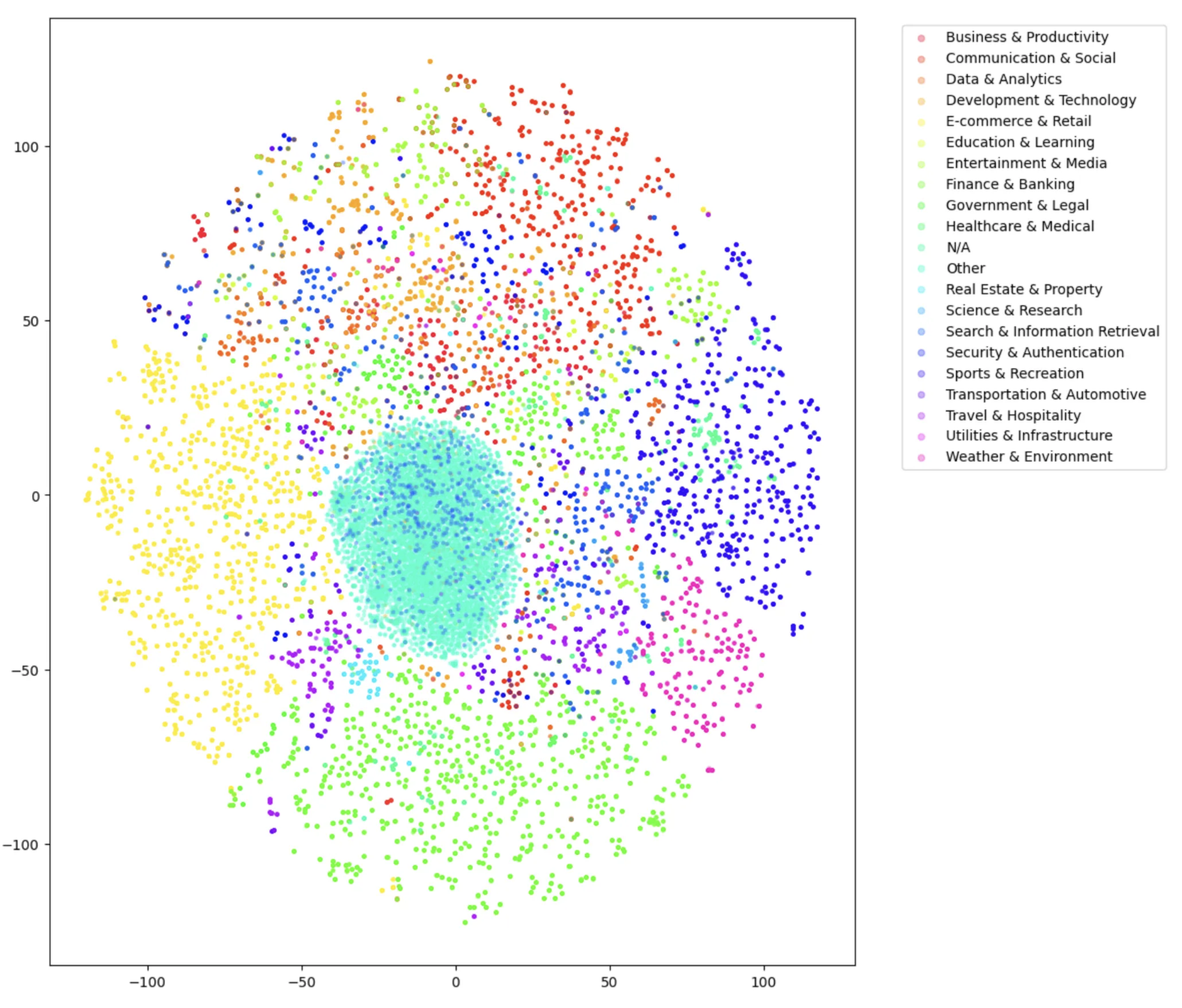

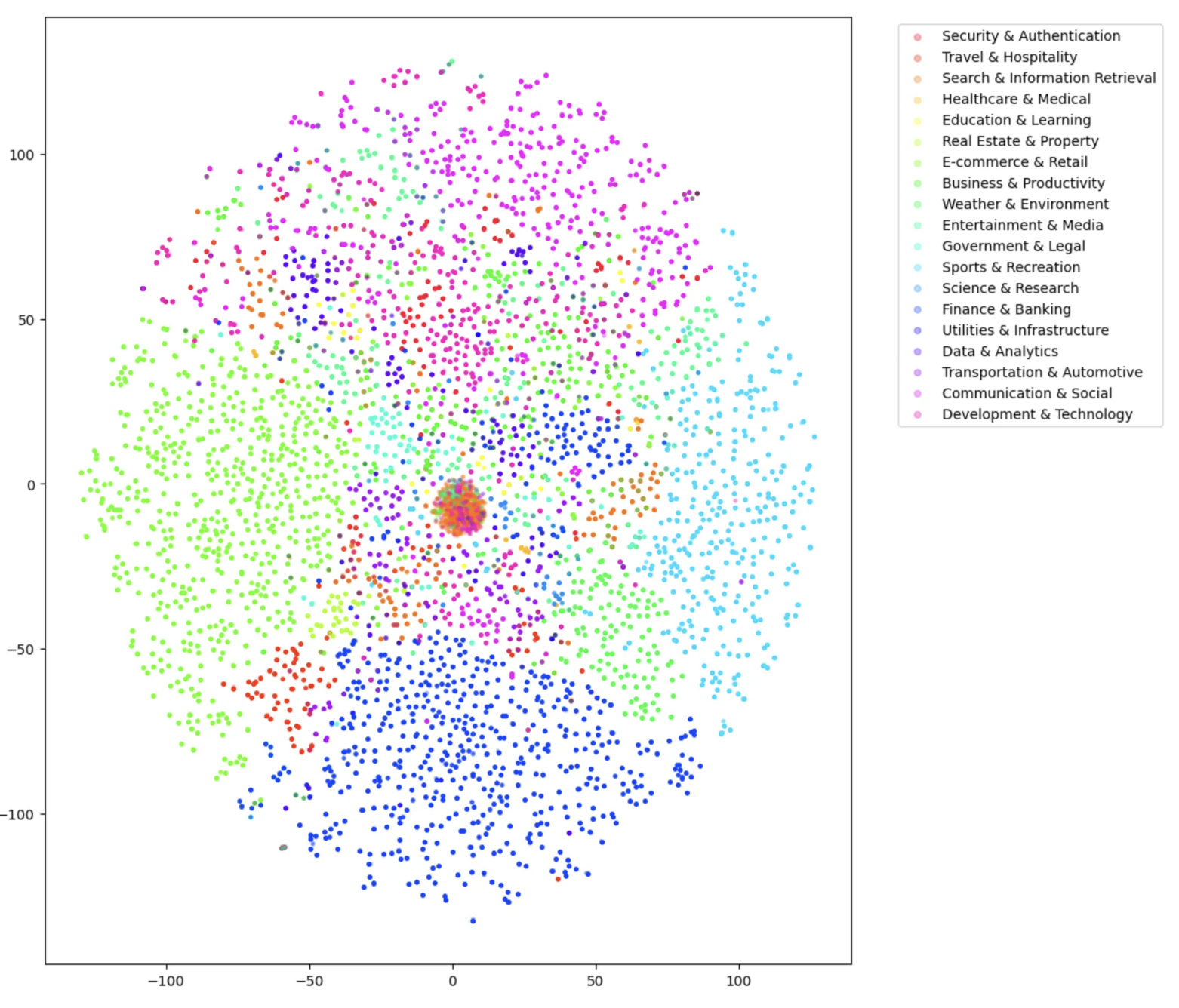

Figure 7: Semantic domain embedding projections for BFCL and Nemotron, visualizing spread and underlying domain sparsity.

Experimental Evaluation

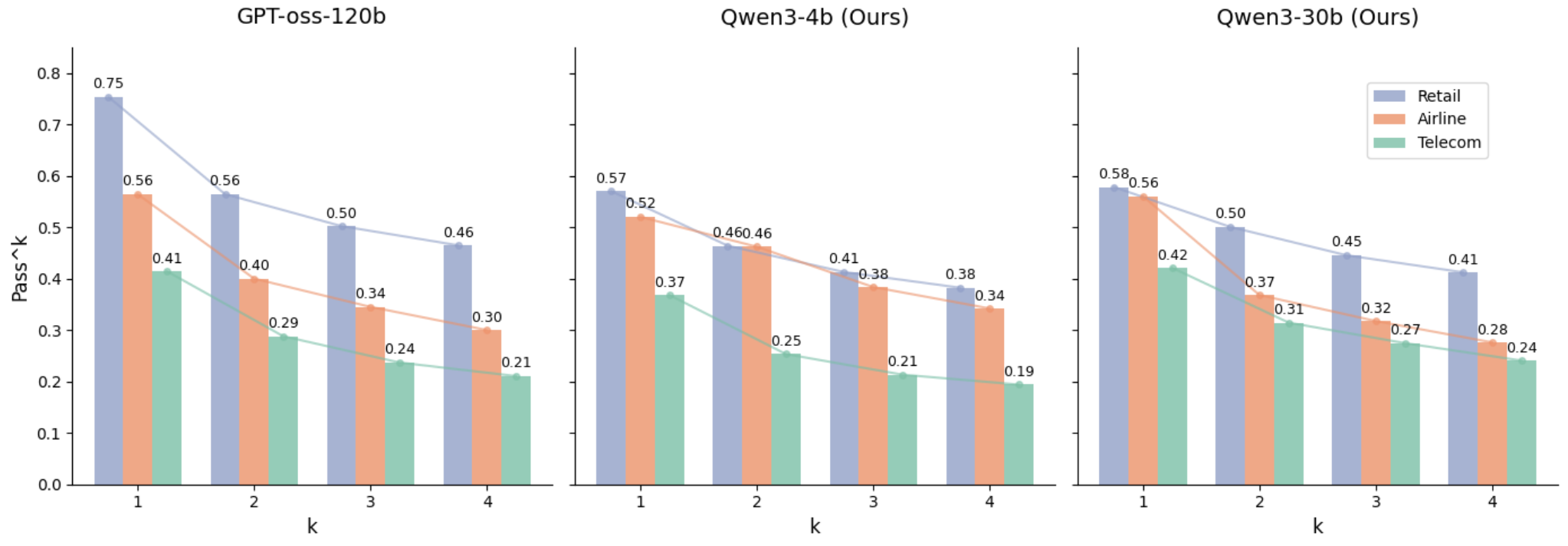

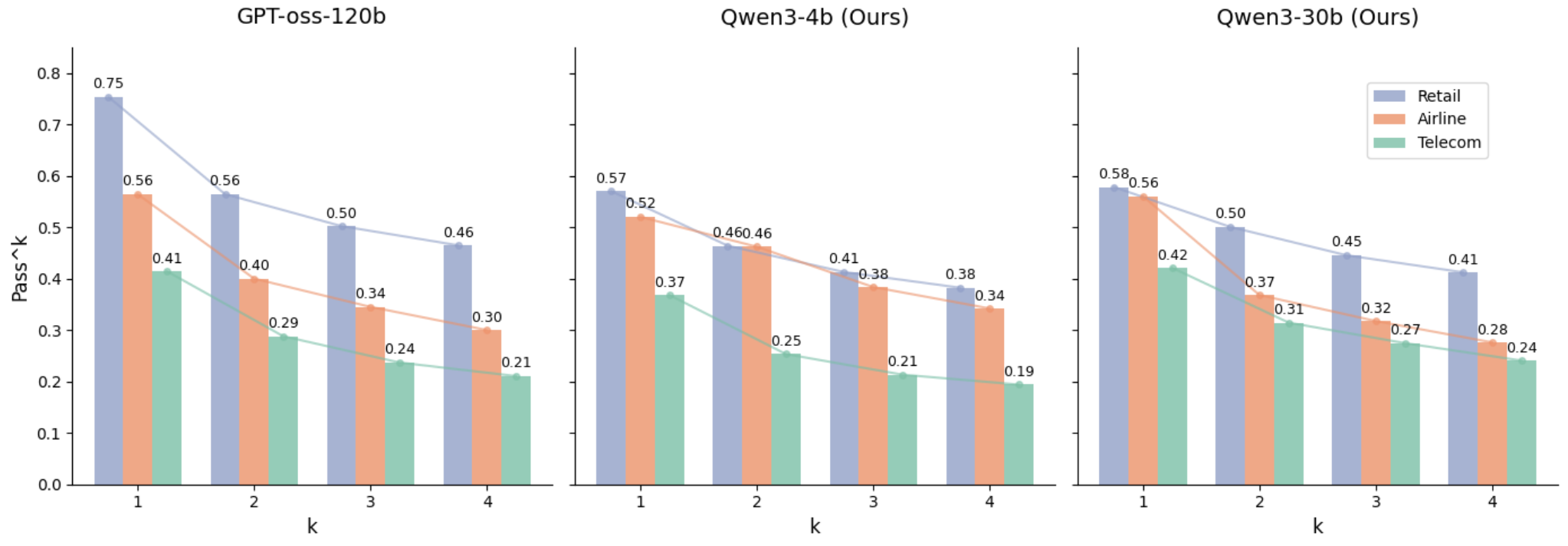

Fine-tuning leading open-source agentic models (Qwen3-4B, Qwen3-30B, GPT-OSS-120b) on the proposed dataset consistently improves performance on multi-turn benchmarks (BFCL, τ²-bench). Notably:

- Multi-Turn Coherence: Models trained on user-oriented data sustain goal awareness, tool-calling strategies, and session persistence across turns, as evidenced by higher scores in complex domains such as Telecom.

- Execution Grounding: SQL-driven tool execution further augments reliability; models exposed to executable context maintain stateful interactions and error correction capabilities.

- Consistency Analysis: The pass^k metric, which counts a task as solved only if it succeeds in all k repeated trials, confirms sustained, repeatable tool-use success across dialogue replicates, indicating reliability beyond isolated, one-off task completion.

Figure 8: pass^k performance for GPT-OSS-120b, Qwen3-4B, and Qwen3-30B across domains as k increases, showing how well each model sustains consistent success.
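This sketch assumes the consistency metric is the pass^k introduced with τ-bench: the probability that all k independent trials of a task succeed, averaged over tasks. Given n ≥ k recorded trials for a task, c of which succeed, the per-task estimate is C(c, k) / C(n, k). The outcomes below are toy data, not results from the paper.

```python
from math import comb


def pass_hat_k(results: list[list[bool]], k: int) -> float:
    """Estimate pass^k: the chance that k independent trials of a task ALL succeed,
    averaged over tasks. Each results[i] lists n >= k trial outcomes for task i, and
    comb(c, k) / comb(n, k) is the unbiased per-task estimate given c successes."""
    if k < 1:
        raise ValueError("k must be at least 1")
    total = 0.0
    for trials in results:
        n, c = len(trials), sum(trials)
        if n < k:
            raise ValueError(f"need at least k={k} trials per task, got {n}")
        total += comb(c, k) / comb(n, k)  # comb(c, k) is 0 when c < k
    return total / len(results)


# Toy outcomes for three tasks, four trials each (not the paper's data).
results = [
    [True, True, True, True],
    [True, True, True, False],
    [True, False, False, False],
]
for k in (1, 2, 4):
    print(k, round(pass_hat_k(results, k), 3))
```

For these toy outcomes the estimate falls as k grows:

| k | pass^k | Note |
|---|---|---|
| 1 | 0.667 | ordinary average success rate |
| 2 | 0.5 | |
| 4 | 0.333 | |

pass^k can never increase with k. In plots like Figure 8, a flatter curve therefore indicates a more consistent model.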

Practical and Theoretical Implications

The procedural shift toward user-oriented, multi-turn dialogue generation provides critical insights and infrastructure for future agentic LLM development:

- Practical Impact: The framework produces verifiable samples for fine-tuning agentic models in dynamic, real-world problem solving, substantially enhancing robustness to incremental, noisy user requests. SQL-backed execution supports integration with enterprise-scale data platforms.

- Theoretical Significance: By decoupling latent goal setting from user interaction, the paradigm enables explicit modeling of user intent, feedback loops, and error recovery. This is essential for scaling decision-making and task decomposition in environments with mutable state and imperfect information.

- Scalability and Cost Tradeoff: While interaction realism incurs higher computational expense and slower throughput, the resulting trajectory density and conversational fidelity justify the increased resource allocation, especially for domains requiring high state persistence and fault tolerance.

Future Directions

Further generalization across cross-domain environments, enhanced error-handling mechanisms, and improved state recovery strategies remain open areas. The SQL-based executable pipeline hints at scalable agentic training for heterogeneous environments, though brittleness under partial database views and persistent state mutation requires robust mitigation.

Conclusion

This paper introduces a scalable, modular framework for user-oriented multi-turn dialogue generation grounded in realistic tool use. Through plug-and-play design and execution-backed simulation, the pipeline achieves substantial advances in multi-turn coherence, robustness, and verifiability. Experimental results confirm consistent gains in agentic benchmarks, establishing new standards for interactive data generation in the agentic LLM research community (2601.08225).