Bridging Language Gaps: Advances in Cross-Lingual Information Retrieval with Multilingual LLMs

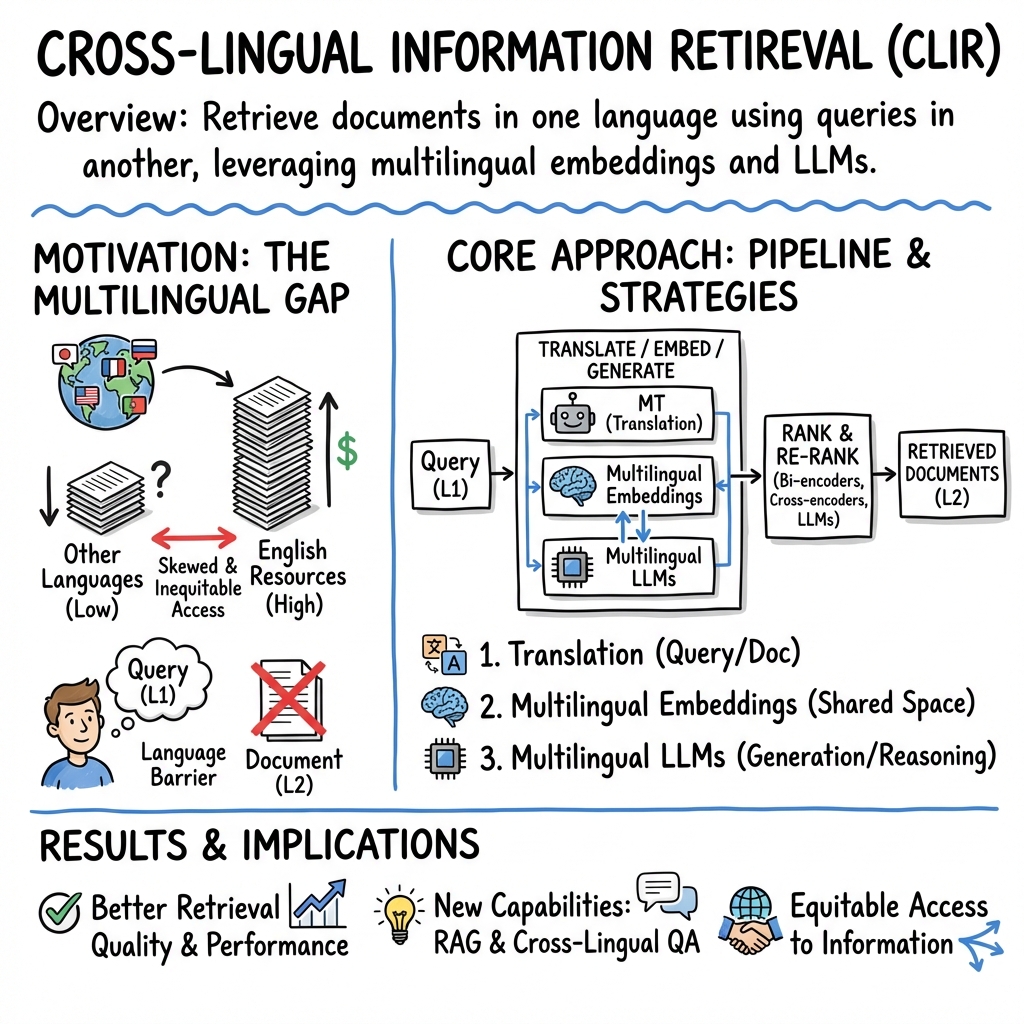

Abstract: Cross-lingual information retrieval (CLIR) addresses the challenge of retrieving relevant documents written in languages different from that of the original query. Research in this area has typically framed the task as monolingual retrieval augmented by translation, treating retrieval methods and cross-lingual capabilities in isolation. Both monolingual and cross-lingual retrieval usually follow a pipeline of query expansion, ranking, re-ranking and, increasingly, question answering. Recent advances, however, have shifted from translation-based methods toward embedding-based approaches and leverage multilingual LLMs, for which aligning representations across languages remains a central challenge. The emergence of cross-lingual embeddings and multilingual LLMs has introduced a new paradigm, offering improved retrieval performance and enabling answer generation. This survey provides a comprehensive overview of developments from early translation-based methods to state-of-the-art embedding-driven and generative techniques. It presents a structured account of core CLIR components, evaluation practices, and available resources. Persistent challenges such as data imbalance and linguistic variation are identified, while promising directions are suggested for advancing equitable and effective cross-lingual information retrieval. By situating CLIR within the broader landscape of information retrieval and multilingual language processing, this work not only reviews current capabilities but also outlines future directions for building retrieval systems that are robust, inclusive, and adaptable.

Explain it Like I'm 14

What is this paper about?

This paper is about helping people find useful information written in other languages. Imagine you search in English, but the best answer is in Spanish, Swahili, or Chinese. Cross‑lingual information retrieval (CLIR) is the technology that lets your English question find those non‑English documents. The paper is a survey: it reviews what the field has tried in the past, what works now, what still doesn’t, and where things are going—especially with new multilingual LLMs.

What questions does the paper try to answer?

The authors set out to:

- Explain the main parts of a search system and how they change when languages differ.

- Compare old translation-based methods with newer “embedding” and LLM-based methods.

- Summarize the datasets and tests used to measure CLIR performance.

- Point out ongoing problems (like unfairness to low‑resource languages) and suggest future directions.

How did the researchers study this?

This is a survey paper, which means the authors didn’t build just one new system. Instead, they:

- Read and organized many previous studies.

- Described the standard search pipeline in simple parts:

  - Query expansion: adding helpful words to short or unclear searches.

  - Ranking: quickly finding likely relevant documents.

  - Re‑ranking: doing a slower, smarter re-check of the top results.

  - Question answering: writing a clear answer from what was found, often using an LLM.

- Explained cross‑lingual strategies:

  - Translation-based: translate the query or documents and use normal search.

  - Embeddings: turn texts from different languages into points in the same “meaning space,” so similar ideas are close together even if the words are different.

  - Multilingual LLMs: models trained on many languages that can understand questions and produce answers across languages.

- Reviewed how systems are evaluated and what data is available.

Think of it like this: they’re mapping the landscape—what paths exist, which are fast, which are accurate, and which are fair.
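
To make the “meaning space” idea concrete, here is a minimal Python sketch. The three-dimensional vectors and the example texts are invented for illustration (real multilingual encoders produce vectors with hundreds of dimensions); only the cosine-similarity comparison reflects how embedding-based retrieval measures closeness.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, made up for this example (not model output).
QUERY_EN = [0.9, 0.1, 0.2]  # "how to treat a cold"
DOCS = {
    "es: cómo tratar un resfriado": [0.85, 0.15, 0.25],
    "en: best pizza recipes": [0.1, 0.9, 0.3],
}


def rank_by_similarity(query_vec, docs):
    """Return document names ordered from most to least similar to the query."""
    return sorted(docs, key=lambda name: cosine(query_vec, docs[name]), reverse=True)


if __name__ == "__main__":
    for name in rank_by_similarity(QUERY_EN, DOCS):
        print(f"{cosine(QUERY_EN, DOCS[name]):.3f}  {name}")
```

In this toy setup the Spanish document about colds lands closer to the English query than the English document about pizza, which is the behaviour a well-aligned cross-lingual embedding space is meant to provide.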

What did they find, and why does it matter?

Main takeaways:

- There’s a shift from “translate first, search later” to “compare meanings directly.” Old systems relied on translation and exact word matching. Newer systems use embeddings and multilingual LLMs to compare ideas across languages, often working better and enabling direct answer generation.

- The search pipeline still helps. Even with fancy models, breaking the job into query expansion, ranking, re‑ranking, and answering gives better results and speed.

- Multilingual embeddings and LLMs are powerful but not perfect. Aligning different languages into the same meaning space is hard, especially for languages with little training data. This can cause mistakes or “semantic drift” (the meaning shifts slightly during translation or alignment).

- Data imbalance is a big problem. Most web text and model training data is in a few dominant languages (especially English). That means many languages remain under‑served, which makes search less fair and less useful for those speakers.

- Better evaluation is needed. It’s tough to build fair, high‑quality test sets across many languages. Without good tests, we can’t tell if systems are truly improving for everyone.

- Hybrid methods help. Combining old and new techniques (like mixing keyword search with dense embeddings or using LLMs to clean up results) often works best in practice.

- Retrieval‑augmented generation (RAG) is promising. Instead of having an LLM “guess,” the system fetches real documents and asks the LLM to write an answer based on them—like an open‑book test. This reduces hallucinations and helps produce answers in the user’s language.

Why it matters:

- People should be able to access information no matter what language they speak. Strong CLIR helps bridge global knowledge gaps, supports education and research, and makes technology more inclusive.

What does this mean for the future?

- Build fairer systems: Put more effort into low‑resource languages, better datasets, and evaluation methods that don’t favor English.

- Go beyond translation: Keep improving cross‑lingual embeddings and alignment so systems understand meaning across languages more reliably.

- Use the best of both worlds: Combine sparse (keyword), dense (embedding), and LLM-based methods for speed, accuracy, and explainability.

- Safer, clearer answers: Use RAG and careful prompting to reduce hallucinations, and present sources so users can trust the results.

- Domain adaptation: Tune systems for specialized fields (like medicine or law) in multiple languages, not just in English.

In short, the field is moving from “translate and hope” to “understand and answer.” If done well, future systems will let anyone ask a question in their language and find the best information—wherever it lives on the web and in whatever language it’s written.

Knowledge Gaps

Knowledge Gaps, Limitations, and Open Questions

The following list distills what remains missing, uncertain, or unexplored based on the paper’s scope and claims, with concrete targets for future work:

- Lack of systematic, large-scale comparisons between translation-based, embedding-based, and generative CLIR pipelines across many language pairs, scripts, and domains with matched compute and latency budgets.

- Insufficient methods for aligning multilingual embeddings without parallel data, especially for typologically diverse and low-resource languages (rich morphology, non-Latin scripts, code-switching).

- Open question on how to robustly mitigate hubness and anisotropy in multilingual embedding spaces to prevent cross-lingual nearest-neighbor failures.

- Underexplored joint approaches that couple translation and query expansion end-to-end (vs. sequential), including when and how to interleave them for maximum CLIR gains.

- Limited evidence on the effectiveness and failure modes of LLM-based query expansion in CLIR (hallucination control, prompt sensitivity, cross-script transliteration variants, proper names).

- No clear best practices for multilingual pseudo-relevance feedback (PRF) and relevance feedback transfer when judgments exist only in a pivot language (label noise, semantic drift).

- Sparse guidance on language-aware document segmentation for dense retrieval (optimal passage lengths, tokenization differences, sentence boundary errors per language).

- Inadequate techniques for language-conditioned score calibration and fusion (e.g., BM25 + dense + cross-encoder + LLM) to prevent language-specific over/under-scoring.

- Limited evaluation of late-interaction and multi-vector retrievers in CLIR at web scale (index size, compression, approximate nearest neighbor indexing, cross-language latency).

- Unresolved trade-offs between cross-encoders and LLM-based re-rankers across languages: cost, latency, stability under prompt permutations, and domain shift.

- Lack of robust, reproducible protocols for zero-shot/few-shot LLM re-ranking in CLIR (seed sensitivity, instruction formats, listwise vs. pairwise prompting across languages).

- Minimal study of fairness and equity metrics tailored to CLIR (e.g., ensuring comparable nDCG@k across languages with different resource levels and content distributions).

- Absence of standardized multilingual RAG evaluation suites measuring answer faithfulness, grounding, and citation quality when queries and sources are in different languages.

- Limited methods to detect and prevent cross-lingual hallucinations in QA (answer fabrication, wrong-language citations, mistranslated entities).

- Open question on how to leverage multilingual entity linking and KB alignment to improve cross-lingual retrieval and QA (disambiguation across scripts and transliterations).

- Underexplored continual and lifelong CLIR training (avoiding catastrophic forgetting across languages; handling temporal drift and newly emergent dialects).

- Insufficient research on interactive, conversational CLIR tailored to multilingual settings (intent clarification, turn-by-turn disambiguation, cross-language preference modeling).

- Lack of user-centric studies on presentation strategies (show source-language passages vs. translated snippets vs. summaries) and their impact on trust and task success.

- Evaluation gaps for domains with high precision needs (legal, medical): benchmarks with bilingual gold rationales, error taxonomies, and explainability requirements.

- Limited explainability methods that are language-aware (token-level rationales across scripts, alignment visualizations that make cross-lingual matches auditable).

- Insufficient analysis of domain adaptation techniques for CLIR (continued pretraining, instruction tuning, and contrastive alignment) and their cross-language generalization.

- Open problem of robust CLIR under noisy, user-generated content (code-switching, mixed scripts, transliteration, orthographic variation).

- Lack of standardized annotation protocols and quality control for multilingual relevance judgments (inter-annotator agreement across cultures, translation effects on guidelines).

- Underexplored privacy, safety, and adversarial robustness in multilingual RAG (prompt injection in non-English sources, toxic content transfer, data residency/legal constraints).

- Limited energy and cost accounting for multilingual re-ranking and RAG at scale; need for cost-aware, green CLIR optimizations and reporting standards.

- Incomplete coverage of hybrid sparse+dense CLIR with unified indexing that is explicitly language-aware (learned language tags, language-adaptive compression).

- Little evidence on effectiveness of calibration/normalization to mitigate positional and language biases in re-ranking for multilingual settings at scale.

- Unclear best practices for leveraging behavioral/structural features (clicks, freshness, authority) in multilingual re-ranking without reinforcing language bias.

- Gaps in evaluating and handling code-mixed queries and documents, including metrics, datasets, and model adaptations for mixed-language contexts.

- Limited study of multimodal cross-lingual retrieval (text-to-image/video across languages) and how multilingual text models interact with visual encoders for CLIR.

- Risk of benchmark contamination from LLM pretraining in multilingual settings; need for leakage audits and clean-room evaluation for CLIR and QA benchmarks.

- Open question on optimal tokenization and subword vocabularies for highly diverse scripts and morphologies to improve retrieval consistency across languages.

- Lack of principled strategies for selecting pivot languages (or multiple pivots) to maximize CLIR performance under strict low-resource constraints.

- Few methodologies for reliable cross-lingual negative mining and hard negative construction without introducing language or cultural biases.

- Missing standardized protocols for measuring end-to-end utility (task success, time-to-answer, user satisfaction) in multilingual search and QA beyond nDCG/MAP.
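
Several of the gaps above refer to comparing nDCG@k across languages. As a rough illustration of what such a comparison could look like, the sketch below computes nDCG@k per language and reports the largest gap. The function names, the data layout, and the toy relevance labels are assumptions made for this example; it uses linear gain and computes the ideal ranking from the retrieved list only, which assumes every relevant document appears in that list.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain with linear gain and log2 rank discount."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances, k):
    """nDCG@k; the ideal ordering is taken from the same list (an assumption)."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def per_language_ndcg(runs, k):
    """runs maps a language code to a list of per-query relevance lists."""
    return {
        lang: sum(ndcg_at_k(rels, k) for rels in queries) / len(queries)
        for lang, queries in runs.items()
    }


def max_gap(scores):
    """Largest difference in mean nDCG@k between any two languages."""
    return max(scores.values()) - min(scores.values())


if __name__ == "__main__":
    # Hypothetical graded relevance labels in ranked order, per query.
    toy_runs = {
        "en": [[1, 0], [1, 1]],
        "sw": [[0, 1], [0, 0]],
    }
    scores = per_language_ndcg(toy_runs, k=2)
    print(scores, "gap:", round(max_gap(scores), 3))
```

A single gap number is only one possible equity summary; a real protocol would also need to account for differences in collection size and judgment depth between languages.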

Practical Applications

Immediate Applications

Below are deployable use cases that can be built today using the survey’s pipeline (query expansion → ranking → re‑ranking → QA), embedding-based CLIR, hybrid sparse+dense retrieval, LLM-driven query reformulation, and multilingual RAG.

- Multilingual enterprise search and knowledge discovery (software; enterprise)

  - What: Let employees query in their preferred language and retrieve documents in any language, with answer summaries generated in the query language.

  - How (workflow): language detection → query expansion (LLM prompting/PRF) → hybrid BM25+multilingual dense (mSBERT/LaBSE, ColBERT/ColBERTv2) + Reciprocal Rank Fusion → cross‑encoder or LLM re‑ranking → RAG answer with citations.

  - Tools: Elasticsearch/OpenSearch+vector plugin, Vespa, Weaviate/Milvus/FAISS/Pinecone, XLM‑R/mSBERT/LaBSE, SPLADE/SPLADE‑X, ColBERT, LangChain/LlamaIndex.

  - Dependencies: adequate multilingual coverage for target languages; indexing pipeline (segmentation, metadata, language IDs); latency/cost budget for re‑rankers and RAG; data governance and access controls.

- Cross‑lingual customer support and self‑service portals (software; customer service)

  - What: Users submit questions in any language; the system retrieves and summarizes FAQs, tickets, and manuals written in other languages.

  - How: LLM query rewriting (keyword and concept expansion), doc expansion (Doc2Query/HyDE), dense retrieval with language‑aware metadata, answer generation with grounding and references.

  - Dependencies: privacy/PII redaction; multilingual terminology alignment for product names; guardrails for hallucination; click/feedback loops for PRF.

- E‑commerce product discovery across languages (retail)

  - What: Cross‑lingual product search and recommendation; user queries mapped to multilingual catalog content.

  - How: hybrid sparse+dense retrieval (BM25+SPLADE‑X or LaBSE), LLM keyword expansion (synonyms/attributes), behavioral signals (CTR, purchases) in learning‑to‑rank, Maximal Marginal Relevance for diversity.

  - Dependencies: normalized product taxonomy and attributes; bias mitigation for dominant‑language products; latency SLAs for high-traffic search.

- Academic and clinical literature discovery (academia; healthcare)

  - What: Cross‑lingual retrieval and summarization of research articles and guidelines; question answering over multilingual corpora.

  - How: dense retrieval (mDPR/mSBERT) and late‑interaction (ColBERT) for ranking, cross‑encoder re‑ranking (XLM‑R/mT5), RAG with citation constraints; domain adaptation via in‑domain continued pre‑training or instruction tuning.

  - Dependencies: licenses/access to content; domain adaptation for medical/legal vocab; human review in high‑stakes decisions; factuality checks.

- Legal e‑discovery and cross‑border compliance scanning (legal; finance)

  - What: Search and summarize contracts, filings, and case law across languages; monitor regulatory changes internationally.

  - How: language‑aware indexing → dense retrieval + feature‑enhanced ranking (freshness, authority) → LLM/cross‑encoder re‑ranking → answer extraction/summary with citations.

  - Dependencies: auditability/explainability (extractive rationales); chain‑of‑custody; jurisdictional constraints on data transfer; bias/score normalization across languages.

- Cross‑border risk, ESG, and adverse‑media monitoring (finance)

  - What: Detect signals in multilingual news, reports, and social content; produce English summaries for analysts.

  - How: multilingual dense retrieval + QA‑oriented re‑ranking (answerability scoring), LLM summarization with source attribution, novelty promotion (MMR) to avoid redundancy.

  - Dependencies: deduplication; stance and semantic drift control; clear escalation workflows for human verification.

- Government/citizen information access and public services (public sector; policy)

  - What: Citizens query in their language to retrieve/understand policies and forms in other languages; cross‑lingual QA over public portals.

  - How: hybrid retrieval with language filters, LLM‑assisted conversational search to clarify intent, RAG with citations and reading-level adaptation.

  - Dependencies: accessibility and inclusivity coverage for under‑served languages; privacy and security; content freshness.

- Journalism and OSINT fact‑checking (media; civil society)

  - What: Rapidly search and corroborate claims across multilingual sources; generate grounded summaries of evidence.

  - How: late‑interaction/dense retrieval, listwise LLM re‑ranking, cross‑lingual QA; explicit provenance tracking.

  - Dependencies: dataset bias across languages; misinformation robustness; human‑in‑the‑loop verification.

- Humanitarian and crisis information access (NGOs)

  - What: Retrieve guidance and situational reports across languages; summarize into field‑team language.

  - How: compact hybrid retrieval with offline caching; language ID and transliteration; QA‑oriented re‑ranking optimizing answerability.

  - Dependencies: low‑resource language support; intermittent connectivity; safety and misinformation controls.

- Developer productivity across multilingual technical docs (software engineering)

  - What: Query in native language to retrieve docs/issues in other languages; summarize into preferred language.

  - How: dense retrieval with terminology preservation; LLM query expansion for APIs and error messages; RAG with code-aware parsers.

  - Dependencies: handling code tokens and mixed scripts; domain adaptation to technical jargon.

- Library and education portals (education)

  - What: Students/teachers search multilingual learning materials; personalized reading lists with cross‑lingual coverage.

  - How: hybrid retrieval + listwise re‑ranking, conversational search for intent clarification, RAG summarization at target reading level.

  - Dependencies: rights/licensing; age‑appropriate filtering; fairness across language availability.

- Practical pipeline improvements for existing search products (cross‑sector)

  - What: Drop‑in gains via LLM‑based query expansion (HyDE/Query2Doc), Reciprocal Rank Fusion of sparse+dense indices, zero/few-shot LLM re‑ranking for top‑k.

  - How: A/B tested cascades that gate expensive re‑rankers; fairness and score normalization across languages; logging for PRF.

  - Dependencies: inference cost control; prompt stability; monitoring for cross‑lingual scoring bias.
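
Reciprocal Rank Fusion (RRF), which several of the workflows above use to merge sparse and dense result lists, can be sketched in a few lines of Python. This is a minimal illustration, not any particular search engine's implementation: the document IDs are invented, and `k=60` is the smoothing constant commonly used following the original RRF paper.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranked list.

    Each document scores sum(1 / (k + rank)) over the lists that contain it,
    with ranks starting at 1. Ties are broken by document ID for determinism.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


if __name__ == "__main__":
    # Hypothetical outputs of a BM25 index and a multilingual dense index.
    bm25_hits = ["d1", "d2", "d3"]
    dense_hits = ["d3", "d1", "d4"]
    print(reciprocal_rank_fusion([bm25_hits, dense_hits]))
```

Because RRF looks only at rank positions, BM25 scores and cosine similarities never need to be put on a common scale, which is convenient when score distributions differ from one language to another.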

Long‑Term Applications

These rely on advances highlighted in the survey—better alignment for low‑resource languages, fairness-aware evaluation, more efficient LLM re‑ranking, explainability, and scalable architectures (late‑interaction, memory‑augmented, hierarchical).

- Equitable CLIR for low‑resource and morphologically rich languages (policy; academia; public sector)

  - What: High‑quality retrieval and QA for under‑represented languages to reduce digital inequity.

  - How: community‑sourced corpora, alignment without parallel data (contrastive learning), implicit expansion, transliteration handling.

  - Dependencies: new datasets/benchmarks, investment in data collection, bias/fairness evaluation standards, sustainable compute.

- Fully conversational cross‑lingual search with robust disambiguation and code‑switching (software; education)

  - What: Dialog systems that ask clarifying questions, adapt to code‑switching, and iteratively refine queries.

  - How: reinforcement learning with user feedback, multilingual preference optimization, conversational PRF, session‑aware memory.

  - Dependencies: scalable, low‑latency LLMs; safe prompts; consistent evaluation across languages and tasks.

- Cross‑lingual multimodal retrieval (speech, images, video) (media; robotics; education)

  - What: Query by text/audio in one language to retrieve/understand multimedia in another (e.g., video tutorials).

  - How: joint multilingual embeddings across modalities, ASR/MT for low‑resource speech, late‑interaction for long videos.

  - Dependencies: robust ASR/MT for many languages; copyright and consent; compute/storage for long-context indexing.

- Privacy‑preserving and federated cross‑lingual search (finance; healthcare; government)

  - What: Query across jurisdictions while data stays in‑country; secure cross‑lingual scoring.

  - How: federated retrieval and secure score fusion, on‑prem vector indices, homomorphic encryption/secure enclaves.

  - Dependencies: production‑grade federated protocols; latency budgets; regulatory acceptance.

- On‑device personal multilingual RAG assistants (daily life; productivity)

  - What: Private assistants that search personal notes/emails in mixed languages and summarize locally.

  - How: compressed multilingual encoders, sparse+dense hybrid indices on device, memory‑efficient late‑interaction.

  - Dependencies: model compression/distillation; battery/compute constraints; strong privacy guarantees.

- Explainable and auditable CLIR for high‑stakes domains (healthcare; legal; public policy)

  - What: Rankers that justify results with extractive rationales and calibrated scores across languages.

  - How: cross‑lingual XAI (e.g., SEAT-like attention stabilization), entailment‑based verification, listwise training aligned to nDCG/MAP with fairness constraints.

  - Dependencies: accepted explanation standards; human factors research; performance‑explainability trade‑off management.

- Compliance‑aware multilingual RAG with verification (finance; pharma; safety‑critical)

  - What: Answers that must cite, verify, and comply with jurisdiction/language-specific policies.

  - How: QA‑oriented re‑ranking optimized for answerability, verifier models (entailment/fact‑checking), policy‑as‑code applied to citations.

  - Dependencies: reliable verifiers across languages; robust citation grounding; policy encoding frameworks.

- Knowledge‑graph‑augmented cross‑lingual retrieval (software; research)

  - What: Entity- and relation-aware CLIR that resolves cross‑lingual entities and aliases to improve recall/precision.

  - How: align multilingual KGs with vector indices, entity linking during indexing and query time, hybrid KG+text rank fusion.

  - Dependencies: high‑coverage multilingual KGs; consistent entity disambiguation across scripts.

- Memory‑augmented and hierarchical retrieval at web scale (search; documentation)

  - What: Efficient retrieval over long documents/corpora with better passage selection and context management.

  - How: hierarchical passage/document retrieval, memory slots (EMAT/MoMA‑style), adaptive cascades for cost control.

  - Dependencies: storage/computation; complex MLOps; generalization across domains/languages.

- Adaptive, feedback‑driven, bias‑aware re‑ranking (all sectors)

  - What: RL‑based rankers that learn from interactions while controlling positional and language bias.

  - How: counterfactual logging, debiased click models, fairness‑constrained optimization, dynamic truncation for LLM re‑rankers.

  - Dependencies: sufficient interaction data; ethical oversight; robust online evaluation.

- Sector‑specific advanced deployments

  - Healthcare: bedside cross‑lingual QA integrated with EHRs and device manuals; Dependencies: clinical validation, safety/risk management, privacy.

  - Robotics: service robots retrieving multilingual maintenance knowledge; Dependencies: multimodal integration, robust ASR/MT in noisy environments.

  - Energy/Manufacturing: predictive maintenance retrieval across global logs/manuals; Dependencies: standardized nomenclature, domain adaptation.

- Policy and evaluation infrastructure for fair multilingual IR (policy; academia; standards bodies)

  - What: Benchmarks and metrics that reflect linguistic diversity and equitable performance.

  - How: balanced datasets (beyond dominant languages), cross‑lingual bias audits, standardized reporting and governance checklists.

  - Dependencies: cross‑institution collaboration, funding for annotation in low‑resource languages, open evaluation platforms.

These applications draw directly from the survey’s methods: LLM‑based query/document expansion (HyDE, Query2Doc), multilingual dense and late‑interaction retrieval (mSBERT/LaBSE/ColBERT), hybrid sparse+dense with RRF, cross‑encoder and LLM re‑ranking (pointwise/pairwise/listwise), QA‑oriented re‑ranking and RAG, fairness/bias mitigation, cost‑aware cascades, and explainability—adapted to the constraints of each sector and deployment setting.
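
Two of the use cases above (product discovery and adverse‑media monitoring) rely on Maximal Marginal Relevance (MMR) to promote diversity and novelty. The following is a minimal greedy MMR sketch in Python. The similarity values are invented for illustration, and the `doc_sim` callback and the `trade_off` parameter name are assumptions of this example rather than part of any library.

```python
def mmr_select(query_sim, doc_sim, k, trade_off=0.7):
    """Greedy Maximal Marginal Relevance selection.

    query_sim: dict mapping doc ID -> similarity to the query.
    doc_sim:   function (doc_a, doc_b) -> similarity between two documents.
    trade_off: weight on relevance; (1 - trade_off) weights redundancy.
    """
    selected = []
    candidates = set(query_sim)
    while candidates and len(selected) < k:

        def mmr_score(doc_id):
            # Redundancy is the highest similarity to anything already chosen.
            redundancy = max((doc_sim(doc_id, s) for s in selected), default=0.0)
            return trade_off * query_sim[doc_id] - (1 - trade_off) * redundancy

        # Iterate in sorted order so ties resolve deterministically.
        best = max(sorted(candidates), key=mmr_score)
        selected.append(best)
        candidates.remove(best)
    return selected


if __name__ == "__main__":
    # Hypothetical similarities: "a" and "b" are near-duplicates.
    relevance = {"a": 0.9, "b": 0.85, "c": 0.5}
    pairwise = {frozenset("ab"): 0.95, frozenset("ac"): 0.1, frozenset("bc"): 0.1}
    sim = lambda x, y: pairwise[frozenset((x, y))]
    print(mmr_select(relevance, sim, k=2, trade_off=0.5))
```

With an equal trade-off, the near-duplicate "b" is skipped in favour of the less relevant but novel "c"; setting `trade_off=1.0` reduces the procedure to plain relevance ranking.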

Glossary

- Adaptive candidate truncation: A technique to dynamically reduce the number of candidates before expensive re-ranking to save compute while preserving quality. "Adaptive candidate truncation for LLM-based re-rankers \cite{meng2024rlt} further optimises the trade-off between efficiency and retrieval quality."

- Annotation transfer: The process of reusing labels or annotations across languages or datasets, often introducing noise in cross-lingual settings. "annotation transfer introduces noise and inconsistency."

- Behavioural signals: User interaction features (e.g., clicks, ratings) used as implicit relevance feedback in ranking. "Behavioural signals such as clicks, ratings, and purchases, serve as implicit relevance feedback, often underperforming purely textual features."

- Bilingual LDA (BiLDA): A topic model aligning topics across two languages using bilingual resources. "Bilingual LDA (BiLDA)~\cite{vulic2013bilda}"

- Bi-encoder (dual-encoder): A retrieval architecture that encodes queries and documents independently into vectors for efficient similarity search. "A common architecture is the bi-encoder, also known as a dual-encoder, in which queries and documents are independently encoded into fixed-size embeddings."

- BM25: A classic probabilistic retrieval function based on term frequency and document length normalization. "BM25~\cite{robertson2009probabilistic}"

- BM25+: An enhanced variant of BM25 that lower-bounds the term-frequency normalization so that very long documents are not over-penalized. "BM25+ \cite{lv_zhai_2011}"

- Cascade Model: A click model capturing positional bias by modeling users’ top-down examination of ranked lists. "Positional bias is further addressed by the Cascade Model/UBM click models and re-ranking with RandPair or FairPair~\cite{craswell2008cumulative, chapelle2009dynamic, wang2018position, joachims2007position}."

- Chain-of-Thought (CoT) prompting: An LLM prompting technique that elicits step-by-step reasoning to improve task performance. "A comparative study found CoT prompting to be the most effective \cite{jagerman_2023}."

- COIL: A multi-vector neural retrieval model that encodes token-level or span-level representations for fine-grained matching. "COIL~\cite{gao2021coil}"

- ColBERT: A late-interaction retrieval model computing token-level MaxSim similarities for efficient fine-grained matching. "late-interaction models (e.g. ColBERT~\cite{khattab2020colbert} and ColBERTv2~\cite{santhanam2022colbertv2})"

- ColBERTv2: An improved version of ColBERT with better performance and efficiency in late-interaction retrieval. "ColBERTv2~\cite{santhanam2022colbertv2}"

- Co-occurrence analysis: Statistical analysis of term co-occurrence used to select expansion terms related to multiple query terms. "For example, through co-occurrence analysis \cite{hsu_2006, hsu_2008}."

- Contrastive learning: A training paradigm that brings semantically similar representations closer and dissimilar ones apart, widely used in retrieval. "multilingual embedding alignment, contrastive learning, multilingual pre-training strategies, and the integration of generative LLMs"

- Cross-encoder: A re-ranking architecture that jointly encodes a query-document pair to model token-level interactions with high precision. "Encoder-based methods form the foundation of neural ranking in CLIR, primarily realised as cross-encoders and bi-encoders, explained in detail in Section \ref{subsec:model architecture}."

- Cross-lingual embeddings: Vector representations aligned across languages to enable multilingual similarity comparisons. "The emergence of cross-lingual embeddings and multilingual LLMs has introduced a new paradigm, offering improved retrieval performance and enabling answer generation."

- Cross-lingual information retrieval (CLIR): Retrieving documents in languages different from the query language. "Cross-lingual information retrieval (CLIR) addresses the challenge of retrieving relevant documents written in languages different from that of the original query."

- DeepCT: A neural term-weighting method that predicts term importance for sparse retrieval. "Approaches include term-weighting methods such as DeepCT \cite{dai2019deepct}"

- Dense Hierarchical Retrieval: A retrieval approach that first retrieves documents and then refines at the passage level. "Dense Hierarchical Retrieval \cite{liu-etal-2021-dense-hierarchical}"

- Dense Passage Retrieval (DPR): A dense retrieval method encoding passages independently to improve recall over sparse methods. "Dense Passage Retrieval (DPR) embeds text segments (100--300 words) independently and has shown substantial improvements over BM25, though performance depends on segmentation."

- Dense retrieval: Retrieval using dense vector embeddings and similarity metrics rather than exact term matching. "Dense retrieval models."

- Doc2query: A document expansion technique that predicts and appends likely queries to documents to improve sparse retrieval. "Nogueira et al.'s \cite{nogueira_2019_doc2query} Doc2query approach, in which a set of possible queries is predicted for each document and appended as pseudo-queries."

- Document entailment: Using entailment judgments to assess whether a document supports a query or claim for re-ranking. "Document entailment [...] instruction‑tuned models."

- FairPair: An unbiased re-ranking approach using interleaving/pairwise comparisons to mitigate position bias. "re-ranking with RandPair or FairPair~\cite{craswell2008cumulative, chapelle2009dynamic, wang2018position, joachims2007position}."

- Freshness: A temporal feature capturing how recent a document is, used as a ranking signal. "freshness (i.e. a temporal feature that measures how recent a document is relative to the query or current time)"

- Gold-standard judgments: High-quality human relevance annotations used as ground truth for evaluation. "Constructing high-quality, gold-standard judgments is resource-intensive, while annotation transfer introduces noise and inconsistency."

- Hallucinations (in LLMs): Fabricated or irrelevant outputs that degrade retrieval or QA performance. "hallucinations (i.e. irrelevant or erroneous expansions that degrade retrieval performance)."

- Hierarchical Representations: Multi-level embeddings (e.g., word→sentence→paragraph) to capture both local and global context. "Hierarchical Representations"

- Hybrid models (sparse + dense): Systems that combine sparse and dense signals for improved retrieval effectiveness. "Hybrid models combine sparse and dense signals \cite{Bruch2023_Hybrid, Weaviate2025_Hybrid}, either through parallel retrieval with later rank fusion (e.g. Reciprocal Rank Fusion \cite{cormack2009rrf, Weaviate2025_Hybrid} or concatenation, which often outperforms either approach alone)."

- Hybrid Sparse + Dense Retrieval: A retrieval setup fusing inverted-index term matching with dense semantic vectors. "Hybrid Sparse + Dense Retrieval"

- HyDE (Hypothetical Document Embeddings): An LLM-based method that generates pseudo-documents to create query embeddings for dense retrieval. "Hypothetical Document Embeddings (HyDE) \cite{gao_2023_hyde}"

- In-context learning: Prompting LLMs with examples in the input to induce task performance without parameter updates. "Few shot-prompting, also known as in-context learning, has also been used in the Query2doc method"

- InPars: A prompting-based data generation method for training/re-ranking with LLMs. "InPars \cite{bonifacio_2022_inpars}"

- Instruction tuning: Fine-tuning LLMs on instruction-format tasks to improve alignment and generalization. "instruction tuning with domain-relevant tasks to better capture real information needs~\cite{liu2021xlbel}."

- Instruction-tuned LLMs: LLMs fine-tuned to follow natural language instructions, often improving re-ranking. "Instruction-tuned LLMs like Flan-T5 \cite{chung2022scaling}, Zephyr \cite{tunstall2023zephyrdirectdistillationlm}, and ChatGPT \cite{openai_chatgpt_announcement} demonstrate strong capability in assessing document relevance."

- Inverted index: A data structure mapping terms to documents that contain them, enabling fast sparse retrieval. "Sparse retrieval models such as BM25, SPLADE, SPLADE-X represent queries and documents as high-dimensional sparse vectors, enabling inverted-index lookup"

- LambdaMART: A gradient-boosted learning-to-rank algorithm widely used with heterogeneous features. "Learning-to-rank methods like LambdaMART~\cite{karmaker2017application} incorporate these signals effectively"

- Latent Dirichlet Allocation (LDA): A generative topic model capturing latent themes in documents. "Latent Dirichlet Allocation (LDA) and multilingual extensions grouped documents into topics"

- Learning-to-rank: A family of machine learning methods for optimizing ranking functions using supervised signals. "Learning-to-rank methods like LambdaMART~\cite{karmaker2017application}"

- ListMLE: A listwise ranking loss optimizing the likelihood of correct permutations. "ListMLE \cite{xia2008listwise}"

- ListNet: A listwise learning-to-rank method that models permutations of ranked lists. "ListNet \cite{cao2007learning}"

- Margin Ranking Loss: A loss encouraging correct pairwise ordering by enforcing a margin between relevant and non-relevant scores. "contrastive objectives such as Multiple Negatives Ranking Loss or Margin Ranking Loss"

- Maximal Marginal Relevance (MMR): A diversification method balancing relevance and novelty to reduce redundancy. "methods like Maximal Marginal Relevance selectively promote new information while maintaining relevance."

- MaxSim: A late-interaction scoring function that, for each query token, takes its maximum similarity over all document tokens and sums these maxima. "then compute token-level similarity via MaxSim \cite{chan-ng-2008-maxsim}"

- Mean Average Precision (MAP): A retrieval metric that computes, for each query, the average of the precision values at the rank of each relevant document, then takes the mean over all queries. "Mean Average Precision (MAP)~\cite{manning2008introduction}"

- ME‑BERT: A multi-vector retrieval model encoding documents into multiple embeddings for fine-grained matching. "ME-BERT~\cite{luan2020mebert}"

- Memory-augmented architectures: Models enhanced with external memory slots to store and attend over document parts in retrieval. "Memory-augmented architectures (e.g. EMAT \cite{wu2022emat}, MoMA \cite{ge2023moma})"

- Multiple Negatives Ranking Loss: A contrastive loss that, for each query, treats the positive documents paired with the other queries in the same batch as negatives, so retrieval training scales without explicit negative mining. "contrastive objectives such as Multiple Negatives Ranking Loss or Margin Ranking Loss"

- MultiSPLADE: A multilingual extension of SPLADE producing sparse expanded representations for multiple languages. "MultiSPLADE~\cite{lassance2023multisplade}"

- Normalisation strategies (for ranking): Techniques to standardize feature or score distributions to reduce bias across languages or models. "normalisation strategies \cite{zhang2022evaluating} help reduce bias"

- Normalised Discounted Cumulative Gain (nDCG): A ranking metric emphasizing correct ordering at top ranks with position-based discounting. "Normalised Discounted Cumulative Gain (nDCG)~\cite{jarvelin2002cumulated}"

- PageRank: A link-analysis algorithm used as a structural feature for ranking. "Structural features, including PageRank, freshness (i.e. a temporal feature that measures how recent a document is relative to the query or current time), and categorical tags, further refine ranking."

- Polylingual Labelled LDA: A supervised multilingual topic model aligning topics using labels across languages. "Polylingual Labelled LDA \cite{posch2015polylingual}"

- Polylingual Topic Models (PLTM): Topic models that jointly model multiple languages to align topical structures. "Polylingual Topic Models (PLTM)~\cite{mimno2009polylingual}"

- Pseudo-documents: Synthetic documents generated (often by LLMs) to enrich queries or documents for retrieval. "LLMs have also been used to generate pseudo-documents, thereby enriching the document side of retrieval."

- Pseudo-relevance feedback (PRF): Automatic feedback assuming top-ranked results are relevant to expand queries. "Pseudo-relevance feedback (PRF), introduced by Croft and Harper~\cite{croft_harper_1979}, employs a similar approach but removes user input by assuming the top-ranked documents are relevant."

- Query expansion: Augmenting the original query with related terms to improve recall and disambiguation. "Traditional monolingual information retrieval systems are generally organised into a multi-stage pipeline: (i) query expansion"

- Query2doc: An LLM-based method generating pseudo-documents from queries to enhance both sparse and dense retrieval. "Query2doc also produces pseudo-documents, which can then be concatenated with the query for both sparse and dense retrieval \cite{wang_2023_query2doc}."

- RAG (Retrieval augmented generation): A framework combining retrieval with generation to ground answers in evidence. "Retrieval augmented generation (RAG) addresses these issues by combining IR with generative models."

- RankGPT: An LLM-based re-ranking approach that leverages prompting for ranking tasks. "RankGPT\cite{sun-etal-2023-chatgpt}"

- RankNet: A neural pairwise learning-to-rank model comparing document pairs to learn ordering. "RankNet \cite{burges2005learning}"

- Reciprocal Rank Fusion (RRF): A simple, effective method to fuse rankings from multiple systems using reciprocal ranks. "Reciprocal Rank Fusion \cite{cormack2009rrf} avoids score-scale mismatches and is used in Zilliz~\cite{zilliz_rrf_ranker} and Azure AI~\cite{azure_rrf_hybrid}."

- Relevance feedback: Using user-identified relevant (or non-relevant) documents to refine and expand queries. "Early work by Rocchio \cite{rocchio_1965, rocchio_1971} ... uses user-identified relevant documents to expand queries through local feedback expansion"

- Relevance model (Lavrenko–Croft): A probabilistic model estimating the distribution of terms in relevant documents for query expansion. "builds on Lavrenko and Croft's relevance model \cite{lavrenko_croft_2001}"

- Rocchio algorithm: A classic relevance feedback method adjusting query vectors towards relevant and away from non-relevant documents. "Early work by Rocchio \cite{rocchio_1965, rocchio_1971}, based on the SMART retrieval system"

- Semantic drift: The unintended change in meaning introduced by translation or alignment that degrades accuracy. "Translation and alignment may cause semantic drift, altering meaning and reducing accuracy."

- SPLADE: A sparse neural retriever that expands and weights terms using transformers while retaining inverted-index efficiency. "SPLADE \cite{formal2021splade, formal2021spladev2}"

- SPLADEv2: An improved SPLADE variant with enhanced training and performance. "SPLADEv2 \cite{formal2021spladev2}"

- SPLADE‑X: A cross-lingual extension of SPLADE enabling multilingual sparse retrieval. "SPLADE-X~\cite{nair2022spladex}"

- SpaDE: A sparse expansion model that generates additional relevant terms for retrieval. "SpaDE \cite{choi2022spade}"

- Sparse neural retrievers: Neural models that produce sparse representations compatible with inverted indexes. "sparse neural retrievers, have been designed specifically for information retrieval and often outperform traditional approaches."

- Sparse retrieval: Keyword-based retrieval using inverted indexes and exact term matching. "Sparse retrieval models."

- Stemming: Reducing words to their base form to improve matching in lexical retrieval. "One-to-one associations link each query term directly to an expansion term, often using stemming \cite{porter_1980}"

- Term Frequency–Inverse Document Frequency (TF‑IDF): A weighting scheme balancing term frequency with corpus-wide rarity to score relevance. "Statistical methods such as Term Frequency-Inverse Document Frequency (TF-IDF) \cite{sparck1972statistical}"

- Topic modelling: Discovering latent topics in a corpus to represent documents at a thematic level. "Before the widespread adoption of neural embeddings, CLIR systems frequently employed topic modelling to capture latent semantics across languages."

- Translate-Distill: A knowledge-distillation approach where a cross-encoder teacher guides a bi-encoder student for efficient retrieval. "Translate-Distill \cite{yang2024translate_distill}"

- UBM click models: User Browsing Models capturing user examination behavior to correct positional bias in click data. "the Cascade Model/UBM click models"

- Vocabulary problem: The mismatch between users’ query terms and document terminology leading to retrieval failures. "Another issue is the vocabulary problem \cite{furnas_1987}"

- WordNet: A lexical ontology used as a resource for query expansion and semantic relations. "thesauri and ontologies such as WordNet \cite{miller_1990_wordnet}"

- Zero-shot prompting: Using LLMs without task-specific training to generate expansions or rankings via prompts. "Prompting methods, where models directly generate expanded queries or related documents, include zero-shot, few-shot, and Chain-of-Thought (CoT) techniques."
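
Several entries above (Reciprocal Rank Fusion, MaxSim, nDCG) name scoring formulas without spelling them out. The following is a minimal Python sketch of one common formulation of each, not the paper's implementation. The function names, the RRF constant `k=60`, the use of dot products in MaxSim, and the linear-gain variant of nDCG are all assumptions chosen for illustration.

```python
import math


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of document ids by summing 1 / (k + rank).

    k=60 is a commonly used default and an assumption here, not a value
    taken from the paper.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken by document id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


def maxsim(query_vecs, doc_vecs):
    """Late-interaction score: for each query token vector, take the best
    dot product against any document token vector, then sum the maxima."""
    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)


def ndcg_at_k(relevances, k):
    """nDCG@k with linear gain and a log2(rank + 1) discount.

    `relevances` holds graded labels in ranked order. The ideal ranking is
    built from the same list, which is a simplification: a full evaluation
    would use every judged document for the query.
    """
    def dcg(rels):
        return sum(rel / math.log2(i + 1) for i, rel in enumerate(rels[:k], start=1))

    ideal = dcg(sorted(relevances, reverse=True))
    return dcg(relevances) / ideal if ideal > 0 else 0.0


if __name__ == "__main__":
    sparse_run = ["d1", "d2", "d3"]  # hypothetical BM25 ranking
    dense_run = ["d3", "d1", "d4"]   # hypothetical dense-retriever ranking
    print(reciprocal_rank_fusion([sparse_run, dense_run]))
    print(maxsim([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.5, 0.5]]))
    print(ndcg_at_k([1, 3, 0], k=3))
```

Because RRF uses only rank positions, it sidesteps the score-scale mismatch between sparse and dense retrievers that the glossary entry mentions. MaxSim and nDCG both have other variants in the literature (for example cosine similarity, or exponential gain), so the choices here should be read as one option among several.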
