- The paper presents a novel approach using GPT-4o and a chain-of-thought dataset to reframe SAR target recognition as a visual reasoning task.

- It integrates multimodal LLMs with structured SAR imagery and textual annotations to enhance classification interpretability and reduce misclassification.

- Results indicate promising performance under challenging conditions, though further refinement is needed for targets with weak texture features.

Reframing SAR Target Recognition with Multimodal LLMs

Introduction

The paper "Reframing SAR Target Recognition as Visual Reasoning: A Chain-of-Thought Dataset with Multimodal LLMs" (2507.09535) introduces an approach to Synthetic Aperture Radar (SAR) image recognition that leverages multimodal LLMs (MLLMs) and a Chain-of-Thought (CoT) reasoning paradigm. Conventional methods struggle with the intrinsic limitations of SAR data, such as high noise and weak texture features. The paper departs from these methods by reframing SAR target recognition as a multimodal reasoning task carried out by MLLMs.

Methodology

The authors propose using GPT-4o, OpenAI's multimodal model, to classify targets in SAR imagery, guided by candidate categories and enhanced with CoT reasoning. The work introduces a new dataset constructed from the FAIR-CSAR benchmark, comprising raw SAR images, structured annotations, and GPT-generated reasoning chains. By transforming SAR recognition into a reasoning exercise, the approach aims to deliver logically coherent and interpretable inferences, thereby addressing the context-dependent and ambiguous nature of SAR imagery.
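The candidate-guided, step-by-step setup described above can be illustrated with a small sketch. The prompt wording, the `Answer:` convention, the function names, and the example category names are all assumptions made for illustration; the paper's actual prompts and label set are not reproduced here, and no model API is called.

```python
def build_prompt(candidates):
    """Compose a candidate-guided chain-of-thought instruction (hypothetical wording)."""
    if not candidates:
        raise ValueError("at least one candidate category is required")
    options = "\n".join(f"{i}. {name}" for i, name in enumerate(candidates, start=1))
    return (
        "The SAR image shows one target inside a bounding box.\n"
        "Candidate categories:\n"
        f"{options}\n"
        "Reason step by step about the target's visible structure, "
        "then finish with a line of the form 'Answer: <category>'."
    )


def parse_answer(response, candidates):
    """Return the candidate named on the last 'Answer:' line, or None if absent or invalid."""
    for line in reversed(response.splitlines()):
        if line.strip().lower().startswith("answer:"):
            label = line.split(":", 1)[1].strip()
            for name in candidates:
                if name.lower() == label.lower():
                    return name
            return None  # an answer outside the candidate set is rejected
    return None
```

Restricting the parsed answer to the supplied candidates is one simple way to keep the final label consistent with the options the model was given.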

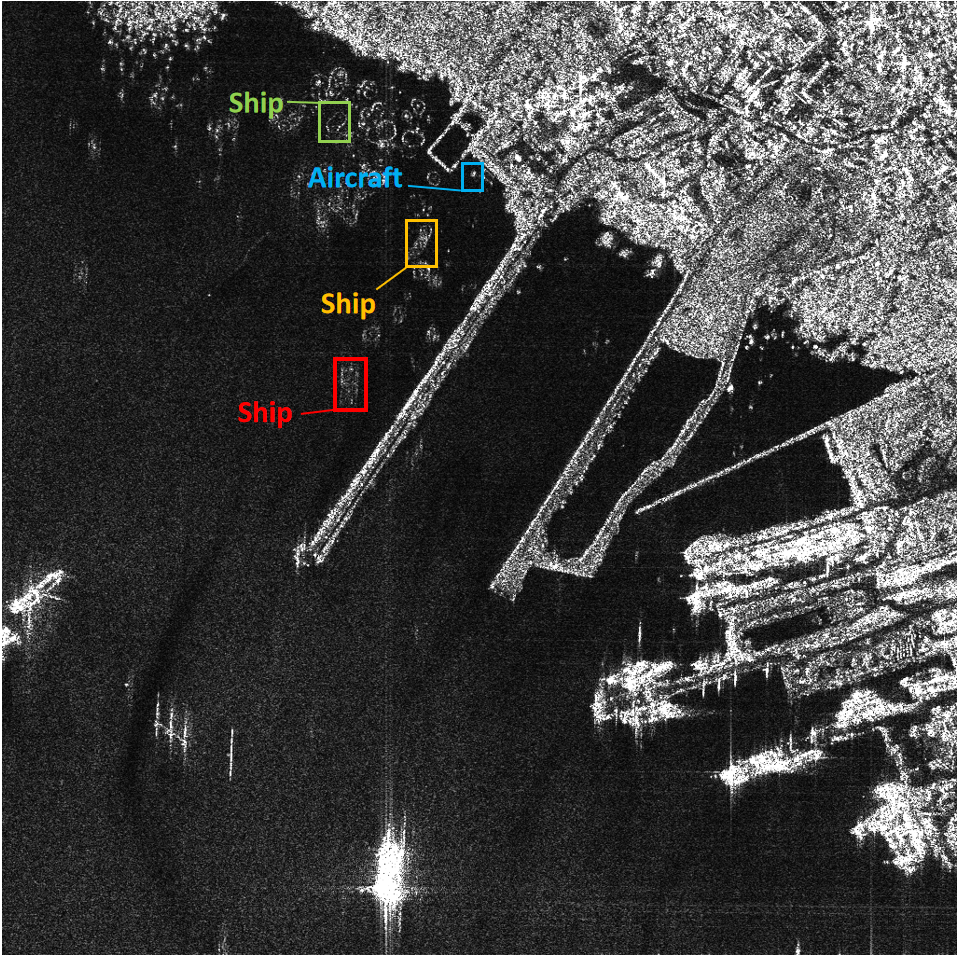

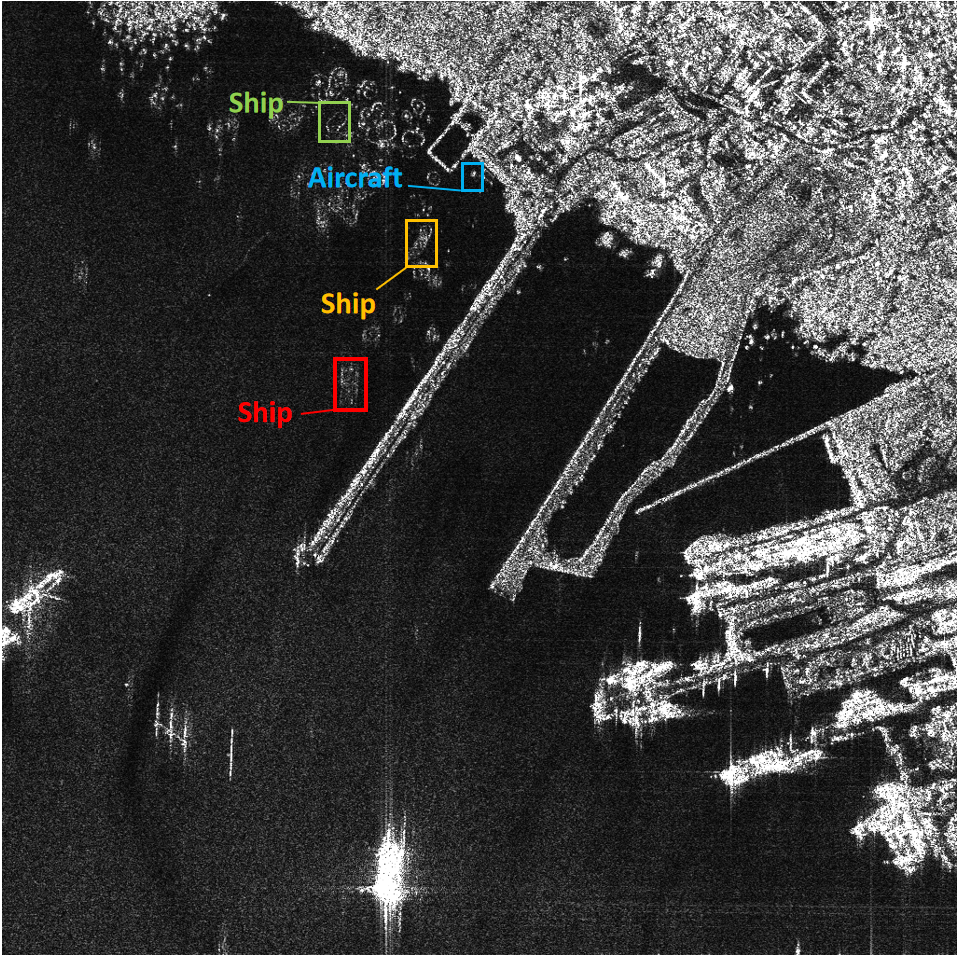

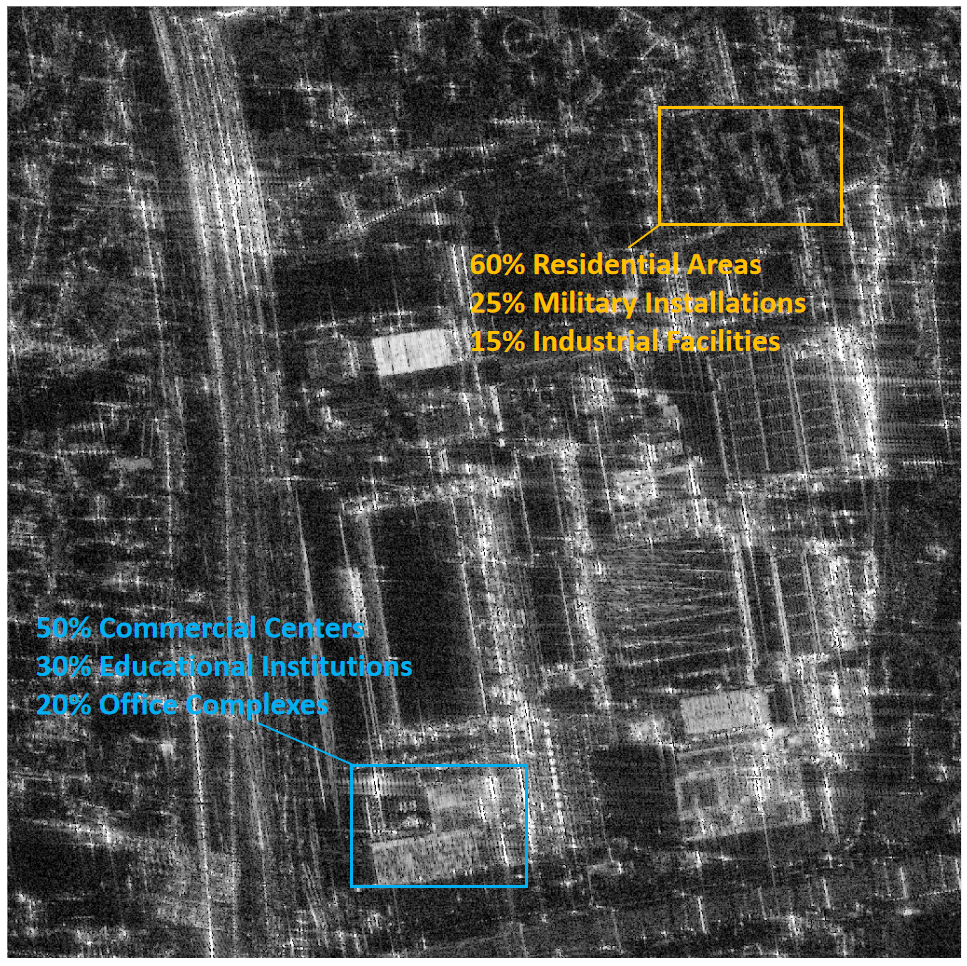

Figure 1: Top-1 prediction from other models causes semantic conflict.

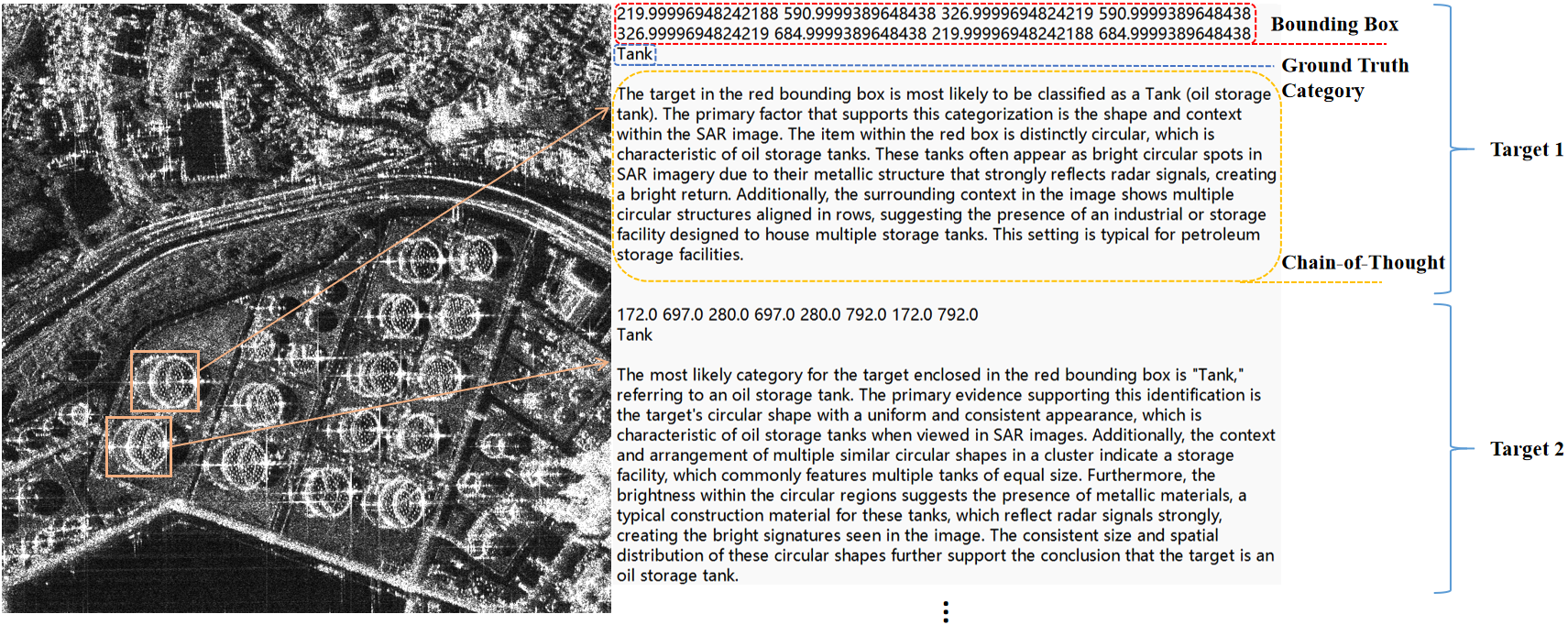

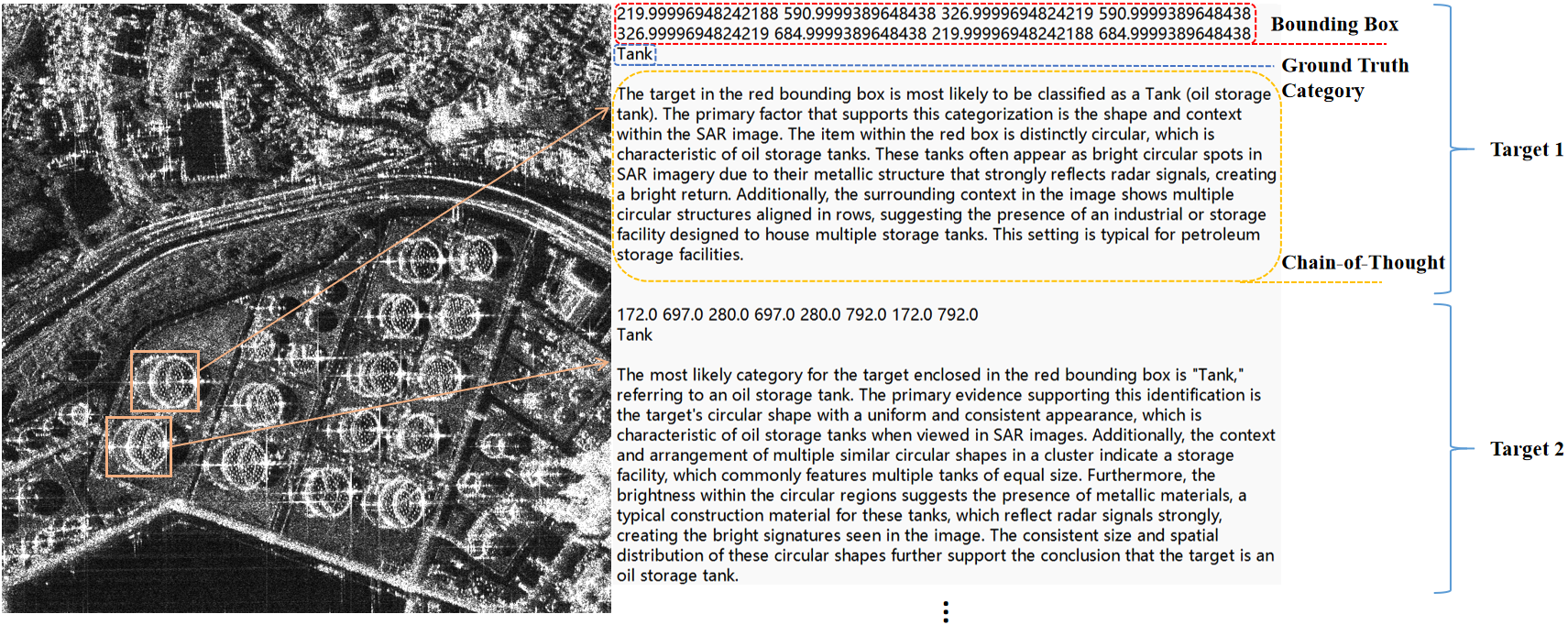

Figure 2: Data format example in the dataset.

Dataset Construction

The dataset architecture combines diverse SAR images with textual annotations, including candidate categories and CoT reasoning chains. The images are processed by overlaying bounding boxes on targets and providing a set of candidate labels, from which the model deduces the most appropriate classification through reasoning.
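As a rough illustration of the record layout and the bounding-box overlay described above, the sketch below uses a plain 2D list as a stand-in for a grayscale SAR chip. The `SarSample` field names and the `draw_box` helper are hypothetical and do not reflect the dataset's actual schema or the authors' tooling.

```python
from dataclasses import dataclass


@dataclass
class SarSample:
    """One record: image reference, target box, candidates, and reasoning chain.

    Field names are illustrative, not the dataset's actual schema.
    """
    image_path: str
    bbox: tuple  # (x_min, y_min, x_max, y_max), inclusive pixel coordinates
    candidates: list
    reasoning: str = ""
    label: str = ""


def draw_box(image, bbox, value=255):
    """Return a copy of a 2D grayscale image (list of rows) with a box outline drawn."""
    x0, y0, x1, y1 = bbox
    height, width = len(image), len(image[0])
    if not (0 <= x0 <= x1 < width and 0 <= y0 <= y1 < height):
        raise ValueError("bounding box lies outside the image")
    out = [row[:] for row in image]  # leave the input image untouched
    for x in range(x0, x1 + 1):  # top and bottom edges
        out[y0][x] = value
        out[y1][x] = value
    for y in range(y0, y1 + 1):  # left and right edges
        out[y][x0] = value
        out[y][x1] = value
    return out
```

In practice an imaging library would handle the drawing; the pure-Python version only makes the overlay step explicit.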

Analysis

The analysis reports promising results, showing that MLLMs can interpret SAR data under complex conditions. The reasoning chains generated by GPT-4o were largely accurate, although categories with high visual similarity remained challenging. The paper also identifies cases in which misclassification persists because the targets have weak feature representation.

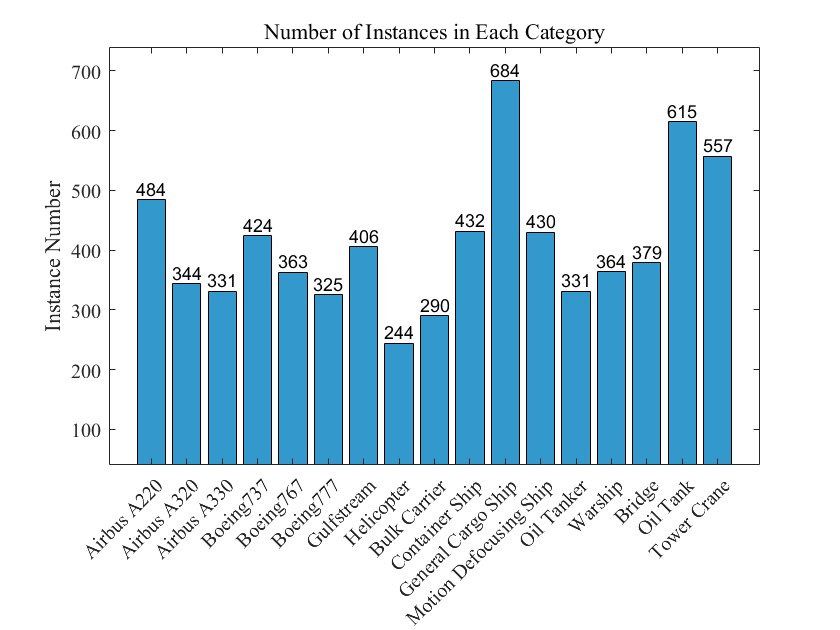

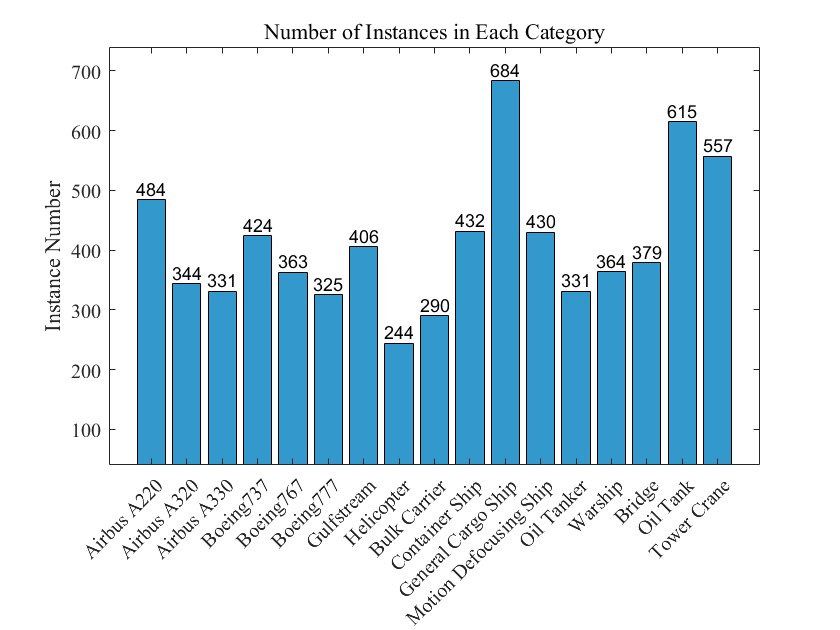

Figure 3: Number of instances in each category.
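A per-category breakdown of the kind discussed in the analysis could be computed along the following lines. This is a minimal sketch with hypothetical function names, assuming ground-truth and predicted labels are available as parallel lists; it is not the paper's evaluation protocol.

```python
from collections import Counter


def per_category_accuracy(true_labels, predicted_labels):
    """Map each true category to (correct, total, accuracy)."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    totals = Counter(true_labels)
    correct = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {c: (correct[c], n, correct[c] / n) for c, n in totals.items()}


def top_confusions(true_labels, predicted_labels, k=3):
    """Return the k most frequent (true, predicted) pairs among the errors."""
    errors = Counter((t, p) for t, p in zip(true_labels, predicted_labels) if t != p)
    return errors.most_common(k)
```

Listing the most frequent (true, predicted) pairs makes it easy to see which visually similar categories are being confused with each other.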

Discussion on Multimodal LLMs

The integration of MLLMs in SAR image recognition showcases the potential benefits of coupling visual data with language processing capabilities, providing richer context for target identification. MLLMs such as GPT-4o can leverage implicit relationships and produce interpretable reasoning chains, which is valuable in scenarios where visual cues are sparse or obscured.

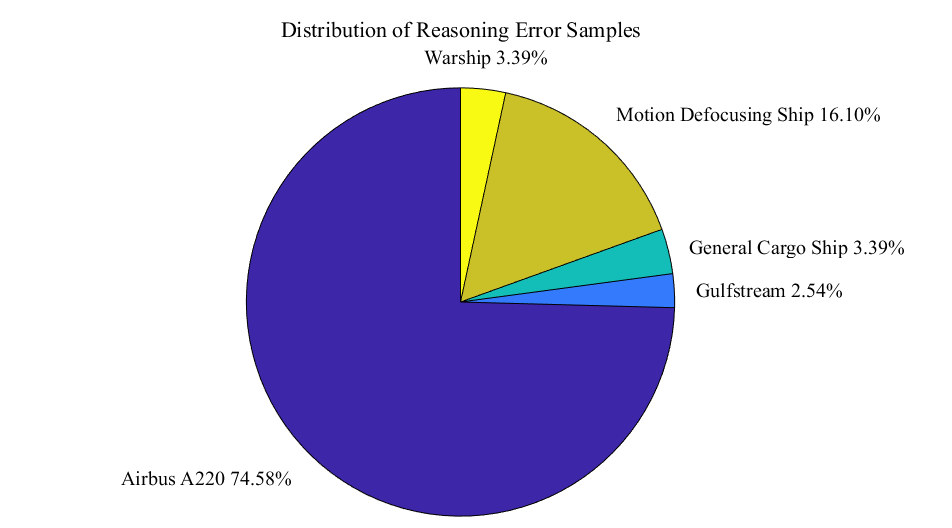

Error Analysis

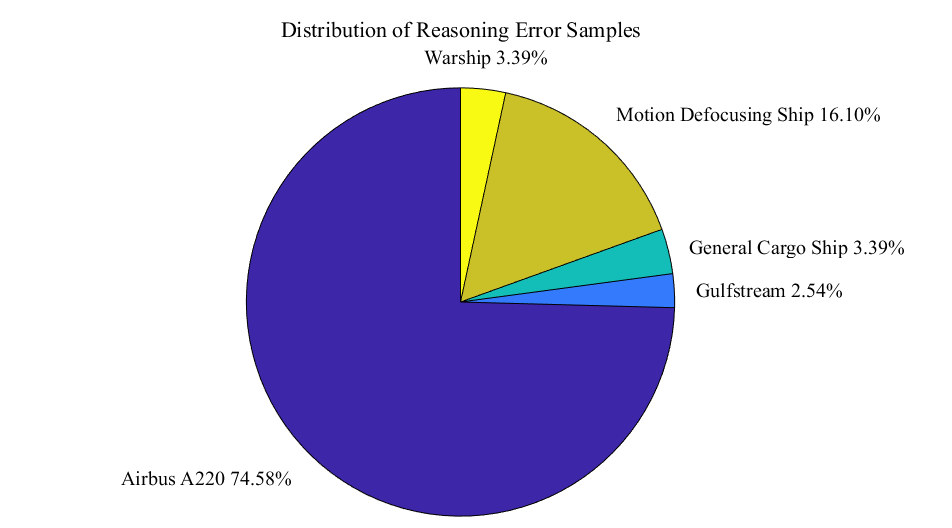

The study notes particular difficulties in classifying Airbus A220 targets, whose SAR characteristics are shared with other similar aircraft. This points to a model bias toward visually dominant categories. Across repeated inference attempts, the model adheres to the same faulty reasoning paths in certain instances, which suggests that targeted interventions and improved training protocols are needed.

Figure 4: Distribution of reasoning error samples.
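One way to surface the pattern of repeated faulty reasoning noted in the error analysis is to check how consistently the model repeats the same wrong label across runs. The sketch below assumes the predictions for one sample have been collected over several inference attempts; the function name and the 0.8 agreement threshold are arbitrary choices for illustration, not values from the paper.

```python
from collections import Counter


def summarize_repeats(predictions, true_label, threshold=0.8):
    """Summarize repeated predictions for one sample.

    Returns the majority label, the fraction of runs that agree with it,
    and whether the sample looks like a stable error: the majority label
    is wrong and agreement is at or above the (arbitrary) threshold.
    """
    if not predictions:
        raise ValueError("need at least one prediction")
    majority, count = Counter(predictions).most_common(1)[0]
    agreement = count / len(predictions)
    stable_error = majority != true_label and agreement >= threshold
    return majority, agreement, stable_error
```

Samples flagged as stable errors are unlikely to be fixed by resampling alone, which is consistent with the case for targeted intervention.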

Conclusion

The paper contributes to SAR image recognition by reframing the problem as a multimodal reasoning task carried out by MLLMs. It establishes a foundation for future research on incorporating LLMs into SAR analysis pipelines. Future work should focus on expanding the dataset, implementing domain-specific evaluation protocols, and developing fine-tuned multimodal architectures for enhanced SAR target recognition.

The research holds implications for broadening the application of MLLMs across diverse remote sensing challenges, potentially transforming decision-support systems by marrying visual and linguistic modalities. Further exploration could aim at refining reasoning models within other complex data domains, promoting advancements in AI-driven interpretation capabilities.