Decentralizing AI Memory: SHIMI, a Semantic Hierarchical Memory Index for Scalable Agent Reasoning

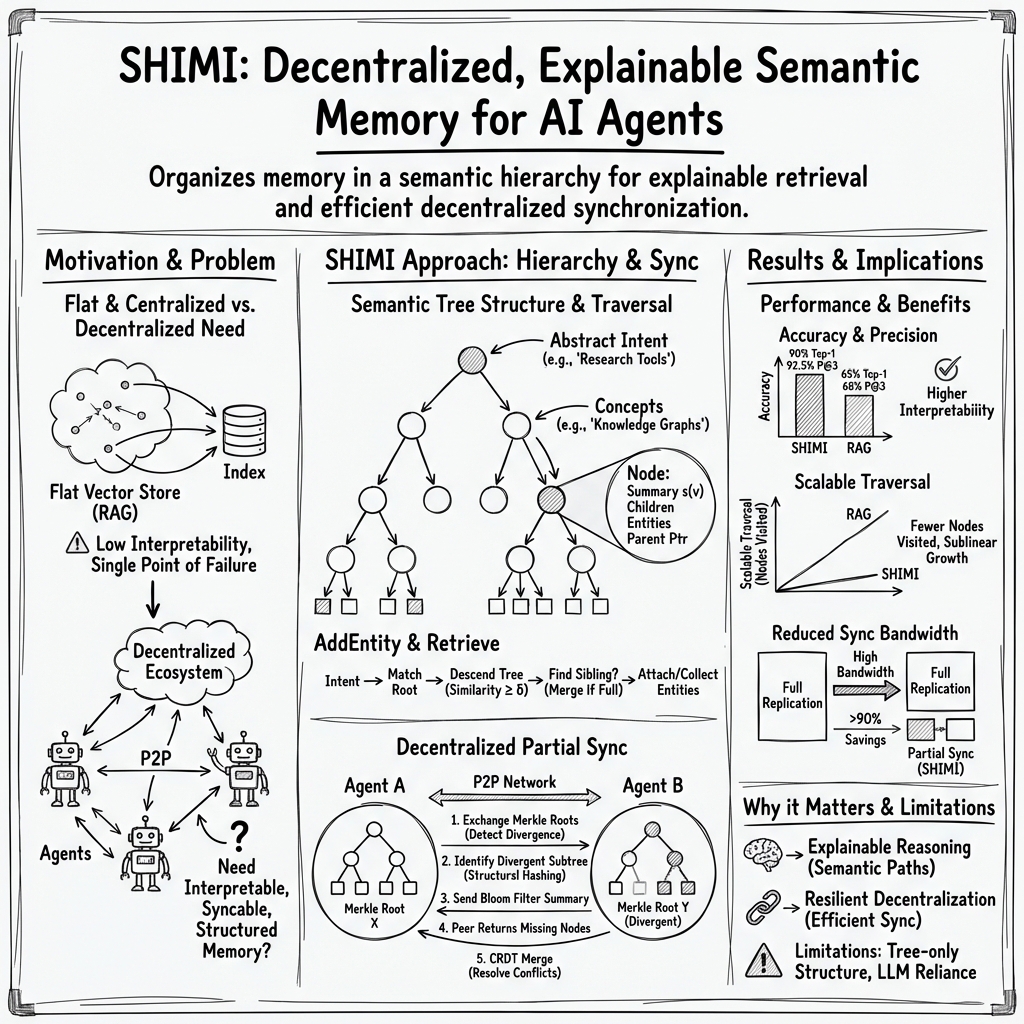

Abstract: Retrieval-Augmented Generation (RAG) and vector-based search have become foundational tools for memory in AI systems, yet they struggle with abstraction, scalability, and semantic precision, especially in decentralized environments. We present SHIMI (Semantic Hierarchical Memory Index), a unified architecture that models knowledge as a dynamically structured hierarchy of concepts, enabling agents to retrieve information based on meaning rather than surface similarity. SHIMI organizes memory into layered semantic nodes and supports top-down traversal from abstract intent to specific entities, offering more precise and explainable retrieval. Critically, SHIMI is natively designed for decentralized ecosystems, where agents maintain local memory trees and synchronize them asynchronously across networks. We introduce a lightweight sync protocol that leverages Merkle-DAG summaries, Bloom filters, and CRDT-style conflict resolution to enable partial synchronization with minimal overhead. Through benchmark experiments and use cases involving decentralized agent collaboration, we demonstrate SHIMI's advantages in retrieval accuracy, semantic fidelity, and scalability, positioning it as a core infrastructure layer for decentralized cognitive systems.

Explain it Like I'm 14

What is this paper about?

This paper introduces SHIMI, a new way for AI systems to remember and find information. Instead of storing facts in one big flat list, SHIMI organizes knowledge like a neatly arranged tree of topics, from general ideas at the top to very specific details at the bottom. This makes it easier for AI “agents” (software programs that act on their own) to find the right information based on meaning, not just on words that look similar.

SHIMI is also built for decentralized systems—places where there isn’t one central server in charge. Each agent keeps its own memory and can share updates with others in a smart, low-bandwidth way. The result is a memory system that is more accurate, easier to explain, and able to scale to lots of agents working together.

What questions did the researchers ask?

The paper explores simple, practical questions:

- Can organizing memory as a hierarchy of concepts make retrieval more accurate than regular vector-based search (like standard RAG systems)?

- Can this kind of memory explain why it found something (not be a “black box”)?

- Can many agents, each with their own memory, keep in sync without sending huge amounts of data?

- Will this approach stay fast as the amount of information and number of agents grow?

How did they do it?

Think of SHIMI like a well-organized library:

- Books aren’t just tossed in a pile. They’re placed on shelves in sections: “Science” → “Biology” → “Animals” → “Cats,” and so on.

- When you add a new book, you walk down the shelves from general to specific, and place it where it fits best.

- When you search, you start from a broad area and quickly narrow down to the exact shelf you need.

Here’s how that translates to AI:

- Semantic hierarchy: SHIMI stores information as a tree of concepts. Top levels are broad (“Science”), lower levels are specific (“Marine biology → Coral reefs”). “Semantic” means it groups items by meaning.

- Meaning-based matching: When adding or searching information, SHIMI checks meaning, not just similar words. For example, it knows “feline” and “cat” are related.

- Smart merging: If a section gets crowded, SHIMI can merge similar subtopics under a new shared parent category, keeping things tidy and balanced.

- Clear paths: When SHIMI finds an answer, it can show the path it followed through the tree. This makes results explainable.
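The insert-and-search walk described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the `Node`, `insert`, and `retrieve` names are hypothetical, a tiny synonym table plus word overlap stands in for the LLM meaning check, the descent is greedy (it follows only the single best child at each level), and the merging of crowded sections is omitted.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical stand-in for SHIMI's LLM-based meaning check: a tiny synonym table
# so that "feline" and "cat" resolve to the same concept word.
SYNONYMS = {"feline": "cat", "felines": "cat", "cats": "cat", "kitten": "cat"}


def concept_words(text: str) -> set[str]:
    """Lowercase, split on whitespace, and map synonyms to a canonical word."""
    return {SYNONYMS.get(w, w) for w in text.lower().split()}


@dataclass
class Node:
    label: str
    terms: set[str]  # words characterizing this concept (hand-written here)
    children: list[Node] = field(default_factory=list)
    entities: list[str] = field(default_factory=list)


def score(text: str, node: Node) -> float:
    """Fraction of the text's concept words that this node covers."""
    words = concept_words(text)
    return len(words & node.terms) / len(words) if words else 0.0


def best_child(node: Node, text: str, threshold: float) -> Node | None:
    """Most relevant child at or above the threshold; all other branches are pruned."""
    candidates = [c for c in node.children if score(text, c) >= threshold]
    return max(candidates, key=lambda c: score(text, c), default=None)


def insert(root: Node, entity: str, threshold: float = 0.2) -> Node:
    """Walk from general to specific and store the entity at the deepest match."""
    node = root
    while (child := best_child(node, entity, threshold)) is not None:
        node = child
    node.entities.append(entity)
    return node


def retrieve(root: Node, query: str, threshold: float = 0.2) -> tuple[list[str], list[str]]:
    """Return the entities at the deepest matching concept and the path taken."""
    node, path = root, [root.label]
    while (child := best_child(node, query, threshold)) is not None:
        node = child
        path.append(node.label)
    return node.entities, path


root = Node("Knowledge", set(), children=[
    Node("Science", {"biology", "animal", "cat", "dog", "coral"}, children=[
        Node("Animals", {"animal", "cat", "dog"}, children=[Node("Cats", {"cat"}), Node("Dogs", {"dog"})]),
        Node("Marine biology", {"coral", "reef", "ocean"}),
    ]),
    Node("Finance", {"bank", "loan", "stock"}),
])

insert(root, "feline nutrition guide")
entities, path = retrieve(root, "cat diet")
print(path)      # ['Knowledge', 'Science', 'Animals', 'Cats']
print(entities)  # ['feline nutrition guide']
```

The returned path is the "concept trail" that makes the answer explainable: the query about a cat diet finds a document that was filed using the word "feline", and the trail shows why.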

To make this work across many agents without a central server, SHIMI uses three lightweight syncing tools:

- Merkle-DAG “fingerprints”: Like a tree-shaped fingerprint for each agent’s memory. Agents compare fingerprints to quickly spot which parts differ.

- Bloom filters: A tiny, compact checklist that can say “definitely not here” or “maybe here.” This helps avoid sending full lists back and forth.

- CRDT-style merging: Simple, reliable rules for combining changes (like merging edits in a shared document) so everyone eventually agrees, even if they were offline.
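The first two tools can be sketched in Python under stated assumptions. The node hash here is SHA-256 over a node's label, its sorted entities, and its children's hashes (a plain Merkle tree rather than a full content-addressed DAG), and the Bloom filter is assumed to summarize the entity IDs a peer already holds. `Node`, `merkle_hash`, `diverging_paths`, and `BloomFilter` are hypothetical names, not the paper's API.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field


@dataclass
class Node:
    label: str
    entities: list[str] = field(default_factory=list)
    children: dict[str, Node] = field(default_factory=dict)  # keyed by child label


def merkle_hash(node: Node) -> str:
    """SHA-256 over the node's label, sorted entities, and children's hashes."""
    h = hashlib.sha256(node.label.encode())
    for entity in sorted(node.entities):
        h.update(b"\x00E" + entity.encode())
    for name in sorted(node.children):
        h.update(b"\x00C" + merkle_hash(node.children[name]).encode())
    return h.hexdigest()


def diverging_paths(a: Node, b: Node, path: tuple[str, ...] = ()) -> list[tuple[str, ...]]:
    """Descend only into subtrees whose hashes differ; return paths of changed nodes."""
    here = path + (a.label,)
    if merkle_hash(a) == merkle_hash(b):
        return []  # identical subtree: nothing to exchange
    if (a.label != b.label or sorted(a.entities) != sorted(b.entities)
            or a.children.keys() != b.children.keys()):
        return [here]  # the node's own content differs: exchange this subtree
    return [p for name in sorted(a.children)
            for p in diverging_paths(a.children[name], b.children[name], here)]


class BloomFilter:
    """Bit-array set summary: answers 'definitely absent' or 'maybe present'."""

    def __init__(self, m_bits: int = 1024, k: int = 3) -> None:
        self.m, self.k = m_bits, k
        self.bits = bytearray((m_bits + 7) // 8)

    def _positions(self, item: str):
        # k bit positions derived from salted SHA-256 digests.
        for i in range(self.k):
            digest = hashlib.sha256(f"{i}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def add(self, item: str) -> None:
        for p in self._positions(item):
            self.bits[p // 8] |= 1 << (p % 8)

    def might_contain(self, item: str) -> bool:
        return all(self.bits[p // 8] & (1 << (p % 8)) for p in self._positions(item))


# Usage: two peers whose trees differ only under Science/Cats.
local = Node("Root", children={
    "Science": Node("Science", children={"Cats": Node("Cats", ["cat care"])}),
    "Finance": Node("Finance", ["loan basics"]),
})
remote = Node("Root", children={
    "Science": Node("Science", children={"Cats": Node("Cats", ["cat care", "cat diet"])}),
    "Finance": Node("Finance", ["loan basics"]),
})
print(diverging_paths(local, remote))  # [('Root', 'Science', 'Cats')]

# The remote peer advertises a Bloom filter of entity IDs it holds; the local
# peer sends only items the filter reports as definitely missing.
remote_has = BloomFilter()
for entity in ["cat care", "cat diet", "loan basics"]:
    remote_has.add(entity)
# "cat care" is always skipped; "cat nap" is sent unless a rare false positive occurs.
to_send = [e for e in local.children["Science"].children["Cats"].entities + ["cat nap"]
           if not remote_has.might_contain(e)]
```

Matching hashes let a peer skip a whole subtree after a single comparison, so the `Finance` branch is never inspected in detail. A Bloom filter never gives false negatives, so an item the peer already has is never re-sent; a false positive only delays an item until a later round.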

Under the hood, an LLM helps judge relationships between concepts (for example, whether one concept is more general than another), but you don’t need to understand the math to get the idea.
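The relation check described above can be sketched as a guarded LLM call. This is a hypothetical Python sketch: the paper's prompts are not reproduced here, the model is an injected callable (`ask_llm`), and the label set {parent, child, sibling, unrelated} is an assumption. Any reply outside that set falls back to "unrelated", so a confused model cannot attach a concept under the wrong parent.

```python
from typing import Callable

# Hypothetical label set; the paper's actual relation vocabulary may differ.
ALLOWED_RELATIONS = {"parent", "child", "sibling", "unrelated"}

PROMPT = (
    "Classify how concept A relates to concept B.\n"
    "A: {a}\n"
    "B: {b}\n"
    "Answer with one word: parent (A is more general), child (A is more specific), "
    "sibling, or unrelated."
)


def get_relation(a: str, b: str, ask_llm: Callable[[str], str]) -> str:
    """Ask a model how two concepts relate; reject any answer outside the label set."""
    reply = ask_llm(PROMPT.format(a=a, b=b)).strip().lower().rstrip(".")
    # Conservative fallback: a malformed reply never creates a parent/child link.
    return reply if reply in ALLOWED_RELATIONS else "unrelated"


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call, answering from a fixed rule."""
    if "A: Animals\nB: Cats" in prompt:
        return "Parent."
    return "Hmm, they seem sort of related"


print(get_relation("Animals", "Cats", fake_llm))  # parent
print(get_relation("Cats", "Stocks", fake_llm))   # unrelated
```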

What did they find?

Across tests and simulations, SHIMI outperformed regular vector-based RAG systems:

- Better accuracy and precision: SHIMI found the right answer more often (Top-1 accuracy about 90% vs. 65% for the baseline) and returned more relevant results in the top few suggestions.

- More explainable results: Human evaluators rated SHIMI’s reasoning paths as more understandable, because you can see the concept trail it followed.

- Faster searches as data grows: SHIMI checked fewer nodes per query and stayed efficient even as the memory grew. Instead of scanning everything, it prunes irrelevant branches early.

- Huge sync savings: When agents synchronized, SHIMI sent over 90% less data than copying everything, thanks to its “only share what changed” approach.

- Scales well: As the number of stored items increased, SHIMI’s search time grew slowly, while the flat vector baseline slowed down much more.
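The "fewer nodes per query" finding follows from pruning. Under an idealized cost model (an assumption, not the paper's analysis): a balanced tree with branching factor b holds N items, a greedy descent compares the query against the b children at each of d levels, and a flat index compares it against every item. The numbers below are illustrative, not the paper's measurements.

```latex
C_{\text{flat}} = N, \qquad
C_{\text{tree}} = b \cdot d = b \log_b N

\text{Example: } N = 10^{6},\; b = 10 \;\Rightarrow\;
d = \log_{10} 10^{6} = 6, \qquad
C_{\text{tree}} = 10 \cdot 6 = 60 \ll C_{\text{flat}} = 10^{6}
```

If the search keeps the top w branches at each level instead of one, the cost is at most about w · b · d comparisons, which still grows only with the depth d rather than with N.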

Why this matters:

- More accurate and explainable results help build trust and make debugging easier.

- Lower bandwidth and faster syncing make it practical for many agents on different networks.

- Good scaling means it can handle real-world, growing systems.

What does this mean for the future?

SHIMI could become a foundation for how autonomous AI agents remember and share knowledge in decentralized systems. That can help in:

- Decentralized agent marketplaces: Matching the right agent to a task by meaning, even if descriptions use different words.

- Federated knowledge networks: Organizations can keep their own structures but still share and align knowledge without a central authority.

- Multi-agent systems: Swarms of robots, bots, or assistants can learn locally and share efficiently.

- Blockchain-based task platforms: Providing a clear, semantic index for tasks and capabilities with explainable matching.

The authors also note some limits and next steps:

- Trees are great, but some knowledge fits better in overlapping graphs. Future versions may support that.

- The system relies on LLMs for judging meaning, which can be imperfect. Adding more rules or logic could improve reliability.

- Tests were simulated; real-world trials with messy data and network hiccups are an important next step.

In short, SHIMI shows that organizing memory by meaning, not just by surface similarity, can make AI agents smarter, clearer, and better at working together—especially when no single server is in charge.

Practical Applications

Immediate Applications

Below are deployable use cases that can be built with today’s LLMs, existing P2P stacks (e.g., IPFS/OrbitDB), and standard agent frameworks, leveraging SHIMI’s hierarchical semantic retrieval and partial sync protocol.

- Semantic memory for agent frameworks (Software/AI tooling)

- Tools/Products: SHIMI SDK and Python library; adapters for LangChain/LlamaIndex and vector DBs (hybrid SHIMI+embedding store).

- Workflow: Replace flat vector stores with SHIMI for agent memory; use semantic descent for retrieval; fall back to embeddings when no path exists; log traversal paths for audit.

- Assumptions/Dependencies: Reliable LLM similarity/ontology prompts; tuning of T, L, δ, γ; modest data volumes for initial rollouts.

- Decentralized agent marketplace matching (Software/Web3)

- Tools/Products: Off-chain SHIMI index with on-chain anchors (Merkle roots); matching and reputation dashboards with explainable traces.

- Workflow: Agents publish capability summaries to local trees; marketplaces query SHIMI across peers via partial sync; matches include traversal justification.

- Assumptions/Dependencies: P2P connectivity; basic trust/reputation; agent self-descriptions; off-chain compute oracle.

- Federated knowledge indexing for research consortia (Academia/Healthcare)

- Tools/Products: SHIMI overlay on IPFS/OrbitDB; ontology adapters for SNOMED, ICD, MeSH; governance UI for merges/conflicts.

- Workflow: Each institution curates a local semantic tree; partial sync exchanges only divergent subtrees; CRDT merges preserve provenance and explanations.

- Assumptions/Dependencies: De-identified or consented data; institutional data-sharing agreements; alignment with controlled vocabularies.

- Explainable enterprise knowledge retrieval (Finance/Insurance/Legal)

- Tools/Products: SHIMI-backed enterprise search server; audit APIs exporting retrieval paths; compliance dashboards.

- Workflow: Ingest policies/regulations/case law into semantic trees; employees query and receive path-justified results; export trails for audits.

- Assumptions/Dependencies: Content governance; LLM guardrails; security controls and access policies.

- Helpdesk triage and routing (IT/Customer Support)

- Tools/Products: Routing engine powered by SHIMI; connectors for Zendesk/ServiceNow; ticket-to-playbook semantic mapping.

- Workflow: Tickets are summarized and inserted; retrieval maps issues to KB articles and automation bots via hierarchical concepts (e.g., device → OS → error).

- Assumptions/Dependencies: Ticket summarization quality; taxonomy seeding; integration with existing ITSM.

- Multi-agent MLOps registry (Software/ML platforms)

- Tools/Products: SHIMI-backed registry of models/datasets/endpoints; capability-based routing for inference services.

- Workflow: Register models with semantic capabilities and constraints; retrieval routes requests to suitable models with explainable matching.

- Assumptions/Dependencies: Accurate metadata; org-wide adoption; change management for model lifecycle.

- Code understanding and refactoring assistance (DevTools/Software)

- Tools/Products: IDE plugin indexing repositories into SHIMI; semantic navigation/refactor suggestions with path explanations.

- Workflow: Summarize functions/modules into trees (domain → component → pattern); queries traverse to relevant code regions; propose refactors.

- Assumptions/Dependencies: Reliable code-to-text summarization; repo-scale indexing; developer workflow integration.

- Educational content mapping to learning objectives (Education/EdTech)

- Tools/Products: LMS plugin; SHIMI-based curriculum map (objective → concept → activity).

- Workflow: Ingest syllabi and resources; semantic retrieval aligns student needs to targeted resources; educators see reasoning traces.

- Assumptions/Dependencies: Alignment with Bloom’s taxonomy or domain standards; quality of resource summaries.

- Cybersecurity knowledge and incident playbooks (Security/SOC)

- Tools/Products: SOC memory layer indexing ATT&CK techniques, detections, and IR steps; explainable alert enrichment.

- Workflow: Alerts semantically matched to tactics/techniques; retrieval returns prioritized steps with provenance paths.

- Assumptions/Dependencies: Up-to-date threat intel; high-quality playbooks; secure on-prem deployment.

- Supply chain document alignment and vendor onboarding (Logistics/Manufacturing)

- Tools/Products: SHIMI adapter for EDI/ERP; semantic alignment between supplier catalogs/specs and internal categories.

- Workflow: Vendors upload specs → local tree; partial sync with buyers exchanges only divergent nodes; explainable mappings reduce manual harmonization.

- Assumptions/Dependencies: Access to structured/unstructured docs; baseline category mapping; data-sharing policies.

- Smart-contract task boards and bounties (Web3/DAOs)

- Tools/Products: SHIMI index for tasks/bids; EVM integration with off-chain signatures of traversal proofs.

- Workflow: Tasks and proposals organized semantically; retrieval surfaces best-fit contributors with transparent criteria.

- Assumptions/Dependencies: Off-chain compute; decentralized identity and reputation; gas-efficient anchoring.

- Personal knowledge base across devices (Daily life/Edge)

- Tools/Products: Local-first SHIMI app for phone/laptop; peer-to-peer partial sync using Bloom filters; optional encrypted cloud relay.

- Workflow: Notes/emails/bookmarks inserted; queries traverse personal concept trees; devices sync only changed subtrees.

- Assumptions/Dependencies: Small-footprint LLM or API; encryption and key management; user consent and privacy controls.
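The agent-framework workflow listed first above (semantic descent for retrieval, fallback to embeddings when no path exists, traversal paths logged for audit) can be sketched in Python. Assumptions: `descend` stands in for a SHIMI tree walk, bag-of-words cosine similarity stands in for a real embedding model, and the audit log is a list of JSON lines. All names are hypothetical.

```python
import json
import math
from collections import Counter
from typing import Callable, List, Tuple

# Hypothetical hook: a SHIMI-style tree walk returning (entities, concept path).
Descent = Callable[[str], Tuple[List[str], List[str]]]


def bag_of_words(text: str) -> Counter:
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve_with_fallback(query: str, descend: Descent, flat_docs: List[str],
                           audit_log: List[str], k: int = 1) -> List[str]:
    """Try semantic descent first; if it finds nothing, rank flat documents instead."""
    entities, path = descend(query)
    if entities:
        method, results = "semantic_descent", entities
    else:
        q = bag_of_words(query)
        method = "flat_fallback"
        results = sorted(flat_docs, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)[:k]
    # One JSON line per query so reviewers can see how each answer was reached.
    audit_log.append(json.dumps({"query": query, "method": method, "path": path}))
    return results


def toy_descent(query: str) -> Tuple[List[str], List[str]]:
    # Stand-in for a SHIMI tree walk that only knows about cats.
    if "cat" in query.lower().split():
        return ["feline nutrition guide"], ["Knowledge", "Science", "Animals", "Cats"]
    return [], ["Knowledge"]


docs = ["mortgage rates explained", "reef restoration methods"]
log: List[str] = []
print(retrieve_with_fallback("cat diet", toy_descent, docs, log))          # ['feline nutrition guide']
print(retrieve_with_fallback("reef restoration", toy_descent, docs, log))  # ['reef restoration methods']
print(json.loads(log[-1])["method"])                                       # flat_fallback
```

Logging the partial path even on fallback records how far the descent got before the tree stopped matching, which is useful when tuning thresholds.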

Long-Term Applications

These require further research, scaling, or protocol evolution (e.g., polyhierarchies/graphs, on-device LLMs, privacy-preserving semantics, adversarial robustness).

- Cross-institution healthcare semantic exchange (Healthcare)

- Tools/Products: SHIMI-backed federated EHR/clinical knowledge exchange; semantic provenance and CRDT merges across providers.

- Workflow: Hospitals maintain local trees (conditions → interventions → outcomes); partial sync shares validated abstractions and de-identified insights.

- Assumptions/Dependencies: HIPAA/GDPR compliance; strong de-identification; alignment with clinical ontologies; auditability and governance.

- Swarm robotics shared semantic memory (Robotics/Autonomy)

- Tools/Products: ROS2-compatible SHIMI module; on-device LLMs for semantic relation checks; real-time CRDT sync.

- Workflow: Robots learn environment/task abstractions locally; synchronize minimal divergent subtrees; plan cooperatively with explainable paths.

- Assumptions/Dependencies: Real-time constraints; robust wireless mesh; energy-efficient on-device inference; safety certification.

- Smart grid coordination via semantic agents (Energy)

- Tools/Products: Grid agent memory layer capturing assets, constraints, and market signals; explainable dispatch decisions.

- Workflow: Distributed agents negotiate/load-balance using semantically aligned goals; partial sync ensures local views converge.

- Assumptions/Dependencies: Regulatory approval; cyber-physical safety; latency tolerance; interoperability with SCADA.

- Financial compliance ledger with explainable AI memory (Finance/RegTech)

- Tools/Products: SHIMI-based compliance graph anchored to a blockchain; zero-knowledge proofs of retrieval paths.

- Workflow: Policies, decisions, and evidence are indexed; audits verify decisions via cryptographically committed semantic traces.

- Assumptions/Dependencies: ZK proof systems for semantic steps; industry standards; regulator acceptance.

- Global, decentralized research knowledge commons (Academia/Policy)

- Tools/Products: Open SHIMI protocol for semantic synchronization; contributor reputation and merge governance.

- Workflow: Labs maintain local trees of findings/datasets; partial sync and dispute resolution build a convergent, explainable knowledge map.

- Assumptions/Dependencies: Incentive structures; governance frameworks; anti-spam/adversarial defenses.

- Privacy-preserving semantic sync (Privacy Tech)

- Tools/Products: Differential privacy or secure enclaves for semantic summaries; private set intersection for Bloom-like filtering.

- Workflow: Exchange of abstracted, noise-added summaries enabling utility without sensitive leakage.

- Assumptions/Dependencies: Formal privacy guarantees; performance overhead management; policy alignment.

- Polyhierarchical/graph extension of SHIMI (Software/Knowledge graphs)

- Tools/Products: SHIMI-G (DAG/graph version) supporting multiple parents, cycles, and richer relations.

- Workflow: Generalize merge and traversal to graphs; retain explainability via multi-path traces; update sync/CRDT semantics.

- Assumptions/Dependencies: New algorithms for conflict resolution on graphs; complexity control; UI for multi-parent explanations.

- Adversarially robust semantic merging (Security/AI Safety)

- Tools/Products: Verification layers that detect prompt injection and semantic poisoning; hybrid symbolic checks for merges.

- Workflow: Pre-merge validation, anomaly detection on summaries, human-in-the-loop approvals for sensitive nodes.

- Assumptions/Dependencies: Benchmarks/datasets for semantic attacks; certified LLM components; operational playbooks.

- Edge/offline deployments with tiny LLMs (Edge/IoT)

- Tools/Products: Quantized on-device models for GetRelation and similarity checks; bandwidth-aware sync strategies.

- Workflow: Devices operate offline with local semantics; opportunistic partial sync when connectivity returns.

- Assumptions/Dependencies: Model compression; hardware accelerators; energy constraints.

- Cross-enterprise legal contract lifecycle network (Legal/Enterprise)

- Tools/Products: SHIMI network for clauses/precedents/obligations with provenance; negotiation assistants using semantic traversal.

- Workflow: Firms maintain local trees; selectively sync clause families; explainable contract diffs and risk mappings.

- Assumptions/Dependencies: Standardized clause taxonomies; confidentiality and access controls; partner adoption.
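Several of these applications depend on CRDT merges so that peers converge without a coordinator. The source describes SHIMI's conflict resolution only as CRDT-style; as an assumed illustration, one common state-based CRDT, a last-writer-wins map, shows the property that matters: replicas reach the same state regardless of sync order or repetition. Assumptions: each node record carries a Lamport timestamp and a replica ID, deletions are tombstones (`None`), and a replica never reuses a timestamp. `lww_merge` and `visible` are hypothetical names.

```python
from typing import Dict, Optional, Tuple

# node_id -> (lamport_time, replica_id, summary or None for a tombstone)
Entry = Tuple[int, str, Optional[str]]
State = Dict[str, Entry]


def lww_merge(a: State, b: State) -> State:
    """Keep, per node, the write with the highest (time, replica) stamp."""
    merged = dict(a)
    for node_id, entry in b.items():
        # Replica ID breaks timestamp ties, so every peer picks the same winner.
        if node_id not in merged or entry[:2] > merged[node_id][:2]:
            merged[node_id] = entry
    return merged


def visible(state: State) -> Dict[str, str]:
    """Live node summaries, hiding tombstoned (deleted) nodes."""
    return {k: v[2] for k, v in state.items() if v[2] is not None}


alice: State = {"cats": (1, "alice", "Cats: pet care"), "reefs": (2, "alice", "Coral reefs")}
bob: State = {"cats": (3, "bob", "Cats: pet care and diet"), "reefs": (4, "bob", None)}

m1 = lww_merge(alice, bob)
m2 = lww_merge(bob, alice)
print(m1 == m2)                  # True: sync order does not matter
print(lww_merge(m1, m1) == m1)   # True: re-syncing is harmless
print(visible(m1))               # {'cats': 'Cats: pet care and diet'}
```

Last-writer-wins is the simplest choice and silently discards the losing write; domains with governance requirements (healthcare, legal) may prefer a CRDT that keeps concurrent versions for review.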

Notes on common assumptions and dependencies

- LLM reliance: Core operations (GetRelation/similarity/merging) depend on LLM quality; domain-specific prompts and guardrails are needed.

- Governance and trust: Decentralized sync requires policies for merge conflicts, provenance, and abuse mitigation.

- Privacy and compliance: Sector deployments (healthcare/finance) need strong privacy, audit, and regulatory alignment.

- Infrastructure: Effective use of Merkle-DAGs, Bloom filters, and CRDTs presumes reliable P2P networks and content-addressed storage.

- Schema alignment: Bootstrapping benefits from existing ontologies and taxonomies; mapping/adaptation tools reduce onboarding friction.
