- The paper presents a theoretical framework predicting the formation of periodic channel structures in DNN weight morphologies independent of training data.

- Microscopic perturbations and feedback loops between interconnected layers drive the emergence of large-scale weight channels during early training.

- Empirical validations on synthetic and real datasets demonstrate that these emergent channels enhance training efficiency and feature extraction.

Emergent Weight Morphologies in Deep Neural Networks

Introduction

The study of emergent behaviors in deep neural networks (DNNs) is crucial both for understanding the internal workings of deep learning and for assessing the security risks associated with advanced AI systems. This paper investigates the emergence of weight morphologies during the training of DNNs, drawing an analogy to morphological structures in condensed matter physics. The authors develop a theoretical framework that predicts the formation of periodic channel structures within trained networks, independent of the training data.

Theoretical Framework

The proposed theoretical model treats DNNs as non-equilibrium systems composed of interacting units, each representing a local weight morphology. The emergent behaviors are derived from a formalism akin to methods in condensed matter physics, which provides a structured way to predict macroscopic behavior from microscopic interactions.

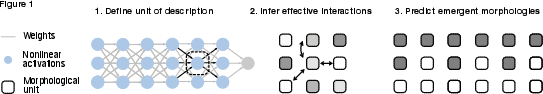

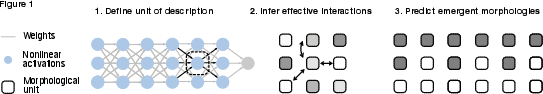

Figure 1: Illustration of the theoretical approach. As a first step, we define a unit of description that describes the local morphology of weights (dashed rectangle). We then infer effective interactions between these morphological units (arrow). The shading represents the value of the morphological unit. We finally predict emergent, large-scale morphological structures from these interactions.

The theory posits that the homogeneous initial state of fully connected feed-forward networks is morphologically unstable, leading to the emergence of channel-like structures characterized by large-scale order in weights throughout multiple layers.
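A generic linear stability argument, of the kind that is standard in pattern-formation theory, illustrates what "morphologically unstable" means here. The symbols below are assumptions made for this sketch and are not taken from the paper, whose actual equations are not reproduced in this summary: $m(x,t)$ is the value of the morphological unit at position $x$ within a layer, $m_0$ is the homogeneous initial state, $q$ is a wavenumber, and $\sigma(q)$ is the growth rate of a perturbation with that wavenumber.

```latex
% Small perturbation around the homogeneous state, decomposed into modes
m(x,t) = m_0 + \delta m(x,t), \qquad
\delta m(x,t) = \sum_{q} \hat{m}_q \, e^{\, i q x + \sigma(q)\, t}

% The homogeneous state is unstable if some mode grows
\exists\, q \neq 0 : \ \operatorname{Re} \sigma(q) > 0

% The fastest-growing mode sets the period of the emerging structure
q^{*} = \arg\max_{q} \operatorname{Re} \sigma(q), \qquad
\lambda^{*} = \frac{2\pi}{q^{*}}
```

Under these assumptions, a periodic structure appears when the maximum of $\operatorname{Re} \sigma(q)$ is positive and sits at a nonzero wavenumber, which is one way a periodic channel pattern could arise from a homogeneous start.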

Microscopic Dynamics and Predictions

The study begins at the microscopic level, assessing small perturbations in initial weights. These perturbations result in instabilities that induce the reorganization of weight morphologies into larger structures.

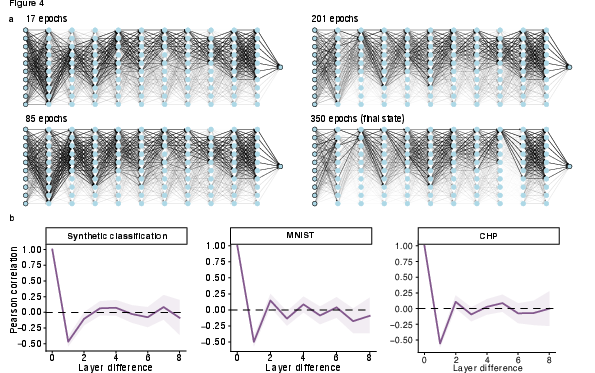

Figure 2: a Snapshots of a neural network at different stages during early training. The shade of the connecting lines denotes the relative absolute strength of the weight with respect to the maximum within each layer. The neural networks were trained on synthetic cluster data.
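The shading described in the caption can be sketched as a per-layer normalization. This is a minimal illustration, assuming each layer is stored as a NumPy weight matrix; the function name `relative_strength` and the random example weights are hypothetical and not from the paper.

```python
import numpy as np

def relative_strength(weights):
    """Scale |W| in each layer by that layer's largest absolute weight."""
    scaled = []
    for w in weights:
        abs_w = np.abs(w)
        peak = abs_w.max()
        # Guard against an all-zero layer to avoid dividing by zero.
        scaled.append(abs_w / peak if peak > 0 else abs_w)
    return scaled

# Hypothetical example: two small layers with random weights.
rng = np.random.default_rng(0)
layers = [rng.normal(size=(4, 3)), rng.normal(size=(3, 2))]
shades = relative_strength(layers)  # every entry lies in [0, 1]
```

Normalizing within each layer, rather than across the whole network, keeps the strongest connection of every layer at full shade even when layers differ in overall weight scale.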

The weight-update dynamics show significant coupling between connected weights in successive layers, which produces feedback loops that stabilize the emergent structures. Because weights grow roughly uniformly during training, these channel structures form consistently and predictably.
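The coupling between successive layers can be seen directly in the gradients of a toy model. The sketch below assumes a two-layer linear network with a squared-error loss, which is a simplification chosen for illustration and not the setup used in the paper; all names are hypothetical.

```python
import numpy as np

def loss(w1, w2, x, y):
    """Squared error of a two-layer linear network y_hat = w2 @ w1 @ x."""
    residual = w2 @ w1 @ x - y
    return 0.5 * float(residual @ residual)

def grad_w1(w1, w2, x, y):
    """Gradient of the loss with respect to the first-layer weights."""
    residual = w2 @ w1 @ x - y
    # The error is routed back through w2, so w2 scales every update of w1.
    return np.outer(w2.T @ residual, x)

def grad_w2(w1, w2, x, y):
    """Gradient of the loss with respect to the second-layer weights."""
    residual = w2 @ w1 @ x - y
    # The update of w2 is weighted by the hidden activation w1 @ x.
    return np.outer(residual, w1 @ x)

rng = np.random.default_rng(0)
x, y = rng.normal(size=3), rng.normal(size=2)
w1, w2 = rng.normal(size=(4, 3)), rng.normal(size=(2, 4))
g1 = grad_w1(w1, w2, x, y)

# Remove all outgoing weights of hidden unit 1: its incoming weights
# then receive no gradient at all.
w2_cut = w2.copy()
w2_cut[:, 1] = 0.0
g1_cut = grad_w1(w1, w2_cut, x, y)

# Remove all incoming weights of hidden unit 1: its outgoing weights
# then receive no gradient either.
w1_cut = w1.copy()
w1_cut[1, :] = 0.0
g2_cut = grad_w2(w1_cut, w2, x, y)
```

In this toy setting the incoming weights of a hidden unit are updated in proportion to its outgoing weights and vice versa, which is the kind of mutual reinforcement a feedback loop between layers requires.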

Numerical Validation

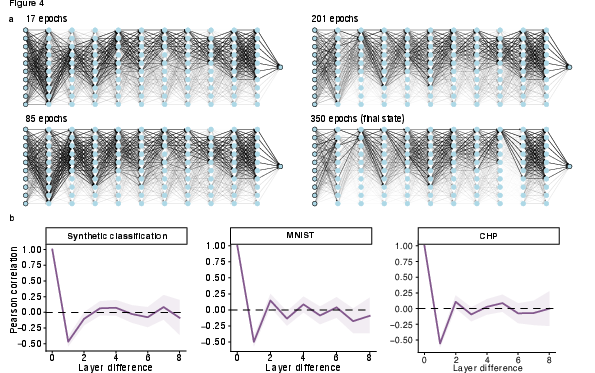

Numerous simulations across several datasets (synthetic cluster data, California Housing, and MNIST) support the theoretical predictions. Neural networks trained on these datasets consistently exhibit emergent channel structures early in training. Notably, these structures were determined by the training conditions and were independent of the specifics of the data.

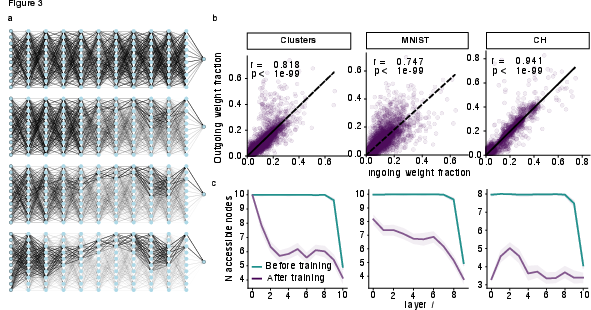

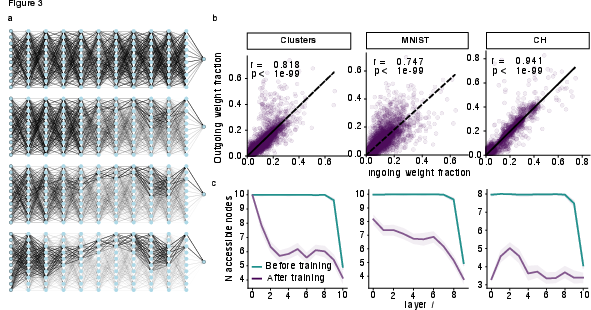

Figure 3: a Snapshots of a neural network at different stages after channel formation until the end of training. The neural networks were trained on synthetic cluster data. Line shades as in Fig. 3c.

The statistical analysis indicates that the emergence of channels is accompanied by a significant reduction in complexity and in weight dynamics, in good agreement with the predictions of the theoretical model. Moreover, channel formation is shown to be not strictly necessary for training, but beneficial for training efficiency and for the network's ability to generalize.

Functional Implications

One crucial implication of the research is that emergent weight morphologies contribute to the functional characteristics of the network, even though they are not tied to the specifics of the data. The formation of periodic channels changes the dimensionality of the data representations in intermediate layers, which facilitates better separability and compression as data passes through the network.

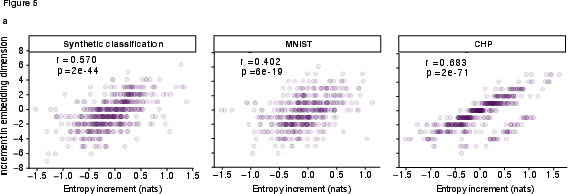

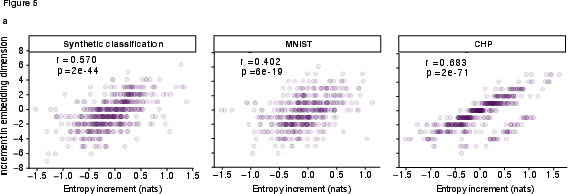

Figure 4: a To quantify the relation between the channel width and embedding dimensions, we computed the entropy increments from one layer to the next and plotted them against the corresponding change in embedding dimension. Each point thus corresponds to a comparison of two successive layers.
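The layer-to-layer comparison in the caption can be sketched as follows. The summary does not state how entropy or embedding dimension were defined, so both definitions here are assumptions: the Shannon entropy of a layer's normalized absolute weights, and the participation ratio of the activation covariance as a proxy for embedding dimension. All function names and the random example data are hypothetical.

```python
import numpy as np

def weight_entropy(w):
    """Shannon entropy of a layer's normalized absolute weights (assumed definition)."""
    p = np.abs(w).ravel()
    p = p / p.sum()
    p = p[p > 0]  # treat 0 * log(0) as 0
    return float(-(p * np.log(p)).sum())

def participation_ratio(activations):
    """Effective dimension (sum of eigenvalues)^2 / (sum of squared eigenvalues)."""
    centered = activations - activations.mean(axis=0)
    cov = centered.T @ centered / (len(activations) - 1)
    # Clip tiny negative eigenvalues caused by floating-point error.
    eig = np.clip(np.linalg.eigvalsh(cov), 0.0, None)
    return float(eig.sum() ** 2 / (eig ** 2).sum())

def layer_increments(weights, activations):
    """Pair the entropy change with the dimension change for successive layers."""
    h = [weight_entropy(w) for w in weights]
    d = [participation_ratio(a) for a in activations]
    return [(h[i + 1] - h[i], d[i + 1] - d[i]) for i in range(len(h) - 1)]

# Hypothetical data: three layers, with activations[i] recorded for layer i.
rng = np.random.default_rng(0)
weights = [rng.normal(size=(8, 8)) for _ in range(3)]
activations = [rng.normal(size=(100, 8)) for _ in range(3)]
points = layer_increments(weights, activations)  # one (dH, dD) pair per layer pair
```

Each tuple in `points` would be one point in a scatter plot of this kind. With real networks the weights and activations would come from the trained model rather than from random draws.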

Empirical results show that changes in channel morphology are associated with changes in the network's hidden data representations, which supports feature extraction and contributes to network performance.

Conclusion

This study extends our understanding of DNNs by highlighting the emergence of weight morphologies that occur independently of the training data. The documented emergence of channels and their influence on the function of neural networks underscore the importance of considering these structures in both theoretical analyses and practical implementations. These findings pave the way for further research into emergent properties in larger and more complex artificial neural networks, raising essential questions about the predictability and reliability of AI systems as they continue to evolve.