3D Face Tracking from 2D Video through Iterative Dense UV to Image Flow

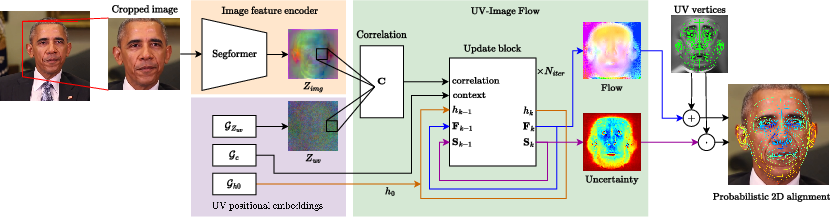

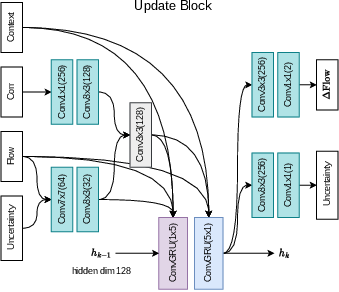

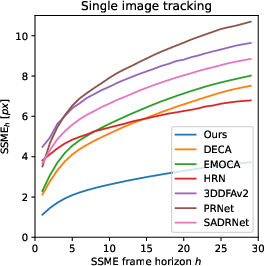

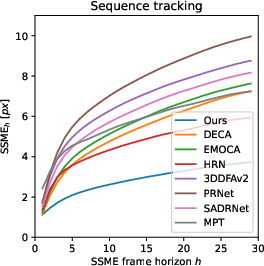

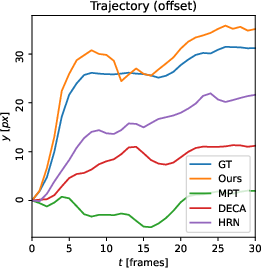

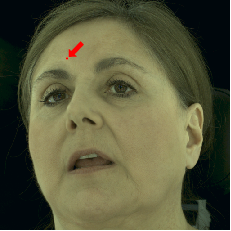

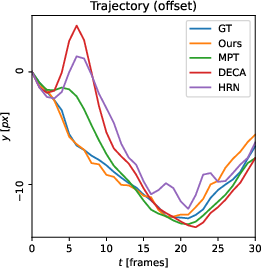

Abstract: When working with 3D facial data, improving fidelity and avoiding the uncanny valley effect is critically dependent on accurate 3D facial performance capture. Because such methods are expensive and due to the widespread availability of 2D videos, recent methods have focused on how to perform monocular 3D face tracking. However, these methods often fall short in capturing precise facial movements due to limitations in their network architecture, training, and evaluation processes. Addressing these challenges, we propose a novel face tracker, FlowFace, that introduces an innovative 2D alignment network for dense per-vertex alignment. Unlike prior work, FlowFace is trained on high-quality 3D scan annotations rather than weak supervision or synthetic data. Our 3D model fitting module jointly fits a 3D face model from one or many observations, integrating existing neutral shape priors for enhanced identity and expression disentanglement and per-vertex deformations for detailed facial feature reconstruction. Additionally, we propose a novel metric and benchmark for assessing tracking accuracy. Our method exhibits superior performance on both custom and publicly available benchmarks. We further validate the effectiveness of our tracker by generating high-quality 3D data from 2D videos, which leads to performance gains on downstream tasks.

- Stirling/esrc 3d face database. https://pics.stir.ac.uk/ESRC/. Accessed: 2023-10-25.

- A morphable model for the synthesis of 3d faces. In Proceedings of the 26th Annual Conference on Computer Graphics and Interactive Techniques, page 187–194, USA, 1999. ACM Press/Addison-Wesley Publishing Co.

- Instant multi-view head capture through learnable registration. In Conference on Computer Vision and Pattern Recognition (CVPR), pages 768–779, 2023.

- How far are we from solving the 2d & 3d face alignment problem? (and a dataset of 230,000 3d facial landmarks). In International Conference on Computer Vision, 2017.

- Stabilized real-time face tracking via a learned dynamic rigidity prior. ACM Trans. Graph., 37(6), 2018.

- Realy: Rethinking the evaluation of 3d face reconstruction, 2022.

- Hiface: High-fidelity 3d face reconstruction by learning static and dynamic details, 2023.

- Voxceleb2: Deep speaker recognition. In INTERSPEECH, 2018.

- Capture, learning, and synthesis of 3D speaking styles. In Proceedings IEEE Conf. on Computer Vision and Pattern Recognition (CVPR), pages 10101–10111, 2019.

- Emoca: Emotion driven monocular face capture and animation, 2022.

- Imagenet: A large-scale hierarchical image database. In 2009 IEEE conference on computer vision and pattern recognition, pages 248–255. Ieee, 2009.

- Accurate 3d face reconstruction with weakly-supervised learning: From single image to image set. In IEEE Computer Vision and Pattern Recognition Workshops, 2019.

- Faceformer: Speech-driven 3d facial animation with transformers. arXiv preprint arXiv:2112.05329, 2021.

- Learning an animatable detailed 3d face model from in-the-wild images. CoRR, abs/2012.04012, 2020.

- Reconstruction of personalized 3d face rigs from monocular video. ACM Trans. Graph., 35(3), 2016a.

- Corrective 3d reconstruction of lips from monocular video. ACM Trans. Graph., 35(6), 2016b.

- Neural head avatars from monocular rgb videos. arXiv preprint arXiv:2112.01554, 2021.

- Attention mesh: High-fidelity face mesh prediction in real-time. CoRR, abs/2006.10962, 2020.

- Towards Fast, Accurate and Stable 3D Dense Face Alignment, pages 152–168. 2020.

- Densereg: Fully convolutional dense shape regression in-the-wild, 2017.

- Deep residual learning for image recognition, 2015.

- Adam: A method for stochastic optimization. In 3rd International Conference on Learning Representations, ICLR 2015, San Diego, CA, USA, May 7-9, 2015, Conference Track Proceedings, 2015.

- A hierarchical representation network for accurate and detailed face reconstruction from in-the-wild images, 2023.

- Pose space deformation: A unified approach to shape interpolation and skeleton-driven deformation. In Proceedings of the 27th Annual Conference on Computer Graphics and Interactive Techniques, page 165–172, USA, 2000. ACM Press/Addison-Wesley Publishing Co.

- Learning a model of facial shape and expression from 4D scans. ACM Transactions on Graphics, (Proc. SIGGRAPH Asia), 36(6):194:1–194:17, 2017.

- Fixing weight decay regularization in adam. CoRR, abs/1711.05101, 2017.

- Survey on 3d face reconstruction from uncalibrated images. CoRR, abs/2011.05740, 2020.

- Voxceleb: Large-scale speaker verification in the wild. Computer Science and Language, 2019.

- Shape preserving facial landmarks with graph attention networks. In 33rd British Machine Vision Conference 2022, BMVC 2022, London, UK, November 21-24, 2022. BMVA Press, 2022.

- Towards realistic generative 3d face models, 2023.

- SADRNet: Self-aligned dual face regression networks for robust 3d dense face alignment and reconstruction. IEEE Transactions on Image Processing, 30:5793–5806, 2021.

- Mobilenetv2: Inverted residuals and linear bottlenecks, 2019.

- Learning to regress 3d face shape and expression from an image without 3d supervision. In Proceedings IEEE Conf. on Computer Vision and Pattern Recognition (CVPR), 2019.

- Self-supervised monocular 3d face reconstruction by occlusion-aware multi-view geometry consistency. arXiv preprint arXiv:2007.12494, 2020.

- RAFT: recurrent all-pairs field transforms for optical flow. CoRR, abs/2003.12039, 2020.

- Face2face: Real-time face capture and reenactment of rgb videos, 2020.

- Accurate 3d face reconstruction with facial component tokens. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023.

- Delving into high-quality synthetic face occlusion segmentation datasets. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, 2022.

- Mead: A large-scale audio-visual dataset for emotional talking-face generation. In ECCV, 2020.

- Prnet: Self-supervised learning for partial-to-partial registration, 2019.

- 3d face reconstruction with dense landmarks, 2022.

- An anatomically-constrained local deformation model for monocular face capture. ACM Trans. Graph., 35(4), 2016.

- Multiface: A dataset for neural face rendering. In arXiv, 2022.

- Segformer: Simple and efficient design for semantic segmentation with transformers. In Neural Information Processing Systems (NeurIPS), 2021.

- Codetalker: Speech-driven 3d facial animation with discrete motion prior, 2023.

- Facescape: a large-scale high quality 3d face dataset and detailed riggable 3d face prediction, 2020.

- Generating holistic 3d human motion from speech, 2023.

- Bisenet V2: bilateral network with guided aggregation for real-time semantic segmentation. CoRR, abs/2004.02147, 2020.

- CelebV-Text: A large-scale facial text-video dataset. In CVPR, 2023.

- S33{}^{3}start_FLOATSUPERSCRIPT 3 end_FLOATSUPERSCRIPTfd: Single shot scale-invariant face detector, 2017.

- I M avatar: Implicit morphable head avatars from videos. CoRR, abs/2112.07471, 2021.

- Pointavatar: Deformable point-based head avatars from videos. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023.

- Star loss: Reducing semantic ambiguity in facial landmark detection, 2023.

- Face alignment across large poses: A 3d solution. CoRR, abs/1511.07212, 2015.

- Instant volumetric head avatars. 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 4574–4584, 2022a.

- Towards metrical reconstruction of human faces, 2022b.

- State of the art on monocular 3d face reconstruction, tracking, and applications. 2018.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.