- The paper introduces CT-GAN, a framework that fuses structural (DTI) and functional (rs-fMRI) neuroimaging data using a bi-attention transformer to improve prediction of Alzheimer’s disease stages.

- Its architecture combines convolutional neural networks (CNNs) for functional feature extraction, graph convolutional networks (GCNs) for structural feature extraction, and a hybrid loss function, and it outperforms the compared methods in classification.

- Experiments on ADNI data show higher accuracy, sensitivity, and specificity than existing multimodal fusion methods, highlighting CT-GAN’s potential for detecting early Alzheimer’s stages.

The "Cross-Modal Transformer GAN" paper presents an approach that fuses neuroimaging data across modalities to predict Alzheimer's disease (AD) progression. Its architecture, CT-GAN, combines functional data from resting-state fMRI (rs-fMRI) with structural data from diffusion tensor imaging (DTI) through a bi-attention transformer mechanism.

Introduction

AD is difficult to characterize precisely because the brain operates as a complex network. The paper argues that both functional and structural connectivity must be captured to identify AD-related features accurately. Functional connectivity (FC) is conventionally derived from blood-oxygen-level-dependent (BOLD) signals in rs-fMRI, and structural connectivity (SC) from DTI. Most current methods analyze each modality in isolation and can therefore miss cross-modal information relevant to AD.
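As an illustration of the conventional FC construction mentioned above, the sketch below computes a Pearson-correlation FC matrix from ROI-averaged BOLD time series. It assumes the time series are already preprocessed and averaged within atlas ROIs; the 90-ROI AAL parcellation, the 130 time points, the random data, and the function name are assumptions for illustration, and the paper's exact FC pipeline may differ. SC from DTI requires tractography and is not shown.

```python
import numpy as np

def functional_connectivity(bold: np.ndarray) -> np.ndarray:
    """Pearson-correlation FC from ROI-averaged BOLD signals.

    bold: array of shape (n_timepoints, n_rois), one column per ROI.
    Returns a symmetric (n_rois, n_rois) matrix with ones on the diagonal.
    """
    # np.corrcoef treats rows as variables by default; rowvar=False makes it
    # correlate the columns (ROIs) across time instead.
    return np.corrcoef(bold, rowvar=False)

rng = np.random.default_rng(0)
bold = rng.standard_normal((130, 90))  # hypothetical: 130 volumes, 90 AAL ROIs
fc = functional_connectivity(bold)
print(fc.shape)
```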

CT-GAN addresses this gap by integrating a bi-attention mechanism into a transformer that fuses the two modalities within a generative adversarial network (GAN) framework. According to the paper, GANs offer flexible training and a simple architecture for learning complex data distributions, which suits the generative task of synthesizing connectivity from neuroimaging data.
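The summary does not give the exact bi-attention formulation. The following is a minimal sketch of one common way to realize bi-directional cross-attention between two modalities, assuming per-ROI feature matrices of equal width `d` for the functional and structural branches. The function names, the `params` dictionary of projection weights, the residual connections, and the concatenation step are assumptions, not the authors' code.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the maximum along the axis for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(q_in, kv_in, wq, wk, wv):
    """Scaled dot-product attention: queries from one modality,
    keys and values from the other."""
    q, k, v = q_in @ wq, kv_in @ wk, kv_in @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])
    return softmax(scores, axis=-1) @ v

def bi_attention(func, struct, params):
    """One bi-directional cross-attention step with residual connections.

    func, struct: (n_rois, d) per-ROI features from the functional and
    structural branches. params: hypothetical (wq, wk, wv) weights per direction.
    """
    f_upd = cross_attention(func, struct, *params["f"])  # functional attends to structural
    s_upd = cross_attention(struct, func, *params["s"])  # structural attends to functional
    return func + f_upd, struct + s_upd

rng = np.random.default_rng(0)
n_rois, d = 90, 16
params = {m: [0.1 * rng.standard_normal((d, d)) for _ in range(3)] for m in ("f", "s")}
func = rng.standard_normal((n_rois, d))
struct = rng.standard_normal((n_rois, d))
func_out, struct_out = bi_attention(func, struct, params)
# One hypothetical way to form a fused per-ROI representation: concatenation.
fused = np.concatenate([func_out, struct_out], axis=1)
print(fused.shape)
```

Letting each modality query the other, rather than attending only one way, is what makes the exchange of complementary information symmetric; stacking such steps would give the layer-wise fusion the paper describes.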

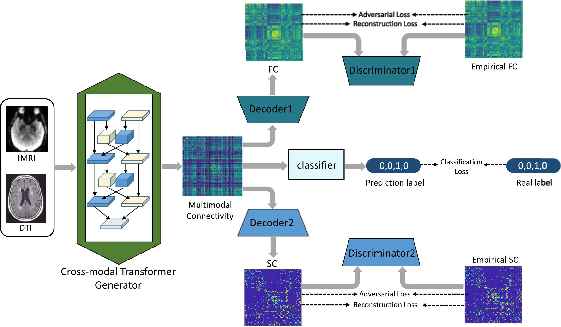

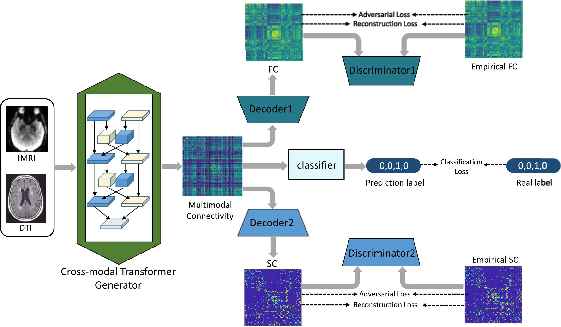

Figure 1: The framework of the proposed CT-GAN.

Methodology

Architecture Overview

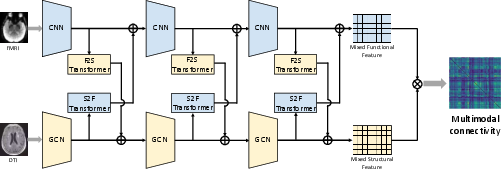

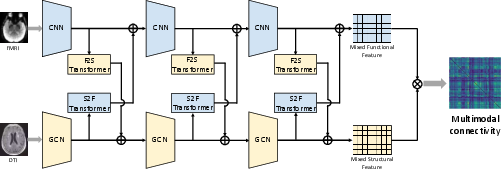

CT-GAN consists of a cross-modal transformer generator, separate decoders for functional and structural connectivity, a discriminator for each decoded modality, and a classifier that predicts the AD stage. Within the generator, CNNs extract functional features and GCNs extract structural features. The bi-attention mechanism fuses complementary information from the two branches layer by layer, producing a multimodal connectivity matrix.
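The paper states that GCNs handle the structural branch but this summary does not specify the layer type. The sketch below uses the widely used Kipf and Welling propagation rule, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), and treats the SC matrix as a weighted adjacency matrix; both choices, the random SC matrix, and the placeholder node features are assumptions.

```python
import numpy as np

def gcn_layer(adj, h, w):
    """One graph-convolution layer with symmetric normalization.

    adj: (n, n) non-negative structural connectivity used as the graph.
    h:   (n, f_in) node features.  w: (f_in, f_out) layer weights.
    """
    a_hat = adj + np.eye(adj.shape[0])           # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    # Elementwise scaling gives D^-1/2 (A + I) D^-1/2.
    a_norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(a_norm @ h @ w, 0.0)        # ReLU activation

rng = np.random.default_rng(1)
sc = rng.random((90, 90))
sc = (sc + sc.T) / 2                # hypothetical SC matrix, made symmetric
np.fill_diagonal(sc, 0.0)           # self-loops are added inside gcn_layer
h0 = rng.standard_normal((90, 32))  # placeholder node features
w0 = 0.1 * rng.standard_normal((32, 16))
h1 = gcn_layer(sc, h0, w0)
print(h1.shape)
```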

Figure 2: The network architecture of proposed generator.

Loss Function

A hybrid loss function combining adversarial, classification, and connectivity reconstruction terms is used to make the model more robust. The adversarial losses encourage realistic multimodal connectivity matrices by aligning the decoded connectivity with empirical SC and FC data. The classification loss optimizes prediction of the AD stages: normal control (NC), early mild cognitive impairment (EMCI), late mild cognitive impairment (LMCI), and AD. The reconstruction losses impose topological constraints on the generated connectivity.
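The paper names the three loss components, but their exact form and weighting are not given here. The sketch below shows one plausible generator-side combination, assuming binary cross-entropy for the two adversarial terms, cross-entropy for the four-class stage classifier, and an L1 (mean absolute error) reconstruction term. The weights `lam_adv`, `lam_cls`, `lam_rec` and the label encoding 0=NC, 1=EMCI, 2=LMCI, 3=AD are hypothetical.

```python
import numpy as np

EPS = 1e-12  # guards against log(0)

def bce(pred, target):
    """Binary cross-entropy between discriminator scores in (0, 1) and targets."""
    pred = np.clip(pred, EPS, 1.0 - EPS)
    return float(-np.mean(target * np.log(pred) + (1.0 - target) * np.log(1.0 - pred)))

def cross_entropy(probs, labels):
    """Mean negative log-probability of the true class; probs: (n, n_classes)."""
    picked = np.clip(probs[np.arange(len(labels)), labels], EPS, 1.0)
    return float(-np.mean(np.log(picked)))

def hybrid_loss(d_fc, d_sc, class_probs, labels, fc_rec, fc, sc_rec, sc,
                lam_adv=1.0, lam_cls=1.0, lam_rec=1.0):
    """Hypothetical weighted sum of adversarial, classification and
    reconstruction terms, seen from the generator's side."""
    # Adversarial: the generator wants both discriminators to score its
    # decoded FC and SC as real (target 1).
    adv = bce(d_fc, np.ones_like(d_fc)) + bce(d_sc, np.ones_like(d_sc))
    # Classification over the four stages (assumed encoding 0..3).
    cls = cross_entropy(class_probs, labels)
    # Reconstruction: L1 distance between decoded and empirical connectivity.
    rec = float(np.mean(np.abs(fc_rec - fc)) + np.mean(np.abs(sc_rec - sc)))
    return lam_adv * adv + lam_cls * cls + lam_rec * rec

labels = np.array([0, 1, 2, 3])
fc = np.zeros((4, 90, 90))
sc = np.zeros((4, 90, 90))
loss = hybrid_loss(np.full(4, 0.7), np.full(4, 0.6), np.full((4, 4), 0.25),
                   labels, fc + 0.05, fc, sc, sc)
print(loss)
```

In a full GAN setup the discriminators would be trained with their own loss (real samples labeled 1, generated samples 0); only the generator's objective is shown here.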

Experimental Results

Experiments were performed on ADNI data comprising rs-fMRI and DTI scans from 268 subjects. CT-GAN achieved higher classification accuracy (ACC), sensitivity (SEN), and specificity (SPEC) than the existing multimodal fusion approaches it was compared with. The results indicate that the bi-attention mechanism extracts and exploits complementary cross-modal information effectively, improving the prediction of AD stages.
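For reference, for a binary comparison (for example NC vs. AD, with the later stage treated as the positive class), ACC, SEN, and SPEC are conventionally computed from confusion counts as in the sketch below. Which stage pairs the paper evaluates, and how, is not stated in this summary, so the pairing, the example labels, and the function name are assumptions.

```python
import numpy as np

def binary_metrics(y_true, y_pred, positive=1):
    """Return (accuracy, sensitivity, specificity) for a binary task."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = np.sum((y_pred == positive) & (y_true == positive))
    tn = np.sum((y_pred != positive) & (y_true != positive))
    fp = np.sum((y_pred == positive) & (y_true != positive))
    fn = np.sum((y_pred != positive) & (y_true == positive))
    acc = (tp + tn) / len(y_true)
    sen = tp / (tp + fn) if tp + fn else float("nan")   # true-positive rate
    spec = tn / (tn + fp) if tn + fp else float("nan")  # true-negative rate
    return float(acc), float(sen), float(spec)

# Hypothetical labels: 1 = later stage (e.g. AD), 0 = earlier stage (e.g. NC).
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 0, 0, 0, 1, 0, 1]
print(binary_metrics(y_true, y_pred))
```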

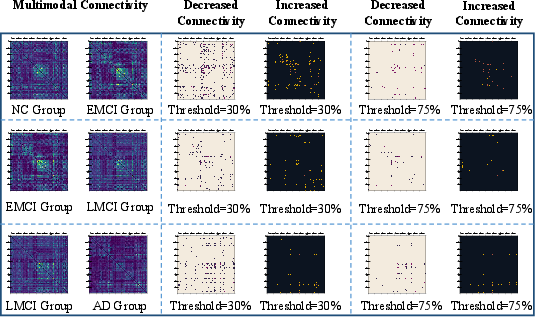

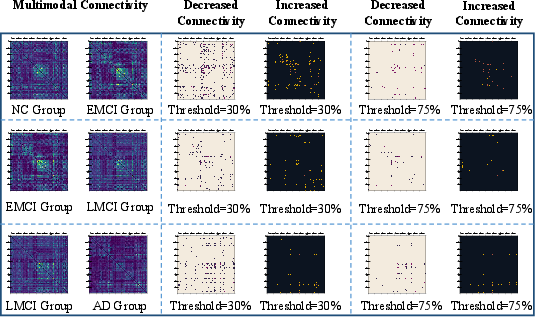

Figure 3: Averaged multimodal connectivity for each disease-stage group, and how this connectivity changes as AD progresses.
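The group averages in Figure 3 can be sketched as follows, assuming each subject's generated multimodal connectivity matrix is available as a NumPy array. Taking the "changes" as differences between consecutive stages (later minus earlier) is an assumption about how the figure was built, and the random data and function names are hypothetical.

```python
import numpy as np

STAGES = ["NC", "EMCI", "LMCI", "AD"]

def group_means(conn, labels):
    """Average connectivity per disease stage.

    conn: (n_subjects, n_rois, n_rois); labels: stage name per subject.
    """
    labels = np.asarray(labels)
    return {s: conn[labels == s].mean(axis=0) for s in STAGES if np.any(labels == s)}

def stage_changes(means):
    """Differences between consecutive stages present (later minus earlier)."""
    present = [s for s in STAGES if s in means]
    return {f"{a}->{b}": means[b] - means[a] for a, b in zip(present, present[1:])}

rng = np.random.default_rng(2)
conn = rng.random((12, 90, 90))   # hypothetical: 12 subjects, 90 ROIs
labels = STAGES * 3               # three subjects per stage
changes = stage_changes(group_means(conn, labels))
print(sorted(changes))
```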

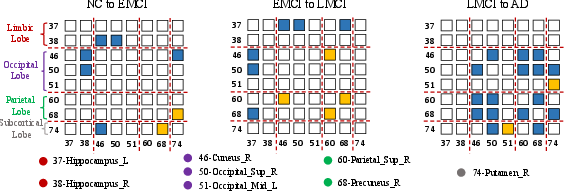

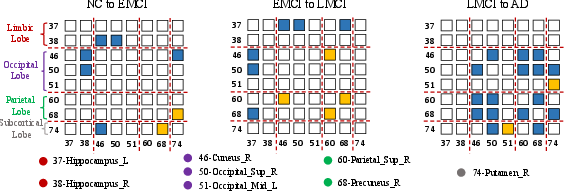

Figure 4: Changes in multimodal connectivity among the top 8 AD-related ROIs. The number before each ROI name is its AAL atlas ID. Blue indicates decreased connectivity; yellow indicates increased connectivity. Red dotted lines group the 8 ROIs by brain lobe.
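How the top 8 ROIs were chosen is not described here. The sketch below uses a hypothetical criterion, ranking ROIs by their total absolute connectivity change, and then lists the edges among the selected ROIs with the direction of change that Figure 4 encodes by color. It assumes row `r` of the change matrix corresponds to AAL ID `r + 1`; the function names and toy data are illustrative only.

```python
import numpy as np

def top_k_rois(delta, k=8):
    """Rank ROIs by total absolute connectivity change (hypothetical criterion)."""
    score = np.abs(delta).sum(axis=1)
    return np.argsort(score)[::-1][:k]

def signed_edges(delta, rois):
    """Edges among the selected ROIs with the sign of their change.

    Returns (aal_id_i, aal_id_j, direction) tuples, assuming row r of
    delta corresponds to AAL ID r + 1.
    """
    edges = []
    for pos, i in enumerate(rois):
        for j in rois[pos + 1:]:
            change = delta[i, j]
            if change > 0:
                edges.append((int(i) + 1, int(j) + 1, "increased"))  # yellow in Figure 4
            elif change < 0:
                edges.append((int(i) + 1, int(j) + 1, "decreased"))  # blue in Figure 4
    return edges

delta = np.zeros((90, 90))          # hypothetical stage-to-stage change matrix
delta[2, 5] = delta[5, 2] = 0.9
delta[2, 7] = delta[7, 2] = -0.4
top = top_k_rois(delta, k=3)
print(signed_edges(delta, top))
```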

Conclusion

CT-GAN integrates a bi-attention mechanism into a generative adversarial framework to fuse structural and functional neuroimaging data, a promising direction for AD research. Its predictive accuracy exceeds that of the compared models, supporting the utility of the approach. Although the work focuses on AD, the design could extend to other neurodegenerative disorders, making it a flexible tool for brain network analysis.

In short, this work opens a path for future research in cross-modal data fusion, combining the strengths of GANs and transformers to analyze complex neuroimaging data and to better understand and predict the progression of neurodegenerative conditions.