Unable to Forget: Proactive Interference Reveals Working Memory Limits in LLMs Beyond Context Length

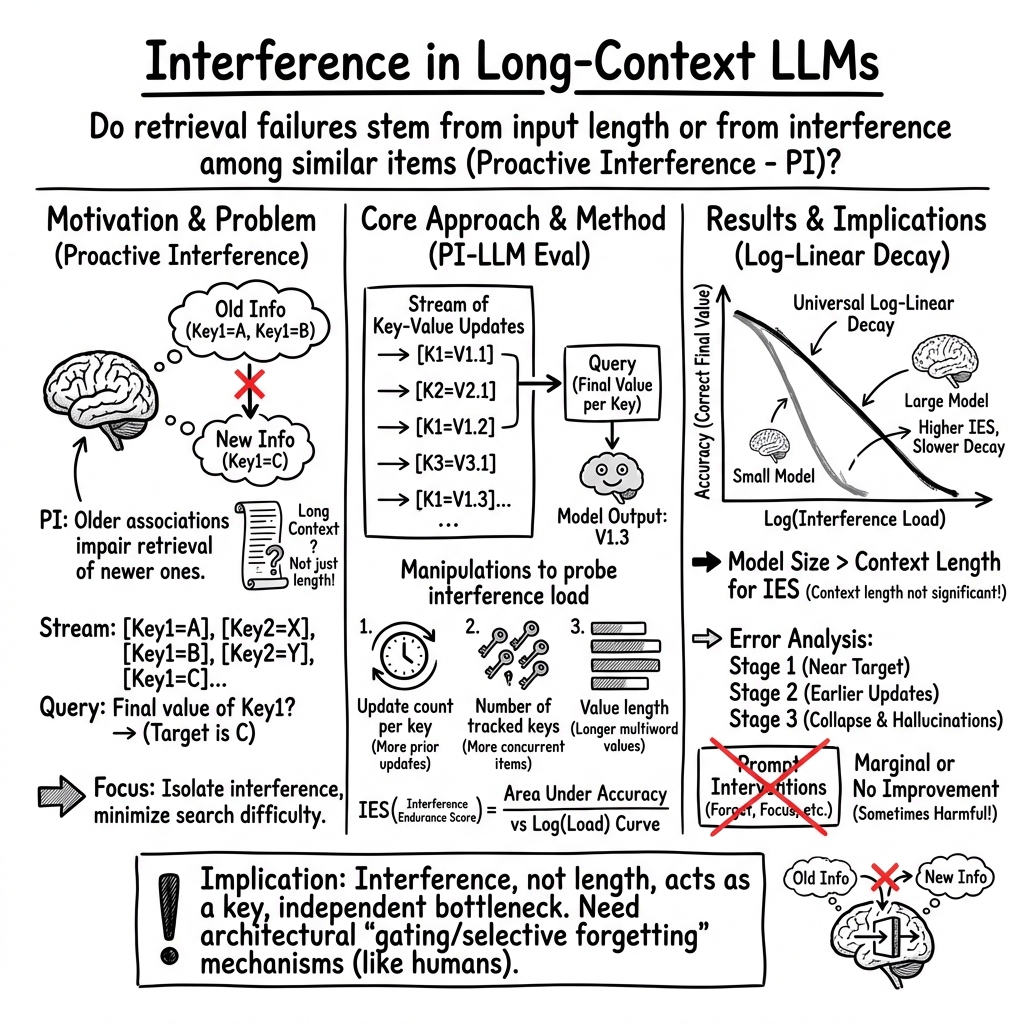

Abstract: Information retrieval in LLMs is increasingly recognized as intertwined with generation capabilities rather than mere lookup. While longer contexts are often assumed to improve retrieval, the effects of intra-context interference remain understudied. To address this, we adapt the proactive interference (PI) paradigm from cognitive science, where earlier information disrupts recall of newer updates. In humans, susceptibility to such interference is inversely linked to working memory capacity. We introduce PI-LLM, an evaluation that sequentially streams semantically related key-value updates and queries only the final values. Although these final values are clearly positioned just before the query, LLM retrieval accuracy declines log-linearly toward zero as interference accumulates; errors arise from retrieving previously overwritten values. Attempts to mitigate interference via prompt engineering (e.g., instructing models to ignore earlier input) yield limited success. These findings reveal a fundamental constraint on LLMs' ability to disentangle interference and flexibly manipulate information, suggesting a working memory bottleneck beyond mere context access. This calls for approaches that strengthen models' ability to suppress irrelevant content during retrieval.

Explain it Like I'm 14

Plain-language summary of “Unable to Forget: Proactive Interference Reveals Working Memory Limits in LLMs Beyond Context Length”

1) What is this paper about?

This paper looks at how LLMs, like chatbots, remember and pull out information from long pieces of text. The authors show that the biggest problem isn’t just how much text a model can read at once (its “context window”), but how easily the model gets confused when many similar pieces of information appear—especially when older facts get replaced by newer ones. They call this confusion “interference,” and they borrow ideas from human memory research to study it.

Imagine your friend changes their phone number several times. When asked for the current number, you might accidentally recall an old one. That mix-up is interference. The paper tests whether LLMs have a similar problem—and finds they do, in a big way.

2) What questions were the researchers asking?

In simple terms, they asked:

- Do LLMs struggle to pick the newest, correct piece of information when lots of similar, older information appears before it?

- Is this struggle mainly caused by too much text (length), or by interference from similar items?

- Do bigger models handle interference better?

- Can we fix the problem by telling the model (in the prompt) to “ignore the old stuff”?

- Is there a single “working memory–like” limit in LLMs that gets used up by different kinds of interference?

3) How did they test it?

They built a simple, controlled task inspired by human memory experiments:

- The model is given a stream of key–value updates, like:

- BP: 120 → later → BP: 128 → later → BP: 125

- The task is to report the latest value (here: “BP: 125”).

- This setup keeps “search” easy (the correct answer is always the last update for that key) and focuses on interference (old values that can cause confusion).
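The task described above can be sketched as a small generator. This is an illustration only, not the authors' benchmark code: the function names, the prompt wording, and the choice to shuffle updates from different keys together are all assumptions.

```python
import random

def build_update_stream(keys, values_by_key, n_updates, seed=0):
    """Return (lines, final_values) for a stream of key-value updates.

    Assumption: updates for different keys are shuffled together; the
    paper's exact ordering scheme is not specified in this summary.
    """
    rng = random.Random(seed)
    # One schedule slot per (key, update); shuffling interleaves the keys.
    schedule = [key for key in keys for _ in range(n_updates)]
    rng.shuffle(schedule)

    lines, final_values = [], {}
    for key in schedule:
        # Skip the key's current value so every line is a real change.
        candidates = [v for v in values_by_key[key] if v != final_values.get(key)]
        value = rng.choice(candidates)
        lines.append(f"{key}: {value}")
        final_values[key] = value  # later updates overwrite earlier ones
    return lines, final_values

def build_prompt(lines, keys):
    """Append a question asking for the latest value of each key (hypothetical wording)."""
    question = "What is the current value of each key? Keys: " + ", ".join(keys)
    return "\n".join(lines) + "\n\n" + question

if __name__ == "__main__":
    pool = {"BP": ["120", "128", "125"], "HR": ["60", "70", "80"]}
    stream, truth = build_update_stream(["BP", "HR"], pool, n_updates=3)
    print(build_prompt(stream, ["BP", "HR"]))
    print("Ground truth:", truth)
```

Raising `n_updates` adds more overwritten values per key, and passing more keys adds more items to track, which correspond to the first two interference manipulations listed next.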

They tested interference in several ways:

- Increase how many times each key gets updated (more older values to confuse the model).

- Increase how many different keys are being tracked at once (more to juggle).

- Keep the total input length fixed but ask for the final values of more keys (so length doesn’t change, only interference does).

- Make each value longer (like turning “Apple” into “AppleOrangeBananaMango…”), which adds information load per item—similar to how longer words are harder for people to remember.

They measured accuracy (how often the model picked the correct, latest value) and created a score called the Interference Endurance Score (IES) to capture how well a model resists interference overall.
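The paper defines the IES as the area under the accuracy curve across log-scaled update counts. A minimal sketch of that idea follows. The base-2 logarithm, the trapezoid rule, and the normalization to the 0 to 1 range are assumptions made here for illustration; the paper's exact recipe may differ.

```python
import math

def interference_endurance_score(update_counts, accuracies):
    """Area under accuracy vs. log2(update count), divided by the log-range.

    Assumptions (not confirmed by the source): log base 2, trapezoid rule,
    and normalization so a model with perfect accuracy everywhere scores 1.0.
    """
    if len(update_counts) != len(accuracies) or len(update_counts) < 2:
        raise ValueError("need at least two matching (count, accuracy) points")
    points = sorted(zip(update_counts, accuracies))
    xs = [math.log2(n) for n, _ in points]
    ys = [acc for _, acc in points]
    if xs[-1] == xs[0]:
        raise ValueError("update counts must span more than one value")
    # Sum the trapezoids between neighbouring points on the log axis.
    area = sum(
        (x1 - x0) * (y0 + y1) / 2
        for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:])
    )
    return area / (xs[-1] - xs[0])
```

A higher score means accuracy stays high for longer as updates pile up. Because the x-axis is logarithmic, each doubling of the update count contributes equally to the score.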

4) What did they find, and why is it important?

Key findings (briefly explained):

- Retrieval accuracy drops predictably as interference grows:

- As more similar, older items appear, models get worse at picking the newest, correct item.

- This drop is steady and consistent across many models: accuracy falls roughly in a straight line when plotted against the logarithm of the amount of interference, and it keeps heading toward zero.

- The main errors are “old answer” mistakes:

- Models often return a previous value instead of the final one—just like proactive interference in humans (where old info gets in the way of new info).

- Under very heavy interference, models sometimes respond with values that never appeared (hallucinations).

- Bigger models resist interference better than smaller ones:

- The Interference Endurance Score is higher for larger models.

- The size of the context window (how much text a model can see) does not predict resistance to interference.

- Interference is not the same as input length:

- Even when the total input length is kept constant, increasing the number of items the model must track still hurts accuracy.

- This shows the problem isn’t just “too many tokens,” but “too many similar, competing items.”

- Longer values (more words per value) make the problem worse:

- As each item gets longer, accuracy drops sharply—mirroring a classic human memory effect where longer words are harder to remember.

- Prompting the model to “forget” or “focus” helps only a little:

- Instructions like “ignore earlier updates” or “pay attention to what comes next” barely reduce interference.

- Unlike humans, models don’t seem to flexibly suppress old, irrelevant items when told to.

Why this matters:

- Many real tasks require tracking the latest state (like logs, settings, or records). If models can’t reliably pick the newest update when there are many similar older ones, they can make simple but serious errors.

- The results suggest LLMs have a “working memory–like” bottleneck: not about how much they can read, but how well they can keep the right information active and ignore the rest.

5) What does this mean for the future?

- Simply giving models longer context windows isn’t enough. The real challenge is interference—teaching models to suppress or “unbind” old, outdated information when new information arrives.

- Model size helps, but even the best current models show strong interference effects.

- We may need new training strategies or architectures that handle memory more like humans do—using control mechanisms to forget or downweight irrelevant content.

- The paper’s Interference Endurance Score offers a practical way to compare models on this ability, which could become a standard benchmark alongside things like context length.

In short: LLMs can read a lot, but they’re not great at “forgetting” what’s no longer relevant. To make them more reliable, future research should focus on reducing interference—so models can keep track of the latest facts without getting tripped up by the past.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of concrete gaps and open questions that remain unresolved and could guide follow-up research.

External validity and task scope

- Generalization to real-world data is untested: validate PI effects on natural corpora (e.g., EHR logs, code edit histories, meeting notes, multi-document RAG contexts) with realistic noise, formatting, and heterogeneous schemas.

- Limited semantic diversity: current interference is induced via repeated exact keys and category-constrained values; test paraphrastic/synonymous keys, fuzzy matches, morpho-syntactic variants, and cross-lingual or cross-script interference.

- Structure and modality: examine whether PI patterns hold for structured values (JSON, tables), numeric/time-series updates, citations, and multimodal streams (text+tables, text+images).

- Interaction with retrieval tools: assess whether retrieval-augmented generation, memory modules, or vector-store chunking mitigate PI or merely shift the locus of interference.

Experimental controls and analysis

- Positional confounds: explicitly control and report the distribution of last-update positions (and distances) to disentangle PI from positional/recency biases beyond “fixed-length” conditions.

- Update scheduling: evaluate contiguous vs interleaved updates, variable inter-update gaps, burstiness, and curriculum-like segmentations to isolate factors driving interference.

- Decoding and prompting sensitivity: systematically vary decoding strategies (greedy, beam, temperature), output-format constraints, and prompt templates to quantify robustness of IES and decay slopes.

- Scoring robustness: clarify and test robustness of exact-match scoring versus tolerant matching (tokenization-aware, normalization, synonyms), to separate PI-driven errors from parsing/formatting mismatches.

- Language and tokenizer dependence: replicate in multiple languages and tokenizers (BPE vs unigram vs SentencePiece variants) to test whether word/token length effects are tokenizer artifacts.

Metrics, statistics, and scaling

- IES validation: provide formal goodness-of-fit for the claimed log-linear decay (fit statistics, confidence bands), and sensitivity of IES to choice of log base, range of update counts, and sampling noise.

- Variance explained: the regression explains ~26% of IES variance; identify additional predictors (pretraining data size/diversity, training compute, RLHF strength, pretraining objectives, positional encoding variants) via multivariate analyses.

- Capacity thresholds: define and report explicit capacity points (e.g., interference load at 90%→50%→10% accuracy) to enable standardized comparisons and scaling-law analyses.

- MoE hypothesis testing: directly measure active parameters, routing entropy, and expert overlap to test the conjecture that effective active capacity (not nominal parameters) governs interference tolerance.
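The goodness-of-fit and capacity-threshold items above could start from a simple least-squares fit of accuracy against the logarithm of the update count. The sketch below is one possible approach, not the paper's analysis; the function names and the base-2 logarithm are assumptions.

```python
import math

def fit_log_linear(update_counts, accuracies):
    """Least-squares fit of accuracy = intercept + slope * log2(update_count)."""
    xs = [math.log2(n) for n in update_counts]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(accuracies) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, accuracies))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

def r_squared(update_counts, accuracies, intercept, slope):
    """Share of accuracy variance explained by the fitted line."""
    mean_y = sum(accuracies) / len(accuracies)
    ss_tot = sum((y - mean_y) ** 2 for y in accuracies)
    ss_res = sum(
        (y - (intercept + slope * math.log2(n))) ** 2
        for n, y in zip(update_counts, accuracies)
    )
    return 1.0 - ss_res / ss_tot

def load_at_accuracy(intercept, slope, target):
    """Update count at which the fitted line crosses the target accuracy."""
    if slope >= 0:
        raise ValueError("fit shows no decay, so the threshold is undefined")
    return 2 ** ((target - intercept) / slope)
```

Calling `load_at_accuracy` with targets such as 0.9, 0.5, and 0.1 yields the kind of explicit capacity points proposed above, which can then be compared across models.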

Mechanistic understanding

- Causal mechanisms: perform mechanistic interpretability (attention head/MLP circuit analyses, probing, causal ablations) to identify how earlier values suppress later ones and why unbinding fails.

- Role of positional encodings: compare RoPE, ALiBi, linear attention, and learned positional schemes to isolate their contribution to PI.

- Theoretical account: develop and test a formal model explaining the observed log-linear decay (e.g., interference-as-softmax competition, retrieval noise models, signal-to-interference frameworks) and predict cross-condition transfers.

- Phase transition characterization: quantify the “phase change” to hallucinations (early-warning indicators, sharpness, hysteresis) and its dependence on load, noise, and decoding.

Mitigation strategies and training interventions

- Beyond prompts: evaluate architectural or algorithmic interventions (gating/unbinding modules, key–value memory slots, retrieval controllers, recurrent/segment-level resets, attention sparsification) that explicitly support selective forgetting.

- Training-time approaches: test pretraining/finetuning curricula for update-tracking, anti-interference contrastive objectives, supervised unbinding, or RL with interference-aware rewards.

- Tool-mediated strategies: assess whether planner/executor decompositions, iterative reading with scratchpads, or external memory APIs reduce PI under compute/time budgets.

- Cost–performance tradeoffs: systematically evaluate whether allowing more inference-time compute (CoT, multi-pass rereading) yields durable PI mitigation relative to latency/throughput constraints.

Human–LLM comparison

- Matched human baselines: run human experiments on the exact stimuli and protocols (including value-length manipulations) to directly quantify plateau effects and compare curves, not just cite prior literature.

- Instructional control: test whether structured, machine-targeted control signals (tags/markers) approximate human directed forgetting better than natural language, and whether models learn to obey them after finetuning.

- Individual-differences analogs: examine whether model-to-model variation in IES predicts performance on other WM-like tasks (list updating, n-back, binding/unbinding), mirroring human factor structure.

Evaluation protocol and reproducibility

- Benchmark release: publish datasets, generators, parsing/scoring code, and evaluation harnesses (including prompts and all decoding settings) for exact replication and extension.

- Stress limits: explore beyond current bounds (more than 46 keys, longer horizons) while ensuring models remain within context windows, to test whether any plateau emerges at extreme scales.

- Output normalization and error taxonomy: standardize error categories (prior-value retrieval, off-key, off-value hallucination) and report per-model distributions to facilitate comparative diagnostics.
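A standardized error taxonomy like the one proposed above could be implemented as a small classifier over the update history. The category names follow the list item; the matching rule (exact string match after trimming whitespace) and the function name are assumptions for illustration.

```python
def classify_answer(key, answer, history):
    """Label a model answer for one key.

    history: list of (key, value) pairs in the order they appeared.
    Matching is exact after stripping whitespace (an assumption; a real
    harness may need tokenization-aware or tolerant matching).
    """
    answer = answer.strip()
    values_for_key = [value for k, value in history if k == key]
    if not values_for_key:
        raise KeyError(f"key {key!r} never appeared in the history")
    if answer == values_for_key[-1]:
        return "correct"
    if answer in values_for_key:
        return "prior_value"   # an overwritten value for the same key
    if any(value == answer for _, value in history):
        return "off_key"       # a value that belongs to a different key
    return "hallucination"     # a value that never appeared at all
```

Counting these labels per model gives the per-model error distributions that the item above calls for.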

Applicability and broader impacts

- Downstream impacts: quantify PI effects in applied settings where “latest value” is critical (EHR triage, financial feeds, software configuration diffs) and estimate real-world error rates.

- Safety considerations: investigate whether PI-induced retrieval of outdated information contributes to harmful outputs and how safeguards (e.g., provenance tracing, freshness checks) can be integrated.

Practical Applications

Overview

Based on the paper’s findings that proactive interference (PI) within the prompt—not sheer context length—drives a log-linear collapse in LLM retrieval accuracy, the most practical applications revolve around (1) evaluating and selecting models by interference tolerance, and (2) redesigning data flows, prompts, and systems to reduce or externalize interference. Below are actionable use cases for industry, academia, policy, and daily life, grouped by deployment timeline.

Immediate Applications

These can be deployed now with existing models and tooling, focusing on process, engineering, and evaluation changes rather than architectural breakthroughs.

- Industry (Software, Enterprise AI): Interference-aware model evaluation and procurement

- What: Adopt PI-LLM-style tests and the Interference Endurance Score (IES) as core evaluation metrics for model selection and vendor RFPs.

- Tools/Workflows: Add a PI benchmarking stage in MLOps; publish IES scorecards; set task-specific IES thresholds.

- Assumptions/Dependencies: Access to models and benchmark code; compute to run PI tests; willingness to move beyond “context length” as a purchasing criterion.

- Industry (RAG Platforms, Data Engineering): Preprocessing to collapse update streams

- What: Pre-aggregate and keep only the latest value per key before passing content to the LLM (e.g., event-sourced “last-write-wins” compaction).

- Tools/Workflows: ETL transformers, stream processors, log compaction in Kafka/Fluentd; deduplication and conflict resolution policies.

- Assumptions/Dependencies: Reliable key identification; acceptable loss of historical detail for the task; domain schemas or ontologies.

- Industry (Agent Frameworks, Automation, Robotics): External, structured state instead of in-context “memory”

- What: Maintain live state in a key–value store or database; let the LLM read/write via tools or function calls rather than rely on raw text updates in the prompt.

- Tools/Workflows: Tool calling for get_state/set_state; typed state stores; state machines with deterministic updates; “source of truth” databases.

- Assumptions/Dependencies: Tool reliability and latency budgets; security/compliance for state access; engineering effort.

- Industry (RAG/Search): Recency- and freshness-aware retrieval

- What: Deduplicate by key; favor the most recent records/documents; time-decay ranking; enforce “latest-only” filters prior to generation.

- Tools/Workflows: Indexing with timestamps; ANN pipelines with freshness filters; post-retrieval dedup heuristics.

- Assumptions/Dependencies: Accurate metadata; consistent timestamps; risk of over-pruning if history is needed.

- Safety-Critical Domains (Healthcare, Finance, Legal): Freshness validation gates

- What: Guardrails that verify generated answers align with the most recent records for each key; flag or block outputs using stale values.

- Tools/Workflows: Post-generation validators cross-checking answers with a state store; explainability with cited timestamps/versions.

- Assumptions/Dependencies: Access to ground-truth systems; robust extraction of keys/values from LLM outputs; regulatory audit trails.

- Security/Testing (AppSec, Red-Teaming): Interference fuzzing for LLM apps

- What: Systematically inject semantically similar distractors to test whether apps return outdated or hallucinated values.

- Tools/Workflows: CI pipelines with PI-LLM-based fuzzers; fail builds on stale-answer rates exceeding thresholds.

- Assumptions/Dependencies: Test harness integration; representative distractor dictionaries; acceptance criteria aligned to business risk.

- Model Operations (Cost/Performance): Model routing by interference risk

- What: Route high-interference tasks to larger dense models (higher IES); avoid MoE when active parameter count is low for such tasks.

- Tools/Workflows: Traffic policies conditioned on “interference risk score” (e.g., many keys, frequent updates); dynamic model selection.

- Assumptions/Dependencies: Budget for larger models; empirical measurement of IES for candidates; task classification pipeline.

- Prompt/UX Design (Product, Support, Docs): Minimize concurrent tracked variables and value length

- What: Split tasks into batches; ask for one or few keys at a time; keep values concise; use structured tables with explicit “latest” markers.

- Tools/Workflows: Wizard flows; sequential Q&A per variable; schema-driven prompts (JSON with lastUpdatedAt).

- Assumptions/Dependencies: More interaction turns; user adoption; the paper shows prompt-only cues help marginally—structure and batching are key.

- Operations/Observability (SRE, ITSM, Customer Support): Rolling summarization with state carry-over

- What: Periodically summarize streams into compact current-state snapshots (explicitly overwriting outdated values) and pass only the snapshot to the LLM.

- Tools/Workflows: Scheduled summarizers; snapshot schemas; incremental updates to a shared state store.

- Assumptions/Dependencies: Summarizer quality; snapshot correctness; potential loss of nuance.

- Training/Data Strategy (Enterprise Fine-Tuning): Include PI tasks in evaluation and data curation

- What: Use synthetic PI datasets to screen fine-tuned models and discourage learning that conflates repeated cues.

- Tools/Workflows: Data generators for key–value updates; evaluation suites with interference axes (updates, keys, value length).

- Assumptions/Dependencies: Fine-tuning pipeline access; monitoring overfitting to synthetic distributions.

- Daily Life/Education: Effective prompt habits for dynamic information

- What: Provide only the final state; avoid long chains of overwritten details; ask the model to confirm that each output value cites its timestamp/version.

- Tools/Workflows: Templates with “Current values” sections; tabular prompts; per-item confirmation.

- Assumptions/Dependencies: User discipline; trade-off between completeness and clarity.

- Policy/Government Procurement: Update evaluation standards beyond “context length”

- What: Require interference-tolerance metrics (e.g., IES) for public-sector AI procurement; disclose PI performance alongside context size.

- Tools/Workflows: Benchmark annexes in RFPs; third-party verifications; acceptance tests.

- Assumptions/Dependencies: Stakeholder awareness; standardized benchmark kits.
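Several of the items above (update-stream compaction, rolling snapshots, "provide only the final state") share one core step: keep only the latest value per key before the text reaches the model. A minimal last-write-wins sketch follows. The record shape `(timestamp, key, value)` and the snapshot wording are assumptions, and real pipelines would need their own conflict-resolution policy.

```python
def compact_last_write_wins(records):
    """Collapse (timestamp, key, value) records to the latest value per key.

    Returns {key: (timestamp, value)}. Records may arrive out of order.
    """
    latest = {}
    for timestamp, key, value in records:
        current = latest.get(key)
        # ">=" lets the later-arriving record win when timestamps tie.
        if current is None or timestamp >= current[0]:
            latest[key] = (timestamp, value)
    return latest

def render_snapshot(latest):
    """Format the compacted state as a short block of text for a prompt."""
    return "\n".join(
        f"{key}: {value} (as of {timestamp})"
        for key, (timestamp, value) in sorted(latest.items())
    )

if __name__ == "__main__":
    log = [(1, "BP", "120"), (3, "BP", "125"), (2, "BP", "128"), (1, "HR", "70")]
    print(render_snapshot(compact_last_write_wins(log)))
```

The trade-off noted in the list still applies: the snapshot discards history, so this only fits tasks where the current state is all the model needs.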

Long-Term Applications

These require further research and development in model architectures, training, and standards.

- AI Research (Model Architecture): Working-memory mechanisms for unbinding and gating

- What: Incorporate explicit write/erase gates, selective attention decay, or key-scoped memory within transformers to suppress outdated associations.

- Tools/Workflows: Memory-augmented transformers; controller modules; sparse attention with interference-aware routing.

- Assumptions/Dependencies: Demonstrated gains on PI-LLM-like tests; training stability; compute budgets.

- AI Research (Training Objectives): Directed forgetting and recency-aligned learning

- What: Design loss functions or RLHF protocols that penalize stale retrieval and reward correct “latest-value” behavior under interference.

- Tools/Workflows: Curriculum learning with PI tasks; counterfactual training where earlier items become irrelevant; recency-weighted objectives.

- Assumptions/Dependencies: High-quality training signals; balancing retention vs. forgetting.

- Model Design (MoE Evolution): Increase active parameters or interference-aware expert routing

- What: Improve MoE routing so active capacity better matches interference-heavy inputs; hybrid dense+MoE regimes for critical spans.

- Tools/Workflows: Router training with PI signals; expert specialization for state tracking.

- Assumptions/Dependencies: Router generalization; cost/latency trade-offs.

- Hardware/Systems: Capacity scaling for interference robustness

- What: Co-design hardware and memory hierarchies to support larger effective active capacity (linked to higher IES).

- Tools/Workflows: Inference-time activation scaling; memory-optimized kernels.

- Assumptions/Dependencies: Economics of larger dense activations; energy constraints.

- Agent Platforms: Formally verified state management

- What: Agent frameworks where world state is maintained in typed stores with verification that outputs reflect the latest state.

- Tools/Workflows: Contracts over state transitions; static analysis; runtime monitors that reject stale reads.

- Assumptions/Dependencies: Developer adoption; formal method tooling.

- Sector Solutions (Healthcare, Finance, Legal): Certified “freshness-safe” assistants

- What: Assistants with guarantees (and audits) that clinical readings, market data, or contract clauses reflect the most recent records.

- Tools/Workflows: Freshness attestations, provenance tracing, policy engines gating outputs.

- Assumptions/Dependencies: Deep EHR/market/EDR integrations; regulatory frameworks; liability allocation.

- Standards/Policy: Interference robustness benchmarks as compliance norms

- What: Establish sector and cross-sector standards (e.g., NIST-like) for PI robustness; require disclosure and minimum performance levels.

- Tools/Workflows: Open PI-LLM test suites; certification bodies; public leaderboards.

- Assumptions/Dependencies: Industry consensus; governance funding.

- Security: Defenses against “interference poisoning”

- What: Detect and neutralize adversarial insertion of outdated/conflicting cues in prompts or retrieved context.

- Tools/Workflows: Context sanitizers, contradiction detectors, provenance scoring for segments.

- Assumptions/Dependencies: Low false positives; robust detection in open-ended text.

- Consumer/Personal AI: Privacy-preserving personal working memory with gating

- What: On-device or federated memory that can intentionally forget outdated personal facts and prioritize recency.

- Tools/Workflows: User-controlled memory policies; local state stores; per-key TTLs.

- Assumptions/Dependencies: On-device compute; strong privacy guarantees.

- Education/Science: Unified benchmarks connecting human and LLM working memory

- What: Cross-disciplinary programs to co-evolve cognitive theories and LLM architectures, using PI tasks to benchmark progress.

- Tools/Workflows: Shared datasets, human–LLM comparative studies; academic challenges.

- Assumptions/Dependencies: Funding; reproducibility infrastructure.

- Marketplace/MLOps: Task-to-model routing using standardized IES profiles

- What: Registries where models advertise IES and interference profiles; orchestration routes tasks accordingly.

- Tools/Workflows: Model metadata standards; policy engines in serving layers.

- Assumptions/Dependencies: Vendor participation; consistent scoring methodologies.
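Routing by IES profile, as described in the last item, could look like the sketch below: pick the cheapest model whose advertised score meets a task's requirement. The registry format, the field names, and every model name and number in the example are hypothetical placeholders, not measurements from the paper.

```python
def route_by_ies(models, required_ies):
    """Return the cheapest model whose advertised IES meets the requirement.

    models: list of dicts with 'name', 'ies', and 'cost' fields
    (a hypothetical registry format).
    """
    eligible = [m for m in models if m["ies"] >= required_ies]
    if not eligible:
        raise LookupError(f"no model advertises IES >= {required_ies}")
    return min(eligible, key=lambda m: m["cost"])

if __name__ == "__main__":
    # Placeholder entries for illustration only.
    registry = [
        {"name": "small-model", "ies": 0.40, "cost": 1.0},
        {"name": "medium-model", "ies": 0.60, "cost": 3.0},
        {"name": "large-model", "ies": 0.75, "cost": 10.0},
    ]
    print(route_by_ies(registry, required_ies=0.55)["name"])
```

This only works if scores are produced by a consistent methodology across vendors, which is the dependency the item itself names.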

Notes on feasibility across items:

- The paper finds prompt-only interventions have marginal effect; near-term gains hinge on data engineering, structured state, and model choice rather than clever instructions.

- Larger parameter count correlates with higher IES; budget and latency will influence deployment choices.

- Many applications depend on reliable extraction/identification of keys and timestamps; domain-specific schemas improve success.

- In safety-critical uses, freshness validation and external state should precede dependence on long-context recall.
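The freshness-validation idea in the notes above can be sketched as a post-generation check against an external state store. This assumes the keys and values have already been extracted from the model's output into a dictionary, which the notes identify as the harder part in practice; the function and parameter names are hypothetical.

```python
def validate_freshness(extracted, state_store):
    """Compare values extracted from a model answer with the source of truth.

    extracted:   {key: value} parsed from the model output (assumed given).
    state_store: {key: latest value} from the authoritative system.
    Returns a list of (key, reported, expected) mismatches; empty means pass.
    """
    problems = []
    for key, reported in extracted.items():
        expected = state_store.get(key)
        if expected is None:
            # The model mentioned a key the source of truth does not know.
            problems.append((key, reported, None))
        elif reported != expected:
            problems.append((key, reported, expected))
    return problems
```

A caller could block or flag the response whenever the returned list is non-empty, so a stale value never reaches the user unchecked.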

Glossary

- Anti-interference capacity: A model’s intrinsic limit on resisting distractors during retrieval before performance collapses. "This change in retrieval behavior resembles a phase transition: once the model’s anti-interference capacity is exhausted, it no longer retrieves plausible candidates, consistent with limited-resource theories of working memory failure."

- Anti-interference resources: The finite internal resources a model uses to suppress irrelevant or competing information. "Under fixed input length, increasing the number of simultaneously tracked keys leads to lower accuracy, in line with this log-linear pattern... tracked keys compete for a limited pool of anti-interference resources, which are rapidly depleted as their number grows."

- Area under the curve (AUC): An aggregate performance metric computed by integrating a curve (e.g., accuracy vs. interference) over a specified range. "The IES is defined as the area under the curve (AUC) of retrieval accuracy, calculated across log-scaled update counts."

- Bootstrapped 95% confidence intervals: Uncertainty estimates derived via resampling from data to approximate the variability of a statistic. "Error bars indicate bootstrapped 95% confidence intervals."

- CoT (Chain-of-Thought): A prompting or inference technique that elicits step-by-step reasoning or multi-step processing. "If applicable, model names were suffixed with “MoE” or “CoT”."

- Context window: The maximum span of tokens an LLM can attend to in a single input. "Statistical analyses across a broad range of LLMs show that larger models (i.e., with higher parameter counts) achieve substantially higher IES, whereas the nominal length of the context window has no significant effect."

- Dense models: Architectures in which all (or most) parameters are active for each input, as opposed to routing among expert subsets. "Comparison of retrieval accuracy between Mixture-of-Experts (MoE) and dense models."

- Directed forgetting: A cognitive process in which individuals intentionally discard specific information when instructed, reducing interference. "Two particularly relevant mechanisms are gating... and directed forgetting, where individuals intentionally discard certain information when explicitly instructed to do so"

- Executive control mechanisms: Cognitive processes that manage, update, and selectively attend to relevant information while suppressing interference. "Classic working memory studies attribute this resilience to executive control mechanisms."

- Gating: A control process that suppresses or discards outdated information as new information is encoded. "Two particularly relevant mechanisms are gating, which automatically suppresses or discards outdated information as new items are encoded"

- Hallucinations: Model outputs that assert information not present in the input or evidence. "The model increasingly returns values that never appeared in the prompt – so-called hallucinations."

- Interference Endurance Score (IES): A metric quantifying a model’s resistance to proactive interference, computed from accuracy across interference levels. "To disentangle the factors governing resistance to interference in LLMs, we introduced the Interference Endurance Score (IES) to quantify a model’s ability to resist proactive interference."

- Intra-context interference: Disruption arising from competing or similar information within the same input context. "While longer contexts are often assumed to improve retrieval, the effects of intra-context interference remain understudied."

- Log-linear: A relationship where performance changes approximately linearly with the logarithm of an independent variable (e.g., interference load). "LLM retrieval accuracy declines log-linearly toward zero as interference accumulates"

- Lost-in-the-Middle: A benchmark examining how the position of information within the prompt affects retrieval accuracy. "This synthetic key–value retrieval task is closely related to “Lost-in-the-Middle”"

- Mixture-of-Experts (MoE): An architecture that routes inputs to different expert subnetworks, activating only a subset of parameters per input. "Our benchmark covers both dense and Mixture-of-Experts (MoE) architectures, spanning diverse training data volumes and hardware resources."

- Needle-in-a-Haystack paradigm: A long-context evaluation setup where a small target item must be found within a large body of text. "Recent long-context benchmarks – most of which evolve from the original Needle-in-a-Haystack paradigm, such as DeepMind’s Michelangelo"

- Phase transition: An abrupt qualitative shift in system behavior after surpassing a critical threshold. "This change in retrieval behavior resembles a phase transition"

- Primacy bias: A tendency to favor or recall early-occurring items more strongly than later ones. "a substantial portion of errors remains anchored to the earliest bins, reflecting a persistent primacy bias toward the first few updates for each key, even as retrieval fidelity breaks down."

- Proactive interference (PI): A memory phenomenon where earlier information impairs recall of newer information associated with the same cue. "we adapt the proactive interference (PI) paradigm from cognitive science"

- Prompt engineering: The practice of designing and wording prompts to shape model behavior and outputs. "Attempts to mitigate interference via prompt engineering (e.g., instructing models to ignore earlier input) yield limited success."

- Recency bias: A tendency to favor recent information when making judgments or retrieving memories. "This demonstrates that neither recency bias nor explicit prompt cues are sufficient to overcome interference."

- Regression analysis: A statistical approach to model relationships between variables and assess predictive effects. "we conducted a regression analysis of the Interference Endurance Score (IES) against both variables."

- R-squared (R²): A measure of the proportion of variance explained by a statistical model. "R-squared value is derived from Spearman correlation."

- Spearman correlation: A nonparametric correlation measuring the strength of a monotonic relationship between ranked variables. "Within this range, the Spearman correlation between parameter size and IES remains strong and significant (, p = 0.0016; see Figure~\ref{fig:regression})."

- Unbinding mechanisms: Processes that decouple or discard outdated cue–value associations from working memory. "In contrast, we hypothesize that LLMs lack such unbinding mechanisms"

- Word-length effect: The decline in memory performance as the length of items to remember increases. "One such manipulation is the classic word-length effect: in human memory research, increasing the length of words to be remembered impairs performance"

- Working memory capacity: The limited ability to actively maintain and manipulate information over short time spans. "In humans, susceptibility to such interference is inversely linked to working memory capacity."
