- The paper introduces DynamicLimit-Exp, a novel method modeling human working memory growth to enhance syntactic learning in language models.

- It applies dynamic constraints through ALiBi to simulate a critical period for language acquisition and evaluates the method on AO-CHILDES and Wikipedia data.

- Experimental results validate the Less-is-More hypothesis, demonstrating improved grammatical learning and suggesting future multilingual applications.

Developmentally-plausible Working Memory Shapes a Critical Period for Language Acquisition

Introduction

The paper entitled "Developmentally-plausible Working Memory Shapes a Critical Period for Language Acquisition" (2502.04795) explores integrating the developmental characteristics of human working memory into LLMs to enhance their language acquisition efficiency. The research proposes a novel mechanism inspired by the Critical Period Hypothesis, particularly emphasizing the exponential growth of working memory during early language learning stages, and evaluates its impact through targeted syntactic benchmarks.

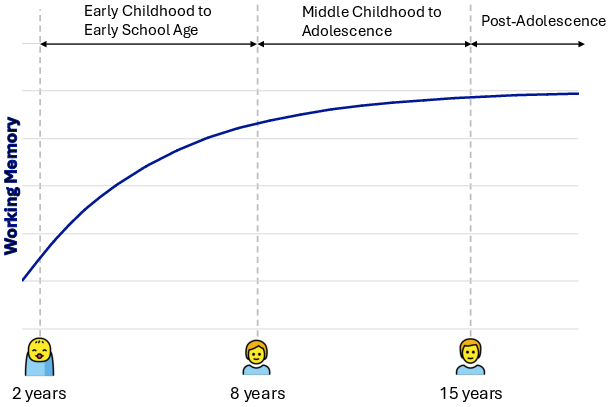

Human language acquisition is theorized to occur most efficiently during a biological "critical period," supported by psycholinguistic and neurobiological evidence [lenneberg1967biological]. The Less-is-More Hypothesis further suggests that cognitive limitations in early childhood, such as restricted working memory capacity, facilitate the extraction of fundamental language patterns, providing a learning advantage over adults with more developed cognitive faculties [NEWPORT199011].

Computational models have become indispensable in testing such hypotheses, offering controlled environments to simulate language learning processes. However, traditional models like GPT-2 often fail to naturally exhibit critical period phenomena, necessitating the incorporation of tailored mechanisms such as Elastic Weight Consolidation to artificially induce these effects [constantinescu2024investigatingcriticalperiodeffects].

Proposed Method: Dynamic Growing of Working Memory

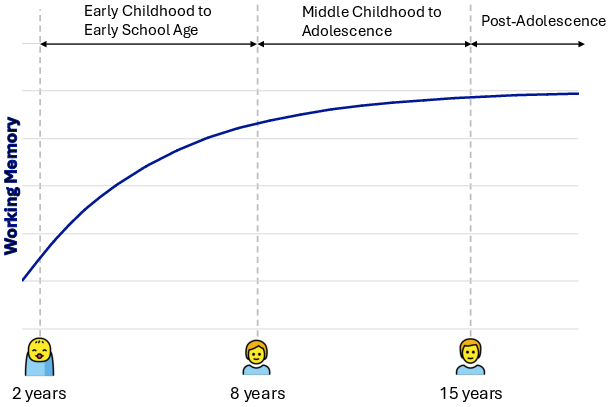

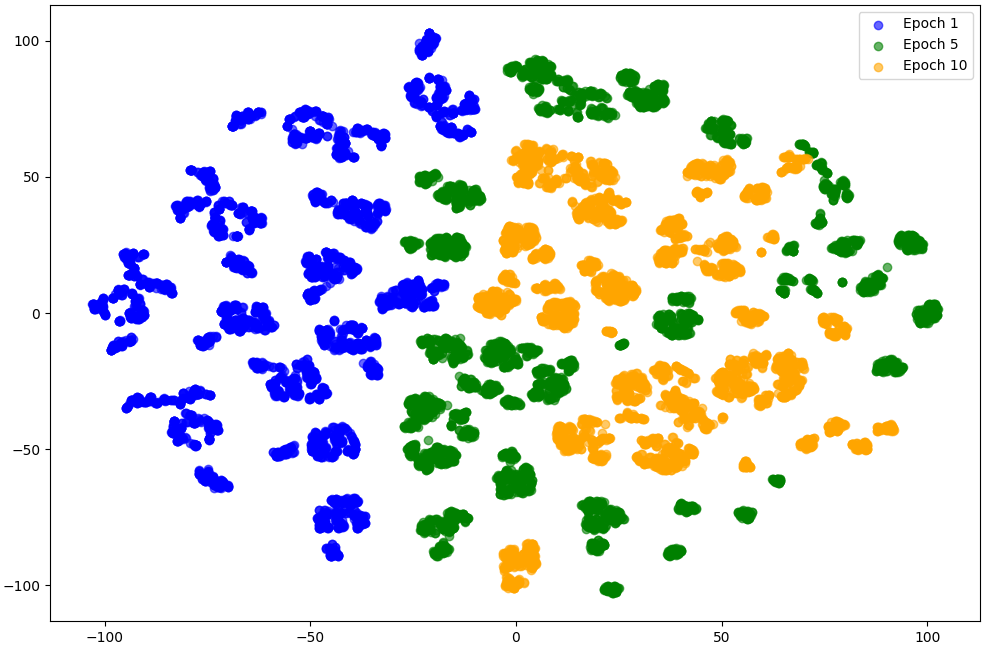

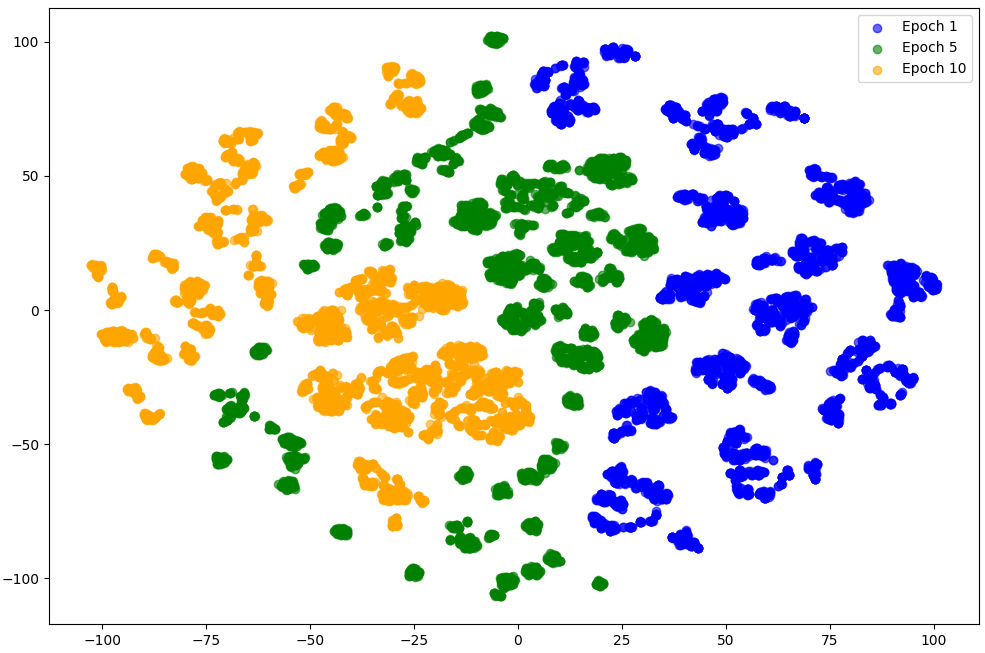

The proposed method, DynamicLimit-Exp, modifies neural language models by introducing constraints on working memory capacity that change over the course of training, mirroring the developmental trajectory observed in human cognition (Figure 1). It builds on Attention with Linear Biases (ALiBi), which penalizes attention scores for distant query-key pairs. The penalty initially enforces a strong recency bias and is then gradually relaxed, allowing the model to attend to progressively longer contexts.
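The mechanism described above can be illustrated with a minimal sketch. It assumes a single attention head, a causal mask, and an ALiBi slope that decays exponentially from a start value to an end value over training. The function names and the values `m_start=1.0` and `m_end=0.01` are hypothetical choices for illustration, not the paper's settings, and the exact schedule used in the paper may differ.

```python
import math

def slope_schedule(epoch, num_epochs, m_start=1.0, m_end=0.01):
    """Hypothetical schedule: the ALiBi slope decays exponentially
    from m_start (strong recency bias) to m_end (weak recency bias)."""
    if num_epochs < 2:
        return m_end
    frac = epoch / (num_epochs - 1)
    return m_start * (m_end / m_start) ** frac

def alibi_bias(seq_len, slope):
    """Causal ALiBi bias: -slope * (i - j) for key j <= query i,
    and -inf for future keys, which are masked out."""
    return [
        [-slope * (i - j) if j <= i else -math.inf for j in range(seq_len)]
        for i in range(seq_len)
    ]

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(scores, bias):
    """Add the distance bias to raw scores, then normalize each query row."""
    return [
        softmax([s + b for s, b in zip(score_row, bias_row)])
        for score_row, bias_row in zip(scores, bias)
    ]

# With identical raw scores, only the distance penalty shapes the attention.
seq_len = 4
flat_scores = [[0.0] * seq_len for _ in range(seq_len)]
for epoch in (0, 9):
    slope = slope_schedule(epoch, num_epochs=10)
    weights = attention_weights(flat_scores, alibi_bias(seq_len, slope))
    print(epoch, [round(w, 3) for w in weights[-1]])
```

Under these assumptions, the last query attends mostly to nearby tokens in the first epoch and spreads its attention almost evenly by the final epoch, which is the sense in which the model's working memory grows.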

Experimental Setup and Results

Experiments used a modified GPT-2 architecture trained on child-directed speech (AO-CHILDES) and on Wikipedia to evaluate the developmentally inspired learning schedule across different kinds of linguistic input [aochildes2021, huebner-etal-2021-babyberta]. Targeted syntactic evaluations on the Zorro benchmark showed that DynamicLimit-Exp models consistently outperformed static and unconstrained baselines, indicating more efficient grammatical learning (Table 1).
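Targeted syntactic benchmarks built from minimal pairs, such as Zorro, are typically scored by checking whether a model assigns a higher score to the grammatical sentence of each pair than to the ungrammatical one. The sketch below shows that accuracy computation. The function `minimal_pair_accuracy`, the example sentences, and the lookup-table scorer are hypothetical stand-ins: a real evaluation would use sentence log-probabilities from the trained model and the benchmark's own pairs.

```python
def minimal_pair_accuracy(pairs, score):
    """Fraction of (grammatical, ungrammatical) pairs for which the
    scorer prefers the grammatical sentence."""
    if not pairs:
        raise ValueError("at least one minimal pair is required")
    correct = sum(1 for good, bad in pairs if score(good) > score(bad))
    return correct / len(pairs)

# Made-up illustrative pairs; these are not taken from the Zorro benchmark.
pairs = [
    ("the dogs bark", "the dogs barks"),
    ("she walks home", "she walk home"),
]

# Made-up log-probabilities standing in for a language model's scores.
toy_log_probs = {
    "the dogs bark": -5.0,
    "the dogs barks": -7.5,
    "she walks home": -4.2,
    "she walk home": -4.0,
}

def toy_score(sentence):
    """Look up the stand-in log-probability of a sentence."""
    return toy_log_probs[sentence]

print(minimal_pair_accuracy(pairs, toy_score))
```

Here the toy scorer prefers the grammatical sentence in one of the two pairs, so the reported accuracy is one half.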

Performance improvements were particularly evident in models trained on AO-CHILDES data, and the gradual relaxation of working memory constraints appeared to facilitate pattern extraction irrespective of the linguistic input type (Table 2). This indicates that the improved learning efficiency derives not solely from exposure to child-directed language but also from the developmental schedule applied to the model's working memory.

Analysis of Cognitive Constraints

To isolate the effect of developmental working memory characteristics, a cognitively implausible baseline was introduced that reverses the direction of memory growth. The analysis supports the view that DynamicLimit-Exp's success is rooted in the Less-is-More hypothesis rather than input variability [NEWPORT199011], with the gradual expansion of memory proving vital for extracting and generalizing complex grammatical rules.

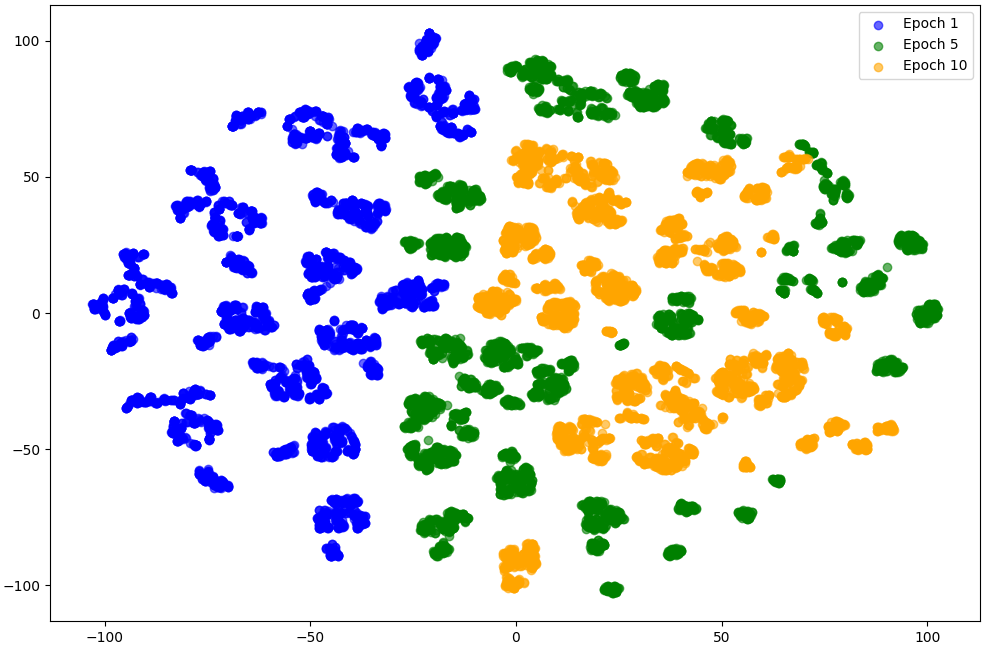

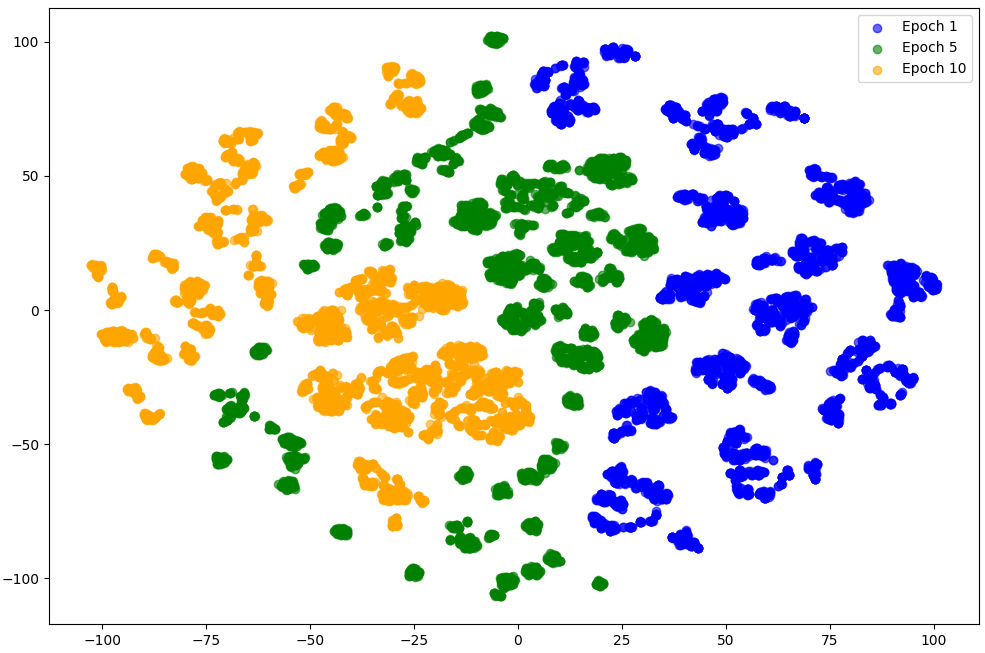

Further evaluation of feature extraction capabilities revealed sustained improvements in representation learning across training epochs. DynamicLimit-Exp maintained diverse embedding spaces with high entropy and isotropy, crucial for facilitating contextually adaptive learning (Figure 2, Table 3).
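The text does not say which isotropy measure was used. As one common proxy, the sketch below computes the mean pairwise cosine similarity of a set of embedding vectors: values near 1 mean the vectors crowd into a narrow cone (low isotropy), while values near or below 0 mean they spread across directions. The function names and the toy vectors are assumptions for illustration only.

```python
import math
from itertools import combinations

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def mean_pairwise_cosine(vectors):
    """Average cosine similarity over all unordered pairs of vectors."""
    pairs = list(combinations(vectors, 2))
    if not pairs:
        raise ValueError("at least two vectors are required")
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)

# Toy 2-D embeddings: one set spread across directions, one in a narrow cone.
spread = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
narrow = [[1.0, 0.1], [1.0, 0.2], [1.0, 0.0], [1.0, -0.1]]

print("spread:", round(mean_pairwise_cosine(spread), 3))
print("narrow:", round(mean_pairwise_cosine(narrow), 3))
```

On real model embeddings, the same function could be applied to a sample of token representations at each training stage to compare how concentrated the embedding space is.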

Implications and Future Directions

The incorporation of developmentally plausible cognitive constraints into LLMs offers new avenues for enhancing data efficiency and understanding language acquisition mechanisms. Future research should explore scalability to larger models and multilingual applications, leveraging benchmarks like Zorro across diverse linguistic contexts.

The proposed cognitive-inspired approach may also complement existing techniques that target syntactic smoothing and frequency bias reduction in pretraining models, potentially augmenting generalization capabilities [diehl-martinez-etal-2024-mitigating].

Conclusion

This study advances our understanding of language acquisition by integrating a developmental growth curve of human working memory into LLMs, improving efficiency in syntactic learning tasks. These findings suggest that adopting cognitive developmental patterns can enrich model architectures, providing more efficient learning strategies and insights into the unique attributes of human language processing.

Figure 3: Developmental trajectory of human working memory.

Figure 1: Trajectory of working memory capacity for each model (num. of epochs = 10).

Figure 2: Embedded space at each learning stage for NoLimit and DynamicLimit-Exp (CASE).