- The paper introduces Steinmetz neural networks that split complex inputs into separate real and imaginary subnetworks to create interpretable latent spaces.

- It employs a novel consistency constraint using the discrete Hilbert transform to enforce orthogonality and reduce generalization error.

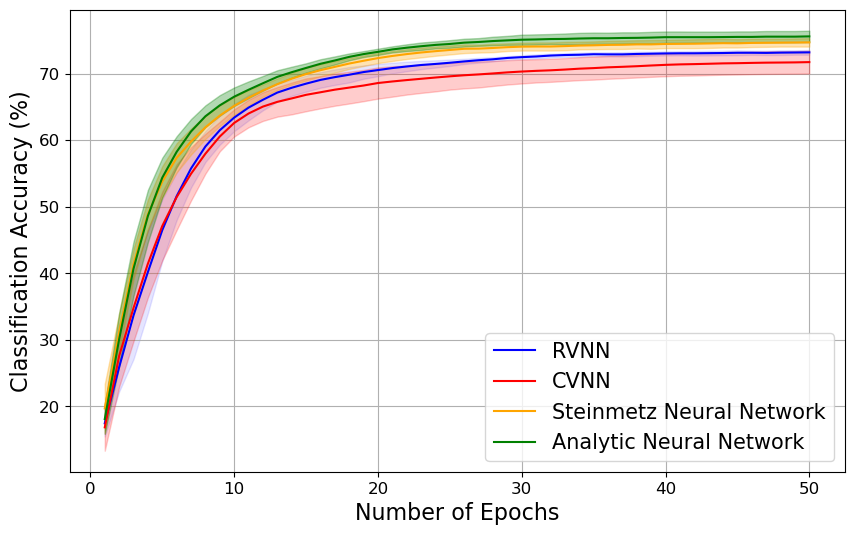

- Empirical results on benchmarks like complex-valued MNIST show that both Steinmetz and Analytic Neural Networks outperform traditional CVNNs and RVNNs.

Steinmetz Neural Networks for Complex-Valued Data

Introduction

The paper "Steinmetz Neural Networks for Complex-Valued Data" (2409.10075) introduces a novel approach to processing complex-valued data using deep neural networks (DNNs). The proposed Steinmetz neural networks consist of parallel real-valued subnetworks with coupled outputs, aiming to create more interpretable representations within the latent space through multi-view learning. The study addresses the challenges associated with complex-valued neural networks (CVNNs), such as higher computational costs and instability in optimization, by using a real-valued architecture that can still handle complex data efficiently.

Neural Network Architecture

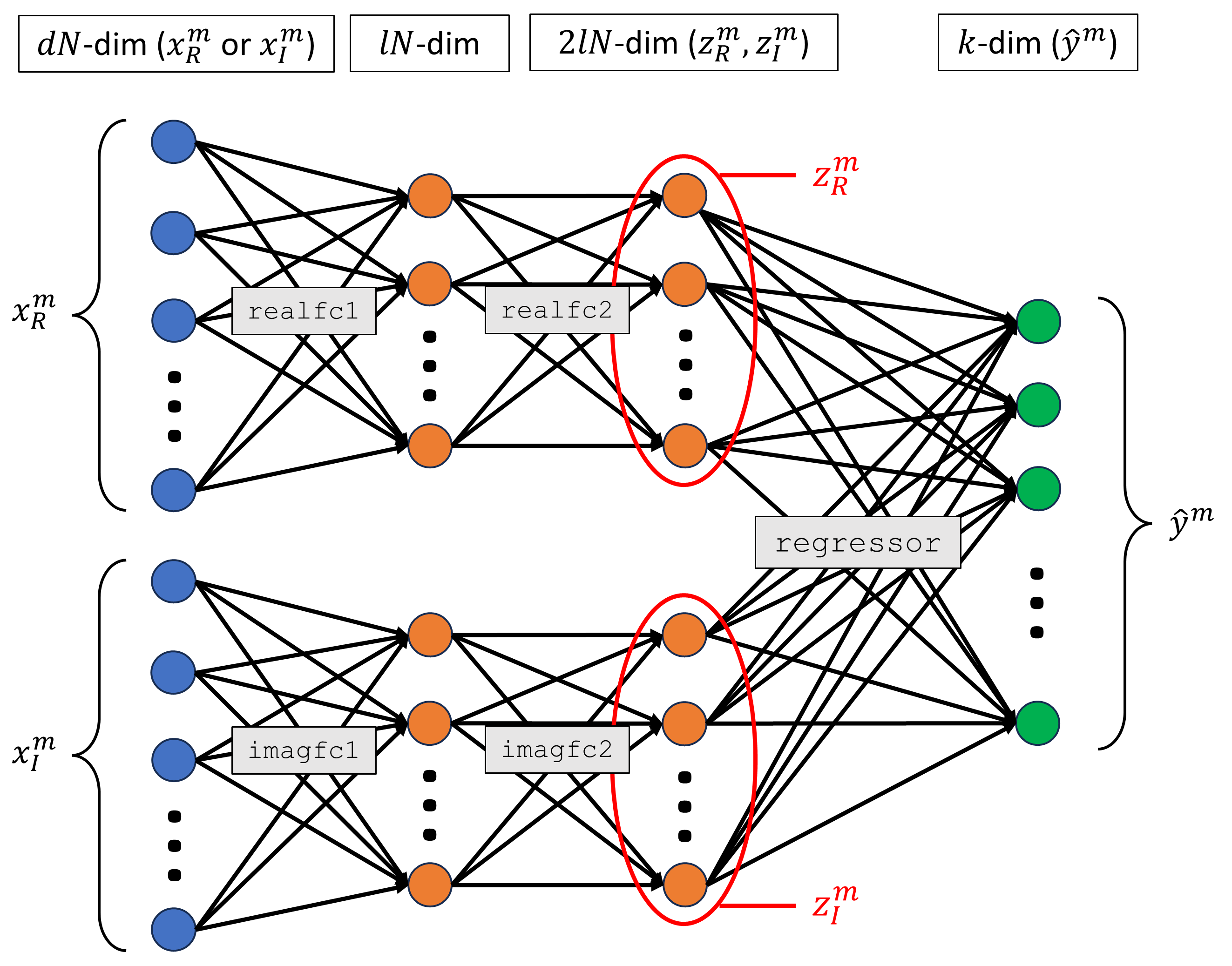

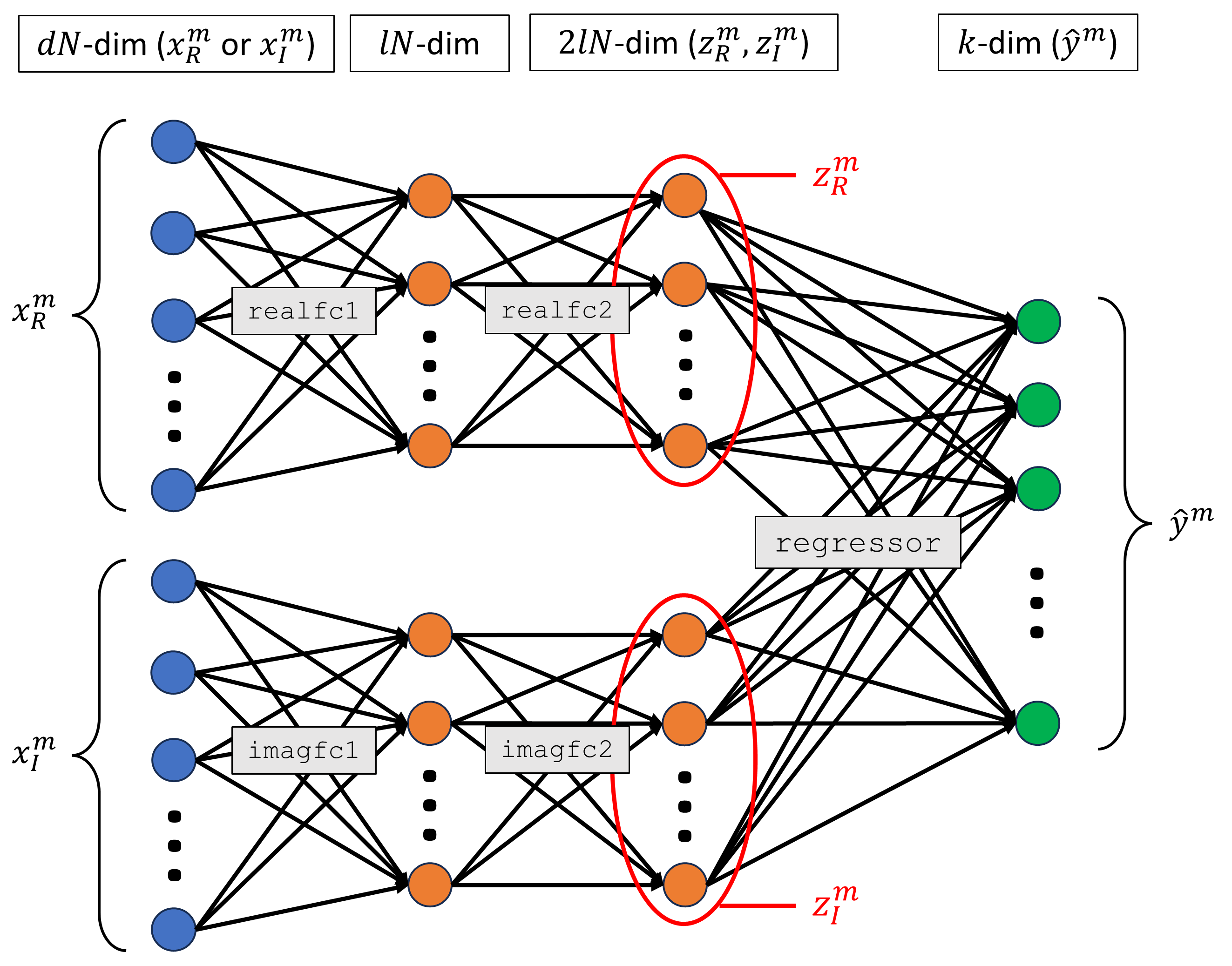

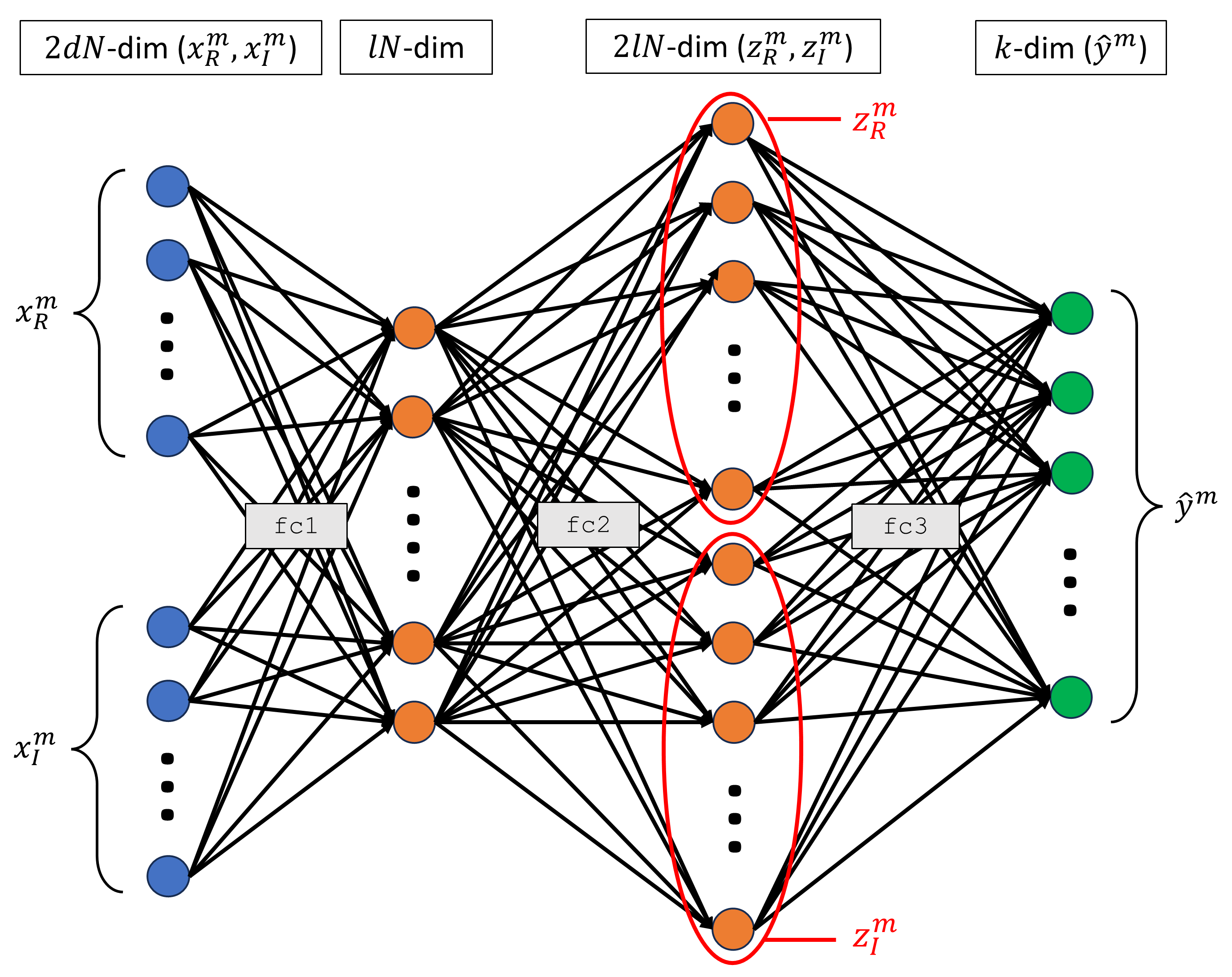

The Steinmetz neural network architecture presents a real-valued framework specifically designed to process complex-valued data. This is achieved through separate processing of the real and imaginary components of the input data using distinct subnetworks, followed by a joint processing stage. This separate-then-joint processing allows for better capture of task-relevant information by independently filtering out irrelevant components before combining essential features from both views.

Figure 1: Steinmetz neural network architecture.

Unlike classical CVNNs, which typically process real and imaginary components jointly, the Steinmetz architecture utilizes the complementarity principle of multi-view learning to enhance the interpretability and efficiency of feature representations. This results in the formation of more coherent latent spaces by reducing representational complexity.
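The separate-then-joint idea can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the single-layer subnetworks, the ReLU activation, concatenation as the coupling step, the layer sizes, and all names (`dense`, `steinmetz_forward`, `params`) are assumptions chosen only to keep the example small.

```python
import numpy as np

rng = np.random.default_rng(0)

def dense(in_dim, out_dim):
    """Randomly initialised (weights, bias) for one real-valued layer (illustrative only)."""
    return rng.normal(0.0, 0.1, (in_dim, out_dim)), np.zeros(out_dim)

def relu(x):
    return np.maximum(x, 0.0)

def steinmetz_forward(x, params):
    """Separate-then-joint forward pass on a batch of complex inputs x."""
    (w_r, b_r), (w_i, b_i), (w_j, b_j) = params
    z_r = relu(x.real @ w_r + b_r)            # subnetwork that sees only the real part
    z_i = relu(x.imag @ w_i + b_i)            # subnetwork that sees only the imaginary part
    z = np.concatenate([z_r, z_i], axis=-1)   # couple the two latent views (assumed: concatenation)
    y = z @ w_j + b_j                         # joint real-valued processing stage
    return y, z_r, z_i

# Hypothetical sizes, chosen for the example.
in_dim, latent_dim, out_dim = 8, 4, 3
params = (
    dense(in_dim, latent_dim),
    dense(in_dim, latent_dim),
    dense(2 * latent_dim, out_dim),
)

x = rng.normal(size=(5, in_dim)) + 1j * rng.normal(size=(5, in_dim))
y, z_r, z_i = steinmetz_forward(x, params)
print(y.shape, z_r.shape, z_i.shape)
```

Every weight and activation here is real-valued; the complex structure of the input enters only through the split into `x.real` and `x.imag`.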

Consistency Constraint

To further improve the generalization capabilities of Steinmetz neural networks, the paper introduces a consistency constraint on the latent space. This constraint encourages a deterministic relationship between the real and imaginary components of the latent representation, which reduces the generalization error. The constraint uses the discrete Hilbert transform (DHT) to enforce orthogonality between the component features, thus enhancing diversity and robustness in representation.
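One way to read the constraint is as a penalty added to the training objective. The form below is a sketch under assumed notation, not the paper's exact formulation: $z_R$ and $z_I$ denote the latent outputs of the real and imaginary subnetworks, $f$ is the deterministic map relating them, $\mathcal{L}_{\text{task}}$ is the task loss, and $\beta \ge 0$ is a hypothetical trade-off weight.

$$
\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{task}}(\hat{y}, y) + \beta \,\lVert z_I - f(z_R) \rVert_2^2
$$

If $f$ is taken to be the DHT, written $\mathcal{H}$, the link to orthogonality follows from a standard property of the transform: a real vector is orthogonal to its own discrete Hilbert transform,

$$
\langle z_R, \mathcal{H}\{z_R\} \rangle = 0,
$$

so driving the penalty toward zero pushes $z_I$ toward a vector that is orthogonal to $z_R$.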

Analytic Neural Network

Building upon the Steinmetz framework, the paper proposes the Analytic Neural Network, which incorporates a Hilbert transform consistency penalty. This penalty is designed to promote analytic signal representations in the latent space, ensuring that extracted features are orthogonally arranged to maximize interpretability and predictive performance.
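A Hilbert transform consistency penalty of this kind can be sketched in NumPy as follows. The FFT-based DHT is the standard construction; the penalty itself (mean squared mismatch, with the imaginary latent features compared against the DHT of the real ones) and the names `discrete_hilbert` and `hilbert_consistency_penalty` are assumptions for illustration, not the paper's exact definition.

```python
import numpy as np

def discrete_hilbert(x):
    """Discrete Hilbert transform of real data along the last axis, computed via the FFT."""
    n = x.shape[-1]
    spectrum = np.fft.fft(x, axis=-1)
    # Frequency-domain multiplier -j * sgn(frequency); the DC bin gets 0.
    mult = -1j * np.sign(np.fft.fftfreq(n))
    if n % 2 == 0:
        mult[n // 2] = 0.0  # the Nyquist bin is also zeroed for even lengths
    return np.fft.ifft(spectrum * mult, axis=-1).real

def hilbert_consistency_penalty(z_r, z_i):
    """Mean squared mismatch between z_i and the DHT of z_r (assumed penalty form)."""
    return np.mean((z_i - discrete_hilbert(z_r)) ** 2)

rng = np.random.default_rng(0)
z_r = rng.normal(size=(5, 16))            # stand-in for real-branch latent features
z_i_consistent = discrete_hilbert(z_r)    # imaginary branch that satisfies the constraint
z_i_random = rng.normal(size=(5, 16))     # imaginary branch unrelated to z_r

print(hilbert_consistency_penalty(z_r, z_i_consistent))
print(hilbert_consistency_penalty(z_r, z_i_random))

# Each row of z_r is orthogonal to its own DHT, up to floating-point error.
print(np.allclose(np.sum(z_r * z_i_consistent, axis=-1), 0.0))
```

When the penalty is zero, `z_r + 1j * z_i` is the analytic signal of `z_r`, which is the sense in which the latent representation becomes analytic. In training, this term would be weighted and added to the task loss.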

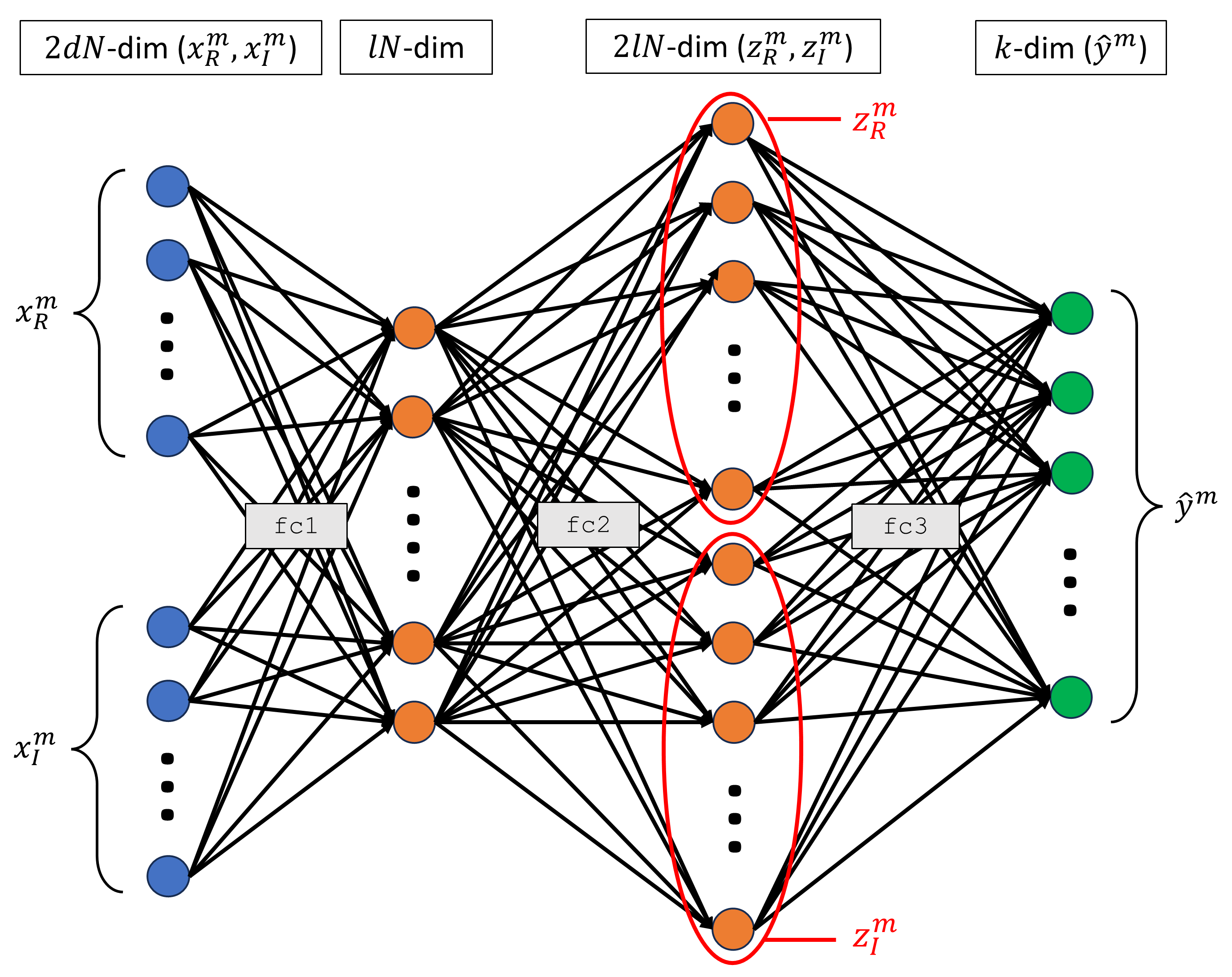

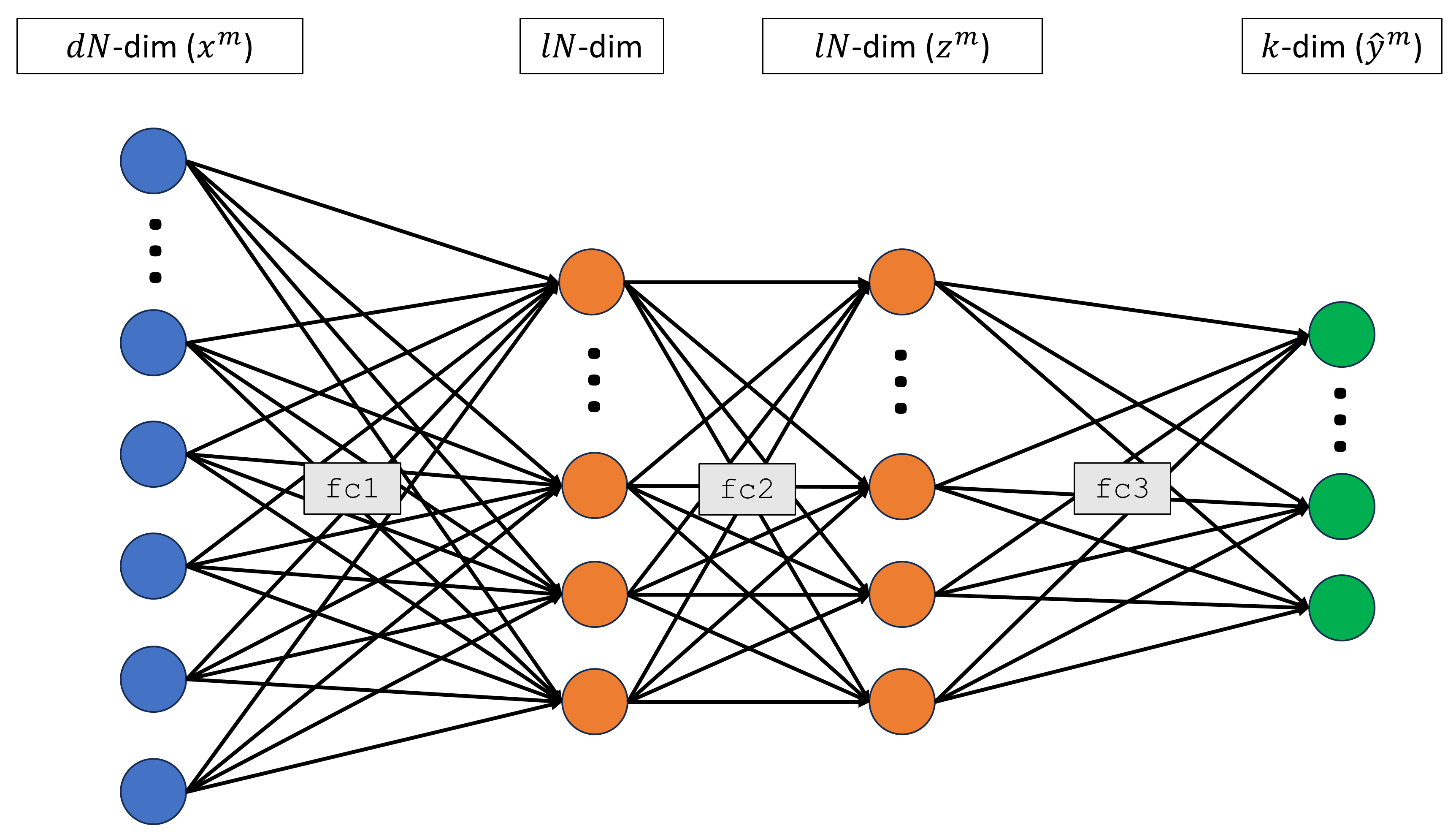

Figure 2: Complex-valued neural network architecture.

The Analytic Neural Network demonstrates superior predictive capabilities compared to traditional RVNNs and CVNNs, particularly in tasks that require accurate phase information extraction, such as radar signal processing. By applying a structured consistency constraint, these networks achieve improved generalization performance with reduced error bounds.

Empirical Results

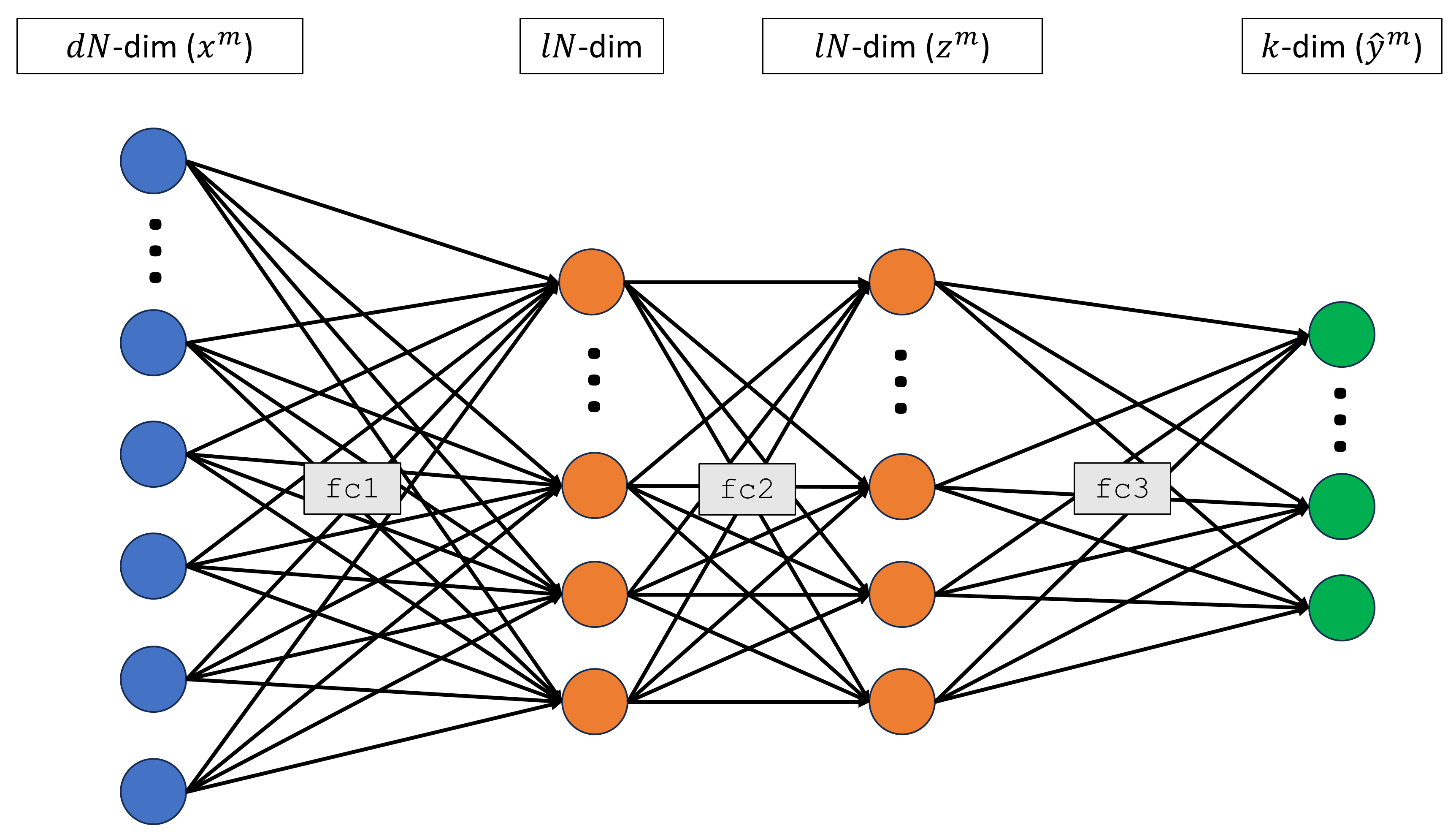

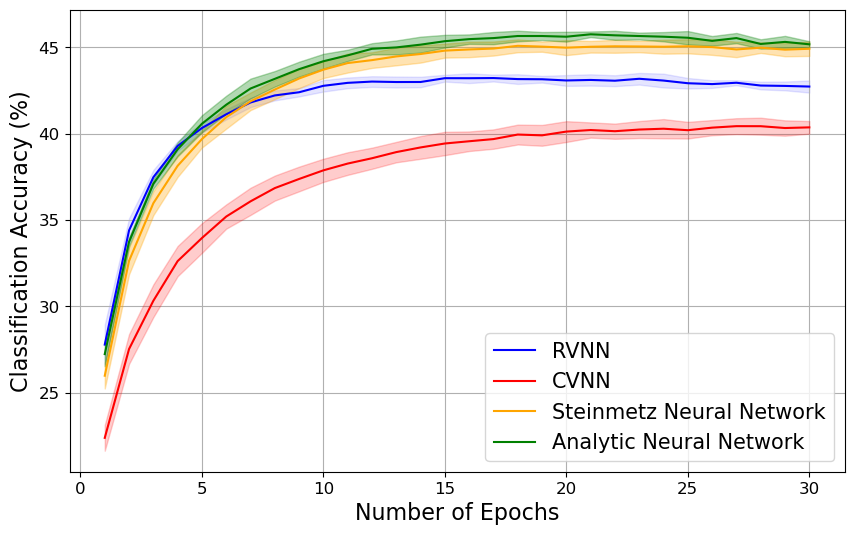

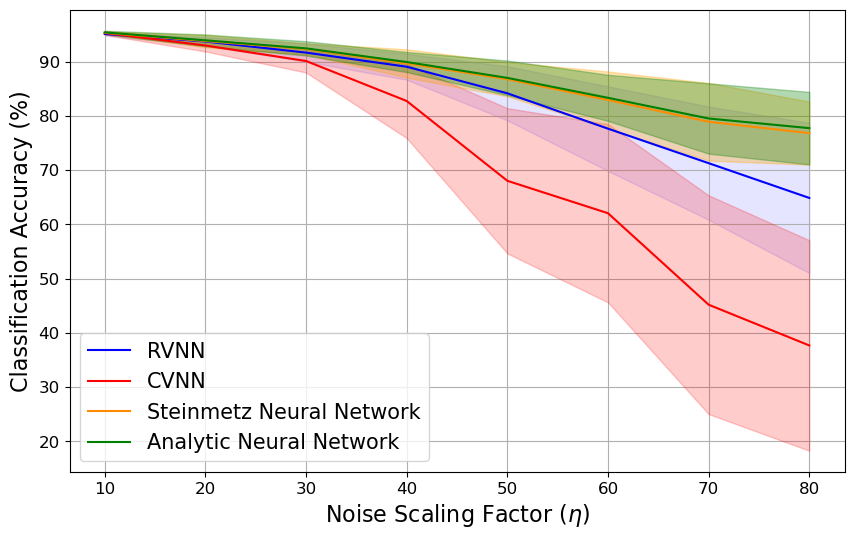

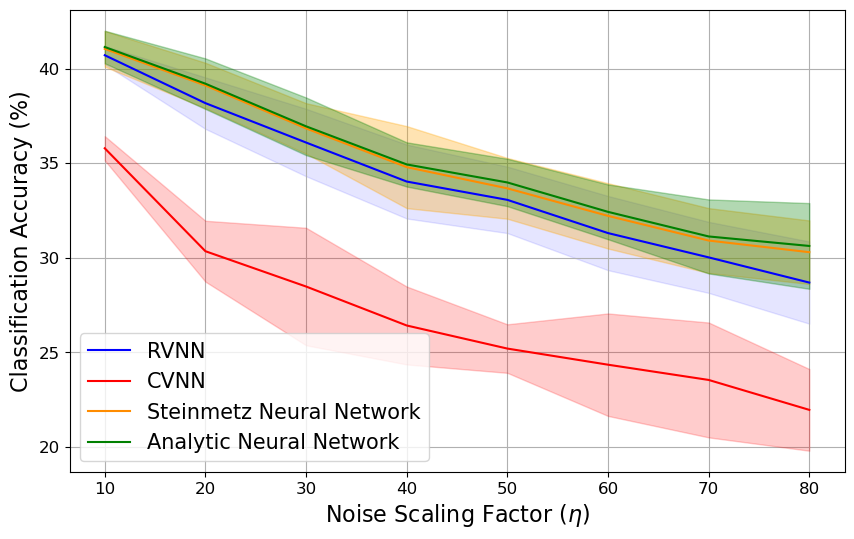

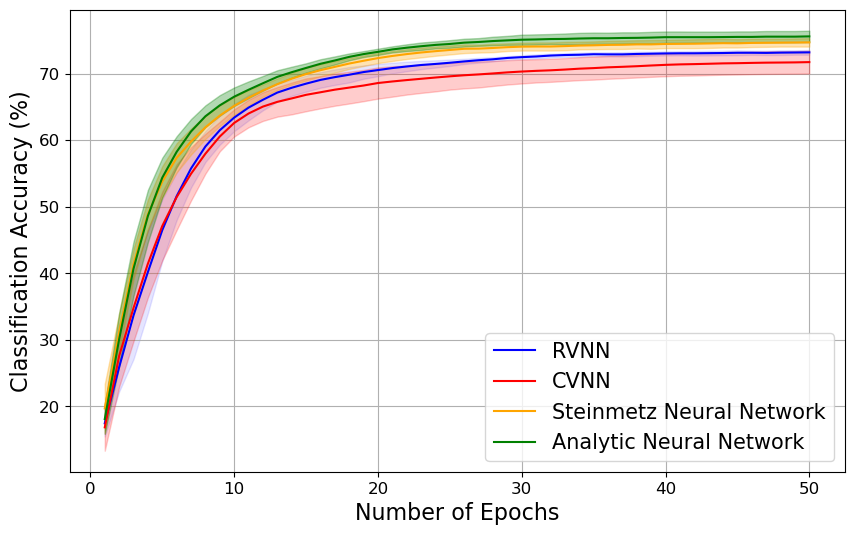

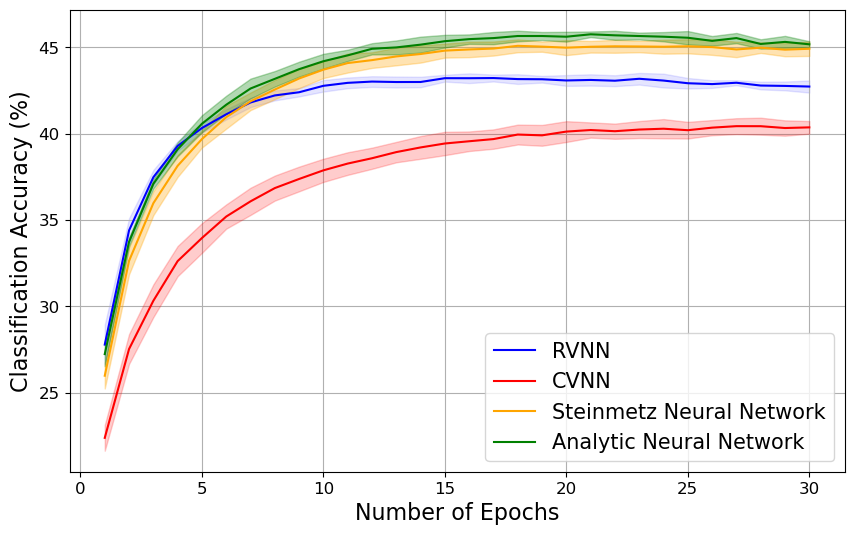

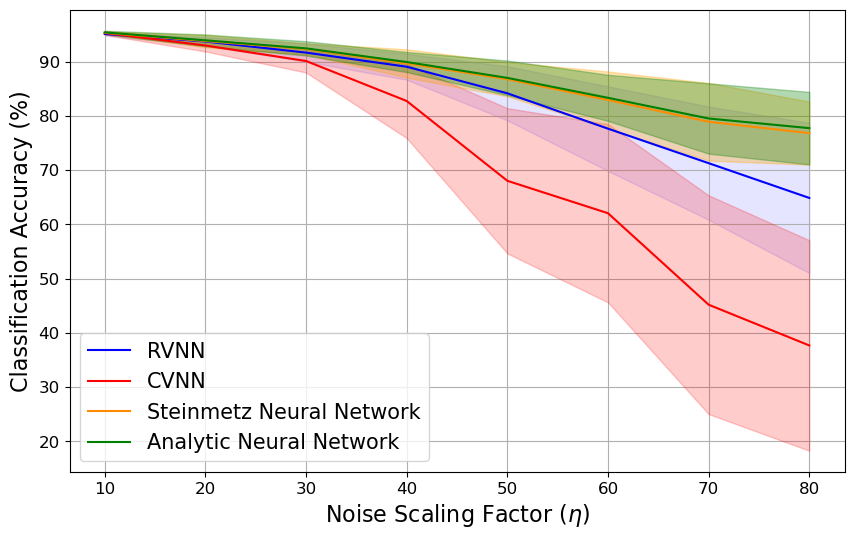

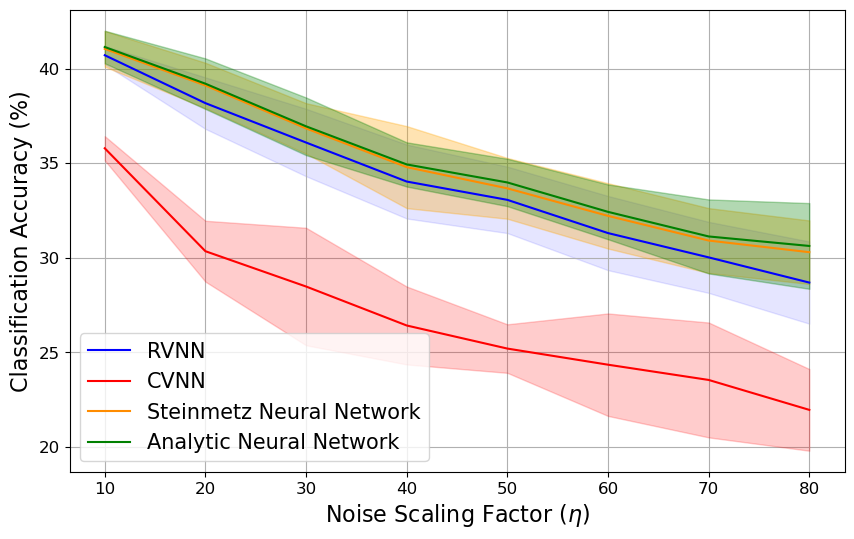

Empirical evaluations on benchmark datasets, including complex-valued MNIST and CIFAR-10, as well as synthetic examples, show that Steinmetz and Analytic Neural Networks outperform existing CVNNs and RVNNs. The networks perform well across both classification and regression tasks, and they remain effective under noisy conditions, as in the noisy complex-valued MNIST benchmark.

Figure 3: Complex-Valued MNIST.

Figure 4: Noisy Complex-Valued MNIST.

The results affirm the practicality of the Steinmetz neural network architecture in complex-valued data processing and suggest its potential for broader applications requiring robust and interpretable signal analysis.

Conclusion

The introduction of Steinmetz Neural Networks represents a significant step toward efficient complex-valued data processing within DNNs. By employing separate-then-joint processing and introducing a consistency constraint, the networks achieve improved interpretability and generalization performance. Future work could explore further optimization techniques and theoretical guarantees to expand the applicability of Steinmetz and Analytic Neural Networks in complex-valued signal processing tasks and beyond.