M-RAG: Reinforcing Large Language Model Performance through Retrieval-Augmented Generation with Multiple Partitions

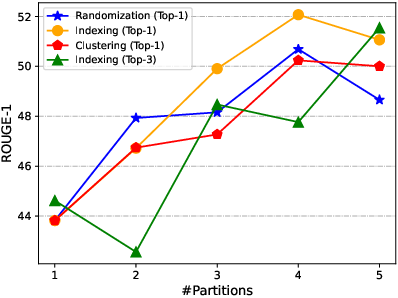

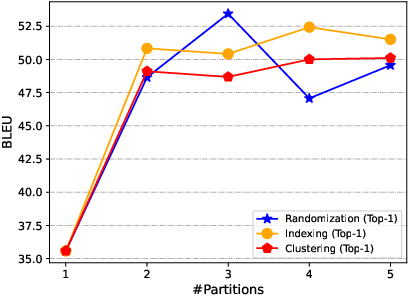

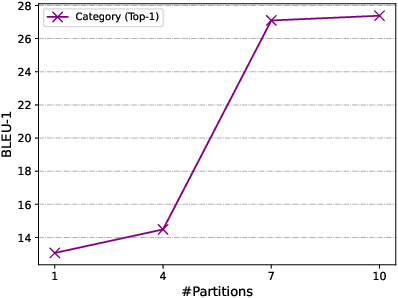

Abstract: Retrieval-Augmented Generation (RAG) enhances large language models (LLMs) by retrieving relevant memories from an external database. However, existing RAG methods typically organize all memories in a single database, which can limit focus on crucial memories and introduce noise. In this paper, we introduce a multiple-partition paradigm for RAG (called M-RAG), in which each database partition serves as the basic unit of RAG execution. Based on this paradigm, we propose a novel framework that leverages LLMs with Multi-Agent Reinforcement Learning to explicitly optimize different language generation tasks. Through comprehensive experiments on seven datasets, spanning three language generation tasks and three distinct LLM architectures, we confirm that M-RAG consistently outperforms various baseline methods, achieving improvements of 11%, 8%, and 12% for text summarization, machine translation, and dialogue generation, respectively.
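To make the partition-as-retrieval-unit idea concrete, here is a minimal sketch under stated assumptions. It assumes memories are stored with embedding vectors, forms partitions by k-means-style clustering under cosine similarity (one possible partitioning strategy, not necessarily the one used in the paper), and then retrieves only within a single selected partition. The abstract states that M-RAG uses Multi-Agent Reinforcement Learning; this sketch does not model that. Partition selection here is a fixed centroid-similarity heuristic swapped in for illustration. All names (`cluster`, `Partition`, `build_partitions`, `select_partition`, `retrieve`) and the toy 2-D embeddings are hypothetical.

```python
import math
from dataclasses import dataclass, field

Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def cluster(vectors: list[Vector], k: int, iters: int = 10) -> tuple[list[Vector], list[int]]:
    """k-means-style clustering under cosine similarity (assumed partitioning strategy)."""
    if not 1 <= k <= len(vectors):
        raise ValueError("k must be between 1 and the number of vectors")
    # Deterministic farthest-first seeding: start from the first vector, then
    # repeatedly add the vector least similar to every existing centroid.
    centroids = [list(vectors[0])]
    while len(centroids) < k:
        nxt = min(vectors, key=lambda v: max(cosine(v, c) for c in centroids))
        centroids.append(list(nxt))
    assign = [0] * len(vectors)
    for _ in range(iters):
        # Assignment step: each vector joins its most similar centroid.
        assign = [max(range(k), key=lambda c: cosine(v, centroids[c])) for v in vectors]
        # Update step: move each non-empty centroid to the mean of its members.
        for c in range(k):
            members = [v for v, a in zip(vectors, assign) if a == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return centroids, assign


@dataclass
class Partition:
    centroid: Vector
    memories: list[tuple[str, Vector]] = field(default_factory=list)


def build_partitions(memories: list[tuple[str, Vector]], k: int) -> list[Partition]:
    """Split the memory store into k partitions, each a separate retrieval unit."""
    centroids, assign = cluster([v for _, v in memories], k)
    parts = [Partition(c) for c in centroids]
    for mem, a in zip(memories, assign):
        parts[a].memories.append(mem)
    return parts


def select_partition(parts: list[Partition], query: Vector) -> Partition:
    # Hypothetical heuristic stand-in: pick the non-empty partition whose centroid
    # is most similar to the query. M-RAG instead relies on multi-agent RL,
    # which this sketch does not implement.
    return max((p for p in parts if p.memories), key=lambda p: cosine(query, p.centroid))


def retrieve(part: Partition, query: Vector, top_k: int) -> list[str]:
    """Rank memories inside one partition only, rather than the whole store."""
    ranked = sorted(part.memories, key=lambda m: cosine(query, m[1]), reverse=True)
    return [text for text, _ in ranked[:top_k]]


# Usage with toy 2-D embeddings (illustrative values only).
store = [
    ("summary: stocks rose", [1.0, 0.1]),
    ("summary: markets fell", [0.9, 0.2]),
    ("dialogue: hi there", [0.1, 1.0]),
    ("dialogue: how are you", [0.2, 0.9]),
]
parts = build_partitions(store, k=2)
query = [0.05, 1.0]
best = select_partition(parts, query)
print(retrieve(best, query, top_k=1))  # ['dialogue: hi there']
```

Restricting ranking to one partition means the summary-style memories never compete with the dialogue query at all, which is the "focus on crucial memories" argument in miniature; in practice the quality of the result depends heavily on how partitions are formed and selected, which is exactly the part the paper learns rather than hard-codes.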

