- The paper introduces I-IDL-ALM, which linearizes the ALM subproblems and uses indefinite proximal terms to speed up convergence in convex programming.

- It leverages larger step sizes and simplified computations to improve efficiency in large-scale optimization tasks like SVM and image segmentation.

- The method achieves an O(1/N) convergence rate when τ∈(0.75,1) and performs robustly in numerical experiments.

Indefinite Linearized Augmented Lagrangian Method for Convex Programming

This paper introduces an indefinite linearized augmented Lagrangian method (I-IDL-ALM) specifically designed to address convex programming problems with linear inequality constraints. It builds upon the classic augmented Lagrangian method (ALM), which primarily focuses on equality-constrained problems, extending its techniques to handle inequality constraints efficiently.

Proposed Methodology

The focus of the study is on the convex programming problem characterized by a closed, proper convex function θ and linear inequality constraints Ax≥b. The traditional ALM approach requires solving challenging subproblems due to the non-smooth nature of these constraints. The proposed method aims to simplify these subproblems via linearization and indefinite proximal terms, which leads to computational efficiency and improved convergence properties.
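For reference, the problem described above can be written compactly as follows. This is a restatement rather than a formula quoted from the paper: the set symbol \mathcal{X} is borrowed from the x-update given later in this summary, and it is assumed here to be a closed convex set, with A an m×n matrix and b a vector of length m.

```latex
\min_{x}\ \bigl\{\, \theta(x) \;\big|\; Ax \ge b,\ x \in \mathcal{X} \,\bigr\}
```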

The key modifications introduced in this method compared to the classic approach include:

- Linearization of Subproblems: The linearization technique used within the ALM framework is extended to inequality constraints.

- Indefinite Proximal Terms: The use of indefinite proximal matrices allows for larger step sizes, resulting in faster convergence.

- Application to Large-Scale Optimization: By simplifying the core subproblem, the new method is especially advantageous for large-scale optimization problems.
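To illustrate what "indefinite" means for the proximal matrix, the sketch below checks eigenvalues on a toy example. This summary does not define D_0, so the form `tau * r * I - beta * A^T A` is an assumption based on how indefinite linearized ALM variants are commonly written, and the helper `proximal_matrix`, the matrix `A`, and the values of `beta` and `r` are all hypothetical.

```python
import numpy as np

def proximal_matrix(A, beta, r, tau):
    """Assumed form D_0 = tau * r * I - beta * A^T A (not defined in this summary)."""
    n = A.shape[1]
    return tau * r * np.eye(n) - beta * A.T @ A

A = np.array([[1.0, 1.0]])  # toy constraint matrix; A^T A has eigenvalues 0 and 2
beta, r = 1.0, 2.1          # r chosen slightly above beta * ||A^T A|| = 2

# tau = 1 keeps the matrix positive definite; tau = 0.8 makes it indefinite.
eig_definite = np.linalg.eigvalsh(proximal_matrix(A, beta, r, tau=1.0))
eig_indefinite = np.linalg.eigvalsh(proximal_matrix(A, beta, r, tau=0.8))
print(eig_definite)    # all eigenvalues positive
print(eig_indefinite)  # smallest eigenvalue is negative
```

Under this assumed form, choosing tau below 1 shrinks the proximal weight, which corresponds to a longer step in x at the cost of losing positive definiteness.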

Implementation Details

The new algorithm is specified by the iterative scheme:


1. Define the augmented Lagrangian for the inequality constraints:

\mathcal{L}_\beta^{\,\mathrm{I\!\!I}}(x,\lambda) := \theta(x)+\frac{1}{2\beta}\Big\{\|[\lambda-\beta(Ax-b)]_{+}\|^2-\lambda^T\lambda\Big\}

2. Update x and λ iteratively:

x^{k+1} = \arg\min\Big\{\mathcal{L}_\beta^{\,\mathrm{I\!\!I}}(x,\lambda^k) + \frac{1}{2}\|x-x^k\|_{D_0}^2 \;\Big|\; x \in \mathcal{X}\Big\}

\lambda^{k+1} = [\lambda^k - \beta(Ax^{k+1} - b)]_{+}

Convergence is guaranteed when the proximal parameter τ lies in (0.75,1), in which case the method attains an O(1/N) convergence rate.
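The iteration can be sketched on a toy problem as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: the objective θ(x) = 0.5‖x − c‖², the scalar proximal weight `tau * r`, and all parameter values are hypothetical, and the x-step replaces the penalty term by its first-order expansion at x^k (a proximal-gradient-style linearization), which may differ from the paper's exact subproblem. Only the multiplier update is taken directly from the scheme above.

```python
import numpy as np

def linearized_alm(A, b, c, beta=1.0, r=2.5, tau=0.8, iters=300):
    """Sketch for min 0.5 * ||x - c||^2 subject to A x >= b (assumed toy objective)."""
    m, n = A.shape
    x, lam = np.zeros(n), np.zeros(m)
    q = tau * r  # assumed scalar proximal weight, not the paper's matrix D_0
    for _ in range(iters):
        # [lam - beta * (A x - b)]_+ evaluated at the current iterate
        mu = np.maximum(lam - beta * (A @ x - b), 0.0)
        # Gradient step on the penalty term (its gradient is -A^T mu) ...
        v = x + (A.T @ mu) / q
        # ... followed by the closed-form prox of theta / q for this theta
        x = (c + q * v) / (1.0 + q)
        # Multiplier update from the scheme above
        lam = np.maximum(lam - beta * (A @ x - b), 0.0)
    return x, lam

A = np.array([[1.0, 1.0]])  # single constraint x1 + x2 >= 1
b = np.array([1.0])
x, lam = linearized_alm(A, b, c=np.zeros(2))
print(x, lam)  # approaches x = [0.5, 0.5], lam = [0.5]
```

For this toy problem the optimality conditions give x = (0.5, 0.5) with multiplier 0.5, so the printed values serve as a quick sanity check on the update order.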

Numerical Results

The paper provides extensive numerical results on applications of the proposed method:

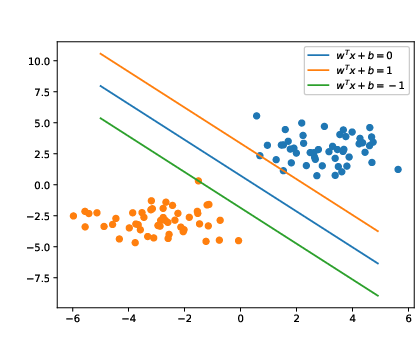

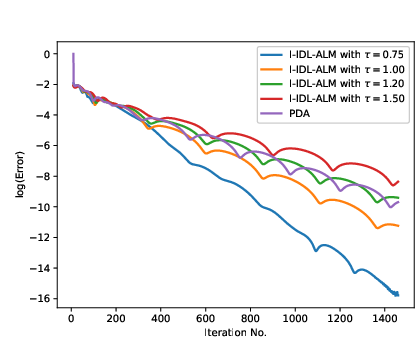

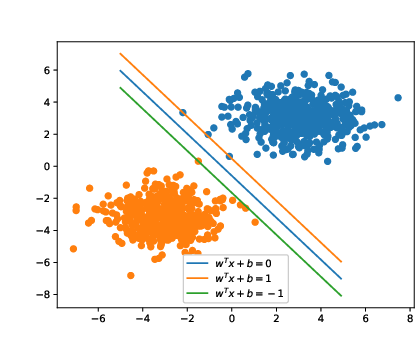

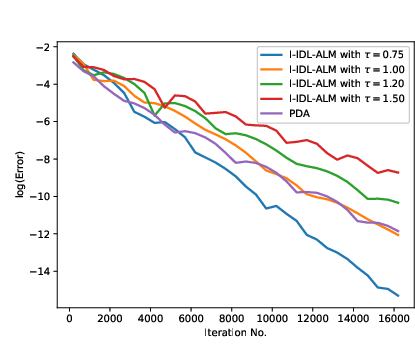

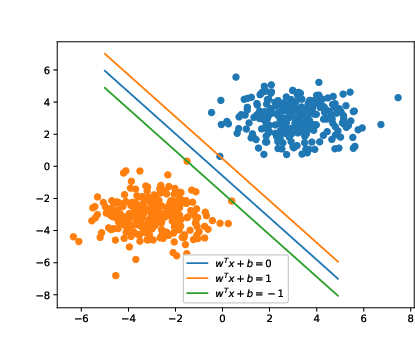

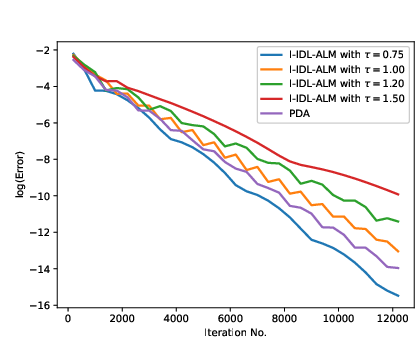

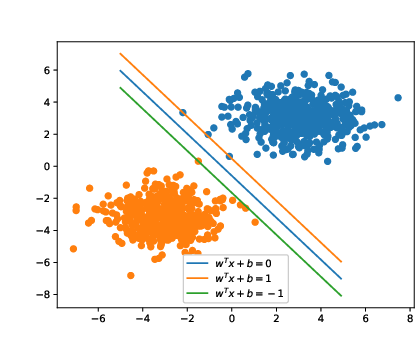

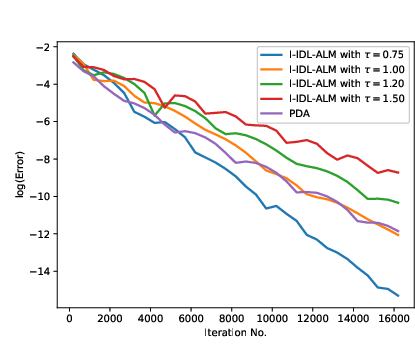

- Support Vector Machine (SVM): The algorithm is applied to linear SVM problems, showcasing fewer iterations and faster convergence than traditional approaches (Figure 1).

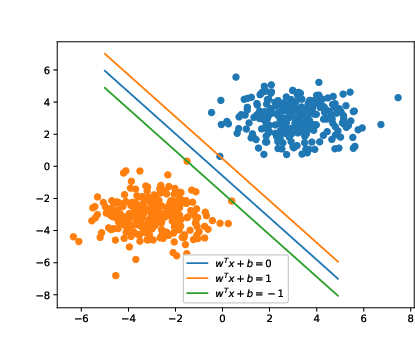

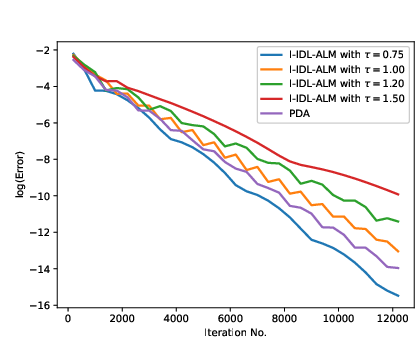

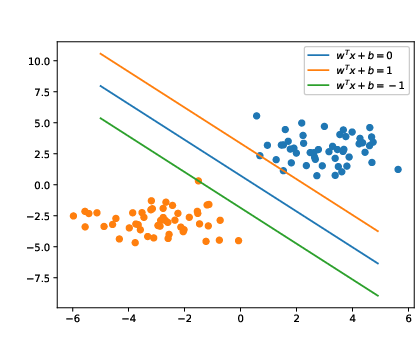

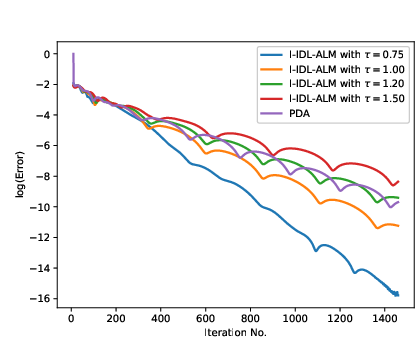

- Image Segmentation: In both foreground-background and multiphase segmentation tasks, the method performs robustly, segmenting complex images with reduced computational overhead (Figures 2-5).

Figure 1: Classification results for support vector machines (left panel), where I-IDL-ALM converged faster and in fewer iterations than the benchmark methods.

Figure 2: From left to right: the original image and the segmentation computed by I-IDL-ALM.
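To make the SVM application concrete, the sketch below shows how a linear SVM can be cast in the form Ax≥b. The summary does not state the paper's exact SVM model, so this assumes the standard soft-margin formulation, minimizing 0.5‖w‖² + C Σ ξ_i subject to y_i(wᵀx_i + bias) ≥ 1 − ξ_i and ξ_i ≥ 0, with the stacked variable z = (w, bias, ξ). The helper name `svm_constraints` and the sample data are hypothetical.

```python
import numpy as np

def svm_constraints(X, y):
    """Build A, b so that A z >= b encodes soft-margin SVM constraints, z = (w, bias, xi)."""
    n, d = X.shape
    # Margin rows: y_i * (w^T x_i + bias) + xi_i >= 1
    margin_rows = np.hstack([y[:, None] * X, y[:, None], np.eye(n)])
    # Slack rows: xi_i >= 0
    slack_rows = np.hstack([np.zeros((n, d + 1)), np.eye(n)])
    A = np.vstack([margin_rows, slack_rows])
    b = np.concatenate([np.ones(n), np.zeros(n)])
    return A, b

X = np.array([[2.0, 0.0], [-2.0, 0.0]])  # two separable sample points
y = np.array([1.0, -1.0])                # labels in {+1, -1}
A, b = svm_constraints(X, y)

# A separating choice with zero slack: w = (1, 0), bias = 0, xi = (0, 0)
z = np.array([1.0, 0.0, 0.0, 0.0, 0.0])
print(A.shape, np.all(A @ z >= b))
```

With n samples in d dimensions this gives 2n inequality rows in d + 1 + n variables, which is the kind of large, sparse constraint system where avoiding an exact subproblem solve matters.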

Conclusions

The proposed I-IDL-ALM extends the applicability of ALM to problems with linear inequality constraints without compromising efficiency or convergence guarantees. Its ability to use a smaller regularization parameter while keeping a larger step size underlines its potential in large-scale optimization. Future work may explore further applications and refinements to strengthen its practical utility and theoretical guarantees.