- The paper introduces AutoDS, a framework that uses Bayesian surprise (the shift in an LLM's beliefs after it sees experimental evidence) to guide open-ended hypothesis discovery.

- It employs KL divergence to measure surprise and leverages Monte Carlo Tree Search to balance exploration and exploitation across diverse datasets.

- Under a fixed evaluation budget, AutoDS finds 5 to 29% more hypotheses that are surprising to the LLM than baseline search methods, and human experts also judged two-thirds of these hypotheses surprising.

Open-ended Scientific Discovery via Bayesian Surprise

Introduction

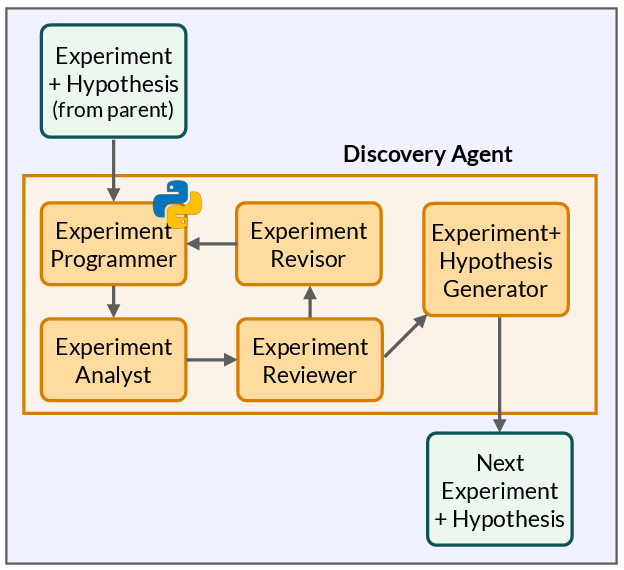

The paper "Open-ended Scientific Discovery via Bayesian Surprise" examines a limitation of existing autonomous scientific discovery (ASD) frameworks: they mostly operate in goal-driven settings and require a pre-defined research question to steer hypothesis generation and experimentation. The paper introduces AutoDS, a framework designed for the open-ended setting, in which no such question is given. AutoDS uses Bayesian surprise as a reward signal, which lets the system identify and investigate novel, surprising hypotheses on its own.

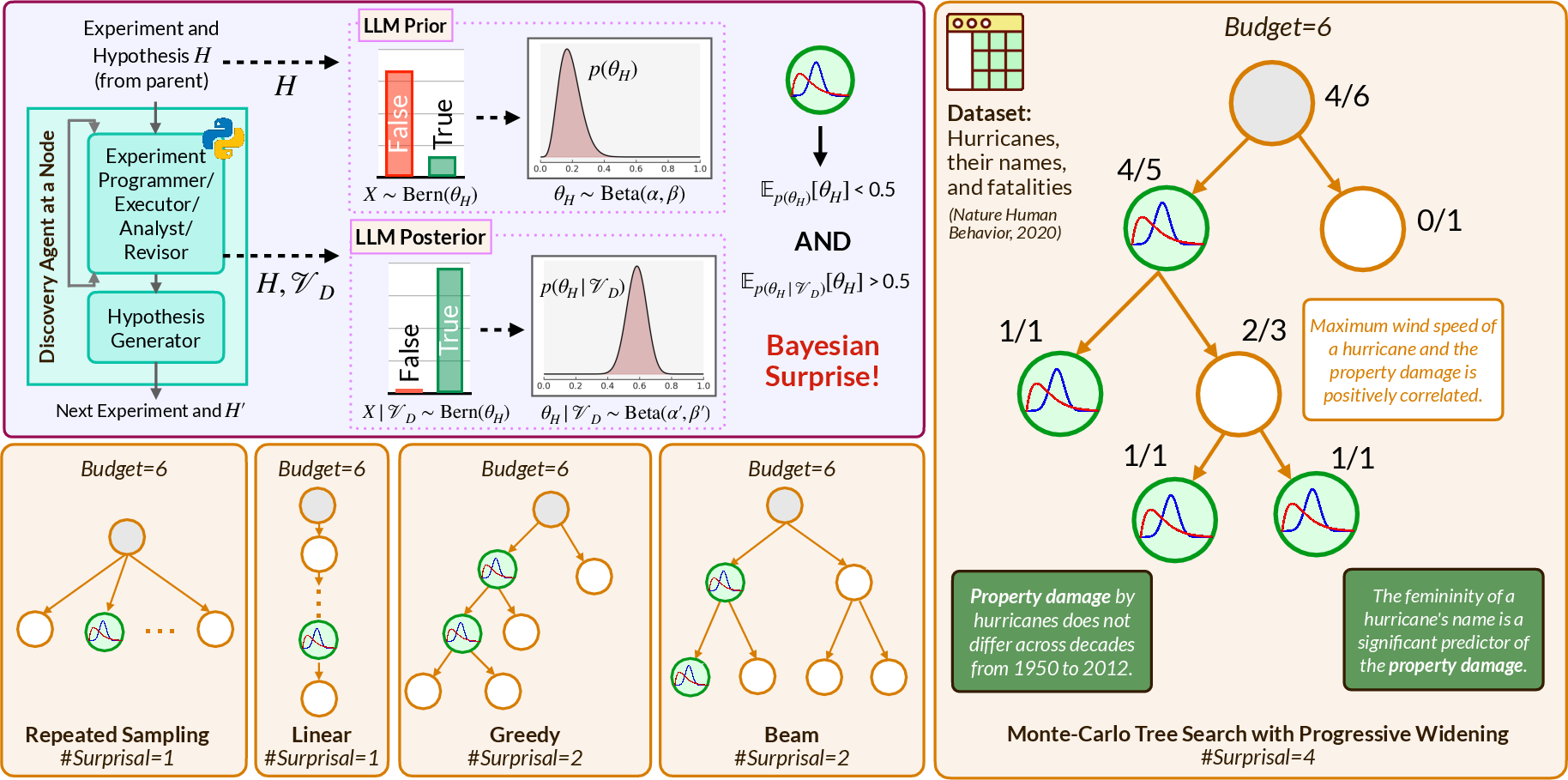

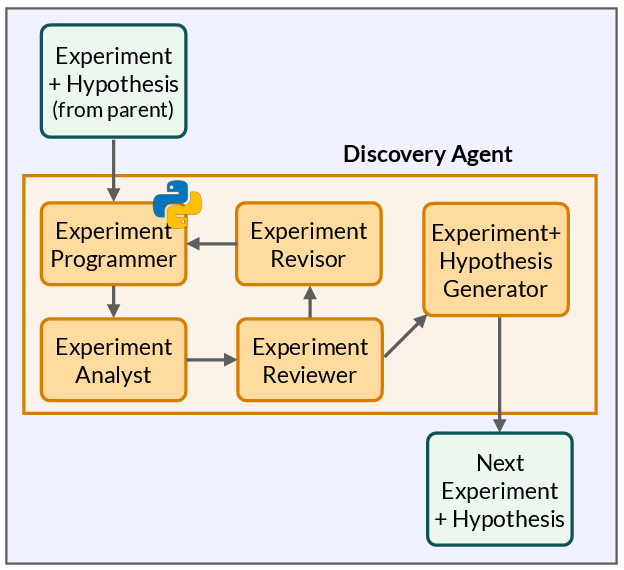

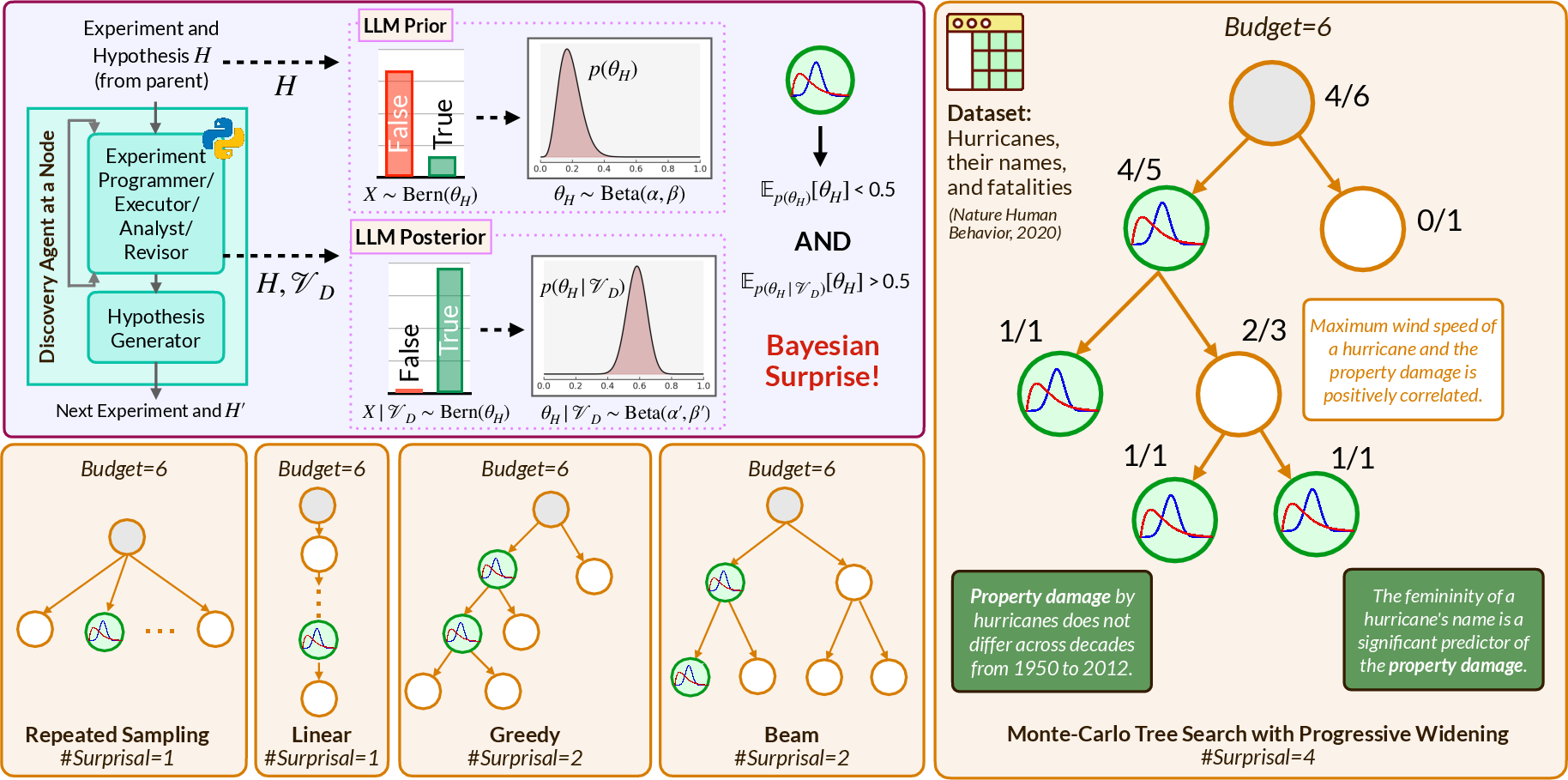

Figure 1: Overview of AutoDS: A method guided by Bayesian surprise, using surprisal as a reward function within an MCTS procedure for discovering surprising hypotheses.

Framework: AutoDS

AutoDS models the LLM as a Bayesian observer in order to quantify surprise: it measures the epistemic shift from the LLM's prior beliefs about a hypothesis to its posterior beliefs after the hypothesis has been tested empirically. This surprise serves as the reward that guides exploration of the hypothesis space. The search itself uses Monte Carlo Tree Search (MCTS) with progressive widening, which balances exploring new hypotheses against exploiting promising branches. The goal is to push beyond the boundaries of the knowledge encoded in the LLM.
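The search loop described above can be sketched as generic MCTS with UCT selection and progressive widening. This is a minimal illustration under stated assumptions, not the AutoDS implementation: `propose` is a hypothetical stand-in for an LLM call that drafts a new hypothesis from a parent node, `reward` is a hypothetical stand-in for running the experiment and scoring its Bayesian surprise, and the widening constants `k`, `alpha` and the exploration constant `c` are illustrative values, not the paper's settings. Progressive widening caps a node visited N times at roughly `k * N**alpha` children, so frequently visited nodes keep gaining new hypotheses while promising branches are still revisited.

```python
import math
import random
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Node:
    hypothesis: str
    parent: Optional["Node"] = None
    children: List["Node"] = field(default_factory=list)
    visits: int = 0
    value_sum: float = 0.0

    def mean_value(self) -> float:
        return self.value_sum / self.visits if self.visits else 0.0


def can_widen(node: Node, k: float, alpha: float) -> bool:
    """Progressive widening: a node visited N times may hold up to max(1, floor(k * N**alpha)) children."""
    return len(node.children) < max(1, math.floor(k * node.visits ** alpha))


def uct_score(child: Node, parent_visits: int, c: float) -> float:
    """UCT: mean reward (exploitation) plus a visit-count bonus (exploration)."""
    if child.visits == 0:
        return float("inf")
    return child.mean_value() + c * math.sqrt(math.log(parent_visits) / child.visits)


def mcts(
    root: Node,
    propose: Callable[[Node], str],   # hypothetical: LLM drafts a hypothesis under a node
    reward: Callable[[str], float],   # hypothetical: experiment + surprise score
    budget: int,
    k: float = 1.0,
    alpha: float = 0.5,
    c: float = 1.0,
) -> Node:
    for _ in range(budget):
        node = root
        # Selection: descend by UCT until reaching a leaf or a node allowed to add a child.
        while node.children and not can_widen(node, k, alpha):
            parent_visits = node.visits
            node = max(node.children, key=lambda ch: uct_score(ch, parent_visits, c))
        # Expansion: draft one new hypothesis under the selected node.
        child = Node(propose(node), parent=node)
        node.children.append(child)
        # Evaluation: exactly one experiment per iteration.
        r = reward(child.hypothesis)
        # Backpropagation: update visit counts and reward sums up to the root.
        current: Optional[Node] = child
        while current is not None:
            current.visits += 1
            current.value_sum += r
            current = current.parent
    return root


if __name__ == "__main__":
    rng = random.Random(0)
    tree = mcts(
        Node("root"),
        propose=lambda n: f"{n.hypothesis}/{len(n.children)}",  # toy proposal
        reward=lambda h: rng.random(),                          # toy surprise score
        budget=50,
    )
    best = max(tree.children, key=lambda ch: ch.mean_value())
    print(len(tree.children), best.hypothesis, round(best.mean_value(), 3))
```

Because each iteration creates and evaluates exactly one hypothesis, `budget` plays the role of a fixed evaluation budget; a real system would replace the toy lambdas with LLM proposal and experiment-execution steps.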

Surprisal Measurement

In AutoDS, Bayesian surprise quantifies how much an LLM's beliefs shift when it observes experimental data. For a hypothesis H, surprise is computed as the Kullback-Leibler (KL) divergence between the LLM's prior and posterior belief distributions over H; the larger the divergence, the greater the change in belief. Using this signal to drive exploration concentrates computation on promising regions of the hypothesis space and surfaces hypotheses that challenge the LLM's existing knowledge.
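As a concrete sketch, suppose (an assumption for illustration, not a detail given in this summary) that the LLM is asked n times, before and after seeing the data, whether H is true, and that each set of yes/no votes is turned into a Beta distribution starting from a uniform Beta(1, 1). Surprise can then be computed in the standard Bayesian-surprise direction, KL(posterior ‖ prior), which for two Beta distributions has a closed form in terms of the log-Beta function and the digamma function ψ. The helpers `beta_from_votes` and `bayesian_surprise` are hypothetical names, and `digamma` is a small standard-library approximation (recurrence plus asymptotic series) so the example needs no third-party packages.

```python
import math


def digamma(x: float) -> float:
    """Approximate the digamma function psi(x) for x > 0."""
    if x <= 0:
        raise ValueError("this approximation only handles x > 0")
    result = 0.0
    # Recurrence psi(x) = psi(x + 1) - 1/x moves x into the range where the series is accurate.
    while x < 6.0:
        result -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    # Asymptotic series: ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6) + 1/(240x^8) - 1/(132x^10)
    series = inv2 * (1 / 12 - inv2 * (1 / 120 - inv2 * (1 / 252 - inv2 * (1 / 240 - inv2 / 132))))
    return result + math.log(x) - 0.5 / x - series


def log_beta(a: float, b: float) -> float:
    """Natural log of the Beta function B(a, b)."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def kl_beta(a_p: float, b_p: float, a_q: float, b_q: float) -> float:
    """Closed-form KL( Beta(a_p, b_p) || Beta(a_q, b_q) )."""
    return (
        log_beta(a_q, b_q)
        - log_beta(a_p, b_p)
        + (a_p - a_q) * digamma(a_p)
        + (b_p - b_q) * digamma(b_p)
        + (a_q - a_p + b_q - b_p) * digamma(a_p + b_p)
    )


def beta_from_votes(n_true: int, n_samples: int) -> tuple[float, float]:
    """Hypothetical belief model: Beta(1 + #true votes, 1 + #false votes)."""
    return 1.0 + n_true, 1.0 + (n_samples - n_true)


def bayesian_surprise(prior_true: int, posterior_true: int, n_samples: int) -> float:
    """Surprise as KL(posterior || prior) between the two Beta beliefs."""
    a_q, b_q = beta_from_votes(prior_true, n_samples)
    a_p, b_p = beta_from_votes(posterior_true, n_samples)
    return kl_beta(a_p, b_p, a_q, b_q)


if __name__ == "__main__":
    # Belief flips: 8/10 "true" votes before the experiment, 2/10 after.
    print(bayesian_surprise(prior_true=8, posterior_true=2, n_samples=10))
    # Small revision: 8/10 before, 7/10 after.
    print(bayesian_surprise(prior_true=8, posterior_true=7, n_samples=10))
```

The belief flip in the first call yields a much larger divergence than the small revision in the second, which is the behavior a surprise-based reward relies on; an unchanged belief gives a surprise of exactly zero.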

Experimental Evaluation

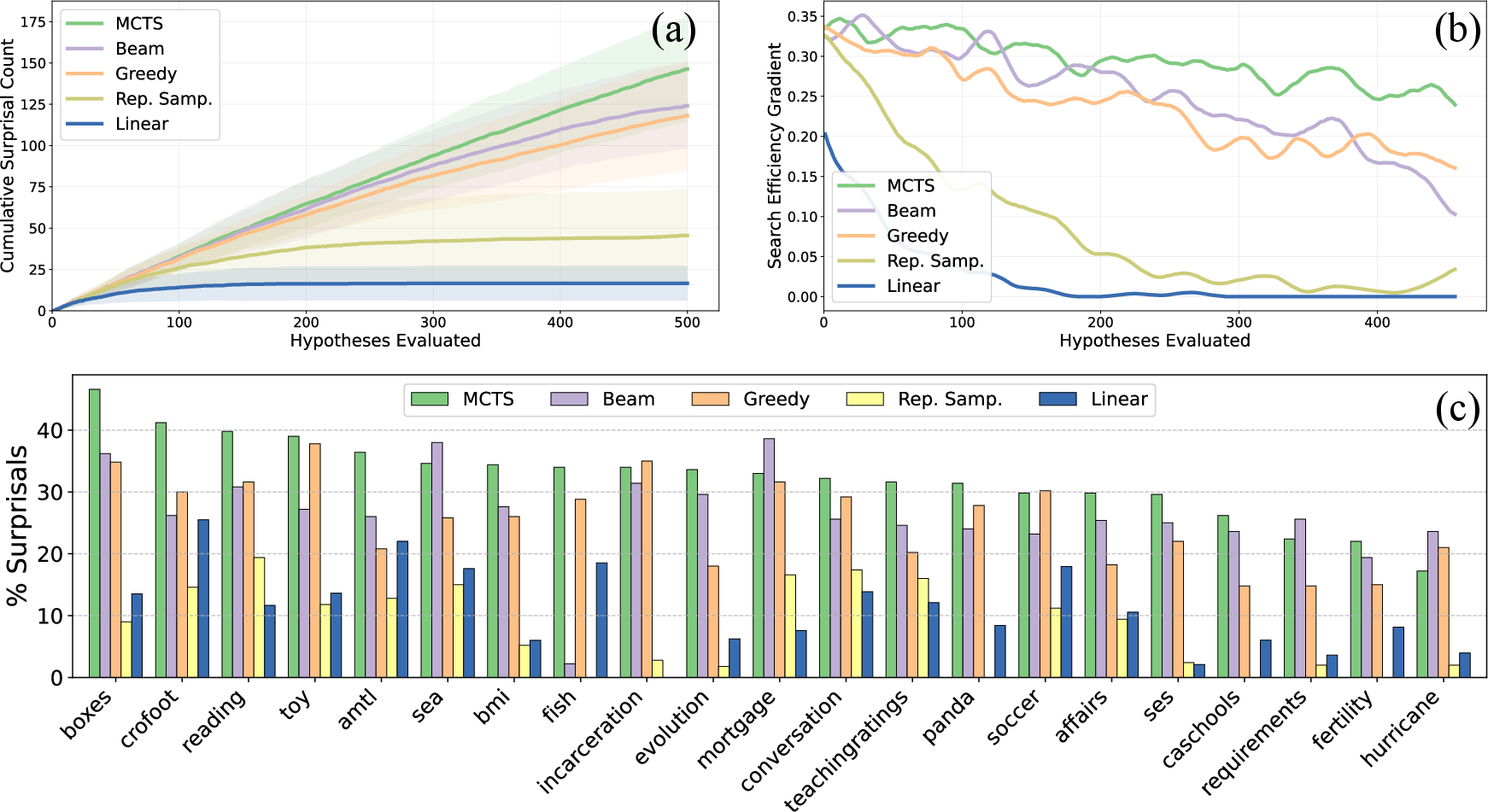

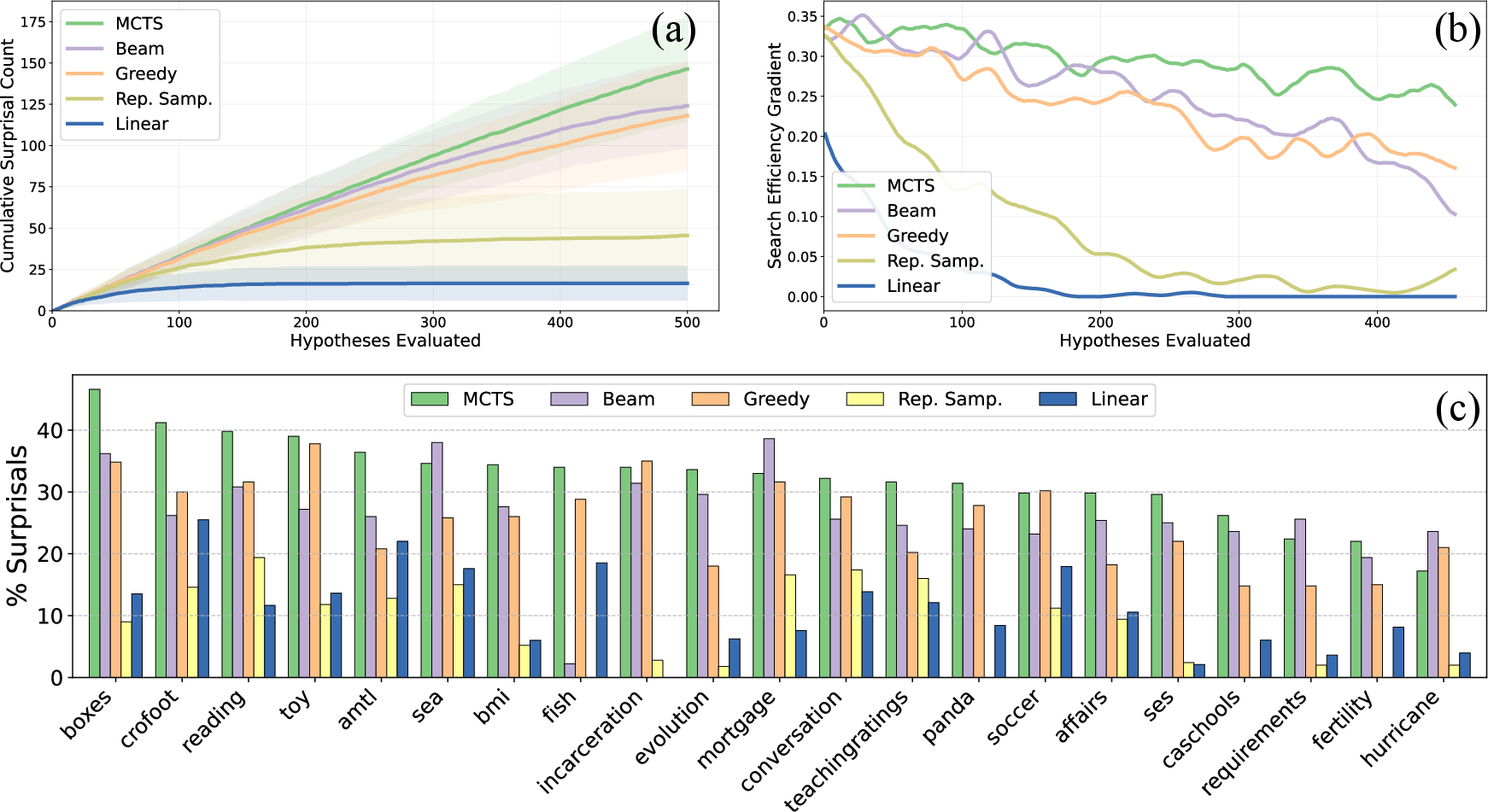

The authors evaluate AutoDS on 21 real-world datasets spanning domains such as biology, economics, and finance. Under a fixed evaluation budget, AutoDS discovers 5 to 29% more hypotheses that are surprising to the LLM than existing baselines, including other tree-based search algorithms. In a human evaluation, two-thirds of the hypotheses that AutoDS classified as surprising were also judged surprising by human experts.

Figure 2: Search Performance: AutoDS yields superior search efficiency and more surprises than baselines, including other tree-search methods.

Comparative Analysis and Baselines

AutoDS is compared against repeated sampling, linear sampling, greedy tree search, and beam search. The MCTS search in AutoDS outperformed all of these at discovering unique, surprising hypotheses. The results also indicate that Bayesian surprise is a more effective signal for navigating large hypothesis spaces than relying solely on diversity or on proxies for human interest.

Results with Alternative LLMs

The evaluation also covers alternative LLMs such as o4-mini, a reasoning model. Results with o4-mini suggest that reasoning models may improve the discovery of complex hypotheses worth investigating, pointing to a promising direction for exploiting different LLM capabilities in open-ended ASD frameworks.

Figure 3: Belief shift across datasets using AutoDS with Bayesian surprise, indicating differential evidence processing across domains.

Implications and Future Directions

AutoDS points toward more exploratory, open-ended methodologies in ASD. Using Bayesian surprise as a guiding principle could help systems discover impactful scientific hypotheses autonomously, and modeling LLMs as Bayesian observers may help bridge the gap between human-like curiosity and machine-driven exploration. Future work could integrate stronger reasoning capabilities and domain-specific knowledge bases so that the system generates deeper, more insightful hypotheses.

Conclusion

AutoDS advances open-ended ASD by making Bayesian surprise the central metric for hypothesis discovery, and its MCTS-based search outperforms alternative search strategies such as repeated sampling, greedy tree search, and beam search. The framework addresses the challenge of searching a vast hypothesis space, and its surprise judgments agree with those of human experts for two-thirds of the hypotheses it flags. More broadly, the work highlights Bayesian surprise as a promising way to strengthen the ASD capabilities of LLMs.