LLM-Agent-UMF: LLM-based Agent Unified Modeling Framework for Seamless Design of Multi Active/Passive Core-Agent Architectures

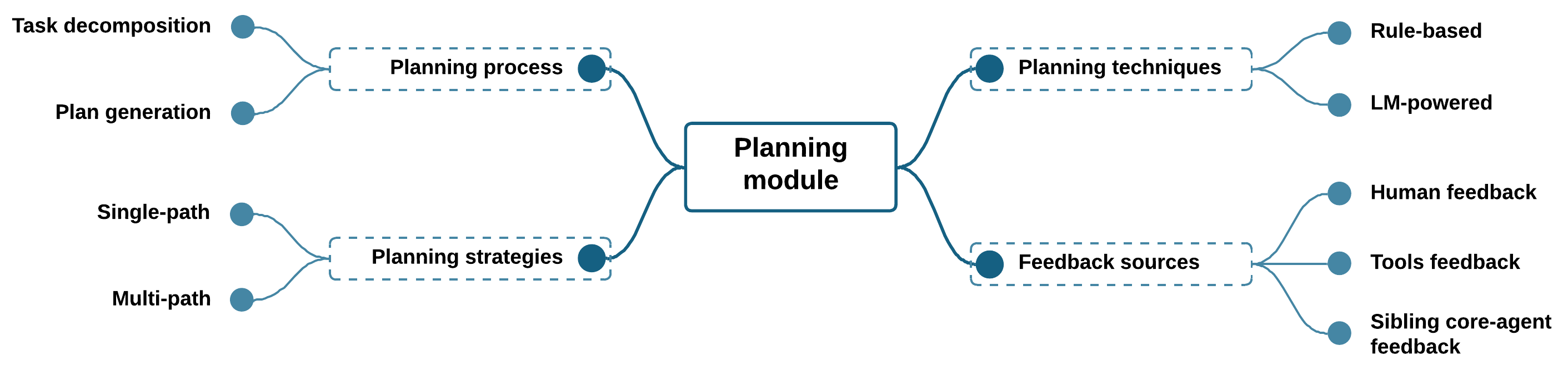

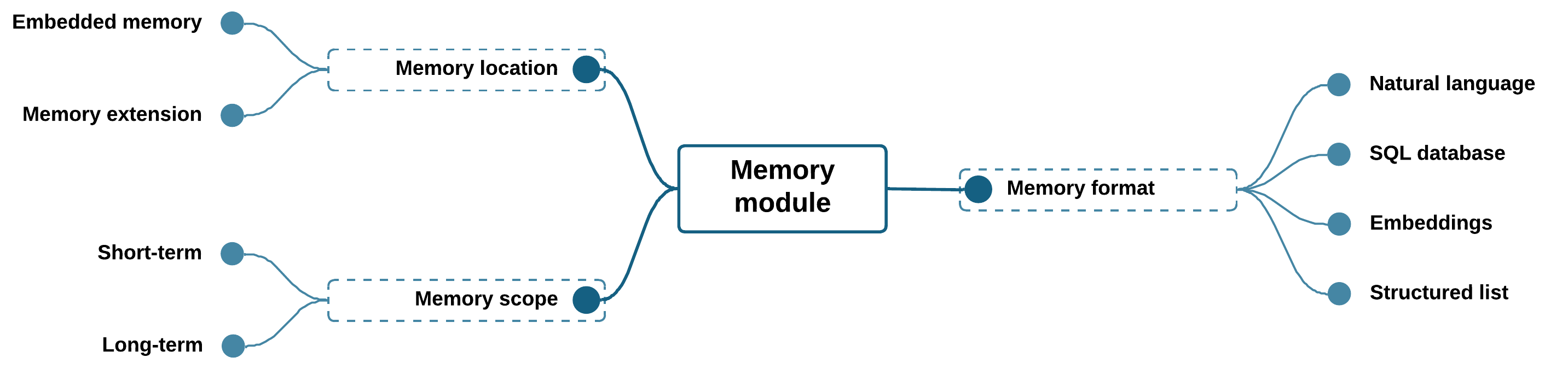

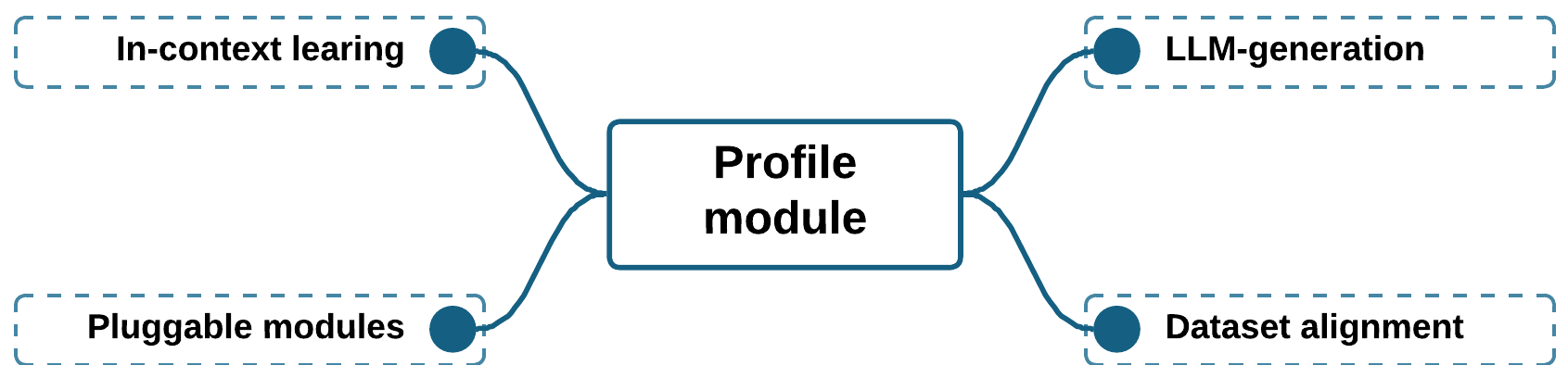

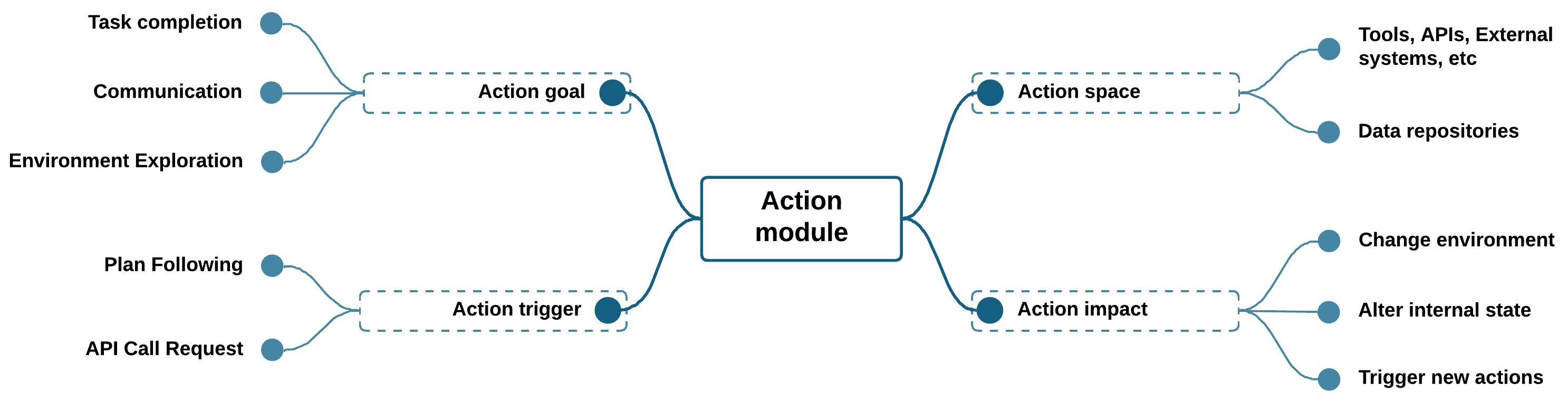

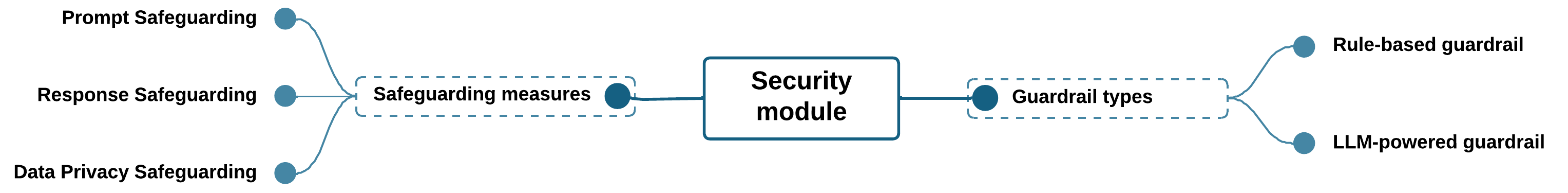

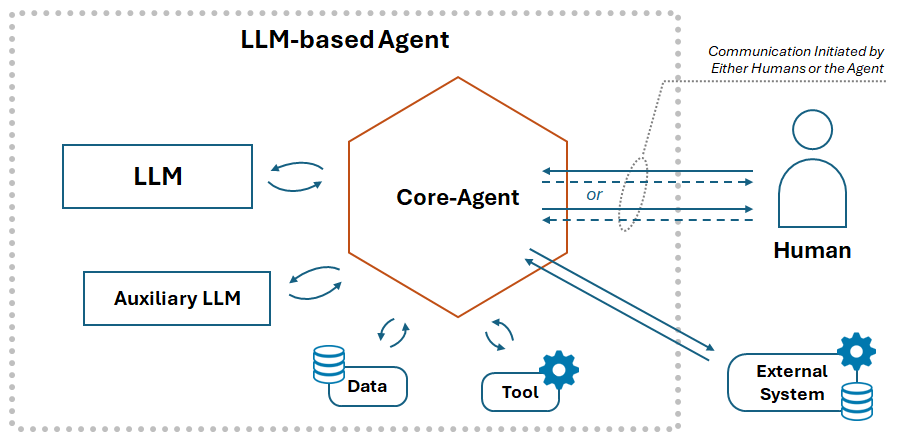

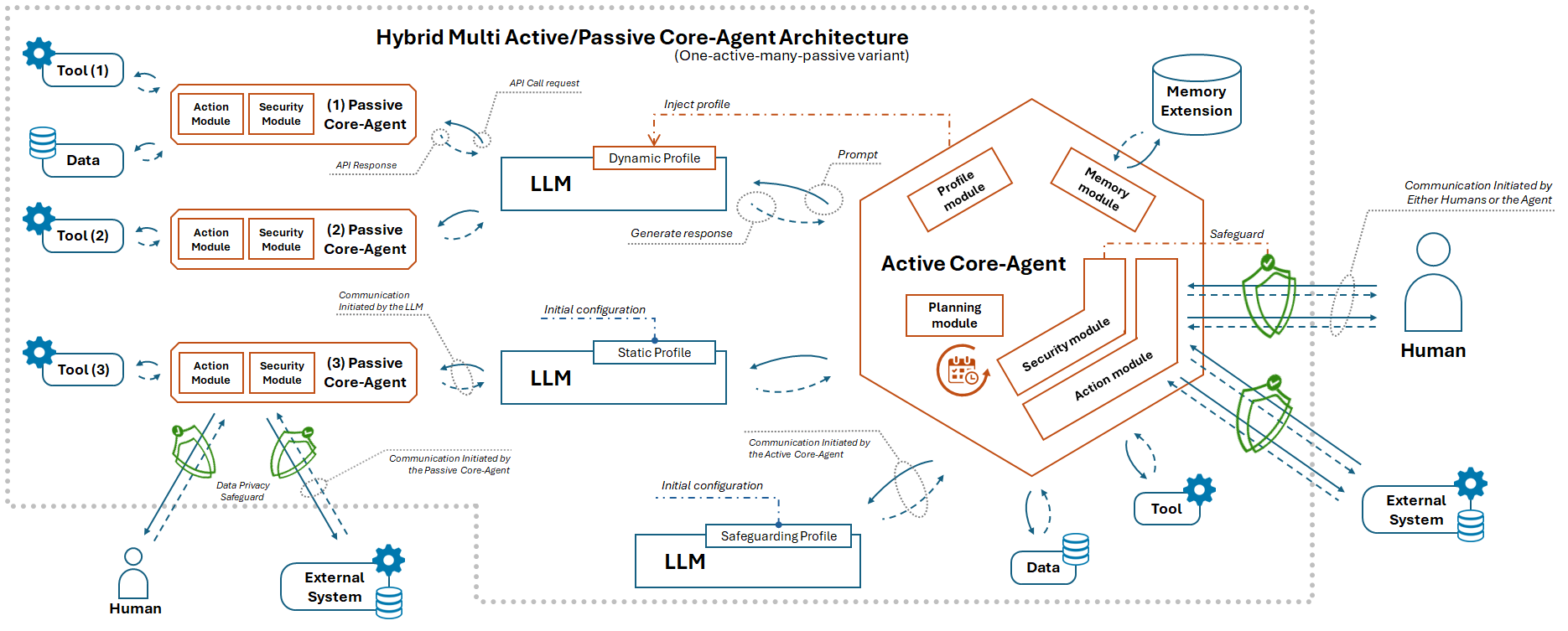

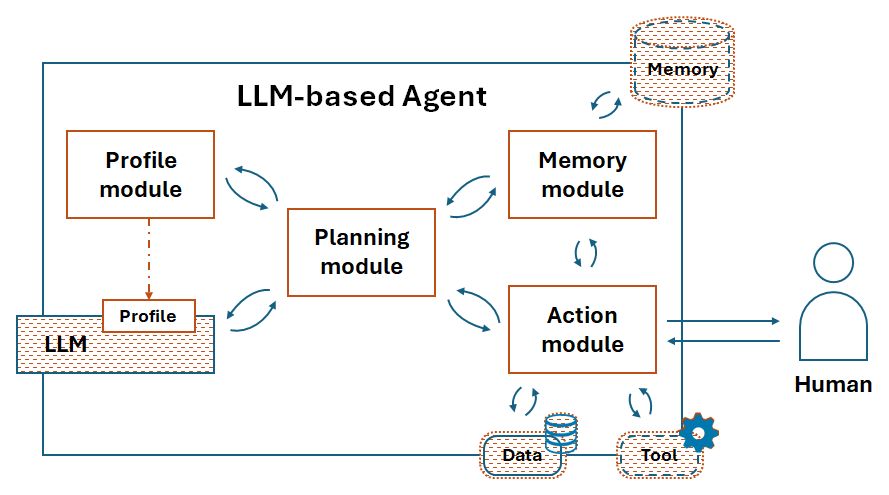

Abstract: In an era where vast amounts of data are collected and processed from diverse sources, there is a growing demand for sophisticated AI systems capable of intelligently fusing and analyzing this information. To address these challenges, researchers have turned towards integrating tools into LLM-powered agents to enhance the overall information fusion process. However, the conjunction of these technologies and the proposed enhancements in several state-of-the-art works followed a non-unified software architecture, resulting in a lack of modularity and terminological inconsistencies among researchers. To address these issues, we propose a novel LLM-based Agent Unified Modeling Framework (LLM-Agent-UMF) that establishes a clear foundation for agent development from both functional and software architectural perspectives, developed and evaluated using the Architecture Tradeoff and Risk Analysis Framework (ATRAF). Our framework clearly distinguishes between the different components of an LLM-based agent, setting LLMs and tools apart from a new element, the core-agent, which plays the role of central coordinator. This pivotal entity comprises five modules: planning, memory, profile, action, and security -- the latter often neglected in previous works. By classifying core-agents into passive and active types based on their authoritative natures, we propose various multi-core agent architectures that combine unique characteristics of distinctive agents to tackle complex tasks more efficiently. We evaluate our framework by applying it to thirteen state-of-the-art agents, thereby demonstrating its alignment with their functionalities and clarifying overlooked architectural aspects. Moreover, we thoroughly assess five architecture variants of our framework by designing new agent architectures that combine characteristics of state-of-the-art agents to address specific goals. ...


Knowledge Gaps

Unresolved Knowledge Gaps, Limitations, and Open Questions

The following list captures what remains missing, uncertain, or unexplored in the paper and offers concrete directions for future research:

- Lack of empirical validation: the framework is evaluated via AFTRAM scenarios, not through implemented agents or end-to-end deployments; quantitative experiments (latency, throughput, cost, accuracy) are absent.

- No benchmarking suite: there is no standardized set of tasks, datasets, and metrics to evaluate quality attributes (modularity, reusability, scalability, security) across architectures and agents.

- Optimality claim untested: the “one-active–many-passive” architecture is asserted as optimal without comparative empirical studies, ablation analyses, or formal proofs across diverse tasks and domains.

- Missing interface contracts: concrete API specifications, data schemas, and communication protocols for core-agent modules (planning, memory, profile, action, security) are not defined to enable interoperability and reuse.

- Ambiguous multi-core coordination: protocols for task allocation, synchronization, and conflict resolution among multiple core-agents (especially with more than one active core-agent) are not formalized.

- Concurrency control and consistency: strategies for handling concurrent plans/actions, deadlock avoidance, and consistency models (strong/eventual) across shared state are unspecified.

- Memory management policies: retention, eviction, deduplication, provenance tracking, and cross-core memory sharing semantics remain undefined; GDPR-compliant data lifecycle policies are not operationalized.

- Privacy compliance operationalization: the paper references PbD and trust boundaries, but lacks concrete Data Protection Impact Assessments, PII classification schemes, consent management, and auditable data flow mappings.

- Security threat model missing: a formal, task- and environment-specific threat model (assets, adversaries, attack surfaces, likelihood/impact) is not provided to guide guardrail design and evaluation.

- Guardrail efficacy unquantified: no red-team evaluations or precision/recall/FPR/FNR metrics for rule-based vs LLM-based guardrails; trade-offs between false positives and utility are not measured.

- Performance–security trade-offs: the runtime overhead and resource costs introduced by the security module (prompt/response filtering, privacy safeguards) are not quantified.

- Dynamic role switching: criteria, triggers, and safeguards for passive core-agents becoming active (and vice versa) at runtime are not specified; governance and escalation paths are unclear.

- Human-in-the-loop protocols: concrete workflows for human feedback (approval, override, auditing), conflict arbitration with agent plans, and consent mechanisms are not detailed.

- Tool trust and sandboxing: methods for dynamic tool discovery, capability-based permissions, sandbox execution, and tool reputation/trust scoring are not provided.

- Heterogeneous LLM orchestration: policies for capability-aware routing, fallback, versioning, and consistency across diverse LLMs (sizes, modalities, vendors) are not described.

- Multimodal integration gaps: handling of non-text inputs/outputs (vision, audio, code execution traces) and streaming/asynchronous modalities is not specified within module boundaries.

- Long-running and streaming tasks: lifecycle management for asynchronous actions, cancellations, retries, and partial results delivery remains undefined.

- Resilience and recovery mechanisms: although resilience/fault tolerance are quality requirements, concrete recovery strategies (checkpointing, failover, replication, backoff, incident response) are missing and explicitly deferred.

- Scalability limits and stress testing: upper bounds on the number of core-agents, throughput under load, and degradation patterns are not characterized via stress or chaos testing.

- Formal semantics for planning: the planning module’s processes/strategies lack a formal representation (DSLs, state machines, temporal logic) to enable verification and reproducible execution.

- Profile module deployment: practical guidance on fine-tuned pluggable modules (training data standards, packaging, safety vetting, compatibility guarantees) is absent.

- Migration path: there is no methodology or tooling to refactor existing agents (e.g., Autogen, LangGraph, LangChain-based systems) into the proposed core-agent architecture.

- Monitoring and MLOps integration: observability (logging, metrics, traces), drift/anomaly detection, configuration management, and rollback procedures are not operationalized.

- Ethical alignment measurement: methods and metrics to assess safety and ethical compliance (normative frameworks, value alignment tests) are not proposed.

- Standardization and adoption: how the new terminology (core-agent) and module delineations will be standardized, adopted, and measured for researcher/practitioner comprehension is unspecified.

- Cross-jurisdiction legal mapping: concrete compliance patterns for differing regulatory regimes (e.g., EU AI Act vs other jurisdictions), including auditing and documentation templates, are missing.

- Reference implementation: no open-source artifacts, exemplar code, or integration guides are provided to demonstrate feasibility, accelerate adoption, and enable reproducibility.

- Comparative analysis: systematic comparisons with existing architectural approaches (e.g., multi-agent frameworks, graph-based orchestrations) are qualitative; quantitative head-to-head evaluations are absent.

- Tool–memory interplay: policies to prevent semantic leakage via memory retrieval (prompt injection through retrieved content, tool outputs contaminating memory) are not detailed.

- Feedback source arbitration: when human/tool/sibling core-agent feedback conflicts, adjudication rules, prioritization policies, and escalation paths remain undefined.
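The guardrail-efficacy gap listed above names precision, recall, FPR, and FNR as the missing measurements. A minimal sketch of how those four rates could be computed from a labelled red-team set follows. The names `GuardrailCounts` and `guardrail_metrics` are hypothetical and do not come from the paper, and "positive" is assumed to mean "the guardrail blocked the prompt".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GuardrailCounts:
    """Confusion counts from a labelled evaluation set (illustrative only)."""
    true_positives: int   # harmful prompts that were blocked
    false_positives: int  # benign prompts that were blocked
    true_negatives: int   # benign prompts that were allowed
    false_negatives: int  # harmful prompts that were allowed


def _ratio(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def guardrail_metrics(c: GuardrailCounts) -> dict[str, float]:
    """Compute the four rates named in the guardrail-efficacy gap."""
    return {
        # Of everything blocked, how much was actually harmful.
        "precision": _ratio(c.true_positives, c.true_positives + c.false_positives),
        # Of everything harmful, how much was blocked.
        "recall": _ratio(c.true_positives, c.true_positives + c.false_negatives),
        # Of everything benign, how much was wrongly blocked (utility cost).
        "fpr": _ratio(c.false_positives, c.false_positives + c.true_negatives),
        # Of everything harmful, how much slipped through.
        "fnr": _ratio(c.false_negatives, c.false_negatives + c.true_positives),
    }
```

With made-up counts of 40 true positives, 10 false positives, 90 true negatives, and 10 false negatives, this yields a precision and recall of 0.8, an FPR of 0.1, and an FNR of 0.2. Running the same function over a rule-based and an LLM-based guardrail on the same set would give the side-by-side comparison the gap calls for.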

Glossary

- Active core-agent: An authoritative core-agent subtype that encompasses all five modules and leads planning and decision-making for complex tasks. "active (authoritative, encompassing all five modules for complex task management)"

- AFTRAM: A scenario-driven method within ATRAF for evaluating tradeoffs and risks in architectural frameworks. "we leveraged ATRAF's Architectural Framework Tradeoff and Risk Analysis Method (AFTRAM), identifying quality attribute goals, developing scenarios, and analyzing architectural risks"

- AGI: Artificial General Intelligence; a hypothesized form of AI capable of general, human-like intelligence across diverse tasks. "steppingstones towards AGI, offering hope for the development of AI agents that can adapt to diverse scenarios"

- ATRAF: Architecture Tradeoff and Risk Analysis Framework; a structured framework for evaluating architectural decisions and risks. "The Architecture Tradeoff and Risk Analysis Framework (ATRAF)~\cite{benhassouna2025atraf} provides a robust evaluation method"

- ATRAF-driven IMRaD Methodology: A methodology mapping ATRAF’s phases to the IMRaD academic structure for rigor and reproducibility. "per the ATRAF-driven IMRaD Methodology~\cite{benhassouna2025atrafimrad}"

- C.I.A. Triad: The security principle comprising Confidentiality, Integrity, and Availability to safeguard systems and data. "C.I.A. Triad: The Confidentiality, Integrity, and Availability (C.I.A.) triad serves as a foundational principle to ensure secure and reliable systems, safeguarding data confidentiality, maintaining data and process integrity, and ensuring system availability \cite{151}."

- Core-agent: The central coordinating component in an LLM-based agent, orchestrating LLMs, tools, and modules. "we introduce a new unit within the agent, which we label as the 'core-agent'"

- Defense in Depth: A security strategy that employs multiple layered controls to protect against threats. "Defense in Depth: Multiple layers of security controls are implemented to protect against threats, achieving Security and Integrity, enhancing system resilience \cite{175}."

- Embeddings: Vector representations of data used to capture semantic information, often for memory or retrieval. "data representation formats (natural language, embeddings, SQL databases, structured lists) \cite{133,127}."

- Fine-tuned Pluggable Modules: Role-defining components optimized via fine-tuning that can be attached to the core-agent to improve efficiency. "introduces a novel fourth method, Fine-tuned Pluggable Modules, which enhances Performance by reducing context size and memory footprint for efficient inference."

- Guardrails: Safety mechanisms or constraints that monitor and restrict agent behavior for secure operation. "notable absence of direct security measures or guardrails within the agents to ensure the protection of sensitive information and enhance overall system integrity."

- Hybrid Memory: A memory setup combining short-term and long-term storage within agents. "Unified Memory (short-term, in-context learning) and Hybrid Memory (short- and long-term)."

- In-Context Learning: A capability where LLMs learn or adapt during inference using examples within the prompt. "in-context learning"

- Jailbreaks: Attacks that coerce LLMs into bypassing safety constraints or producing disallowed content. "one critical risk involves jailbreaks which can be mitigated through the implementation of more robust monitoring and control mechanisms"

- Loose Coupling: An architectural principle reducing interdependencies among components to improve flexibility and maintainability. "Open-Closed Principle (OCP) and Loose Coupling: Systems are open for extension but closed for modification, with components designed to have minimal dependencies, supporting Modifiability, Maintainability, Scalability, Flexibility, and Extensibility."

- LLM-Agent-UMF: The proposed LLM-based Agent Unified Modeling Framework that delineates agent components and interactions. "we propose the LLM-based Agent Unified Modeling Framework (LLM-Agent-UMF)."

- Memory-augmented planning: Planning techniques that incorporate stored information from memory to improve task decomposition and decisions. "enabling collaboration with sibling modules, such as memory for memory-augmented planning \cite{108}."

- Multi-core agent: An agent architecture composed of multiple core-agents (active or passive) that cooperate to handle tasks. "introduce various multi-core agent architectures"

- Open-Closed Principle (OCP): A design principle where components are open for extension but closed for modification. "Open-Closed Principle (OCP) and Loose Coupling: Systems are open for extension but closed for modification"

- Passive core-agent: A non-authoritative core-agent subtype focused on execution with limited modules (action and security). "passive (non-authoritative, limited to action and security modules for simplified execution)"

- Privacy by Design (PbD): An approach integrating privacy protections from the outset of system design. "Privacy by Design (PbD): Privacy considerations are integrated from the start to achieve Privacy, protecting sensitive data and aligning with data protection standards~\cite{delreal2024sss, obiokafor2025integrating}."

- Prompt learning: Techniques for training or adapting models via prompts, often with privacy-preserving methods. "privacy-preserving algorithms for prompt learning"

- Rethinking module: A reflective component (from prior frameworks) that guides future actions based on introspection and feedback. "'Rethinking' module, functionally akin to planning, guides future actions through introspection on past actions and feedback"

- Security by Design (SbD): A principle embedding security considerations throughout the development lifecycle. "Security by Design (SbD): Security is integrated into the development process from the outset to achieve Security and Integrity, ensuring robust protection against threats \cite{151, giorgini2005}."

- Single Responsibility Principle (SRP): A design principle where each module has one distinct responsibility to reduce overlap. "Modularity, Single Responsibility Principle (SRP), and Separation of Concerns: Systems are broken into smaller, independent modules, each with a single responsibility and distinct functionality, to enhance Maintainability, Scalability, Reusability, Modifiability, and Performance."

- Technology-Agnostic Design: An approach that avoids binding the framework to specific technologies to maximize flexibility and interoperability. "Technology-Agnostic Design: The framework avoids dependency on specific technologies to promote Flexibility, Maintainability, and Interoperability"

- Tool-augmented LLMs: LLMs enhanced with the ability to call external tools and APIs to perform tasks beyond text generation. "tool-augmented LLMs are a major advancement in NLP that combine the language understanding and generation capabilities of LLMs with the ability to interface with external tools and Application Programming Interfaces (APIs)."

- Trust Boundaries Definition: Explicitly defined zones controlling data and access to ensure security and compliance. "Trust Boundaries Definition: Zones of trust are clearly defined to manage security and access control, supporting Security, Integrity, and Privacy, ensuring compliance with data protection requirements~\cite{112}."

- Variability Management: Systematic handling of configuration and requirement differences across agent designs. "Variability Management: Variations in requirements or configurations are handled systematically, supporting Flexibility, Extensibility, Maintainability, and Reusability, accommodating diverse agent designs."
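The glossary entries for active, passive, and multi-core agents can be made concrete with a small data model. This is a sketch under stated assumptions, not the paper's implementation (the knowledge-gaps list notes that no reference implementation exists). The class and function names (`Module`, `CoreAgent`, `MultiCoreAgent`, `is_one_active_many_passive`) are hypothetical. It encodes only what the glossary states: an active core-agent carries all five modules, and a passive one is limited to action and security.

```python
from dataclasses import dataclass
from enum import Enum


class Module(Enum):
    """The five core-agent modules named in the abstract."""
    PLANNING = "planning"
    MEMORY = "memory"
    PROFILE = "profile"
    ACTION = "action"
    SECURITY = "security"


# Module sets per core-agent type, taken from the glossary definitions.
ACTIVE_MODULES = frozenset(Module)
PASSIVE_MODULES = frozenset({Module.ACTION, Module.SECURITY})


@dataclass(frozen=True)
class CoreAgent:
    """Hypothetical model of a core-agent as a named set of modules."""
    name: str
    modules: frozenset

    @property
    def is_active(self) -> bool:
        # Active means authoritative: all five modules are present.
        return self.modules == ACTIVE_MODULES


def active(name: str) -> CoreAgent:
    return CoreAgent(name, ACTIVE_MODULES)


def passive(name: str) -> CoreAgent:
    return CoreAgent(name, PASSIVE_MODULES)


@dataclass
class MultiCoreAgent:
    """An agent composed of several cooperating core-agents."""
    cores: list

    def active_cores(self) -> list:
        return [c for c in self.cores if c.is_active]

    def is_one_active_many_passive(self) -> bool:
        # Exactly one authoritative core plus at least one passive core.
        return len(self.active_cores()) == 1 and len(self.cores) > 1
```

For example, `MultiCoreAgent([active("planner"), passive("search"), passive("code")])` satisfies `is_one_active_many_passive()`, while a configuration with two active core-agents does not. The latter is the case for which the knowledge-gaps list says coordination protocols remain unformalized.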
