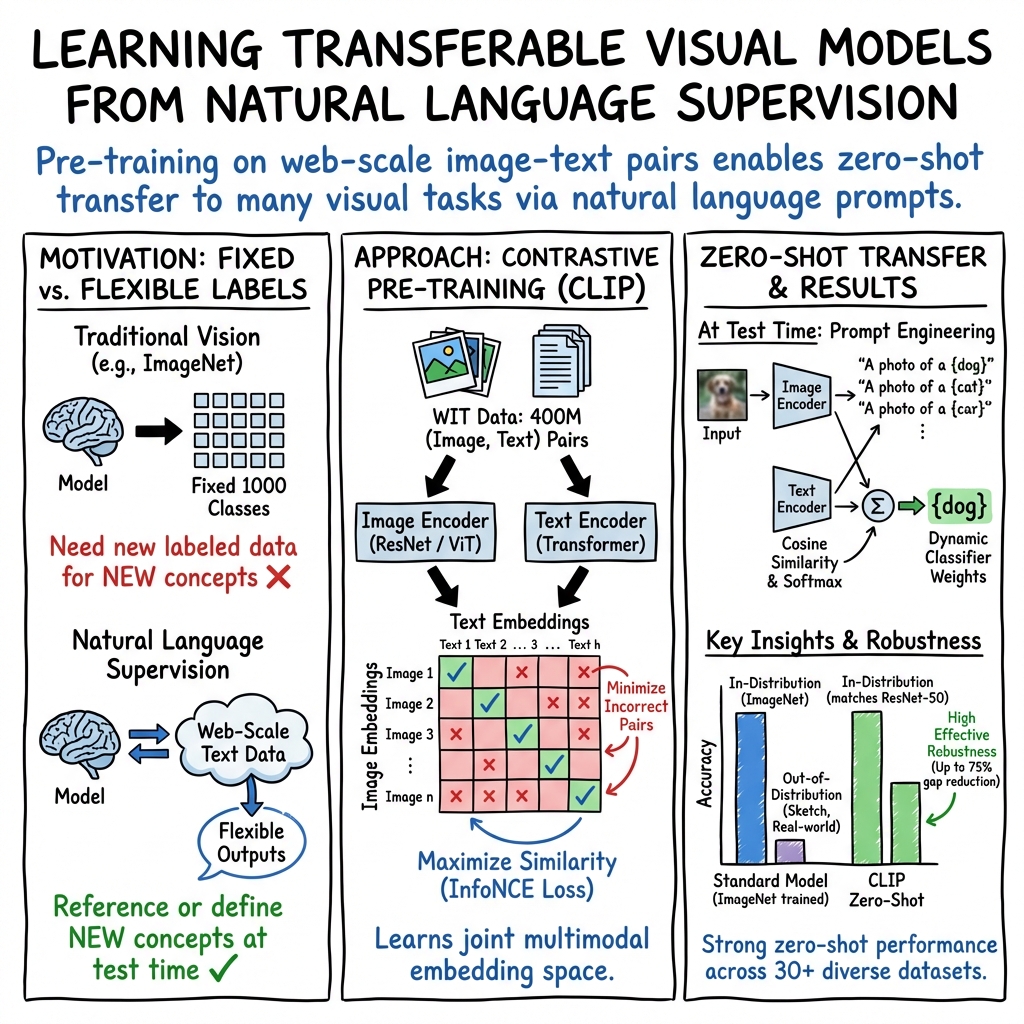

- The paper introduces CLIP, a novel framework that uses natural language supervision to learn visual representations without relying on extensive labeling.

- The paper details a contrastive pre-training method that jointly optimizes image and text encoders using cosine similarity to match correct pairs.

- The paper demonstrates CLIP’s scalability and versatility by achieving competitive zero-shot performance across various visual tasks.

Analyzing the Potential of Learning Transferable Visual Models from Natural Language Supervision

In the rapidly evolving field of deep learning, the search for efficient and robust methods for learning visual models continues. The paper Learning Transferable Visual Models From Natural Language Supervision introduces an approach that trains computer vision systems on the large volume of images paired with text on the web, rather than on manually labeled datasets. The method uses natural language supervision, derived from raw text about images, to learn visual representations, with the aim of overcoming the limitations of conventional supervised learning.

Introduction to Natural Language Supervision for Visual Learning

State-of-the-art computer vision systems are typically trained to predict a fixed set of predetermined object categories, so specifying any other visual concept requires additional labeled data. This paper introduces a scalable alternative: a model is pre-trained to predict which caption goes with which image, using a dataset of 400 million (image, text) pairs collected from the internet. After pre-training, natural language is used to reference learned visual concepts (or describe new ones), enabling zero-shot transfer of the model to downstream tasks.

Methodology and Approach

The proposed method, dubbed CLIP (Contrastive Language-Image Pre-training), moves away from traditional training objectives. Instead of predicting the exact words of the text accompanying each image, CLIP is trained on the easier proxy task of predicting which text in a batch is paired with which image. The paper reports that replacing the predictive objective with this contrastive one gave a roughly 4x efficiency improvement in zero-shot transfer to ImageNet.

CLIP consists of two main components, an image encoder and a text encoder, which are trained jointly. The training objective is to maximize the cosine similarity of the image and text embeddings of the N real pairs in a batch, while minimizing the similarity of the N² - N incorrect pairings. Because this objective needs only (image, text) pairs rather than curated labels, it scales well, which allows the behavior of image classifiers trained with natural language supervision to be studied at a much larger scale than in previous work.
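The batch objective described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: it assumes the embeddings have already been produced by the two encoders, it uses a symmetric cross-entropy over the N × N similarity matrix (as in the paper's pseudocode), and it fixes the temperature at 0.07, whereas the paper learns it during training. The function names are hypothetical.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Scale each row to unit length so dot products equal cosine similarities."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def clip_style_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric contrastive loss for N paired embeddings of shape [N, d].

    Row i of image_emb and row i of text_emb are assumed to be a real pair;
    every other combination in the batch is treated as an incorrect pairing.
    The fixed temperature is an assumption made for this sketch.
    """
    img = l2_normalize(image_emb)
    txt = l2_normalize(text_emb)
    logits = img @ txt.T / temperature      # [N, N] scaled cosine similarities
    diag = np.arange(logits.shape[0])       # indices of the N real pairs
    # Image -> text: each row should put its probability mass on the diagonal.
    loss_i2t = -log_softmax(logits, axis=1)[diag, diag].mean()
    # Text -> image: the same requirement, applied to each column.
    loss_t2i = -log_softmax(logits, axis=0)[diag, diag].mean()
    return (loss_i2t + loss_t2i) / 2
```

Minimizing this loss pushes the N diagonal similarities up and the N² - N off-diagonal similarities down, which is the behavior the objective calls for. In a real training loop the loss would be computed in an autodiff framework so that gradients flow back into both encoders.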

Theoretical and Practical Implications

The paper explores both the practical and theoretical underpinnings of learning from natural language supervision. It examines the scalability of CLIP by training a series of models spanning nearly two orders of magnitude in compute, and finds that transfer performance is a smoothly predictable function of compute.

On the practical side, CLIP exhibits remarkable versatility across a wide array of visual tasks, including OCR, geo-localization, and action recognition in videos. It is often competitive with task-specific, fully supervised baseline models without requiring any dataset-specific training data. This ability to generalize through zero-shot inference has notable implications for the deployment of vision systems across diverse applications.
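Zero-shot inference of the kind described above can be sketched as a nearest-prompt lookup in the shared embedding space. The example below is a minimal illustration under stated assumptions: the function name is hypothetical, the toy 3-dimensional vectors stand in for real encoder outputs, and the temperature value is illustrative. The prompt template "a photo of a {label}" follows the one used in the paper.

```python
import numpy as np

def zero_shot_classify(image_emb, class_text_embs, temperature=0.07):
    """Pick the class whose text embedding is most similar to the image.

    image_emb:       [d] embedding of one image (assumed to come from the image encoder)
    class_text_embs: [C, d] embeddings of one text prompt per class
    Returns the winning class index and the softmax probabilities over classes.
    """
    img = image_emb / np.linalg.norm(image_emb)
    txt = class_text_embs / np.linalg.norm(class_text_embs, axis=1, keepdims=True)
    logits = txt @ img / temperature        # [C] scaled cosine similarities
    logits = logits - logits.max()          # stabilize the softmax
    probs = np.exp(logits) / np.exp(logits).sum()
    return int(np.argmax(probs)), probs

class_names = ["dog", "cat", "car"]
prompts = [f"a photo of a {name}" for name in class_names]

# Toy vectors standing in for text_encoder(prompts) and image_encoder(image);
# both encoders are hypothetical here and are not called.
class_text_embs = np.array([[1.0, 0.0, 0.0],
                            [0.0, 1.0, 0.0],
                            [0.0, 0.0, 1.0]])
image_emb = np.array([0.1, 0.9, 0.2])

idx, probs = zero_shot_classify(image_emb, class_text_embs)
print(class_names[idx])  # prints "cat"
```

No task-specific training happens in this step: changing the classifier only requires writing a new list of class names, which is what makes the approach applicable to tasks the model was never explicitly trained on.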

Future Developments and Challenges

While CLIP represents a significant step forward, it is not without limitations. Its performance, although competitive, still lags behind state-of-the-art models specifically trained on large labeled datasets for certain tasks. Additionally, the reliance on vast amounts of data for pre-training raises questions about efficiency and sustainability.

Furthermore, the paper raises ethical concerns about training on unfiltered internet data, in particular the potential for the model to learn social biases. Addressing these biases, improving data efficiency, and closing the performance gap on specialized tasks are outlined as key areas for future research.

Conclusion

CLIP introduces a new approach to learning visual representations that draws on the abundance of text descriptions associated with images on the internet. By using natural language as a source of supervision, it points toward flexible, robust, and broadly applicable visual models. Despite its limitations, the methodology makes a compelling case for a direction in computer vision research that exploits the connection between language and vision.