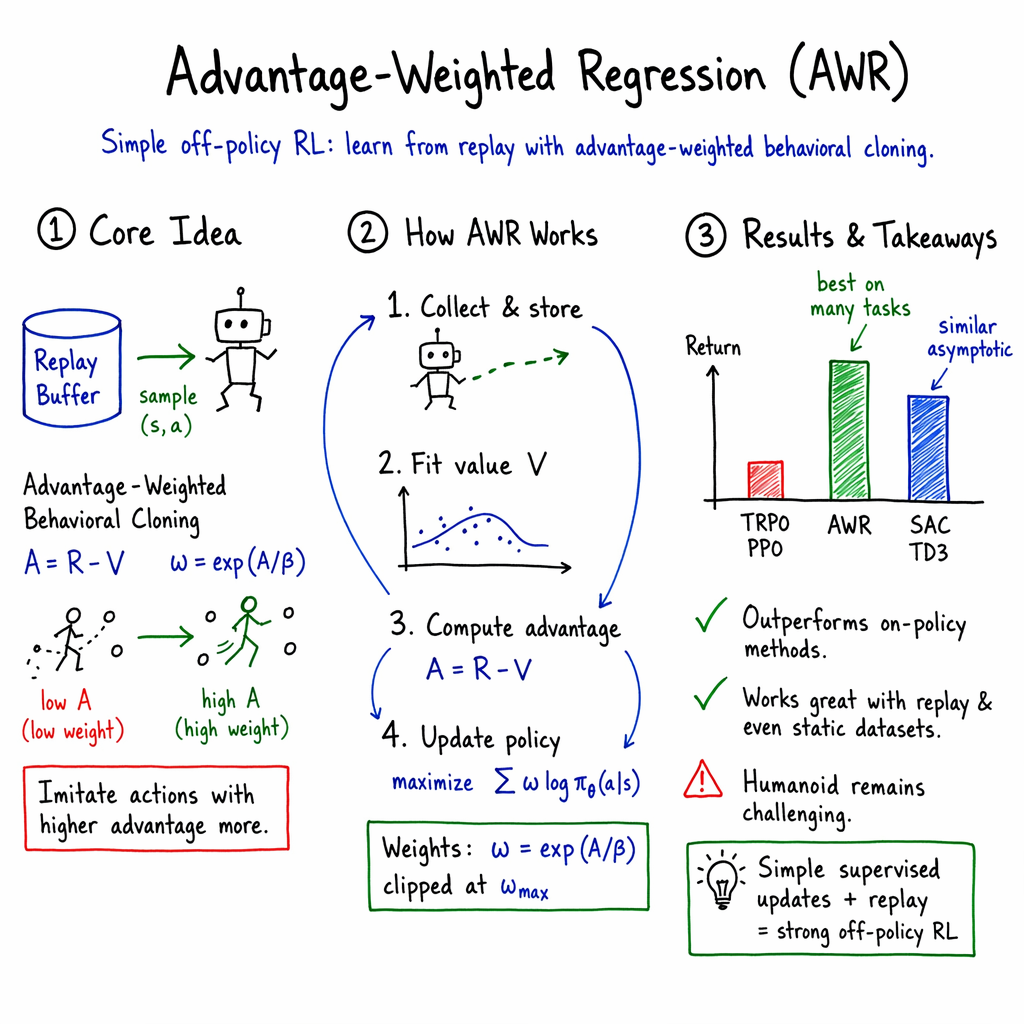

- The paper introduces Advantage-Weighted Regression (AWR), a method that reframes policy updates as weighted regression to efficiently utilize off-policy data.

- It employs two supervised learning steps—one for value function regression and another for policy action regression—across both continuous and discrete action spaces.

- Empirical results on OpenAI Gym benchmarks demonstrate that AWR achieves competitive performance with state-of-the-art RL algorithms while improving sample efficiency and stability.

Overview of Advantage-Weighted Regression: Simple and Scalable Off-Policy Reinforcement Learning

The paper "Advantage-Weighted Regression: Simple and Scalable Off-Policy Reinforcement Learning" by Xue Bin Peng et al. presents a reinforcement learning (RL) algorithm designed for simplicity and scalability. The method, Advantage-Weighted Regression (AWR), builds each policy update from standard supervised learning steps and can reuse off-policy data, which improves sample efficiency.

Technical Approach

AWR consists of two primary supervised learning steps: one for regressing onto target values for the value function and another for performing weighted regression onto target actions for the policy. The algorithm operates with both continuous and discrete action spaces, demonstrating its versatility across various RL tasks.

The approach relies only on simple maximum likelihood loss functions. The authors emphasize this ease of implementation, claiming that the method needs only a small amount of code on top of existing supervised learning frameworks.
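The two regression steps described above can be sketched in a few lines of NumPy. This is a minimal illustration for a discrete action space, not the authors' implementation: the function names, the temperature `beta`, and the weight cap `max_weight` (with their default values) are assumptions introduced here.

```python
import numpy as np

def value_loss(values, returns):
    """Step 1: squared-error regression of predicted values onto observed returns."""
    return 0.5 * np.mean((returns - values) ** 2)

def awr_weights(values, returns, beta=1.0, max_weight=20.0):
    """Exponentiated advantages; beta and max_weight are assumed hyperparameters."""
    advantages = returns - values
    # Clip the weights so a single large advantage cannot dominate the batch.
    return np.minimum(np.exp(advantages / beta), max_weight)

def log_softmax(logits):
    """Numerically stable log-softmax over the last (action) axis."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))

def policy_loss(logits, actions, weights):
    """Step 2: weighted negative log-likelihood of the actions that were taken."""
    log_probs = log_softmax(logits)[np.arange(len(actions)), actions]
    return -np.mean(weights * log_probs)

# Tiny hand-made batch: three states, two actions.
returns = np.array([1.0, 0.0, 2.0])
values = np.array([0.5, 0.5, 0.5])
logits = np.zeros((3, 2))          # uniform policy
actions = np.array([0, 1, 0])

weights = awr_weights(values, returns)
v_loss = value_loss(values, returns)
p_loss = policy_loss(logits, actions, weights)
```

In a real training loop both losses would be minimized by gradient descent on the value and policy networks. For continuous actions, the log-softmax term would be replaced by the log-density of the policy's action distribution (for example a Gaussian).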

Theoretical Foundations and Methodology

The authors provide a theoretical foundation for AWR and analyze its properties when off-policy data is incorporated through experience replay. The derivation positions AWR as an approximation of a constrained policy optimization procedure, in which the policy is fit to observed actions with each sample weighted by its exponentiated advantage.
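For reference, the constrained optimization view can be written out as follows. This is a sketch in commonly used notation, and the symbols are assumptions not defined in this summary: $\mu$ is the behavior policy that generated the data, $d_\mu$ its state distribution, $R^{\mu}_{s,a}$ the return observed after taking action $a$ in state $s$, $V^{\mu}$ the value function of $\mu$, $\epsilon$ the divergence budget, and $\beta > 0$ a temperature that arises as the Lagrange multiplier.

$$
\max_{\pi}\; \mathbb{E}_{s \sim d_\mu}\, \mathbb{E}_{a \sim \pi(\cdot \mid s)}\big[ R^{\mu}_{s,a} - V^{\mu}(s) \big]
\quad \text{s.t.} \quad
\mathbb{E}_{s \sim d_\mu}\big[ D_{\mathrm{KL}}\big(\pi(\cdot \mid s)\,\|\,\mu(\cdot \mid s)\big) \big] \le \epsilon
$$

Relaxing the constraint with the multiplier $\beta$ gives an optimal policy of the form

$$
\pi^{*}(a \mid s) \;\propto\; \mu(a \mid s)\, \exp\!\Big(\tfrac{1}{\beta}\big(R^{\mu}_{s,a} - V^{\mu}(s)\big)\Big),
$$

and projecting $\pi^{*}$ onto a parametric policy class yields the weighted regression problem

$$
\pi_{\text{new}} = \arg\max_{\pi}\; \mathbb{E}_{s \sim d_\mu}\, \mathbb{E}_{a \sim \mu(\cdot \mid s)}\Big[ \log \pi(a \mid s)\, \exp\!\Big(\tfrac{1}{\beta}\big(R^{\mu}_{s,a} - V^{\mu}(s)\big)\Big) \Big].
$$

A small $\beta$ concentrates the weight on the highest-advantage actions, while a large $\beta$ keeps the new policy close to $\mu$.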

A key component of the method is its use of experience replay: the algorithm trains on data collected by previous policy iterations as well as the current one, which improves sample efficiency. The paper describes the learned value function as a single approximator of the mean of the past policies' value functions over the replay data, which contributes to stable updates even when much of the data is old.
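A replay buffer of this kind might look like the sketch below. It is an illustration under stated assumptions: the `ReplayBuffer` class, its `capacity`, the discount `gamma`, and the use of plain Monte Carlo returns are choices made here for brevity, and the paper's return estimator may differ.

```python
from collections import deque
import numpy as np

def discounted_returns(rewards, gamma=0.99):
    """Monte Carlo return R_t = sum_k gamma^k * r_{t+k} for one trajectory."""
    returns = np.zeros(len(rewards))
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns

class ReplayBuffer:
    """FIFO store of (state, action, return) tuples gathered by past policies."""

    def __init__(self, capacity):
        # deque with maxlen silently evicts the oldest samples when full.
        self.data = deque(maxlen=capacity)

    def add_trajectory(self, states, actions, rewards, gamma=0.99):
        rets = discounted_returns(rewards, gamma)
        for s, a, r in zip(states, actions, rets):
            self.data.append((s, a, r))

    def sample(self, batch_size, rng):
        """Draw a uniform random minibatch (with replacement)."""
        idx = rng.integers(len(self.data), size=batch_size)
        states, actions, returns = zip(*(self.data[i] for i in idx))
        return np.array(states), np.array(actions), np.array(returns)

# Two short trajectories from (hypothetically) different policy iterations.
buffer = ReplayBuffer(capacity=4)
buffer.add_trajectory([[0.0], [0.1], [0.2]], [0, 1, 0], [1.0, 1.0, 1.0], gamma=0.5)
buffer.add_trajectory([[0.3], [0.4], [0.5]], [1, 1, 0], [0.0, 0.0, 2.0], gamma=0.5)

rng = np.random.default_rng(0)
states, actions, returns = buffer.sample(8, rng)
```

Because the value function is regressed onto returns sampled from the whole buffer, it is fit to a mixture of data from several past policies rather than to the latest policy alone.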

Evaluation and Results

The performance of AWR was empirically validated on a suite of OpenAI Gym benchmarks. The findings indicate that AWR achieves performance competitive with well-established RL algorithms such as TRPO, PPO, DDPG, and SAC. AWR also outperforms several off-policy RL algorithms when trained on static datasets with no additional environment interaction.

Additionally, the algorithm successfully learns complex control tasks involving high-dimensional simulated characters.

Implications and Future Directions

The implications of AWR are significant for the reinforcement learning domain, particularly in simplifying off-policy learning. By reducing the complexity of RL implementation while maintaining, or even enhancing, performance on competitive benchmarks, AWR presents a viable alternative for both research applications and practical deployments.

Although AWR shows promising results, its sample efficiency leaves room for improvement, an area where some existing off-policy algorithms may still excel. Future work could focus on integrating more advanced optimization techniques or adaptive mechanisms to refine the balance between stability and learning speed, particularly when dealing with fully off-policy datasets.

Overall, AWR serves as an insightful contribution to the field, offering a streamlined yet effective approach for reinforcement learning practitioners.