- The paper introduces the EON model that reformulates Boltzmann machines using entropic layers for gradient-descent-free learning.

- It employs a non-equilibrium, entropy-optimizing approach to achieve computational efficiency and high prediction accuracy in regression and classification tasks.

- The model demonstrates robust performance in small-data scenarios, bioinformatics, and ENSO prediction while mitigating overfitting and overconfidence.

An entropy-optimal path to humble AI

Introduction

The paper introduces a novel reformulation of Boltzmann machines, leveraging a non-equilibrium entropy-optimizing approach based on the law of total probability and convex polytope representations. The proposed EON model delivers high-performing, computationally efficient machine learning solutions with gradient-descent-free learning. It targets the high cost, overconfidence, and computational inefficiency prevalent in modern AI models.

EON Model Architecture

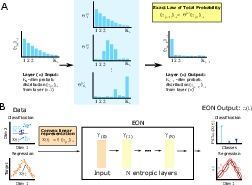

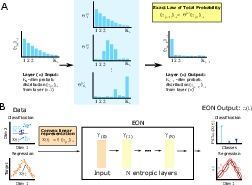

The EON model replaces classical feedforward network layers with entropic layers. These layers compute probabilistic transformations without backpropagation or gradient descent: they rely on an exact formulation of probabilistic relationships, which allows closed-form solutions at each layer.

Figure 1: Illustration of the structure of the proposed EON model, featuring entropic layers and probabilistic outputs for classification and regression tasks.
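To make the role of the law of total probability concrete, one way such a probabilistic layer can be written is shown below. This is a sketch, not the paper's exact formulation: the latent discrete state $Z$, the number of states $K$, the matrix $\Lambda$, and the affiliation vector $\gamma(x)$ are notation assumed here for illustration, and the first equality assumes $Y$ is conditionally independent of $X$ given $Z$.

```latex
\begin{aligned}
P(Y = y \mid X = x)
  &= \sum_{k=1}^{K} P(Y = y \mid Z = k)\, P(Z = k \mid X = x)
   = \sum_{k=1}^{K} \Lambda_{y,k}\, \gamma_k(x), \\
\gamma(x) &\in \Delta^{K-1}
   = \Big\{ \gamma \in \mathbb{R}^{K} : \gamma_k \ge 0,\ \sum_{k=1}^{K} \gamma_k = 1 \Big\}.
\end{aligned}
```

The probability simplex $\Delta^{K-1}$ is a convex polytope, which is one way to read the summary's reference to convex polytope representations.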

The EON model is formulated as a series of constrained optimization problems, each solvable analytically. It employs probabilistic distance measures and non-equilibrium settings, diverging from the equilibrium assumptions of traditional Boltzmann machines (BMs). This approach enhances computational efficiency and robustness, both essential in small-data learning scenarios where overfitting is a concern.
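The idea of replacing gradient descent with analytically solvable subproblems can be illustrated with a minimal Python sketch. It is not the authors' algorithm; it only shows two standard closed-form steps of the kind described above. First, minimizing a distance term plus a negative-entropy penalty over the probability simplex has the softmax as its exact solution. Second, a conditional probability matrix can be estimated by weighted frequency counts and combined with the affiliations via the law of total probability. The function names (`soft_assign`, `fit_conditional`, `predict_proba`), the fixed `centers`, the regularization weight `eps`, and the toy data are all assumptions made for illustration.

```python
import numpy as np

def soft_assign(X, centers, eps):
    """Closed-form minimizer over the simplex of
    sum_k g_k * d_k + eps * sum_k g_k * log(g_k),
    where d_k is the squared distance to center k (result: a softmax)."""
    d = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)  # (n, K)
    logits = -d / eps
    logits -= logits.max(axis=1, keepdims=True)  # numerical stability
    g = np.exp(logits)
    return g / g.sum(axis=1, keepdims=True)

def fit_conditional(gamma, y, n_classes):
    """Weighted-frequency estimate of Lam[c, k] ~ P(Y=c | Z=k); no gradients."""
    onehot = np.eye(n_classes)[y]        # (n, C)
    counts = onehot.T @ gamma + 1e-12    # (C, K), tiny smoothing term
    return counts / counts.sum(axis=0, keepdims=True)

def predict_proba(gamma, Lam):
    """Law of total probability: P(y|x) = sum_k Lam[y, k] * gamma_k(x)."""
    return gamma @ Lam.T

# Toy data (assumed): two Gaussian blobs with known, fixed centers.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-2.0, 1.0, size=(50, 2)),
               rng.normal(2.0, 1.0, size=(50, 2))])
y = np.repeat([0, 1], 50)
centers = np.array([[-2.0, -2.0], [2.0, 2.0]])

gamma = soft_assign(X, centers, eps=1.0)
Lam = fit_conditional(gamma, y, n_classes=2)
proba = predict_proba(gamma, Lam)
accuracy = (proba.argmax(axis=1) == y).mean()
```

Because the rows of `gamma` and the columns of `Lam` each sum to one, every row of `proba` is a valid probability distribution. Larger values of `eps` push the affiliations toward the uniform distribution, which is one simple way an entropy term can temper overconfident predictions.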

Regression Benchmarks

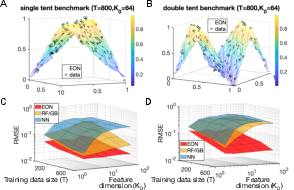

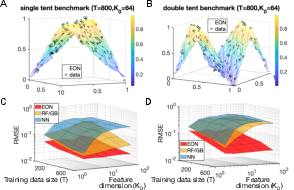

The EON model achieves lower RMSE than neural networks and ensemble models such as random forests (RF) and gradient boosting (GB) across different regression benchmarks. It maintains accurate predictions with fewer training instances and in higher dimensions, thanks to its noise-resilient entropic approach.

Figure 2: Benchmark results illustrating EON's performance in regression tasks, showcasing lower RMSE compared to neural networks and ensemble models.

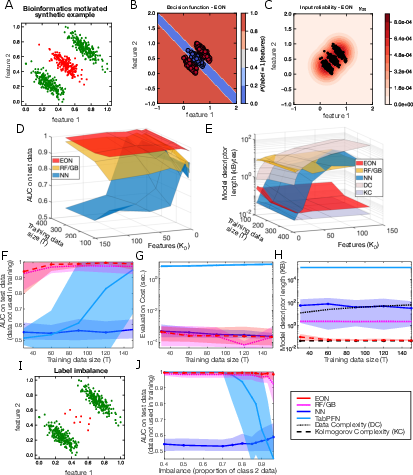

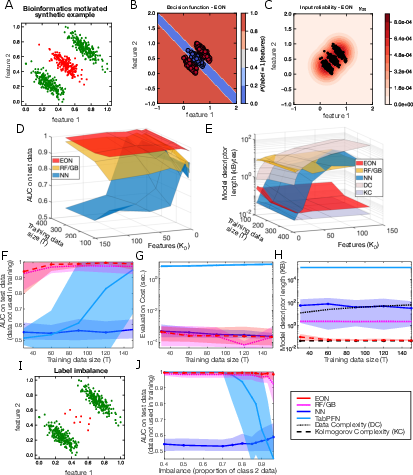

Bioinformatics Scenario

A bioinformatics example demonstrates EON's ability to identify informative dimensions and to provide a reliability measure for its inputs, outperforming baseline models under a tightly bounded Kolmogorov complexity.

Figure 3: EON's decision function and input reliability measure in a bioinformatics classification scenario.

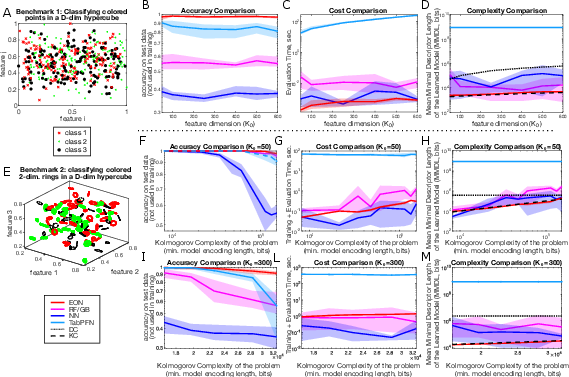

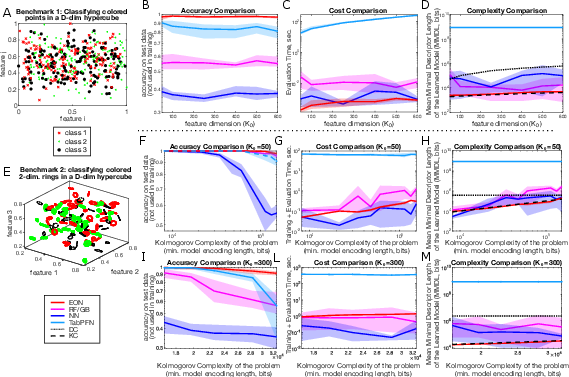

Synthetic Classification Tasks

EON consistently delivers high accuracy and efficient learning with minimal parameter usage in synthetic classification tasks, even exceeding the performance of modern LLMs under certain constraints.

Figure 4: EON's classification accuracy and efficiency compared to other methods across varying synthetic datasets and complexities.

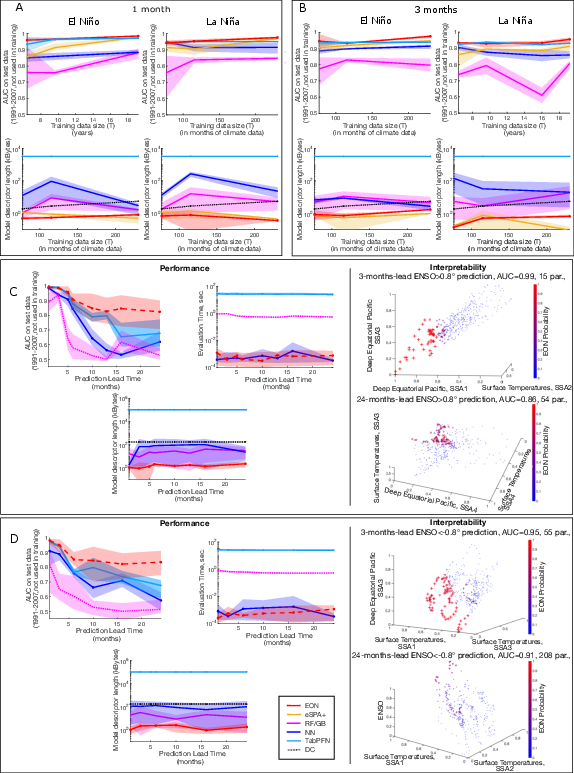

Real-world Application: ENSO Prediction

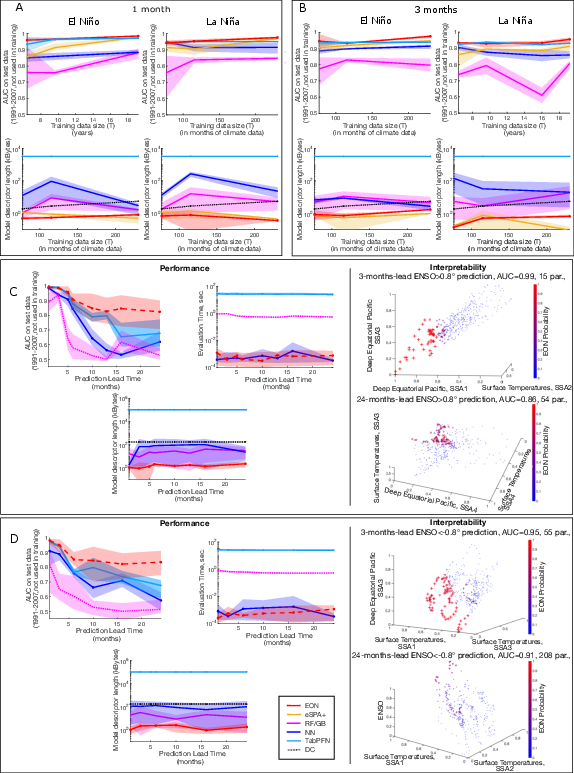

For El Niño-Southern Oscillation (ENSO) climate prediction, EON achieves high predictive accuracy while relying on less data than other AI methodologies, indicating its suitability for real-world applications that require precise yet resource-efficient models.

Figure 5: EON's performance in ENSO prediction across different lead times and event classifications, with superior AUC and efficient model sizing.

Conclusion

The EON model addresses critical shortcomings in current AI systems related to cost, overfitting, and confidence measurement. Its unique entropy-based approach ensures efficient learning applicable to small data problems, making it a robust alternative to conventional deep learning models. Future directions include expanding EON to other domains and exploring its integration with hybrid learning systems.