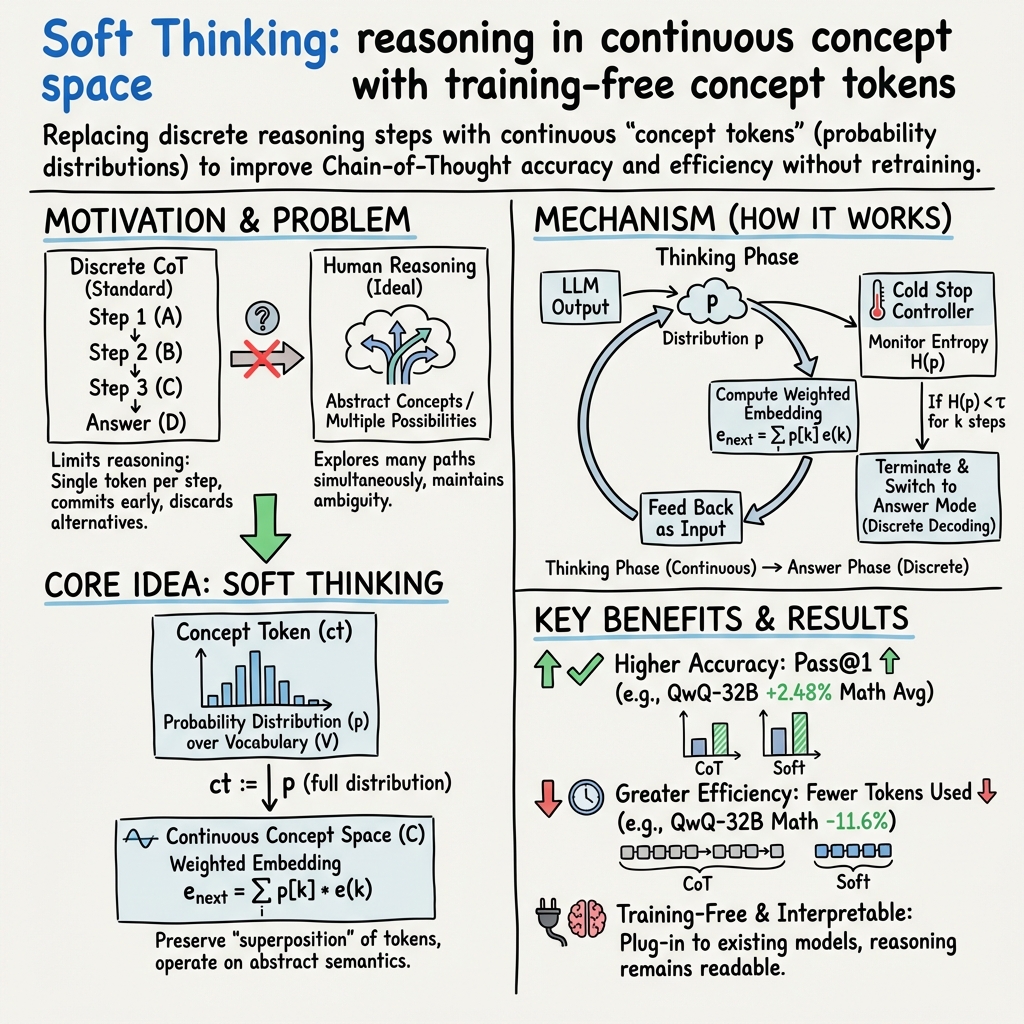

- The paper introduces Soft Thinking, a training-free method that improves LLM reasoning by simulating human-like abstract concept processing in a continuous concept space, departing from discrete token methods.

- Soft Thinking improves results on mathematical and coding benchmarks, raising pass@1 accuracy by up to 2.48 points and reducing token usage by up to 22.4% compared with standard Chain-of-Thought (CoT) reasoning.

- The framework transitions reasoning into a continuous concept space for richer semantic representations and enables implicit parallel exploration of multiple reasoning paths for enhanced capabilities.

Soft Thinking: Unlocking the Reasoning Potential of LLMs in Continuous Concept Space

The paper "Soft Thinking: Unlocking the Reasoning Potential of LLMs in Continuous Concept Space" by Zhang et al. introduces an approach to enhancing the reasoning capabilities of LLMs by simulating the human-like processing of abstract concepts. The authors propose "Soft Thinking," a training-free method that replaces discrete reasoning tokens with soft, abstract concept tokens, enabling smoother transitions within a continuous concept space. This is a significant departure from the conventional, discrete token-based Chain-of-Thought (CoT) reasoning widely used in LLMs.

The primary limitation the paper addresses is the bottleneck created by discrete token embeddings in current reasoning models. These models operate within the constraints of human language: they commit to one token at a time, which often leads to incomplete exploration of possible reasoning paths. Inspired by how humans reason with abstract concepts, Soft Thinking instead generates concept tokens, which are probability-weighted mixtures of token embeddings that live in a continuous concept space. A single concept token can therefore carry multiple meanings at once and keep several reasoning paths open, which improves accuracy and reduces unnecessary computation.
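The concept-token idea can be illustrated with a minimal NumPy sketch. It assumes a concept token is simply the next-token probability distribution applied as weights over the rows of the embedding matrix; the function names, the toy three-token vocabulary, and the two-dimensional embeddings are hypothetical and are not taken from the paper's implementation.

```python
import numpy as np


def softmax(logits):
    """Convert raw logits into a probability distribution."""
    shifted = logits - np.max(logits)  # subtract the max for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum()


def concept_token_embedding(logits, embedding_matrix):
    """Return a probability-weighted mixture of token embeddings.

    logits:           shape (vocab_size,)
    embedding_matrix: shape (vocab_size, hidden_dim)
    """
    probs = softmax(logits)
    # Each row of the embedding matrix is weighted by its token probability.
    return probs @ embedding_matrix


# Toy vocabulary of three tokens with 2-dimensional embeddings (illustrative only).
embedding_matrix = np.array([
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 1.0],
])

# Equal logits mean the model is undecided between all three tokens.
logits = np.array([0.0, 0.0, 0.0])
soft_embedding = concept_token_embedding(logits, embedding_matrix)

# Discrete decoding would instead commit to a single row of the matrix.
hard_embedding = embedding_matrix[int(np.argmax(logits))]
```

In this sketch, `soft_embedding` blends all three candidate tokens, whereas `hard_embedding` keeps only one of them. That contrast is the sense in which a concept token can hold several possible continuations at once.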

Experiments on standard mathematical and coding benchmarks show that Soft Thinking improves pass@1 accuracy by up to 2.48 points while reducing token usage by up to 22.4% compared with traditional CoT techniques. These gains require no additional training, which highlights the method's efficiency and its potential for practical use.

To address potential challenges arising from out-of-distribution (OOD) inputs that might lead to model collapse, the authors introduce a "Cold Stop" mechanism. This technique monitors the entropy of the model's output distribution and terminates the reasoning process once entropy stays low, that is, once the model exhibits high confidence, over several consecutive steps. This avoids unnecessary computation and reduces the risk of generation collapse, keeping reasoning robust and efficient.
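The stopping rule described above can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the authors' implementation: the class name `ColdStop`, the parameters `entropy_threshold` and `patience`, and the example values are all hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


class ColdStop:
    """Signal a stop after `patience` consecutive low-entropy steps.

    Both parameters are hypothetical names chosen for this sketch.
    """

    def __init__(self, entropy_threshold, patience):
        self.entropy_threshold = entropy_threshold
        self.patience = patience
        self.low_entropy_streak = 0

    def update(self, probs):
        """Record one step's distribution; return True if reasoning should stop."""
        if entropy(probs) < self.entropy_threshold:
            # Low entropy means the model is confident at this step.
            self.low_entropy_streak += 1
        else:
            # Any uncertain step resets the streak.
            self.low_entropy_streak = 0
        return self.low_entropy_streak >= self.patience


# Illustrative distributions over a three-token vocabulary.
confident = [0.99, 0.005, 0.005]
uncertain = [1 / 3, 1 / 3, 1 / 3]

monitor = ColdStop(entropy_threshold=0.1, patience=2)
decisions = [monitor.update(p) for p in (confident, uncertain, confident, confident)]
```

In a decoding loop, `update` would be called once per reasoning step, and the loop would end the thinking phase as soon as it returns `True`. Requiring several consecutive confident steps, rather than one, keeps a single sharply peaked distribution from ending the reasoning prematurely.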

Soft Thinking brings two key innovations to LLM reasoning processes. First, by transitioning reasoning into a continuous concept space, models can produce richer semantic representations, allowing for a nuanced understanding and handling of abstract concepts. Second, it enables implicit parallel exploration of multiple reasoning paths, broadening the domain of reasoning capabilities beyond the traditional one-token-at-a-time constraint.

Overall, this research contributes to the growing field of LLM reasoning by offering a method that combines the flexibility of abstract, human-like thought with the computational power of AI systems. The implications are significant: the framework paves the way for more sophisticated reasoning models and suggests a potential shift in how AI systems might emulate aspects of human cognition.

Future research directions could explore the integration of training-based methods that adapt to the continuous concept spaces, potentially improving model stability and robustness. Additionally, refining the Cold Stop mechanism to utilize a wider array of indicators could further enhance the resilience of LLMs against OOD problems. These improvements could extend the applicability of Soft Thinking across a broader range of reasoning tasks and facilitate advancements in AI's ability to process complex, multifaceted ideas akin to human reasoning.