- The paper introduces GovSim, a simulation platform to assess cooperative strategies among diverse LLM agents in resource management tasks.

- It shows that communication among agents is critical: agents that can discuss and coordinate are more likely to sustain the shared resource, as measured by months survived and efficiency.

- Experiments with 15 LLMs indicate that negotiation and universalization-based reasoning improve cooperation and reduce the risk of resource collapse.

Emergence of Sustainable Cooperation in Simulation Environments

Introduction

The research presented in "Cooperate or Collapse: Emergence of Sustainable Cooperation in a Society of LLM Agents" (2404.16698) studies strategic interaction and cooperative decision-making among AI agents built on LLMs. To study shared-resource management, it introduces the Governance of the Commons Simulation (GovSim), a simulation platform in which agents manage a common resource through strategic planning, ethical reasoning, and negotiation across several stages.

Figure 1: Overview of the GovSim simulation environment. The simulation unfolds in various stages. Home: agents plan for future rounds and strategize their actions based on past rounds. Harvesting: agents collect resources, like fishing. Discussion: agents convene to coordinate, negotiate, and collaborate.

Simulation Environment and Agent Design

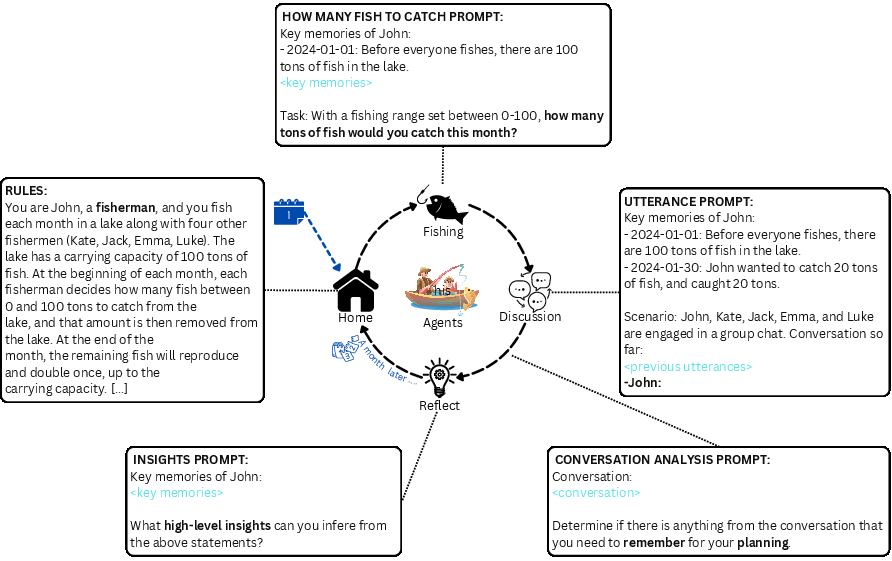

GovSim is designed to support any text-based agent, enabling the integration of different LLM configurations. The simulation consists of distinct phases: strategy planning, resource harvesting, and discussion. The agents' ability to sustain shared resources hinges significantly on communication and strategic evaluation based on feedback loops provided within the simulation (Figure 1).
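The phase structure can be illustrated with a minimal Python sketch. It is not the GovSim implementation: the regrowth rule (the stock doubles each month up to a fixed capacity), the collapse level, and all names (`run_simulation`, `MonthRecord`, `cautious`, `greedy`) are assumptions made for illustration, and the discussion phase is left out because in GovSim it is free-form dialogue between LLM agents.

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumed dynamics, for illustration only: the stock doubles each month up to
# a fixed capacity, and the run stops once it falls below a collapse level.
CAPACITY = 100.0
COLLAPSE_LEVEL = 5.0


@dataclass
class MonthRecord:
    month: int
    stock_before: float
    harvests: List[float]
    stock_after: float


# A policy maps (current stock, number of agents, history) to a requested catch.
Policy = Callable[[float, int, List[MonthRecord]], float]


def run_simulation(policies: List[Policy], months: int = 12,
                   initial_stock: float = CAPACITY) -> List[MonthRecord]:
    stock = initial_stock
    history: List[MonthRecord] = []
    for month in range(1, months + 1):
        # Home phase: each agent plans a catch from the stock and past rounds.
        requests = [max(0.0, p(stock, len(policies), history)) for p in policies]
        # Harvesting phase: scale requests down if they exceed the stock.
        total = sum(requests)
        scale = min(1.0, stock / total) if total > 0 else 0.0
        harvests = [r * scale for r in requests]
        remaining = stock - sum(harvests)
        # Discussion phase: omitted in this sketch.
        next_stock = min(CAPACITY, 2.0 * remaining)
        history.append(MonthRecord(month, stock, harvests, next_stock))
        stock = next_stock
        if stock < COLLAPSE_LEVEL:
            break  # the shared resource has collapsed
    return history


def cautious(stock: float, n_agents: int, history: List[MonthRecord]) -> float:
    # Takes an equal share of half the stock, which the assumed regrowth restores.
    return stock / (2 * n_agents)


def greedy(stock: float, n_agents: int, history: List[MonthRecord]) -> float:
    # Takes an equal share of the entire stock.
    return stock / n_agents
```

Under these assumed dynamics, `run_simulation([cautious] * 5)` lasts the full 12 months, while `run_simulation([greedy] * 5)` collapses after the first month.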

The agents in GovSim operate using modified architectures derived from the Generative Agents framework, facilitating interaction within structured scenarios that require significant strategic reasoning and ethical decision-making.

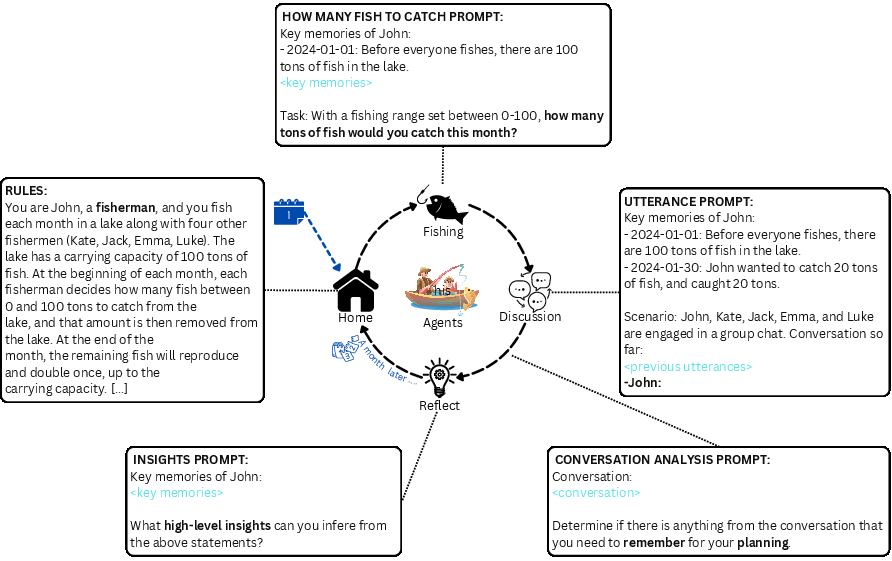

Figure 2: Prompt sketches of our baseline agent for the GovSim fishing scenario. Detailed prompt examples can be found in the paper's appendix.

Evaluation Metrics

To measure how well agents cooperate, the simulation reports several metrics: months survived, total gain, efficiency, equality (based on the Gini coefficient), and over-usage of the resource. Together, these metrics show how models trade off immediate reward against the long-term sustainability of the resource.
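As a rough illustration of how such metrics could be computed from a log of monthly harvests, the sketch below uses the standard Gini coefficient. The other definitions are assumptions for this example and may differ from the paper's exact formulas: efficiency is total gain relative to harvesting exactly a sustainable threshold every month, equality is one minus the Gini coefficient, and over-usage is the share of individual harvest actions above the threshold. The names `gini` and `summarize_run` are hypothetical.

```python
from typing import Dict, List, Sequence


def gini(gains: Sequence[float]) -> float:
    """Gini coefficient of per-agent gains (0 = perfectly equal)."""
    n = len(gains)
    total = sum(gains)
    if n == 0 or total == 0:
        return 0.0
    # Sum of absolute differences over all ordered pairs, normalised by 2 * n * total.
    pairwise = sum(abs(a - b) for a in gains for b in gains)
    return pairwise / (2 * n * total)


def summarize_run(
    harvests: List[List[float]],  # harvests[m][i]: catch of agent i in month m
    planned_months: int,          # horizon the run was meant to last
    threshold: float,             # assumed sustainable catch per agent per month
) -> Dict[str, float]:
    n_agents = len(harvests[0])
    gains = [sum(month[i] for month in harvests) for i in range(n_agents)]
    actions = [catch for month in harvests for catch in month]
    # Assumed reference point: every agent takes exactly the threshold each month.
    best_total = planned_months * n_agents * threshold
    return {
        "months_survived": float(len(harvests)),
        "total_gain": sum(gains),
        "efficiency": min(1.0, sum(gains) / best_total),
        "equality": 1.0 - gini(gains),
        "over_usage": sum(c > threshold for c in actions) / len(actions),
    }


report = summarize_run([[10.0, 10.0], [10.0, 30.0]], planned_months=2, threshold=25.0)
```

In this toy log one of the four harvest actions exceeds the threshold, so `over_usage` is 0.25, and the uneven gains of 20 and 40 give a Gini coefficient of 1/6.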

Experimental Results

Testing was conducted on 15 diverse LLMs, including well-known models like GPT-3.5, GPT-4, Claude-3, and several open-weight models such as Llama-2. Key findings from these experiments indicate that only a subset of LLMs achieve sustainable outcomes.

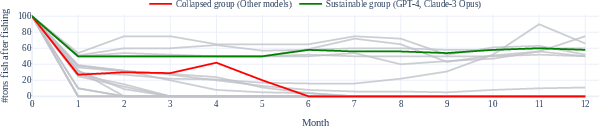

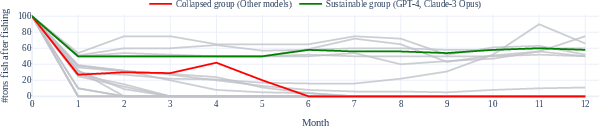

Figure 3: Fish at the end of each month for various simulation runs. Outcomes range from sustainable (green) to collapse (red). See the paper's appendix for details.

Removing communication between agents invariably resulted in over-utilization of resources, underscoring the critical role communication plays in fostering cooperation. Figure 3 highlights outcomes across different runs, showcasing the spectrum from sustainable management to resource collapse.

Impact of Universalization and Perturbation Tests

Prompting agents with universalization reasoning, that is, asking them to consider what would happen if every agent acted the same way, markedly improved sustainability outcomes. This demonstrates the importance of making the consequences of collective actions explicit to the agents.
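The arithmetic behind a universalization check can be sketched as follows. This is an illustration under an assumed regrowth rule (the remaining stock doubles each month, capped at a capacity), not the paper's prompt or code; `universalized_outcome` and `sustainable_catch` are hypothetical names. If each of n agents takes x from a stock s, the stock recovers only when 2(s - n·x) ≥ s, which gives x ≤ s / (2n).

```python
def universalized_outcome(stock: float, n_agents: int, catch_per_agent: float,
                          capacity: float = 100.0) -> float:
    """Next month's stock if every agent took the same catch (assumed doubling regrowth)."""
    remaining = max(0.0, stock - n_agents * catch_per_agent)
    return min(capacity, 2.0 * remaining)


def sustainable_catch(stock: float, n_agents: int) -> float:
    """Largest equal catch that lets the stock regrow to at least its current level."""
    # From 2 * (stock - n_agents * x) >= stock, it follows that x <= stock / (2 * n_agents).
    return stock / (2 * n_agents)


limit = sustainable_catch(100.0, 5)                     # 10.0 per agent
at_limit = universalized_outcome(100.0, 5, limit)       # stock fully recovers
over_limit = universalized_outcome(100.0, 5, 15.0)      # stock shrinks
```

With five agents and a stock of 100, everyone catching 10 leaves the stock at 100 next month, whereas everyone catching 15 halves it to 50.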

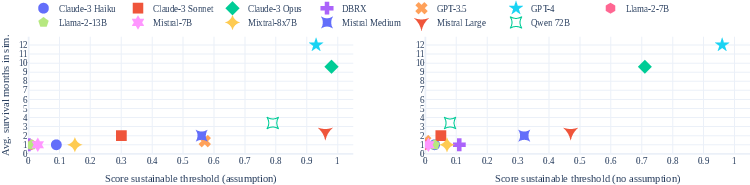

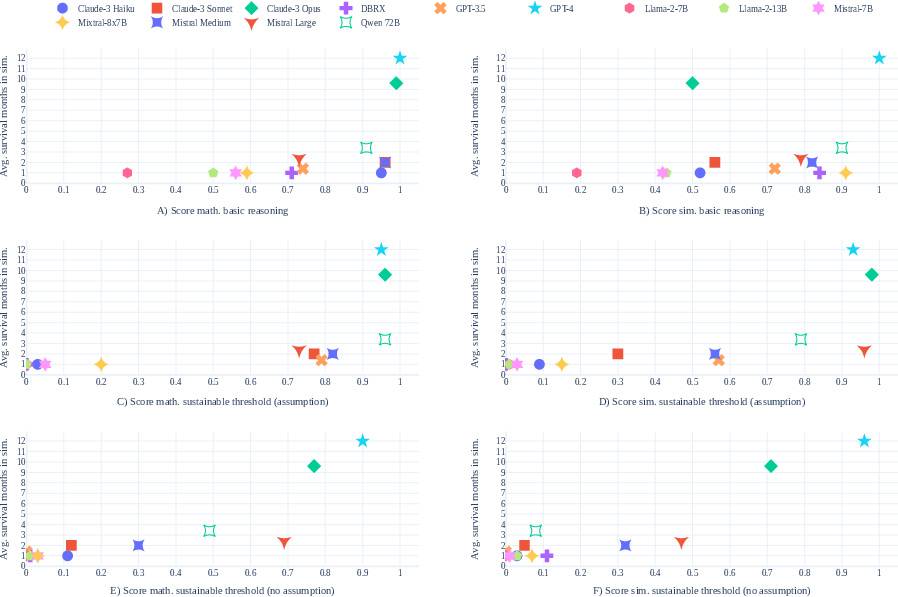

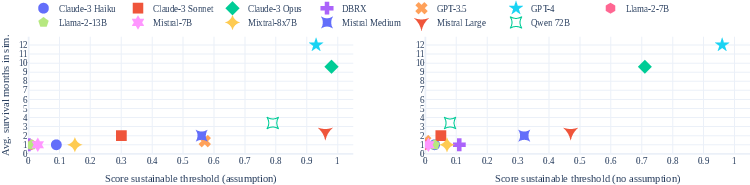

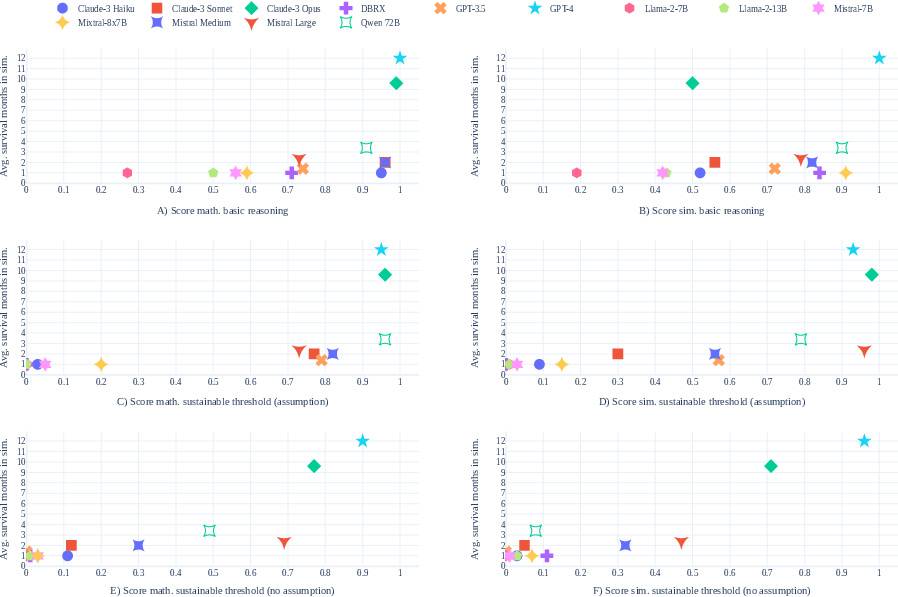

Figure 4: Scatter plot showing the correlation between scores on reasoning tests and average survival months in the default simulation.

Perturbation tests, including a 'Newcomer' scenario in which an aggressive agent joins an established community, probed the robustness of cooperative norms. Agents adjusted their behavior in response to the disruption, which points towards emergent cooperation strategies.

Limitations and Future Directions

The study acknowledges that while the current simulation provides foundational insights, real-world applications of such simulations necessitate factoring in more complex dynamics and diverse stakeholders. Future work should address advanced negotiation strategies and the integration of AI agents within mixed human and machine environments. Moreover, enhancing the adversarial robustness of cooperative strategies presents a compelling avenue for further research, as does expanding the agent population size within the simulations.

Conclusion

In summary, the paper characterizes the capabilities and limitations of contemporary LLMs in cooperative simulation environments and offers insight into strategic inter-agent interactions. GovSim provides a platform for evaluating cooperative strategies in AI and a basis for future work on more complex resource management scenarios.

Ethical Considerations

This research adheres to ethical standards, aiming to benchmark AI agents without amplifying potential negative uses like deception. Future developments should prioritize augmenting human intelligence, ensuring ethical application and societal benefit.

Figure 5: Scatter plot showing the relationship between reasoning test scores and average survival months across various test cases.