HINTs: Sensemaking on large collections of documents with Hypergraph visualization and INTelligent agents

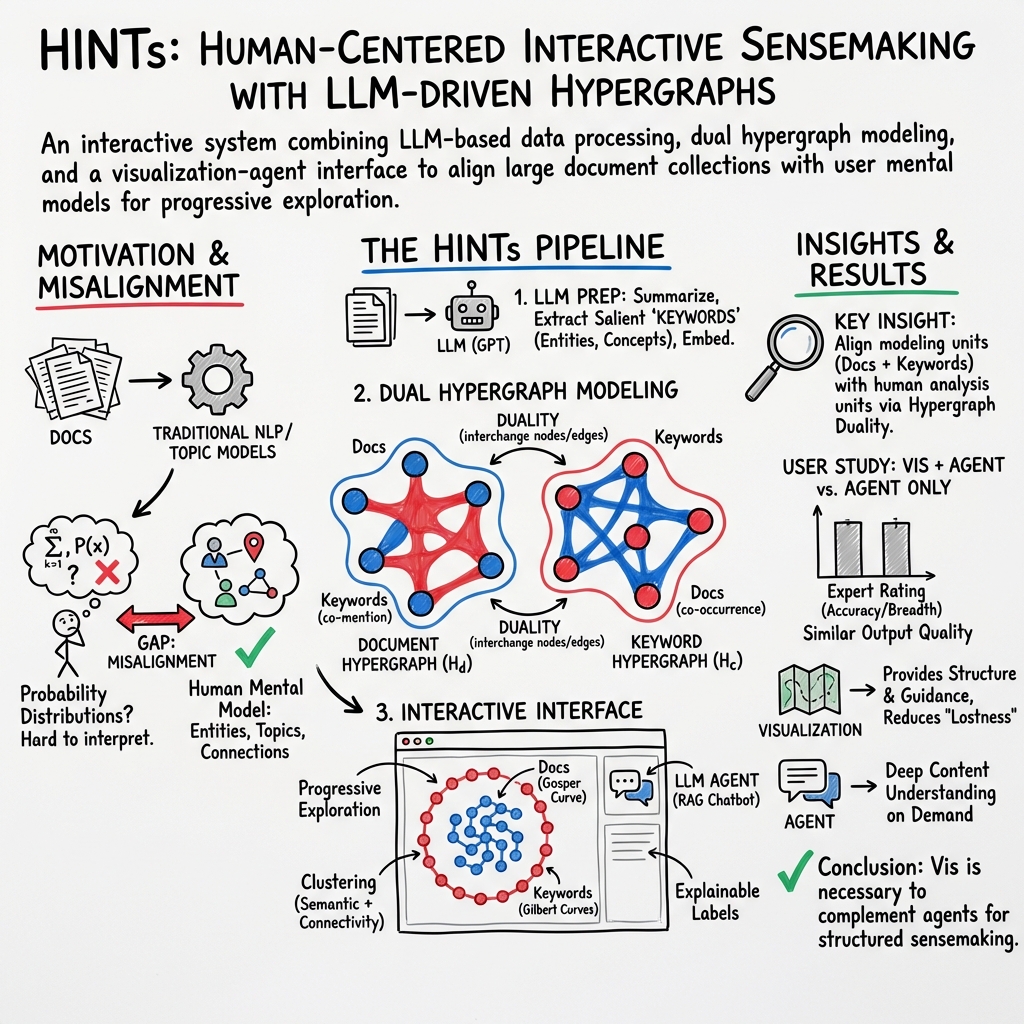

Abstract: Sensemaking on a large collection of documents (corpus) is a challenging task often found in fields such as market research, legal studies, intelligence analysis, political science, and computational linguistics. Previous work approaches this problem from either a topic-based or an entity-based perspective, but these approaches lack interpretability and trust due to poor model alignment. In this paper, we present HINTs, a visual analytics approach that combines topic- and entity-based techniques seamlessly and integrates LLMs as both a general NLP task solver and an intelligent agent. By leveraging the extraction capability of LLMs in the data preparation stage, we model the corpus as a hypergraph that matches the user's mental model when making sense of the corpus. The constructed hypergraph is hierarchically organized with an agglomerative clustering algorithm by combining semantic and connectivity similarity. The system further integrates an LLM-based intelligent chatbot agent in the interface to facilitate sensemaking. To demonstrate the generalizability and effectiveness of the HINTs system, we present two case studies on different domains and a comparative user study. We report our insights on the behavior patterns and challenges when intelligent agents are used to facilitate sensemaking. We find that while intelligent agents can address many challenges in sensemaking, the visual hints that visualizations provide are necessary to address the new problems brought by intelligent agents. We discuss limitations and future work for combining interactive visualization and LLMs more profoundly to better support corpus analysis.

Explain it Like I'm 14

What this paper is about (in simple terms)

This paper introduces HINTs, a computer tool that helps people make sense of huge piles of text, like thousands of news stories or research papers. It mixes two powerful ideas:

- Smart AI (like ChatGPT) that can read, extract key information, and chat with you, and

- Clear visuals that show how documents and ideas are connected.

The goal is to help you quickly see big themes (topics), find important names and ideas (keywords), and then dive into the exact documents you care about—all in a way that matches how people naturally think.

What questions the researchers asked

The authors wanted to solve a few basic problems:

- How can we understand the main topics in a giant collection of documents without getting lost?

- How can we track important people/places/ideas (keywords) across many documents in a way that makes sense?

- How can we give users both a helpful overview and an easy way to zoom in for details?

- How can AI assistants (like chatbots) help with analysis without making mistakes or confusing the user?

- How can we make the computer’s “mental model” match a human’s mental model, so the display is trustworthy and easy to interpret?

How the system works (using everyday examples)

Here’s the general flow, explained with simple analogies:

- Data preparation (reading and organizing)

  - Summarization: The AI first creates short summaries for each document, like writing a quick book report so you don’t have to read everything.

  - Keyword extraction: The AI finds the most important “characters and concepts” in each document (people, places, techniques, depending on the domain).

  - Disambiguation: If two keywords mean the same thing (e.g., “USA” and “United States”), the system links them together so you don’t get duplicates.

  - Embeddings (making a “map of meaning”): The system turns documents and keywords into numbers (vectors) so it can measure how similar they are, like putting them on a map where close points mean similar ideas.

  - Topic labels: The AI names groups (clusters) with short, human-readable labels so users can tell what each group is about.

- Modeling the connections (hypergraphs)

  - Graph vs hypergraph: A normal graph connects pairs (like two friends). A hypergraph can connect many at once (like a group chat). This is perfect for text: one keyword can link many documents, and one document can contain many keywords.

  - Two views of the same world:

    - Document hypergraph: documents are dots; each keyword connects all the documents that mention it.

    - Keyword hypergraph: keywords are dots; each document connects all the keywords it mentions.

  - These two are “dual” to each other (two sides of the same coin), so interactions make sense in both.

- Grouping similar items (clustering)

  - The system groups items step-by-step (agglomerative clustering): start with everything separate; merge the most similar pairs; keep going until you get a tidy hierarchy.

  - “Similar” means two things at once:

    - Semantic similarity: do they talk about similar stuff? (measured using the “map of meaning” vectors)

    - Connectivity similarity: do they share many of the same links? (e.g., documents that mention many of the same keywords)

  - The system balances both to form meaningful, human-friendly clusters.

- Visualizing the information (space-filling curves)

  - Space-filling curve: Imagine a single line that snakes through every seat in a stadium without crossing itself, so you can place items in order and fill the space nicely.

  - The tool uses two different curves:

    - Center area (documents): a Gosper curve lays out documents so topic groups are easy to see.

    - Outer ring (keywords): four connected Gilbert curves lay out keywords around the outside.

  - Extra touches to make it clear and stable:

    - Automatic cluster expansion if a group is too big or too simple.

    - Smart spacing so large clusters have more room and expanding a group doesn’t scramble the whole layout.

    - Concave hulls (smooth borders) around clusters, with clean labels placed where they won’t clutter.

- Interacting and analyzing

  - Cluster View: See the whole landscape, with topics in the center and keywords around the edge. Hover and click to highlight, expand, or filter.

  - Document View: When you select groups, the matching documents appear in a neat list.

  - Chatbot View: You can ask an AI agent questions (e.g., “Summarize these 20 papers” or “Compare these two topics”). The system can feed the AI the exact documents you selected, so answers are grounded in the data you’re exploring.
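The hypergraph duality and the two-part similarity described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the toy corpus, the 2-D vectors, the Jaccard overlap used for connectivity similarity, and the convex combination weighted by `alpha` are all hypothetical choices made for the example.

```python
import math
from itertools import combinations

# Hypothetical toy corpus: each document (node) maps to the keywords
# (hyperedges) it mentions. This is the "document hypergraph" view.
doc_keywords = {
    "d1": {"USA", "trade"},
    "d2": {"USA", "trade", "tariffs"},
    "d3": {"vaccines"},
}

# Hypothetical 2-D stand-ins for document embeddings; a real pipeline
# would obtain these from an embedding model.
doc_vectors = {
    "d1": (1.0, 0.0),
    "d2": (0.8, 0.6),
    "d3": (0.0, 1.0),
}


def dual(incidence):
    """Swap nodes and hyperedges: node -> edges becomes edge -> nodes."""
    flipped = {}
    for node, edges in incidence.items():
        for edge in edges:
            flipped.setdefault(edge, set()).add(node)
    return flipped


def cosine(u, v):
    """Cosine similarity between two vectors (semantic similarity)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def jaccard(a, b):
    """Share of hyperedges two nodes have in common (assumed connectivity measure)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def combined_similarity(x, y, alpha=0.5):
    """Assumed convex combination of semantic and connectivity similarity."""
    semantic = cosine(doc_vectors[x], doc_vectors[y])
    connectivity = jaccard(doc_keywords[x], doc_keywords[y])
    return alpha * semantic + (1 - alpha) * connectivity


# The "keyword hypergraph" view: keywords are nodes, documents are hyperedges.
keyword_docs = dual(doc_keywords)

# The pair an agglomerative step would merge first under this similarity.
best_pair = max(
    combinations(sorted(doc_keywords), 2),
    key=lambda pair: combined_similarity(*pair),
)
print(keyword_docs["USA"], best_pair)
```

In this toy data, `d1` and `d2` are close in both senses (similar vectors and two shared keywords), so they form the first merge candidate. Raising `alpha` toward 1 would make the grouping depend more on the embeddings, and lowering it would favor shared keywords.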

What they found and why it matters

From two case studies (news articles and research papers) and a user study, the authors observed:

- Intelligent agents (like the chatbot) are very helpful for sensemaking—they can summarize, compare, and answer questions quickly.

- But AI can make mistakes or be vague. Visual “hints” (clear clusters, labels, connections) are essential so users can verify and better understand what the AI says.

- Combining topic-based and entity/keyword-based views in one system (with good labels and clear grouping) boosts trust and understanding.

- Using AI early (for extracting summaries, keywords, and labels) makes the visualization match how humans think (better “model alignment”), which improves interpretability for non-experts.

Why it’s important:

- People in many fields (market research, law, intelligence, policy, science) must read and compare huge amounts of text quickly.

- HINTs shows a practical way to combine AI and visualization to turn information overload into a clear, navigable map.

What this could mean for the future

- Better tools for exploring big text collections: HINTs demonstrates that AI plus visualization can help people find patterns, verify facts, and ask deeper questions faster.

- Safer, more trustworthy AI workflows: Visual overviews help users spot when an AI might be wrong or missing something, reducing blind trust.

- Broader applications: The same approach can be used for emails, reports, customer feedback, legal documents, and more.

- Next steps: Improve keyword linking, scale to even larger datasets, reduce AI mistakes (“hallucinations”), and integrate AI even more deeply while keeping human verification front and center.

In short, HINTs is like a smart, visual map for huge libraries of text, with an AI guide who can answer your questions—while the map helps you keep the guide honest.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of unresolved issues that future research could address to strengthen, generalize, and validate HINTs.

- Lack of quantitative evaluation of LLM-based extraction: no reported precision/recall, error analysis, or baseline comparisons for summarization, keyword extraction, and topic labeling versus standard IE/NLP methods.

- Unvalidated impact of extraction errors: no study of how LLM hallucinations or missed/merged keywords propagate through disambiguation, hypergraph construction, clustering, and user insights.

- Disambiguation reliability: the fallback embedding-based keyword merging relies on a fixed similarity threshold without sensitivity analysis or guarantees against conflating distinct entities (polysemy) or splitting synonyms.

- Domain generalization: prompts and keyword definitions are tailored to news and research papers; no evidence the pipeline generalizes to other domains (legal, clinical, social media, patents) with different discourse structures and jargon.

- Multilingual and code-switched corpora: the approach is evaluated only in English; support for multilingual texts, translation effects, and cross-lingual embeddings is unaddressed.

- Scalability of LLM preprocessing: token and cost constraints for summarizing and extracting keywords at corpus scale (e.g., 100k+ docs) are not analyzed; batching, caching, and incremental updates are unspecified.

- Label generation scalability and faithfulness: the bottom-up topic labeling uses sampled summaries, but the sampling strategy, label stability across runs, and faithfulness of labels to full clusters are not evaluated.

- Reproducibility and model drift: reliance on proprietary LLMs/embeddings (gpt-3.5-turbo-16k-0613, text-embedding-ada-002) raises reproducibility concerns as models update; no mitigation (version pinning, local models) or variance analysis is provided.

- Privacy and governance: sending corpora to external APIs presents data security and compliance risks; no discussion of privacy-preserving alternatives (on-prem LLMs, redaction, differential privacy).

- Hypergraph-to-graph transformation details: the method for converting hypergraphs for clustering (e.g., clique/star/line expansion, weighting) is not specified, hindering reproducibility and analysis of structural biases.

- Parameter sensitivity: the weighting factor α for combining semantic and connectivity similarity is not justified or tuned; no ablation or guidance on choosing α for different datasets.

- Clustering complexity and scale limits: agglomerative clustering is typically O(n²) or worse; no complexity analysis, runtime/memory benchmarks, or upper bounds on corpus size are reported.

- Heuristic auto-expansion: the “expand if > kN or single child” rule (k=0.3) lacks empirical validation; no learning-based or user-adaptive alternative is explored, and the effect on user performance is unknown.

- Missing uncertainty visualization: the system does not quantify or visualize confidence/uncertainty in LLM outputs, disambiguation, embeddings, or cluster assignments to guide user trust and decisions.

- Temporal dynamics: many corpora (e.g., news) evolve; the hypergraph and embedding pipeline do not model time, drift, or incremental updates, nor support temporal sensemaking.

- Missing grounded QA safeguards: the chatbot lacks mechanisms for grounded answers (e.g., citations, quote-level retrieval, factuality checks); strategies to reduce hallucinations or enforce source-consistent responses are not described.

- Human-in-the-loop correction: there is no workflow for users to correct extraction/disambiguation errors, refine keywords, or relabel clusters, nor methods to propagate corrections through the model and UI.

- Explainability of similarities: while embeddings drive clustering, the system does not expose interpretable rationales for why documents/keywords are grouped beyond labels; no feature/phrase-level explanations are provided.

- Evaluation of visualization efficacy: no quantitative comparison of the SFC hypergraph layout against alternative layouts (scatterplots, trees, matrix, other hypergraph visualizations) on sensemaking tasks.

- Edge visibility and relationship clarity: extra-node representation plus SFC layout may obscure individual hyperedges/co-occurrences; the paper does not specify how users inspect specific relationships at scale.

- Label placement robustness: the radial label placement for expanded sub-clusters may suffer from overlaps and ambiguity in dense regions; no empirical assessment or collision-handling strategy is given.

- Impact of summarization on embeddings: embeddings are computed on summaries rather than full texts; the degree to which summarization biases similarity/clustering is not measured.

- Cross-object embedding calibration: using the same embedding space for document summaries and keyword explanations is assumed but unvalidated; calibration and alignment quality are unknown.

- Retrieval and navigation performance: T3 (navigation to documents of interest) lacks metrics (time-to-find, success rate) and comparisons to search/baseline interfaces.

- Generality of “keyword” concept: the definition of keywords as “significantly discussed” items is prompt-dependent; criteria for salience, granularity control, and consistency across domains are unspecified.

- Visual scalability limits: maximum number of documents/keywords the SFC layout can show without overplotting, and UI responsiveness under interactions, are not benchmarked.

- Comparative user study details: the abstract mentions a comparative user study, but task design, participant expertise, statistical outcomes, and limitations are not presented in the text.

- Ethical and bias considerations: potential amplification of model and data biases in extracted keywords, labels, and chatbot responses is unaddressed; no bias audits or mitigation strategies are proposed.

- Integration with retrieval/IR systems: opportunities to combine keyword- and embedding-based retrieval with the hypergraph for targeted exploration are not explored or evaluated.
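To make the auto-expansion heuristic from the list above concrete, here is one possible reading of the “expand if > kN or single child” rule with k = 0.3. It is a sketch under assumptions rather than the system's actual code: `Cluster`, `should_expand`, and `visible_clusters` are hypothetical names, and N is assumed to be the total number of items in the view.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Hypothetical node in the cluster hierarchy."""
    label: str
    size: int  # number of items (documents or keywords) under this cluster
    children: list = field(default_factory=list)


def should_expand(cluster, total, k=0.3):
    """Expand a cluster that is too big (> k * total) or has a single child."""
    if not cluster.children:
        return False  # nothing to expand into
    return cluster.size > k * total or len(cluster.children) == 1


def visible_clusters(roots, total, k=0.3):
    """Walk the hierarchy and return the clusters left after auto-expansion."""
    shown = []
    stack = list(reversed(roots))
    while stack:
        cluster = stack.pop()
        if should_expand(cluster, total, k):
            # Replace the cluster by its children, keeping their order.
            stack.extend(reversed(cluster.children))
        else:
            shown.append(cluster)
    return shown


# Hypothetical hierarchy over 10 items.
roots = [
    Cluster("A", 6, [Cluster("A1", 3), Cluster("A2", 3)]),  # too big: 6 > 3
    Cluster("B", 2, [Cluster("B1", 2)]),                    # single child
    Cluster("C", 2, [Cluster("C1", 1), Cluster("C2", 1)]),  # stays collapsed
]
labels = [c.label for c in visible_clusters(roots, total=10)]
print(labels)  # ['A1', 'A2', 'B1', 'C']
```

Because k is a fixed constant here, the sketch also shows why the lack of sensitivity analysis matters: changing `k` directly changes which clusters a user sees at first glance.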

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now by leveraging the paper’s hypergraph-based corpus modeling, LLM-driven data preparation (summarization, keyword extraction/disambiguation, labeling), hierarchical clustering that fuses semantic and connectivity signals, space-filling-curve visualization for progressive disclosure, and the integrated chatbot agent for targeted analysis.

- Market research and competitive intelligence — Sectors: software, consumer goods, telecom, automotive, finance

- What you can do: Ingest press releases, earnings transcripts, product announcements, and news; cluster by topics and key entities (companies, products); trace co-mentions; ask the agent for “what changed this quarter for Competitor X across products?” and generate briefs.

- Potential tools/workflows: “Market Pulse Hypergraph” dashboard; weekly landscape reports; alerting on new clusters or entity co-occurrences.

- Dependencies/assumptions: Access to LLM API or on-prem LLM; data licensing for filings/news; prompt templates tuned for domain-specific keywords (e.g., product lines); manageable corpus size or batching.

- Customer feedback triage and root-cause discovery — Sectors: SaaS, e-commerce, gaming, consumer electronics

- What you can do: Analyze tickets, app reviews, NPS verbatims; group recurring issues; map keywords to features; ask the agent for prioritized defect clusters with example quotes.

- Potential tools/workflows: “Feedback Explorer” integrated with Zendesk/Jira; sprint planning aids; semi-automated release notes.

- Dependencies/assumptions: PII handling (GDPR/CCPA) and redaction; domain keyword extraction prompts; on-prem or VPC deployment for sensitive data.

- Legal e-discovery and case preparation — Sectors: legal services, compliance, internal audit

- What you can do: Organize emails, memos, and productions by topics and entities (people, orgs, projects); quickly navigate co-mentions; ask the agent to summarize clusters and flag privilege-risk documents.

- Potential tools/workflows: “Discovery Hypergraph Workbench” for early case assessment; privilege review prioritization.

- Dependencies/assumptions: Strict privacy controls; on-prem LLMs; chain-of-custody and audit logs; validated entity linking to legal entity knowledge bases.

- Scientific literature mapping and triage — Sectors: academia, pharma/biotech, materials, climate

- What you can do: Cluster thousands of papers by methods, datasets, and findings; label clusters with LLMs; ask for evidence summaries per cluster or contrasts between methods.

- Potential tools/workflows: “Lab Literature Map”; systematic review screening support; grant portfolio analysis.

- Dependencies/assumptions: High-quality summaries and domain keyword linking (e.g., MeSH/UMLS); PDF-to-text pipelines; token limits handled via bottom-up label generation as in the paper.

- Newsroom and OSINT research desks — Sectors: media, policy analysis, intelligence, NGO

- What you can do: Make sense of evolving events by mapping actors, locations, and themes; pivot from topics to entities; ask the agent to compile event timelines per cluster.

- Potential tools/workflows: “Breaking News Explorer”; editorial brief generator; OSINT corroboration checklists.

- Dependencies/assumptions: Batch or near-real-time ingestion (streaming is a long-term enhancement); disambiguation via Wikidata/GeoNames.

- Patent landscaping and prior-art search — Sectors: IP strategy, R&D, venture capital

- What you can do: Cluster patents by techniques, components, and applications; identify co-occurring concepts; query the agent for gaps or crowded areas.

- Potential tools/workflows: “Patent Hypergraph Mapper”; competitive IP heatmaps.

- Dependencies/assumptions: PDF parsing quality; extraction prompts tuned for technical claims; linkage to CPC/IPC codes.

- Regulatory/public comment analysis — Sectors: government, energy, finance, environmental policy

- What you can do: Group public comments by themes and stakeholders; surface recurring arguments and entities; ask the agent for theme-level summaries and representative documents.

- Potential tools/workflows: “Docket Explorer” for agencies; response drafting aids with citations.

- Dependencies/assumptions: Transparency and provenance of agent answers; secure handling of submissions; bias checks.

- Enterprise knowledge base navigation — Sectors: any large org (engineering, sales, support, ops)

- What you can do: Map wikis, design docs, and Slack exports into topic/entity hypergraphs; let users pivot between projects, teams, and components; agent answers grounded in selected docs.

- Potential tools/workflows: “CorpWiki Navigator”; onboarding and discovery tools.

- Dependencies/assumptions: Access controls, retention policies; on-prem deployment; connectors to SharePoint/Confluence/Slack.

- Finance research and risk monitoring — Sectors: asset management, banking, insurance

- What you can do: Cluster filings, earnings call transcripts, and news by risk factors (e.g., supply chain, regulatory, ESG); ask the agent for issuer- or sector-specific risk digests.

- Potential tools/workflows: “Issuer Risk Explorer”; PM brief generators; compliance pre-reads.

- Dependencies/assumptions: Market data entitlements; careful prompt design to avoid hallucinated risk claims; human review in the loop.

- Education and course design — Sectors: higher ed, corporate L&D

- What you can do: Map reading lists and MOOCs by themes and core concepts; identify prerequisite clusters; agent-generated study guides per cluster.

- Potential tools/workflows: “Syllabus Map”; personalized reading paths.

- Dependencies/assumptions: Instructor validation of cluster labels; alignment with learning objectives; access to course materials.

- Personal knowledge management — Sectors: daily life

- What you can do: Organize saved articles and notes by topics/entities; ask the agent questions about a selected subset (e.g., “Compare positions across these essays”).

- Potential tools/workflows: Browser extension feeding a local HINTs workspace; research-journal summaries.

- Dependencies/assumptions: Lightweight, privacy-preserving local models or careful cloud use; reduced-scale datasets.

Long-Term Applications

The following opportunities require additional research, scaling, domain adaptation, or platform integration before large-scale deployment.

- Real-time, streaming sensemaking for fast-evolving corpora

- Sectors: media, cybersecurity, fraud monitoring, supply chain

- What it enables: Continuous updates of hypergraphs and cluster labels as new documents arrive; drift detection and alerting on emergent clusters.

- Tools/workflows: “Live Hypergraph Monitor” with incremental embeddings and hierarchical clustering.

- Dependencies/assumptions: Incremental hypergraph/cluster algorithms; low-latency embeddings; streaming data infra; UI stability under frequent updates.

- Privacy-preserving, on-prem, or air-gapped deployments

- Sectors: healthcare, defense, finance, legal

- What it enables: Running the entire pipeline with local or fine-tuned LLMs/embedding models; compliant processing of PHI/PII and classified data.

- Tools/workflows: Kubernetes-deployed HINTs with local LLMs (e.g., Llama-class) and vector DBs; audit trails.

- Dependencies/assumptions: Comparable on-prem model quality; MLOps; hardware acceleration; redaction and access control layers.

- Multilingual and cross-lingual corpus analysis

- Sectors: global enterprises, international policy, humanitarian organizations

- What it enables: Joint hypergraphs over documents in many languages; disambiguation across scripts; cross-lingual cluster labels and QA.

- Tools/workflows: Cross-lingual embeddings; KB linking across Wikidata + domain ontologies.

- Dependencies/assumptions: High-quality multilingual LLMs; normalization of named entities; evaluation datasets.

- Multimodal sensemaking (text + tables + figures + audio/video transcripts)

- Sectors: scientific/technical domains, media, legal

- What it enables: Hyperedges that include table- or figure-derived concepts; richer topic/entity modeling; better document explanations.

- Tools/workflows: PDF table extraction, figure caption parsers, ASR for audio; multimodal embeddings.

- Dependencies/assumptions: Robust multimodal extraction; aligning modalities into shared semantics.

- Massive-scale corpora (tens of millions of documents)

- Sectors: web-scale search, national archives, large enterprises

- What it enables: Distributed processing pipelines; approximate but stable clustering; level-of-detail rendering and interaction.

- Tools/workflows: Sharded hypergraphs; approximate nearest neighbor search; sampling-based label generation with provenance.

- Dependencies/assumptions: Distributed compute; memory-efficient hypergraph representations; stability-aware layout updates.

- Agentic workflows that plan, verify, and author with provenance

- Sectors: research, journalism, policy

- What it enables: Agents that traverse clusters, retrieve evidence, verify claims (RAG), and output reports with citations and confidence assessments.

- Tools/workflows: Integrated RAG with selected clusters; tool-use plugins for fact-checking; report templates.

- Dependencies/assumptions: Strong guardrails and hallucination mitigation; transparent citation pipelines; human-in-the-loop acceptance.

- Domain-specialized extraction and knowledge integration

- Sectors: healthcare (pharmacovigilance, clinical guidelines), cybersecurity (ATT&CK), energy (regulatory filings)

- What it enables: Prompt templates + ontologies for precise keyword/entity extraction; semantic linking to domain KBs; cluster labels aligned to domain taxonomies.

- Tools/workflows: “PV Signal Explorer” (adverse event hypergraphs), “ATT&CK Threat Mapper,” “Regulatory Filing Navigator.”

- Dependencies/assumptions: Curated ontologies (UMLS, SNOMED CT, MITRE ATT&CK); annotation for prompt/adapter tuning; evaluation metrics for faithfulness.

- Collaborative, auditable sensemaking environments

- Sectors: enterprises, agencies, academia

- What it enables: Shared annotations, saved views, discussion threads on clusters; change logs for compliance and reproducibility.

- Tools/workflows: Role-based access and sharing; notebook-like exports of interaction history and agent prompts.

- Dependencies/assumptions: Multi-tenant architecture; versioning of hypergraphs and labels; governance policies.

- Bias, fairness, and trust calibration in corpus analysis

- Sectors: policy, hiring, credit, healthcare

- What it enables: Diagnostics for label/summary bias; counterfactual cluster views; confidence/uncertainty overlays on labels and agent answers.

- Tools/workflows: Bias dashboards; human feedback loops to correct cluster labels and keyword mappings.

- Dependencies/assumptions: Audit datasets for bias evaluation; methods for uncertainty estimation in LLM-generated labels.

- Productization and ecosystem integration

- Sectors: enterprise software

- What it enables: A “Hypergraph Sensemaking Platform” with connectors to M365, Google Workspace, Confluence, Jira, Slack; API for embedding HINTs views into existing apps.

- Tools/workflows: Plugin SDK; SSO and SCIM; export to BI/analytics tools.

- Dependencies/assumptions: Stable APIs; data governance; performance SLAs.

- Applied operations analytics (logs and incident reports)

- Sectors: robotics, manufacturing, aviation, energy utilities

- What it enables: Clustering of incident narratives and maintenance logs by failure modes and components; entity co-occurrence (parts, locations); agent-generated corrective action summaries.

- Tools/workflows: “Ops Incident Mapper”; reliability engineering dashboards.

- Dependencies/assumptions: High-quality log text; safe deployment in regulated environments; taxonomy alignment for parts/components.

Notes on Assumptions and Dependencies Across Applications

- LLM-related: Availability and cost of LLM APIs or quality of local models; context window limits (mitigated by bottom-up label generation); hallucination risks (necessitating guardrails, retrieval grounding, and human oversight).

- Data and privacy: Licensing constraints on corpora; handling of PII/PHI; need for on-prem/VPC deployments in sensitive sectors.

- Knowledge bases: Effective entity linking depends on KB coverage (e.g., Wikidata, UMLS, ATT&CK, CPC/IPC); domain gaps may require custom ontologies.

- Compute and scalability: Embedding generation and hierarchical clustering at scale; incremental update strategies for streaming; GPU/accelerator availability.

- UX and interpretability: User trust benefits from visual “hints” and progressive disclosure; cluster labels should be validated, especially in high-stakes settings.

- Integration: Connectors to enterprise systems (DMS, ticketing, chat) and governance (RBAC, audit logs) are often prerequisites for adoption.

Glossary

- Agglomerative clustering algorithm: A bottom-up hierarchical clustering method that iteratively merges the most similar clusters until a single cluster remains or a stopping criterion is met. "it is then hierarchically clustered and visualized with enhanced space-filling curve layouts~\cite{muelder2008sfc}."

- BERT: A transformer-based language model, pre-trained on large corpora, that produces contextual text representations. "Deep NLP models (e.g., BERT~\cite{devlin2018bert}) can perform"

- Bipartite graph: A graph whose nodes can be divided into two disjoint sets such that edges connect nodes only across sets, not within them. "effectively transforming the hypergraph visualization problem into a bipartite graph visualization problem."

- Centroid similarity: A cluster similarity measure based on the similarity between cluster centroids (mean representations). "For the similarity between clusters, we used centroid similarity, i.e., the similarity between two clusters is the similarity between the centroids of the two clusters."

- Clique expansion: A method to visualize hypergraphs by replacing each hyperedge with a clique among its incident nodes. "An extra-node representation improves the existing clique expansion of hypergraphs by adding extra nodes to represent hyperedges"

- Concave hull: A polygon that more tightly wraps a set of points than a convex hull, capturing concavities in the point distribution. "we use a concave hull algorithm~\cite{park2012concavehull} to generate an approximation polygon for each cluster."

- Cosine similarity: A measure of similarity between two vectors computed as the cosine of the angle between them. "The semantic similarity is the cosine similarity of the embeddings (dense vectors) of the two nodes"

- Dense vector space: A continuous vector space where items (e.g., documents) are represented by dense (non-sparse) numerical vectors capturing semantics. "popularizes the idea of embedding documents in a dense vector space"

- Dual (of a hypergraph): The hypergraph obtained by swapping the roles of nodes and hyperedges in the original hypergraph. "The dual of a hypergraph is simply another hypergraph, where the nodes and hyperedges are interchanged"

- Embeddings: Numerical vector representations of text (documents, keywords) capturing semantic relationships for similarity and clustering. "We used OpenAI's 'text-embedding-ada-002' model for all embeddings"

- Entity linking: The task of mapping mentions in text to entries in a knowledge base to resolve ambiguity. "we thus prioritize using an entity linking model~\cite{shen2014entity} to disambiguate keywords."

- Extra-node representation: A hypergraph visualization technique that introduces explicit nodes for hyperedges to form a bipartite graph. "we find the extra-node representation introduced by Ouvrard et al.~\cite{ouvrard2017hypergraph} most flexible and intuitive."

- Few-shot prompt: A prompting strategy providing a small number of examples to guide an LLM’s behavior on a task. "A few-shot prompt was adopted to give the LLM examples of what kind of events would be described as the main event."

- Gilbert curve: A generalization of the Hilbert curve that fills rectangular grids of arbitrary dimensions rather than only square, power-of-two grids. "we concatenate four Gilbert curves~\cite{gilbert}."

- Gosper curve: A fractal space-filling curve used for aesthetically pleasing layouts in visualization. "We decided to use a simple Gosper curve (\autoref{fig: gilbert}-c) to lay out the nodes for better aesthetics."

- Hierarchical clustering: A family of clustering methods that build nested clusters, typically represented as a tree (dendrogram). "Once the hypergraph is constructed, it is then hierarchically clustered and visualized with enhanced space-filling curve layouts~\cite{muelder2008sfc}."

- Hilbert curve: A continuous fractal space-filling curve mapping a one-dimensional line to a two-dimensional space while preserving locality. "A Gilbert curve is a generalized version of the Hilbert curve"

- Hyperedge: An edge in a hypergraph that can connect any number of nodes, representing multi-way relationships. "A hyperedge thus represents a multi-way relationship between nodes."

- Hypergraph: A generalization of a graph in which each edge (hyperedge) can connect any number of nodes rather than exactly two. "we model the corpus as a hypergraph that matches the user's mental model when making sense of the corpus."

- LLM (large language model): A transformer-based model trained on massive text corpora that can perform a wide range of language tasks via prompting. "integrates LLMs as both a general NLP task solver and an intelligent agent."

- Modularity metric: A quality function used in community detection to evaluate the strength of division of a network into modules. "generalize the modularity metric for graphs to hypergraphs"

- Named Entity Recognition (NER): An NLP task that identifies and classifies named entities (e.g., persons, organizations, locations) in text. "Some of its subtasks include Named Entity Recognition (NER), Relation Extraction (RE) and Event Extraction (EE)"

- Overlap Coefficient: A set similarity measure defined as the intersection size divided by the smaller set’s size. "which is a weighted generalization of the Overlap Coefficient~\cite{vijaymeena2016survey}"

- Semantic similarity: A measure of how similar two items are in meaning, often computed from embeddings. "by combining connectivity similarity and semantic similarity."

- Space-Filling Curve (SFC): A curve that passes through every point in a grid or region, used to map 1D sequences into 2D layouts while preserving locality. "The SFC layout method uses pre-computed clustering to order nodes in a sequence and then applies a space-filling curve on the node sequence to map it to a two-dimensional screen space~\cite{muelder2008sfc}."

- t-SNE: A non-linear dimensionality reduction technique that visualizes high-dimensional data by modeling pairwise similarities. "then a dimensionality reduction technique (e.g., t-SNE) is used to project the documents back into a two-dimensional space for visualization."

- Token limit: The maximum number of tokens an LLM can process in a single prompt-response context. "without breaking the token limit."

- Weighted Topological Overlap (wTO): A weighted measure of node similarity in graphs based on shared neighbors and connection strengths. "The connectivity similarity is the weighted Topological Overlap (wTO)~\cite{gysi2018wto}"

- Zero-shot prompt: A prompting strategy where no task-specific examples are provided; the model relies solely on instructions. "We employed an instruction-based zero-shot prompt, where the model is instructed to act as a text summarizer."
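Several of the structural and similarity terms above are easier to follow on a toy example. The following is a minimal sketch, not the paper's implementation: the hypergraph data, the function names, and the choice to treat documents as hyperedges and keywords as nodes are all illustrative assumptions. It shows the dual of a hypergraph, the extra-node (bipartite) representation, clique expansion, the unweighted Overlap Coefficient, cosine similarity, and centroid similarity. The weighted Topological Overlap (wTO) used for connectivity similarity is a weighted generalization of the Overlap Coefficient and is not reproduced here.

```python
import math
from itertools import combinations

# Toy hypergraph (illustrative data): each hyperedge, here a document,
# maps to the set of nodes, here keywords, that it connects.
hyperedges = {
    "doc1": {"ai", "health", "policy"},
    "doc2": {"ai", "policy"},
    "doc3": {"health"},
}

def dual(hg):
    """Dual hypergraph: nodes and hyperedges swap roles."""
    out = {}
    for edge, nodes in hg.items():
        for node in nodes:
            out.setdefault(node, set()).add(edge)
    return out

def extra_node_edges(hg):
    """Extra-node representation: one bipartite (hyperedge, node) link per incidence."""
    return sorted((edge, node) for edge, nodes in hg.items() for node in nodes)

def clique_expansion(hg):
    """Clique expansion: link every pair of nodes that share a hyperedge."""
    pairs = set()
    for nodes in hg.values():
        pairs.update(combinations(sorted(nodes), 2))
    return sorted(pairs)

def overlap_coefficient(a, b):
    """|A & B| / min(|A|, |B|); returns 0.0 if either set is empty."""
    if not a or not b:
        return 0.0
    return len(a & b) / min(len(a), len(b))

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0

def centroid(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def centroid_similarity(cluster_a, cluster_b):
    """Similarity between two clusters = cosine similarity of their centroids."""
    return cosine_similarity(centroid(cluster_a), centroid(cluster_b))

if __name__ == "__main__":
    print(dual(hyperedges))
    print(extra_node_edges(hyperedges))
    print(clique_expansion(hyperedges))
    print(overlap_coefficient(hyperedges["doc1"], hyperedges["doc2"]))
    print(centroid_similarity([[1.0, 0.0], [0.0, 1.0]], [[1.0, 1.0]]))
```

Note that clique expansion discards which hyperedge produced each link (the pair `("ai", "policy")` appears once even though two documents share it), whereas the extra-node representation keeps every incidence. This is one way to see why the glossary describes the latter as an improvement over the former.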
