Multilingual Instruction Tuning With Just a Pinch of Multilinguality

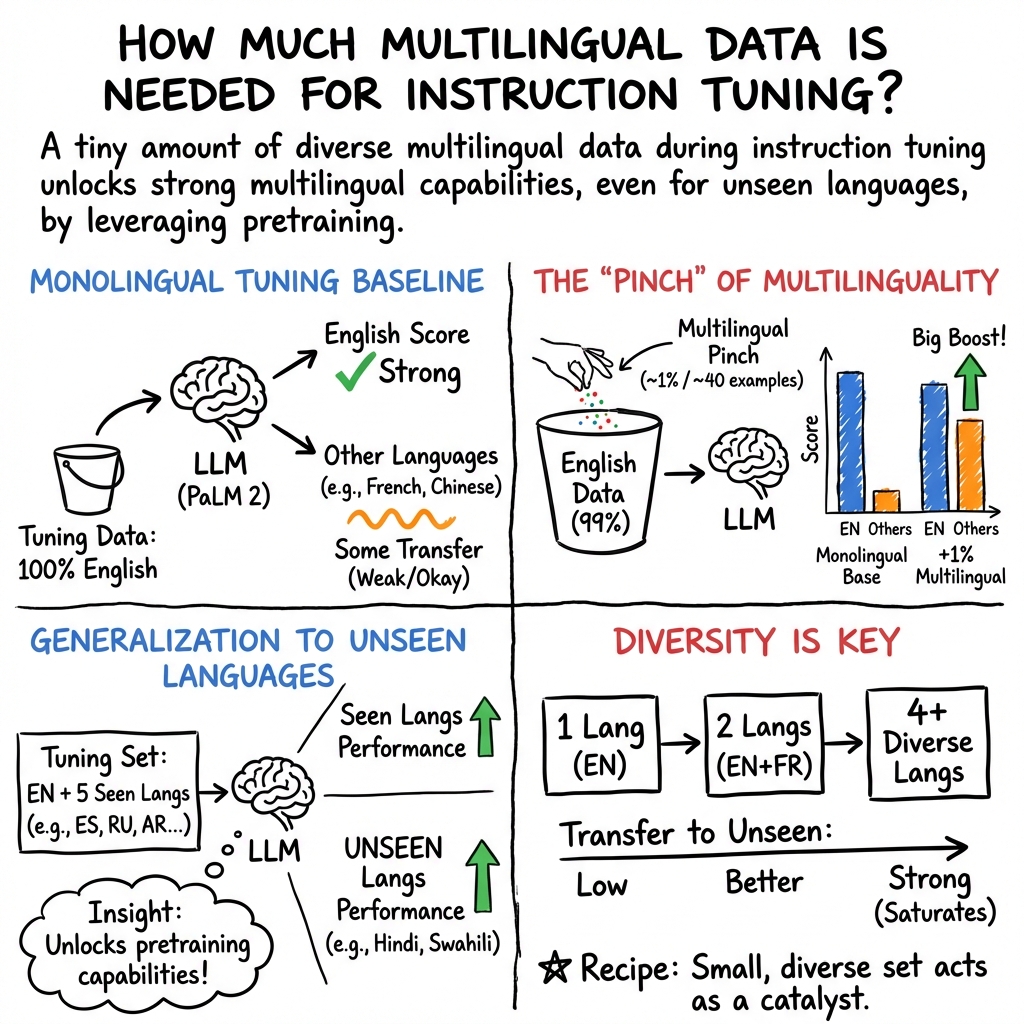

Abstract: As instruction-tuned LLMs gain global adoption, their ability to follow instructions in multiple languages becomes increasingly crucial. In this work, we investigate how multilinguality during instruction tuning of a multilingual LLM affects instruction-following across languages from the pre-training corpus. We first show that many languages transfer some instruction-following capabilities to other languages from even monolingual tuning. Furthermore, we find that only 40 multilingual examples integrated in an English tuning set substantially improve multilingual instruction-following, both in seen and unseen languages during tuning. In general, we observe that models tuned on multilingual mixtures exhibit comparable or superior performance in multiple languages compared to monolingually tuned models, despite training on 10x fewer examples in those languages. Finally, we find that diversifying the instruction tuning set with even just 2-4 languages significantly improves cross-lingual generalization. Our results suggest that building massively multilingual instruction-tuned models can be done with only a very small set of multilingual instruction-responses.

- PaLM 2 technical report.

- Mikel Artetxe and Holger Schwenk. 2019. Massively multilingual sentence embeddings for zero-shot cross-lingual transfer and beyond. Transactions of the Association for Computational Linguistics, 7:597–610.

- Training a helpful and harmless assistant with reinforcement learning from human feedback.

- Sparks of artificial general intelligence: Early experiments with GPT-4.

- Monolingual or multilingual instruction tuning: Which makes a better Alpaca.

- Vicuna: An open-source chatbot impressing GPT-4 with 90%* ChatGPT quality.

- Unsupervised cross-lingual representation learning at scale. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 8440–8451, Online. Association for Computational Linguistics.

- Emerging cross-lingual structure in pretrained language models. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 6022–6034, Online. Association for Computational Linguistics.

- QLoRA: Efficient finetuning of quantized LLMs. In Thirty-seventh Conference on Neural Information Processing Systems.

- BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 4171–4186, Minneapolis, Minnesota. Association for Computational Linguistics.

- AlpacaFarm: A simulation framework for methods that learn from human feedback.

- Scaling laws for multilingual neural machine translation.

- Koala: A dialogue model for academic research. Blog post.

- The false promise of imitating proprietary LLMs.

- The curious case of neural text degeneration. In International Conference on Learning Representations.

- LoRA: Low-rank adaptation of large language models. In International Conference on Learning Representations.

- Cross-lingual ability of multilingual BERT: An empirical study. In International Conference on Learning Representations.

- OpenAssistant conversations – democratizing large language model alignment.

- Okapi: Instruction-tuned large language models in multiple languages with reinforcement learning from human feedback. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, pages 318–327, Singapore. Association for Computational Linguistics.

- Self-alignment with instruction backtranslation.

- Chin-Yew Lin. 2004. ROUGE: A package for automatic evaluation of summaries. In Text Summarization Branches Out, pages 74–81, Barcelona, Spain. Association for Computational Linguistics.

- A balanced data approach for evaluating cross-lingual transfer: Mapping the linguistic blood bank. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 4903–4915, Seattle, United States. Association for Computational Linguistics.

- Cross-task generalization via natural language crowdsourcing instructions. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 3470–3487, Dublin, Ireland. Association for Computational Linguistics.

- Crosslingual generalization through multitask finetuning. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 15991–16111, Toronto, Canada. Association for Computational Linguistics.

- Training language models to follow instructions with human feedback. In Advances in Neural Information Processing Systems, volume 35, pages 27730–27744. Curran Associates, Inc.

- English intermediate-task training improves zero-shot cross-lingual transfer too. In Proceedings of the 1st Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics and the 10th International Joint Conference on Natural Language Processing, pages 557–575, Suzhou, China. Association for Computational Linguistics.

- How multilingual is multilingual BERT? In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, pages 4996–5001, Florence, Italy. Association for Computational Linguistics.

- Exploring the limits of transfer learning with a unified text-to-text transformer. Journal of Machine Learning Research, 21(140):1–67.

- Multitask prompted training enables zero-shot task generalization. In International Conference on Learning Representations.

- Causes and cures for interference in multilingual translation. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 15849–15863, Toronto, Canada. Association for Computational Linguistics.

- Stanford Alpaca: An instruction-following LLaMA model. https://github.com/tatsu-lab/stanford_alpaca.

- LLaMA: Open and efficient foundation language models.

- Llama 2: Open foundation and fine-tuned chat models.

- Attention is all you need. In Advances in Neural Information Processing Systems, volume 30. Curran Associates, Inc.

- Self-instruct: Aligning language models with self-generated instructions. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 13484–13508, Toronto, Canada. Association for Computational Linguistics.

- Finetuned language models are zero-shot learners. In International Conference on Learning Representations.

- Shijie Wu and Mark Dredze. 2019. Beto, bentz, becas: The surprising cross-lingual effectiveness of BERT. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), pages 833–844, Hong Kong, China. Association for Computational Linguistics.

- mT5: A massively multilingual pre-trained text-to-text transformer. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 483–498, Online. Association for Computational Linguistics.

- Language versatilists vs. specialists: An empirical revisiting on multilingual transfer ability.

- PLUG: Leveraging pivot language in cross-lingual instruction tuning.

- Judging LLM-as-a-judge with MT-Bench and Chatbot Arena.

- LIMA: Less is more for alignment.

Explain it Like I'm 14

Overview: What this paper is about

This paper looks at how to teach LLMs—like smart chatbots—to follow instructions in many different languages. The big idea is: you don’t need tons of training data in every language to make a model good at following instructions across the world. Even a small amount of multilingual training can help the model do better in many languages, including ones it hasn’t been specially trained on.

Goals: What questions the researchers asked

- If we train a model to follow instructions using only one language (like English), will it learn to follow instructions in other languages too?

- How many examples in other languages do we need to add to the training to make the model better at those languages—without hurting its performance in English?

- Does training on more different languages (not just more examples) help the model get better at new languages it hasn’t been trained on?

- Do things like language similarity (e.g., shared alphabet) or the amount of pre-training data in a language predict how well skills transfer between languages?

Methods: How they tested their ideas

Think of training an LLM like teaching a student. First, the student studies many languages (this is “pre-training”). Then, the student gets practice on “instruction-following”—pairs of a prompt (instruction) and a good answer—this is “instruction tuning.”

Here’s what they did:

- Model used: PaLM 2, a multilingual LLM that was pre-trained on hundreds of languages. They used the smaller “PaLM 2-S” for training and a larger “PaLM 2-L” as a “judge.”

- Training data: About 4,600 high-quality instruction–response pairs, mostly in English (from public datasets). They translated these into 11 other languages (like Arabic, Chinese, Spanish, Hindi, etc.) using Google Translate, so the same content was available in 12 languages in total.

- Experiments:

- Monolingual tuning: Train 12 separate models, each on one language, then test them across all languages to see what transfers.

- Small multilingual mixes: Replace a tiny portion of the English training examples with translated ones (as few as 40 examples in total, spread across many languages, which is about 1% of the set) and measure how much that helps.

- Fewer training languages: Train with only 6 of the 12 languages and test on all 12 to see if skills transfer to languages not used in tuning.

- More languages in the mix: Gradually add more languages to the training set (2, 3, 4, up to 6) while keeping the total number of examples fixed, and see how cross-language performance changes.

- Evaluation: They ran “side-by-side” comparisons, where an LLM judge sees two answers to the same instruction and picks the better one or calls a tie. To keep the judge from favoring whichever answer appears first, they also swapped the order of the answers. Results were scored with a discounted-tie method: each win counts 1 point, each tie 0.5, and each loss 0, and the average is reported as a percentage. They also checked with human evaluators in multiple languages that the LLM judge’s decisions matched human preferences reasonably well.

Findings: What they discovered and why it matters

- Monolingual training transfers: Training a model on just one language (like English, Spanish, or Italian) still teaches it to follow instructions in many other languages. English, Italian, and Spanish were especially strong at transferring skills.

- Tiny multilingual additions help a lot: Swapping just 40 English examples for multilingual ones (spread across 11 non-English languages) noticeably improved performance in those languages—and even helped in languages not included in the tuning set!

- Multilingual beats monolingual for some languages: A model trained on a multilingual mix (with fewer examples per language) often matched or beat a model trained only on one language with 10 times more examples. This means diversity can be more powerful than quantity.

- More languages, better generalization: Adding just 2–4 languages to the training set made the model significantly better at languages it had never seen during instruction tuning.

- Similarity and pre-training size aren’t strong predictors: Language similarity (like sharing the same script) and how much pre-training data a language had didn’t strongly explain which languages transferred better. For example, the paper reports only a weak Pearson correlation of 0.22 between each language’s average cross-lingual score and its number of documents in the pre-training corpus. What did help consistently was adding multilingual variety during instruction tuning.
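The kind of correlation check behind the last finding can be sketched in Python. Two assumptions to flag: a language’s “average cross-lingual score” is taken here to be the mean side-by-side score of the model tuned on that language over all other evaluation languages (the paper’s exact definition may differ), and every number in the toy data is made up for illustration, not taken from the paper. Python 3.10+ also ships `statistics.correlation`, which computes the same Pearson coefficient.

```python
from math import sqrt
from statistics import mean
from typing import Dict, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson r: covariance of xs and ys divided by the product of their spreads."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


def avg_cross_lingual(scores: Dict[str, Dict[str, float]], tuned_on: str) -> float:
    """Mean score of the model tuned on `tuned_on` over every *other* evaluation
    language (a hypothetical definition used for this sketch)."""
    return mean(s for evaluated_in, s in scores[tuned_on].items()
                if evaluated_in != tuned_on)


# Made-up numbers for illustration only, NOT results from the paper.
# toy_scores[tuned_on][evaluated_in] is a side-by-side score in percent.
toy_scores = {
    "en": {"en": 50.0, "es": 40.0, "sw": 30.0},
    "es": {"en": 45.0, "es": 50.0, "sw": 25.0},
    "sw": {"en": 20.0, "es": 22.0, "sw": 50.0},
}
toy_doc_counts = {"en": 1000.0, "es": 300.0, "sw": 10.0}  # also made up

langs = sorted(toy_scores)
r = pearson([avg_cross_lingual(toy_scores, lang) for lang in langs],
            [toy_doc_counts[lang] for lang in langs])
print(f"Pearson r on toy data: {r:.2f}")
```

A value near 0.22, like the one the paper reports, means pre-training size explains only a small share of the variation in transfer, which is why it is described as a weak predictor.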

Implications: Why this is useful

This research suggests we can build LLMs that follow instructions in many languages without collecting huge amounts of training data for each one. By adding just “a pinch” of multilingual examples and a small number of different languages, we can:

- Make models more useful globally, even for languages with limited data.

- Save time and resources by not needing massive datasets in every language.

- Improve performance in both seen and unseen languages during tuning.

In short, to get a model that understands and follows instructions across the world, you don’t need a mountain of multilingual data—just a smart mix with a small amount of multilingual diversity can go a long way.
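The two kinds of tuning mixtures behind this “pinch” (a few dozen translated examples swapped into an otherwise English set, and a fixed budget split evenly over a handful of languages) can be sketched in Python. This is a minimal illustration, not the authors’ pipeline: the function names, the random choice of which examples to swap, and the round-robin assignment of languages are all hypothetical choices. It assumes every example is available in each language as a parallel translation, and the toy data is placeholder text.

```python
import random
from collections import Counter
from typing import Dict, List, Sequence, Tuple

Example = Tuple[str, str]                # (instruction, response)
ParallelData = List[Dict[str, Example]]  # one dict per example, keyed by language code
Mixture = List[Tuple[str, Example]]      # (language code, example) pairs


def pinch_mixture(data: ParallelData, langs: Sequence[str],
                  n_multi: int, seed: int = 0) -> Mixture:
    """Start from the English set and swap `n_multi` randomly chosen examples
    for their translations, cycling through `langs` (the non-English languages)
    so each gets a near-equal share. The set size stays len(data)."""
    if n_multi and not langs:
        raise ValueError("need at least one language to swap in")
    rng = random.Random(seed)
    swapped = rng.sample(range(len(data)), n_multi)
    assigned = {idx: langs[k % len(langs)] for k, idx in enumerate(swapped)}
    mixture: Mixture = []
    for i, example in enumerate(data):
        lang = assigned.get(i, "en")
        mixture.append((lang, example[lang]))
    return mixture


def even_split_mixture(data: ParallelData, langs: Sequence[str],
                       seed: int = 0) -> Mixture:
    """Fixed budget split evenly over `langs`: each instruction appears once,
    in a single language, so adding languages never grows the set."""
    rng = random.Random(seed)
    order = list(range(len(data)))
    rng.shuffle(order)
    mixture: Mixture = []
    for pos, idx in enumerate(order):
        lang = langs[pos % len(langs)]
        mixture.append((lang, data[idx][lang]))
    return mixture


def language_counts(mixture: Mixture) -> Counter:
    """How many tuning examples each language contributes."""
    return Counter(lang for lang, _ in mixture)


# Toy parallel data with placeholder text (not the paper's dataset).
toy = [{lang: (f"instruction {i} [{lang}]", f"response {i} [{lang}]")
        for lang in ("en", "ar", "zh", "es")} for i in range(100)]
mix = pinch_mixture(toy, ["ar", "zh", "es"], n_multi=40)
print(language_counts(mix))  # en: 60, ar: 14, zh: 13, es: 13
```

Because both functions return exactly one entry per source example, the size of the tuning set stays the same no matter how many languages are mixed in, which mirrors the fixed-budget setup described in the Methods section.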

Notes on limitations

- They used machine-translated data rather than native, human-written text, which can introduce noise.

- They tested on a limited set of languages and with one model family (PaLM 2), so results might vary with other models or more languages.

- Still, the core message is strong: small, diverse multilingual instruction tuning can meaningfully improve cross-language instruction-following.

Glossary

- adapter-based framework: A method that adds small, trainable modules (adapters) to a frozen pre-trained model to adapt it to new tasks or languages with minimal additional parameters. "suggested an adapter-based framework to improve cross-lingual and task generalization."

- bilingual tuning: Training a model using instruction–response data from exactly two languages to encourage cross-lingual transfer. "bilingual tuning helps generalize to new languages better than monolingual tuning."

- cross-lingual generalization: The ability of a model to perform well in languages it was not tuned on, leveraging knowledge learned from other languages. "significantly improves cross-lingual generalization."

- cross-lingual transfer: The phenomenon where skills learned in one language improve performance in another language. "cross-lingual transfer has emerged as a promising approach"

- discounted-tie scoring method: An evaluation scheme that assigns 1 point for a win, 0.5 for a tie, and 0 for a loss when comparing model outputs. "We use a discounted-tie \citep{zhou2023lima} scoring method"

- instruction-following: A model’s capability to understand and execute natural language instructions appropriately. "significantly improves instruction-following in those languages."

- instruction tuning: Fine-tuning a pre-trained LLM on pairs of instructions and responses to teach it to follow user prompts. "Instruction tuning is a fundamental aspect of building modern general-purpose LLMs"

- LLM judge: Using an LLM to evaluate and compare responses generated by other models. "To validate that the LLM judge decisions align with human preferences across languages,"

- low-rank adaptation: A parameter-efficient tuning technique (LoRA) that injects trainable low-rank matrices into the model instead of updating all parameters. "investigated the effects of full parameter training vs low-rank adaptation \cite{hu2022lora}"

- monolingual instruction tuning: Instruction tuning conducted with training data from a single language. "transferability of monolingual instruction tuning across different languages."

- mutual intelligibility: The degree to which speakers of different but related languages can understand each other without prior study. "aspects of language similarity like the script or mutual intelligibility might affect"

- PaLM 2: A family of Google’s LLMs pre-trained on hundreds of languages. "We use the PaLM 2 model family of Transformer-based \citep{NIPS2017_3f5ee243} LLMs"

- parameter sharing: Reusing the same set of model parameters across multiple languages or tasks to enable transfer and efficiency. "parameter sharing is more important than shared vocabulary."

- Pearson correlation: A statistical measure of linear association between two variables, ranging from -1 to 1. "We find a weak Pearson correlation of 0.22 between the average cross-lingual score of each language and the number of documents in that language in pre-training corpus"

- pivot language: An intermediate language used to facilitate translation or instruction tuning across languages. "by prepending the instruction and response translated into a pivot language (e.g English) to the response in the target language."

- pre-training corpus: The large unlabeled dataset used to pre-train an LLM before fine-tuning. "across languages from the pre-training corpus."

- reinforcement learning from human feedback: Training that uses human preference judgments to shape model behavior via reinforcement learning. "trained multilingual instruction-following models for 26 languages with reinforcement learning from human feedback"

- side-by-side automatic evaluation protocol: An assessment method where an LLM compares two candidate responses to the same instruction and selects the better one. "We conduct a side-by-side automatic evaluation protocol"

- Transformer-based: Refers to models built on the Transformer architecture, which relies on self-attention mechanisms. "We use the PaLM 2 model family of Transformer-based \citep{NIPS2017_3f5ee243} LLMs"

- zero-shot cross-lingual transfer: Applying capabilities to a target language without any tuning data in that language, leveraging transfer from other languages. "To explore zero-shot cross-lingual transfer of instruction tuning in multilingual LLMs,"
