- The paper demonstrates a dual-model framework that employs a palm detector and a hand landmark model for efficient, real-time hand tracking.

- Training on a mix of real and synthetic datasets lowers the landmark error (mean squared error normalized by palm size) to 13.4%, better than training on any single dataset.

- The system's optimized architecture facilitates seamless AR/VR integration on mobile devices with low computational overhead.

Introduction

The paper "MediaPipe Hands: On-device Real-time Hand Tracking" presents a pipeline for real-time hand tracking on mobile devices with limited compute resources. It needs only a single RGB camera, with no depth sensor or other additional hardware, which makes hand tracking practical for augmented reality (AR) and virtual reality (VR) applications. The proposed solution comprises two models, is optimized for mobile GPUs, and is built with the MediaPipe framework to deliver both precision and real-time performance.

System Architecture

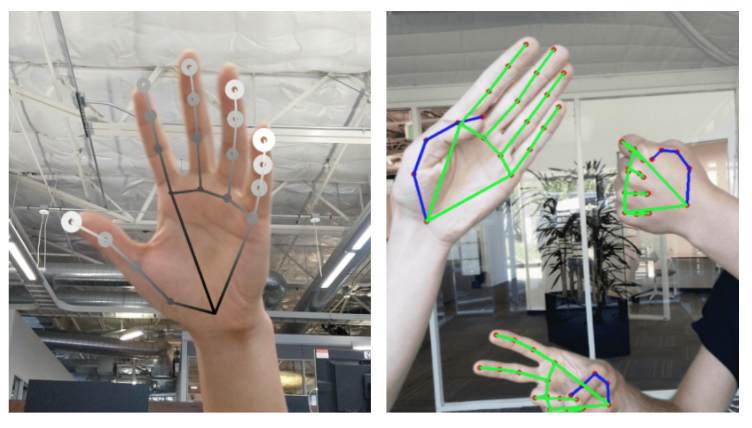

The method is a two-stage pipeline consisting of a palm detector and a hand landmark model. The palm detector starts the process by locating hands in the image and producing a bounding box for each; these boxes are then passed to the hand landmark model, which localizes the landmarks precisely within each cropped region.

Figure 1: Rendered hand tracking result. (Left): Hand landmarks with relative depth presented in different shades. (Right): Real-time multi-hand tracking on Pixel 3.

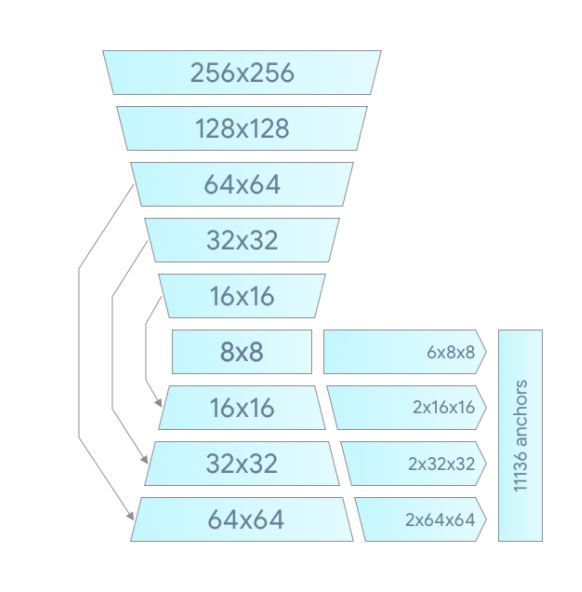

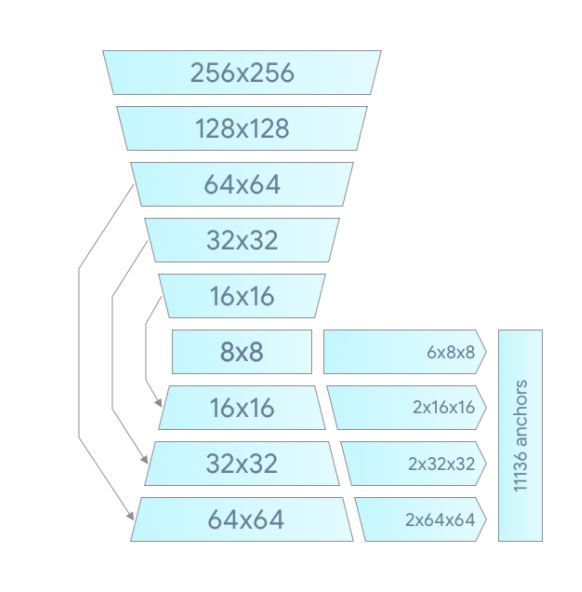

The palm detector uses an encoder-decoder architecture inspired by BlazeFace and adapted to the greater difficulty of hand detection. Unlike faces, hands lack high-contrast features (such as the eye and mouth regions), which makes reliable detection from visual features alone challenging. Detecting palms rather than whole hands helps, because palms can be modeled with square bounding boxes only. Ignoring other aspect ratios reduces the number of anchors (the paper reports a factor of 3 to 5) and, with it, the computational overhead.

Figure 2: Palm detector model architecture.
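To illustrate why restricting the detector to square boxes shrinks the anchor set, here is a minimal sketch that counts anchors on an SSD-style grid. The grid resolutions and the aspect-ratio sets are hypothetical values chosen for the arithmetic; they are not the configuration used in the paper.

```python
def count_anchors(grid_sizes, aspect_ratios, scales_per_cell=1):
    """Count SSD-style anchors: one per (grid cell, aspect ratio, scale)."""
    return sum(g * g * len(aspect_ratios) * scales_per_cell for g in grid_sizes)


# Hypothetical feature-map resolutions (assumption, not from the paper).
GRID_SIZES = [32, 16, 8]

# A general-purpose detector typically covers several box shapes per cell.
multi_ratio = count_anchors(GRID_SIZES, aspect_ratios=[1.0, 0.5, 2.0])

# A palm detector restricted to square boxes needs a single shape per cell.
square_only = count_anchors(GRID_SIZES, aspect_ratios=[1.0])

reduction = multi_ratio / square_only
print(f"{multi_ratio} anchors vs {square_only} anchors ({reduction:.1f}x fewer)")
```

With three aspect ratios in the baseline, the square-only variant needs one third of the anchors; a baseline with more ratios would give a proportionally larger saving.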

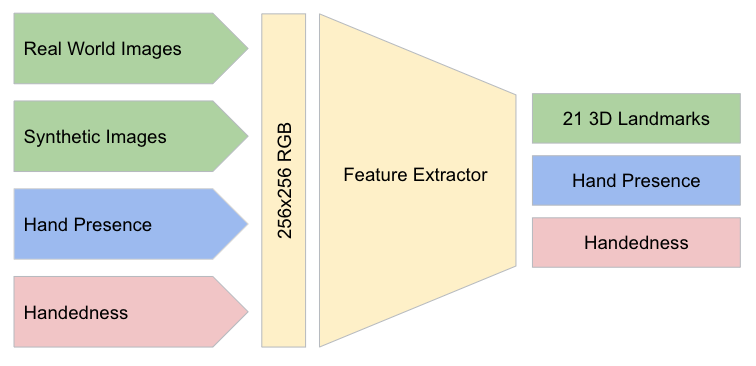

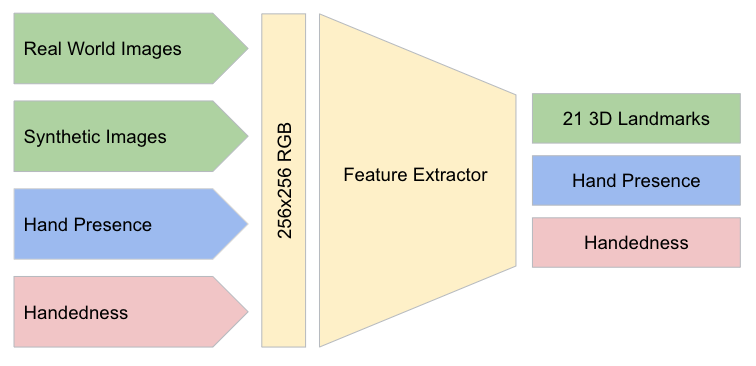

The hand landmark model operates on the detected region and predicts 21 hand landmarks in 2.5D (x, y, and relative depth). It uses a shared feature extractor with three outputs: the landmark coordinates, a hand presence probability, and a handedness (left or right) classification. In video, the landmarks predicted for the previous frame are used to locate the hand region in the current frame, which improves efficiency because the detector does not have to run on every frame.

Figure 3: Architecture of our hand landmark model. The model has three outputs sharing a feature extractor.

Dataset and Training

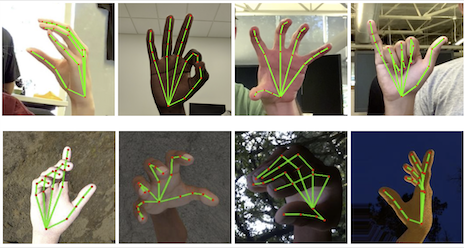

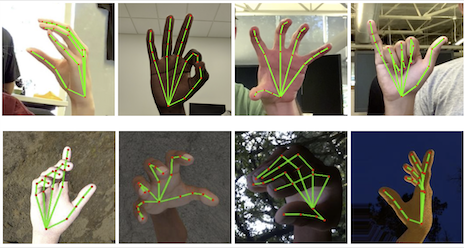

Three kinds of data were used for training: an in-the-wild dataset covering a broad range of environmental conditions, an in-house dataset focused on gestures, and a synthetic dataset of rendered hands covering a wide range of poses. The synthetic data is pivotal: it provides ground-truth depth for supervision and covers hand poses that are difficult or impossible to capture and annotate in real-world data.

Figure 4: Examples of our datasets. (Top): Annotated real-world images. (Bottom): Rendered synthetic hand images with ground truth annotation.

Combining these datasets reduced the error noticeably: the model trained on the combined data reached a mean squared error, normalized by palm size, of 13.4%, outperforming models trained on any single dataset.
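As a worked illustration of a palm-normalized error, the sketch below scales each coordinate difference by the palm size before squaring and averaging. This is one plausible reading of "mean squared error normalized by palm size"; the exact formula and the definition of palm size used in the paper are not given here, so `normalized_mse` and its `palm_size` parameter are assumptions.

```python
def normalized_mse(pred, truth, palm_size):
    """Mean squared landmark error after scaling coordinates by palm size.

    pred, truth: equally long lists of (x, y) points in the same units.
    palm_size:   reference length in those units (assumed definition).
    """
    if len(pred) != len(truth) or not pred:
        raise ValueError("pred and truth must be non-empty and equally long")
    if palm_size <= 0:
        raise ValueError("palm_size must be positive")
    total = 0.0
    for (px, py), (tx, ty) in zip(pred, truth):
        dx = (px - tx) / palm_size
        dy = (py - ty) / palm_size
        total += dx * dx + dy * dy
    return total / len(pred)


# Toy data: three landmarks, palm size of 10 units.
truth = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0)]
pred = [(1.0, 0.0), (10.0, 2.0), (10.0, 10.0)]
error = normalized_mse(pred, truth, palm_size=10.0)
print(f"normalized MSE: {error:.4f}")
```

Normalizing by palm size makes the metric independent of how large the hand appears in the image, so errors on near and far hands are comparable.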

Results and Implementation

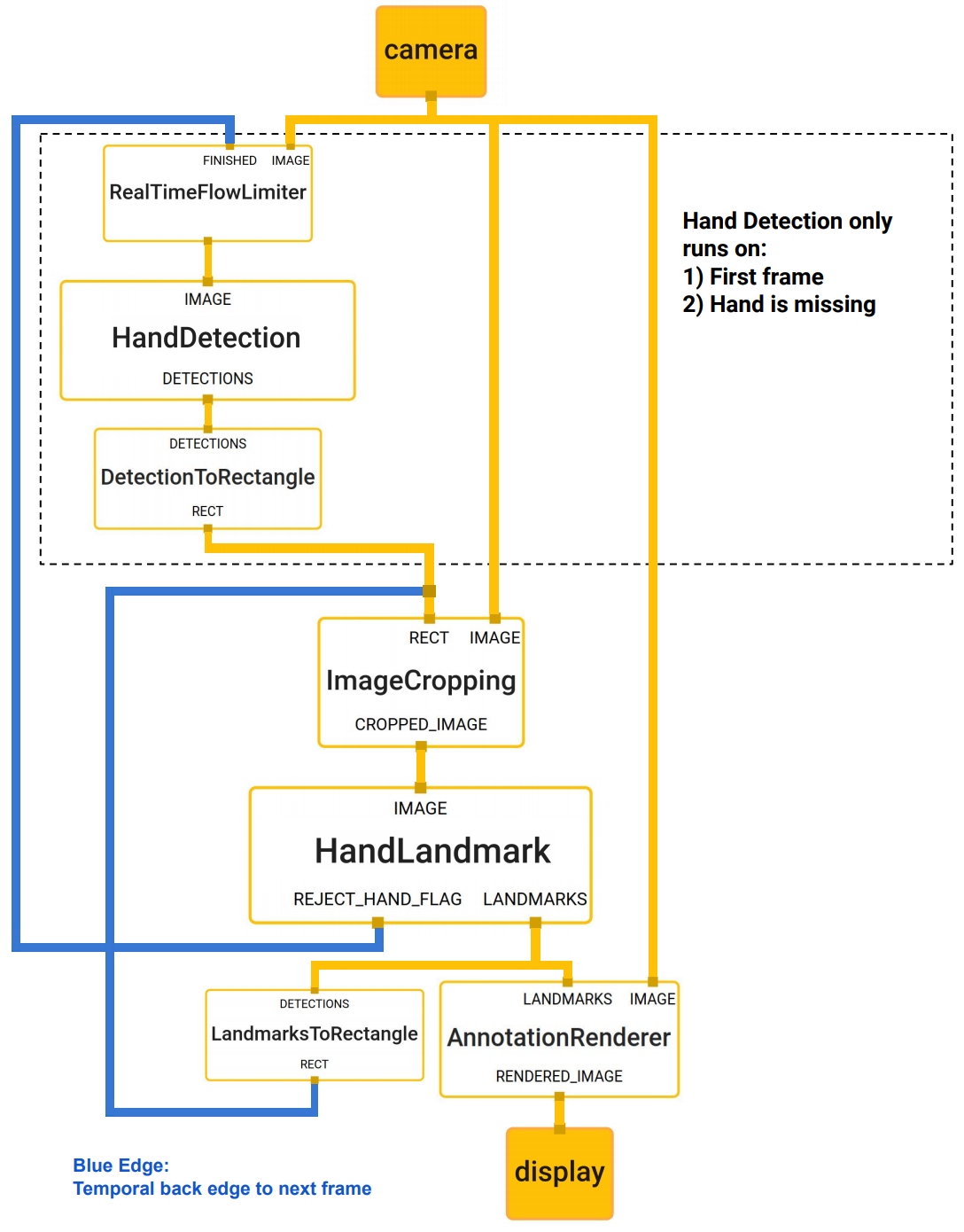

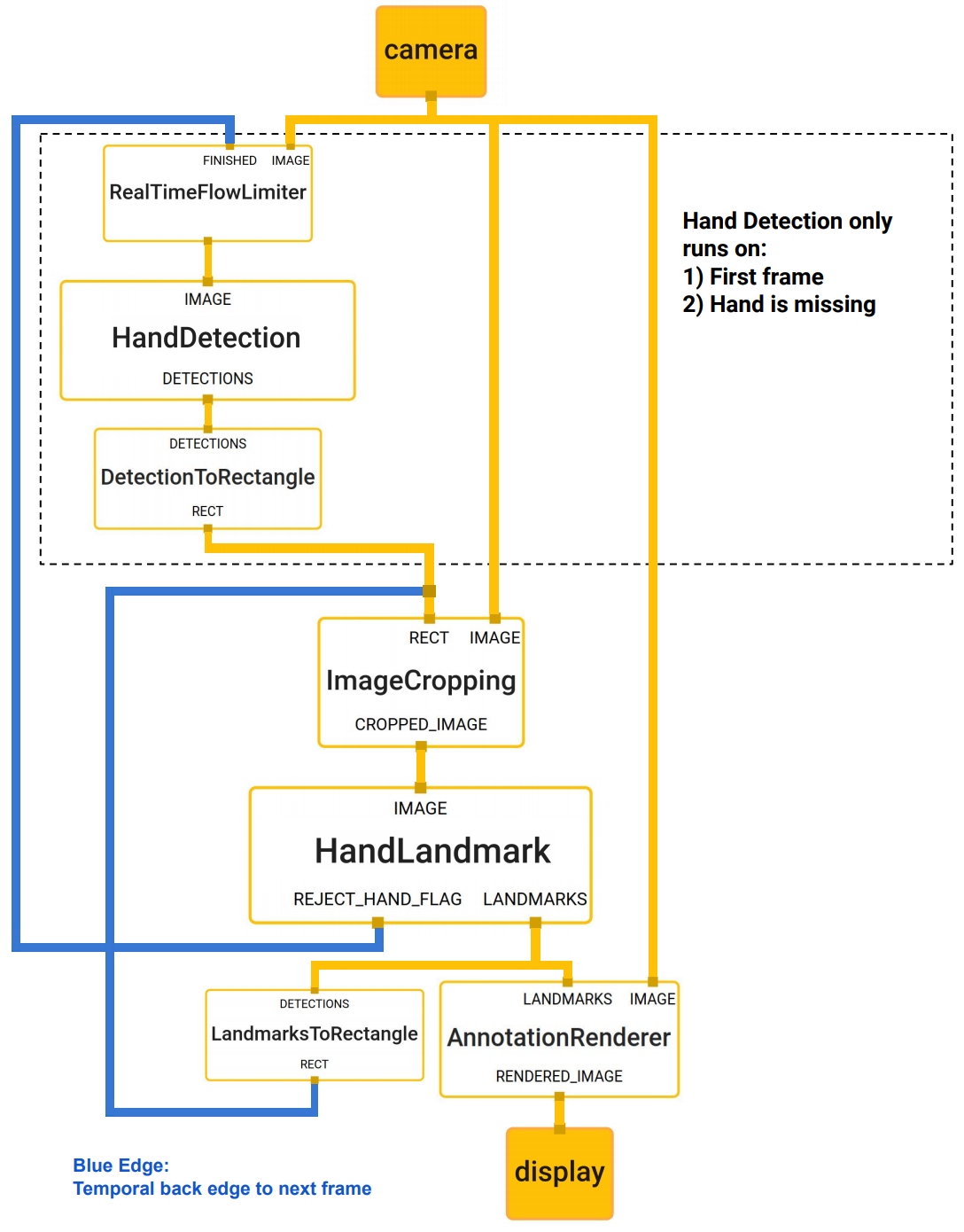

The pipeline is implemented as a MediaPipe graph built from modular components, which simplifies integration and deployment across platforms. To save computation, the palm detector does not run on every frame. Instead, the hand region for the current frame is derived from the landmarks computed in the previous frame, and the detector is triggered again only when the landmark model indicates that the hand is no longer present. This keeps the pipeline within real-time budgets.

Figure 5: The hand landmark model output controls when the hand detection model is triggered, optimizing the ML pipeline.
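The scheduling logic described above can be sketched as follows. This is a minimal illustration, not the MediaPipe graph itself: `HandTracker`, `box_from_landmarks`, the 0.25 margin, and the 0.5 presence threshold are hypothetical names and values, and the two models are replaced by stub functions so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)
Point = Tuple[float, float]


@dataclass
class LandmarkResult:
    landmarks: List[Point]
    presence: float  # hand-presence score in [0, 1]


def box_from_landmarks(landmarks: List[Point], margin: float = 0.25) -> Box:
    """Bounding box around the landmarks, expanded by a relative margin."""
    xs = [x for x, _ in landmarks]
    ys = [y for _, y in landmarks]
    pad_x = margin * (max(xs) - min(xs))
    pad_y = margin * (max(ys) - min(ys))
    return (min(xs) - pad_x, min(ys) - pad_y, max(xs) + pad_x, max(ys) + pad_y)


class HandTracker:
    """Runs the palm detector only when no tracked hand region is available."""

    def __init__(self,
                 detect_palm: Callable[[object], Optional[Box]],
                 predict_landmarks: Callable[[object, Box], LandmarkResult],
                 presence_threshold: float = 0.5) -> None:
        self.detect_palm = detect_palm
        self.predict_landmarks = predict_landmarks
        self.presence_threshold = presence_threshold
        self.region: Optional[Box] = None
        self.detector_calls = 0

    def process(self, frame: object) -> Optional[LandmarkResult]:
        if self.region is None:
            # No region carried over from the previous frame: run the detector.
            self.detector_calls += 1
            self.region = self.detect_palm(frame)
            if self.region is None:
                return None
        result = self.predict_landmarks(frame, self.region)
        if result.presence < self.presence_threshold:
            # Hand lost: clear the region so the next frame triggers detection.
            self.region = None
            return None
        # Hand still present: derive the next frame's region from the landmarks.
        self.region = box_from_landmarks(result.landmarks)
        return result


# Stub models for demonstration; each "frame" is just a presence score.
def fake_detector(frame: object) -> Optional[Box]:
    return (0.0, 0.0, 1.0, 1.0)


def fake_landmarks(frame: object, region: Box) -> LandmarkResult:
    return LandmarkResult([(0.2, 0.2), (0.6, 0.8)], presence=float(frame))


tracker = HandTracker(fake_detector, fake_landmarks)
outputs = [tracker.process(score) for score in [0.9, 0.9, 0.2, 0.9]]
print(f"frames: {len(outputs)}, detector calls: {tracker.detector_calls}")
```

In this toy run the detector is invoked on the first frame and again after the hand is lost on the third frame; the second frame reuses the region derived from the first frame's landmarks.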

In the performance evaluation, the "Full" model offers the best balance between precision and speed, keeping processing times on mobile devices low while preserving accuracy. For the palm detector, adding the decoder and training with focal loss each improved average precision.

Application Examples

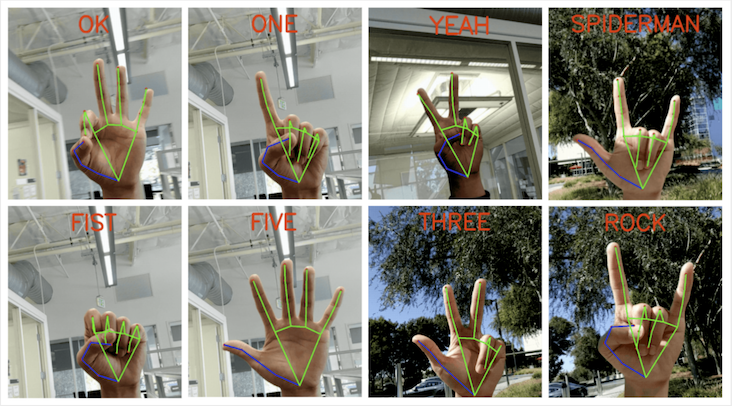

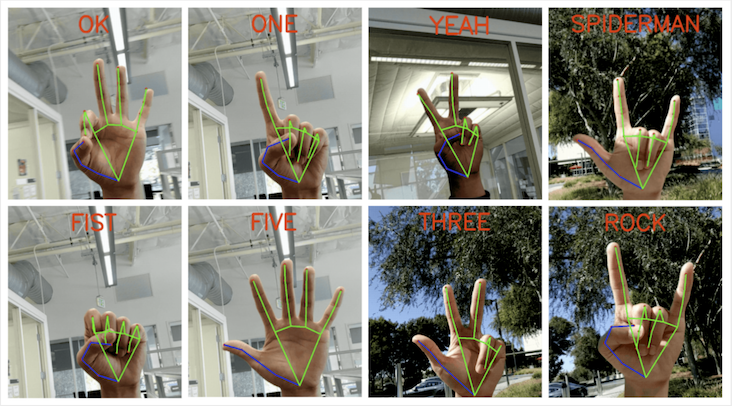

MediaPipe Hands enables applications such as gesture recognition and AR effects. For gesture recognition, the state of each finger (for example, bent or straight) is determined from the accumulated angles of its joints, and the resulting set of finger states is then mapped to a set of predefined gestures. The method is simple yet effective.

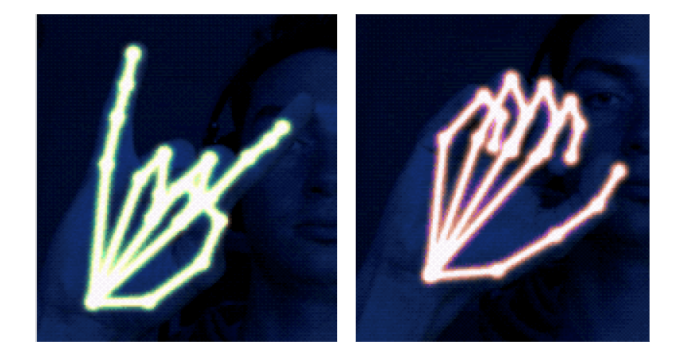

Figure 6: Screenshots of real-time gesture recognition.
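The finger-state approach to gesture recognition can be sketched as below. The landmark indices follow the MediaPipe Hands layout (0 is the wrist, then four points per finger from thumb to pinky). Everything else is an assumption made for illustration: the 90-degree threshold, the 2D simplification, the choice to accumulate angles from the second joint of each finger onward, and the small `GESTURES` table.

```python
import math

# Four landmark indices per finger, base to tip (MediaPipe Hands layout).
FINGER_CHAINS = {
    "thumb": [1, 2, 3, 4],
    "index": [5, 6, 7, 8],
    "middle": [9, 10, 11, 12],
    "ring": [13, 14, 15, 16],
    "pinky": [17, 18, 19, 20],
}

# Hypothetical gesture table keyed by (thumb, index, middle, ring, pinky).
GESTURES = {
    ("straight",) * 5: "open palm",
    ("bent",) * 5: "fist",
    ("bent", "straight", "straight", "bent", "bent"): "victory",
}


def turn_angle(a, b, c):
    """Angle in degrees between segments a->b and b->c (0 means straight)."""
    v1 = (b[0] - a[0], b[1] - a[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    return abs(math.degrees(math.atan2(cross, dot)))


def finger_states(landmarks, bent_threshold_deg=90.0):
    """Label each finger 'bent' or 'straight' from its accumulated joint angles."""
    states = {}
    for name, chain in FINGER_CHAINS.items():
        pts = [landmarks[i] for i in chain]
        total = sum(turn_angle(pts[i], pts[i + 1], pts[i + 2])
                    for i in range(len(pts) - 2))
        states[name] = "bent" if total > bent_threshold_deg else "straight"
    return states


def classify(landmarks):
    """Map the tuple of finger states to a gesture name, or 'unknown'."""
    states = finger_states(landmarks)
    return GESTURES.get(tuple(states[name] for name in FINGER_CHAINS), "unknown")


def make_hand(bent_fingers):
    """Build 21 synthetic 2D landmarks; listed fingers are curled, others straight."""
    points = [(0.0, 0.0)]  # wrist
    for f, name in enumerate(FINGER_CHAINS):
        x = 2.0 * f
        if name in bent_fingers:
            points += [(x, 1.0), (x, 2.0), (x + 1.0, 2.0), (x + 1.0, 1.0)]
        else:
            points += [(x, 1.0), (x, 2.0), (x, 3.0), (x, 4.0)]
    return points


print(classify(make_hand(set())))
print(classify(make_hand({"thumb", "ring", "pinky"})))
```

A lookup table keeps the mapping from finger states to gestures easy to extend; with real landmarks, the threshold would need tuning and the angles would more naturally be computed in 3D.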

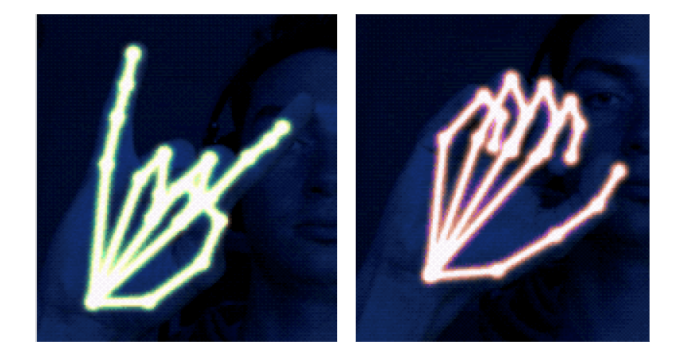

The predicted hand skeleton can also drive real-time AR effects, with potential uses in consumer applications that range from interactive entertainment to precise control interfaces.

Figure 7: Example of real-time AR effects based on our predicted hand skeleton.

Conclusion

The pipeline introduced in "MediaPipe Hands" makes real-time hand tracking available across platforms without additional hardware, giving AR/VR developers a practical tool to build on. Because MediaPipe Hands is open source, it also provides a baseline for further research and for integrating efficient hand tracking into mobile applications.