- The paper presents two poisoning attacks on regression models: an optimization-based attack driven by gradient ascent and a fast statistical attack that crafts poison points from the training data distribution.

- It demonstrates that optimization-based attacks can sharply increase mean squared error (MSE), while statistical attacks inject points that resemble legitimate data, which complicates detection.

- The proposed trimmed-loss defense, TRIM, limits the damage: poisoned models trained with it show a median MSE increase of only 6.1% compared to unpoisoned models.

Overview of "Manipulating Machine Learning: Poisoning Attacks and Countermeasures for Regression Learning" (1804.00308)

This paper presents a comprehensive study of poisoning attacks against linear regression models and proposes a robust defense. In a poisoning attack, the adversary injects malicious data into the training set to skew the model's predictions. The paper introduces an optimization framework for crafting such attacks and a fast statistical attack that operates under limited-knowledge assumptions. It also proposes a defense mechanism that significantly mitigates the impact of these attacks.
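The optimization framework mentioned above is commonly stated as a bilevel problem: the attacker picks poison points that maximize an attack objective, subject to the model being trained on the poisoned data. The notation below is an assumption made for this summary ($\mathcal{D}_{tr}$ is the clean training set, $\mathcal{D}_p$ the poison set, $\mathcal{D}'$ an untainted validation set, $\mathcal{W}$ the attacker's objective, and $\mathcal{L}$ the training loss), so it should be read as a sketch rather than a verbatim restatement of the paper.

$$
\arg\max_{\mathcal{D}_p} \; \mathcal{W}\left(\mathcal{D}', \theta_p^{\star}\right)
\quad \text{s.t.} \quad
\theta_p^{\star} \in \arg\min_{\theta} \; \mathcal{L}\left(\mathcal{D}_{tr} \cup \mathcal{D}_p, \theta\right)
$$

The outer problem is what gradient ascent optimizes; the inner problem is ordinary model training on the poisoned set.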

Poisoning Attacks on Regression Models

Attack Methodologies

The paper describes two attack strategies: optimization-based and statistical.

- Optimization-based Attacks:

  - The proposed framework adapts existing classification poisoning techniques to regression by optimizing both the feature values and the response variables of the poison points.

  - Gradient ascent maximizes the error on an untainted validation set. The KKT conditions of the training problem are used to compute how the learned parameters change with each poison point, which keeps the gradient computation efficient.

  - Two initialization strategies (Inverse Flipping and Boundary Flipping) and several attack objectives are explored to maximize performance degradation.

- Statistical-based Attacks:

  - This method generates poisoned samples that are statistically similar to legitimate data, making them difficult to detect.

  - It is fast and requires only limited knowledge of the training process, which makes it practical to deploy.
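The statistical attack can be illustrated with a minimal sketch. It makes several simplifying assumptions that are not taken from the paper: a single feature, features and responses scaled to [0, 1], and a locally fitted line standing in for query access to the victim model. The function names (`fit_line`, `statistical_poison`, `mse`) are hypothetical. Poison features are sampled from a normal distribution fitted to the training data and rounded to a corner of the feasible range, and each response is placed at the boundary farthest from the model's prediction.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    mean_x = statistics.fmean(xs)
    mean_y = statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mse(xs, ys, slope, intercept):
    """Mean squared error of the line on the given points."""
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys)) / len(xs)


def statistical_poison(xs, ys, n_poison, rng):
    """Generate poison points that mimic the training feature distribution.

    Assumption: features and responses are scaled to [0, 1].
    """
    mu = statistics.fmean(xs)
    sigma = statistics.stdev(xs)
    # Assumption: a local fit stands in for querying the victim model.
    slope, intercept = fit_line(xs, ys)
    poison = []
    for _ in range(n_poison):
        x = rng.gauss(mu, sigma)         # sample from the fitted distribution
        x = 1.0 if x >= 0.5 else 0.0     # round to the nearest corner of [0, 1]
        pred = slope * x + intercept
        y = 0.0 if pred >= 0.5 else 1.0  # response at the boundary farthest from pred
        poison.append((x, y))
    return poison


if __name__ == "__main__":
    rng = random.Random(0)
    # Synthetic, illustrative data only (not from the paper).
    train_x = [rng.random() for _ in range(200)]
    train_y = [0.8 * x + 0.1 + rng.gauss(0, 0.02) for x in train_x]

    poison = statistical_poison(train_x, train_y, n_poison=40, rng=rng)
    clean_fit = fit_line(train_x, train_y)
    poisoned_fit = fit_line(train_x + [p[0] for p in poison],
                            train_y + [p[1] for p in poison])

    print("MSE on clean data, clean model:   ", mse(train_x, train_y, *clean_fit))
    print("MSE on clean data, poisoned model:", mse(train_x, train_y, *poisoned_fit))
```

The sketch needs no gradient computation, which is why this style of attack is cheap. A multi-feature version would replace the single normal distribution with a multivariate one.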

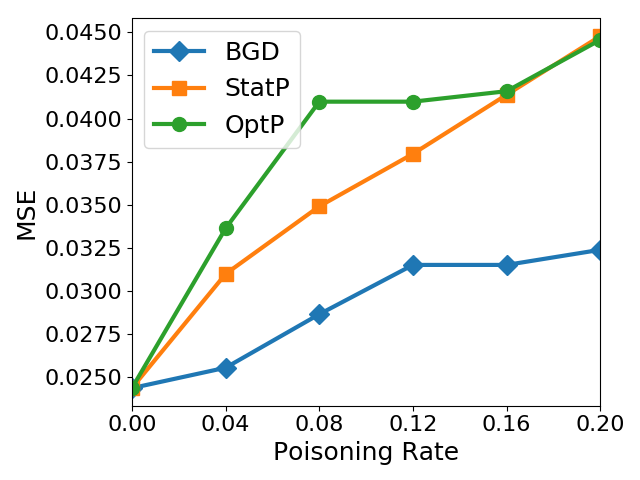

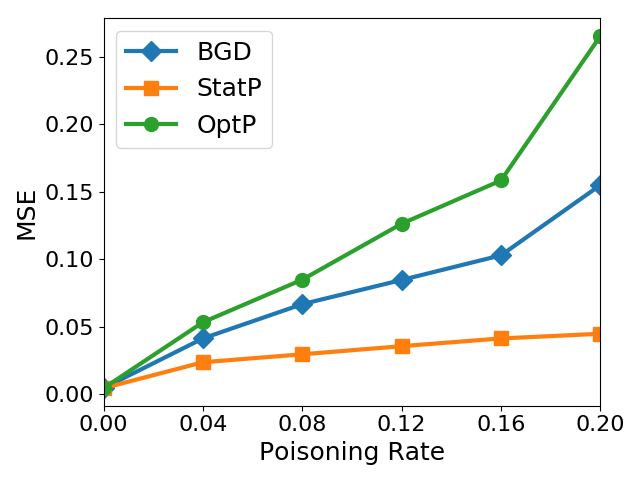

Evaluation

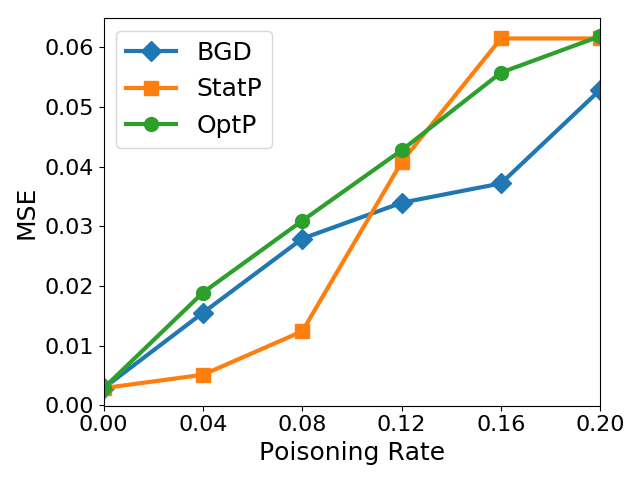

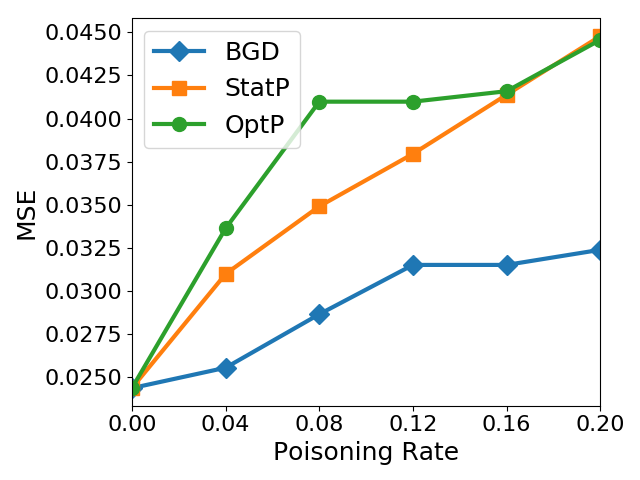

The attack strategies were evaluated on four linear regression models (OLS, LASSO, ridge, and elastic net) using datasets from diverse domains such as healthcare, loan assessment, and real estate. The results showed that the optimization-based attacks can increase MSE significantly, which confirms that the attacks are effective across models and domains.

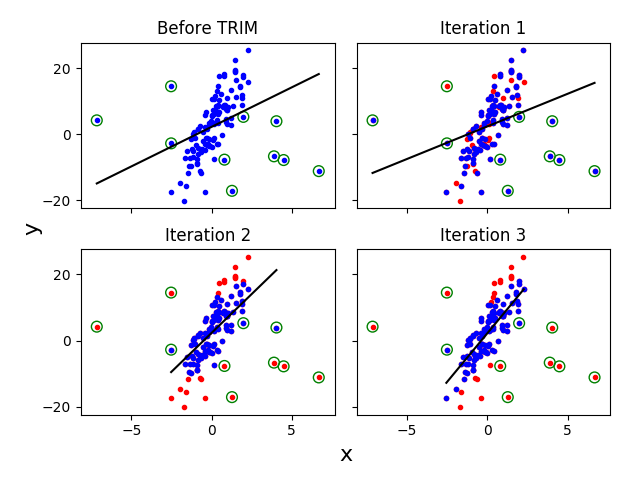

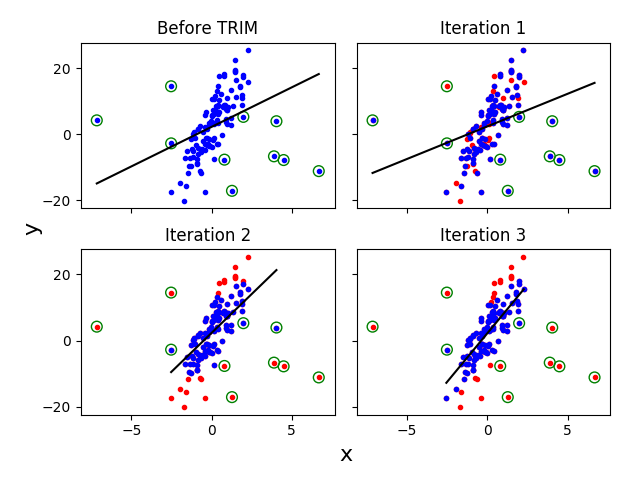

Figure 1: Several iterations of the TRIM algorithm. Initial poisoned data is in blue in the top left graph. The top right graph shows the initial randomly removed points. Subsequent iterations remove high-residual points and refit the model, improving its robustness.

Defense Mechanism: The Trimmed Loss Approach

Proposed Defense: TRIM Algorithm

- The defense iteratively estimates the regression parameters with a trimmed loss function that excludes high-residual points, which makes the model more robust to poisoned samples.

- The TRIM algorithm outperforms conventional robust statistical methods such as Huber regression and RANSAC, maintaining model integrity significantly better in the presence of poisoned data.
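The trimmed-loss idea behind the defense (TRIM) can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: it uses a single feature with a closed-form least-squares fit, assumes the number of legitimate points `n_keep` is known, and stops when the kept subset no longer changes. The names `fit_line` and `trim_fit` are hypothetical.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    mean_x = statistics.fmean(xs)
    mean_y = statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def trim_fit(xs, ys, n_keep, rng, max_iter=100):
    """Alternate between fitting on a subset and keeping the lowest-residual points.

    Assumption: n_keep (the number of legitimate points) is known in advance.
    """
    n_total = len(xs)
    keep = sorted(rng.sample(range(n_total), n_keep))  # random initial subset
    for _ in range(max_iter):
        slope, intercept = fit_line([xs[i] for i in keep], [ys[i] for i in keep])
        residuals = [(ys[i] - (slope * xs[i] + intercept)) ** 2 for i in range(n_total)]
        # Keep the n_keep points with the smallest squared residuals.
        order = sorted(range(n_total), key=lambda i: residuals[i])
        new_keep = sorted(order[:n_keep])
        if new_keep == keep:  # subset is stable, so the fit is stable too
            break
        keep = new_keep
    # Final refit so the returned parameters always match the returned subset.
    slope, intercept = fit_line([xs[i] for i in keep], [ys[i] for i in keep])
    return slope, intercept, keep


if __name__ == "__main__":
    rng = random.Random(1)
    # Synthetic, illustrative data only (not from the paper).
    clean_x = [rng.random() for _ in range(100)]
    clean_y = [0.8 * x + 0.1 + rng.gauss(0, 0.02) for x in clean_x]
    # Hand-placed poison points at the corners of the unit square.
    all_x = clean_x + [0.0] * 10 + [1.0] * 10
    all_y = clean_y + [1.0] * 10 + [0.0] * 10

    print("plain OLS slope:", fit_line(all_x, all_y)[0])
    print("trimmed slope:  ", trim_fit(all_x, all_y, n_keep=100, rng=rng)[0])
```

Unlike a one-shot outlier filter, the subset and the parameters are re-estimated together, so points that look like outliers only under the poisoned fit can re-enter the kept set in later iterations.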

Theoretical Analysis

The proposed defense algorithm, TRIM, comes with a formal convergence proof and robustness guarantees. It resists a wide range of poisoning attacks by training the model on the subset of points with the lowest residuals, which limits the influence of adversarial points.
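The subset-based training described above can be written as a joint optimization over the parameters and the kept index set. The notation is an assumption made for this summary: $N = n + p$ is the total number of training points, of which $n$ are legitimate and $p$ are poisoned, $I$ is the index set of kept points, and $\mathcal{D}^{I}$ is the corresponding subset of the data.

$$
\min_{\theta,\, I} \; \mathcal{L}\left(\mathcal{D}^{I}, \theta\right)
\quad \text{s.t.} \quad
I \subset \{1, \dots, N\}, \quad |I| = n
$$

Alternating between the two variables gives an iterative scheme: for fixed $I$, minimizing over $\theta$ is ordinary training; for fixed $\theta$, the best $I$ is the set of $n$ points with the smallest loss. Neither step can increase the objective, which is the intuition behind the convergence argument.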

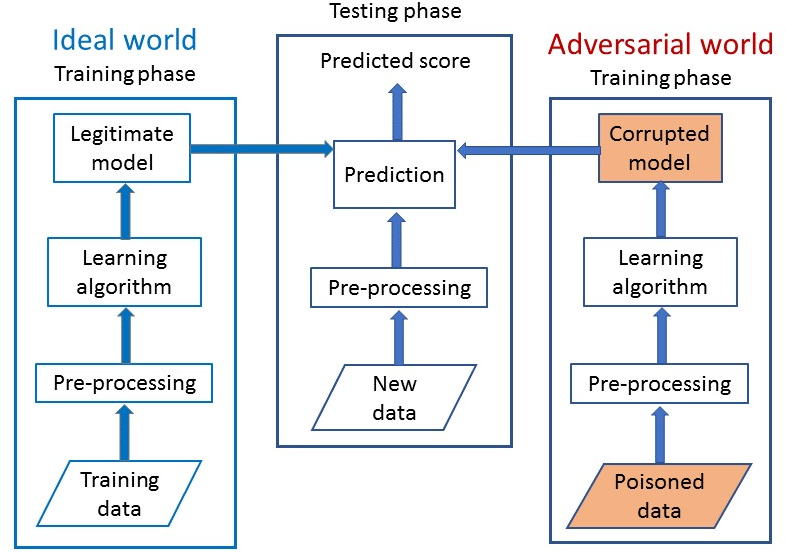

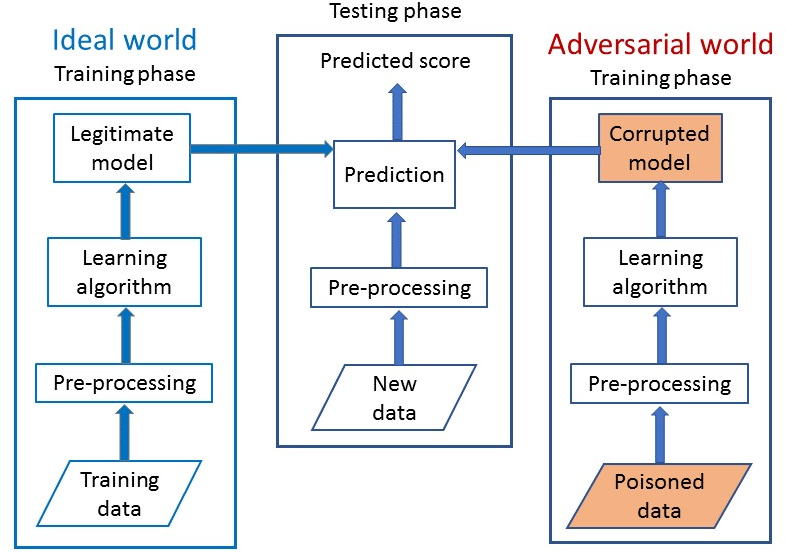

Figure 2: System architecture showcasing the learning process under poisoning attack scenarios and the deployment of the TRIM defense.

Empirical Results

Extensive experimentation revealed that the TRIM defense achieves a median MSE increase of only 6.1% for poisoned models compared to unpoisoned baselines, illustrating its effectiveness in preserving model performance across datasets. This marks a substantial improvement over conventional robust statistics and other adversarially resilient algorithms, like those proposed by Chen et al.
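For readers who want to reproduce this kind of summary statistic, the sketch below shows how a median relative MSE increase could be computed from per-experiment results. The numbers are hypothetical placeholders chosen for easy arithmetic; they are not results from the paper, and the function name `relative_increase` is an assumption.

```python
import statistics


def relative_increase(mse_clean, mse_defended):
    """Relative MSE increase of the defended model over the unpoisoned baseline."""
    return (mse_defended - mse_clean) / mse_clean


# Hypothetical (unpoisoned MSE, defended-under-attack MSE) pairs, one per experiment.
# Placeholder values only, NOT taken from the paper.
results = [(0.010, 0.011), (0.020, 0.021), (0.050, 0.056)]

increases = [relative_increase(clean, defended) for clean, defended in results]
median_pct = 100 * statistics.median(increases)
print(f"median MSE increase: {median_pct:.1f}%")
```

Reporting the median rather than the mean keeps a single badly affected model or dataset from dominating the summary.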

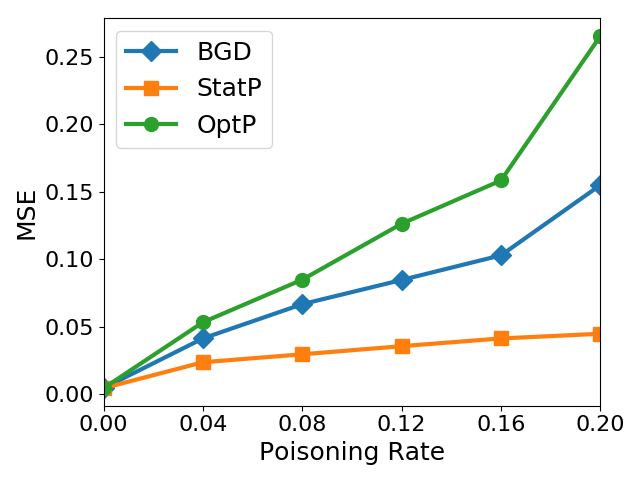

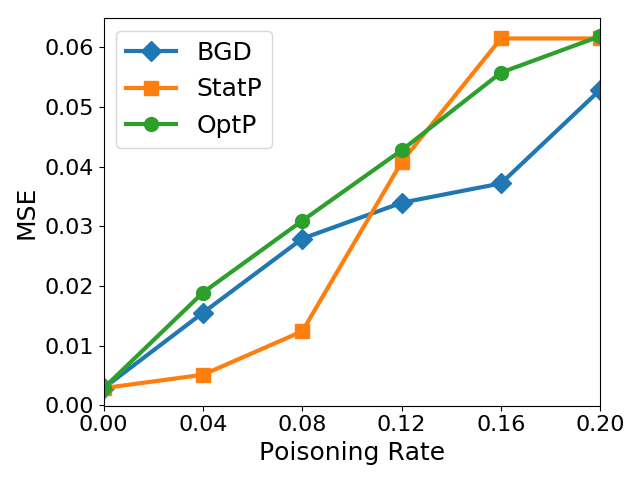

Figure 3: Health Care Dataset.

Conclusion

The study advances the understanding of poisoning attacks on regression models and of how to defend against them. By pairing new attack strategies with a robust defense, it lays a foundation for developing more secure machine learning models. Future research may extend these methods to other learning paradigms and examine their long-term impact on adaptive systems.