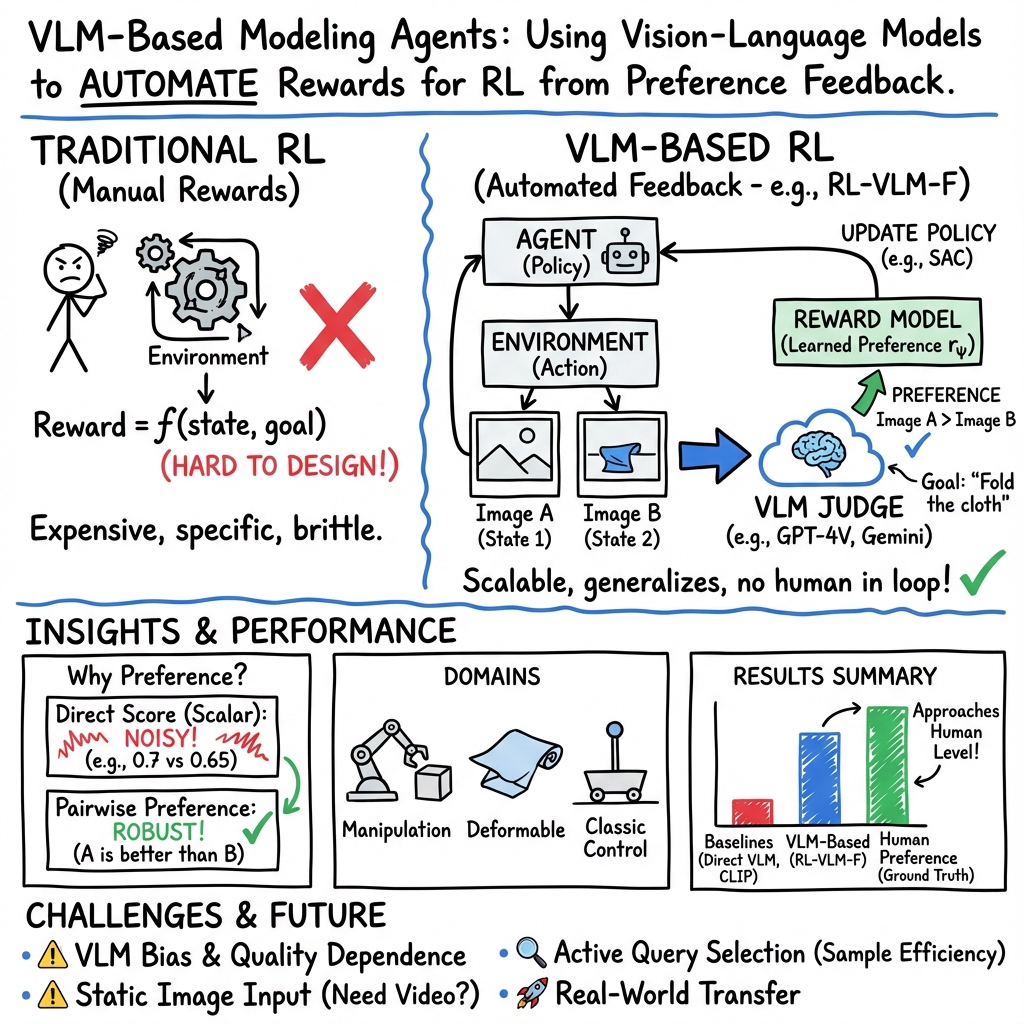

VLM-based Modeling Agents

- VLM-based modeling agents are interactive systems using large vision-language models to interpret visual data and natural language instructions.

- They automate reward generation through pairwise preference feedback, eliminating the need for manual reward engineering in reinforcement learning.

- These agents demonstrate robust performance in diverse tasks, from robotic control to deformable object manipulation, enabling scalable and generalizable learning.

A VLM-based modeling agent is an artificial agent that uses a large vision-language model (VLM) as a central component for perception, reasoning, and control within interactive environments. These agents use pretrained VLMs, often foundation models such as Gemini-Pro or GPT-4V, to convert visual observations and text instructions into actionable feedback or commands without requiring extensive human labeling, environment code access, or reward engineering. This architecture automates a variety of tasks, generalizes to novel settings, and mitigates many limitations of traditional reinforcement learning and imitation learning workflows.

1. Automated Reward Generation via VLM Feedback

A core advance of VLM-based modeling agents is the use of VLMs to supply preference-based feedback, which substitutes for human-provided rewards in reinforcement learning (RL). In this paradigm, exemplified by RL-VLM-F, the agent does not receive numerical rewards from the environment or hand-crafted specifications. Instead, the agent interactively collects image observations and queries a VLM with pairs of images together with a natural-language description of the task goal. For each pair, the VLM judges which image better satisfies the goal and returns a preference label chosen from $\{-1, 0, 1\}$: 0 if the first image is better, 1 if the second is better, and $-1$ if there is no discernible difference. These labels are then used to train a reward function, which is employed to optimize the agent’s policy.
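A single pairwise query can be sketched as follows. This is a minimal illustration, not the prompt or parser used by RL-VLM-F: the function names `build_preference_prompt` and `parse_preference`, the prompt wording, and the convention that the label is the last token of the reply are all assumptions made for the example. The actual VLM call is omitted because it depends on the provider.

```python
from typing import Optional


def build_preference_prompt(task_goal: str) -> str:
    """Text half of a pairwise query; the two images are attached separately.

    The wording is hypothetical and only illustrates the idea.
    """
    return (
        f"The goal is: {task_goal}\n"
        "You are shown two images of the environment.\n"
        "Reply 0 if the goal is better achieved in Image 1, "
        "1 if it is better achieved in Image 2, "
        "or -1 if there is no discernible difference."
    )


def parse_preference(reply: str) -> Optional[int]:
    """Map a free-text reply to a label in {-1, 0, 1}, or None if unparseable.

    Assumes (for this sketch) that the label is the last whitespace-separated
    token of the reply, possibly followed by punctuation.
    """
    tokens = reply.strip().split()
    if not tokens:
        return None
    last = tokens[-1].strip(".,:;!\"'()")
    return {"-1": -1, "0": 0, "1": 1}.get(last)
```

Returning `None` for unparseable replies lets the caller drop or retry a query instead of silently recording a wrong label.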

The core workflow is cyclical:

- Environment data is gathered by the agent.

- Pairs of sampled states and task descriptions are input to the VLM.

- The VLM returns preferences.

- Preference-based reward modeling is performed using a Bradley-Terry loss to infer a reward for each observation.

- Policy training (e.g., via Soft Actor-Critic) uses the learned reward function, driving environment exploration and improvement.

This system eliminates reward engineering and human-in-the-loop supervision, replacing them with scalable, automated feedback derived from high-capacity vision-language models.
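The cycle described above can be sketched as a skeleton in which every component is injected as a callable. The names `rollout`, `query_vlm`, `fit_reward`, and `update_policy` are hypothetical placeholders, observations are left as an opaque type, and dropping pairs labeled "no discernible difference" is an assumption of this sketch rather than a detail confirmed by the text.

```python
import random
from typing import Any, Callable, List, Tuple

Observation = Any  # stand-in for an image observation
Preference = Tuple[Observation, Observation, int]


def collect_preferences(
    observations: List[Observation],
    query_vlm: Callable[[Observation, Observation], int],
    n_pairs: int,
    rng: random.Random,
) -> List[Preference]:
    """Sample observation pairs and keep those with a decisive label (0 or 1)."""
    labeled = []
    for _ in range(n_pairs):
        first, second = rng.sample(observations, 2)
        label = query_vlm(first, second)
        if label in (0, 1):  # assumption: indecisive pairs (-1) are discarded
            labeled.append((first, second, label))
    return labeled


def training_loop(
    rollout: Callable[[], List[Observation]],
    query_vlm: Callable[[Observation, Observation], int],
    fit_reward: Callable[[List[Preference]], Callable[[Observation], float]],
    update_policy: Callable[[List[Observation], Callable[[Observation], float]], None],
    n_iterations: int,
    n_pairs: int,
    seed: int = 0,
) -> List[Preference]:
    """Alternate data collection, VLM labeling, reward fitting, and policy updates."""
    rng = random.Random(seed)
    buffer: List[Observation] = []
    preferences: List[Preference] = []
    for _ in range(n_iterations):
        buffer.extend(rollout())                      # 1. gather environment data
        preferences.extend(                           # 2-3. query the VLM on pairs
            collect_preferences(buffer, query_vlm, n_pairs, rng)
        )
        reward_fn = fit_reward(preferences)           # 4. preference-based reward model
        update_policy(buffer, reward_fn)              # 5. policy training (e.g., SAC)
    return preferences
```

Keeping the components as callables makes it possible to swap the VLM, the reward model, or the RL algorithm without touching the loop itself.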

2. Preference-Based Reward Modeling: Formalism and Implementation

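The agent’s reward function is identified using a standard pairwise comparison approach. Given a dataset $\mathcal{D} = \{(\sigma^0, \sigma^1, y)\}$, where $\sigma^0$, $\sigma^1$ are single images and $y$ is the VLM-given preference, the reward model is optimized via the following objective (see Equation 2):

$$\mathcal{L}_{\text{Reward}} = -\mathbb{E}_{(\sigma^0, \sigma^1, y) \sim \mathcal{D}} \Big[ \mathbb{I}\{y = (\sigma^0 \succ \sigma^1)\} \log P_\psi[\sigma^0 \succ \sigma^1] + \mathbb{I}\{y = (\sigma^1 \succ \sigma^0)\} \log P_\psi[\sigma^1 \succ \sigma^0] \Big]$$

with

$$P_\psi[\sigma^1 \succ \sigma^0] = \frac{\exp\big(r_\psi(\sigma^1)\big)}{\exp\big(r_\psi(\sigma^0)\big) + \exp\big(r_\psi(\sigma^1)\big)}$$

where $r_\psi$ is a parameterized neural network over image observations.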

This preference learning paradigm provides robustness to noisy or inconsistent VLM judgments, as it leverages comparative rather than absolute scoring.
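To make the Bradley-Terry objective concrete, the following toy sketch computes the pairwise negative log-likelihood and fits a one-parameter reward by gradient descent. It assumes scalar "observations" and a linear reward `r(s) = w * s` as a stand-in for the image-based neural network, and it assumes the label convention 0 = first item preferred, 1 = second item preferred. The function names are hypothetical.

```python
import math
from typing import Callable, List, Tuple

Pair = Tuple[float, float, int]  # (s0, s1, y) with y in {0, 1}


def bt_prob_second(r0: float, r1: float) -> float:
    """P[s1 preferred over s0] = exp(r1) / (exp(r0) + exp(r1)).

    Computed as a numerically stable sigmoid of (r1 - r0).
    """
    d = r1 - r0
    if d >= 0:
        return 1.0 / (1.0 + math.exp(-d))
    e = math.exp(d)
    return e / (1.0 + e)


def bt_loss(pairs: List[Pair], reward: Callable[[float], float]) -> float:
    """Mean negative log-likelihood of the preference labels."""
    total = 0.0
    for s0, s1, y in pairs:
        p1 = bt_prob_second(reward(s0), reward(s1))
        p = p1 if y == 1 else 1.0 - p1
        total -= math.log(max(p, 1e-12))  # clamp to avoid log(0)
    return total / len(pairs)


def fit_linear_reward(pairs: List[Pair], lr: float = 0.5, steps: int = 200) -> float:
    """Fit r(s) = w * s by gradient descent on the Bradley-Terry loss."""
    w = 0.0
    for _ in range(steps):
        grad = 0.0
        for s0, s1, y in pairs:
            p1 = bt_prob_second(w * s0, w * s1)
            grad += (p1 - y) * (s1 - s0)  # d(loss)/dw for one pair
        w -= lr * grad / len(pairs)
    return w


# Toy data in which the larger scalar is always preferred.
pairs = [(0.0, 1.0, 1), (2.0, 1.0, 0), (0.5, 3.0, 1)]
w = fit_linear_reward(pairs)
```

Because the loss depends only on the difference `r(s1) - r(s0)`, the learned reward is defined up to an additive constant, which is one reason comparative labels tolerate scorers whose absolute scale is unreliable.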

3. Empirical Domains and Practical Use Cases

The practical scope of VLM-based modeling agents extends across multiple RL domains:

- Classic Control: Tasks like CartPole, where the agent must balance a pole atop a cart using visual inputs.

- Robotic Manipulation: MetaWorld environments, including Sawyer-arm tasks such as Open Drawer (manipulating an articulated object), Soccer (pushing a ball to a target), and Sweep Into (moving an object into a slot).

- Deformable Object Manipulation: SoftGym environments including Fold Cloth, Straighten Rope, and Pass Water, which involve manipulating objects with nonrigid properties using only image observations.

The agent operates using only a natural language description of the goal (e.g., “straighten the rope as much as possible”) and visual input, providing generalization across a spectrum of task modalities.

4. Comparative Performance and Analysis

Extensive evaluation shows that VLM-based modeling agents, using RL-VLM-F, outperform prior approaches in several domains:

- Direct VLM Scoring Baselines: Methods that ask the VLM to assign a scalar score to a single image at each step, rather than to compare a pair of images, are consistently outperformed by preference-based learning, because the direct scores are noisier and poorly calibrated.

- Contrastive Methods (CLIP/BLIP-2): Methods using static, pretrained vision-language embeddings for reward or state scoring perform acceptably on basic control tasks but fail in complex or visually ambiguous scenarios such as robotic manipulation of deformable objects.

- Human-Baseline Comparison: RL-VLM-F policies approach, and sometimes surpass, the performance of policies trained using ground-truth human preference feedback, illustrating the practical sufficiency of pre-trained VLMs as automated “preference annotators.”

VLM preference accuracy, particularly in distinguishing large state differences, tracks with human annotator reliability, suggesting VLMs can substitute for humans in most but not all preference labeling contexts.

5. Limitations, Challenges, and Broader Implications

Several limitations and implications are observed:

- VLM Quality Dependence: The effectiveness of the agent is contingent on the VLM’s quality and generalization for the visual domain. Certain tasks (e.g., complex cloth manipulation) are sensitive to the choice of VLM, as differences in learning curves have been observed (e.g., GPT-4V vs. Gemini-Pro).

- Data Bias and Safety: Trained VLMs may encode dataset biases; thus, any downstream RL policy may also reflect these, raising considerations for deployment in safety-critical domains.

- Static Observation Assumption: RL-VLM-F, as reported, operates on single images per preference query. Tasks where reward requires trajectory-level or temporal reasoning may suffer from this limitation, as the model cannot fully capture dynamic context.

- Automation Benefits: The method eliminates costly manual reward design, lowers the barrier to specifying new RL tasks, and enables generalization to states and tasks that are visually and semantically novel.

6. Future Directions

Research trajectories flagged by this work include:

- Active Query Selection: Enabling the system to select maximally informative or uncertain preference queries may improve sample efficiency and reduce the number of VLM calls required.

- Real-World Application: Transferring success from simulated robotic environments to physical systems, where direct code/state access is unavailable, remains a priority.

- Bias Mitigation and Auditing: Developing tools to audit, quantify, and mitigate bias in VLM-based reward models, especially for high-risk settings.

- Richer Modality Integration: Extending feedback from images to full video or temporal sequences, potentially improving performance on tasks with substantial temporal dependencies.

- Integration with Next-Generation VLMs: Leveraging increasingly capable, general VLMs will likely further amplify the scalability and generalizability of this approach.
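As an illustration of the active query selection direction listed above, one heuristic used in preference-based RL more broadly is to rank candidate pairs by how much an ensemble of reward models disagrees about them, and to send only the most contested pairs to the VLM. The sketch below is an assumption about how this could look, not something the source reports implementing; `disagreement` and `select_queries` are hypothetical names, and scalar observations stand in for images.

```python
import math
from typing import Callable, List, Sequence, Tuple

RewardFn = Callable[[float], float]
Candidate = Tuple[float, float]  # an unlabeled pair (s0, s1)


def _sigmoid(d: float) -> float:
    """Numerically stable logistic function."""
    if d >= 0:
        return 1.0 / (1.0 + math.exp(-d))
    e = math.exp(d)
    return e / (1.0 + e)


def disagreement(pair: Candidate, ensemble: Sequence[RewardFn]) -> float:
    """Variance across ensemble members of P[s1 preferred over s0]."""
    s0, s1 = pair
    probs = [_sigmoid(r(s1) - r(s0)) for r in ensemble]
    mean = sum(probs) / len(probs)
    return sum((p - mean) ** 2 for p in probs) / len(probs)


def select_queries(
    candidates: List[Candidate], ensemble: Sequence[RewardFn], k: int
) -> List[Candidate]:
    """Return the k candidate pairs on which the ensemble disagrees most."""
    ranked = sorted(
        candidates, key=lambda pair: disagreement(pair, ensemble), reverse=True
    )
    return ranked[:k]


# Three toy reward models that disagree about the direction of improvement.
ensemble = [lambda s: s, lambda s: -s, lambda s: 0.0]
chosen = select_queries([(0.0, 0.1), (0.0, 5.0)], ensemble, k=1)
```

Under this heuristic, pairs on which all reward models already agree are skipped, which is how the number of VLM calls could be reduced.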

7. Summary Table

| Aspect | RL-VLM-F Contribution | Notes |
|---|---|---|
| Reward Source | VLM pairwise preferences | No manual reward engineering |
| Task Domains | Control, rigid/articulated/deformable manipulation | Generalizes across standard RL suites |
| Supervision Requirement | None (no human annotation) | Only text description and camera images |
| Performance | Outperforms direct VLM, CLIP/BLIP-2, RoboCLIP baselines | Sometimes matches/surpasses ground-truth preference RL |
| Limitations | Static image queries, VLM bias, VLM gating | Temporal tasks may require further extension |

VLM-based modeling agents represent an influential trend in interactive AI, replacing manual reward function design and human annotation with large-scale, automated vision-language feedback. This approach enables scalable, generalizable, and efficient agent learning in visually complex and unstructured domains, though careful consideration of model bias, temporal understanding, and real-world constraints remains important for broader deployment.