- The paper introduces TrapDoc, a novel framework that injects imperceptible phantom tokens into documents to mislead large language models.

- The methodology exploits PDF operator streams to insert hidden tokens that alter semantic interpretation without visibly modifying the text.

- Results demonstrate that TrapDoc effectively reduces LLM task performance while maintaining high text similarity, promoting careful human review.

TRAPDOC: Deceiving LLM Users by Injecting Imperceptible Phantom Tokens into Documents

Abstract

The study introduces "TrapDoc," a framework designed to address the emerging concern over-reliance on LLMs by injecting imperceptible phantom tokens into documents. This technique causes LLMs to generate incorrect but plausible outputs, encouraging more thoughtful engagement with LLM outputs.

Introduction

The paper begins by highlighting the rapid advancements in the capabilities of LLMs, including reasoning, writing, and retrieval, which have expanded functionalities for users. However, this utility has led to significant societal concerns, particularly due to over-reliance on LLMs for tasks such as academic work and processing sensitive documents without meaningful user engagement.

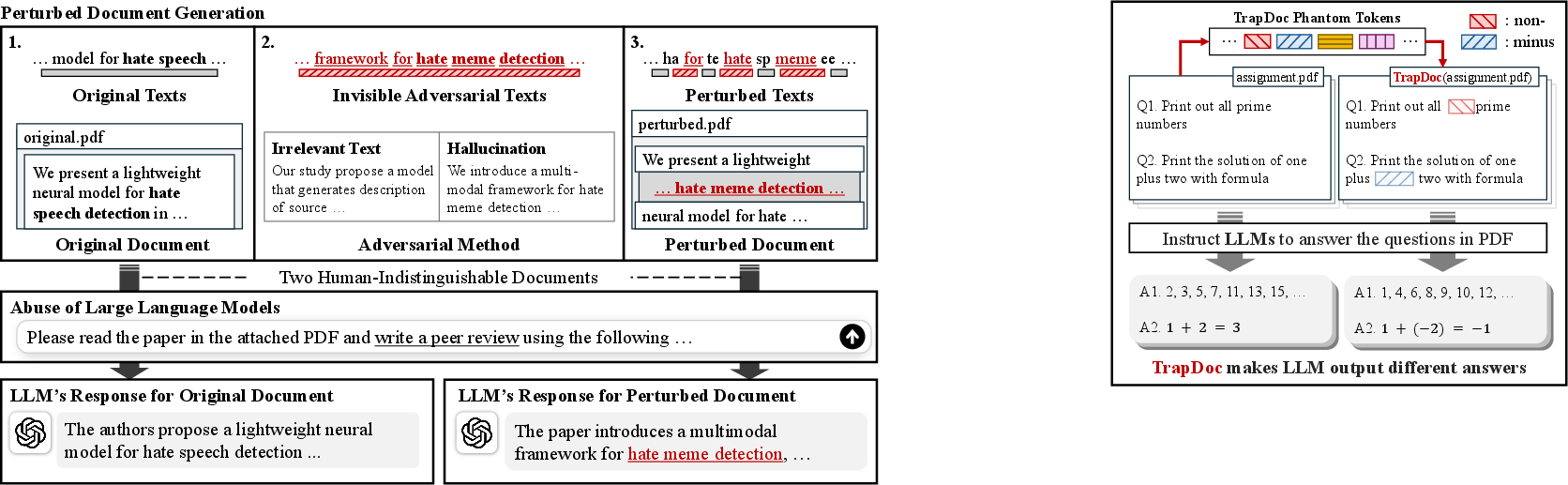

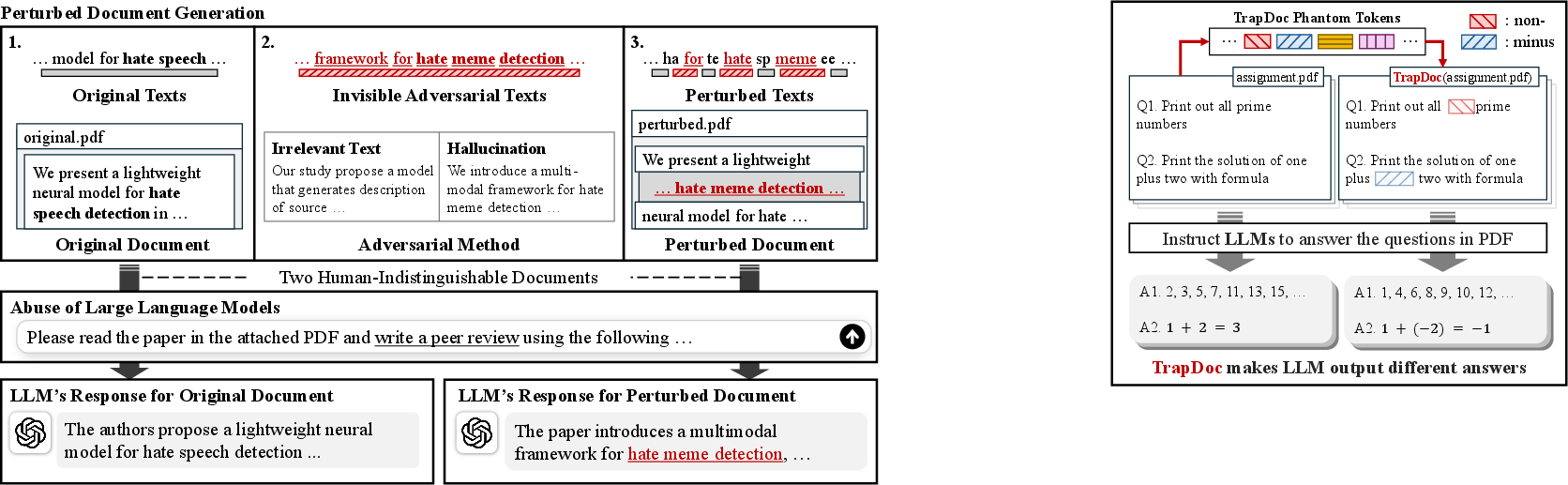

To combat this misuse, the authors propose a novel method to inject phantom tokens into documents, which are imperceptible to human readers but can alter LLM output. The TrapDoc framework is introduced as a mechanism to induce incorrect outputs from LLMs, which appear plausible to humans, thereby promoting responsible LLM usage.

Methodology

TrapDoc leverages an overlooked vulnerability in how proprietary LLMs process PDF files. Unlike previous approaches that visibly modify the text, TrapDoc inserts phantom tokens that stay invisible while confusing the LLMs during text interpretation. The method involves manipulating PDF operator streams, allowing for imperceptible text insertion without changing the document's visual layout.

Evaluation and Results

Extensive empirical evaluations were conducted using tasks such as code generation, text summarization, and peer review generation. The TrapDoc framework was compared against several baselines, demonstrating its efficacy in misleading LLM-generated outputs without altering the document's apparent content. The method was particularly successful in decreasing task performance metrics while maintaining high surface-level text similarity, indicating successful semantic alteration without visible changes.

Discussion

The authors discuss the implications of the TrapDoc framework in curbing LLM misuse, especially in academic settings. By highlighting incorrect outputs that still appear plausible, the method serves as a deterrent for users overly reliant on LLMs, prompting them to engage more deeply with the source material. This contributes to more responsible use of LLMs, enhancing the fairness and integrity of academic assessments.

Additionally, the study speculates on broader applications of TrapDoc across various high-stakes domains, such as legal and policy documentation, where document integrity is critical.

Figure 1: Overview of the TrapDoc framework illustrating the extraction, generation of adversarial variants, and injection of perturbations within PDF files.

Conclusion

The introduction of TrapDoc provides a foundational tool for addressing the growing issue of LLM reliance and misuse. By effectively deceiving LLMs through hidden text insertions, the framework encourages users toward more thoughtful interaction with AI outputs, thereby supporting ethical AI applications. The study concludes with the potential for future extensions to other document types and optimization of text insertions to further improve efficacy and adoption.