- The paper introduces a neuro-symbolic framework that integrates a Neural Knowledge Base to represent entity states for enhanced Theory-of-Mind reasoning.

- The paper employs an iterative masking mechanism to enable efficient perspective-taking and scalable multi-hop reasoning by reducing the complexity of unobservable events.

- The paper demonstrates up to a 16% improvement in higher-order ToM accuracy over baselines, highlighting its practical benefits for complex social inference tasks.

EnigmaToM: Enhance LLMs' Theory-of-Mind with Entity-Based Knowledge

Introduction

The paper "EnigmaToM: Improve LLMs' Theory-of-Mind Reasoning Capabilities with Neural Knowledge Base of Entity States" addresses the limitations of LLMs in performing Theory-of-Mind (ToM) reasoning, particularly in higher-order scenarios that require multi-hop reasoning. The approach integrates a neuro-symbolic framework leveraging a Neural Knowledge Base (NKB) for improved ToM capabilities.

Framework Overview

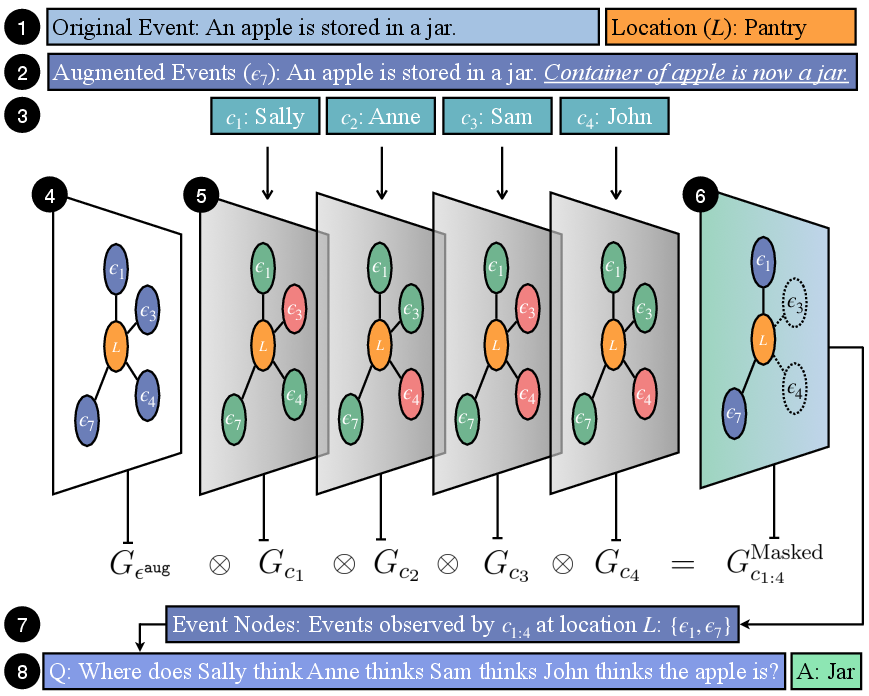

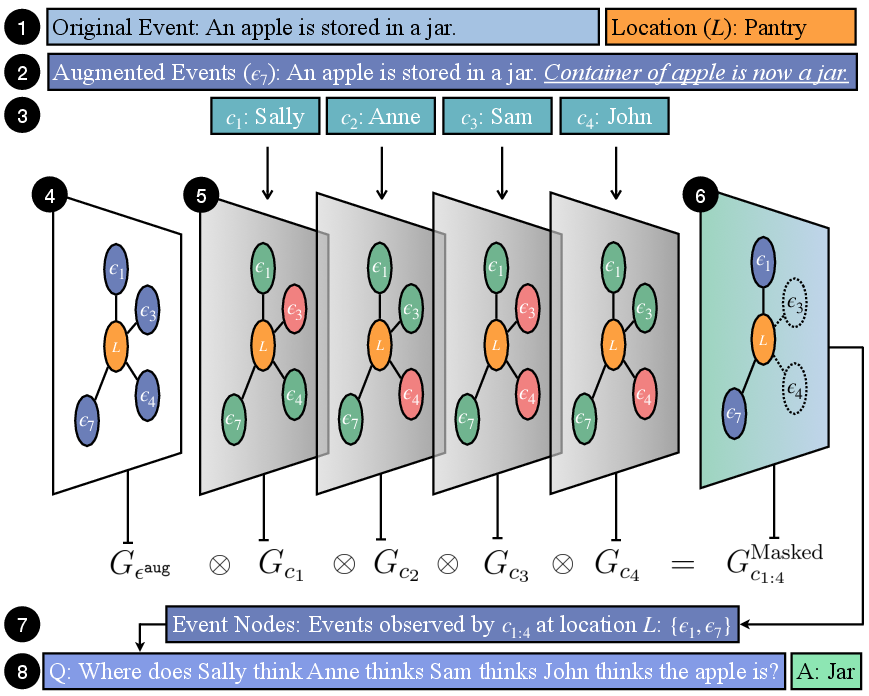

EnigmaToM advances ToM reasoning via a neuro-symbolic framework that includes:

- Neural Knowledge Base (NKB): A repository of structured representations of entity states, essential for constructing spatial scene graphs for belief tracking.

- Iterative Masking Mechanism: This psychology-inspired technique facilitates efficient perspective-taking by masking unobservable events specific to a given character's viewpoint.

- Knowledge Injection: Enriches events with fine-grained entity state details, essential for accurate ToM inference.

This multi-layered approach allows LLMs to offload complex reasoning to a symbolic component, improving efficiency and scalability in ToM tasks.

Figure 1: Example use case of the framework in fourth-order ToM reasoning.

Implementation Details

Neural Knowledge Base

The NKB is implemented as a language model trained in a sequence-to-sequence fashion on the OpenPI2.0 dataset, which provides human-annotated entity state changes. The NKB generates the entity-state information needed to construct spatial scene graphs and to reason about events.
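To make the link between entity states and scene graphs concrete, the following is a minimal sketch, not the paper's implementation. It assumes the NKB's output has already been parsed into simple records giving an entity's location after each event. The `EntityState` record, its fields, and `build_scene_graph` are hypothetical names chosen for illustration, and they do not reflect the OpenPI2.0 schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EntityState:
    """Hypothetical parsed NKB output: where an entity is after an event."""
    event_index: int  # position of the event in the story
    entity: str       # e.g. "Alice" or "apple"
    location: str     # location of the entity after the event


def build_scene_graph(states):
    """Return {event_index: {location: set of entities there}}.

    Each snapshot is cumulative: an entity stays where it was last seen
    until a later state record moves it.
    """
    current = {}  # entity -> latest known location
    graph = {}
    for state in sorted(states, key=lambda s: s.event_index):
        current[state.entity] = state.location
        snapshot = {}
        for entity, location in current.items():
            snapshot.setdefault(location, set()).add(entity)
        graph[state.event_index] = snapshot
    return graph


states = [
    EntityState(0, "Alice", "kitchen"),
    EntityState(1, "Bob", "kitchen"),
    EntityState(2, "Bob", "garden"),
]
graph = build_scene_graph(states)
```

In this toy story, the snapshot for event 1 places Alice and Bob together in the kitchen, while the snapshot for event 2 separates them. Co-location information of this kind is what a belief tracker would need in order to decide who could observe which event.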

Perspective-Taking Mechanism

Perspective-taking is carried out by constructing both omniscient and character-centric spatial scene graphs. For each character in the belief chain, the framework iteratively masks the events that character could not have observed. This reduces the LLM's reasoning burden, because it only has to consider the events that remain observable from the relevant perspective.
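The iterative masking idea can be sketched in a few lines. This is a simplified illustration under stated assumptions rather than the authors' algorithm: it assumes each event already carries the set of characters present when it happens (information a scene graph could supply), and it treats "present" as equivalent to "observed". The names `Event`, `mask_events`, and `perspective` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    text: str
    location: str
    present: frozenset  # assumed: characters at `location` during the event


def mask_events(events, character):
    """Keep only the events that `character` was present for."""
    return [e for e in events if character in e.present]


def perspective(events, chain):
    """Apply one masking pass per character in the belief chain.

    chain = ["Alice", "Bob"] approximates "what Alice thinks Bob knows":
    first drop events Alice missed, then drop events Bob missed.
    """
    visible = list(events)
    for character in chain:
        visible = mask_events(visible, character)
    return visible


story = [
    Event("Alice puts the apple in the basket.", "kitchen",
          frozenset({"Alice", "Bob"})),
    Event("Bob leaves the kitchen.", "kitchen",
          frozenset({"Alice", "Bob"})),
    Event("Alice moves the apple to the fridge.", "kitchen",
          frozenset({"Alice"})),
]

bob_view = perspective(story, ["Bob"])
alice_on_bob = perspective(story, ["Alice", "Bob"])
```

Here `bob_view` omits the final move, so a model reading only those events would place the apple in the basket from Bob's point of view. A higher-order question adds one more masking pass per character in the chain, and the LLM is then asked an ordinary question about the reduced story.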

Efficiency Considerations

The design of the EnigmaToM framework ensures scalability with respect to both the number of entities and the order of ToM reasoning. The masking mechanism allows the computational complexity to scale linearly with the number of entities, independent of the required depth of reasoning.

Experimental Results

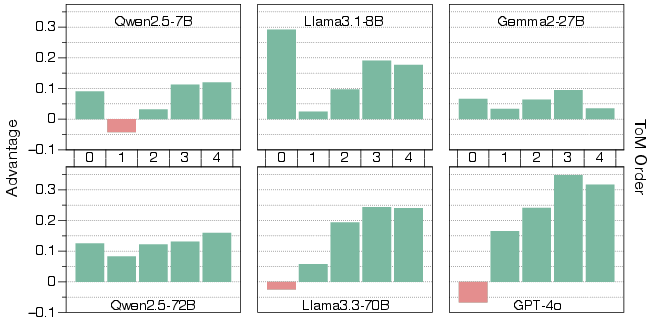

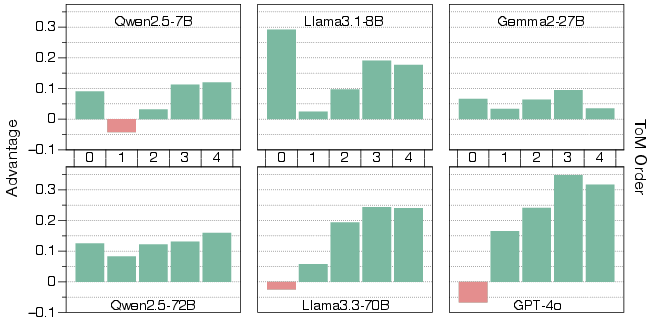

EnigmaToM was tested on various datasets, revealing substantial improvements over baseline methods across all ToM reasoning orders, especially in scenarios requiring higher-order reasoning (up to the fourth order).

Figure 2: Relative advantage of EnigmaToM on the HiToM dataset with respect to ToM order.

- Higher-Order Reasoning: Demonstrated increased advantages in more complex ToM tasks, with up to 16% improvement in third-order reasoning accuracy.

- Baseline Comparison: Achieved superior results compared to existing methods such as SimulatedToM and TimeToM, particularly with smaller model architectures.

Analysis

Ablation Study

Removing the iterative masking mechanism resulted in significant performance drops, emphasizing the necessity of perspective-taking. Knowledge injection, by contrast, had a varied impact: it mattered substantially on dialogue-based datasets (e.g., FANToM) but had less influence on event-based datasets.

Model Scaling Impact

Further analysis showed that the size of the base LLM affects how much EnigmaToM helps: with larger models, the benefits diminish on ToMi but grow on FANToM. This underscores the role of compressing entity state knowledge in narrative interpretation.

Conclusion

EnigmaToM effectively enhances LLMs' ToM reasoning by combining neural representations and symbolic reasoning techniques. Its efficiency and scalability make it a promising approach for deploying AI systems requiring advanced social intelligence capabilities.

Future Scope

Further research could explore non-perceptual ToM reasoning by expanding the NKB's scope to encompass emotional and causal state information. Additionally, better control of error propagation within the masking mechanism could improve robustness.