- The paper introduces Interleaved Gibbs Diffusion, which interleaves discrete and continuous denoising in a Gibbs sampling framework to model constrained, mixed data without needing distribution factorization.

- It adds state-space doubling for conditional generation and the ReDeNoise algorithm, which refines samples at inference time at the cost of extra compute, and applies both in complex domains like layout and molecule generation.

- Empirical evaluations demonstrate a 7% improvement in 3-SAT task performance along with robust results in layout and molecular generation, underscoring its state-of-the-art capability.

"Interleaved Gibbs Diffusion: Generating Discrete-Continuous Data with Implicit Constraints" (2502.13450)

Introduction

The paper presents Interleaved Gibbs Diffusion (IGD), a generative modeling framework for mixed continuous-discrete data in constrained generation tasks. Traditional diffusion models handle discrete and continuous settings well, but they rely on factorized denoising distributions, which limits their ability to model strong dependencies among random variables under constraints. IGD interleaves continuous and discrete denoising algorithms through a Gibbs sampling-like Markov chain and therefore does not require the denoising distribution to factorize. The framework accommodates a range of denoisers, supports conditional generation via state-space doubling, and supports inference-time refinement through the ReDeNoise method, which trades additional compute for accuracy. Empirical results on 3-SAT, molecule generation, and layout generation indicate state-of-the-art performance, including a 7% improvement on 3-SAT over established models.

Model Framework

Interleaved Gibbs Diffusion

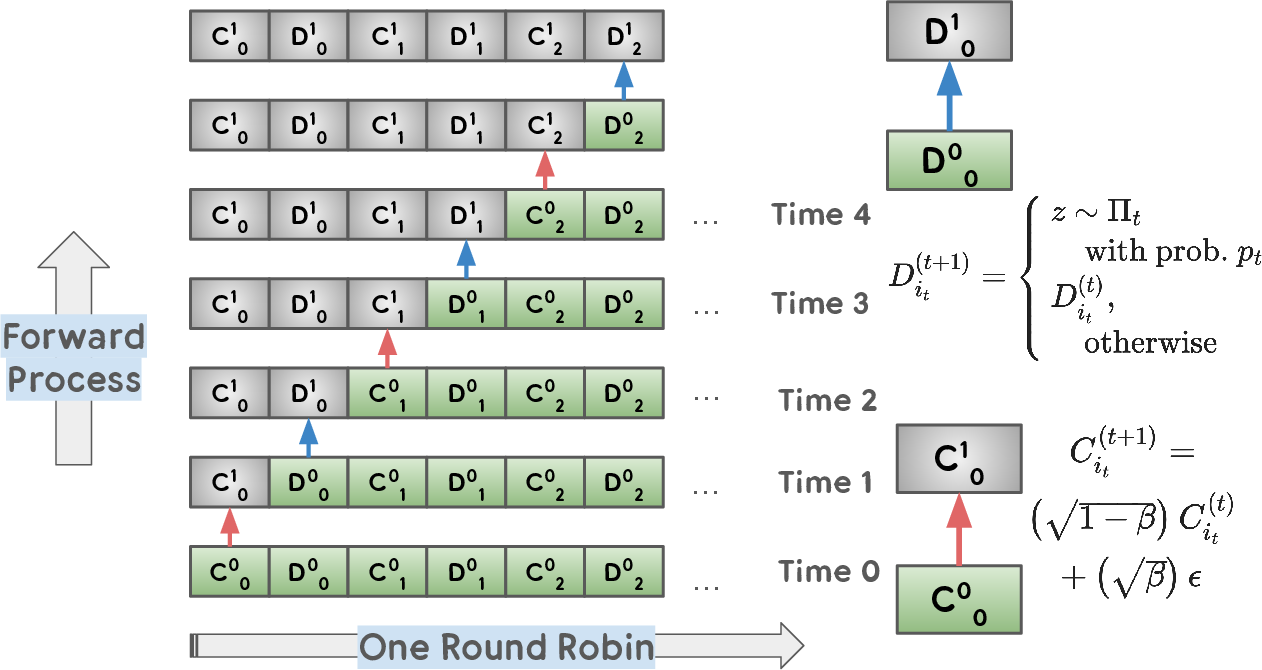

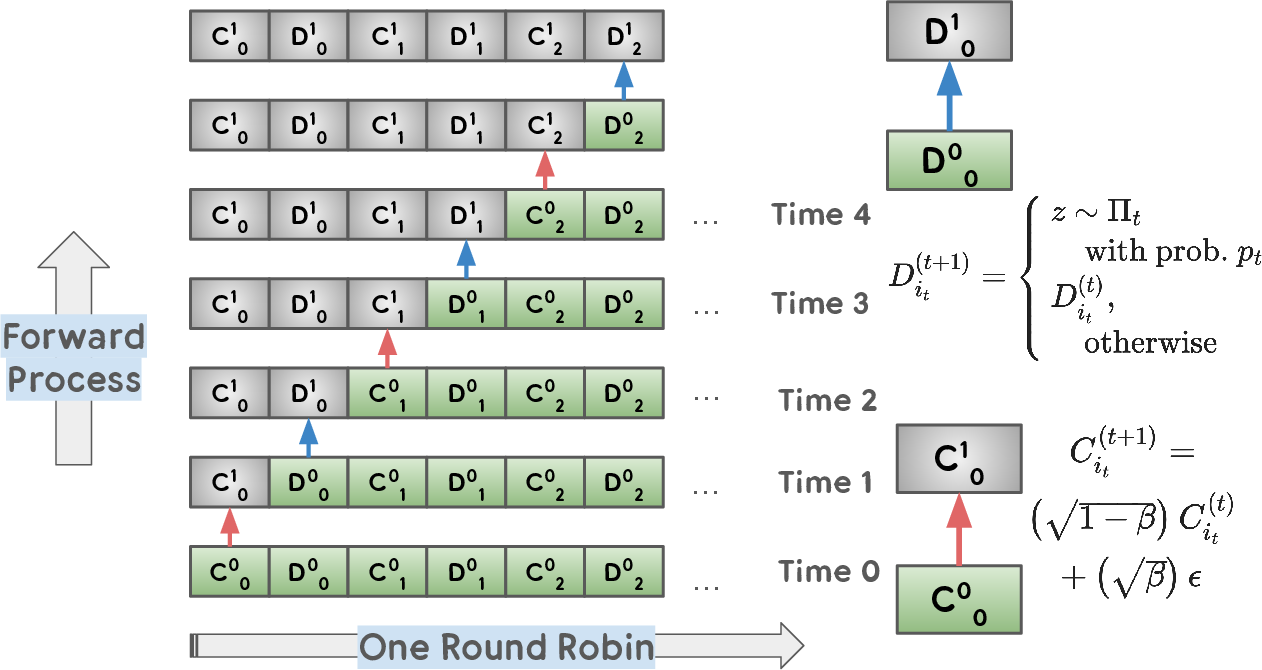

IGD employs a novel strategy for constrained generative tasks by interleaving discrete and continuous denoising processes. It uses a Gibbs sampling-inspired approach to denoise mixed sequences of continuous vectors and discrete tokens one element at a time, providing flexibility in choosing denoisers without requiring the factorization of the denoising process.
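
To make the one-element-at-a-time idea concrete, the following is a minimal sketch of a single Gibbs-style sweep over a mixed sequence. It is not the paper's implementation: `gibbs_denoise_sweep`, `toy_discrete`, and `toy_continuous` are hypothetical names, and the two toy functions are hand-written stand-ins for trained denoisers. The point it illustrates is that each update sees the latest values of every other element, so no factorized joint update is needed.

```python
import random

def gibbs_denoise_sweep(seq, kinds, denoise_discrete, denoise_continuous, rng):
    """One sweep: update each element in turn, conditioned on all the others.

    seq   : list mixing discrete tokens (int) and continuous values (float)
    kinds : list of "d" / "c" flags, one per position
    """
    seq = list(seq)
    for i, kind in enumerate(kinds):
        if kind == "d":
            seq[i] = denoise_discrete(seq, i, rng)
        else:
            seq[i] = denoise_continuous(seq, i, rng)
    return seq

def toy_discrete(seq, i, rng):
    # Hypothetical stand-in for a trained discrete denoiser:
    # the token records the sign of the sum of the continuous elements.
    return 1 if sum(seq[1:]) >= 0 else 0

def toy_continuous(seq, i, rng):
    # Hypothetical stand-in for a trained continuous denoiser:
    # move halfway toward a target that depends on the discrete token.
    target = 1.0 if seq[0] == 1 else -1.0
    return 0.5 * (seq[i] + target)

rng = random.Random(0)
out = gibbs_denoise_sweep([0, 0.8, 0.4], ["d", "c", "c"],
                          toy_discrete, toy_continuous, rng)
```

In this toy run the discrete token is updated first, and both continuous updates then condition on its new value, which is the dependency a factorized denoiser would miss.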

- Forward Noising Process: Noise is applied one position at a time, with discrete or continuous noise chosen independently for each position. Discrete noising follows a noise schedule defined over a token space extended with additional tokens, while continuous noising follows a monotonically increasing noise schedule. Because positions are noised independently, the noised state at any step can be sampled directly rather than by simulating every earlier step, which makes training efficient.

- Reverse Denoising Process: The reverse process relies on trained discrete and continuous denoisers that are not required to factorize across elements; when they are trained accurately, the reverse process exactly inverts the forward process. Continuous denoising relies on an adaptation of Tweedie's formula that restores continuous elements even though the elements they are conditioned on keep changing.
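
The forward process described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the paper's exact scheme: it assumes an absorbing extra token (the hypothetical `MASK`) for discrete noising and standard Gaussian steps for continuous noising, and all function names are made up for this example. `direct_sample` shows why no sequential simulation is needed: the marginal after all steps has a closed form.

```python
import math
import random

MASK = -1  # hypothetical id for the extra "noise" token (an assumption)

def noise_discrete(token, flip_prob, rng):
    """Replace token with MASK with probability flip_prob; MASK stays MASK."""
    if token == MASK:
        return MASK
    return MASK if rng.random() < flip_prob else token

def noise_continuous(vec, beta, rng):
    """One Gaussian step: x -> sqrt(1 - beta) * x + sqrt(beta) * eps."""
    return [math.sqrt(1.0 - beta) * x + math.sqrt(beta) * rng.gauss(0.0, 1.0)
            for x in vec]

def interleaved_forward(tokens, vectors, flip_probs, betas, rng):
    """Run the chain step by step, noising one element at a time."""
    tokens = list(tokens)
    vectors = [list(v) for v in vectors]
    for flip_prob, beta in zip(flip_probs, betas):
        for i in range(len(tokens)):       # discrete positions, one at a time
            tokens[i] = noise_discrete(tokens[i], flip_prob, rng)
        for j in range(len(vectors)):      # continuous positions, one at a time
            vectors[j] = noise_continuous(vectors[j], beta, rng)
    return tokens, vectors

def direct_sample(tokens, vectors, flip_probs, betas, rng):
    """Sample the same per-element marginals in one shot, without the loop."""
    keep = math.prod(1.0 - p for p in flip_probs)   # P(token never masked)
    alpha_bar = math.prod(1.0 - b for b in betas)   # cumulative signal scale
    noised_tokens = [t if rng.random() < keep else MASK for t in tokens]
    noised_vectors = [
        [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * rng.gauss(0.0, 1.0)
         for x in v]
        for v in vectors
    ]
    return noised_tokens, noised_vectors

rng = random.Random(0)
step_tokens, step_vectors = interleaved_forward(
    [3, 1, 4], [[0.5, -0.5]], flip_probs=[0.2, 0.4], betas=[0.1, 0.3], rng=rng)
shot_tokens, shot_vectors = direct_sample(
    [3, 1, 4], [[0.5, -0.5]], flip_probs=[0.2, 0.4], betas=[0.1, 0.3], rng=rng)
```

The two functions draw from the same per-element distributions; the one-shot version is what a training loop would typically use to produce a noised input at a randomly chosen step.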

Enhancements in the Framework

ReDeNoise Algorithm: ReDeNoise is an additional inference-time strategy that runs iterative noise-denoise cycles, improving generation accuracy at the cost of additional compute.
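
A minimal sketch of a noise-denoise cycle is shown below. The number of cycles, the noise level, and the way the paper schedules them are not given in this summary, so `num_cycles`, `noise_level`, `toy_noise`, and `toy_denoise` are all assumptions introduced for illustration; the toy denoiser simply pulls a scalar halfway toward a fixed target.

```python
import random

def redenoise(sample, noise_fn, denoise_fn, num_cycles, noise_level, rng):
    """Repeat (partial re-noise -> denoise) cycles on an existing sample."""
    for _ in range(num_cycles):
        noisy = noise_fn(sample, noise_level, rng)
        sample = denoise_fn(noisy, noise_level, rng)
    return sample

def toy_noise(x, level, rng):
    # Hypothetical noising step: add Gaussian noise with std `level`.
    return x + level * rng.gauss(0.0, 1.0)

def toy_denoise(x, level, rng):
    # Hypothetical stand-in for a trained denoiser: move halfway to 2.0.
    return x + 0.5 * (2.0 - x)

rng = random.Random(0)
refined = redenoise(0.0, toy_noise, toy_denoise,
                    num_cycles=3, noise_level=0.0, rng=rng)
```

Each cycle costs one extra noising and denoising pass, which is the compute-for-accuracy trade-off described above.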

Conditional Generation: For conditional generation tasks, the framework adapts state-space doubling, a strategy inspired by the Diffusion Layout Transformer (DLT), which builds the conditioning constraints directly into training.
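
One plausible reading of state-space doubling for discrete tokens is sketched below; the summary does not spell out the construction, so this is an assumption rather than the paper's definition. Each token gets a second copy in a doubled vocabulary that marks it as a given condition, and noising skips those copies. The names `encode`, `decode`, `noise_free_positions`, and `MASK` are hypothetical.

```python
import random

MASK = -1  # hypothetical id for the noise token (an assumption)

def encode(token, is_condition, vocab_size):
    """Map (token, flag) into a doubled vocabulary of size 2 * vocab_size."""
    return token + vocab_size if is_condition else token

def decode(state, vocab_size):
    """Recover (token, is_condition) from a doubled-vocabulary state."""
    return state % vocab_size, state >= vocab_size

def noise_free_positions(states, vocab_size, flip_prob, rng):
    """Noise only unconditioned states; conditioned ones pass through."""
    out = []
    for s in states:
        if s == MASK:
            out.append(MASK)            # already noised
            continue
        _, is_condition = decode(s, vocab_size)
        if not is_condition and rng.random() < flip_prob:
            out.append(MASK)
        else:
            out.append(s)
    return out

vocab_size = 10
states = [encode(3, True, vocab_size), encode(7, False, vocab_size)]
noised = noise_free_positions(states, vocab_size, flip_prob=1.0,
                              rng=random.Random(0))
```

Because the flag lives in the token itself, the same network can see during training which elements are fixed conditions and which are to be generated.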

Experimental Validation

The IGD framework was evaluated across three key domains:

- Layout Generation: IGD generates coherent layouts that satisfy complex constraints, outperforming leading baseline models on datasets like PubLayNet and RICO. Key metrics included Fréchet Inception Distance (FID) and Maximum Intersection over Union (mIoU).

- Molecule Generation: On the 3D molecular structures in the QM9 dataset, IGD generated molecules with high atom stability and molecular validity without relying on specialized equivariant architectures.

- Boolean Satisfiability (3-SAT): IGD improved on benchmark results for solving random 3-SAT instances, and its performance scaled as the complexity of the variable assignments increased.
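
For the 3-SAT domain, the implicit constraint is easy to state in code. The sketch below checks whether a generated assignment satisfies a formula; it illustrates the constraint itself, not the paper's evaluation protocol, which this summary does not describe. Clauses use the common signed-integer convention (`2` means x2, `-2` means NOT x2), and the function names are hypothetical.

```python
def clause_satisfied(clause, assignment):
    """A clause holds if at least one of its literals is true."""
    return any(assignment[abs(lit)] == (lit > 0) for lit in clause)

def satisfied_fraction(clauses, assignment):
    """Fraction of clauses the assignment satisfies."""
    return sum(clause_satisfied(c, assignment) for c in clauses) / len(clauses)

def is_satisfying(clauses, assignment):
    """True only if every clause is satisfied."""
    return all(clause_satisfied(c, assignment) for c in clauses)

# (x1 OR NOT x2 OR x3) AND (NOT x1 OR x2 OR x3)
clauses = [(1, -2, 3), (-1, 2, 3)]
partial = {1: True, 2: False, 3: False}   # violates the second clause
full = {1: True, 2: False, 3: True}       # satisfies both clauses
```

A single flipped variable can turn a satisfying assignment into a violating one, which is why this task stresses a model's ability to capture dependencies between elements.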

Figure 1: Interleaved Noising Process: Sequential noising of discrete tokens (D_s) and continuous vectors (C_s). Noising occurs one element at a time, keeping other elements unchanged.

Conclusion

The Interleaved Gibbs Diffusion framework represents a significant advancement in generative modeling for discrete-continuous domains subject to implicit constraints. By sidestepping the need for denoising factorizability, IGD offers a robust approach to tackling complex, constrained generation tasks with empirical validations affirming its efficacy across diverse applications. Future work may explore optimizing sampler choices and integrating more specialized architectures and loss functions tailored to niche applications in layout and molecular generation.