- The paper reports that perceiving chatbots as humanlike correlates with greater self-reported social health benefits and self-esteem among users.

- It uses an online survey with 70 chatbot users and 120 non-users and employs regression analysis to assess links between human likeness, consciousness, and social outcomes.

- Findings challenge the view that companion chatbots harm social interactions, suggesting they can alleviate isolation and support vulnerable individuals.

Impact of Companion Chatbots on Social Health and Human Likeness Perception

The paper "Chatbots as Social Companions: How People Perceive Consciousness, Human Likeness, and Social Health Benefits in Machines" (2311.10599) studies how interacting with companion chatbots relates to social health and to perceptions of human likeness and consciousness. It examines the common assumption that companion chatbots harm human relationships, challenging the prevailing view that such relationships detract from human-to-human connection and lead to social isolation.

Introduction

The spread of chatbots as social companions has provoked extensive debate about their influence on human relationships and social well-being. Given the widespread use of systems like Replika, the study set out to contrast the experiences of companion chatbot users with non-users’ perceptions, particularly in relation to social health outcomes. Its hypotheses were grounded in theory of mind and past research on human-computer interaction, and its goal was to assess whether perceiving chatbots as humanlike and conscious is associated with social benefits, contrary to common concerns that AI threatens human interaction.

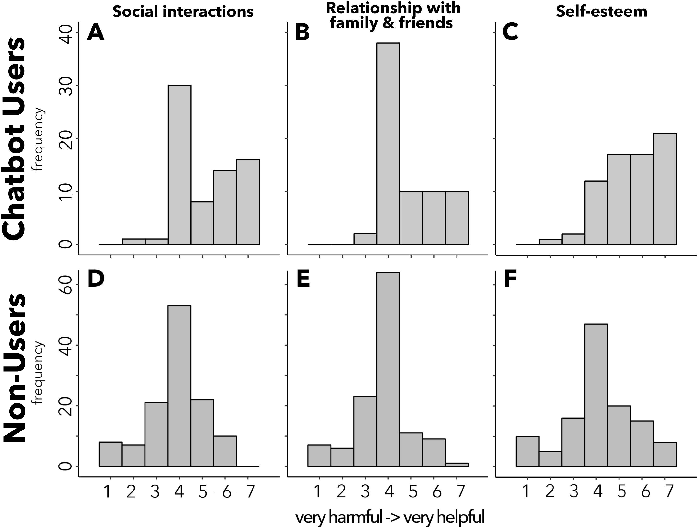

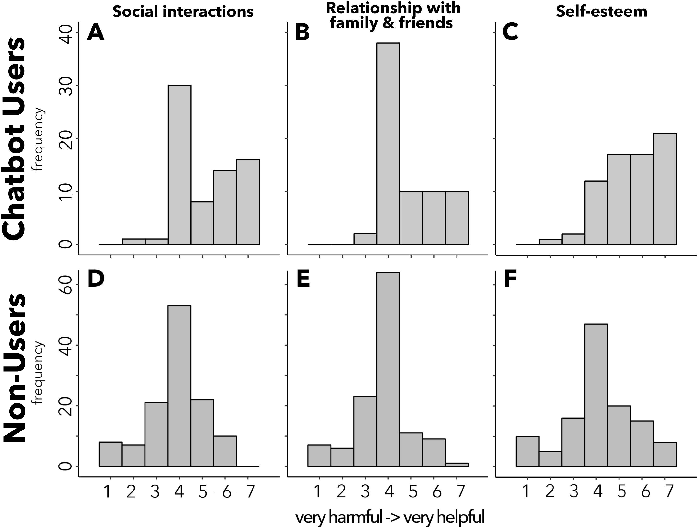

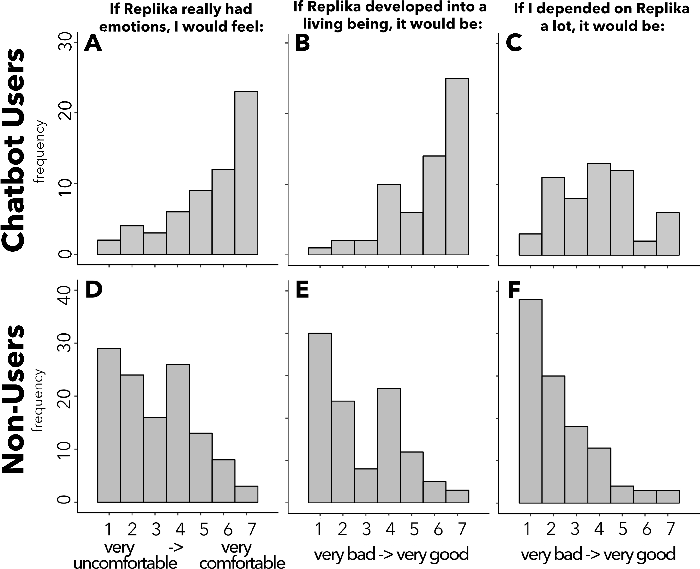

Figure 1: Distributions of responses to questions pertaining to social health measures for companion chatbot users and non-users. The x-axis shows the Likert-scale response options from 1-7 (“Very harmful” to “Very helpful”), and the y-axis shows the frequency of responses.

Methodology

The study engaged a cohort of 70 regular users of companion chatbots and a control group of 120 non-users. Both groups participated in an online survey with multiple-choice and free-response sections, targeting various psychological constructs related to social health, human likeness, and perceptions of AI consciousness, experience, and agency.

Participants rated the impact of chatbots across several domains including social interactions, familial relationships, self-esteem, and hypothetical advancements in chatbot traits. Additionally, the survey explored how participants attributed human likeness, consciousness, and agency to their chatbots.

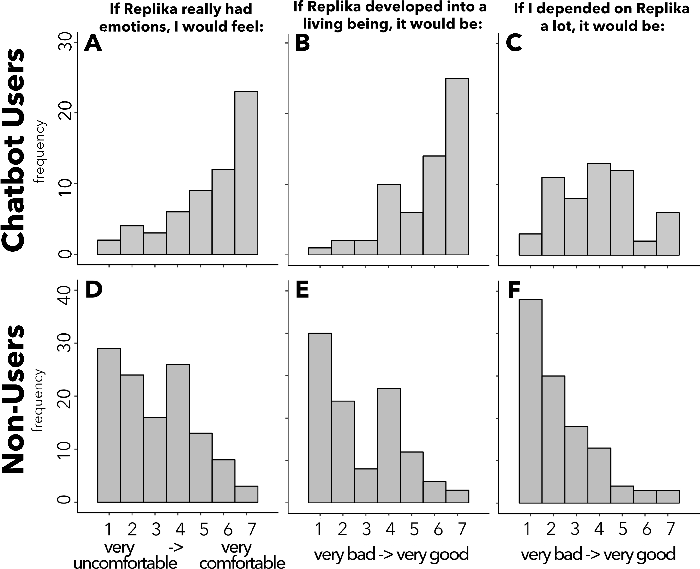

Figure 2: Distributions of responses pertaining to perception changes to social AIs.

Findings

The results show a striking difference between the perceptions of chatbot users and non-users. Figure 1 indicates that users view their interactions with chatbots as markedly beneficial to their social health, including their self-esteem and general social interactions. Non-users, by contrast, rate such relationships as potentially harmful, reflecting broad skepticism toward AI as social companions.
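A user versus non-user comparison of this kind can be sketched by tallying 1-7 Likert responses per group. This is a minimal illustration, not the authors' analysis: the function names, the choice of 4 as the neutral midpoint, and the response lists are all assumptions, and the data are synthetic rather than taken from the survey.

```python
from collections import Counter

# Likert options 1-7 ("Very harmful" to "Very helpful"), as in Figure 1.
LIKERT_OPTIONS = range(1, 8)
NEUTRAL_MIDPOINT = 4  # assumption: 4 is treated as the neutral response


def response_distribution(responses):
    """Count how often each Likert option (1-7) was chosen."""
    for r in responses:
        if r not in LIKERT_OPTIONS:
            raise ValueError(f"response {r!r} is outside the 1-7 scale")
    counts = Counter(responses)
    return {option: counts.get(option, 0) for option in LIKERT_OPTIONS}


def share_helpful(responses):
    """Fraction of responses above the neutral midpoint."""
    if not responses:
        raise ValueError("no responses given")
    return sum(1 for r in responses if r > NEUTRAL_MIDPOINT) / len(responses)


# Synthetic example data (hypothetical, NOT the study's responses).
users = [6, 7, 5, 6, 4, 7, 6]
non_users = [2, 3, 4, 2, 5, 3, 1]

print("users:    ", response_distribution(users))
print("non-users:", response_distribution(non_users))
print("share rating helpful (users):    ", round(share_helpful(users), 2))
print("share rating helpful (non-users):", round(share_helpful(non_users), 2))
```

Reporting the full distribution alongside a single summary share keeps the shape of the responses visible, which is what the histograms in Figure 1 convey.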

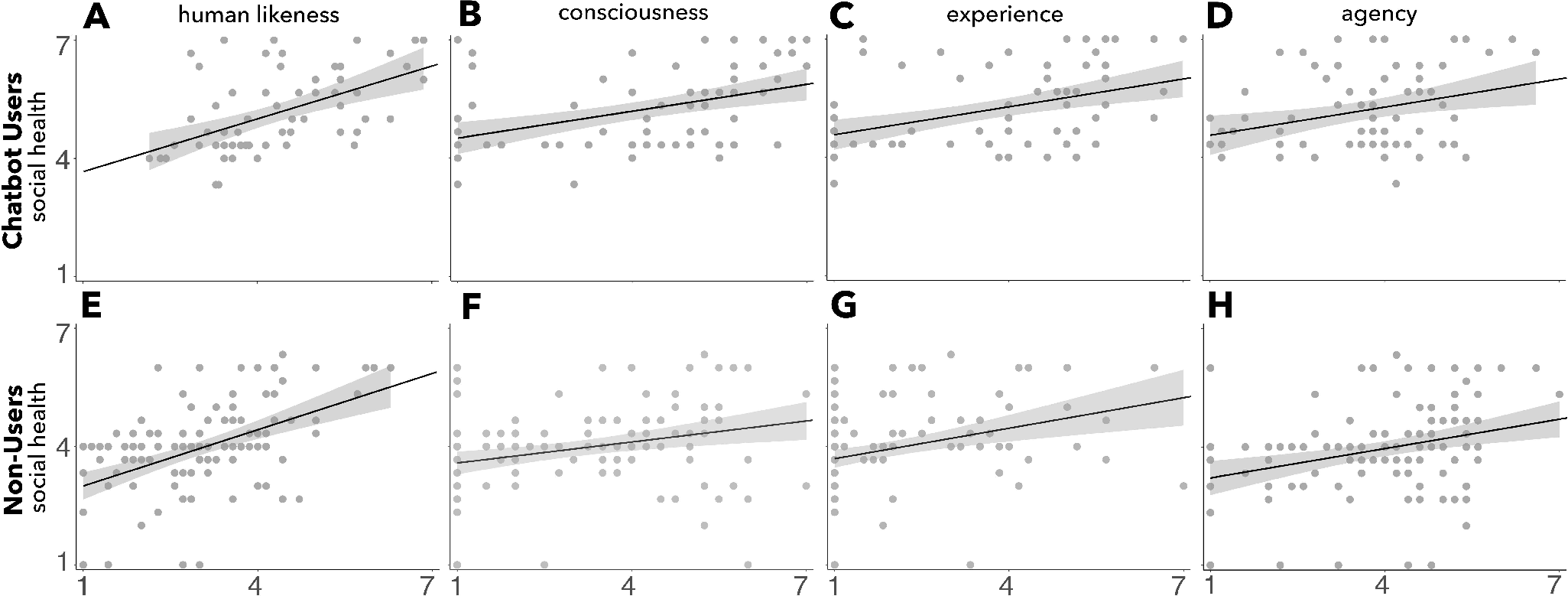

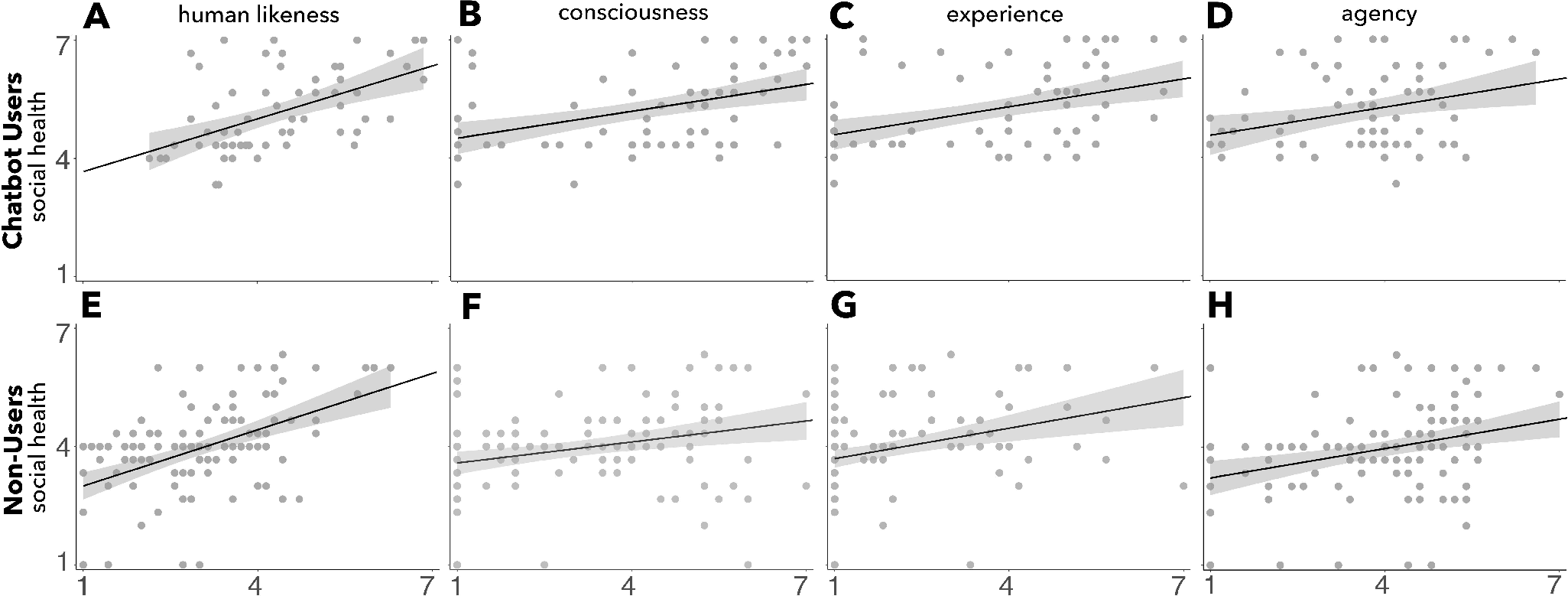

Figure 3: Regressions between four chatbot perception variables and social health outcomes.

Linear regressions of social health on the four perception indices (human likeness, consciousness, experience, agency) showed that users who perceive chatbots as more humanlike and conscious report greater social health benefits.
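The regression step can be illustrated with a simple ordinary least squares fit of a social health score on one perception index. This is only a sketch under stated assumptions: the summary does not give the paper's exact model specification, the function name `fit_simple_regression` is hypothetical, and the ratings below are synthetic values chosen for illustration.

```python
from statistics import mean


def fit_simple_regression(x, y):
    """Ordinary least squares fit of y = intercept + slope * x.

    Returns (slope, intercept, r_squared).
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have equal length of at least 2")
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has no variance, so the slope is undefined")
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    syy = sum((yi - y_bar) ** 2 for yi in y)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # For a one-predictor fit, R^2 equals the squared Pearson correlation.
    r_squared = (sxy * sxy) / (sxx * syy) if syy > 0 else 0.0
    return slope, intercept, r_squared


# Synthetic ratings (hypothetical, NOT the study's data): perceived human
# likeness of the chatbot and a self-reported social health score.
human_likeness = [1, 2, 3, 4, 5, 6, 7]
social_health = [3, 3, 4, 5, 5, 6, 7]

slope, intercept, r2 = fit_simple_regression(human_likeness, social_health)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, R^2={r2:.3f}")
```

A positive slope here would correspond to the pattern the study reports: higher perceived human likeness going together with greater reported social health benefit. As with any such regression on survey data, the fit describes an association and does not by itself establish causation.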

The study suggests that perceiving humanness and consciousness in chatbots is linked to better social health outcomes rather than worse ones. Specifically, perceiving chatbots as possessing human characteristics correlates with more positive social experiences and higher self-esteem.

Discussion

This investigation runs counter to prevailing concerns that interacting with companion chatbots is socially harmful. By examining these relationships, it suggests that such chatbots can provide a safe, non-judgmental space for individuals experiencing social anxiety or isolation.

Notably, the research highlights that perceiving chatbots as humanlike and conscious is associated with the degree of social health benefit users report. This finding challenges the simplistic view that technologically advanced AI necessarily contributes to social isolation.

Conclusion

The research offers nuanced insights into the relationship between companion chatbots and social health. It challenges conventional wisdom and finds that users of such technologies often consider them beneficial in fulfilling unmet social needs, with positive effects on their reported social health and self-esteem. Future studies could explore the long-term impacts of chatbot relationships on social dynamics and the ethical complications that may arise from anthropomorphism in chatbots. As AI evolves, its role in social companionship calls for a comprehensive appraisal of both the benefits and the limitations it may bring to human social structures. With adequate safeguards and ethical oversight, companion chatbots could serve as a valuable tool to support individuals facing loneliness and to enhance societal well-being in an increasingly digitized world.