- The paper introduces a unified OCR model that integrates diverse optical signals via a three-stage process involving pre-training, joint-training, and post-training customization.

- It employs an efficient VitDet-based image encoder and a Qwen decoder, outperforming state-of-the-art systems in plain document, scene text, and formatted text recognition.

- GOT reduces operational complexity and enhances scalability across tasks, setting the stage for a transformative shift towards OCR-2.0.

General OCR Theory: Towards OCR-2.0 via a Unified End-to-end Model

Introduction

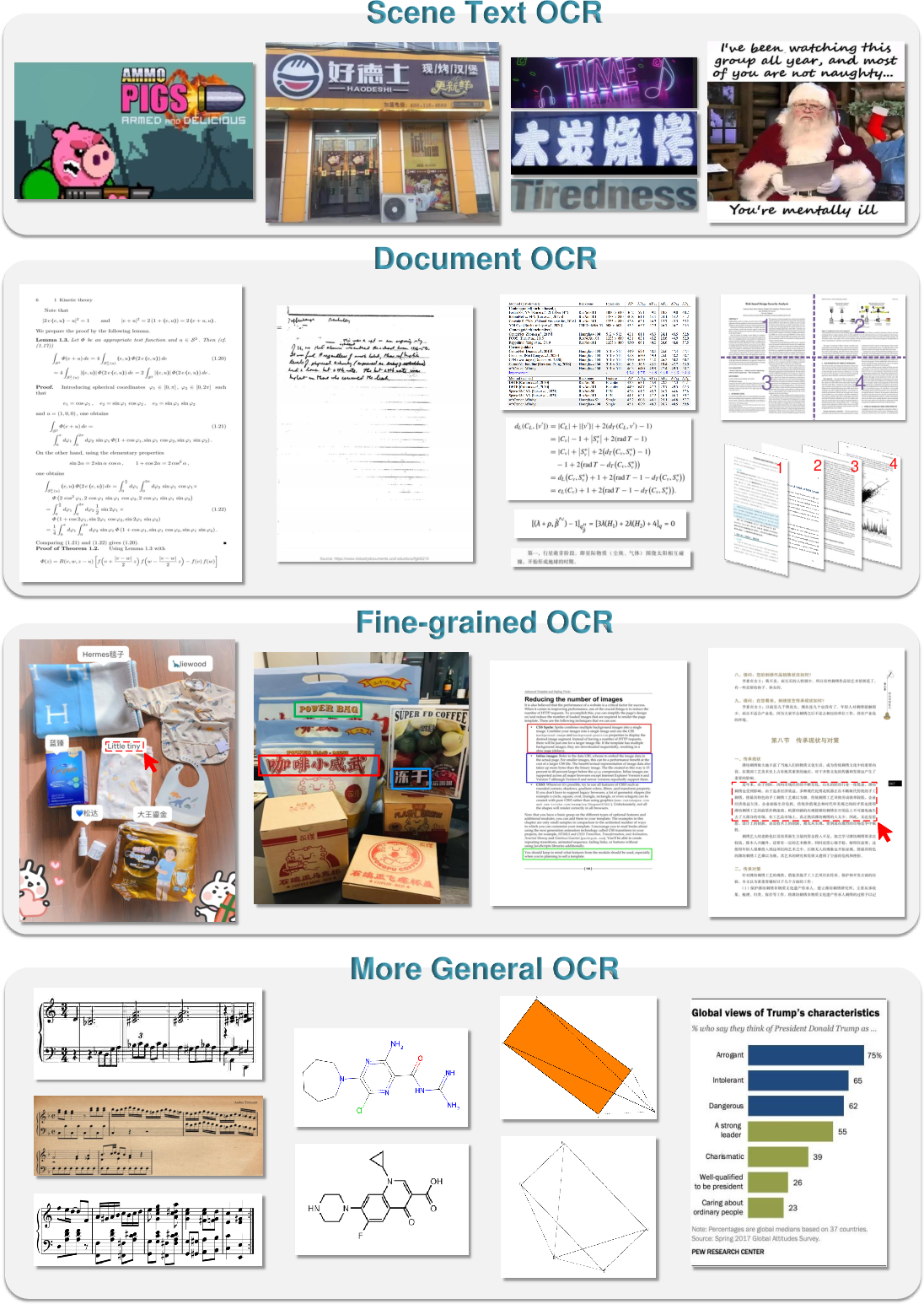

Optical Character Recognition (OCR) has been a cornerstone technology for digitizing and processing text embedded in images. This paper introduces General OCR Theory, heralding the advent of OCR-2.0 facilitated by the introduction of a novel model, General OCR Theory (GOT). The paradigm shift from OCR-1.0, characterized by modular pipelines with high maintenance and specificity, gives way to a unified model that integrates diverse optical signals such as plain texts, mathematical formulas, and molecular structures into a single, efficient system. This transition addresses the limitations of traditional OCR systems and emerging large vision-LLMs (LVLMs) that focus heavily on visual reasoning over perception.

Model Architecture

The GOT framework is architecturally driven by simplicity and efficiency through a three-stage process involving pre-training, joint-training, and post-training customization. The architecture comprises an image encoder capable of high compression, a linear connector, and a versatile language decoder. The encoder is designed using the VitDet approach, allowing optimal handling of high-resolution inputs while reducing token dimensionality. The decoder utilizes Qwen with 500M parameters, which supports long-context scenarios necessary for various OCR tasks.

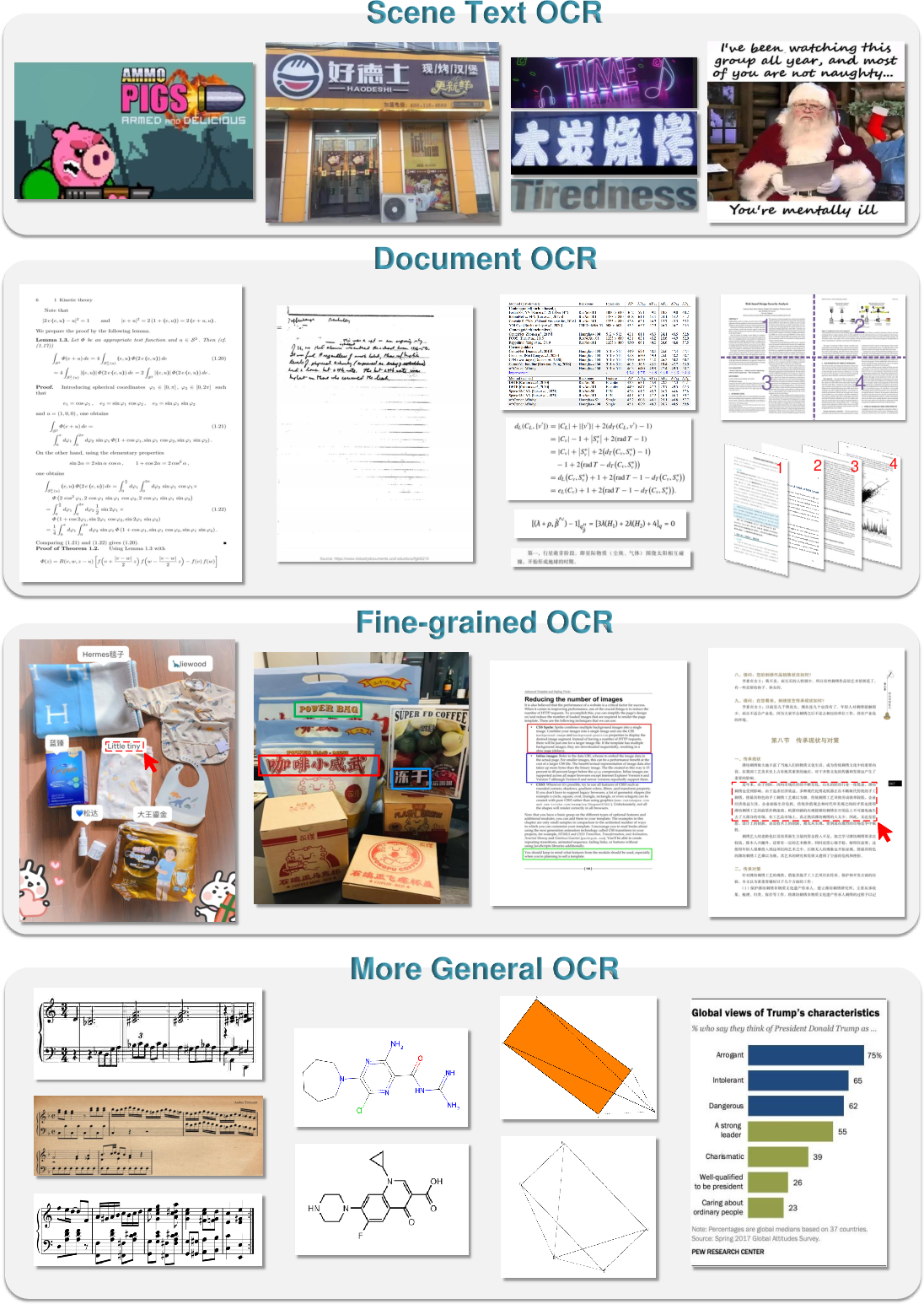

Figure 1: GOT supports a wide range of optical image types and tasks, from music sheets to molecular formulas.

Data Engine and Training Strategy

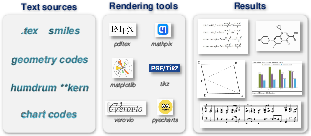

To support the comprehensive OCR-2.0 remit, an intricate data generation process was employed, leveraging six rendering tools to synthesize diverse datasets. This involves capturing scene and document OCR data, formatted text, and non-traditional inputs like sheet music and charts. A unique aspect of this data strategy is the ability to efficiently produce structured outputs in Mathpix markdown or other formats crucial for specific application domains.

Figure 2: GOT leverages multiple rendering engines to excel in diverse OCR scenarios, enhancing model performance across tasks.

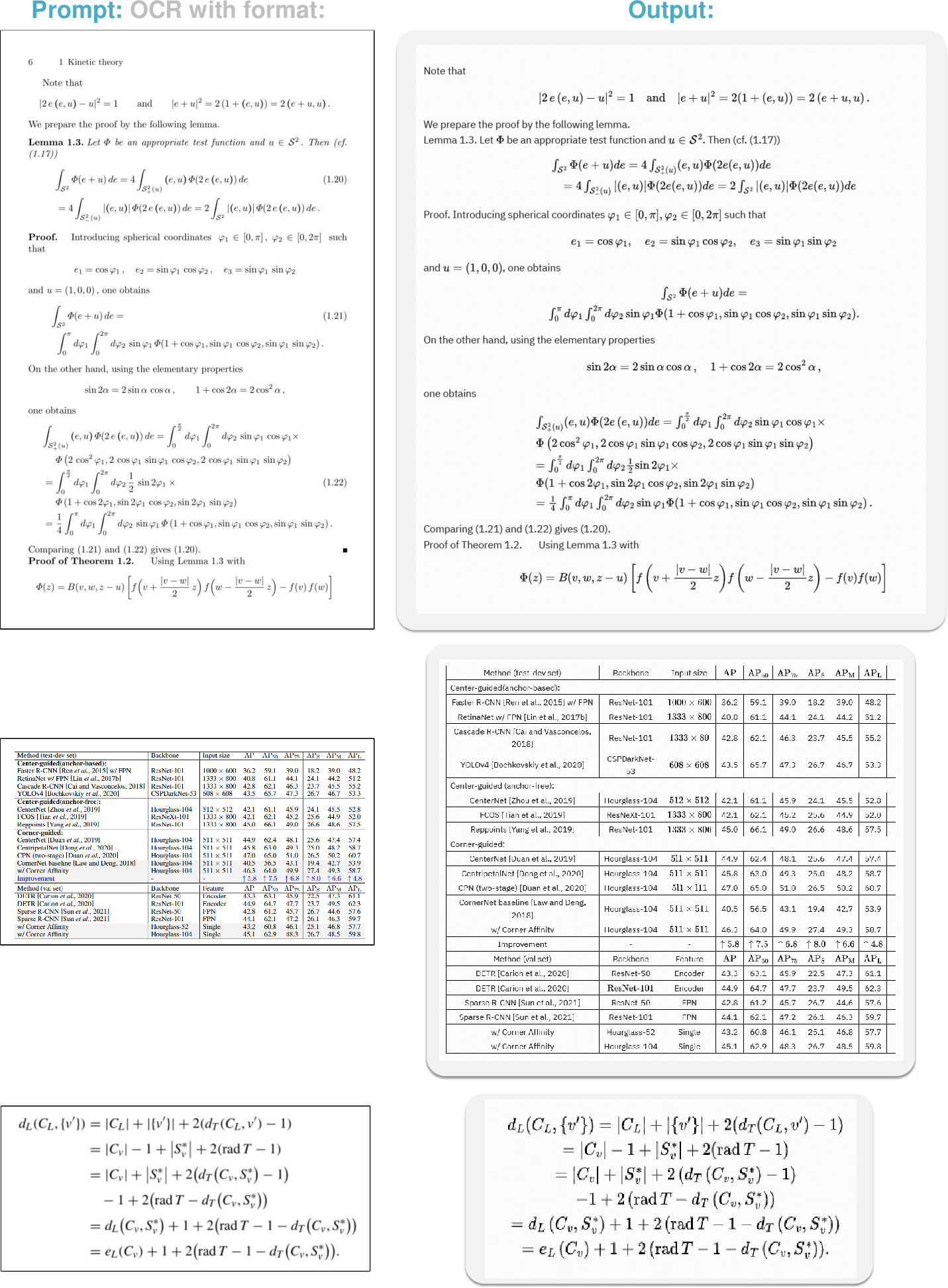

The pre-training phase optimizes the vision encoder using a small-scale decoder to ensure low GPU resource utilization, whereas the joint-training phase scales up the OCR tasks knowledge by integrating larger datasets. The post-training focuses on enhancing custom features such as fine-grained and dynamic resolution OCR.

Evaluation and Results

Comprehensive evaluations across multiple OCR tasks demonstrate GOT's superlative performance. Key results include outperforming state-of-the-art systems in plain document OCR, scene text recognition, and formatted text extraction. Additionally, GOT showcased its strength in handling complex, fine-grained OCR tasks where precision and adaptability are critical.

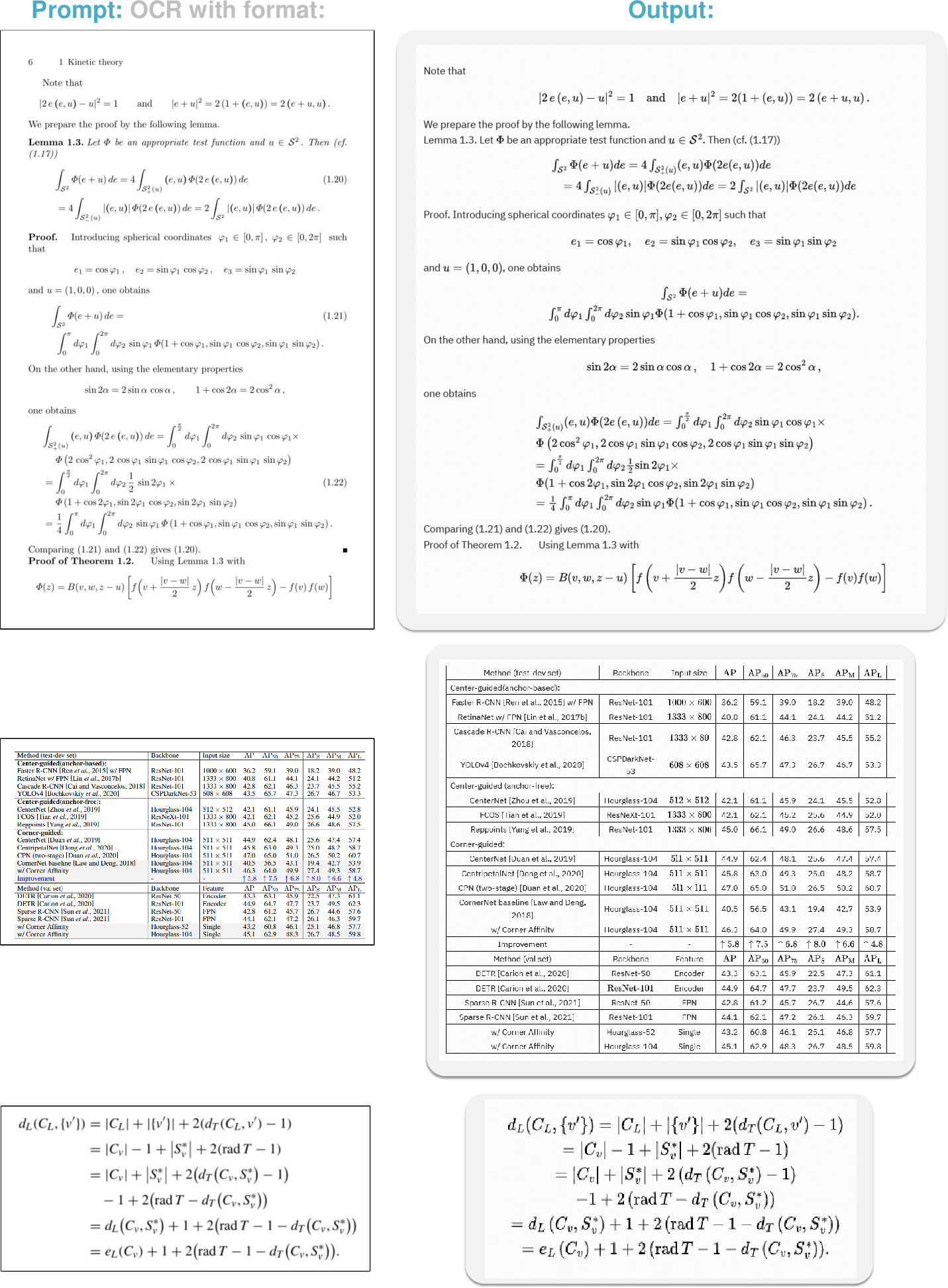

Figure 3: GOT excels in formatted OCR tasks, handling diverse input forms with high accuracy.

The model's ability to manage more general OCR tasks, exemplified by its performance on charts and sheet music, underline its versatility and alignment with the evolving demands of text-centric image understanding.

Conclusion

GOT facilitates a transformative approach to OCR by unifying various data types and tasks into a single end-to-end model. This shift not only reduces operational complexity but enhances scalability and applicability across domains. The integration of robust data engines and a flexible architecture positions GOT as a pivotal model in advancing towards OCR-2.0, promising broader applicability and efficiency. The journey towards full realization of OCR-2.0 continues, with future focus on expanding language support and refining complex geometric recognitions.