Reinforcement Learning Pair Trading: A Dynamic Scaling approach

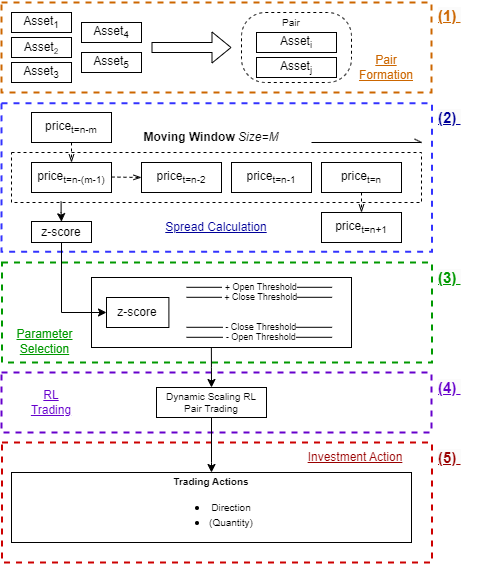

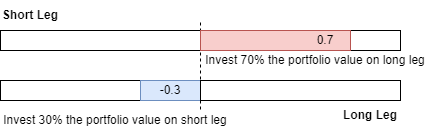

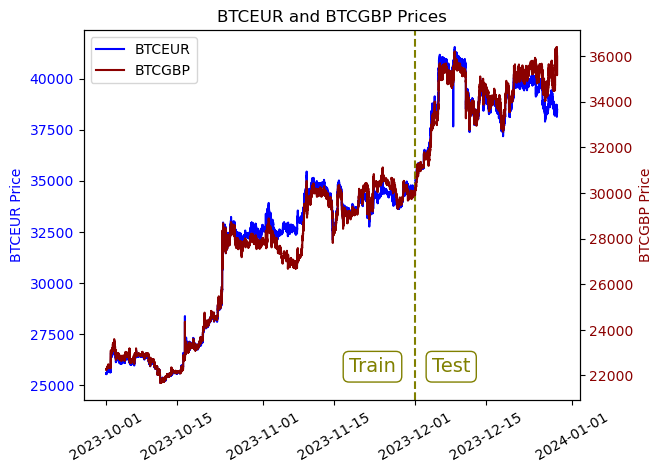

Abstract: Cryptocurrency is a cryptography-based digital asset with extremely volatile prices. Around USD 70 billion worth of cryptocurrency is traded daily on exchanges, and this inherent volatility makes trading difficult. This study investigates whether Reinforcement Learning (RL) can enhance decision-making in cryptocurrency algorithmic trading compared to traditional methods. To address this question, we combined reinforcement learning with pair trading, a statistical arbitrage technique that exploits the price difference between statistically correlated assets. We constructed RL environments and trained RL agents to determine when and how to trade pairs of cryptocurrencies, and we developed new reward shaping and observation/action spaces for this purpose. We ran experiments on the BTC-GBP and BTC-EUR pair, using data sampled at 1-minute intervals (n=263,520). The traditional non-RL pair trading technique achieved an annualized profit of 8.33%, while the proposed RL-based pair trading technique achieved annualized profits from 9.94% to 31.53%, depending on the RL learner. Our results show that RL can significantly outperform manual and traditional pair trading techniques when applied to volatile markets such as cryptocurrencies.
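To make the traditional baseline concrete, the following is a minimal sketch of a generic threshold-based pair trading rule, not the implementation used in the study. It assumes the spread is the plain price difference between the two assets and that positions are opened and closed on a rolling z-score of that spread. The function name `zscore_positions`, the window length, and the entry and exit thresholds are all hypothetical choices for illustration.

```python
from statistics import mean, pstdev


def zscore_positions(prices_a, prices_b, window=5, entry=1.5, exit_=0.5):
    """Return one position per time step for the spread (a - b).

    +1 = long the spread, -1 = short the spread, 0 = flat.
    All parameter defaults are illustrative assumptions.
    """
    spread = [a - b for a, b in zip(prices_a, prices_b)]
    positions = []
    position = 0
    for t in range(len(spread)):
        if t < window:
            positions.append(0)  # not enough history to compute a z-score
            continue
        history = spread[t - window:t]  # trailing window, excludes the current point
        mu, sigma = mean(history), pstdev(history)
        z = 0.0 if sigma == 0 else (spread[t] - mu) / sigma
        if position == 0:
            if z > entry:
                position = -1  # spread unusually wide: bet on it narrowing
            elif z < -entry:
                position = 1   # spread unusually narrow: bet on it widening
        elif abs(z) < exit_:
            position = 0       # spread back near its recent mean: close out
        positions.append(position)
    return positions


# Toy data: asset B is flat, asset A spikes once and then reverts.
prices_a = [100, 101, 100, 101, 100, 110, 102]
prices_b = [100, 100, 100, 100, 100, 100, 100]
positions = zscore_positions(prices_a, prices_b)
print(positions)
```

In a rule like this the thresholds and the trade size are fixed in advance. The RL agents described in the abstract instead learn when and how to trade, which is the decision this fixed rule hard-codes.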

