- The paper introduces PICASSO, a feed-forward framework that converts CAD sketches into parametric representations via rendering self-supervision.

- It pairs a Sketch Parameterization Network, whose feed-forward, set-based prediction reduces order-specific biases and improves prediction consistency, with a Sketch Rendering Network that renders the predicted primitives differentiably.

- PICASSO demonstrates superior zero- and few-shot learning performance on the SketchGraphs dataset, enabled by pre-training with a multiscale image-to-image loss.

PICASSO: A Feed-Forward Framework for Parametric Inference of CAD Sketches via Rendering Self-Supervision

Overview of PICASSO

Computer-Aided Design (CAD) is a crucial component of mechanical design. Automating the parameterization of CAD sketches, particularly from hand-drawn images, is of growing interest because manually translating a sketch into a parametric model is complex and time-consuming. The paper introduces PICASSO, a framework designed to address this problem. PICASSO converts CAD sketch images, either precise or hand-drawn, into parametric primitives without requiring extensive parameter-level annotations. It does so through rendering self-supervision: predicted primitives are rendered back into an image and compared with the input sketch, so the geometry of the sketch itself provides the signal used to pre-train the network.

PICASSO consists of two main components: the Sketch Parameterization Network (SPN) and the Sketch Rendering Network (SRN). SPN focuses on predicting parametric primitives, while SRN renders these primitives in a differentiable manner, allowing for image-to-image loss computation. This framework supports zero- and few-shot learning scenarios, enhancing its utility in real-world applications where annotated datasets might be scarce.

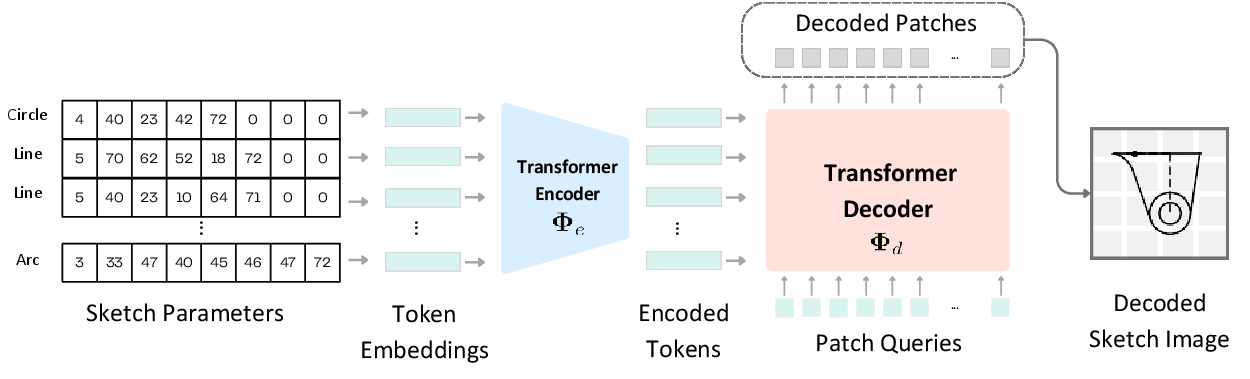

Sketch Rendering Network (SRN)

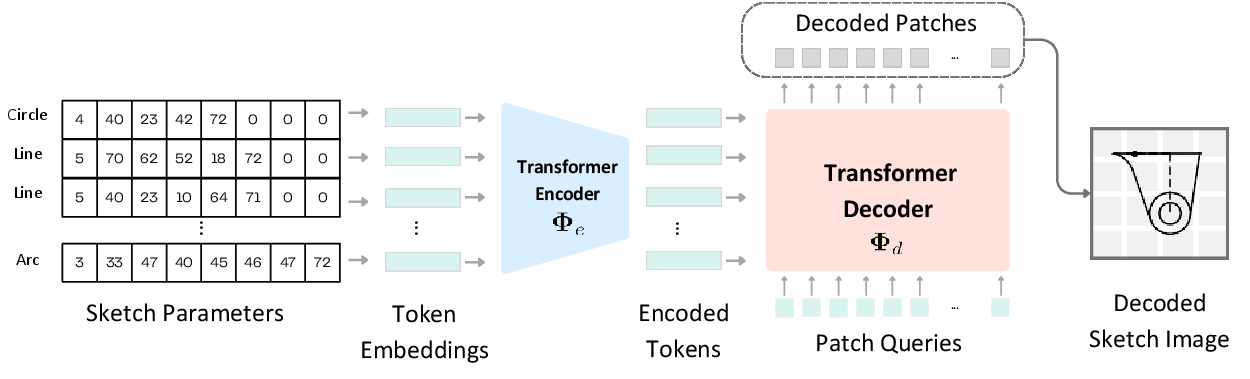

SRN is a critical component of PICASSO, responsible for rendering parametric CAD sketches to facilitate differentiable learning objectives. The network uses a transformer-based encoder-decoder architecture, processing input tokens (parametric primitives) into an embedded form, which is then transformed into a sketch image through a differentiable rendering process.

Figure 1: Overview of the Sketch Rendering Network (SRN). SRN is modeled by a transformer encoder-decoder that learns a mapping from parametric primitive tokens to the sketch image domain. Through neural differentiable rendering, SRN allows the computation of an image-to-image loss between predicted raster sketches and input precise or hand-drawn sketches.
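To make the notion of parametric primitive tokens concrete, the sketch below quantizes the endpoints of a line primitive and one-hot encodes each coordinate. It is a minimal illustration, not the paper's tokenizer: the bin count `NUM_BINS`, the `[0, 1]` coordinate range, and the helper names `quantize`, `one_hot`, and `encode_line` are all assumptions introduced here.

```python
from typing import List

NUM_BINS = 64  # assumed quantization resolution; not taken from the source


def quantize(value: float, num_bins: int = NUM_BINS) -> int:
    """Map a coordinate in [0, 1] to an integer bin index in [0, num_bins - 1]."""
    clamped = min(max(value, 0.0), 1.0)
    return min(int(clamped * num_bins), num_bins - 1)


def one_hot(index: int, size: int = NUM_BINS) -> List[int]:
    """Return a vector of length `size` with a single 1 at `index`."""
    vec = [0] * size
    vec[index] = 1
    return vec


def encode_line(x1: float, y1: float, x2: float, y2: float) -> List[List[int]]:
    """Encode a line primitive (hypothetical layout) as four one-hot tokens."""
    return [one_hot(quantize(v)) for v in (x1, y1, x2, y2)]


tokens = encode_line(0.0, 0.0, 1.0, 0.5)
print(len(tokens), len(tokens[0]))  # 4 tokens, each of length NUM_BINS
```

A real tokenizer would also need tokens for the primitive type (line, arc, circle, and so on) and for padding; those are omitted to keep the example short.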

SRN operates on one-hot encoded tokens. Because its rendering is a learned, differentiable function, an image-to-image loss between the rendered sketch and the input sketch can be used as a training signal. A multiscale loss makes this comparison tolerant of misalignments between the two images, which matters for rough hand-drawn sketches whose strokes only approximately follow the underlying geometry.
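The idea behind a multiscale image-to-image loss can be illustrated with a small pure-Python sketch. It assumes a mean-squared error summed over an image pyramid built by 2x2 average pooling; the source does not give the exact formulation, so the pooling scheme, the number of scales, and the function names are hypothetical. In practice such a loss would live inside an autodiff framework so that gradients can flow back through the renderer.

```python
from typing import List

Image = List[List[float]]


def avg_pool2(img: Image) -> Image:
    """Downsample by averaging non-overlapping 2x2 blocks."""
    h, w = len(img) // 2, len(img[0]) // 2
    return [
        [
            (img[2 * r][2 * c] + img[2 * r][2 * c + 1]
             + img[2 * r + 1][2 * c] + img[2 * r + 1][2 * c + 1]) / 4.0
            for c in range(w)
        ]
        for r in range(h)
    ]


def mse(a: Image, b: Image) -> float:
    """Mean squared error between two images of identical size."""
    n = len(a) * len(a[0])
    return sum((pa - pb) ** 2 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb)) / n


def multiscale_loss(pred: Image, target: Image, num_scales: int = 3) -> float:
    """Sum the MSE over progressively coarser versions of both images."""
    total = 0.0
    for _ in range(num_scales):
        total += mse(pred, target)
        if len(pred) < 2 or len(pred[0]) < 2:
            break  # cannot pool any further
        pred, target = avg_pool2(pred), avg_pool2(target)
    return total


def single_pixel(row: int, col: int, size: int = 4) -> Image:
    """Helper for the demo: an all-zero image with one bright pixel."""
    return [[1.0 if (r, c) == (row, col) else 0.0 for c in range(size)] for r in range(size)]


near = multiscale_loss(single_pixel(0, 0), single_pixel(0, 1))  # one-pixel shift
far = multiscale_loss(single_pixel(0, 0), single_pixel(3, 3))   # opposite corner
print(near < far)  # True
```

At full resolution both shifts look equally wrong, since two pixels differ in each case. The coarser scales are what distinguish a near miss from a far one, which is the property that helps with imprecise hand-drawn strokes.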

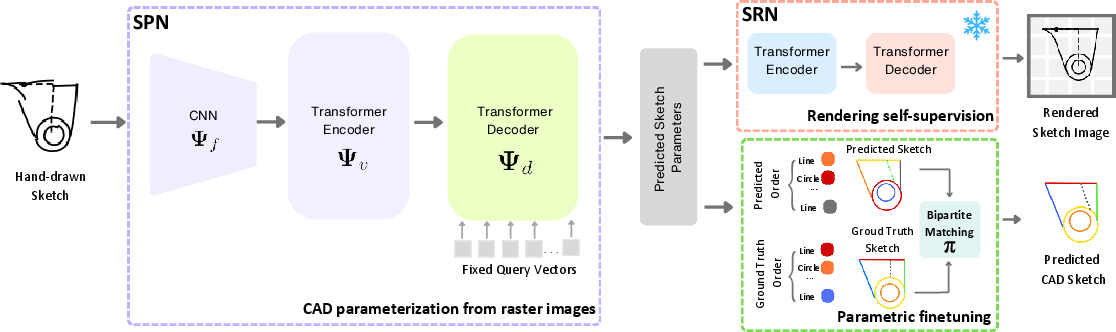

Sketch Parameterization Network (SPN)

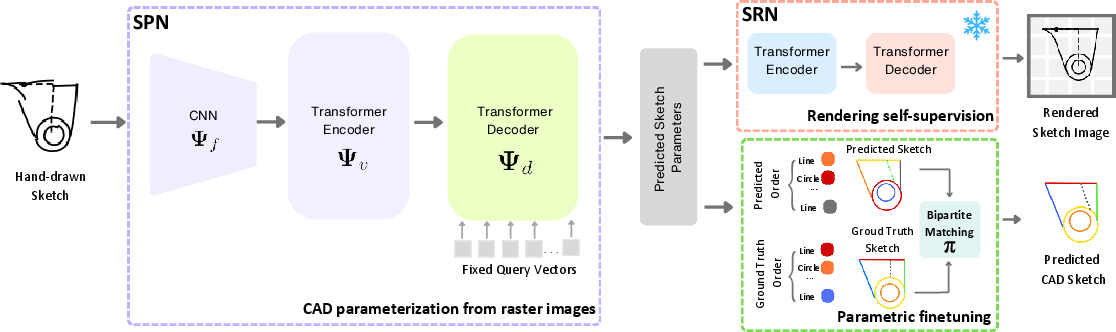

The SPN leverages a convolutional backbone followed by a transformer encoder-decoder to process input sketch images into parametric representations. Unlike autoregressive models, SPN treats CAD sketches as unordered sets of primitives, significantly reducing the potential solution space and eliminating order-specific biases in parameter prediction.

Figure 2: Overview of the Sketch Parameterization Network (SPN). An input raster sketch image is processed by a convolutional backbone and the produced feature map is fed to a transformer encoder-decoder for sketch parameterization. SPN is pre-trained using rendering self-supervision provided by SRN, allowing zero-shot CAD sketch parameterization, and is finetuned with parameter-level annotations for the few-shot scenario.

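The feed-forward design of SPN addresses several limitations of prior autoregressive approaches. Predicting all primitives in a single pass improves prediction consistency and avoids exposure bias, the mismatch that arises when an autoregressive model is trained on ground-truth prefixes but must condition on its own, possibly erroneous, predictions at inference time.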
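One way to see what treating a sketch as an unordered set means is to compare predictions with targets under the best possible pairing rather than position by position. The sketch below is an illustration under assumptions: the source does not state how SPN matches predictions to annotations, the L1 cost and the function names are hypothetical, and the brute-force search over permutations is only practical for a handful of primitives (an assignment solver such as SciPy's `linear_sum_assignment` would be the usual choice at scale).

```python
from itertools import permutations
from typing import Sequence, Tuple

Primitive = Tuple[float, ...]


def l1(a: Primitive, b: Primitive) -> float:
    """L1 distance between two primitives with the same parameter layout."""
    return sum(abs(x - y) for x, y in zip(a, b))


def ordered_cost(pred: Sequence[Primitive], target: Sequence[Primitive]) -> float:
    """Position-by-position cost, as a sequence model would implicitly use."""
    return sum(l1(p, t) for p, t in zip(pred, target))


def set_cost(pred: Sequence[Primitive], target: Sequence[Primitive]) -> float:
    """Order-invariant cost: minimum total L1 over all one-to-one pairings."""
    if len(pred) != len(target):
        raise ValueError("this sketch assumes equally sized sets")
    return min(
        sum(l1(pred[i], target[j]) for i, j in enumerate(perm))
        for perm in permutations(range(len(target)))
    )


# Three line segments given as (x1, y1, x2, y2); the prediction lists them in reverse.
target = [(0.0, 0.0, 1.0, 0.0), (1.0, 0.0, 1.0, 1.0), (1.0, 1.0, 0.0, 1.0)]
pred = list(reversed(target))

print(ordered_cost(pred, target))  # non-zero: penalized purely for ordering
print(set_cost(pred, target))      # 0.0: same set of primitives
```

Under the ordered cost, a geometrically perfect prediction is penalized for listing primitives in a different order, which is the kind of order-specific bias a set-based formulation removes.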

Implementation and Evaluation

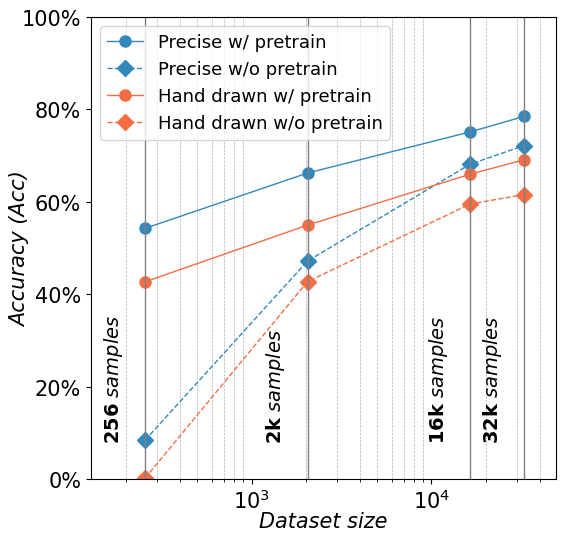

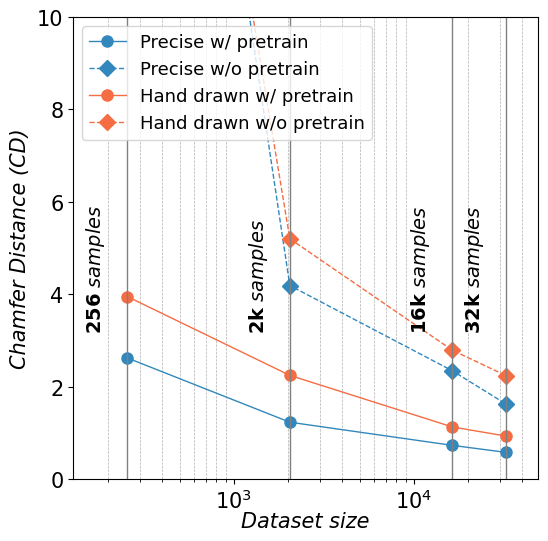

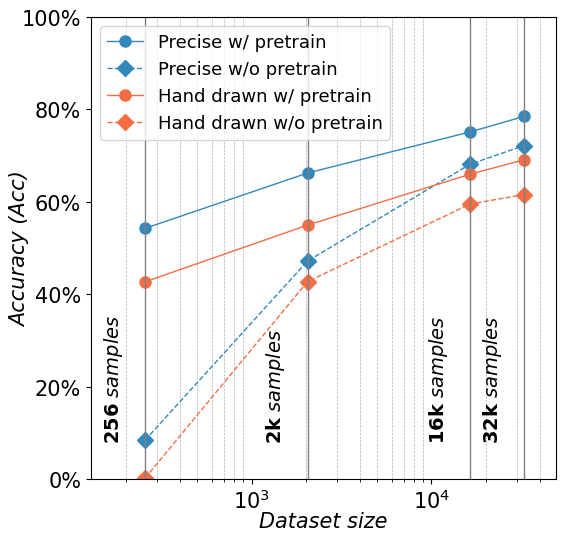

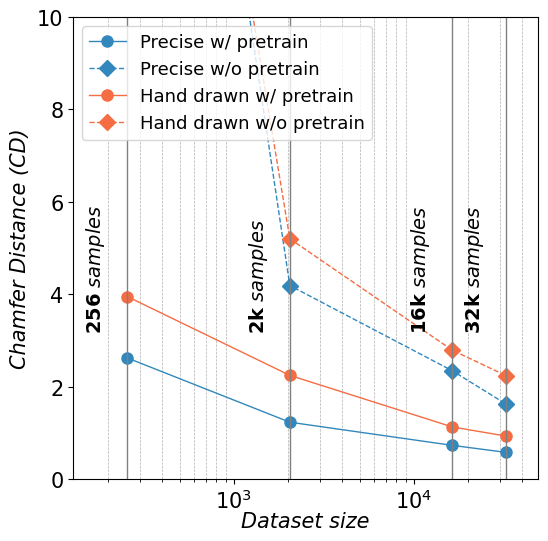

PICASSO is evaluated on the SketchGraphs dataset, where it performs CAD sketch parameterization with limited supervision on both precise and hand-drawn sketches. Rendering self-supervision enables effective pre-training of SPN; its benefit is measured quantitatively with parameter-level accuracy and image-level Chamfer Distance, and shown qualitatively through visual results.

Figure 3: Effectiveness of rendering self-supervision on a few-shot evaluation. PICASSO is pre-trained on a dataset of both precise and hand-drawn sketches. We report (left) parametric Accuracy (Acc) and (right) image-level Chamfer Distance (CD). Pre-trained SPN (w/ pre-train) is compared to a from-scratch counterpart (w/o pre-train). Best viewed in color.
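For readers unfamiliar with an image-level Chamfer Distance, the following sketch computes a symmetric variant between the foreground pixels of two binary images. The normalization used here (mean nearest-neighbour Euclidean distance in each direction, then averaged) is one common convention and an assumption; the paper's exact definition may differ, and the function names are hypothetical.

```python
import math
from typing import List, Tuple

BinaryImage = List[List[int]]


def foreground(img: BinaryImage) -> List[Tuple[int, int]]:
    """Return the (row, col) coordinates of all non-zero pixels."""
    return [(r, c) for r, row in enumerate(img) for c, v in enumerate(row) if v]


def chamfer_distance(img_a: BinaryImage, img_b: BinaryImage) -> float:
    """Symmetric Chamfer Distance between the foreground pixels of two images."""
    pts_a, pts_b = foreground(img_a), foreground(img_b)
    if not pts_a or not pts_b:
        raise ValueError("both images need at least one foreground pixel")

    def one_way(src: List[Tuple[int, int]], dst: List[Tuple[int, int]]) -> float:
        # Mean distance from each source pixel to its nearest destination pixel.
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)

    return 0.5 * (one_way(pts_a, pts_b) + one_way(pts_b, pts_a))


# A horizontal stroke on row 0 versus the same stroke two rows lower.
top = [[1, 1, 1, 1], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
lower = [[0, 0, 0, 0], [0, 0, 0, 0], [1, 1, 1, 1], [0, 0, 0, 0]]

print(chamfer_distance(top, top))    # 0.0
print(chamfer_distance(top, lower))  # 2.0
```

Unlike parameter-level accuracy, this metric is computed purely on rendered pixels, so it rewards predictions that look right even when their parameters differ from the annotation.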

Notably, PICASSO outperforms state-of-the-art models in zero- and few-shot learning scenarios, which indicates its potential for deployment in settings where annotated training data is scarce.

Conclusion

PICASSO advances CAD sketch parameterization chiefly through its ability to generalize from limited data via rendering self-supervision. By reducing the dependence of parameterization learning on manual parameter-level annotations, PICASSO streamlines the CAD sketching workflow and may also ease model design in CAD environments. Future work could explore additional primitive types and optimization of the SPN and SRN architectures to further improve precision and flexibility in real-world CAD applications.