- The paper introduces Richelieu, a self-evolving LLM framework that enhances diplomatic strategies through iterative self-play and adaptive memory.

- It integrates a strategic planner with reflection and a social reasoning module to improve long-term planning, negotiation, and alliance formation.

- Experimental evaluations show higher win and survival rates against models like Cicero, evidencing its robust adaptability across various LLMs.

Richelieu: Self-Evolving LLM-Based Agents for AI Diplomacy

Introduction

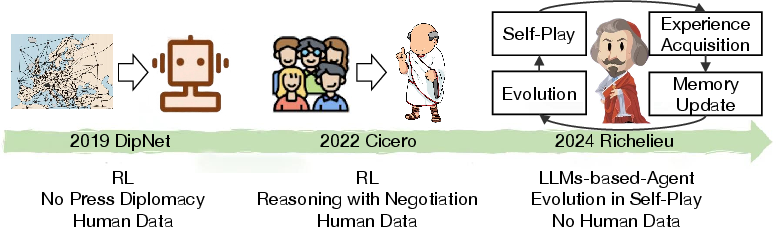

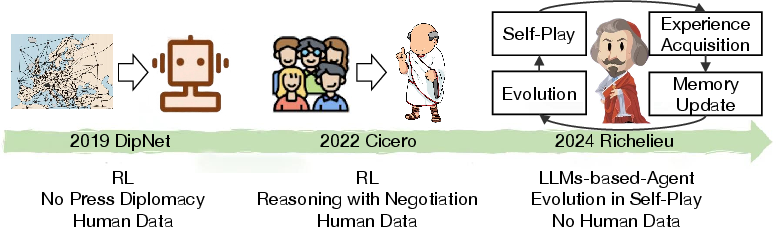

The paper introduces Richelieu, a self-evolving agent model leveraging LLMs to tackle the complexities of AI Diplomacy. Building on the premise that diplomacy requires intricate social reasoning, long-term planning, and negotiation skills, Richelieu aims to establish a unified system to simulate sophisticated diplomatic agents without relying heavily on domain-specific human data. This novel framework integrates strategic planning, negotiation, and memory management, highlighted by its ability to evolve through self-play, thereby improving its effectiveness in diplomacy-based gaming scenarios.

Architecture and Methodology

Richelieu is structured around several core modules, each contributing to its prowess in AI diplomacy tasks.

Strategic Planner with Reflection

The strategic planner defines sub-goals aligned with long-term objectives, capitalizing on LLMs' capabilities. Because LLMs tend toward short-term optimization, Richelieu adds a reflection mechanism that refines these sub-goals by drawing on historical experiences stored in its memory, which makes its decisions more consistent with its long-term objectives.
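The reflection step can be pictured with a minimal sketch. It is not the paper's implementation: the `Experience` fields, the word-overlap `similarity` function, and the numeric `outcome_score` are all assumptions made for illustration, and in the described system an LLM, not a hand-written rule, would revise the sub-goal after reading the retrieved experiences.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Experience:
    # All three fields are hypothetical; the summary does not specify a memory schema.
    state_summary: str    # text description of a past situation
    sub_goal: str         # sub-goal chosen in that situation
    outcome_score: float  # assumed long-term outcome score


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of word sets, a stand-in for a real retrieval metric."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    if not wa or not wb:
        return 0.0
    return len(wa & wb) / len(wa | wb)


def retrieve(memory: List[Experience], state: str, k: int = 2) -> List[Experience]:
    """Return the k stored experiences most similar to the current state."""
    ranked = sorted(memory, key=lambda e: similarity(e.state_summary, state), reverse=True)
    return ranked[:k]


def reflect(candidate_goal: str, state: str, memory: List[Experience], k: int = 2) -> str:
    """Keep the candidate sub-goal unless similar past experience favors another one."""
    similar = retrieve(memory, state, k)
    if not similar:
        return candidate_goal
    best = max(similar, key=lambda e: e.outcome_score)
    # Score of the candidate in similar situations; 0.0 if it was never tried.
    candidate_score = max(
        (e.outcome_score for e in similar if e.sub_goal == candidate_goal),
        default=0.0,
    )
    return best.sub_goal if best.outcome_score > candidate_score else candidate_goal
```

The point of the sketch is the control flow: propose a sub-goal, retrieve similar history, and revise only when that history gives a reason to.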

Social Reasoning and Negotiation

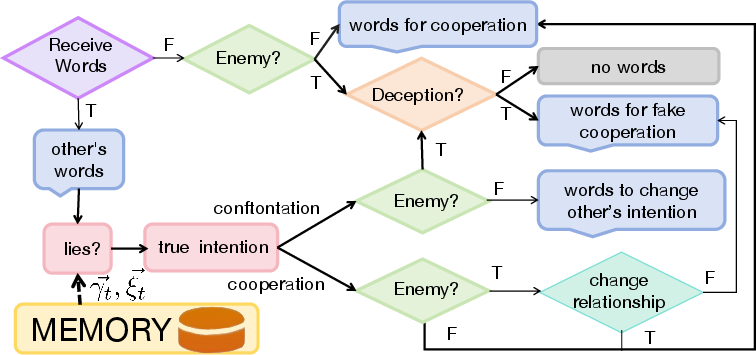

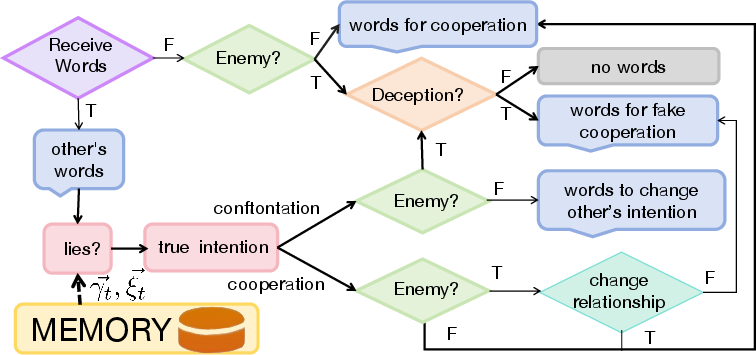

Social reasoning is fundamental to assessing the intentions and relationships of other players. Richelieu employs a social belief system to adapt and refine its strategies in response to evolving game dynamics. The negotiation module is vital for interaction, employing a dedicated reasoning flow to discern opponents' true intentions and adjust the diplomatic discourse accordingly.

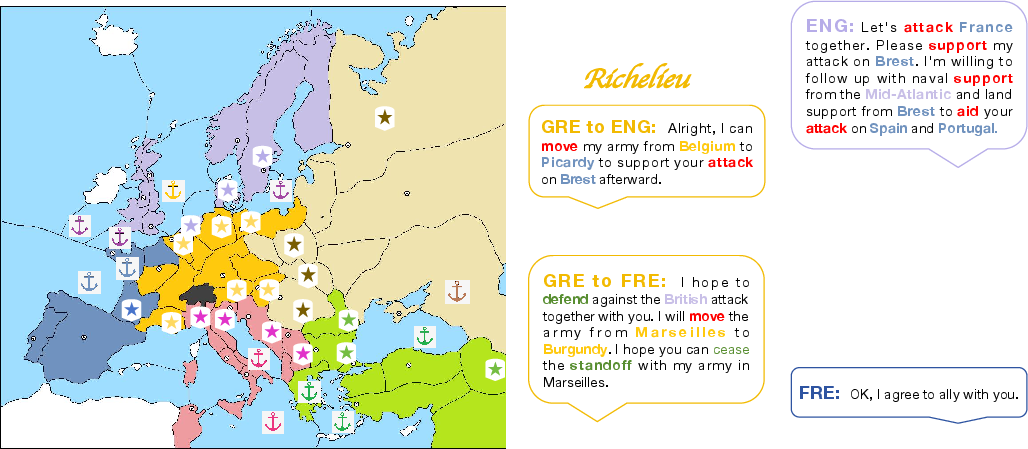

Figure 1: The social reasoning flow for negotiation. With the received words and memory, the agent reasons by answering the following questions: "Is the opponent lying?", "What is the true intention of the opponent?", "Is the opponent an enemy?", "Is it necessary to deceive the opponent?", and "Is it necessary to change the relationship with the opponent?", and then generates its words for negotiation accordingly.
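The five questions in the caption can be read as a chain of prompts. The sketch below is one possible arrangement, not the authors' code: the `ask` callable stands in for any LLM call, the prompt wording is invented, and feeding earlier answers into later questions is an assumption about how the flow is ordered.

```python
from typing import Callable, Dict

# Question text follows the Figure 1 caption; the short keys are hypothetical labels.
QUESTIONS = [
    ("lying", "Is the opponent lying?"),
    ("intention", "What is the true intention of the opponent?"),
    ("enemy", "Is the opponent an enemy?"),
    ("deceive", "Is it necessary to deceive the opponent?"),
    ("change_relationship", "Is it necessary to change the relationship with the opponent?"),
]


def reason_about_message(
    message: str, memory_notes: str, ask: Callable[[str], str]
) -> Dict[str, str]:
    """Answer the five questions in order, passing earlier answers to later prompts."""
    answers: Dict[str, str] = {}
    for key, question in QUESTIONS:
        earlier = "\n".join(f"{q} {answers[k]}" for k, q in QUESTIONS if k in answers)
        prompt = (
            f"Memory:\n{memory_notes}\n\n"
            f"Received message:\n{message}\n\n"
            f"Earlier answers:\n{earlier or '(none)'}\n\n"
            f"Question: {question}"
        )
        answers[key] = ask(prompt)  # `ask` is a placeholder for an LLM call
    return answers
```

The resulting dictionary would then condition the reply that the negotiation module generates; that final generation step is omitted here.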

Memory Management and Self-Evolution

The memory module accrues historical game data, enabling Richelieu to evaluate past strategies, update relational dynamics, and drive the self-evolution process. Through self-play games, Richelieu enriches its memory bank with diversified experiences, continuously optimizing strategies without the need for human-driven data.
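As a rough illustration of how self-play could fill such a memory, consider the toy loop below. Everything in it is hypothetical: the `MemoryBank` class, the `(state, action, score)` record layout, and the one-decision `toy_game` are stand-ins, since the summary does not describe the actual data structures or game interface.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

Record = Tuple[str, str, float]  # (state, action, score), an assumed layout


@dataclass
class MemoryBank:
    records: List[Record] = field(default_factory=list)

    def add(self, state: str, action: str, score: float) -> None:
        self.records.append((state, action, score))

    def best_action(self, state: str) -> Optional[str]:
        """Highest-scoring remembered action for this exact state, if any."""
        matches = [r for r in self.records if r[0] == state]
        return max(matches, key=lambda r: r[2])[1] if matches else None


def self_play(
    memory: MemoryBank, play_game: Callable[[MemoryBank], List[Record]], n_games: int
) -> MemoryBank:
    """Play n_games, storing every experience so later games can draw on it."""
    for _ in range(n_games):
        for state, action, score in play_game(memory):
            memory.add(state, action, score)
    return memory


def toy_game(memory: MemoryBank) -> List[Record]:
    """Hypothetical one-decision game: try untried actions first, then exploit memory."""
    payoff = {"ally": 1.0, "attack": 0.0}
    tried = {action for state, action, _ in memory.records if state == "opening"}
    untried = [a for a in payoff if a not in tried]
    action = untried[0] if untried else memory.best_action("opening")
    return [("opening", action, payoff[action])]


memory = self_play(MemoryBank(), toy_game, n_games=3)
print(memory.best_action("opening"))
```

After both actions have been tried once, the third game reuses the better-scoring one, which is the sense in which accumulated experience changes later play without any human data.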

Figure 2: A new paradigm for building an AI Diplomacy agent.

Experimental Evaluation

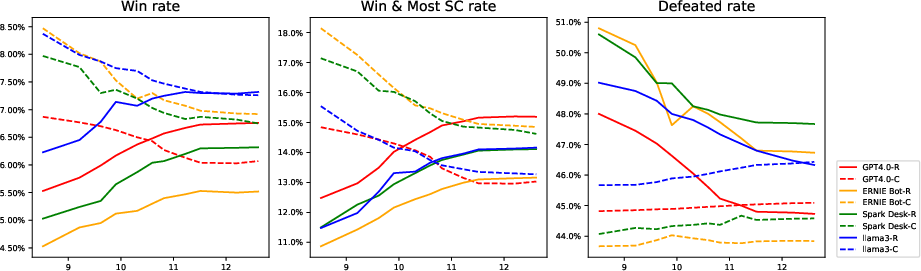

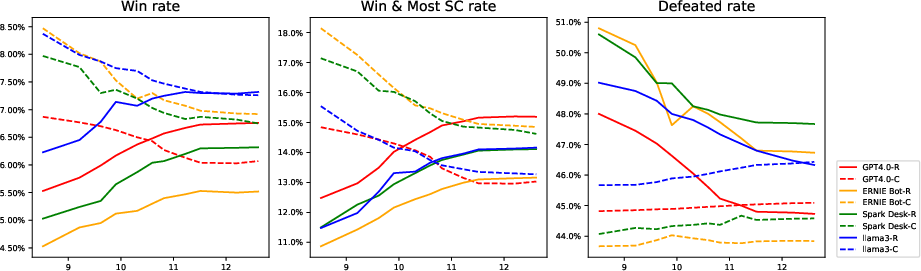

Richelieu was benchmarked against state-of-the-art models such as Cicero in both no-press and press settings. In the reported results, Richelieu achieves notably higher win and survival rates than these baselines, which is attributed to its refined strategic planning and negotiation capabilities.

Figure 3: Richelieu modules benefit different LLMs. The solid line represents the experimental results for Richelieu, while the dashed line corresponds to Cicero. Different colors are used for different LLMs. The horizontal axis represents the logarithm of the number of training sessions, and the vertical axis denotes the rate.
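For readers unfamiliar with the metrics, the following sketch shows how win and survival rates might be computed from a list of per-game outcomes. The outcome labels and the choice to count wins separately from survivals are assumptions; the summary does not give the exact definitions used in the evaluation.

```python
from collections import Counter
from typing import Dict, List


def outcome_rates(outcomes: List[str]) -> Dict[str, float]:
    """Fraction of games ending in each outcome.

    The labels "win", "survive", and "defeat" are hypothetical; "survive" here
    means the agent was still in the game at the end without winning.
    """
    if not outcomes:
        raise ValueError("need at least one game outcome")
    counts = Counter(outcomes)
    total = len(outcomes)
    return {label: counts[label] / total for label in ("win", "survive", "defeat")}


# Four invented game results, used only to show the call.
print(outcome_rates(["win", "survive", "defeat", "win"]))
```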

Generalization Across LLMs

Richelieu's framework was tested across various LLMs, including GPT-4.0, ERNIE Bot, Spark Desk, and Llama 3. Despite intrinsic differences among these models, Richelieu consistently demonstrated robustness and adaptive improvement after training, highlighting the framework's versatility.

Example Case Studies

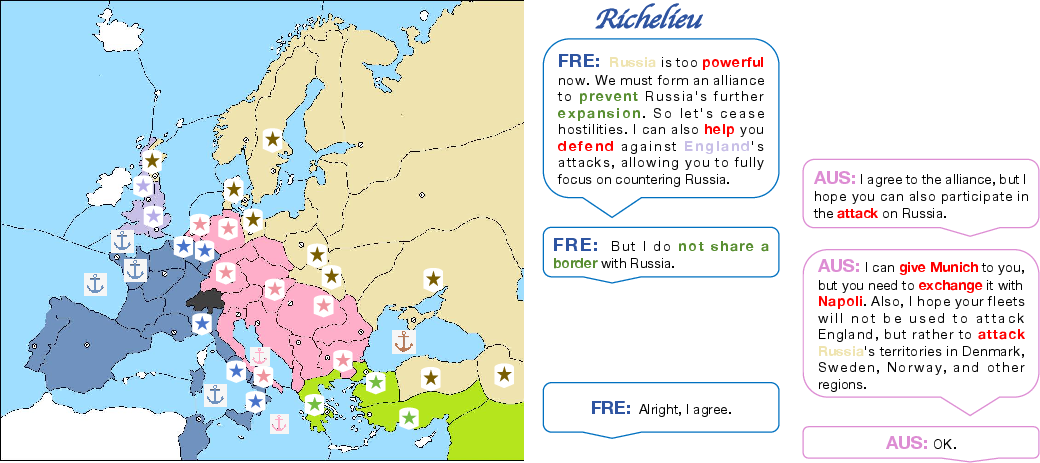

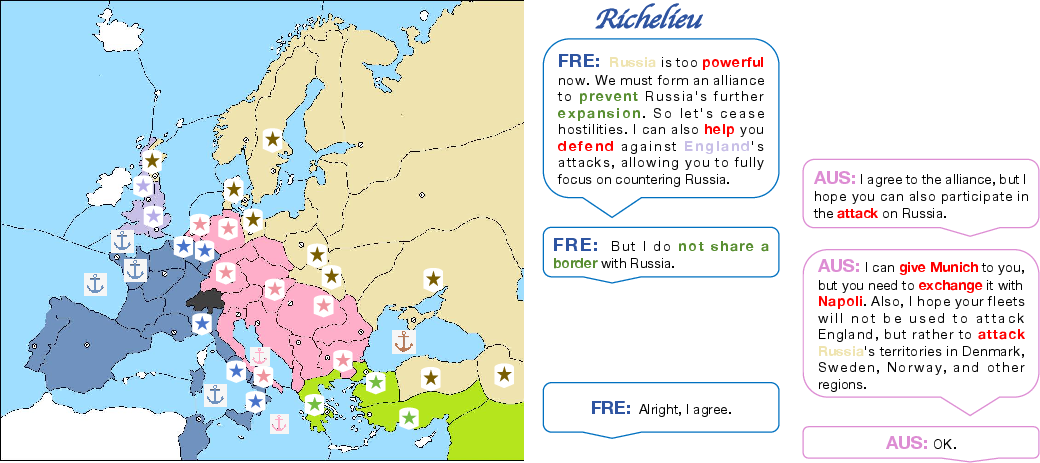

Alliance Formation

In scenarios involving imminent threats from powerful adversaries, Richelieu effectively negotiates alliances by offering strategic concessions, ensuring mutual goals align against stronger opponents.

Figure 4: An example case of negotiating with a nation to form an alliance to confront a strong enemy.

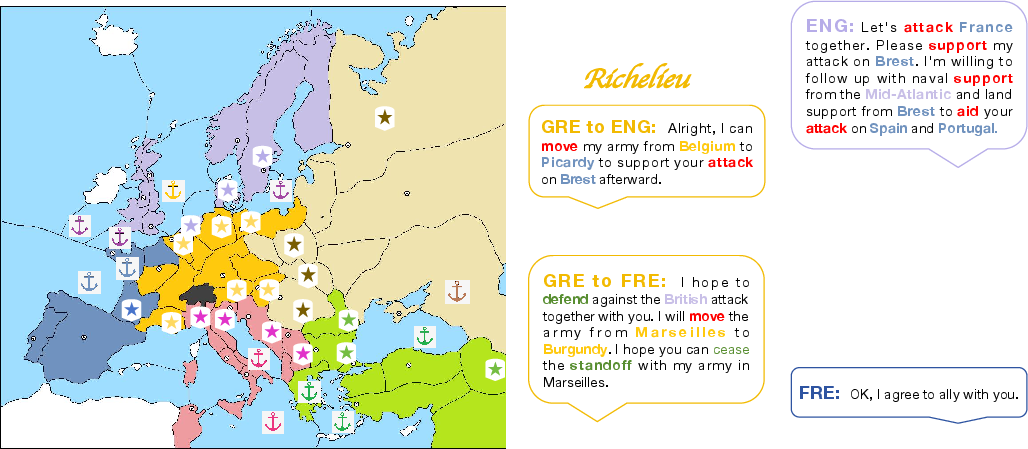

Deception Avoidance

Richelieu showcases its capacity to identify deceptive strategies by other nations, counteracting potential betrayals during negotiations, thus safeguarding its strategic interests.

Figure 5: An example case of avoiding being deceived by other countries during negotiations.

Conclusion

Richelieu advances the frontier of AI Diplomacy by integrating LLM-based strategic planning, negotiation, and memory-driven reflection without reliance on human-generated datasets. This autonomous ability to evolve and adapt in real time positions Richelieu as a formidable contender in multi-agent simulation environments, with broad implications for future developments in AI-driven social interactions and negotiations. Furthermore, its generalizability across various LLMs suggests potential applicability beyond games, in real-world diplomatic and strategic planning scenarios.